Best AI tools for Scrape URL

20 - AI tool Sites

Synterrix

Synterrix is an advanced AI tool designed for Google Sheets, offering features like fine-tuned AI models, bulk processing of prompts and image URLs, autocomplete tasks with AI, scraping text from URLs, and generating formulas with AI. It aims to enhance productivity and efficiency by providing AI-powered solutions for various tasks within Google Sheets, catering to both large teams and lean teams with tight budgets.

ScrapeComfort

ScrapeComfort is an AI-driven web scraping tool that offers an effortless and intuitive data mining solution. It leverages AI technology to extract data from websites without the need for complex coding or technical expertise. Users can easily input URLs, download data, set up extractors, and save extracted data for immediate use. The tool is designed to cater to various needs such as data analytics, market investigation, and lead acquisition, making it a versatile solution for businesses and individuals looking to streamline their data collection process.

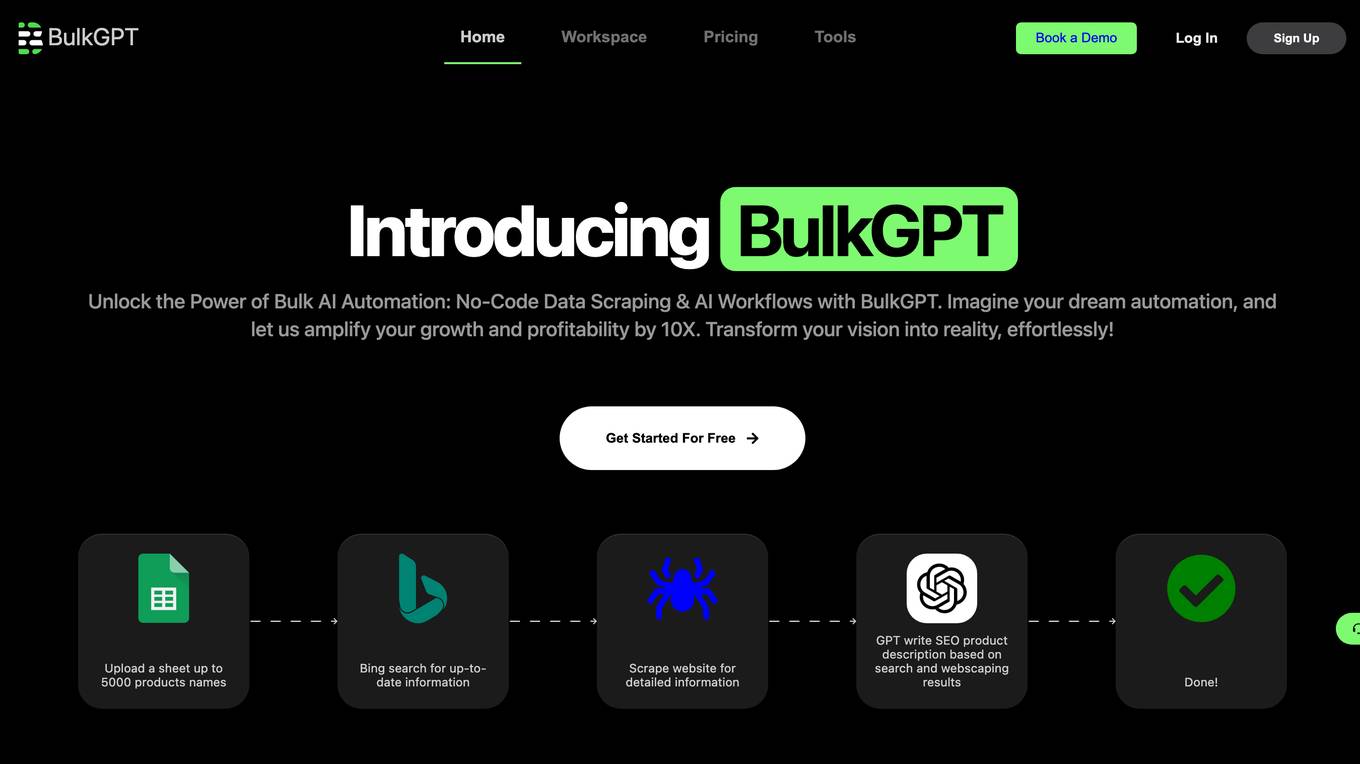

BulkGPT

BulkGPT is a no-code AI workflow automation tool that allows users to build custom workflows for mass web scraping and content creation without the need for coding. It simplifies tasks such as scraping web pages, generating SEO blogs, and creating personalized messages. The tool enables users to upload data, run it in Google Sheets, integrate it with other tools via API, and automate content creation efficiently. BulkGPT offers features like web scraping in Google Sheets, crawling any URL for data extraction, and AI-powered tasks such as SEO content creation, e-commerce product description generation, ChatGPT automation, data scraping, and marketing email campaigns.

Simplescraper

Simplescraper is a web scraping tool that allows users to extract data from any website in seconds. It offers the ability to download data instantly, scrape at scale in the cloud, or create APIs without the need for coding. The tool is designed for developers and no-coders, making web scraping simple and efficient. Simplescraper AI Enhance provides a new way to pull insights from web data, allowing users to summarize, analyze, format, and understand extracted data using AI technology.

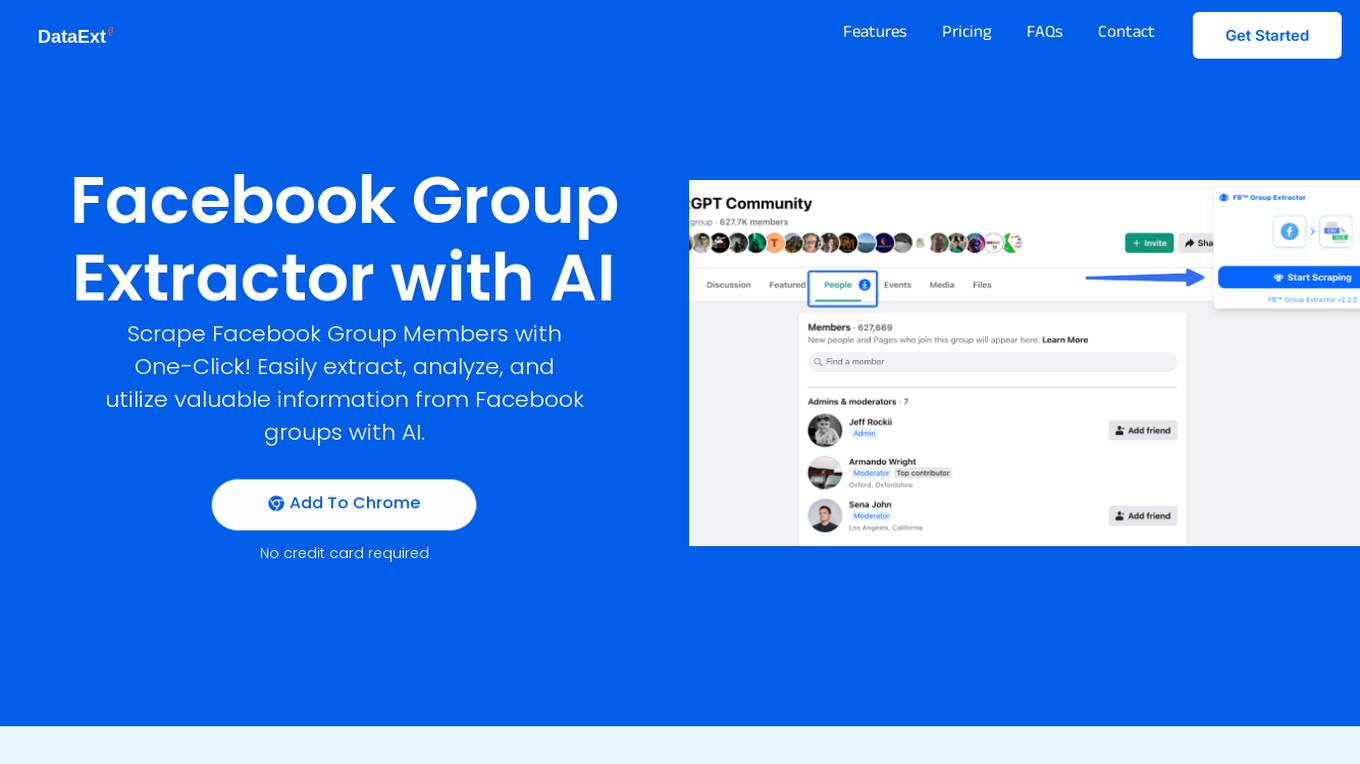

FB Group Extractor

FB Group Extractor is an AI-powered tool designed to scrape Facebook group members' data with one click. It allows users to easily extract, analyze, and utilize valuable information from Facebook groups using artificial intelligence technology. The tool provides features such as data extraction, behavioral analytics for personalized ads, content enhancement, user research, and more. With over 10k satisfied users, FB Group Extractor offers a seamless experience for businesses to enhance their marketing strategies and customer insights.

FetchFox

FetchFox is an AI-powered web scraping tool that allows users to extract data from any website by providing a prompt in plain English. It runs as a Chrome Extension and can bypass anti-scraping measures on sites like LinkedIn and Facebook. FetchFox is designed to quickly gather data for tasks such as lead generation, research data assembly, and market segment analysis.

FinalScout

FinalScout is an AI-powered email finding and outreach tool that helps users find valid email addresses for professionals and craft tailored emails with the assistance of ChatGPT technology. Drawing on a large database of business profiles, company profiles, and email addresses, FinalScout advertises up to 98% email deliverability. Users can leverage EmailAI's AI technology to generate highly personalized outreach emails instead of writing them by hand. The platform is GDPR and CCPA compliant, ensuring the secure handling of business data.

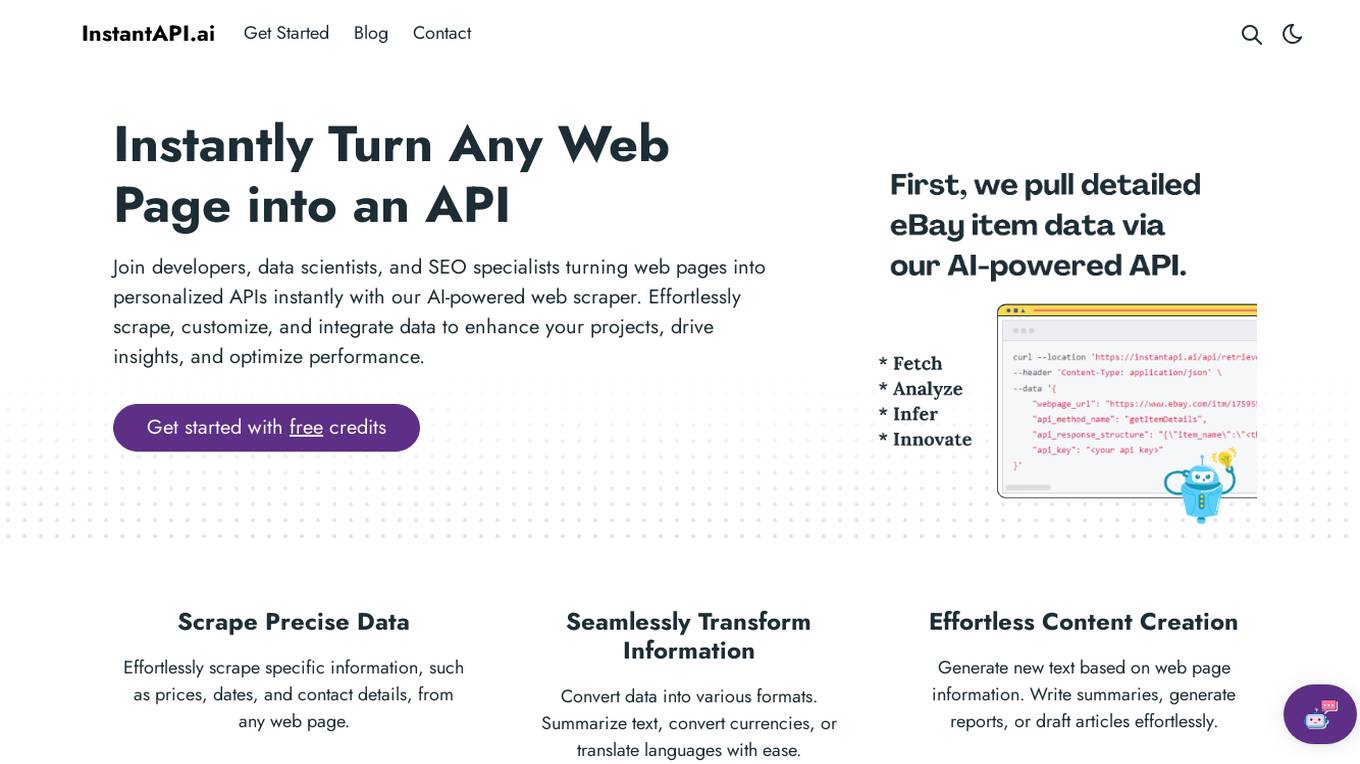

InstantAPI.ai

InstantAPI.ai is an AI-powered web scraping tool that allows developers, data scientists, and SEO specialists to instantly turn any web page into a personalized API. With the ability to effortlessly scrape, customize, and integrate data, users can enhance their projects, drive insights, and optimize performance. The tool offers features such as scraping precise data, transforming information into various formats, generating new content, providing advanced analysis, and extracting valuable insights from data. Users can tailor the output to meet specific needs and unleash creativity by using AI for unique purposes. InstantAPI.ai simplifies the process of web scraping and data manipulation, offering a seamless experience for users seeking to leverage AI technology for their projects.

Linkeddit

Linkeddit is an AI-powered tool designed to help users find potential customers on Reddit who are actively seeking solutions. By analyzing millions of conversations in real-time, Linkeddit identifies high-intent prospects discussing relevant product categories. The tool provides curated lists of decision-makers with verified buying intent, engagement metrics, and context to help convert warm leads into customers. Linkeddit also offers features like direct post links, engagement metrics, buying intent score, export-ready lists, and personalized outreach suggestions, enabling users to efficiently connect with the right audience on Reddit.

IG Lead Gen

IG Lead Gen is an AI-powered tool designed to automate Instagram lead generation for B2B founders. It offers custom lead filtering based on metrics like Follower count, Following count, Age of Lead, Verification Status, and Link in Bio. The tool utilizes proprietary AI technology to identify and scrape active Instagram users likely to convert to customers. Users can effortlessly export leads in various formats through the advanced dashboard. IG Lead Gen aims to streamline the process of generating targeted leads, saving time, and enabling users to focus on growing their business.

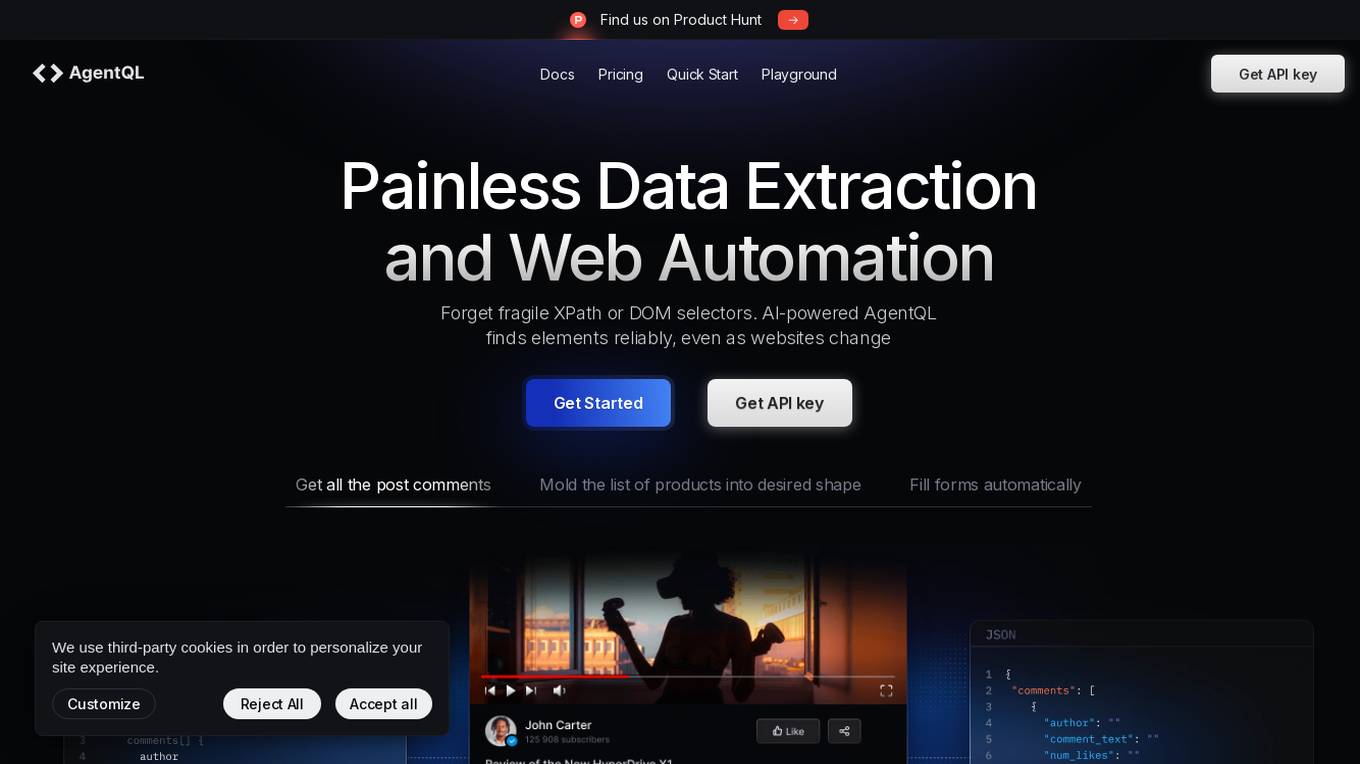

AgentQL

AgentQL is an AI-powered tool for painless data extraction and web automation. It eliminates the need for fragile XPath or DOM selectors by using semantic selectors and natural language descriptions to find web elements reliably. With controlled output and deterministic behavior, AgentQL allows users to shape data exactly as needed. The tool offers features such as extracting data, filling forms automatically, and streamlining testing processes. It is designed to be user-friendly and efficient for developers and data engineers.

Apify

Apify is a full-stack web scraping and data extraction platform that offers ready-made web scrapers for popular websites, serverless program building, and AI access to Actors. It provides solutions for various industries like Enterprise, Startups, and Universities by offering data for generative AI, AI agents, lead generation, market research, and more. Apify also supports web content crawling and extraction for AI models, LLM applications, and RAG pipelines. With a marketplace of over 10,000 Actors, Apify enables users to build custom web scraping solutions and easily integrate with other tools.

Browse AI

Browse AI is a leading AI-powered web scraping and monitoring platform that allows users to scrape, extract, monitor, and integrate data from almost any website with no coding required. With features like AI change detection, dynamic content capture, and 7,000+ integrations, Browse AI offers easy, reliable, and scalable data extraction solutions. Users can transform websites into live datasets, automate data extraction, and monitor website changes effortlessly. The platform caters to various industries and use cases, providing fully managed web scraping services and custom data post-processing for enterprise clients.
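As a rough illustration of what monitoring a page for changes involves, the sketch below fingerprints the extracted text of a page and compares it with the fingerprint from a previous visit. It is not Browse AI's method; the function names `fingerprint` and `has_changed` are hypothetical, and the whitespace and case normalization is an assumption about what should count as "no change".

```python
import hashlib


def fingerprint(text):
    """Return a stable hash of page text, ignoring whitespace and case differences."""
    normalized = " ".join(text.split()).lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def has_changed(previous_fingerprint, current_text):
    """True when the current page text no longer matches the stored fingerprint."""
    return fingerprint(current_text) != previous_fingerprint


# Usage: store the fingerprint from one visit, compare on the next.
stored = fingerprint("Price: 19.99 USD")
print(has_changed(stored, "Price:   19.99 usd"))  # layout-only difference
print(has_changed(stored, "Price: 24.99 USD"))    # real content difference
```

A hash only says that something changed, not what changed; a production monitor would typically also keep the previous text so it can report the difference.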

Firecrawl

Firecrawl is an advanced web crawling and data conversion tool designed to transform any website into clean, LLM-ready markdown. It automates the collection, cleaning, and formatting of web data, streamlining the preparation process for Large Language Model (LLM) applications. Firecrawl is best suited for business websites, documentation, and help centers, offering features like crawling all accessible subpages, handling dynamic content, converting data into well-formatted markdown, and more. It is built by LLM engineers for LLM engineers, providing clean data the way users want it.

Goless

Goless is a browser automation tool that allows users to automate tasks on websites without the need for coding. It offers a range of features such as data scraping, form filling, CAPTCHA solving, and workflow automation. The tool is designed to be easy to use, with a drag-and-drop interface and a marketplace of ready-made workflows. Goless can be used to automate a variety of tasks, including data collection, data entry, website testing, and social media automation.

Airtop

Airtop is a browser automation tool designed for AI agents, allowing users to automate web tasks using natural language commands. It offers inexpensive and scalable AI-powered cloud browsers, enabling effortless scraping and control of any website. Airtop simplifies the process of managing cloud browser infrastructure, freeing users to focus on their core business activities. The tool supports a wide range of use cases, including automating tasks that were previously challenging, such as interacting with sites behind logins and virtualizing the DOM.

Extracto.bot

Extracto.bot is an AI web scraping tool that automates the process of extracting data from websites. It is a no-configuration, intelligent web scraper that allows users to collect data from any site using Google Sheets and AI technology. The tool is designed to be simple, instant, and intelligent, enabling users to save time and effort in collecting and organizing data for various purposes.

Runner H

Runner H is an AI tool that enables users to create, run, and scale web automations effortlessly. It offers a platform for building super intelligence through VLMs, LLMs, and Agents API Beta. Users can join the API beta to access advanced features and functionalities. The tool aims to put AI to work for users, providing a seamless experience for automating tasks and processes.

Magic Loops

Magic Loops is an AI tool that allows users to create automated workflows using ChatGPT automations. Users can connect data, send emails, receive texts, scrape websites, and more. The tool enables users to automate various tasks by creating personalized loops that respond to specific triggers and inputs.

Drippi.ai

Drippi.ai is an AI-powered cold outreach assistant designed to automate personalized outreach messages on Twitter. It utilizes AI technology to streamline lead scraping, lead matching, and message tailoring for improved engagement and reply rates. The platform offers in-depth analytics, lead discovery solutions, and personalized campaigns to enhance users' Twitter DM campaigns. Drippi aims to help users save time and resources by automating the process of crafting highly targeted outreach messages.

1 - Open Source AI Tools

firecrawl

Firecrawl is an API service that takes a URL, crawls it, and converts it into clean markdown. It crawls all accessible subpages and provides clean markdown for each, without requiring a sitemap. The API is easy to use and can be self-hosted. It also integrates with LangChain and LlamaIndex. The Python SDK makes it easy to crawl and scrape websites in Python code.
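To make the "URL in, markdown out" idea concrete, here is a minimal sketch using only the Python standard library. It is not Firecrawl's implementation and does not use the Firecrawl SDK; `MarkdownExtractor`, `html_to_markdown`, and `crawl` are hypothetical names, only headings and paragraphs are converted, and the `fetch` argument is an assumed callable that returns the HTML for a URL.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class MarkdownExtractor(HTMLParser):
    """Collects headings, paragraphs, and same-host links from one HTML page."""

    HEADINGS = {"h1": "# ", "h2": "## ", "h3": "### "}
    SKIPPED = {"script", "style"}

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.blocks = []      # finished markdown blocks
        self.links = set()    # absolute links on the same host
        self._prefix = None   # markdown prefix of the open block, or None
        self._text = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1
        elif tag in self.HEADINGS or tag == "p":
            self._prefix = self.HEADINGS.get(tag, "")
            self._text = []
        elif tag == "a":
            href = dict(attrs).get("href")
            if href:
                url = urljoin(self.base_url, href).split("#")[0]
                if urlparse(url).netloc == urlparse(self.base_url).netloc:
                    self.links.add(url)

    def handle_endtag(self, tag):
        if tag in self.SKIPPED:
            self._skip_depth = max(0, self._skip_depth - 1)
        elif (tag in self.HEADINGS or tag == "p") and self._prefix is not None:
            text = " ".join("".join(self._text).split())
            if text:
                self.blocks.append(self._prefix + text)
            self._prefix = None

    def handle_data(self, data):
        if self._prefix is not None and self._skip_depth == 0:
            self._text.append(data)


def html_to_markdown(html, base_url):
    """Return (markdown, sorted same-host links) for one page of HTML."""
    parser = MarkdownExtractor(base_url)
    parser.feed(html)
    parser.close()
    return "\n\n".join(parser.blocks), sorted(parser.links)


def crawl(start_url, fetch, max_pages=10):
    """Breadth-first crawl of same-host pages; `fetch` maps a URL to HTML text."""
    queue, seen, pages = [start_url], {start_url}, {}
    while queue and len(pages) < max_pages:
        url = queue.pop(0)
        markdown, links = html_to_markdown(fetch(url), url)
        pages[url] = markdown
        for link in links:
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return pages
```

Because `fetch` is passed in, the crawl logic can be tried against an in-memory dictionary of pages before pointing it at a live site, for example with a small wrapper around `urllib.request.urlopen`. Following links discovered on each page, rather than reading a sitemap, is what lets this kind of crawler reach subpages without one.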

17 - OpenAI GPTs

Advanced Web Scraper with Code Generator

Generates web scraping code with accurate selectors.

Scraping GPT Proxy and Web Scraping Tips

Scraping ChatGPT helps you with web scraping and proxy management. It provides advanced tips and strategies for efficiently handling CAPTCHAs and managing IP rotations. Its expertise extends to ethical scraping practices and optimizing proxy usage for seamless data retrieval.

CodeGPT

This GPT can generate code for you. For now it creates full-stack apps using TypeScript. Just describe the feature you want and you will get a link to the GitHub pull request and the live deployed app.

Domain Email Scraper

Assists in ethically finding domain emails, keeping methods confidential.