Best AI tools for Run On Multiple GPUs

20 - AI tool Sites

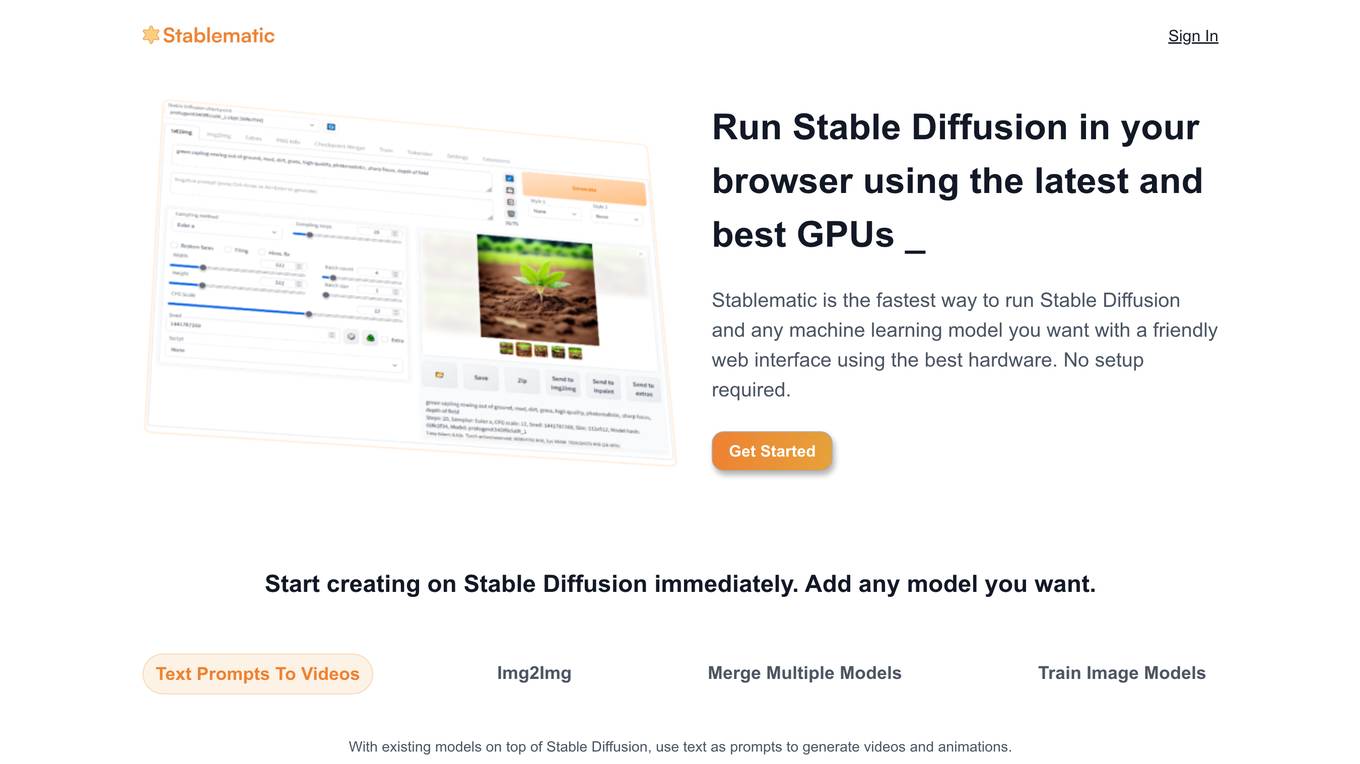

Stablematic

Stablematic is a web-based platform that lets users run Stable Diffusion and other machine learning models without local setup or hardware limitations. It provides a user-friendly interface, pre-installed plugins, and dedicated GPU resources for a seamless and efficient workflow. Users can generate images and videos from text prompts, merge multiple models, train custom models, and access a range of pre-trained models, including Dreambooth and Civitai models. Stablematic also offers API access for developers and dedicated support for users exploring the capabilities of Stable Diffusion and other machine learning models.

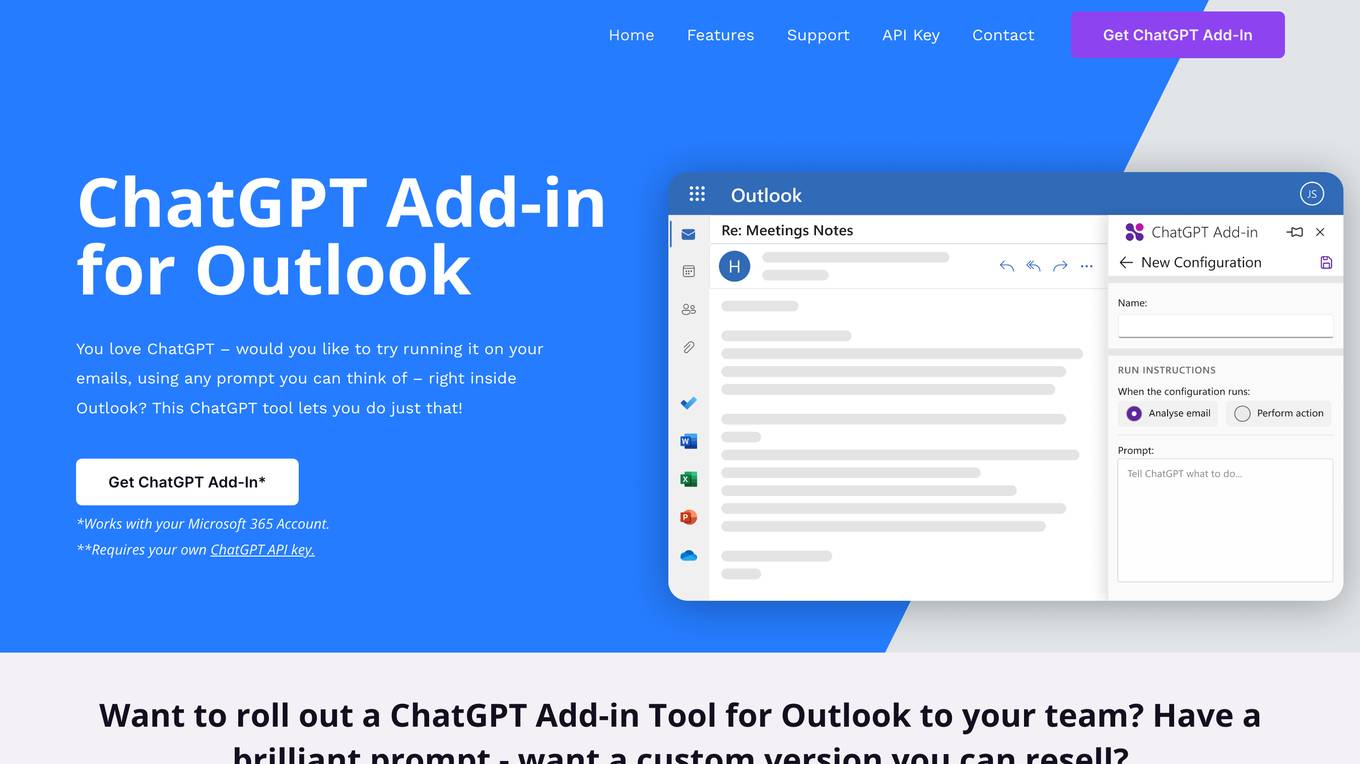

ChatGPT for Outlook

ChatGPT for Outlook is an AI tool that integrates the power of ChatGPT into Microsoft Outlook, allowing users to run ChatGPT on emails to generate summaries, highlights, and important information. Users can create custom prompts, process entire emails or specific parts, manage multiple configurations, and improve email efficiency. Blueberry Consultants, the developer, offers custom versions for businesses and teams, enabling users to resell the tool with unique prompts. The tool enhances productivity and information management within Outlook, leveraging AI technology for email processing and organization.

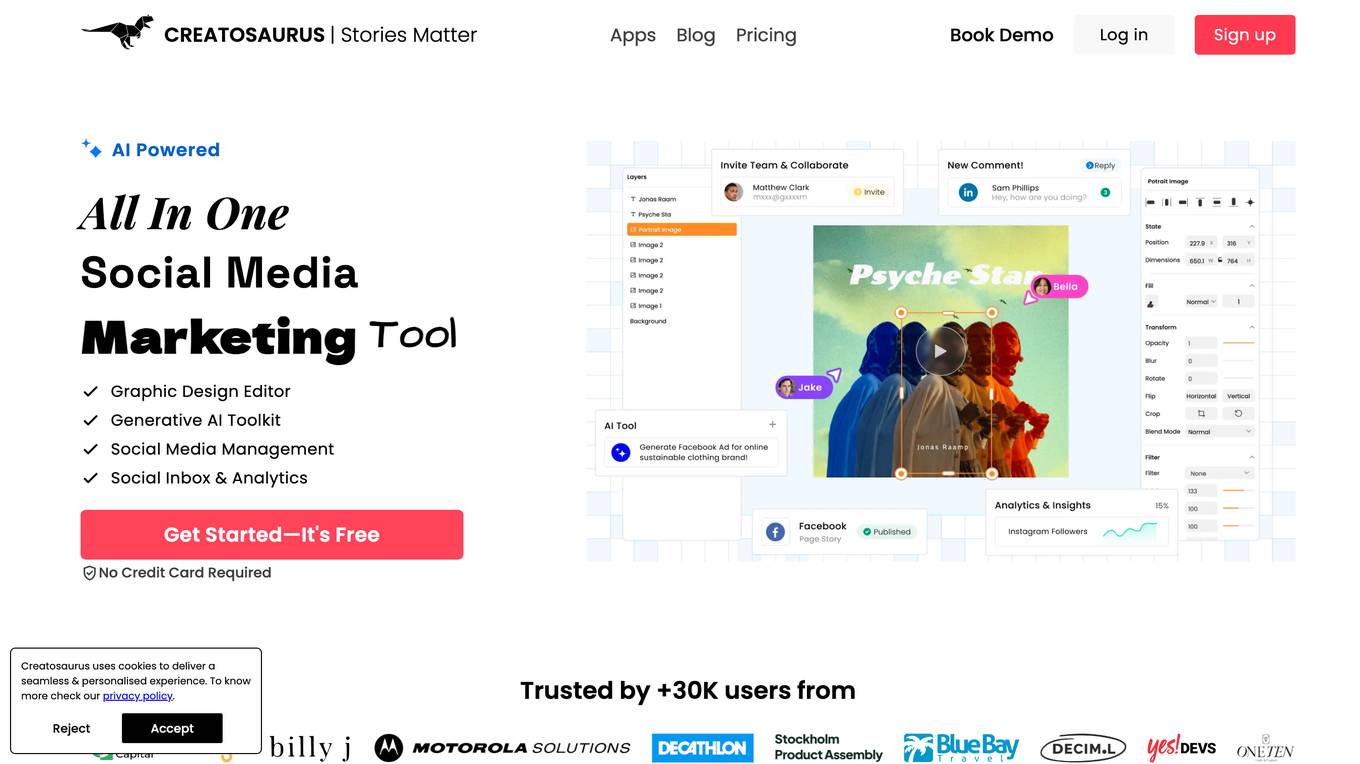

Creatosaurus

Creatosaurus is an all-in-one creative and marketing platform that helps businesses and individuals create, manage, and publish content across multiple social media platforms. It offers a range of features, including a social media scheduler, a graphic design editor, an AI content writer, and a social inbox. Creatosaurus is designed to help businesses save time and money on their marketing efforts, while also improving the quality and reach of their content.

ONNX Runtime

ONNX Runtime is a production-grade AI engine designed to accelerate machine learning training and inferencing in various technology stacks. It supports multiple languages and platforms, optimizing performance for CPU, GPU, and NPU hardware. ONNX Runtime powers AI in Microsoft products and is widely used in cloud, edge, web, and mobile applications. It also enables large model training and on-device training, offering state-of-the-art models for tasks like image synthesis and text generation.

Helium 10

Helium 10 is an AI-powered platform designed for everyday sellers on Amazon, Walmart, and TikTok. It offers a suite of tools to help sellers analyze product potential, optimize listings, manage inventory, and run advertising campaigns. The platform empowers sellers with AI-driven insights to increase profits and expand their brand presence across multiple marketplaces.

Edyt AI

Edyt AI is an AI-powered content optimization tool designed to help users create SEO-optimized content effortlessly. With the ability to generate articles in seconds and run multiple AI iterations on content, Edyt AI streamlines the content creation process. Users can improve existing blogs with just a few clicks, add external and internal links, and optimize content for better rankings. The tool ensures content quality by analyzing thousands of articles and words, making it a valuable asset for content creators and marketers.

Cortex Labs

Cortex Labs is a decentralized world computer that enables AI and AI-powered decentralized applications (dApps) to run on the blockchain. It offers a Layer2 solution called ZkMatrix, which utilizes zkRollup technology to enhance transaction speed and reduce fees. Cortex Virtual Machine (CVM) supports on-chain AI inference using GPU, ensuring deterministic results across computing environments. Cortex also enables machine learning in smart contracts and dApps, fostering an open-source ecosystem for AI researchers and developers to share models. The platform aims to solve the challenge of on-chain machine learning execution efficiently and deterministically, providing tools and resources for developers to integrate AI into blockchain applications.

Functionize

Functionize is an AI-powered test automation platform that helps enterprises improve their product quality and release faster. It uses machine learning to automate test creation, maintenance, and execution, and provides a range of features to help teams collaborate and manage their testing process. Functionize integrates with popular CI/CD tools and DevOps pipelines, and offers a range of pricing options to suit different needs.

Functionize

Functionize is an AI Agentic Automation Platform for Enterprises that offers expert AI agents to handle business processes autonomously. The platform utilizes deep learning neural networks to deliver unparalleled performance across various enterprise applications. Functionize's AI agents run autonomously, self-heal workflows, and redefine efficiency and reliability in automation. The platform provides immediate value with pretrained automation, evolves with operational environments, and ensures seamless adaptability and precision in every task. Functionize helps mitigate risks, unlock gains, and support digital transformation for enterprises.

Tensoic AI

Tensoic AI is an AI tool for fine-tuning and running inference on custom large language models (LLMs). It offers ultra-fast fine-tuning and inference capabilities for enterprise-grade LLMs, with a focus on use case-specific tasks. The tool is efficient, cost-effective, and easy to use, enabling users to outperform general-purpose LLMs using synthetic data. Tensoic AI generates small, powerful models that can run on consumer-grade hardware, making it suitable for a wide range of applications.

Gretel.ai

Gretel.ai is a synthetic data platform purpose-built for AI applications. It allows users to generate artificial, synthetic datasets with the same characteristics as real data, enabling the improvement of AI models without compromising privacy. The platform offers APIs for generating anonymized and safe synthetic data, training generative AI models, and validating models with quality and privacy scores. Users can deploy Gretel for enterprise use cases and run it on various cloud platforms or in their own environment.

ThirdAI

ThirdAI is an AI platform that offers a production-ready solution for building and deploying AI applications quickly and efficiently. It provides advanced AI/GenAI technology that can run on any infrastructure, reducing barriers to delivering production-grade AI solutions. With features like enterprise SSO, built-in models, no-code interface, and more, ThirdAI empowers users to create AI applications without the need for specialized GPU servers or AI skills. The platform covers the entire workflow of building AI applications end-to-end, allowing for easy customization and deployment in various environments.

Captur

Captur is an AI-powered platform that enables users to automate manual image review workflows with easy-to-use APIs. The platform offers real-time guidance to improve user experience, provides AI models for various checks, and helps transform operations for enterprises. Captur's edge AI platform allows developers, product owners, and operations teams to build, test, deploy, and iterate AI models efficiently. The platform is designed to run on-device, ensuring real-time intelligence without lag or weak signals. Captur is a comprehensive solution for deploying edge AI into real-world operations, offering features such as live guidance, quick scanning, confidence-building, and privacy verification.

Cartesia

Cartesia is an AI application that offers real-time multimodal intelligence for every device. It aims to build the next generation of AI by providing ubiquitous, interactive intelligence that can run on any device. It features the fastest, ultra-realistic generative voice API and is backed by research on simple linear attention language models and state-space models. The founding team, who met at the Stanford AI Lab, invented State Space Models (SSMs) and scaled them up to achieve state-of-the-art results across modalities such as text, audio, video, images, and time-series data.

Raman Labs

Raman Labs is an AI tool that offers dedicated modules for computer vision-based tasks. It allows users to integrate machine learning functionality into their existing applications with just 2 lines of code, ensuring real-time performance even with high-resolution data on consumer-grade CPUs. The tool provides a clean and minimalistic API for easy integration. It is robust to large variations in scale and resolution, runs on various platforms, and scales with the computing power of the system.

Juno

Juno is an AI tool designed to enhance data science workflows by providing code suggestions, automatic debugging, and code editing capabilities. It aims to make data science tasks more efficient and productive by assisting users in writing and optimizing code. Juno prioritizes privacy and offers the option to run on private servers for sensitive datasets.

NeuProScan

NeuProScan is an AI platform designed for the early detection of pre-clinical Alzheimer's from MRI scans. It utilizes AI technology to predict the likelihood of developing Alzheimer's years in advance, helping doctors improve diagnosis accuracy and optimize the use of costly PET scans. The platform is fully customizable, user-friendly, and can be run on devices or in the cloud. NeuProScan aims to provide patients and healthcare systems with valuable insights for better planning and decision-making.

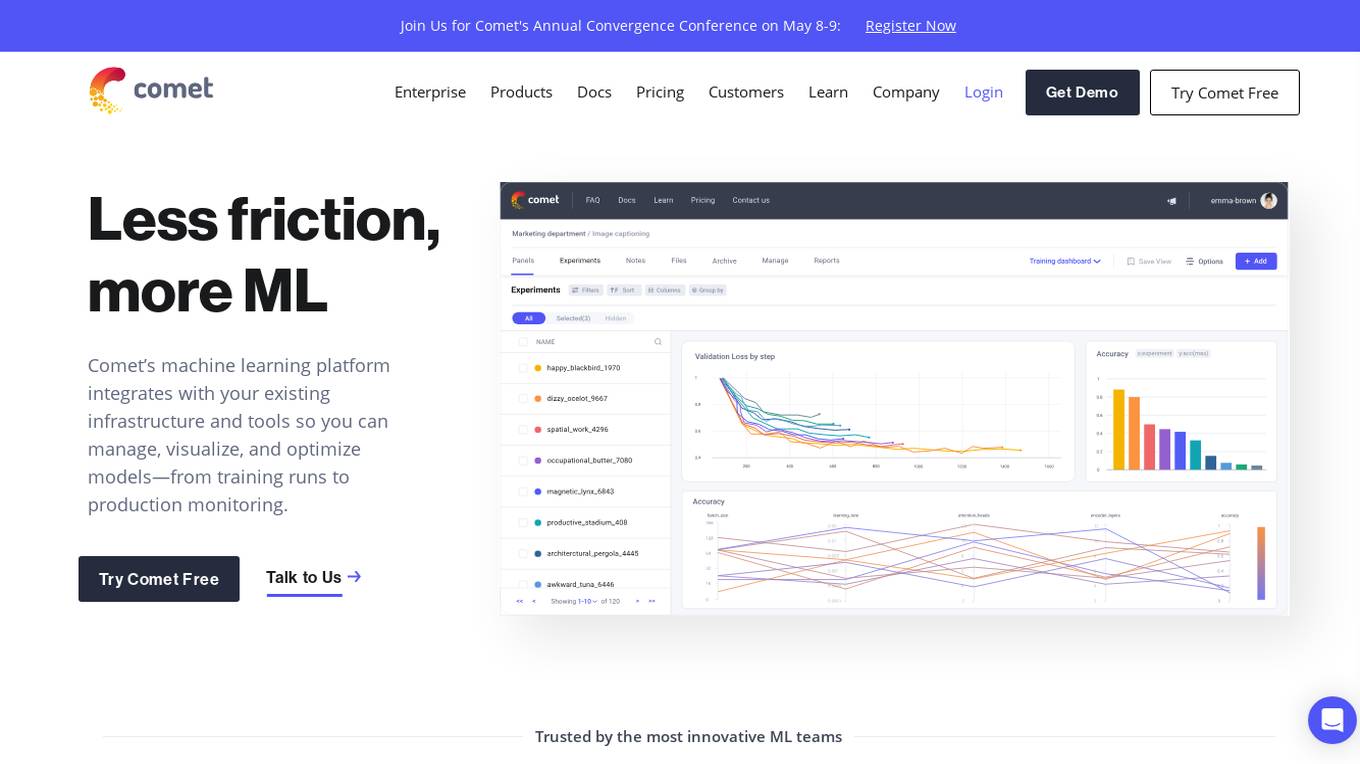

Comet ML

Comet ML is an extensible, fully customizable machine learning platform that aims to move ML forward by supporting productivity, reproducibility, and collaboration. It integrates with existing infrastructure and tools to manage, visualize, and optimize models from training runs to production monitoring. Users can track and compare training runs, create a model registry, and monitor models in production all in one platform. Comet's platform can be run on any infrastructure, enabling users to reshape their ML workflow and bring their existing software and data stack.

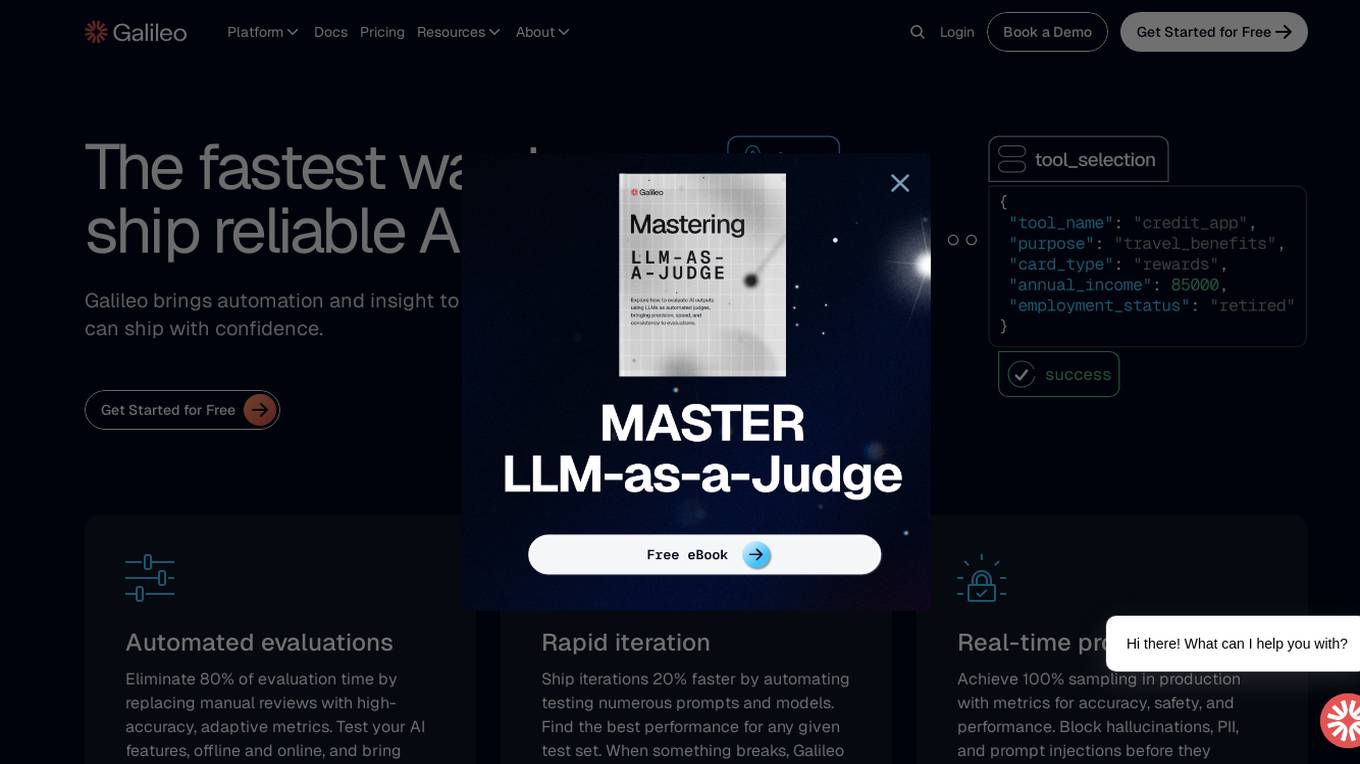

Galileo AI

Galileo AI is a platform that automates evaluations for AI applications so that teams can ship reliably and with confidence. It helps eliminate 80% of evaluation time by replacing manual reviews with high-accuracy metrics, enabling rapid iteration, real-time protection, and end-to-end visibility into agent completions. Galileo also allows developers to take control of AI complexity, de-risk AI in production, and deploy AI applications flexibly across different environments. The platform is trusted by enterprises and loved by developers for its accuracy, low latency, and ability to run on L4 GPUs.

ADXL

ADXL is an AI-powered platform that revolutionizes digital marketing by offering efficient, smart, and affordable solutions for global advertisers. It provides multi-channel AI automation to help businesses achieve their marketing goals, expand their reach, and enhance control over their advertising campaigns. With features like AI-optimized copy, automated retargeting, cross-channel optimization, and instant lead ads, ADXL simplifies ad management and delivers superior results beyond human limits. The platform is designed to streamline ad creation, distribution, and management, enabling users to reach their sales goals with minimal effort and cost. ADXL eliminates the need for technical skills and expertise, making it accessible to marketing managers, small business owners, and agencies looking to scale their advertising efforts.

1 - Open Source AI Tools

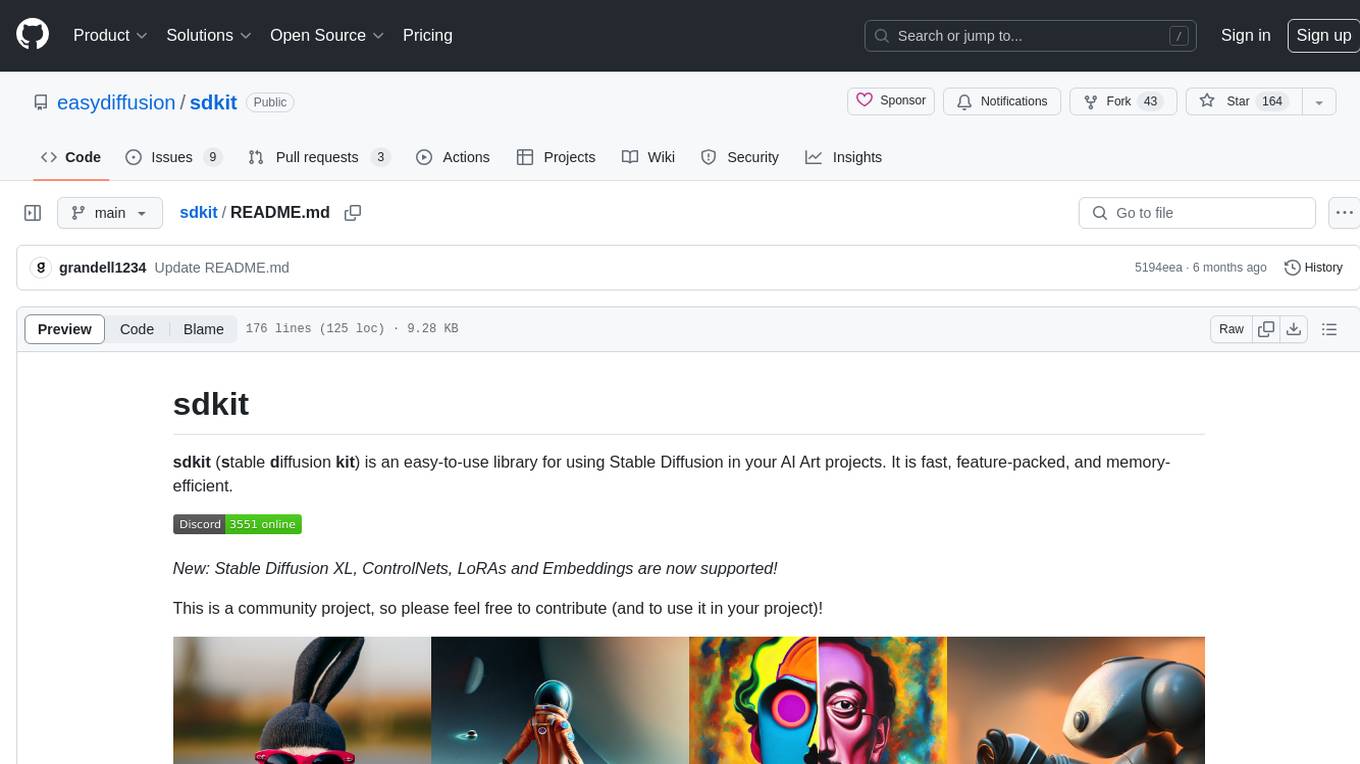

sdkit

sdkit (stable diffusion kit) is an easy-to-use library for utilizing Stable Diffusion in AI Art projects. It includes features like ControlNets, LoRAs, Textual Inversion Embeddings, GFPGAN, CodeFormer for face restoration, RealESRGAN for upscaling, k-samplers, support for custom VAEs, NSFW filter, model-downloader, parallel GPU support, and more. It offers a model database, auto-scanning for malicious models, and various optimizations. The API consists of modules for loading models, generating images, filters, model merging, and utilities, all managed through the sdkit.Context object.
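As a rough illustration of what "parallel GPU support" can mean in practice, the sketch below spreads a list of prompts across several devices, with one worker thread per device pulling from a shared queue. This is not the sdkit API: `render`, `run_on_devices`, and the device names are hypothetical stand-ins chosen for this example, and a real setup would replace `render` with an actual image-generation call bound to that device.

```python
import queue
import threading


def render(device: str, prompt: str) -> str:
    # Hypothetical stand-in for a real image-generation call on `device`.
    return f"{prompt} @ {device}"


def run_on_devices(devices, prompts):
    """Spread prompts across devices, one worker thread per device."""
    tasks = queue.Queue()
    for index, prompt in enumerate(prompts):
        tasks.put((index, prompt))

    # Results are stored by prompt index so output order matches input order.
    results = [None] * len(prompts)

    def worker(device):
        while True:
            try:
                index, prompt = tasks.get_nowait()
            except queue.Empty:
                return  # No work left for this device.
            results[index] = render(device, prompt)

    threads = [threading.Thread(target=worker, args=(d,)) for d in devices]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return results


if __name__ == "__main__":
    for line in run_on_devices(["cuda:0", "cuda:1"], ["a castle", "a forest", "a river"]):
        print(line)
```

A shared queue keeps faster devices busy instead of fixing the split up front; which device handles which prompt can vary between runs.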

20 - OpenAI GPTs

Community Design™

A community-building GPT based on the wildly popular Community Design™ framework from Mighty Networks. Start creating communities that run themselves.

Unix Shell Simulator with Visuals

UNIX terminal responses with OS process visuals. (on or off) [off] by default until GPT-4 behaves better... Bash profiles and advanced memory system for realistic bash simulation. V1 (beta)

Dungeon Maestro

D&D 5e Dungeon Master based on the SRD ruleset. Rich storytelling and an infinite adventure!

Flutter Tools nvim Guide

Explains Flutter-tools for Neovim, focusing on basics and troubleshooting.

Digital Marketing Coach

Guiding you through digital media, focusing on asking the right questions and understanding answers in marketing.

Amazon Seller Assistant

Expert in Amazon selling, providing precise guidance on various Amazon-related issues.

ChatEUC

Your expert guide for all things EUC, with a focus on battery safety, maintenance, and protective gear.

Consulting & Investment Banking Interview Prep GPT

Run mock interviews, review content, and get tips to ace strategy consulting and investment banking interviews.

Dungeon Master's Assistant

Your new DM's screen: helping Dungeon Masters to craft & run amazing D&D adventures.

Database Builder

Hosts a real SQLite database and helps you create tables, make schema changes, and run SQL queries, ideal for all levels of database administration.
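For readers unfamiliar with the three operations named here, the following minimal sketch shows them against an in-memory SQLite database using Python's standard `sqlite3` module. The `tools` table and its rows are made up for illustration and say nothing about how this GPT stores data.

```python
import sqlite3

# In-memory database, so nothing is written to disk.
conn = sqlite3.connect(":memory:")

# 1. Create a table (hypothetical schema).
conn.execute("CREATE TABLE tools (id INTEGER PRIMARY KEY, name TEXT NOT NULL)")

# 2. Make a schema change: add a column to the existing table.
conn.execute("ALTER TABLE tools ADD COLUMN category TEXT")

# 3. Insert rows and run a query, using placeholders for the values.
conn.executemany(
    "INSERT INTO tools (name, category) VALUES (?, ?)",
    [("sdkit", "open source"), ("ONNX Runtime", "runtime")],
)
conn.commit()

rows = conn.execute("SELECT name, category FROM tools ORDER BY name").fetchall()
for name, category in rows:
    print(name, "-", category)
```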

Restaurant Startup Guide

Meet the Restaurant Startup Guide GPT: your friendly guide in the restaurant biz. It offers casual, approachable advice to help you start and run your own restaurant with ease.

Code Helper for Web Application Development

Friendly web assistant for efficient code. Ask the wizard to create an application and you will get the HTML, CSS, and JavaScript code ready to run your web application.