Best AI tools for Reformat Function

1 - AI Tool Sites

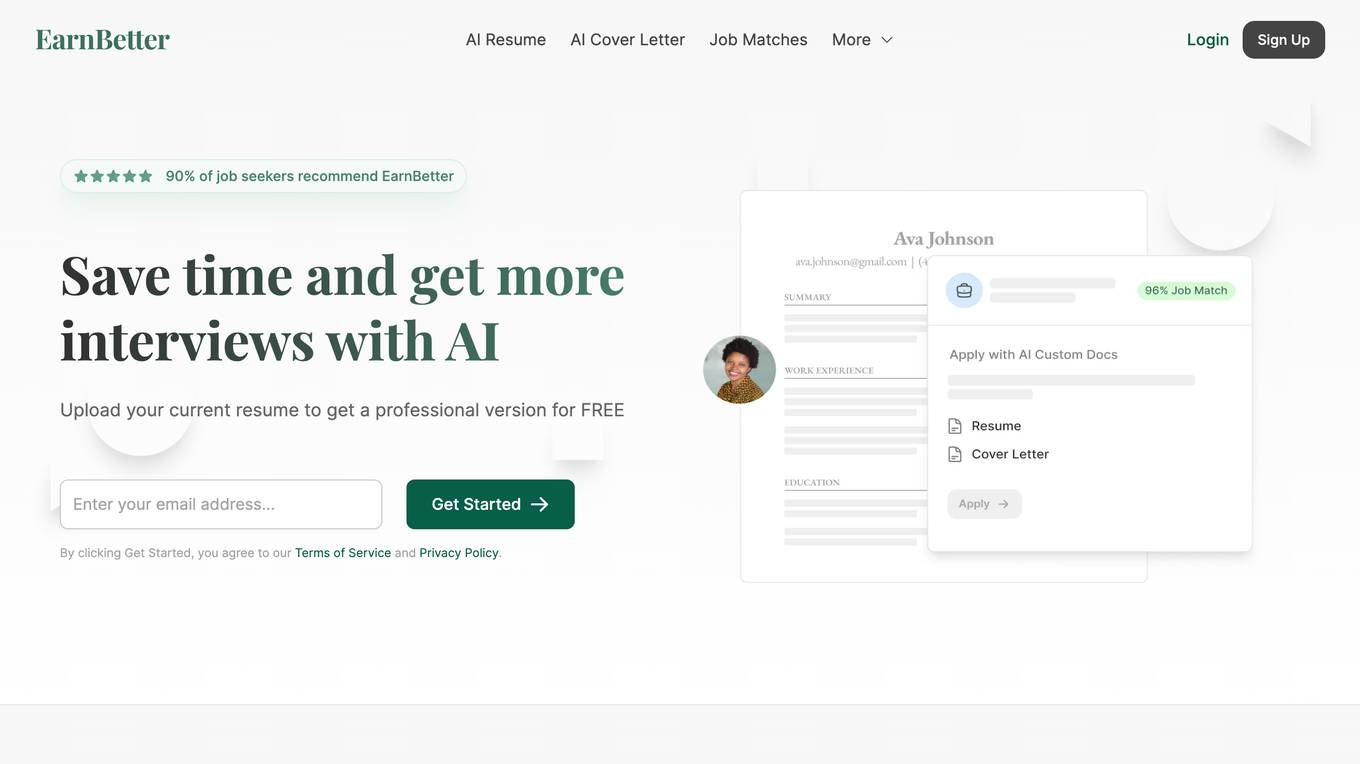

EarnBetter

EarnBetter is an AI-powered platform that helps users create professional resumes and cover letters and supports their job search. It uses artificial intelligence to rewrite and reformat resumes, generate tailored cover letters, provide personalized job matches, and offer interview support. Users start by uploading their current resume, then access a range of features to improve their job search. EarnBetter aims to streamline the job search experience by providing free, unlimited, professional document creation services.

1 - Open Source AI Tools

gptel-aibo

gptel-aibo is an AI writing assistant built on top of gptel. It helps users create and manage content in Emacs, including code, documentation, and novels. Users interact with a large language model (LLM) to receive suggestions and apply them easily. Its features include sending requests, applying suggestions, and completing content at the current position based on context. Users can customize its settings, including Emacs face settings, to suit their preferences. gptel-aibo aims to improve productivity and efficiency in content creation and management within the Emacs environment.