Best AI tools for Model Environments

20 - AI tool Sites

Luma AI

Luma AI is an AI application that specializes in AI video generation using advanced models like Ray3 and Dream Machine. The platform aims to provide production-ready images and videos with precision, speed, and control. Luma AI focuses on building multimodal general intelligence to generate, understand, and operate in the physical world, catering to a new era of creativity and human expression.

Luma AI

Luma AI is a 3D capture platform that allows users to create interactive 3D scenes from videos. With Luma AI, users can capture 3D models of people, objects, and environments, and then use those models to create interactive experiences such as virtual tours, product demonstrations, and training simulations.

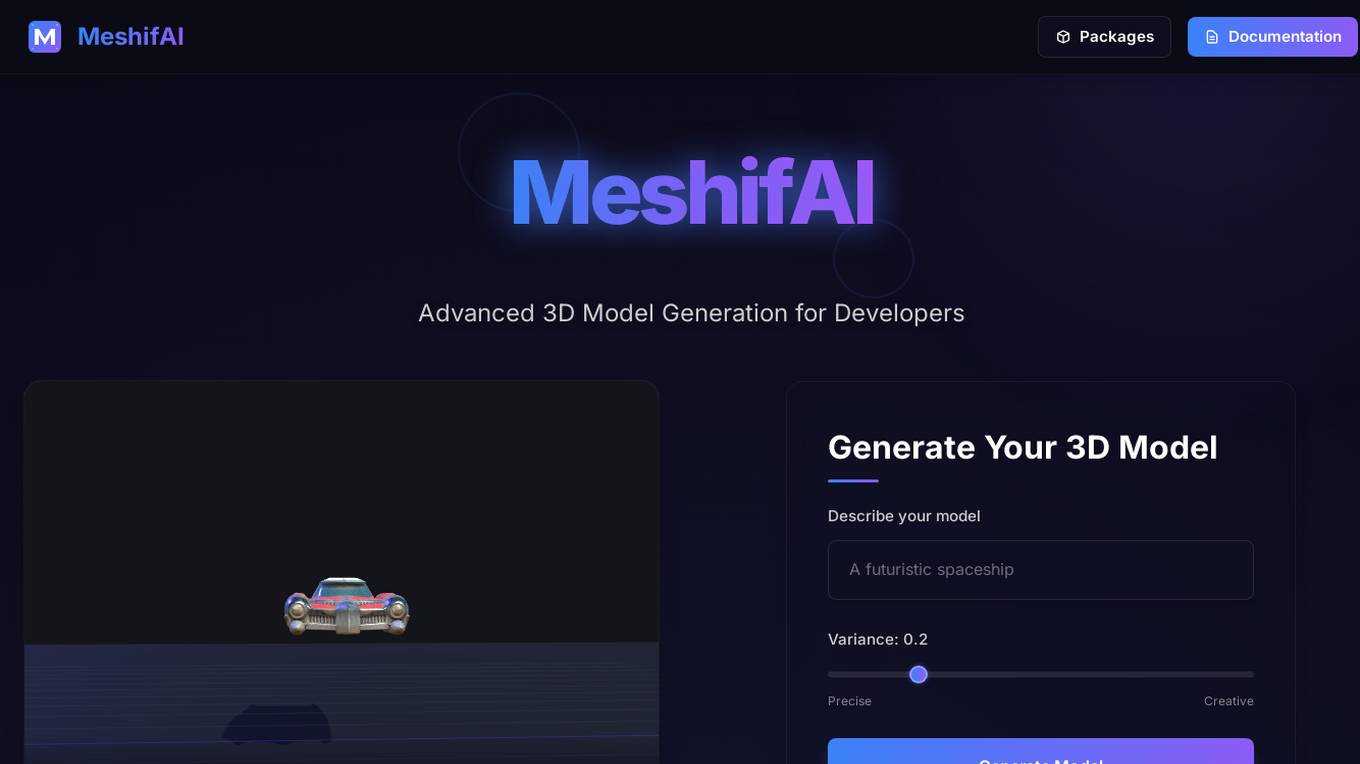

MeshifAI

MeshifAI is a Text-to-3D Generation Platform designed for developers to easily generate 3D models using advanced AI technology. The platform offers powerful tools to incorporate AI-powered 3D generation capabilities into applications, supporting JavaScript and Unity Engine environments. Developers can describe their models, specify variance, and create precise and creative 3D models with ease. MeshifAI simplifies the process of generating custom 3D models from text descriptions, providing quick and efficient solutions for developers seeking to enhance their projects with AI-generated content.

Industrial Engineer AI

Industrial Engineer AI is an advanced AI tool designed to assist industrial engineers in optimizing processes and improving efficiency in manufacturing environments. The application utilizes machine learning algorithms to analyze data, identify bottlenecks, and suggest solutions for streamlining operations. With its user-friendly interface and powerful capabilities, Industrial Engineer AI is a valuable tool for professionals looking to enhance productivity and reduce costs in industrial settings.

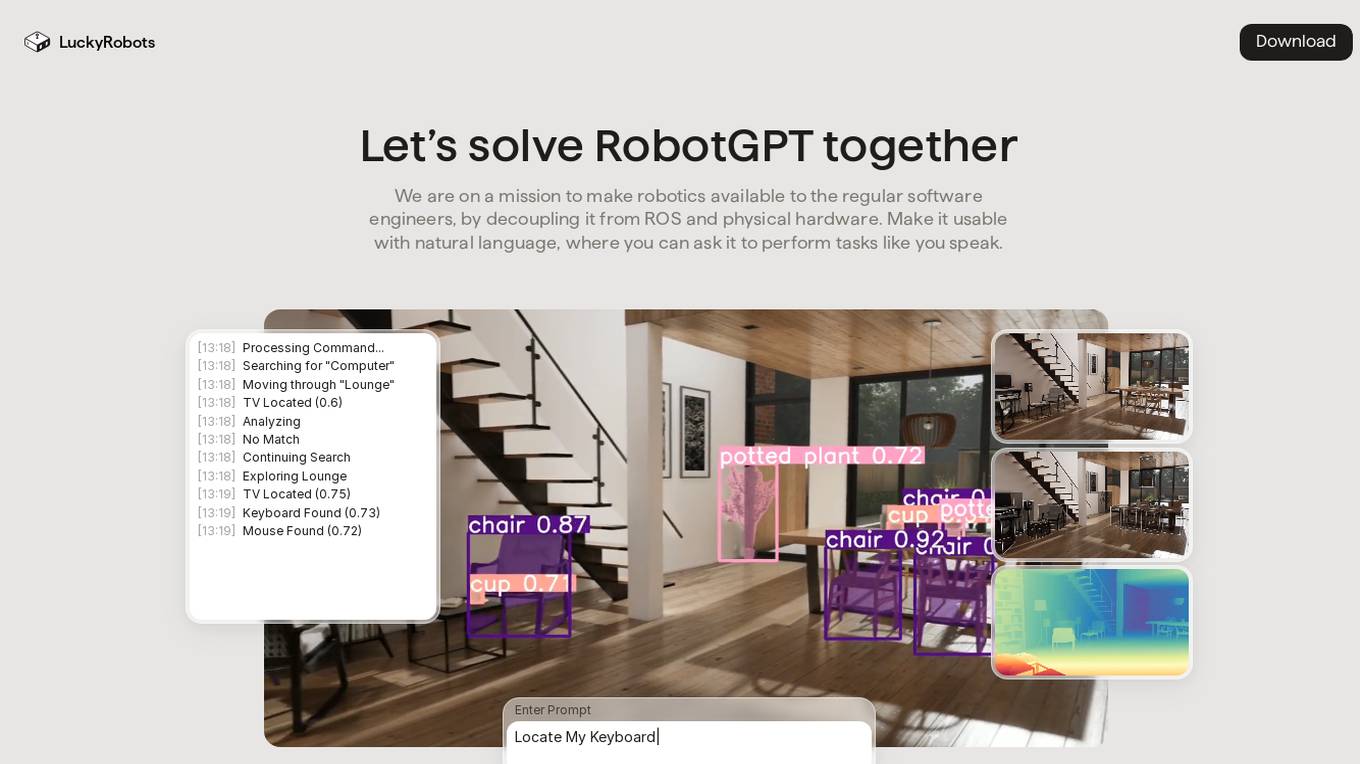

LuckyRobots

LuckyRobots is an AI tool designed to make robotics accessible to software engineers by providing a simulation platform for deploying end-to-end AI models. The platform allows users to interact with robots using natural language commands, explore virtual environments, test robot models in realistic scenarios, and receive camera feeds for monitoring. LuckyRobots aims to train AI models on real-world simulations and respond to natural language inputs, offering a user-friendly and innovative approach to robotics development.

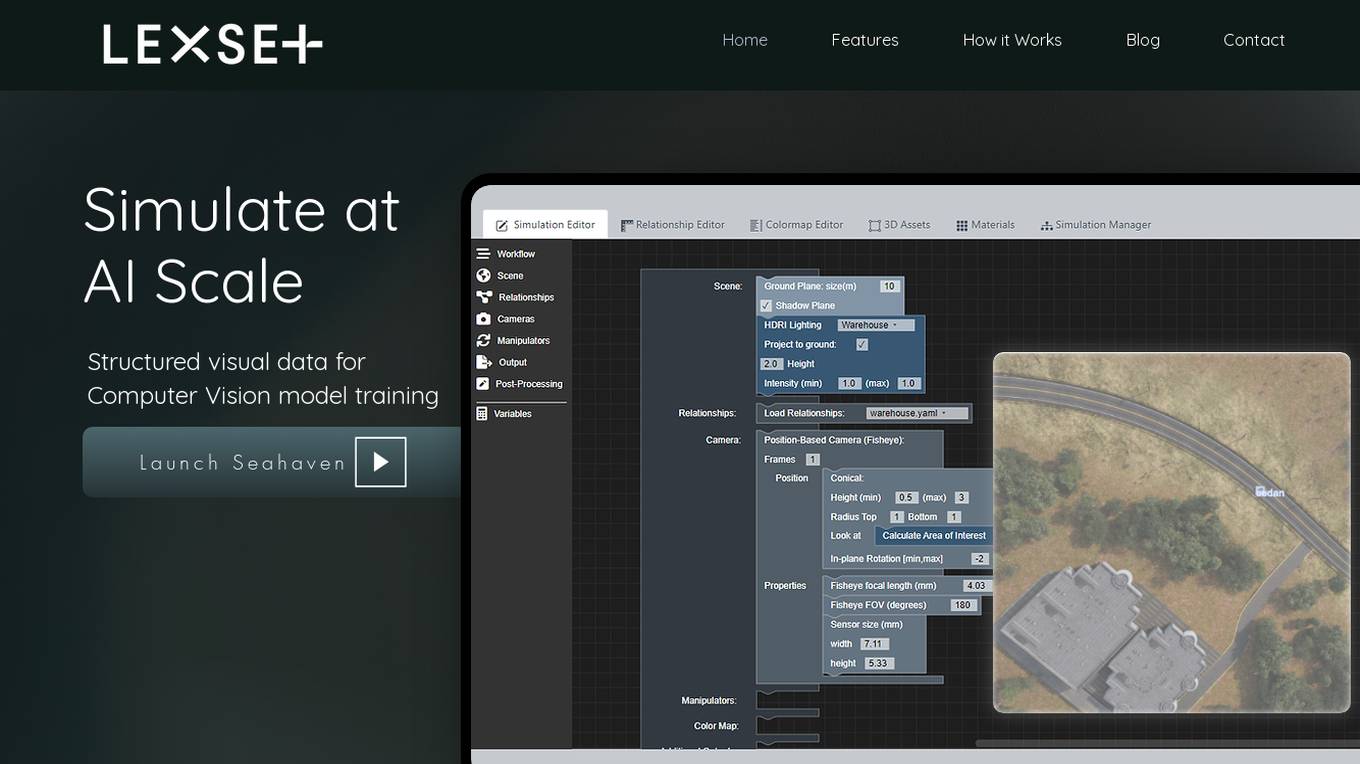

Lexset

Lexset is an AI tool that provides synthetic data generation services for computer vision model training. It offers a no-code interface to create unlimited data with advanced camera controls and lighting options. Users can simulate AI-scale environments, composite objects into images, and create custom 3D scenarios. Lexset also provides access to GPU nodes, dedicated support, and feature development assistance. The tool aims to improve object detection accuracy and optimize generalization on high-quality synthetic data.

Together AI

Together AI is an AI Acceleration Cloud platform that offers fast inference, fine-tuning, and training services. It provides self-service NVIDIA GPUs, model deployment on custom hardware, an AI chat app, a code execution sandbox, and tools to find the right model for specific use cases. The platform also includes a model library with open-source models, documentation for developers, and resources for advancing open-source AI. Together AI enables users to leverage pre-trained models, fine-tune them, or build custom models from scratch, catering to various generative AI needs.

Valohai

Valohai is a scalable MLOps platform that enables Continuous Integration/Continuous Deployment (CI/CD) for machine learning and pipeline automation on-premises and across various cloud environments. It helps streamline complex machine learning workflows by offering framework-agnostic ML capabilities, automatic versioning with complete lineage of ML experiments, hybrid and multi-cloud support, scalability and performance optimization, streamlined collaboration among data scientists, IT, and business units, and smart orchestration of ML workloads on any infrastructure. Valohai also provides a knowledge repository for storing and sharing the entire model lifecycle, facilitating cross-functional collaboration, and allowing developers to build with total freedom using any libraries or frameworks.

Alluxio

Alluxio is a data orchestration platform designed for the cloud, offering seamless access, management, and running of AI/ML workloads. Positioned between compute and storage, Alluxio provides a unified solution for enterprises to handle data and AI tasks across diverse infrastructure environments. The platform accelerates model training and serving, maximizes infrastructure ROI, and ensures seamless data access. Alluxio addresses challenges such as data silos, low performance, data engineering complexity, and high costs associated with managing different tech stacks and storage systems.

EZClaws

EZClaws is an AI tool designed for one-click OpenClaw hosting, allowing users to deploy and manage OpenClaw instances with ease. It offers a fully managed platform for hosting AI agents, powered by world-class AI models like GPT-4 and Claude. EZClaws simplifies the deployment process by handling server setup, SSH keys, Docker configs, and more, all through a user-friendly interface. With features such as automated provisioning, isolated and encrypted environments, built-in usage tracking, and quick deployment times, EZClaws streamlines the hosting experience for AI enthusiasts and developers.

WTRI

WTRI is an AI application that offers FutureView™, a suite of tools designed to help individuals and businesses rehearse their future scenarios in a virtual environment. By leveraging cognitive agility assessment, event generation, modeling, and virtual world platforms, WTRI aims to assist users in making agile decisions and adapting to rapidly changing business environments. With a focus on risk management and outcome optimization, WTRI provides a unique approach to strategic planning and preparedness.

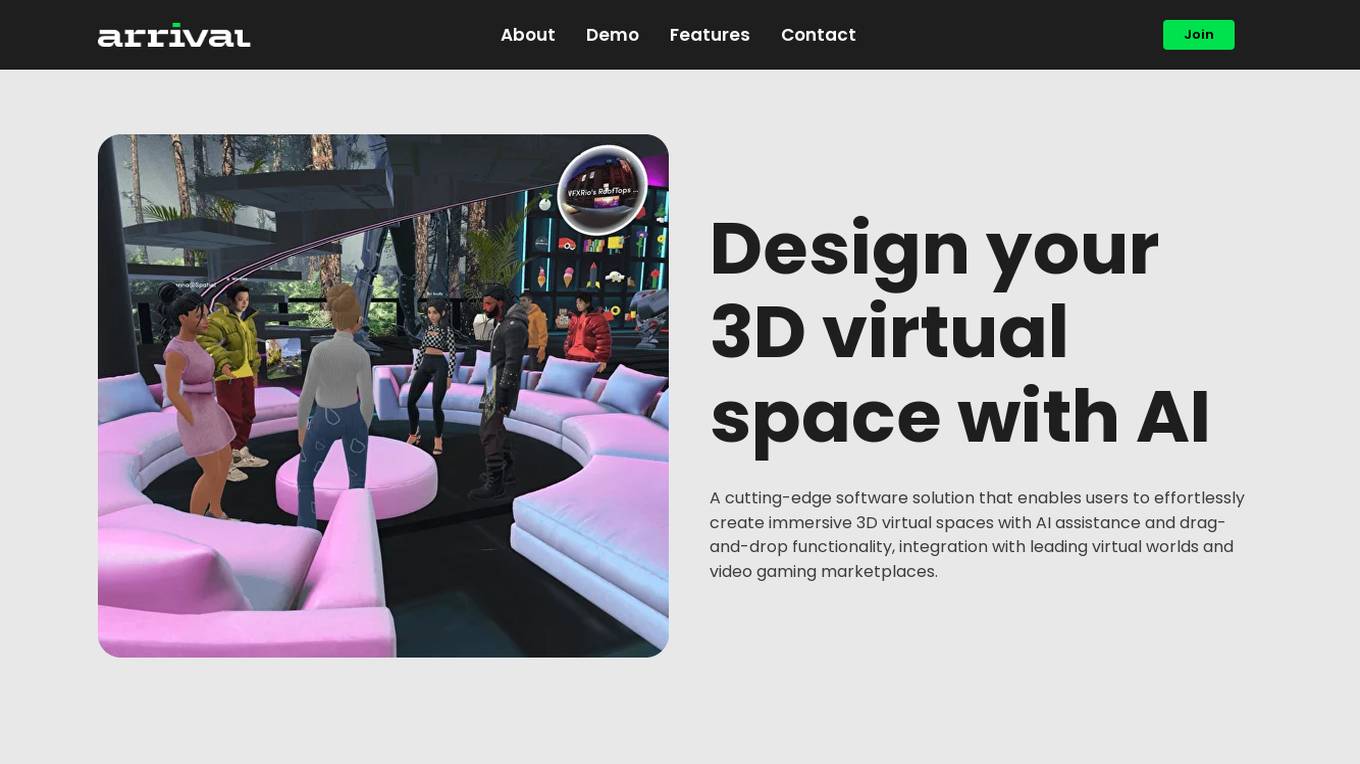

Arrival

Arrival is a cutting-edge software solution that allows users to design 3D virtual spaces with AI assistance and drag-and-drop functionality. It enables effortless creation of immersive environments by utilizing a built-in text-to-3D ML model, a user-friendly drag & drop interface, and seamless integration with leading virtual worlds and video gaming marketplaces.

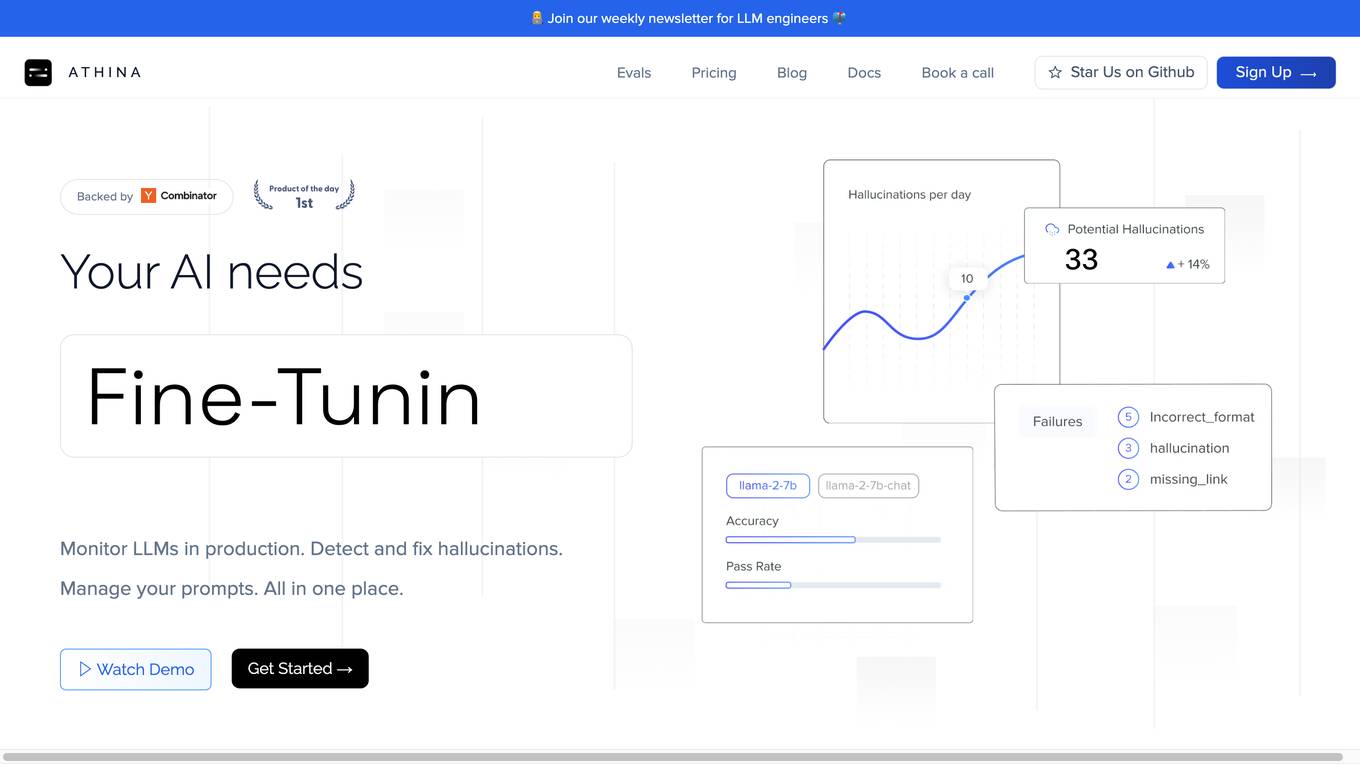

Athina AI

Athina AI is a comprehensive platform designed to monitor, debug, analyze, and improve the performance of Large Language Models (LLMs) in production environments. It provides a suite of tools and features that enable users to detect and fix hallucinations, evaluate output quality, analyze usage patterns, and optimize prompt management. Athina AI supports integration with various LLMs and offers a range of evaluation metrics, including context relevancy, harmfulness, summarization accuracy, and custom evaluations. It also provides a self-hosted solution for complete privacy and control, a GraphQL API for programmatic access to logs and evaluations, and support for multiple users and teams. Athina AI's mission is to empower organizations to harness the full potential of LLMs by ensuring their reliability, accuracy, and alignment with business objectives.

crewAI

crewAI is a platform for Multi AI Agents Systems that offers a user-friendly framework for automating workflows with AI agents. It simplifies the process of building and deploying multi-agent automations, providing support for various AI models and templates. With a focus on privacy and security, crewAI ensures that each agent runs in isolated environments. The platform is suitable for enterprises and developers looking to leverage AI technologies effectively.

TitanML

TitanML is a platform that provides tools and services for deploying and scaling Generative AI applications. Their flagship product, the Titan Takeoff Inference Server, helps machine learning engineers build, deploy, and run Generative AI models in secure environments. TitanML's platform is designed to make it easy for businesses to adopt and use Generative AI, without having to worry about the underlying infrastructure. With TitanML, businesses can focus on building great products and solving real business problems.

H2O.ai

H2O.ai is a leading AI platform that offers a range of open-source and enterprise solutions for machine learning and AI applications. The platform includes products such as H2O-3, H2O Wave, Sparkling Water, H2O AI Cloud, H2O Driverless AI, and more. H2O.ai aims to democratize AI by providing tools for building, deploying, and managing AI/ML models in various environments, including the cloud. The platform also emphasizes explainable AI to enhance transparency and trustworthiness in AI applications.

Deepfake Detection Challenge Dataset

The Deepfake Detection Challenge Dataset is a project initiated by Facebook AI to accelerate the development of new ways to detect deepfake videos. The dataset consists of over 100,000 videos and was created in collaboration with industry leaders and academic experts. It includes two versions: a preview dataset with 5k videos and a full dataset with 124k videos, each featuring facial modification algorithms. The dataset was used in a Kaggle competition to create better models for detecting manipulated media. The top-performing models achieved high accuracy on the public dataset but faced challenges when tested against the black box dataset, highlighting the importance of generalization in deepfake detection. The project aims to encourage the research community to continue advancing in detecting harmful manipulated media.

Qubinets

Qubinets is a cloud data environment platform that provides building blocks for big data, AI, web, and mobile environments. It is an open-source, no lock-in, secured, and private platform that can be used on any cloud, including AWS, DigitalOcean, Google Cloud, and Microsoft Azure. Qubinets makes it easy to plan, build, and run data environments, and it saves time and money by reducing the grunt work in setup and provisioning.

Synthesis AI

Synthesis AI is a synthetic data platform that enables more capable and ethical computer vision AI. It provides on-demand labeled images and videos, photorealistic images, and 3D generative AI to help developers build better models faster. Synthesis AI's products include Synthesis Humans, which allows users to create detailed images and videos of digital humans with rich annotations; Synthesis Scenarios, which enables users to craft complex multi-human simulations across a variety of environments; and a range of applications for industries such as ID verification, automotive, avatar creation, virtual fashion, AI fitness, teleconferencing, visual effects, and security.

SoraWebui

SoraWebui is an open-source web platform that simplifies video creation by allowing users to generate videos from text using OpenAI's Sora model. It provides an easy-to-use interface and one-click website deployment, making it accessible to both professionals and enthusiasts in video production and AI technology. SoraWebui also includes a simulated version of the Sora API called FakeSoraAPI, which allows developers to start developing and testing their projects in a mock environment.

1 - Open Source AI Tools

AI4U

AI4U is a tool that provides a framework for modeling virtual reality and game environments. It offers an alternative approach to modeling Non-Player Characters (NPCs) in the Godot game engine. AI4U defines an agent that lives in an environment and interacts with it through sensors and actuators. Sensors provide data to the agent's brain, while actuators send actions from the agent to the environment. The brain processes the sensor data and makes decisions, selecting an action at each time step. AI4U can also be used in other situations, such as modeling environments for artificial intelligence experiments.
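The sensor, brain, and actuator loop described above can be illustrated with a small Python sketch. This is not AI4U's actual API: every class and function name here (`Environment`, `DistanceSensor`, `Brain`, `MoveActuator`, `Agent`, `run_episode`) is hypothetical, and the one-dimensional world is a toy chosen only to show how data might flow from sensor to brain to actuator at each time step.

```python
class Environment:
    """Hypothetical toy world: an agent and a target on a 1-D line."""

    def __init__(self, agent_pos=0, target_pos=3):
        self.agent_pos = agent_pos
        self.target_pos = target_pos


class DistanceSensor:
    """Sensor: reads the signed distance from the agent to the target."""

    def read(self, env):
        return env.target_pos - env.agent_pos


class MoveActuator:
    """Actuator: applies the chosen action (-1, 0, or +1) to the environment."""

    def act(self, env, action):
        env.agent_pos += action


class Brain:
    """Brain: maps a sensor reading to an action, one decision per time step."""

    def decide(self, observation):
        if observation > 0:
            return 1
        if observation < 0:
            return -1
        return 0


class Agent:
    """Agent: wires one sensor, one brain, and one actuator together."""

    def __init__(self, sensor, brain, actuator):
        self.sensor = sensor
        self.brain = brain
        self.actuator = actuator

    def step(self, env):
        observation = self.sensor.read(env)       # environment -> sensor -> brain
        action = self.brain.decide(observation)   # brain selects an action
        self.actuator.act(env, action)            # brain -> actuator -> environment
        return action


def run_episode(env, agent, max_steps=10):
    """Run the loop until the brain chooses to stay put or max_steps is reached."""
    actions = []
    for _ in range(max_steps):
        action = agent.step(env)
        actions.append(action)
        if action == 0:
            break
    return actions


if __name__ == "__main__":
    env = Environment(agent_pos=0, target_pos=3)
    agent = Agent(DistanceSensor(), Brain(), MoveActuator())
    print(run_episode(env, agent))  # prints [1, 1, 1, 0]
```

Keeping the sensor, brain, and actuator as separate objects means the decision logic can be swapped (for example, for a learned policy) without touching how the environment is read or modified.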

20 - OpenAI GPTs

EIA model

Generates environmental impact assessment templates based on specific global locations and parameters.

HydroGPT

HydroGPT is an expert in water resources engineering, specializing in hydrology, hydraulics, and drainage design. It provides detailed assistance in modeling concepts, methodologies, scopes of work, and drainage report writing, including aerial image analysis.

LiDAR GPT - LAStools Comprehensive Expert

Expert in LAStools with in-depth command line knowledge.

Blender Buddy AI

Concise and helpful expert in Blender 3D, guiding users in all aspects of 3D creation.

LFG GPT

Chat with the paper "Navigation with Large Language Models: Semantic Guesswork as a Heuristic for Planning" (LFG).

GaiaAI

The pressing environmental issues we face today require novel approaches and technological advancements to effectively mitigate their impacts. GaiaAI offers a range of tools and modes to promote sustainable practices and enhance environmental stewardship.

Unreal Assistant

Assists with Unreal Engine 5 C++ coding, editor know-how, and blueprint visuals.

SandNet-AI VoX

Create voxel art references: assets, scenes, weapons, and general design. Type 'Create + text'. Supports English, Portuguese, Filipino, ..., +60 others.