Best AI tools for Manage Server Deployments

20 - AI tool Sites

WebServerPro

WebServerPro is a web server hosting platform that helps users set up and manage their web servers. Users can deploy websites and applications to the server through a user-friendly interface, and the platform offers hosting solutions for a range of needs.

DeployMaster

DeployMaster is a platform for managing software deployments. It automates the delivery of software updates and changes to servers and applications, and it offers version control, rollback options, and monitoring so users can track and manage each deployment. The platform aims to reduce deployment errors and downtime.

MOM AI Restaurant Assistant

MOM AI Restaurant Assistant is an innovative AI tool designed to transform restaurants by automating ordering processes, enhancing online presence, and providing cost-effective solutions. It offers features such as simple registration, in-store and online deployment, real-time order notifications, and flexible pricing. The application helps restaurants improve efficiency, reduce manual work, and increase customer engagement through AI technology.

EZClaws

EZClaws is an AI tool designed for one-click OpenClaw hosting, allowing users to deploy and manage OpenClaw instances with ease. It offers a fully managed platform for hosting AI agents, powered by world-class AI models like GPT-4 and Claude. EZClaws simplifies the deployment process by handling server setup, SSH keys, Docker configs, and more, all through a user-friendly interface. With features such as automated provisioning, isolated and encrypted environments, built-in usage tracking, and quick deployment times, EZClaws streamlines the hosting experience for AI enthusiasts and developers.

HostAI

HostAI is a platform that allows users to host their artificial intelligence models and applications with ease. It provides a user-friendly interface for managing and deploying AI projects, eliminating the need for complex server setups. With HostAI, users can seamlessly run their AI algorithms and applications in a secure and efficient environment. The platform supports various AI frameworks and libraries, making it versatile for different AI projects. HostAI simplifies the process of AI deployment, enabling users to focus on developing and improving their AI models.

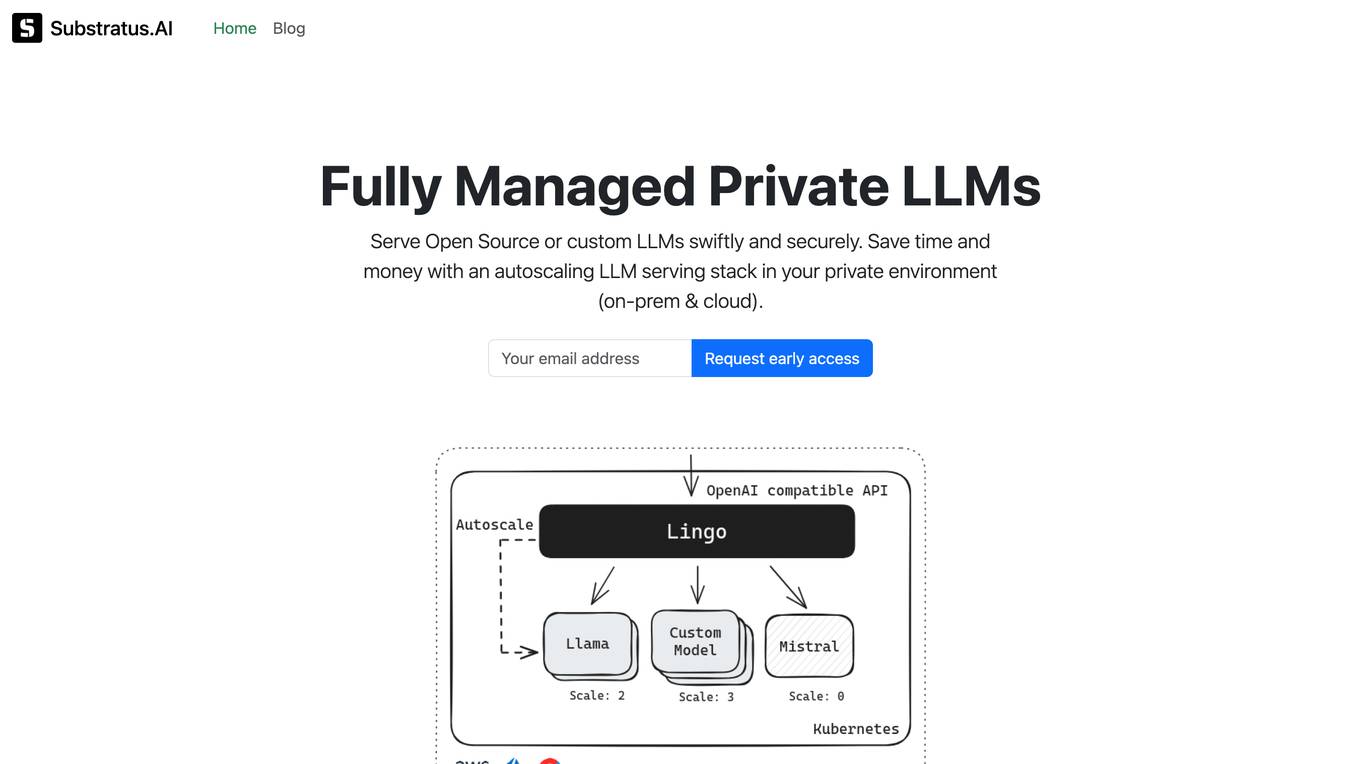

Substratus.AI

Substratus.AI is a fully managed private LLM platform that allows users to serve LLMs (Llama and Mistral) in their own cloud account. It lets users keep control of their data while reducing OpenAI costs by up to 10x. With Substratus.AI, users can run LLMs in production in hours instead of weeks.

Seldon

Seldon is an MLOps platform that helps enterprises deploy, monitor, and manage machine learning models at scale. It provides a range of features to help organizations accelerate model deployment, optimize infrastructure resource allocation, and manage models and risk. Seldon is trusted by the world's leading MLOps teams and has been used to install and manage over 10 million ML models. With Seldon, organizations can reduce deployment time from months to minutes, increase efficiency, and reduce infrastructure and cloud costs.

Baseten

Baseten is a machine learning infrastructure platform that lets data scientists and engineers build, train, and deploy machine learning models in one place. It offers features that simplify the ML lifecycle, including data preparation, model training, and deployment. Baseten also provides a marketplace of pre-built models and components that can be used to accelerate the development of ML applications.

Nory

Nory is an AI-powered operating system designed for successful restaurants, offering business intelligence, inventory management, workforce optimization, payroll services, and growth funding. It helps restaurant businesses achieve consistency in operational standards and profitability across multiple venues by leveraging AI to forecast sales, plan labor deployment, and manage inventory effectively. Nory streamlines supply chain management, workforce operations, and payroll processing, ultimately leading to reduced costs, increased efficiency, and improved employee satisfaction. The platform serves as a comprehensive solution for restaurant groups looking to grow their brand and margins.

Team9

Team9 is an AI workspace application that allows users to hire AI Staff and collaborate with them as real teammates. It is built on OpenClaw and Moltbook, offering a zero-setup, managed OpenClaw experience. Users can assign tasks, share context, and coordinate work within the platform. Team9 aims to help users build an AI team, run AI-powered collaboration, and increase work efficiency with minimal overhead. The application connects users to the revolutionary AI agent ecosystem, providing instant deployment of OpenClaw without the need for complex setup or manual configuration.

Tecton

Tecton is an AI data platform that helps build smarter AI applications by simplifying feature engineering, generating training data, serving real-time data, and enhancing AI models with context-rich prompts. It automates data pipelines, improves model accuracy, and lowers production costs, enabling faster deployment of AI models. Tecton abstracts away data complexity, provides a developer-friendly experience, and allows users to create features from any source. Trusted by top engineering teams, Tecton streamlines ML delivery processes, improves customer interactions, and automates release processes through CI/CD pipelines.

Singlebase

Singlebase.cloud is an AI-powered platform that serves as an alternative to Firebase and Supabase. It offers a comprehensive suite of tools and services to facilitate faster development and deployment through a unified API. The platform includes features such as Vector Database, NoSQL Database, Vector Embeddings, Generative AI, RAG, Knowledge Base, File storage, and Authentication, catering to a wide range of development needs.

BentoML

BentoML is a platform for software engineers to build, ship, and scale AI products. It provides a unified AI application framework that makes it easy to manage and version models, create service APIs, and build and run AI applications anywhere. BentoML is used by over 1000 organizations and has a global community of over 3000 members.

Sturdy

Sturdy is an AI application designed for B2B businesses to drive insights and actions that improve business performance and revenue retention. It provides product insights, sentiment trends, account-based summaries, and custom signals. Sturdy offers automations, an AI readiness assessment, and turnkey deployment for customer intelligence. The application takes a privacy-, security-, and compliance-first approach to data integration and collection. Sturdy converts unstructured customer interactions into actionable data for decision-making and forecasting.

Kie.ai

Kie.ai is an AI platform that offers access to DeepSeek R1 & V3 APIs for secure and scalable AI solutions. It provides advanced reasoning models for tasks in math, coding, and language, along with versatile natural language processing capabilities. With no local deployment required, developers can easily integrate the APIs into their projects for fast and efficient AI solutions. Kie.ai ensures data security by hosting the APIs on U.S.-based servers, offering affordable pricing plans and comprehensive documentation for seamless integration.

OpenResty Server Manager

The website seems to be experiencing a 403 Forbidden error, which typically indicates that the server is denying access to the requested resource. This error is often caused by incorrect permissions or misconfigurations on the server side. The message 'openresty' suggests that the server may be using the OpenResty web platform. Users encountering this error may need to contact the website administrator for assistance in resolving the issue.

OpenResty Server

The website is currently displaying a '403 Forbidden' error, which indicates that the server understood the request but refuses to authorize it. This error is typically caused by insufficient permissions or misconfiguration on the server side. The 'openresty' message suggests that the server is using the OpenResty web platform, which is built on NGINX and the Lua programming language. Users encountering this error may need to contact the website administrator for assistance in resolving the issue.

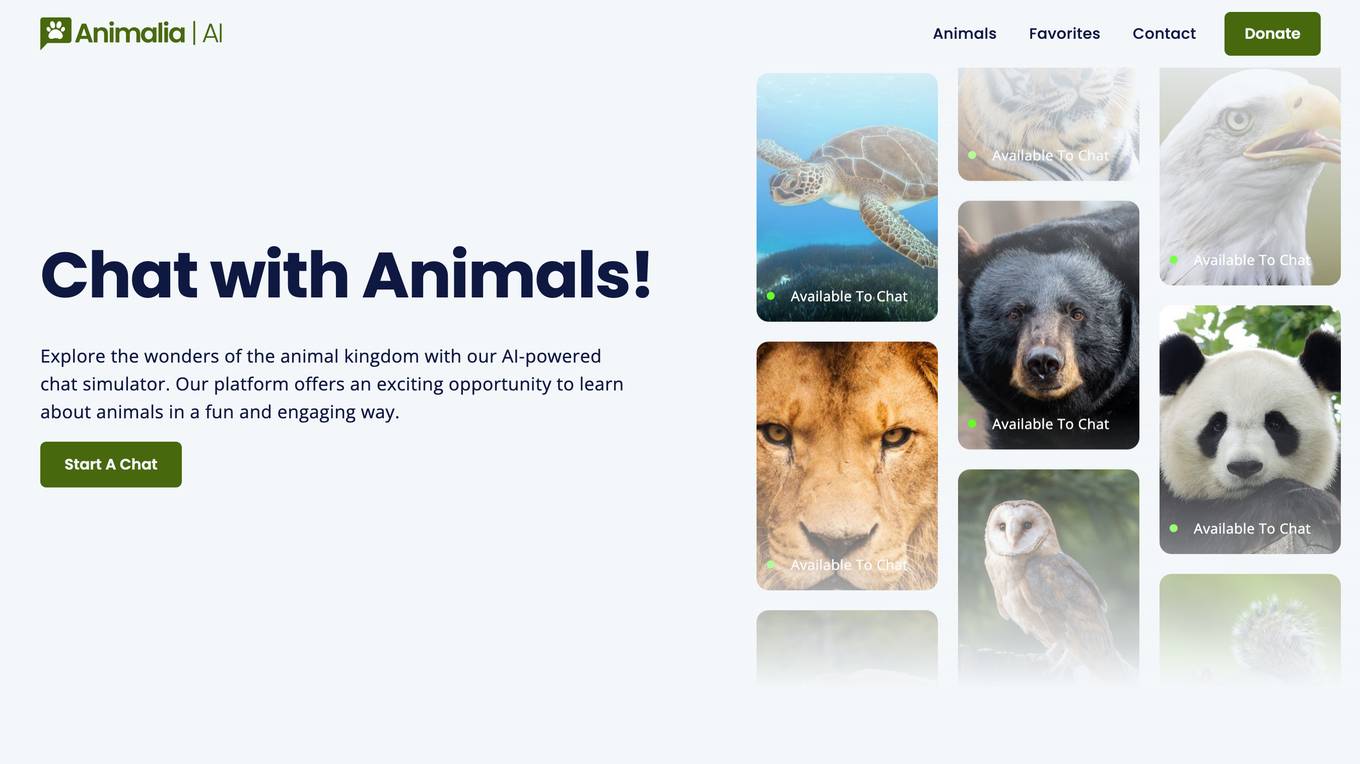

VoteMinecraftServers

VoteMinecraftServers is a modern Minecraft voting website that provides real-time live analytics to help users stay ahead in the game. It utilizes advanced AI and ML technologies to deliver accurate and up-to-date information. The website is free to use and offers a range of features, including premium commands for enhanced functionality. VoteMinecraftServers is committed to data security and user privacy, ensuring a safe and reliable experience.

502 Bad Gateway

The website seems to be experiencing technical difficulties at the moment, showing a '502 Bad Gateway' error message. This error typically occurs when a server acting as a gateway or proxy receives an invalid response from an upstream server. The 'nginx' reference in the error message indicates that the server is using the Nginx web server software. Users encountering this error may need to wait for the issue to be resolved by the website's administrators or try accessing the site at a later time.

403 Forbidden Resolver

The website is currently displaying a '403 Forbidden' error, which means that the server understood the request but refuses to authorize it. This could be due to insufficient permissions, server misconfiguration, or a problem with the client's request. The 'openresty' message indicates that the server is using the OpenResty web platform. The website administrator would need to troubleshoot and resolve the issue to restore access.
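
Several entries in this list resolve to HTTP error pages rather than working tools. As a rough illustration of the difference between the two codes mentioned (403 and 502), here is a minimal Python sketch using only the standard library. The function name `describe_status` and the hint wording are assumptions made for this example and do not come from any of the listed sites.

```python
from http import HTTPStatus


def describe_status(code: int) -> str:
    """Return a short, human-readable hint for an HTTP status code.

    Hypothetical helper for illustration only; the hint texts are our own.
    """
    status = HTTPStatus(code)  # raises ValueError for codes the stdlib does not know
    if status is HTTPStatus.FORBIDDEN:
        hint = "server understood the request but refuses to authorize it"
    elif status is HTTPStatus.BAD_GATEWAY:
        hint = "gateway or proxy got an invalid response from the upstream server"
    elif 200 <= code < 300:
        hint = "site responded normally"
    else:
        hint = "see the status phrase for details"
    return f"{code} {status.phrase}: {hint}"


if __name__ == "__main__":
    for code in (200, 403, 502):
        print(describe_status(code))
```

A 403 usually needs a fix from the site's administrator, while a 502 is often transient and may clear up on a later retry.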

1 - Open Source AI Tools

airo

Airo is a tool designed to simplify the process of deploying containers to self-hosted servers. It allows users to focus on building their products without the complexity of Kubernetes or CI/CD pipelines. With Airo, users can easily build and push Docker images, deploy instantly with a single command, update configurations securely using SSH, and set up HTTPS and reverse proxy automatically using Caddy.

20 - OpenAI GPTs

SQL Server assistant

Expert in SQL Server for database management, optimization, and troubleshooting.

Baci's AI Server

An AI waiter for Baci Bistro & Bar, knowledgeable about the menu and ready to assist.

Software expert

Server admin expert in cPanel, Softaculous, WHM, WordPress, and Elementor Pro.

アダチさん13号(SQLServer篇)

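Ask questions about the SQL Server knowledge and know-how (SQL Server 2000/2005/2008/2012) that Koichi Adachi accumulated during his time as a systems engineer. Based on the conversation, it can also generate general-purpose example questions for ChatGPT (GPT-4).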

BashEmulator GPT

BashEmulator GPT: A Virtualized Bash Environment for Linux Command Line Interaction. It virtualized all network interfaces and local network