Best AI tools for Manage Containers

20 - AI tool Sites

Microsoft Azure

Microsoft Azure is a cloud computing service that offers a wide range of products and solutions for businesses and developers. It provides tools for AI, machine learning, databases, analytics, compute, containers, hybrid cloud, and more. Azure enables users to build, deploy, and scale AI-powered applications and agents faster, with a focus on data security and flexibility. The platform offers a pay-as-you-go model and a free trial period of up to 30 days, with no upfront commitment required. Azure aims to empower businesses to innovate and modernize their applications and infrastructure in a secure and scalable environment.

Lacework

Lacework is a cloud security platform that provides comprehensive security solutions for DevOps, containers, and cloud environments. It offers features such as Code Security, Workload Protection, Identities and Entitlements management, Posture Management, Kubernetes Security, Data Posture Management, Infrastructure as Code security, Software Composition Analysis, Application Security Testing, and Edge Security. Lacework empowers users to secure their entire cloud infrastructure, prioritize risks, protect workloads, and stay compliant by leveraging AI-driven technologies and behavior-based threat detection. The platform helps automate compliance reporting, fix vulnerabilities, and reduce alerts, ultimately enhancing cloud security and operational efficiency.

EdrawMax

EdrawMax is diagramming software that uses AI to help users create diagrams. It has a wide range of features, including smart containers, Boolean operations, a customizable symbol library, data import and export, and presentation mode. EdrawMax is available for Windows, Mac, Linux, iOS, and Android, and it offers a variety of templates to help users get started. With its powerful features and ease of use, EdrawMax is a good choice for anyone who needs to create diagrams.

Kubeflow

Kubeflow is an open-source machine learning (ML) toolkit that makes deploying ML workflows on Kubernetes simple, portable, and scalable. It provides a unified interface for model training, serving, and hyperparameter tuning, and supports a variety of popular ML frameworks including PyTorch, TensorFlow, and XGBoost. Kubeflow is designed to be used with Kubernetes, a container orchestration system that automates the deployment, management, and scaling of containerized applications.

GrapixAI

GrapixAI is a leading provider of low-cost cloud GPU rental services and AI server solutions. The company's focus on flexibility, scalability, and cutting-edge technology enables a variety of AI applications in both local and cloud environments. GrapixAI offers the lowest prices for on-demand GPUs such as the RTX 4090, RTX 3090, RTX A6000, RTX A5000, and A40. The platform provides a Docker-based container ecosystem for quick software setup, a powerful GPU search console, customizable pricing options, various security levels, GUI and CLI interfaces, a real-time bidding system, and personalized customer support.

Start Left® Security

Start Left® Security is an AI-driven application security posture management platform that empowers product teams to automate secure-by-design software from people to cloud. The platform integrates security into every facet of the organization, offering a unified solution that aligns with business goals, fosters continuous improvement, and drives innovation. Start Left® Security provides a gamified DevSecOps experience with comprehensive security capabilities like SCA, SBOM, SAST, DAST, Container Security, IaC security, ASPM, and more.

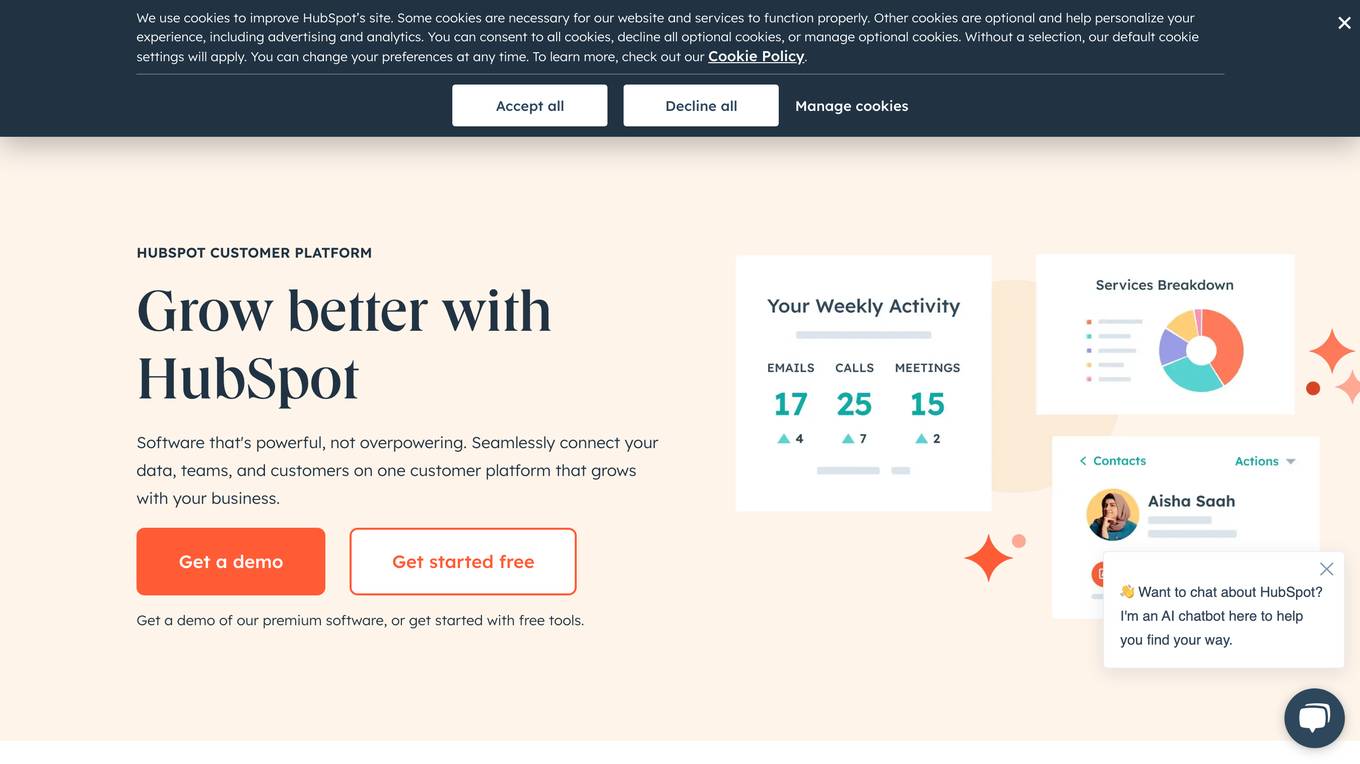

HubSpot

HubSpot is a customer relationship management (CRM) platform that provides software and tools for marketing, sales, customer service, content management, and operations. It is designed to help businesses grow by connecting their data, teams, and customers on one platform. HubSpot's AI tools are used to automate tasks, personalize marketing campaigns, and provide insights into customer behavior.

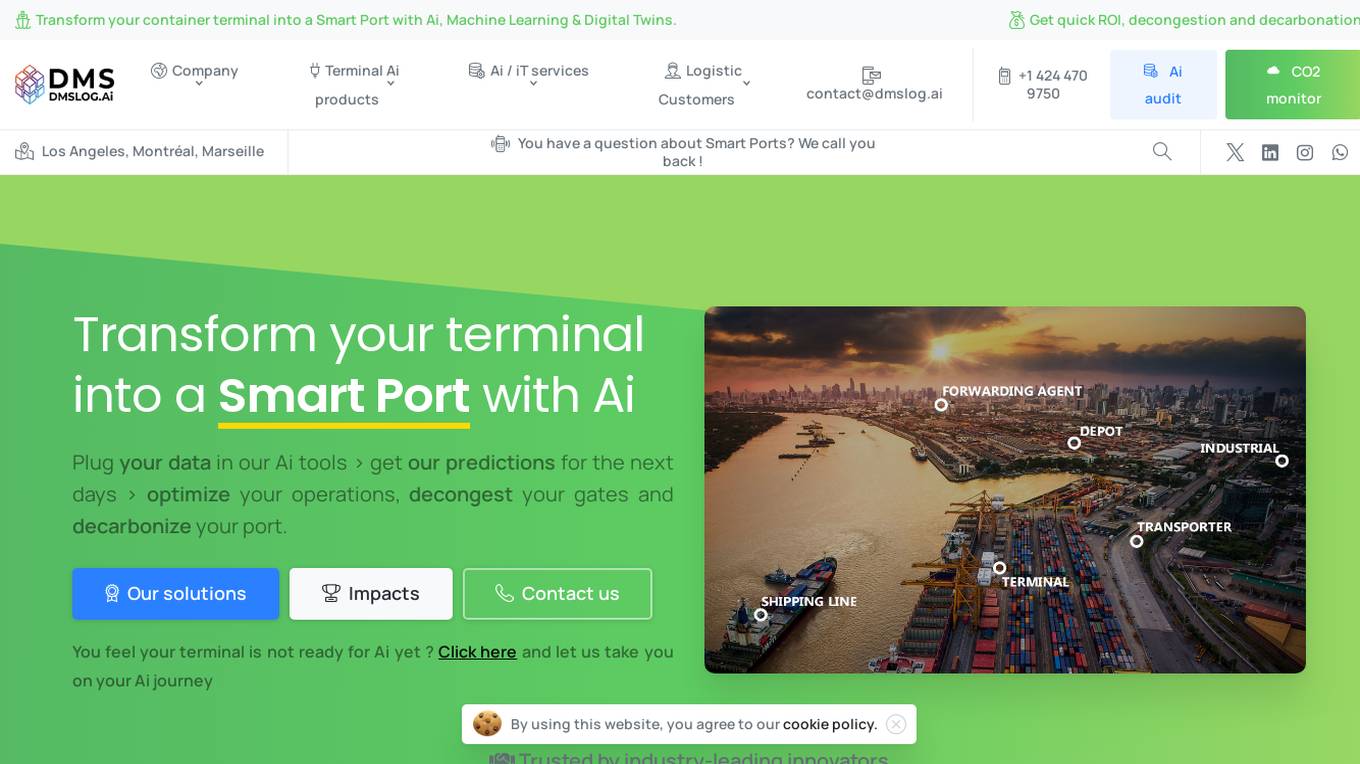

DMSLOG.Ai

DMSLOG.Ai is an AI tool designed for Smart Port terminal optimization, decongestion, and decarbonation. It offers solutions powered by AI, machine learning, and digital twins to transform container terminals into Smart Ports, providing quick ROI, decongestion, and decarbonation. The tool is used globally on a daily basis, offering plug-and-play AI solutions for various terminal operations and carbon footprint monitoring.

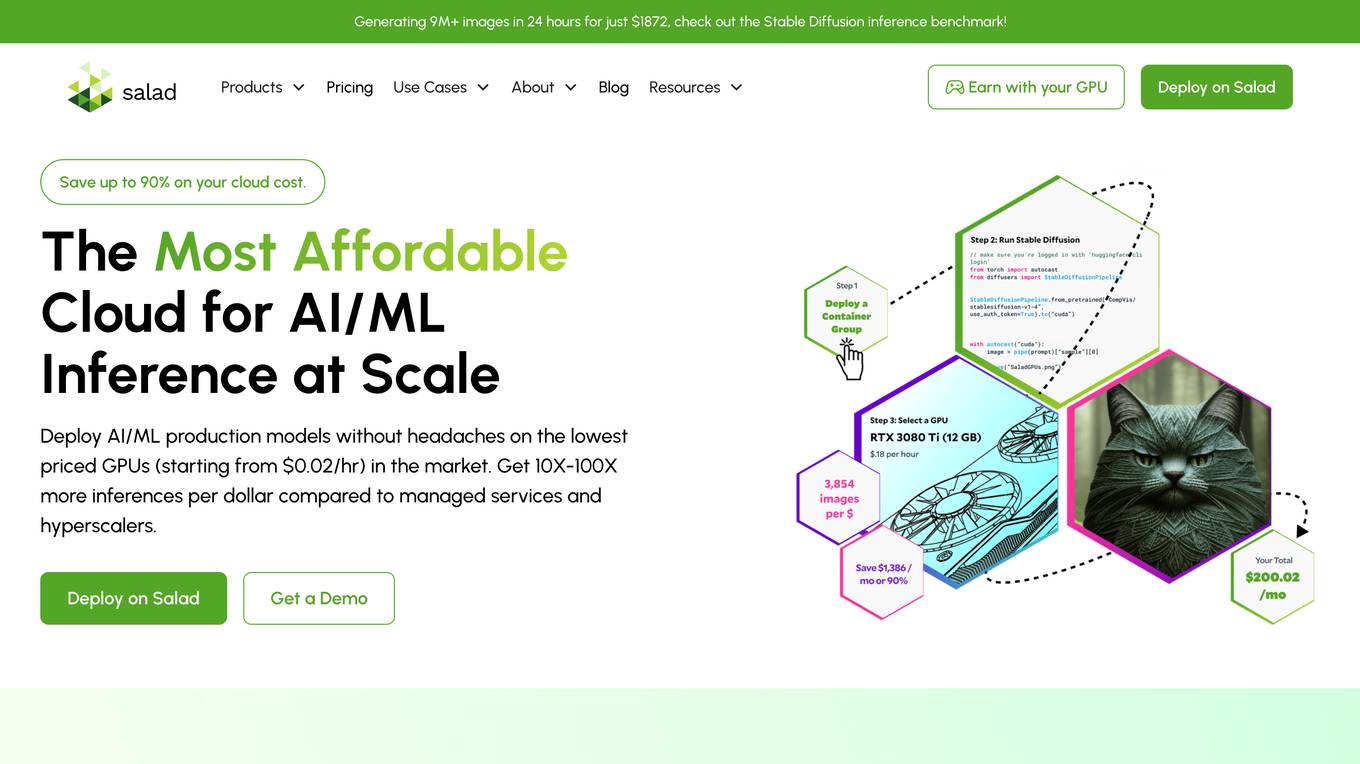

Salad

Salad is a distributed GPU cloud platform that offers fully managed and massively scalable services for AI applications. It provides the lowest-priced AI transcription on the market and supports workloads such as image generation, voice AI, computer vision, data collection, and batch processing. Salad democratizes cloud computing by leveraging consumer GPUs to deliver cost-effective AI/ML inference at scale. The platform is trusted by hundreds of machine learning and data science teams for its affordability, scalability, and ease of deployment.

SoundHound AI

SoundHound AI is a global leader in conversational intelligence, providing voice AI solutions for businesses to offer exceptional conversational experiences to their customers. Their proprietary technology enables best-in-class speed and accuracy in multiple languages across automotive, TV, IoT, and customer service industries. SoundHound offers innovative AI-driven products like Smart Answering, Smart Ordering, and Dynamic Interaction™, a real-time customer service interface. With SoundHound Chat AI, a powerful voice assistant integrated with Generative AI, the company powers millions of products and services, handling billions of interactions annually for top-tier businesses.

Auto Gmail - ChatGPT AI for email inbox

Auto Gmail is a Chrome extension that connects to your Gmail inbox and uses your data and ChatGPT to draft email responses to every inbound message, saving hours every day. Instead of improving your productivity with shortcuts or apps like Superhuman, you let the AI learn about you and answer your emails for you. You don't need to click or instruct the AI: it works on mobile and desktop and writes emails even when you're not in Gmail. Upon opening your inbox, you'll see the new drafts, ready to be sent. Your inbox contains everything you ever sent and therefore a big part of your knowledge, so you can stop repeating the same things and let Auto Gmail do the work. You stay in the driver's seat, and no email is ever sent without you actually hitting the "Send" button. Upon installation, drafts will appear even on the mobile app, allowing you to answer faster while on the go. You can instruct the AI and give it additional context such as links you'd like it to use (calendar links, tutorials, product pages, and so on). Under the hood, Auto Gmail connects to the Gmail API and uses ChatGPT (GPT-4) to draft email answers. For context, the most similar emails are passed along to help ChatGPT draft the most relevant answers. Auto Gmail retrains every week on your latest messages to stay up to date on new knowledge you have sent by email.

SiteRetriever

SiteRetriever is a self-hosted AI chatbot platform that allows users to build powerful chatbots without monthly fees. It offers a completely self-contained and self-hosted solution, providing full control over data and costs. With optimized speed and advanced language models, SiteRetriever enables users to create smart AI chatbots that understand context and provide accurate responses. The platform ensures full privacy and security by keeping data on users' servers, with no external API calls or data sharing. Users can easily embed the chatbot on any website using a simple JavaScript snippet, and customize its appearance, behavior, and responses. SiteRetriever simplifies the setup process, allowing users to upload content, configure the bot, and embed it on their site within minutes.

Cleochat

Cleochat.com is a website that serves as a domain parking page created by Sedo. It provides resources and information related to the domain. The page contains a disclaimer stating that Sedo, the domain parking service, does not have any relationship with third-party advertisers and does not control or endorse any specific service or trademark mentioned on the page. The website also includes a privacy policy.

Jaydeeai

Jaydeeai.com is a website that serves as a domain parking page created by the domain owner using Sedo Domain Parking. It does not provide any AI tool or application but rather displays information and resources related to the domain. The webpage contains a disclaimer stating that Sedo, the domain parking service, does not have any relationship with third-party advertisers and does not endorse or recommend any specific service or trademark. Additionally, the website mentions its privacy policy.

SocialBee

SocialBee is an AI-powered social media management tool that helps businesses and individuals manage their social media accounts efficiently. It offers a range of features, including content creation, scheduling, analytics, and collaboration, to help users plan, create, and publish engaging social media content. SocialBee also provides insights into social media performance, allowing users to track their progress and make data-driven decisions.

Height

Height is an autonomous project management tool designed for teams involved in designing and building projects. It automates manual tasks to provide space for collaborative work, focusing on backlog upkeep, spec updates, and bug triage. With project intelligence and collaboration features, Height offers a customizable workspace with autonomous capabilities to streamline project management. Users can discuss projects in context and benefit from an AI assistant for creating better stories. The tool aims to revolutionize project management by offloading routine tasks to an intelligent system.

Moning

Moning is a platform designed to help users manage and boost their wealth easily. It provides tools for a global view of wealth, making better investment decisions, avoiding costly mistakes, and increasing performance. With features like AI Analysis, Dividends calendar, and Dividend and Growth Safety Scores, Moning offers a mix of Human & Artificial Intelligence to enhance investment knowledge and decision-making. Users can track and manage their wealth through a comprehensive dashboard, access detailed information on stocks, ETFs, and cryptos, and benefit from quick screeners to find the best investment opportunities.

Legitt AI

Legitt AI is an AI-powered Contract Lifecycle Management platform that offers a comprehensive solution for managing contracts at scale. It combines automation and intelligence to revolutionize contract management, ensuring efficiency, accuracy, and compliance with legal standards. The platform streamlines contract creation, signing, tracking, and management processes by embedding intelligence in every step. Legitt AI enhances contract review processes, contract tracking, and contract intelligence at scale, providing users with insights, recommendations, and automated workflows. With robust security measures, scalable infrastructure, and integrations with popular business tools, Legitt AI empowers businesses to manage contracts with precision and efficiency.

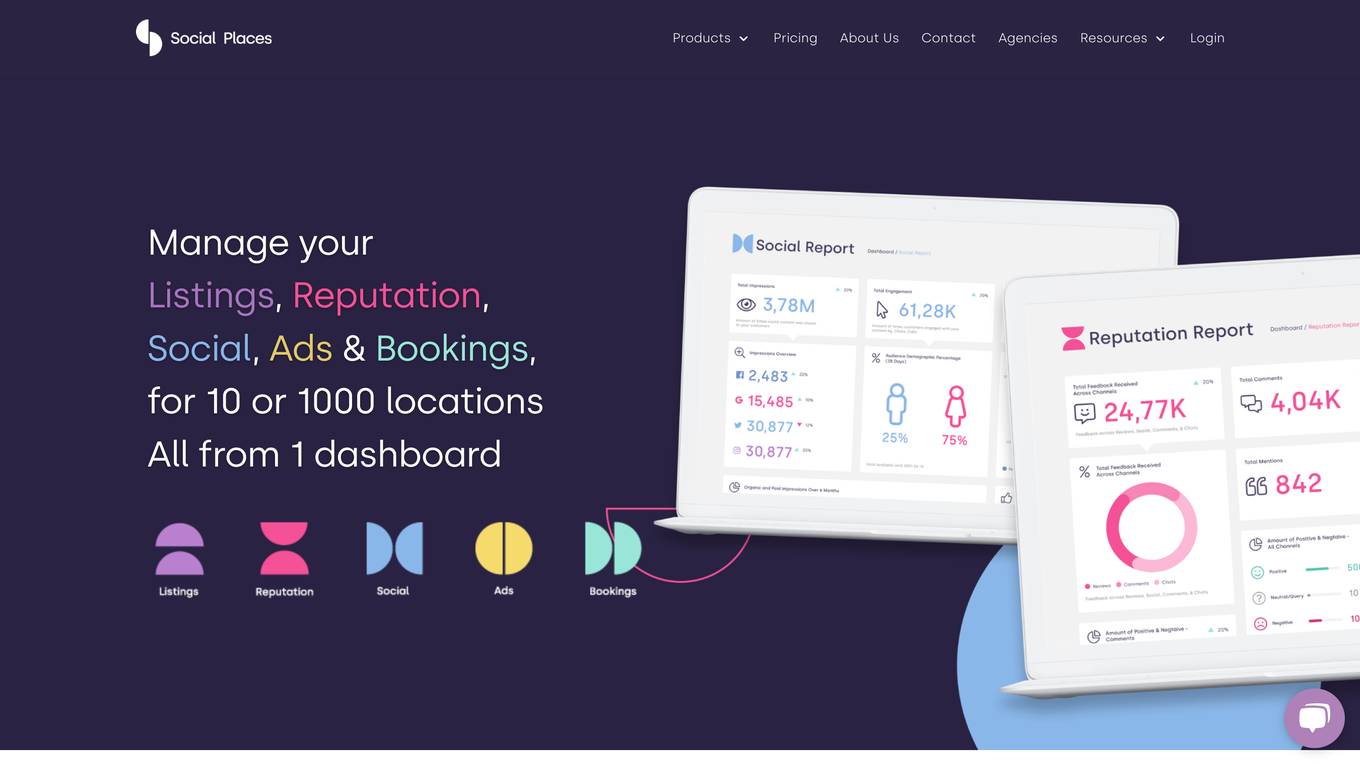

Social Places

Social Places is a leading franchise marketing agency that provides a suite of tools to help businesses with multiple locations manage their online presence. The platform includes tools for managing listings, reputation, social media, ads, and bookings. Social Places also offers a conversational AI chatbot and a custom feedback form builder.

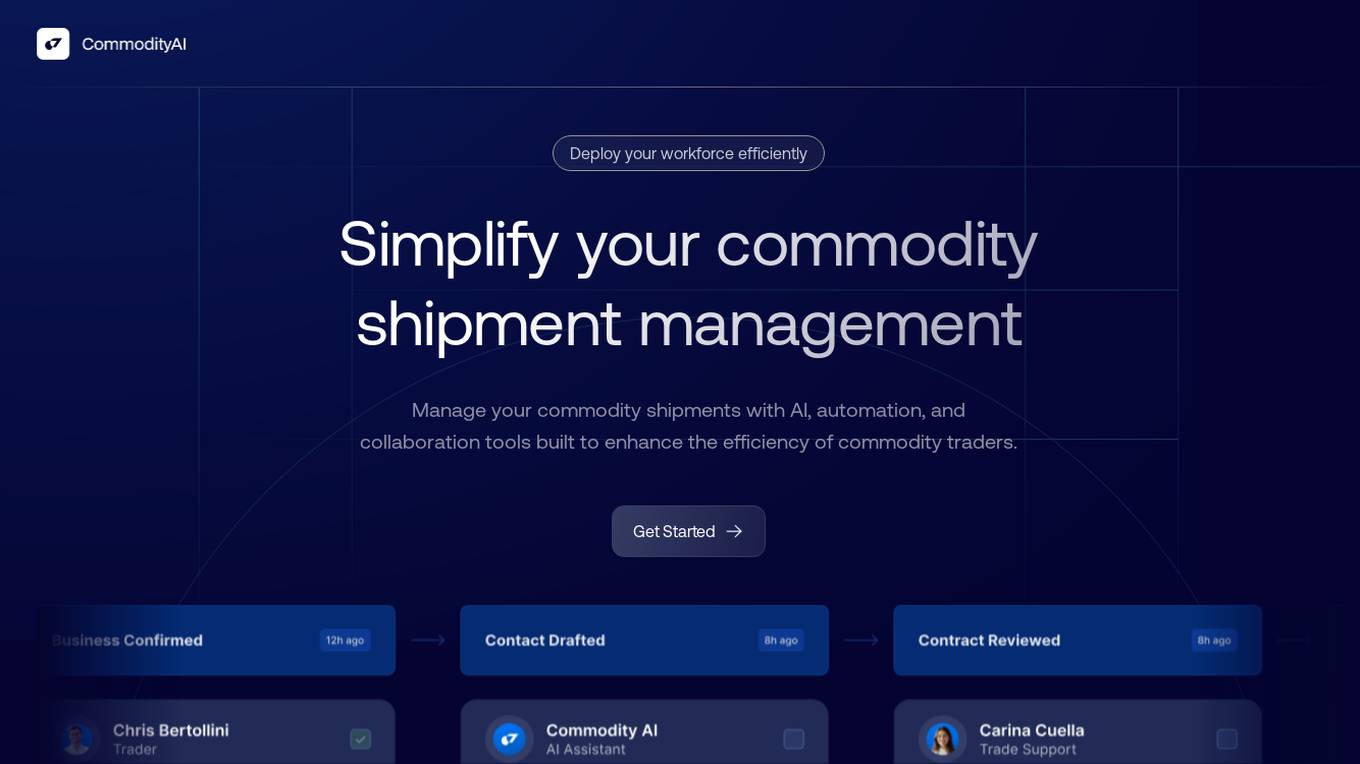

CommodityAI

CommodityAI is a web-based platform that uses AI, automation, and collaboration tools to help businesses manage their commodity shipments and supply chains more efficiently. The platform offers a range of features, including shipment management automation, intelligent document processing, stakeholder collaboration, and supply-chain automation. CommodityAI can help businesses improve data accuracy, eliminate manual processes, and streamline communication and collaboration. The platform is designed for the commodities industry and offers commodity-specific automations, ERP integration, and AI-powered insights.

4 - Open Source AI Tools

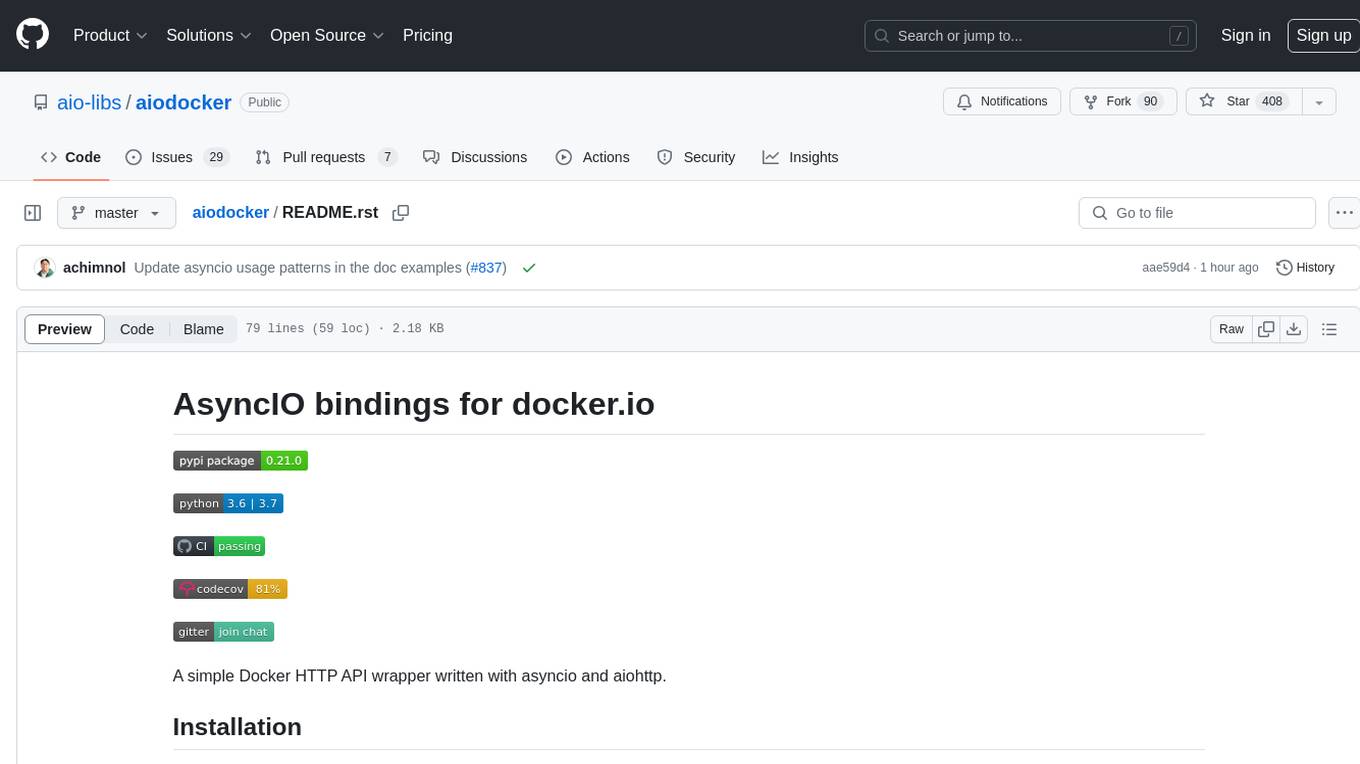

aiodocker

Aiodocker is a simple Docker HTTP API wrapper written with asyncio and aiohttp. It provides asynchronous bindings for interacting with Docker containers and images. Users can easily manage Docker resources using async functions and methods. The library offers features such as listing images and containers, creating and running containers, and accessing container logs. Aiodocker is designed to work seamlessly with Python's asyncio framework, making it suitable for building asynchronous Docker management applications.
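The listing and run-a-container features mentioned above can be sketched roughly as follows. This is a minimal sketch modeled on the usage shown in the aiodocker README, not a definitive implementation: it assumes `aiodocker` is installed and a local Docker daemon is reachable, and the helper `first_tag`, the container name `aiodocker-demo`, and the `alpine:latest` image are illustrative choices rather than anything taken from the entry above.

```python
def first_tag(image: dict) -> str:
    """Return the first repo tag of an image record, or '' if it has none."""
    tags = image.get("RepoTags") or []
    return tags[0] if tags else ""


async def list_images_and_containers() -> None:
    # Imported lazily: needs `pip install aiodocker` and a running Docker daemon.
    import aiodocker

    docker = aiodocker.Docker()
    try:
        for image in await docker.images.list():
            print(image["Id"], first_tag(image))
        for container in await docker.containers.list():
            print(container.id)
    finally:
        # Always release the underlying aiohttp session.
        await docker.close()


async def run_hello_world() -> str:
    import aiodocker

    docker = aiodocker.Docker()
    try:
        # Hypothetical container name and image, chosen for illustration only.
        container = await docker.containers.create_or_replace(
            config={
                "Cmd": ["/bin/ash", "-c", 'echo "hello world"'],
                "Image": "alpine:latest",
            },
            name="aiodocker-demo",
        )
        await container.start()
        await container.wait()  # let the command finish before reading logs
        logs = await container.log(stdout=True)
        await container.delete(force=True)
        return "".join(logs)
    finally:
        await docker.close()
```

With Docker running and the image already pulled, either coroutine can be driven from synchronous code with `asyncio.run(list_images_and_containers())` or `asyncio.run(run_hello_world())`.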

cheat-sheet-pdf

The Cheat-Sheet Collection for DevOps, Engineers, IT professionals, and more is a curated list of cheat sheets for various tools and technologies commonly used in the software development and IT industry. It includes cheat sheets for Nginx, Docker, Ansible, Python, Go (Golang), Git, Regular Expressions (Regex), PowerShell, VIM, Jenkins, CI/CD, Kubernetes, Linux, Redis, Slack, Puppet, Google Cloud Developer, AI, Neural Networks, Machine Learning, Deep Learning & Data Science, PostgreSQL, Ajax, AWS, Infrastructure as Code (IaC), System Design, and Cyber Security.

libmodal

libmodal is a cross-language client SDK for Modal, providing lightweight alternatives to the Modal Python Library. It allows users to start Sandboxes, call Modal Functions, and manage containers. The SDK supports JavaScript and Go languages, with similar features and APIs for each. Users can interact with Modal from any project by authenticating with Modal and adding the SDK to their application. The repository aims to add more features over time while keeping behavior consistent across languages.

toolhive-studio

ToolHive Studio is an experimental project under active development and testing, providing an easy way to discover, deploy, and manage Model Context Protocol (MCP) servers securely. Users can launch any MCP server in a locked-down container with just a few clicks, eliminating manual setup, security concerns, and runtime issues. The tool ensures instant deployment, default security measures, cross-platform compatibility, and seamless integration with popular clients like GitHub Copilot, Cursor, and Claude Code.

20 - OpenAI Gpts

The Dock - Your Docker Assistant

Technical assistant specializing in Docker and Docker Compose. Let's debug!

Docker and Docker Swarm Assistant

Expert in Docker and Docker Swarm solutions and troubleshooting.

BASHer GPT || Your Bash & Linux Shell Tutor!

Adaptive and clear Bash guide with command execution. Learn by poking around in the code interpreter's isolated Kubernetes container!

GptInfinite - PAI (Paid Access Integrator)

💲Monetize your new or existing GPTs! 💳Choose from free trial, freemium or premium pricing models. 🔐Generate and verify keys. 📦Self-contained w/ no need for APIs or actions. ✨Instant access to updates. 💾Worry-free backups. ⏱Save time and effort. 💰Monetize today! -v0.60

FODMAPs Dietician

Dietician that helps those with IBS manage their symptoms via FODMAPs. FODMAP stands for fermentable oligosaccharides, disaccharides, monosaccharides and polyols. These are the chemical names of 5 naturally occurring sugars that are not well absorbed by your small intestine.

Cognitive Behavioral Coach

Provides cognitive-behavioral and emotional therapy guidance, helping users understand and manage their thoughts, behaviors, and emotions.

1ACulma - Management Coach

Cross-cultural management. Useful for those who relocate to another country or manage cross-cultural teams.

Finance Butler(ファイナンス・バトラー)

I manage finances securely with encryption and user authentication.

GroceriesGPT

I manage your grocery lists to help you stay organized. *1/ Tell me what to add to a list. 2/ Ask me to add all ingredients for a recipe. 3/ Upload a receipt to remove items from your lists. 4/ Add an item by simply uploading a picture. 5/ Ask me what items I would recommend you add to your lists.*

Family Legacy Assistant

Helps users manage and preserve family heirlooms with empathy and practical advice.