Best AI tools to improve model speed

20 AI tool sites

VoiceCanvas

VoiceCanvas is an AI-powered multilingual voice synthesis and voice cloning platform that offers instant text-to-speech in more than 40 languages. It produces speech with natural intonation and rhythm and supports personalized voice cloning for more human-like AI speech. The cloning workflow is: upload a voice sample, let the AI analyze its features and build a personalized voice model, enter the text to convert, and generate natural speech in the cloned voice. According to the site, language learners, content creators, educators, business owners, and voice actors praise VoiceCanvas for its voice quality, broad language support, and ease of use when creating voiceovers, learning materials, and podcast content.

V7

V7 is an AI data engine for computer vision and generative AI. Its multimodal automation tools let users label data 10x faster, power AI products via an API, build combined AI and human workflows, and reach 99% AI accuracy, according to the company. The platform includes automated annotation, DICOM annotation, dataset management, model management, image and video annotation, document processing, and labeling services.

Eigen Technologies

Eigen Technologies is an AI-powered data extraction platform that lets business users automate the extraction of data from documents. It provides intelligent document processing and automation so teams can streamline business processes, make better-informed decisions, and achieve significant efficiency gains. Eigen positions the platform as delivering real ROI by reducing manual processing, improving data accuracy, and speeding up decisions for corporates and for organizations in banking, financial services, insurance, law, and manufacturing. Features include generative insights, table extraction, a pre-processing hub, and model governance. The company describes the platform as accurate, fast, flexible, and scalable, and says it integrates with existing systems.

Pongo

Pongo is an AI-powered tool that, according to its developers, reduces hallucinations in large language models (LLMs) by up to 80%. It combines several state-of-the-art semantic similarity models with a proprietary ranking algorithm to improve the accuracy and relevance of search results. Pongo plugs into existing pipelines, whether they use a vector database or Elasticsearch, and processes the top search results to return a refined, more reliable set. Its distributed architecture is designed to keep latency consistent across a wide range of request loads. For data security, Pongo operates at runtime with zero data retention, and no data leaves its secure AWS VPC.
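Pongo's ranking algorithm is proprietary and is not reproduced here. As a rough sketch of the general idea of combining several similarity models to rerank retrieved results, the code below uses reciprocal rank fusion (RRF), a standard published fusion technique that Pongo may or may not use. The scorers `jaccard` and `trigram_overlap` are hypothetical lexical stand-ins for real semantic similarity models, and `rrf_rerank` is an illustrative name, not a Pongo API.

```python
from typing import Callable, Dict, List, Optional

# A scorer maps (query, document) to a similarity score; higher is better.
Scorer = Callable[[str, str], float]


def jaccard(query: str, doc: str) -> float:
    """Hypothetical stand-in scorer: word-level Jaccard similarity."""
    a, b = set(query.lower().split()), set(doc.lower().split())
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def trigram_overlap(query: str, doc: str) -> float:
    """Hypothetical stand-in scorer: character-trigram Jaccard similarity."""
    def grams(s: str) -> set:
        s = s.lower()
        return {s[i:i + 3] for i in range(len(s) - 2)}
    a, b = grams(query), grams(doc)
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def rrf_rerank(query: str, docs: List[str], scorers: List[Scorer],
               k: int = 60, top_n: Optional[int] = None) -> List[str]:
    """Fuse per-scorer rankings with reciprocal rank fusion: sum of 1 / (k + rank)."""
    fused: Dict[int, float] = {i: 0.0 for i in range(len(docs))}
    for scorer in scorers:
        # Rank candidate indices by this scorer, best first (rank 1).
        ranking = sorted(range(len(docs)),
                         key=lambda i: scorer(query, docs[i]), reverse=True)
        for rank, i in enumerate(ranking, start=1):
            fused[i] += 1.0 / (k + rank)
    # Highest fused score first; top_n=None keeps every candidate.
    order = sorted(fused, key=lambda i: fused[i], reverse=True)
    return [docs[i] for i in order[:top_n]]
```

For example, `rrf_rerank("cat sat on mat", docs, [jaccard, trigram_overlap], top_n=2)` would keep the two candidates that both scorers rank highly. RRF uses only rank positions, so scorers with very different score scales can be combined without normalizing them first.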

Moshi AI

Moshi AI, from Kyutai, is a native speech AI model for natural, expressive conversation. It can be installed locally and run offline, which makes it suitable for smart home appliances and other local applications. It is built on Helium, a 7-billion-parameter language model, and is trained on both text and audio-codec tokens. Moshi accepts speech input and produces speech output natively, allowing smooth spoken interaction with the AI. The application is community-supported, and its developers plan to keep improving and adapting it.

Bibit AI

Bibit AI is a real estate marketing AI designed to make real estate marketing and sales more efficient and effective. It helps create listings, property descriptions, and other property content, and offers a range of other features. Bibit AI bills itself as the world's first AI for real estate and aims to transform the industry by simplifying tasks such as listing creation and content generation.

Voam

Voam is an AI productivity platform that helps you automate tasks and work more efficiently. With Voam, you can create custom AI models to automate anything from simple data entry to complex decision-making. It requires no coding experience: you can create an AI model in minutes and start automating your tasks right away.

Kokoro TTS Online

Kokoro TTS Online is a professional cloud text-to-speech service powered by the open-source Kokoro-82M model. It converts text into natural-sounding speech in seconds, offers multiple voices, and delivers high audio quality. Kokoro TTS is easy to use, supports American and British English, and suits applications such as voiceovers, podcasts, and learning materials.

Lamini

Lamini is an enterprise LLM platform whose Memory Tuning feature provides precise recall, which the company says lets teams reach over 95% accuracy even on large amounts of specific data. A reengineered decoder guarantees JSON output with 100% schema accuracy, and the platform delivers high inference throughput. Lamini can be deployed anywhere, including air-gapped environments, and supports training and inference on NVIDIA or AMD GPUs. It is known for producing factual LLMs.

AIby.email

AIby.email is an AI-powered email assistant that helps you write better emails, faster. It uses natural language processing to understand your intent and generate personalized email responses. AIby.email also offers a variety of other features, such as email scheduling, tracking, and analytics.

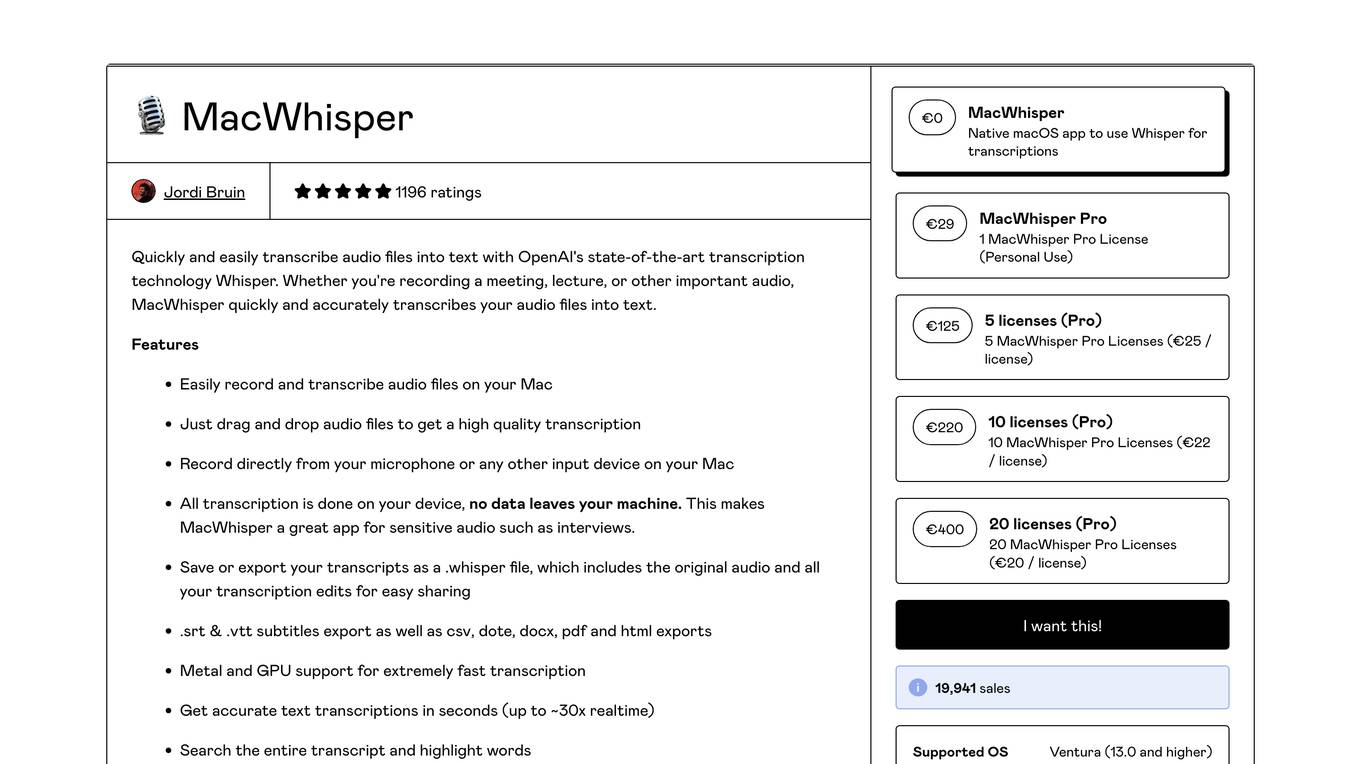

MacWhisper

MacWhisper is a native macOS application that utilizes OpenAI's Whisper technology for transcribing audio files into text. It offers a user-friendly interface for recording, transcribing, and editing audio, making it suitable for various use cases such as transcribing meetings, lectures, interviews, and podcasts. The application is designed to protect user privacy by performing all transcriptions locally on the device, ensuring that no data leaves the user's machine.

Aispect

Aispect is an AI tool that offers a new way to experience events by turning live speech into visuals in real time. It supports more than 30 languages and creates images from audio without storing the original recordings. Users can buy credits for image creation on a pay-as-you-go basis or choose a monthly subscription plan. Aispect is aimed at events, webinars, meetings, and news feeds, as a secure way to enhance audio-visual experiences.

CodeParrot

CodeParrot is an AI tool that speeds up frontend development by using large language models to generate production-ready frontend components from Figma design files. It aims to reduce UI development time, improve code quality, and free developers to focus on more creative work. CodeParrot offers customization options, supports frameworks such as React, Vue, and Angular, and integrates into a range of development workflows.

Frugal

Frugal is an application cost engineering platform that automatically optimizes code to reduce cloud costs. It describes itself as the first AI-powered cost optimization platform built for engineers, helping them find and fix code inefficiencies that drain cloud budgets. Its goal is to reinvent cost engineering by automatically identifying and resolving wasteful practices, so developers can lower application costs and improve cloud efficiency.

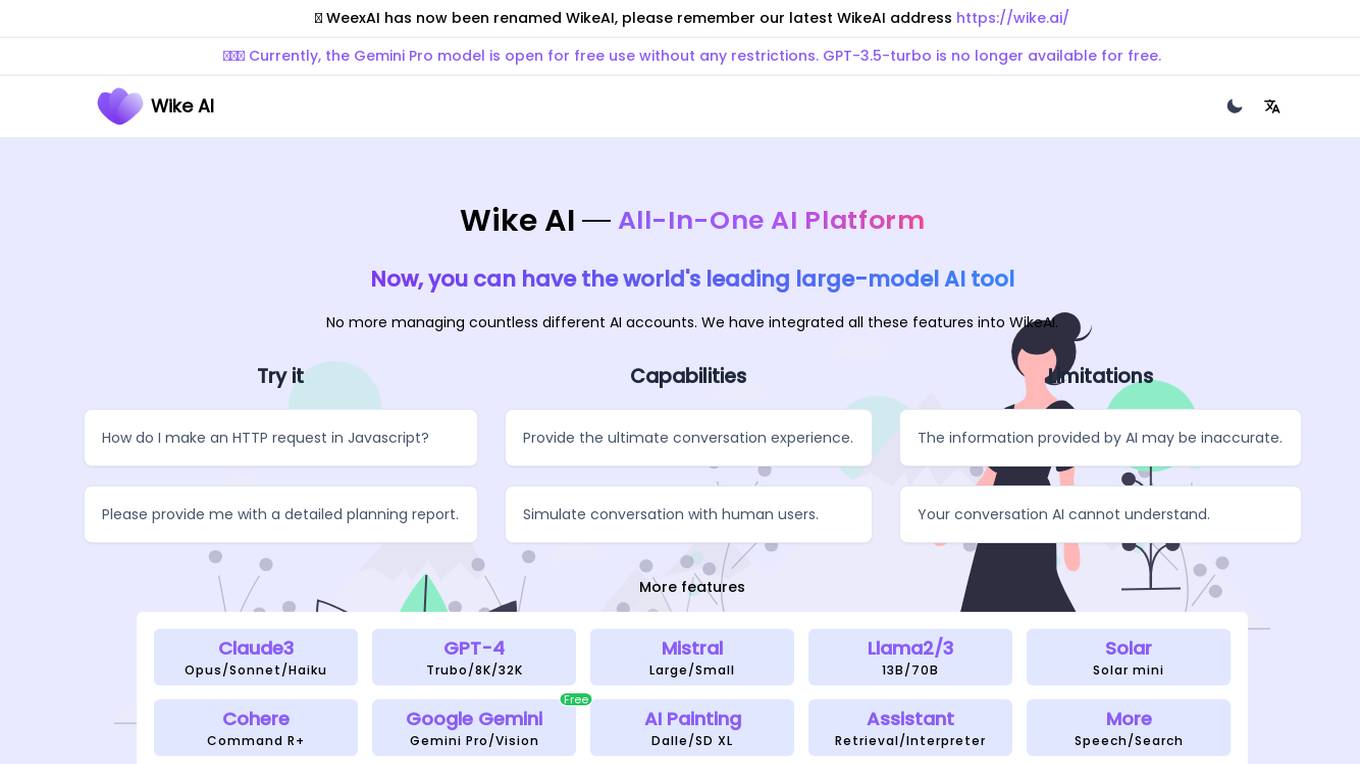

WikeAI

WikeAI is an all-in-one AI platform that provides access to leading AI models such as GPT-4, Claude 3, Mistral, and Llama 2. Its cross-model integration gives users language understanding, speech synthesis, and visual generation without switching between multiple systems. For content writing, WikeAI generates blog articles, product descriptions, social media ads, and more in seconds. Its pricing plans cover a range of needs, from casual users to language creators.

Zuni

Zuni is an AI tool that runs directly in your Chrome sidebar, giving quick access to advanced AI models like ChatGPT at what it claims are 3x speeds. Users can boost productivity with premium models from OpenAI, Google, Anthropic, DeepSeek, Mistral, and Meta. Pricing includes a free tier with 10 message credits per day and a Pro plan with 1,500 message credits per month plus priority access to new models. Zuni also offers a money-back guarantee and flexible billing options.

Salt AI

Salt AI is a development engine tailored for life sciences organizations, aiming to accelerate advancements in the field by enabling faster adoption and utilization of AI technologies. The platform offers reliable and reproducible AI processes, optimized for speed and efficiency, and promotes transparency and collaboration within workflows. With a focus on supporting best-in-class life sciences research models, Salt AI empowers users to enhance their understanding of biological processes through flexible and performant AI solutions.

CallTeacher

CallTeacher is an AI-powered language learning platform that provides personalized lessons and interactive exercises to help learners improve their speaking, listening, reading, and writing skills. The platform uses advanced speech recognition and natural language processing technologies to provide real-time feedback and tailored learning experiences. With CallTeacher, learners can access a vast library of lessons covering various topics and levels, and they can also connect with native speakers for live practice sessions.

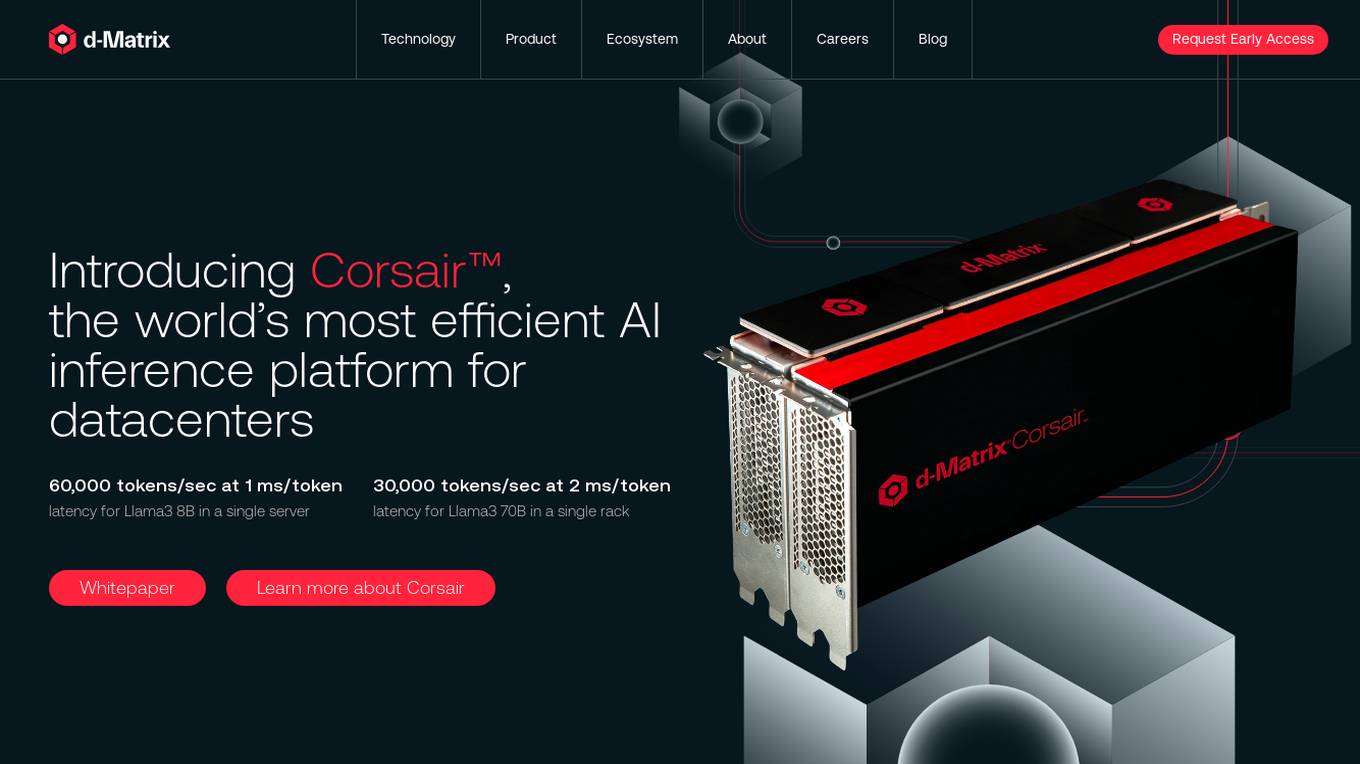

d-Matrix

d-Matrix offers ultra-low-latency batched inference for generative AI. Its Corsair™ platform, which the company calls the world's most efficient AI inference platform for datacenters, targets high performance, efficiency, and scalability for large-scale inference. d-Matrix aims to transform the economics of AI inference with fast, sustainable, and scalable solutions that do not compromise on speed or usability.

Flux AI Image Generator

Flux AI Image Generator is a cutting-edge AI tool developed by Black Forest Labs. It utilizes advanced AI techniques to transform textual prompts into high-quality images, offering enhanced image quality, improved prompt adherence, advanced human anatomy rendering, a variety of artistic styles, and exceptional processing speed. The tool stands out for its hybrid architecture, superior performance, and versatility in generating various types of images, making it suitable for applications like game development and architectural visualization.

1 open-source AI tool

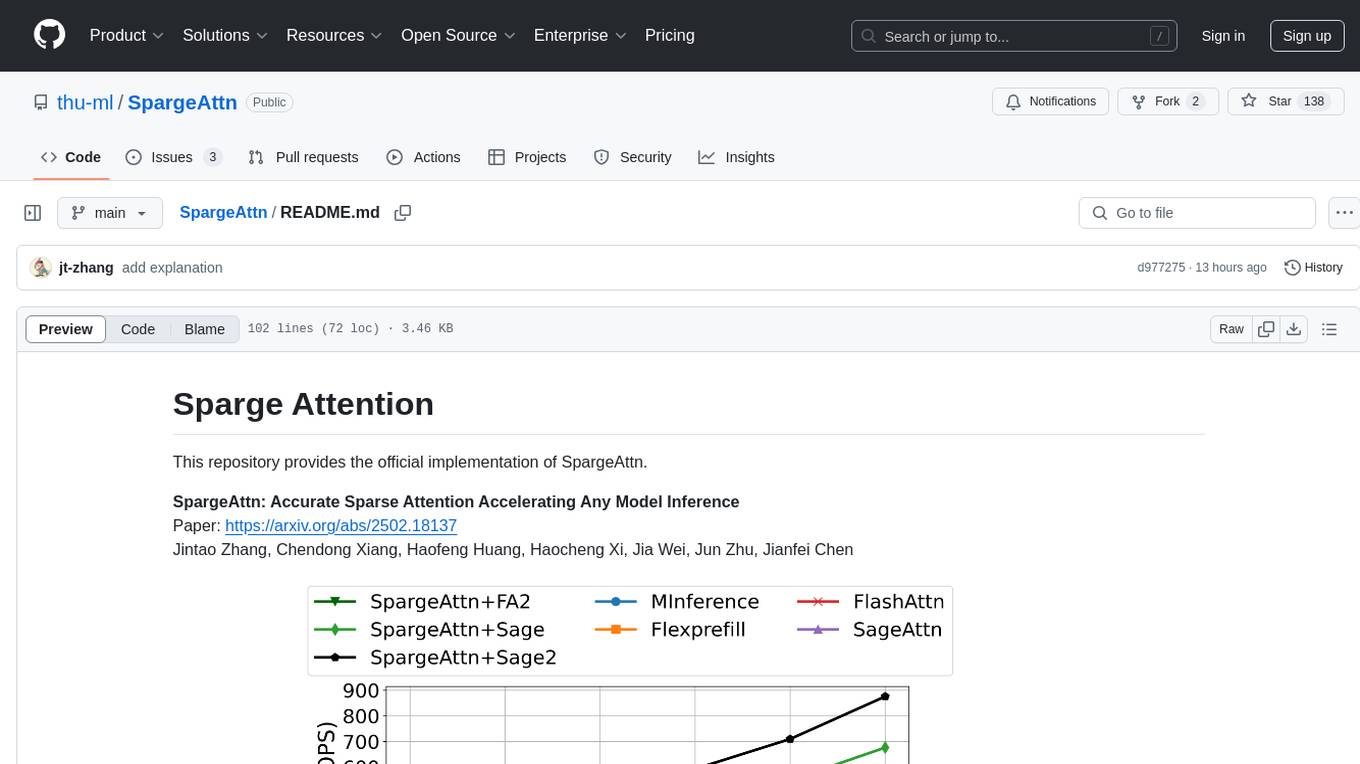

SpargeAttn

SpargeAttn is an official implementation of accurate sparse attention designed to accelerate inference for any model. It delivers significant speedups while maintaining output quality. It builds on SageAttention and SageAttention2 and offers options for different levels of optimization. The package is easy to install, and its APIs can be applied to specific needs. SpargeAttn is particularly useful wherever deep learning models need efficient attention.
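SpargeAttn's actual block-selection and kernel logic is not reproduced here. As a minimal sketch of the general block-sparse attention idea, the pure-Python code below makes one assumption for illustration: key blocks are ranked by the dot product of mean-pooled query and key blocks, and each query block attends only to its top `keep` key blocks. The names `block_sparse_attention` and `dense_attention` are hypothetical and are not SpargeAttn APIs.

```python
import math
from typing import List

Matrix = List[List[float]]


def dot(a: List[float], b: List[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def mean_pool(rows: Matrix) -> List[float]:
    """Average a block of row vectors into one representative vector."""
    return [sum(col) / len(rows) for col in zip(*rows)]


def softmax_weighted_sum(scores: List[float], values: Matrix) -> List[float]:
    """Numerically stable softmax over scores, then a weighted sum of value rows."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [sum(e * v[c] for e, v in zip(exps, values)) / total
            for c in range(len(values[0]))]


def dense_attention(q: Matrix, k: Matrix, v: Matrix) -> Matrix:
    """Reference scaled dot-product attention over every key."""
    scale = 1.0 / math.sqrt(len(q[0]))
    return [softmax_weighted_sum([dot(row, kr) * scale for kr in k], v) for row in q]


def block_sparse_attention(q: Matrix, k: Matrix, v: Matrix,
                           block: int, keep: int) -> Matrix:
    """Each query block attends only to its `keep` most similar key blocks.

    Block similarity is estimated cheaply from mean-pooled queries and keys
    (an assumed selection rule, for illustration only).
    """
    scale = 1.0 / math.sqrt(len(q[0]))
    k_starts = list(range(0, len(k), block))
    k_means = [mean_pool(k[s:s + block]) for s in k_starts]
    out: Matrix = []
    for qs in range(0, len(q), block):
        q_block = q[qs:qs + block]
        qm = mean_pool(q_block)
        # Rank key blocks by pooled similarity and keep the top `keep`.
        ranked = sorted(range(len(k_starts)),
                        key=lambda j: dot(qm, k_means[j]), reverse=True)
        kept = sorted(ranked[:keep])
        # Expand the kept blocks back into individual key indices.
        idx = [t for j in kept
               for t in range(k_starts[j], min(k_starts[j] + block, len(k)))]
        for row in q_block:
            scores = [dot(row, k[t]) * scale for t in idx]
            out.append(softmax_weighted_sum(scores, [v[t] for t in idx]))
    return out
```

With `keep` equal to the number of key blocks, the output matches `dense_attention` exactly; with a smaller `keep`, each query scores only `keep * block` keys instead of all of them, which is where the savings come from. A real speedup also depends on GPU kernels that skip the pruned blocks, which this sketch does not attempt.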

20 OpenAI GPTs

Palm Reader

Moved to https://chat.openai.com/g/g-KFnF7qssT-palm-reader. Gives palm readings from user-uploaded images of hands. The setting that allows OpenAI to use conversation data to improve its models is turned off.

Face Reader

Moved to https://chat.openai.com/g/g-q6GNcOkYx-face-reader. Reads faces to tell fortunes based on Chinese face reading. The setting that allows OpenAI to use conversation data to improve its models is turned off.

Back Propagation

I'm Back Propagation, here to help you understand and apply back propagation techniques to your AI models.

Business Model Advisor

A business model expert that creates detailed reports based on your business ideas.

Create A Business Model Canvas For Your Business

Let's get started by telling me about your business: What do you offer? Who do you serve? Need help with prompt engineering? Reach out on LinkedIn: StephenHnilica

Business Model Canvas Wizard

Helps you build the Business Model Canvas for your venture.

Modelos de Negocios GPT

A step-by-step guide to creating and improving business models using the Business Model Canvas methodology.

Agent Prompt Generator for LLM's

This GPT generates the best possible LLM agent system prompts. You can also specify the target model size, such as 3B, 33B, or 70B.

Face Rating GPT 😐

Evaluates faces and rates them out of 10 ⭐ Provides feedback to help improve your attractiveness!