Best AI tools for Fuse Models

1 - AI tool Sites

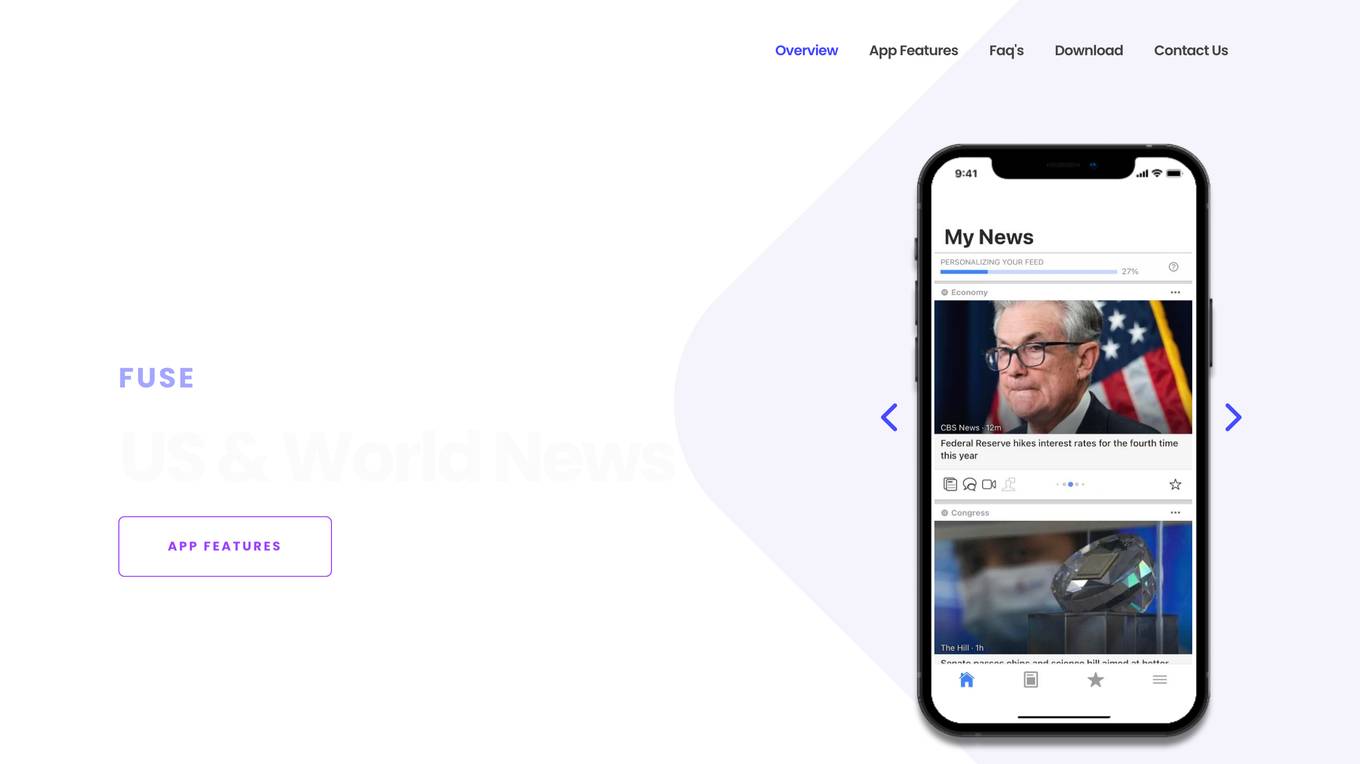

Fuse

Fuse is a smart news aggregator that delivers personalized and complete coverage of top news stories from the U.S. and around the world. Stories are covered from every angle - with articles, videos and opinions from trusted sources. Fuse employs AI/ML algorithms to continuously collect, organize, prioritize and personalize news stories. Articles, videos and opinions are collected from all the major news media outlets and automatically organized by stories and topics.

1 - Open Source AI Tools

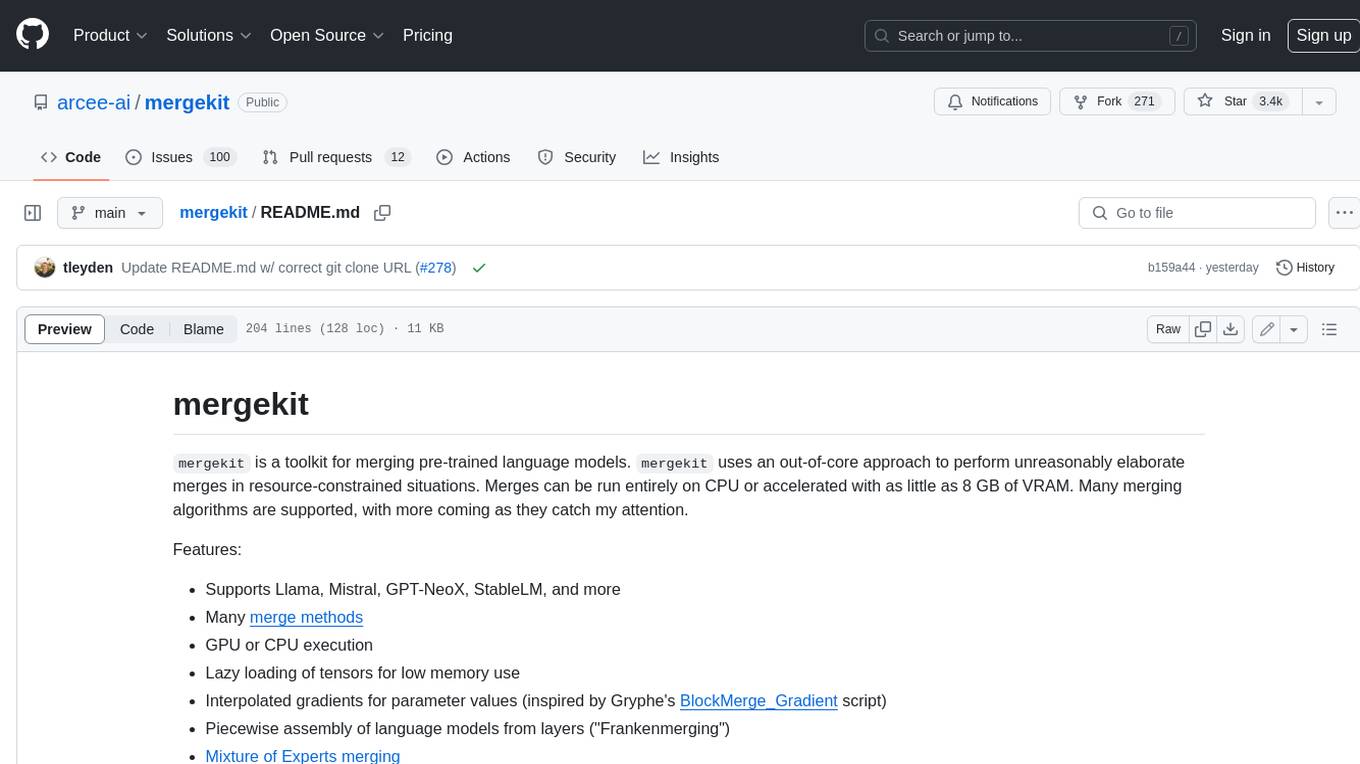

mergekit

mergekit is a toolkit for merging pre-trained language models. It uses an out-of-core approach to perform unreasonably elaborate merges in resource-constrained situations. Merges can be run entirely on CPU or accelerated with as little as 8 GB of VRAM. Many merging algorithms are supported, and the author adds more as they come to their attention.
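To give a rough sense of what merging "out of core" can mean, here is a minimal sketch of a weighted linear merge that handles one tensor at a time, so only a single tensor per model needs to be in memory at once. It is an illustration only and does not use or reflect mergekit's actual API: `merge_linear`, the `loaders` callables, and the plain-list `Tensor` type are all hypothetical names chosen for this example.

```python
from typing import Callable, Iterable, Iterator, List, Tuple

# Stand-in for a real weight tensor (assumption: a flat list of floats).
Tensor = List[float]


def merge_linear(
    names: Iterable[str],
    loaders: List[Callable[[str], Tensor]],
    weights: List[float],
) -> Iterator[Tuple[str, Tensor]]:
    """Yield (name, merged tensor) pairs, processing one tensor at a time."""
    if len(loaders) != len(weights):
        raise ValueError("need exactly one weight per model")
    total = sum(weights)
    if total == 0:
        raise ValueError("weights must not sum to zero")

    for name in names:
        merged = None
        for load, weight in zip(loaders, weights):
            # Each loader fetches a single tensor by name (hypothetical
            # interface); in practice this could read lazily from disk.
            tensor = load(name)
            scaled = [weight / total * value for value in tensor]
            if merged is None:
                merged = scaled
            else:
                merged = [a + b for a, b in zip(merged, scaled)]
        yield name, merged


# Two toy "models" held as dicts of tensor name -> values.
model_a = {"layer.weight": [1.0, 2.0]}
model_b = {"layer.weight": [3.0, 4.0]}

result = dict(
    merge_linear(
        model_a.keys(),
        [model_a.__getitem__, model_b.__getitem__],
        [0.5, 0.5],
    )
)
print(result)  # {'layer.weight': [2.0, 3.0]}
```

Because the function is a generator, a caller could write each merged tensor to disk as it is produced instead of collecting the whole model in memory.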

2 - OpenAI GPTs

PokedexGPT V3

Contains the Entire Pokemon Universe | All Gen Pokemon, Items, Abilities, Berries, Eggs, Region Details, Etc. | Battle Simulation | Upload an Image for the Pokedex to ID | Fuse Pokemon | Explore | Type "Menu" to see all options.