Best AI tools for Evaluate Service Performance

20 - AI tool Sites

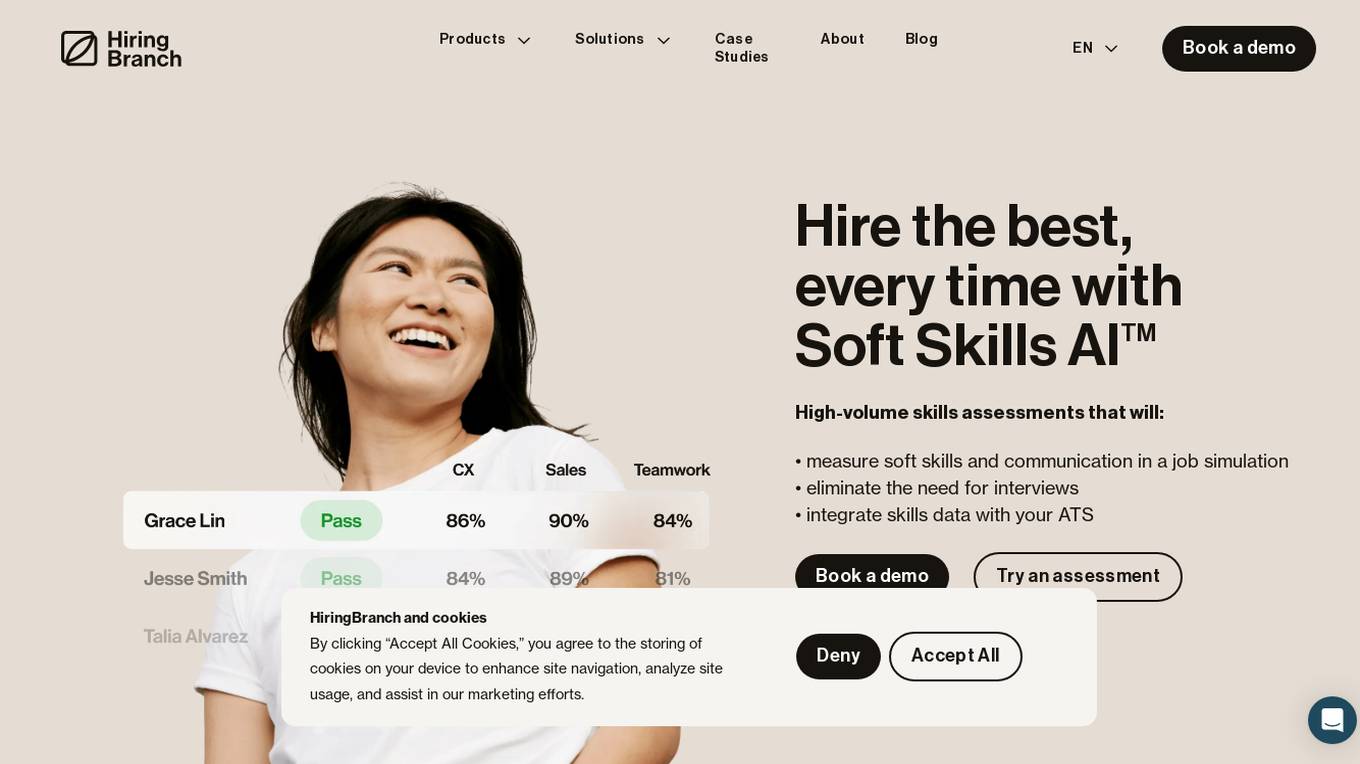

HiringBranch

HiringBranch is an AI-powered platform that offers high-volume skills assessments to help companies hire the best candidates efficiently. The platform accurately measures soft skills and communication through open-ended conversational assessments, eliminating the need for traditional interviews. HiringBranch's AI skills assessments are tailored to various industries such as Telecommunication, Retail, Banking & Insurance, and Contact Centers, providing real-time evaluation of role-critical skills. The platform aims to streamline the hiring process, reduce mis-hires, and improve retention rates for enterprises globally.

Welo Data

Welo Data is an AI tool that specializes in AI benchmarking, model assessment, and high-quality training datasets for AI models. The platform offers services such as supervised fine-tuning, reinforcement learning from human feedback, data generation, expert evaluations, and a data quality framework to support the development of world-class AI models. With over 27 years of experience, Welo Data combines language expertise and AI data to deliver exceptional training and performance evaluation solutions.

CloudExam AI

CloudExam AI is an online testing platform developed by Hanke Numerical Union Technology Co., Ltd. It provides stable and efficient AI online testing services, including intelligent grouping, intelligent monitoring, and intelligent evaluation. The platform ensures test fairness by implementing automatic monitoring level regulations and three random strategies. It prioritizes information security by combining software and hardware to secure data and identity. With global cloud deployment and flexible architecture, it supports hundreds of thousands of concurrent users. CloudExam AI offers features like queue interviews, interactive pen testing, data-driven cockpit, AI grouping, AI monitoring, AI evaluation, random question generation, dual-seat testing, facial recognition, real-time recording, abnormal behavior detection, test pledge book, student information verification, photo uploading for answers, inspection system, device detection, scoring template, ranking of results, SMS/email reminders, screen sharing, student fees, and collaboration with selected schools.

Getbound

Getbound is an AI solutions provider that enables companies to evaluate, customize, and scale technology solutions with artificial intelligence easily and quickly. They offer services such as AI consulting, NLP solutions, MLOps, generative AI development, data engineering services, and computer vision solutions. Getbound empowers businesses to turn data into savings, automate processes, and improve overall performance through AI technologies.

IngestAI

IngestAI is a Silicon Valley-based startup that provides a sophisticated toolbox for data preparation and model selection, powered by proprietary AI algorithms. The company's mission is to make AI accessible and affordable for businesses of all sizes. IngestAI's platform offers a turn-key service tailored for AI builders seeking to optimize AI application development. The company identifies the model best-suited for a customer's needs, ensuring it is designed for high performance and reliability. IngestAI utilizes Deepmark AI, its proprietary software solution, to minimize the time required to identify and deploy the most effective AI solutions. IngestAI also provides data preparation services, transforming raw structured and unstructured data into high-quality, AI-ready formats. This service is meticulously designed to ensure that AI models receive the best possible input, leading to unparalleled performance and accuracy. IngestAI goes beyond mere implementation; the company excels in fine-tuning AI models to ensure that they match the unique nuances of a customer's data and specific demands of their industry. IngestAI rigorously evaluates each AI project, not only ensuring its successful launch but its optimal alignment with a customer's business goals.

PlaymakerAI

PlaymakerAI is an AI-powered platform that provides football analytics and scouting services to football clubs, agents, media companies, betting companies, researchers, and educators. The platform offers access to AI-evaluated football data from hundreds of leagues worldwide, individual player reports, analytics and scouting services, media insights, and a Playmaker Personality Profile for deeper understanding of squad dynamics. PlaymakerAI revolutionizes the way football organizations operate by offering clear, insightful information and expert assistance in football analytics and scouting.

ELSA

ELSA is an AI-powered English speaking coach that helps you improve your pronunciation, fluency, and confidence. With ELSA, you can practice speaking English in short, fun dialogues and get instant feedback from our proprietary artificial intelligence technology. ELSA also offers a variety of other features, such as personalized lesson plans, progress tracking, and games to help you stay motivated.

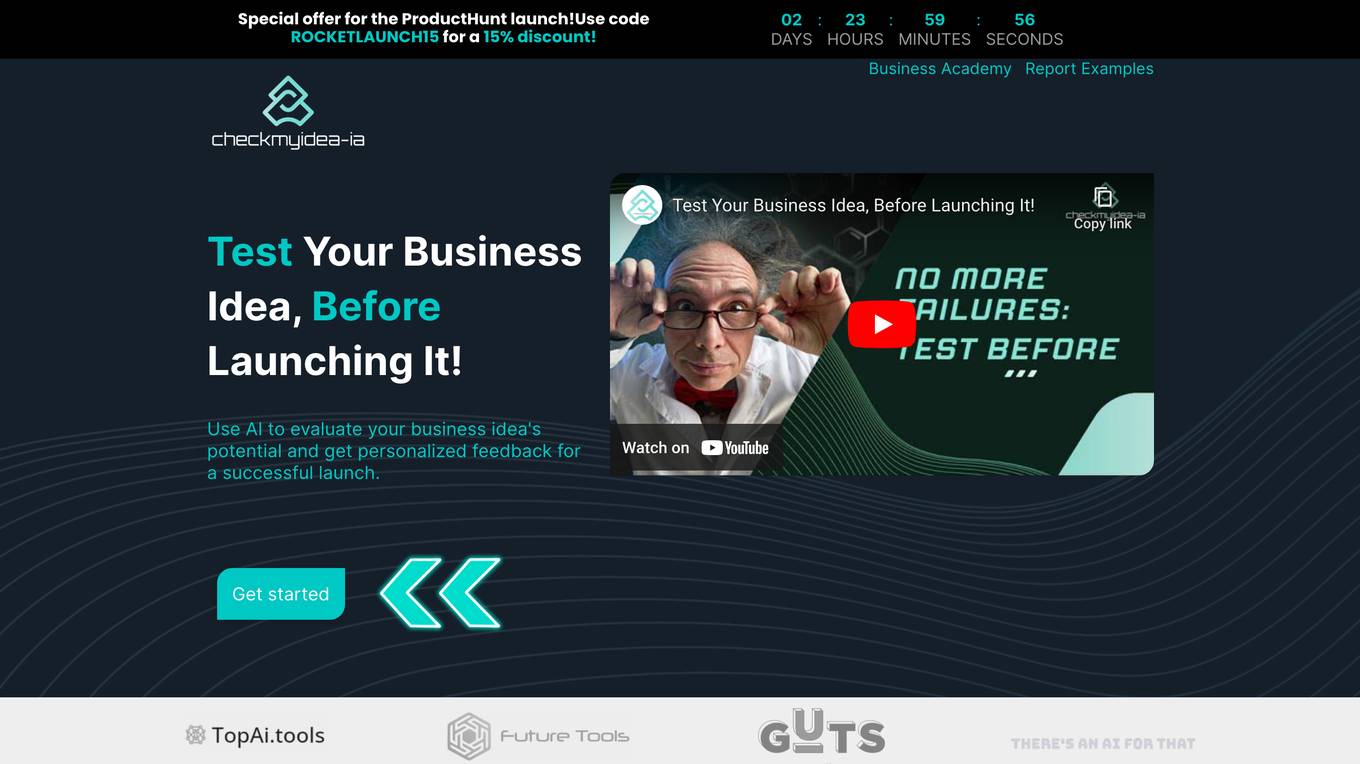

Checkmyidea-IA

Checkmyidea-IA is an AI-powered tool that helps entrepreneurs and businesses evaluate their business ideas before launching them. It uses a variety of factors, such as customer interest, uniqueness, initial product development, and launch strategy, to provide users with a comprehensive review of their idea's potential for success. Checkmyidea-IA can help users save time, increase their chances of success, reduce risk, and improve their decision-making.

A Million Dollar Idea

A Million Dollar Idea is an AI-powered business idea generator that helps entrepreneurs and small business owners come up with new and innovative business ideas. The tool uses a variety of data sources, including industry trends, market research, and user feedback, to generate ideas that are tailored to the user's specific needs and interests. A Million Dollar Idea is a valuable resource for anyone who is looking to start a new business or grow an existing one.

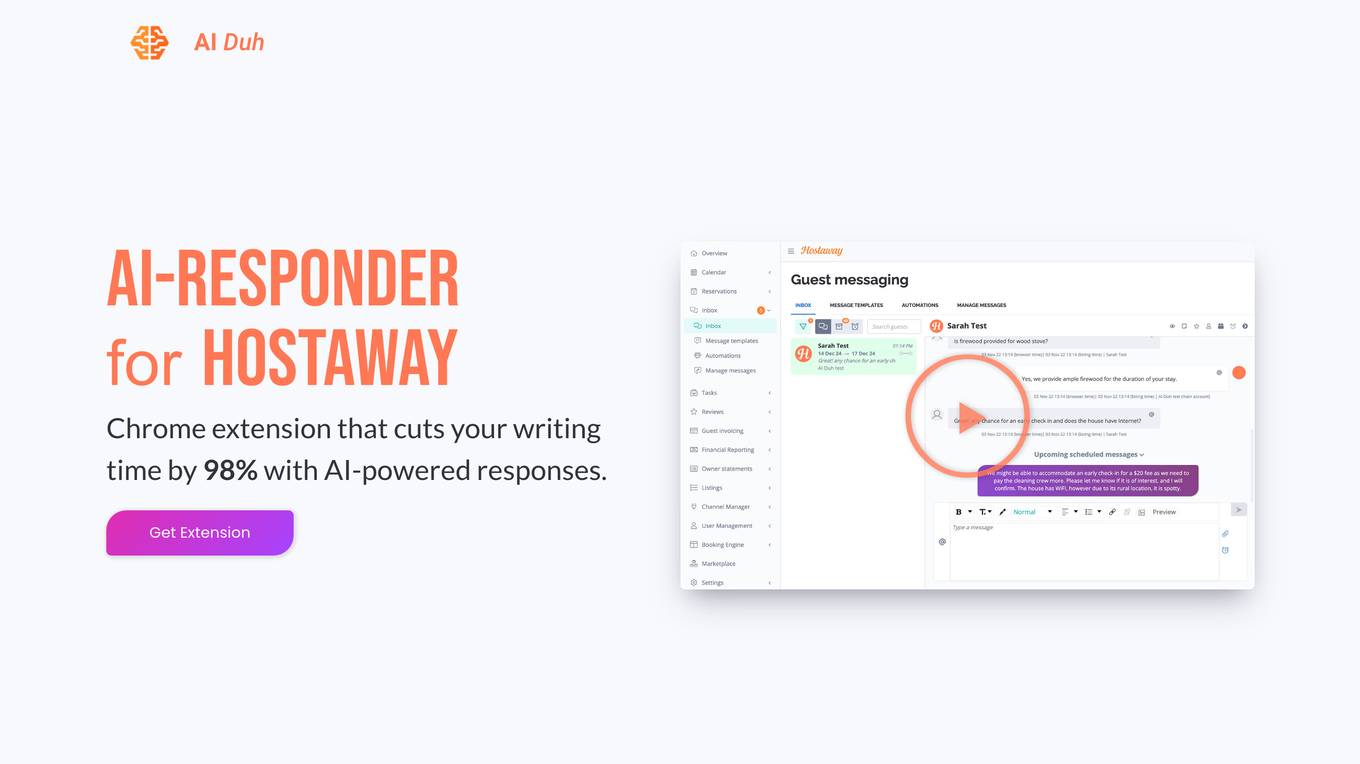

AI-Responder for HostAway

AI-Responder for HostAway is a Chrome extension powered by Kortex Invest AI that reduces writing time by 98%. It offers automated responses based on property-specific knowledge documents, guest data, and previous chat history. The AI tool is designed to provide high-quality responses, with a focus on accuracy and efficiency, particularly in the gambling industry. It helps users navigate complex topics, such as evaluating online casino platforms, by offering quick solutions and a convenient workflow. The tool aims to save time and enhance decision-making processes by leveraging advanced AI models.

Deal Protectors

Deal Protectors is an AI-driven website designed to help users evaluate their car deals to ensure they are getting the best possible price. The platform allows users to upload their deal or input essential details for analysis by an advanced AI machine. Deal Protectors aims to provide transparency, empowerment, and community support in the car purchasing process by comparing deals with national and regional averages. Additionally, the website features a protection forum for sharing dealership experiences, a game called 'Beat The Dealer,' and testimonials from satisfied customers.

iPhone中古買取情報局

iPhone中古買取情報局 is a website that provides detailed information on the factors that lower the trade-in price of used iPhones, such as the condition of the device, evaluation criteria for different storage capacities, and differences between trade-in and resale. The site also offers guidance on preparing for the trade-in process, including data processing and initialization steps, the impact of accessories on evaluation, and understanding market fluctuations based on the timing of trade-ins. Users can learn about the importance of external appearance checks and how they influence the assessment value, as well as tips for a smooth and secure trade-in experience.

Lucida AI

Lucida AI is an AI-driven coaching tool designed to enhance employees' English language skills through personalized insights and feedback based on real-life call interactions. The tool offers comprehensive coaching in pronunciation, fluency, grammar, vocabulary, and tracking of language proficiency. It provides advanced speech analysis using proprietary LLM and NLP technologies, ensuring accurate assessments and detailed tracking. With end-to-end encryption for data privacy, Lucida AI is a cost-effective solution for organizations seeking to improve communication skills and streamline language assessment processes.

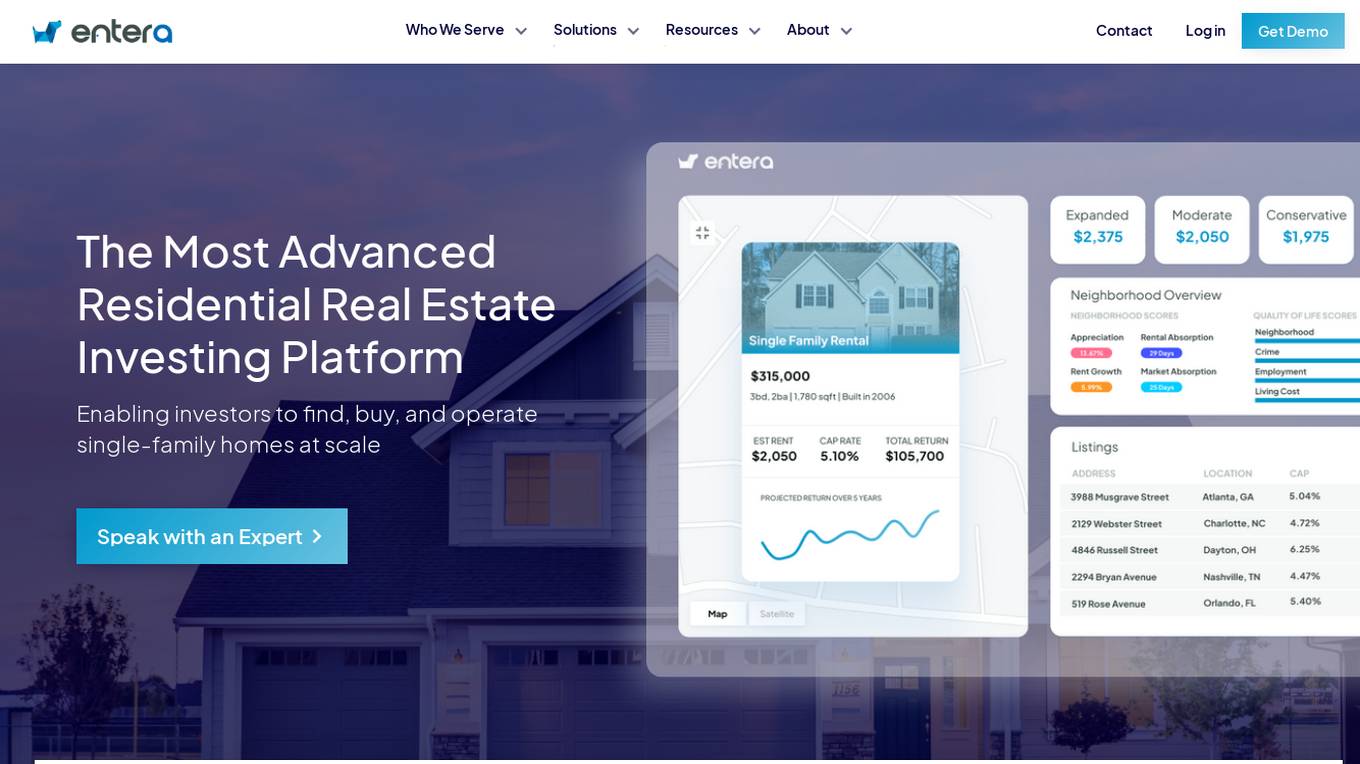

Entera

Entera is an advanced residential real estate investment platform that enables investors to find, buy, and operate single-family homes at scale. Fueled by AI and full-service transaction services, Entera serves operators, funds, agents, and builders by providing access to on and off-market homes, real-time market data, analytics tools, and expert services. The platform modernizes the real estate buying process, helping clients make data-driven investment decisions, scale their operations, and maximize success.

Datumbox

Datumbox is a machine learning platform that offers a powerful open-source Machine Learning Framework written in Java. It provides a large collection of algorithms, models, statistical tests, and tools to power up intelligent applications. The platform enables developers to build smart software and services quickly using its REST Machine Learning API. Datumbox API offers off-the-shelf Classifiers and Natural Language Processing services for applications like Sentiment Analysis, Topic Classification, Language Detection, and more. It simplifies the process of designing and training Machine Learning models, making it easy for developers to create innovative applications.

Wix

Wix.com is a website builder platform that allows users to create stunning websites without the need for coding skills. With a user-friendly interface and a wide range of customizable templates, Wix empowers individuals and businesses to establish their online presence effortlessly. Users can choose from various design elements, add functionalities through apps, and optimize their websites for different devices. Wix also provides hosting services and domain registration to simplify the entire website creation process.

Resume Roaster AI

The website offers a service where users can have their resumes analyzed by an AI tool. Users can submit their resumes to receive feedback and suggestions for improvement. The AI tool evaluates resumes based on various criteria such as formatting, content, and relevance to the job market. It provides users with valuable insights to enhance their resumes and increase their chances of landing their desired job.

HeHealth

HeHealth is an AI-powered application that revolutionizes sexual health by providing early detection services. The platform collaborates with public health entities to transform sexual health care delivery at a population level. Addressing the global silent epidemic of the sexual health crisis, HeHealth aims to bridge the critical gap in accessing timely and confidential sexual health advice. With a focus on STI detection, the application offers a discreet and efficient AI-powered Penis Health Checker, ensuring accessibility, accuracy, and affordability for users. Through a simple survey, scan, and summary process, users can evaluate their penis health in just three steps.

Studious Score AI

Studious Score AI is an AI-powered platform that offers knowledge and skill evaluation services supported by reputable individuals and organizations. The platform aims to revolutionize credentialing by providing a new approach. Studious Score AI is on a mission to establish itself as the global benchmark for assessing skills and knowledge in various aspects of life. Users can explore different categories and unlock their potential through the platform's innovative evaluation methods.

EveryPixel

EveryPixel is a website that provides image and photo analysis services. It offers various tools and features to help users analyze and enhance their images. Users can upload images to the platform and receive detailed insights such as image quality, composition, and aesthetic score. EveryPixel uses advanced algorithms and machine learning techniques to provide accurate and reliable image analysis results. The platform is user-friendly and suitable for photographers, designers, and anyone looking to improve their images.

1 - Open Source AI Tools

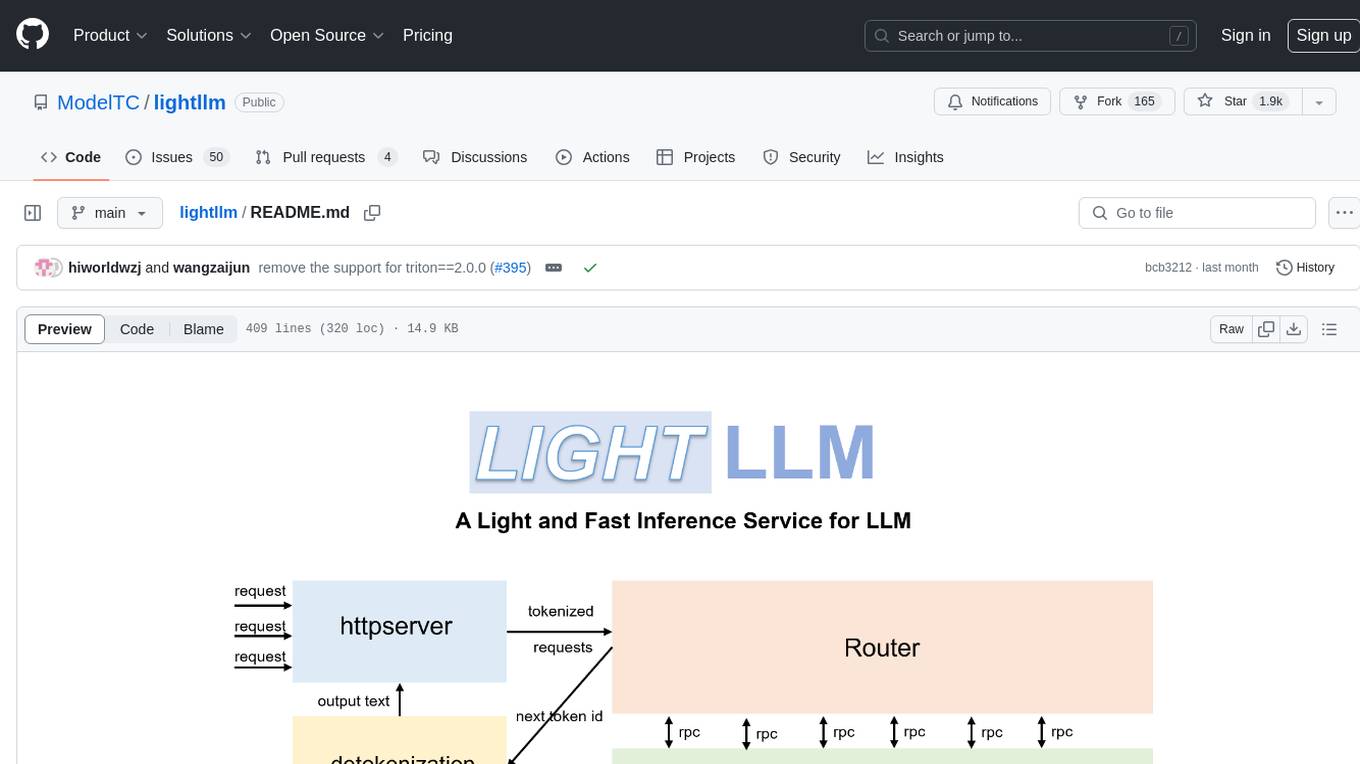

lightllm

LightLLM is a Python-based LLM (Large Language Model) inference and serving framework known for its lightweight design, scalability, and high-speed performance. It offers features like tri-process asynchronous collaboration, Nopad for efficient attention operations, dynamic batch scheduling, FlashAttention integration, tensor parallelism, Token Attention for zero memory waste, and Int8KV Cache. The tool supports various models like BLOOM, LLaMA, StarCoder, Qwen-7b, ChatGLM2-6b, Baichuan-7b, Baichuan2-7b, Baichuan2-13b, InternLM-7b, Yi-34b, Qwen-VL, Llava-7b, Mixtral, Stablelm, and MiniCPM. Users can deploy and query models using the provided server launch commands and interact with multimodal models like QWen-VL and Llava using specific queries and images.
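
As a rough illustration of what querying a served model might look like, the sketch below builds an HTTP request for a locally running inference server. The `/generate` path, the `inputs` and `parameters` payload keys, and the default address `http://127.0.0.1:8080` are assumptions here, not confirmed details of LightLLM's API, and the helper names `build_generate_request` and `send` are hypothetical. Check the project's own documentation for the actual launch command and request format.

```python
import json
import urllib.request


def build_generate_request(prompt, max_new_tokens=32,
                           base_url="http://127.0.0.1:8080"):
    """Build (but do not send) a POST request for a text-generation endpoint.

    The "/generate" path and the "inputs"/"parameters" payload keys are
    assumptions about the server's HTTP API, not confirmed by this page.
    """
    payload = {
        "inputs": prompt,
        "parameters": {"max_new_tokens": max_new_tokens},
    }
    return urllib.request.Request(
        url=f"{base_url}/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request, timeout=30):
    """Send the request to a running server and return the response body as text."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.read().decode("utf-8")


if __name__ == "__main__":
    # Only builds and prints the request; call send(req) once a server is running.
    req = build_generate_request("What is AI?", max_new_tokens=17)
    print(req.full_url)
    print(req.data.decode("utf-8"))
```

Keeping request construction separate from sending makes the payload easy to inspect before any server is available.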

20 - OpenAI GPTs

Wordon, World's Worst Customer | Divergent AI

I simulate tough Customer Support scenarios for Agent Training.

WM Phone Script Builder GPT

I automatically create and evaluate phone scripts, presenting a final draft.

Vorstellungsgespräch Simulator Bewerbung Training

Evaluates your résumé and a job posting, then simulates a job interview followed by an assessment: simply upload your résumé and the posting and start.

Conversation Analyzer

I analyze WhatsApp/Telegram and email conversations to assess the tone of their emotions and read between the lines. Upload your screenshot and I'll tell you what they are really saying! 😀

Argumentum

Stephen Toulmin’s Theory of Argumentation. FIRST TIME? Start with "Good morning!" PRIMEIRA VEZ? Comece com um "Bom dia!"

Rate My {{Startup}}

I will score your mind-blowing startup ideas, helping you evaluate them faster.

Stick to the Point

I'll help you evaluate your writing to make sure it's engaging, informative, and flows well. Uses principles from "Made to Stick."

LabGPT

The main objective of a personalized ChatGPT for reading laboratory tests is to evaluate laboratory test results and create a spreadsheet with the evaluation results and possible solutions.

SearchQualityGPT

As a Search Quality Rater, you will help evaluate search engine quality around the world.

Business Model Canvas Strategist

Business Model Canvas Creator - Build and evaluate your business model

I4T Assessor - UNESCO Tech Platform Trust Helper

Helps you evaluate whether or not tech platforms match UNESCO's Internet for Trust Guidelines for the Governance of Digital Platforms

Investing in Biotechnology and Pharma

🔬💊 Navigate the high-risk, high-reward world of biotech and pharma investing! Discover breakthrough therapies 🧬📈, understand drug development 🧪📊, and evaluate investment opportunities 🚀💰. Invest wisely in innovation! 💡🌐 Not a financial advisor. 🚫💼