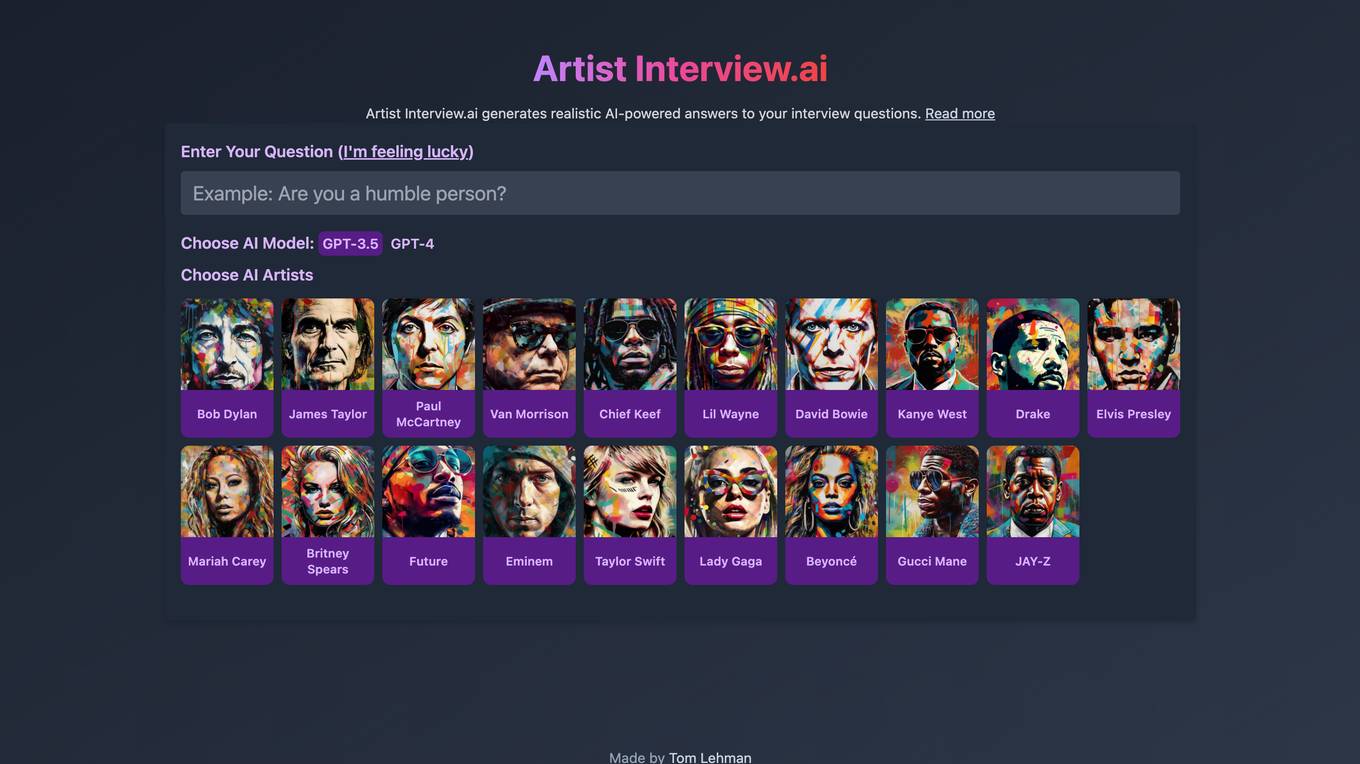

Best AI tools for Error Handling

20 - AI tool Sites

Error Handling Application

The website is currently experiencing an application error, indicating a server-side exception. Users encountering this error are advised to check the server logs for more information. The error digest number provided is 3308662818.

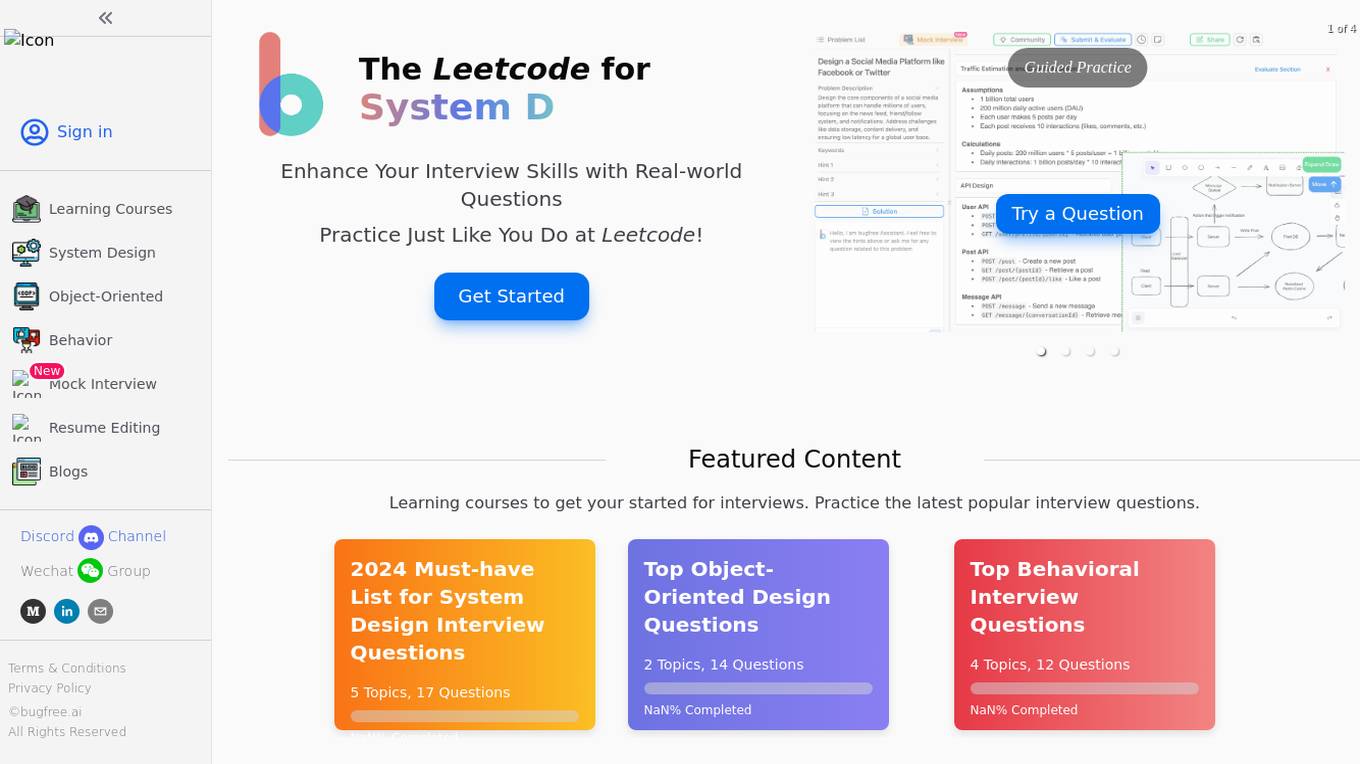

BugFree.ai

BugFree.ai is an AI-powered platform designed to help users practice system design and behavior interviews, similar to Leetcode. The platform offers a range of features to assist users in preparing for technical interviews, including mock interviews, real-time feedback, and personalized study plans. With BugFree.ai, users can improve their problem-solving skills and gain confidence in tackling complex interview questions.

Error 403 Handler

The website displays a '403 Forbidden Server Error' message, indicating that the user does not have permission to access the document. The page suggests reloading the page, going back to the previous page, or visiting the home page. It is a standard error message displayed by web servers when access to a resource is restricted.

Server Error Handler

The website encountered a server error, preventing it from fulfilling the user's request. The error message indicates a temporary issue that may be resolved by trying again after 30 seconds.

Server Error Handler

The website encountered a server error and could not complete the request. Users are advised to try again in 30 seconds. The error message indicates a temporary issue with the server's functionality.

Application Error

The website is experiencing an application error, which indicates a technical issue preventing the proper functioning of the application. An application error can occur due to various reasons such as bugs in the code, server issues, or incorrect user input. It is essential to troubleshoot and resolve application errors promptly to ensure the smooth operation of the website.

Application Error

The website is experiencing an application error, which indicates a technical issue preventing the proper functioning of the application. An application error can occur due to various reasons such as software bugs, server issues, or incorrect user inputs. It is essential for the website administrators to troubleshoot and resolve the error promptly to ensure a seamless user experience.

404 Error Page

The website page displays a 404 error message indicating that the deployment cannot be found. It provides a code (DEPLOYMENT_NOT_FOUND) and an ID (sin1::4wq5g-1718736845999-777f28b346ca) for reference. Users are advised to consult the documentation for further information and troubleshooting.

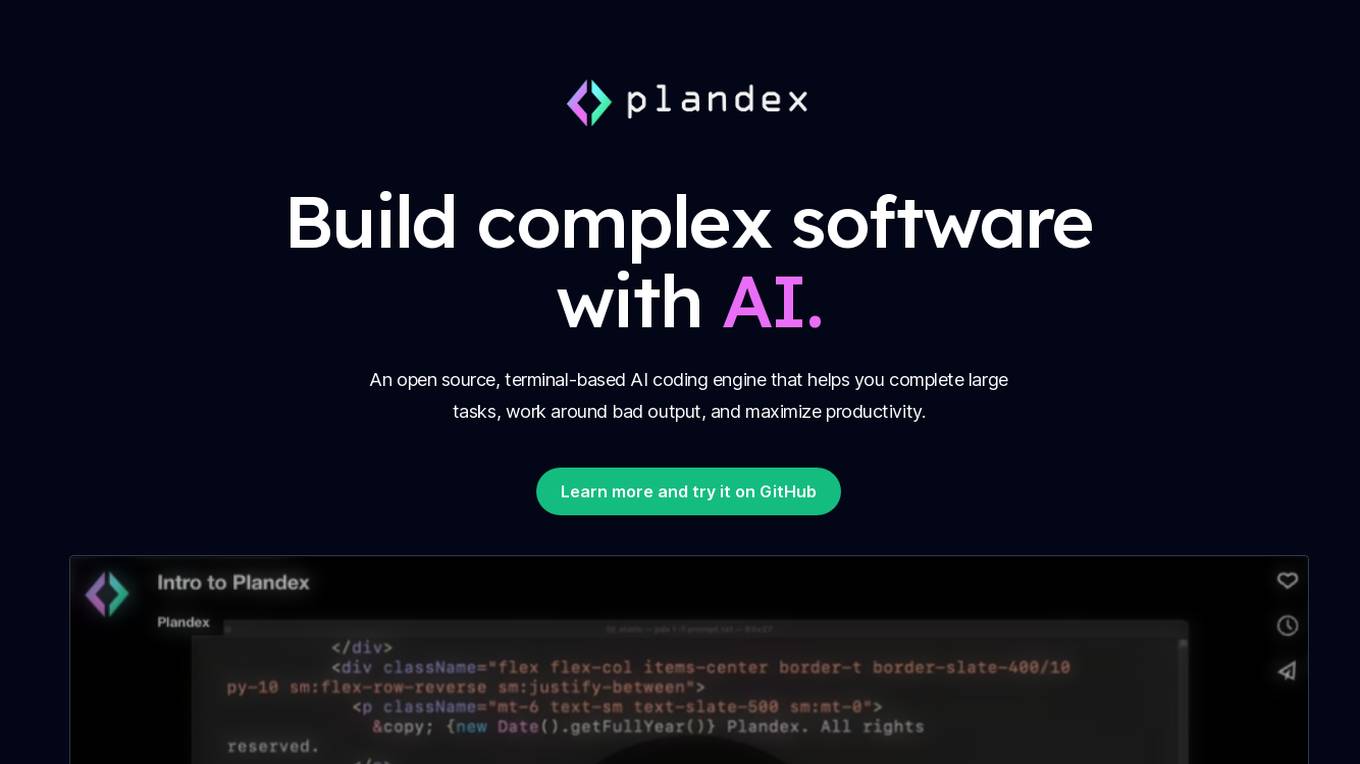

Plandex

Plandex is an open-source, terminal-based AI coding engine that assists developers in completing complex programming tasks, handling problematic output, and enhancing productivity. It is designed to simplify software development by leveraging AI capabilities.

Composio

Composio is an integration platform for AI Agents and LLMs that allows users to access over 150 tools with just one line of code. It offers seamless integrations, managed authentication, a repository of tools, and powerful RPA tools to streamline and optimize the connection and interaction between AI Agents/LLMs and various APIs/services. Composio simplifies JSON structures, improves variable names, and enhances error handling to increase reliability by 30%. The platform is SOC 2 Type II compliant, ensuring maximum security of user data.

502 Bad Gateway

The website seems to be experiencing technical difficulties at the moment, showing a '502 Bad Gateway' error message. This error typically occurs when a server acting as a gateway or proxy receives an invalid response from an upstream server. The 'nginx' reference in the error message indicates that the server is using the Nginx web server software. Users encountering this error may need to wait for the issue to be resolved by the website's administrators or try accessing the site at a later time.
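As a rough illustration of the "try again later" advice for gateway errors, the sketch below retries a request only when the status code looks transient. The function name `fetch_with_retry`, the `send` callable, the set of transient codes, and the 30-second default delay are all assumptions made for this example; they are not taken from any of the listed sites.

```python
import time
from typing import Callable

# Status codes treated as transient here; this set is an illustrative assumption.
TRANSIENT_STATUSES = {502, 503, 504}


def fetch_with_retry(
    send: Callable[[], int],
    attempts: int = 3,
    delay_seconds: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Call `send` until it returns a non-transient status or attempts run out.

    `send` is a hypothetical callable that performs one request and
    returns its HTTP status code.
    """
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    status = send()
    for _ in range(attempts - 1):
        if status not in TRANSIENT_STATUSES:
            break  # success, or an error such as 403 that retrying will not fix
        sleep(delay_seconds)  # wait before asking the gateway again
        status = send()
    return status
```

A 403 Forbidden response is returned immediately in this sketch, since waiting does not change the caller's permissions, whereas a 502 is retried after the delay.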

403 Forbidden OpenResty

The website is currently displaying a '403 Forbidden' error message, which indicates that the server understood the request but refuses to authorize it. This error is often encountered when trying to access a webpage without proper permissions. The 'openresty' mentioned in the message refers to a web platform based on NGINX and LuaJIT, commonly used for building high-performance web applications. The website may be experiencing technical issues or undergoing maintenance.

OpenResty Server

The website is currently displaying a '403 Forbidden' error, which indicates that the server understood the request but refuses to authorize it. This error is typically caused by insufficient permissions or misconfiguration on the server side. The 'openresty' message suggests that the server is using the OpenResty web platform, which is based on NGINX and the Lua programming language. Users encountering this error may need to contact the website administrator for assistance in resolving the issue.

Page Publisher

The website is a platform where users can publish and share their pages. If a user encounters a 404 error, they are advised to ensure that their page is public and named 'Index'. The page will go live within a few seconds after being published for the first time.

403 Forbidden Error Handler

The website encountered a 403 Forbidden error, indicating that the user does not have permission to access the resource. This error is commonly encountered when trying to access a webpage or resource without the necessary authorization. The ErrorDocument was unable to handle the request, resulting in the Forbidden error message.

N/A

The website appears to be displaying a '403 Forbidden' error message, which indicates that the server is refusing to respond to the request. This could be due to various reasons such as insufficient permissions, server misconfiguration, or access restrictions. The 'openresty' mentioned in the text is likely the web server software being used. It is not an AI tool or application, but rather a server-side software for handling web requests.

OpenResty

The website is currently displaying a '403 Forbidden' error, which indicates that the server understood the request but refuses to authorize it. This error is often encountered when trying to access a webpage without the necessary permissions. The 'openresty' mentioned in the text is likely the software running on the server. It is a web platform based on NGINX and LuaJIT, known for its high performance and scalability in handling web traffic. The website may be using OpenResty to manage its server configurations and handle incoming requests.

Accountable

Accountable is an AI-powered assistant designed to help individuals manage their taxes and finances effortlessly. The application offers a comprehensive solution for handling tax declarations error-free and stress-free. Users can rely on the AI Assistant to answer tax-related questions, ensure accurate tax returns, and provide personalized tax tips. Accountable also assists in organizing paperwork, generating professional invoices, scanning receipts for tax deductions, and offering insights on tax savings. With a user-friendly interface and top-notch customer support, Accountable simplifies tax management for freelancers, entrepreneurs, and small business owners.

Humanizey

Humanizey is an AI humanizer tool designed to transform AI-generated text into human-like content to bypass AI detection systems such as Turnitin and GPTZero. It ensures a 100% human score and plagiarism-free output, supporting over 100 languages. Humanizey offers error-free and plagiarism-free rewriting, an integrated AI detector, undetectable AI writing, and flexible mode options to enhance content uniqueness and SEO ranking. With 24/7 support and secure data handling, Humanizey is a reliable solution for writers, bloggers, students, and SEO experts seeking to evade AI detection and maintain content distinctiveness.

Koxy AI

Koxy AI is an AI-powered serverless back-end platform that allows users to build globally distributed, fast, secure, and scalable back-ends with no code required. It offers features such as live logs, smart error handling, integration with over 80,000 AI models, and more. Koxy AI is designed to help users focus on building the best service possible without wasting time on security and latency concerns. It provides a NoSQL JSON-based database, real-time data synchronization, cloud functions, and a drag-and-drop builder for API flows.

1 - Open Source AI Tools

Mastering-GitHub-Copilot-for-Paired-Programming

Mastering GitHub Copilot for AI Paired Programming is a comprehensive course designed to equip you with the skills and knowledge necessary to harness the power of GitHub Copilot, an AI-driven coding assistant. Through a series of engaging lessons, you will learn how to seamlessly integrate GitHub Copilot into your workflow, leveraging its autocompletion, customizable features, and advanced programming techniques. This course is tailored to provide you with a deep understanding of AI-driven algorithms and best practices, enabling you to enhance code quality and accelerate your coding skills. By embracing the transformative power of AI paired programming, you will gain the tools and confidence needed to succeed in today's dynamic software development landscape.

20 - OpenAI Gpts

Word Problem Solver

Expert at solving and explaining word problems, with error correction.

Discrete Mathematics

Precision-focused Language Model for Discrete Mathematics, ensuring unmatched accuracy and error avoidance.

A Smart Home Assistant

Have a quick question regarding your smart home setup? Chat with #SmartHomeAssistant for pairing, error codes, and tips on #ConnectedDevices. Your essential guide to #SmartHomeAutomation. #TechSupport

Log Analyzer

I'm designed to help you analyze any logs, such as Linux system logs, Windows logs, security logs, access logs, and error logs. Please do not share information that you would like to keep private. The author does not collect or process any personal data.

GPT API Schema Builder

Create an API spec for your custom GPT. Instantly turn API docs into OpenAPI specs with our tool! Paste a cURL or a doc link, and get a perfect spec in a snap. It’s quick, easy, and error-free. Perfect for devs who want to save time and hassle.

Tech Support Advisor

From setting up a printer to troubleshooting a device, I’m here to help you step-by-step.

Ask Cris about File Maker

An experiment in personal FileMaker guidance from the collective works of lifetime award-winning FileMaker trainer, Cris Ippolite. Not just links to resources, but direct access to 20+ years of custom training curriculum combined with expert AI instruction without the noise of external web links.

TYPO3 GPT

Specialist for technical and editorial TYPO3 support. // FEATURES: Optional browsing via external API with 'web: search query' and optimized GitHub access.