Best AI tools for Deploy Support Bot

20 - AI tool Sites

SupBot

SupBot is an AI-powered support solution that enables businesses to handle customer support instantly. It offers the ability to train and deploy AI support bots with ease, allowing for seamless integration into any website. With a user-friendly interface, SupBot simplifies the process of setting up and customizing support bots to meet specific requirements. The platform is designed to enhance customer service efficiency and streamline communication processes through the use of AI technology.

ibl.ai

ibl.ai is a generative AI platform that focuses on education, providing cutting-edge solutions for institutions to create AI mentors, tutoring apps, and content creation tools. The platform empowers educators by giving them full control over their code, data, and models. With advanced features and support for both web and native mobile platforms, ibl.ai seamlessly integrates with existing infrastructure, making it easy to deploy across organizations. The platform is designed to enhance learning experiences, foster critical thinking, and engage students deeply in educational content.

Kapa.ai

Kapa.ai is an AI tool that builds accurate AI agents from technical documentation and various other sources. It helps deploy AI assistants across support, documentation, and internal teams in a matter of hours. Trusted by over 200 leading companies with technical products, Kapa.ai offers pre-built integrations, customer results, and an analytics platform to track user questions and content gaps. The tool focuses on providing grounded answers, connecting existing sources, and ensuring data security and compliance.

BotGPT

BotGPT is a 24/7 custom AI chatbot assistant for websites. It offers a ChatGPT-style assistant grounded in the user's own data: users upload files or crawl their website, start asking questions, and deploy a custom chatbot on their site within minutes. The platform provides a simple and efficient way to enhance customer engagement through AI-powered chatbots.

BotStacks

BotStacks is a conversational AI platform for designing, building, and deploying AI chatbots, which it brands as AI sidekicks. With features such as a canvas designer, a knowledge base, and chat SDKs, BotStacks lets users create personalized and scalable AI assistants. The application focuses on an easy design flow, seamless integration, customization, scalability, and accessibility for non-technical users.

Synthreo

Synthreo is a Multi-Tenant AI Automation Platform designed for Managed Service Providers (MSPs) to empower businesses by streamlining operations, reducing costs, and driving growth through intelligent AI agents. The platform offers cutting-edge AI solutions that automate routine tasks, enhance decision-making, and facilitate collaboration between human teams and digital labor. Synthreo's AI agents provide transformative advantages for businesses of all sizes, enabling operational efficiency and strategic growth.

AudioCodes VoiceAI Connect

AudioCodes VoiceAI Connect is a cloud-based platform that enables developers to build and deploy voicebots. It provides a range of features, including connectivity to any contact center or SIP trunk, support for any speech engine or bot framework, and the ability to reduce the cost of speech services by up to 40%. VoiceAI Connect is available as a fully managed service (Enterprise edition) and as a self-service SaaS solution (AudioCodes Live Hub) to support any deployment, integration, or regulatory needs.

Graphlogic.ai

Graphlogic.ai is an AI-powered platform that offers Conversational AI solutions through text and voice bots. It provides partner-enabled services for various industries, including HR, customer support, marketing, and internal task management. The platform features AI-powered chatbots with goal-oriented NLU and rule-based bots, seamless integrations with CRM systems, and 24/7 omnichannel availability. Graphlogic.ai aims to transform and speed up customer service and FAQ conversations by providing instant replies in a human-like manner. It also offers dedicated HR manager bots, hiring assistants for mass recruitment, responsible managers for internal tasks, and outbound marketing coordinators.

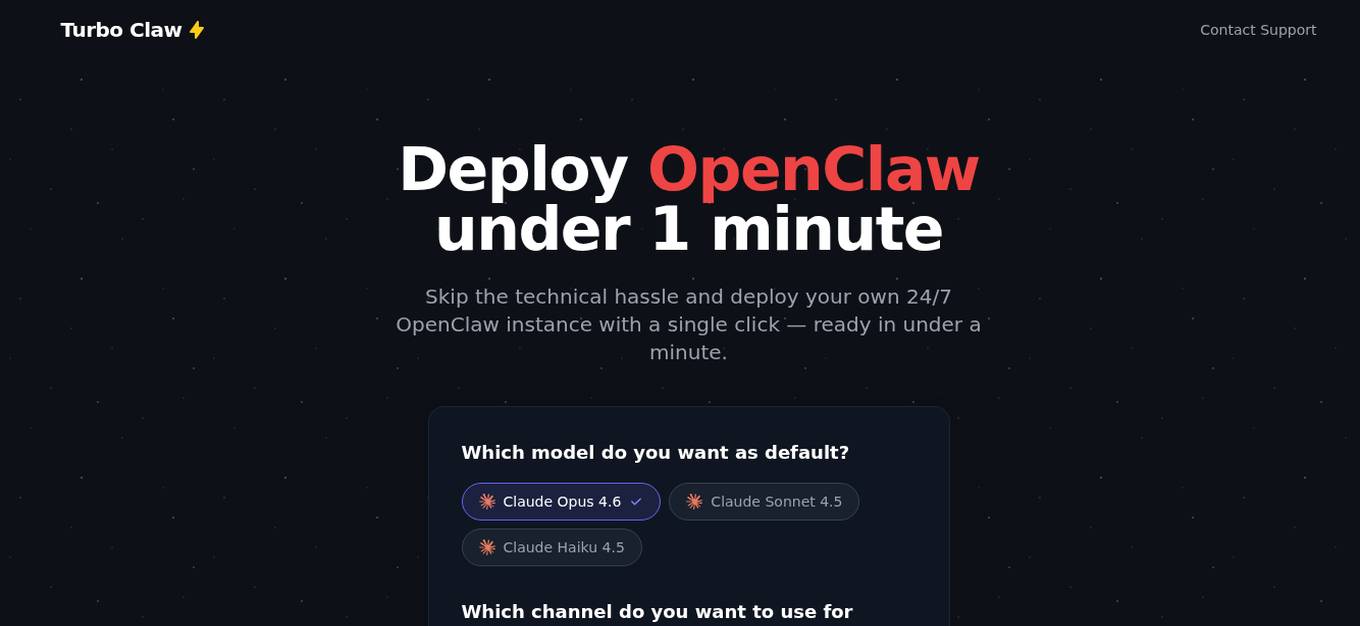

TurboClaw

TurboClaw is an AI-powered platform that allows users to deploy their own 24/7 OpenClaw instance in under a minute, eliminating technical hassles. Users can choose from different AI models and messaging channels, and the platform handles server management, Docker setup, SSL configuration, and OpenClaw deployment. With TurboClaw, users can quickly set up AI bots for various tasks such as drafting replies, translating messages, organizing inboxes, managing subscriptions, finding best prices online, generating content ideas, setting goals, and more.

SingleStore

SingleStore is a real-time data platform designed for apps, analytics, and gen AI. It offers faster hybrid vector + full-text search, fast-scaling integrations, and a free tier. SingleStore can read, write, and reason on petabyte-scale data in milliseconds. It supports streaming ingestion, high concurrency, first-class vector support, record lookups, and more.

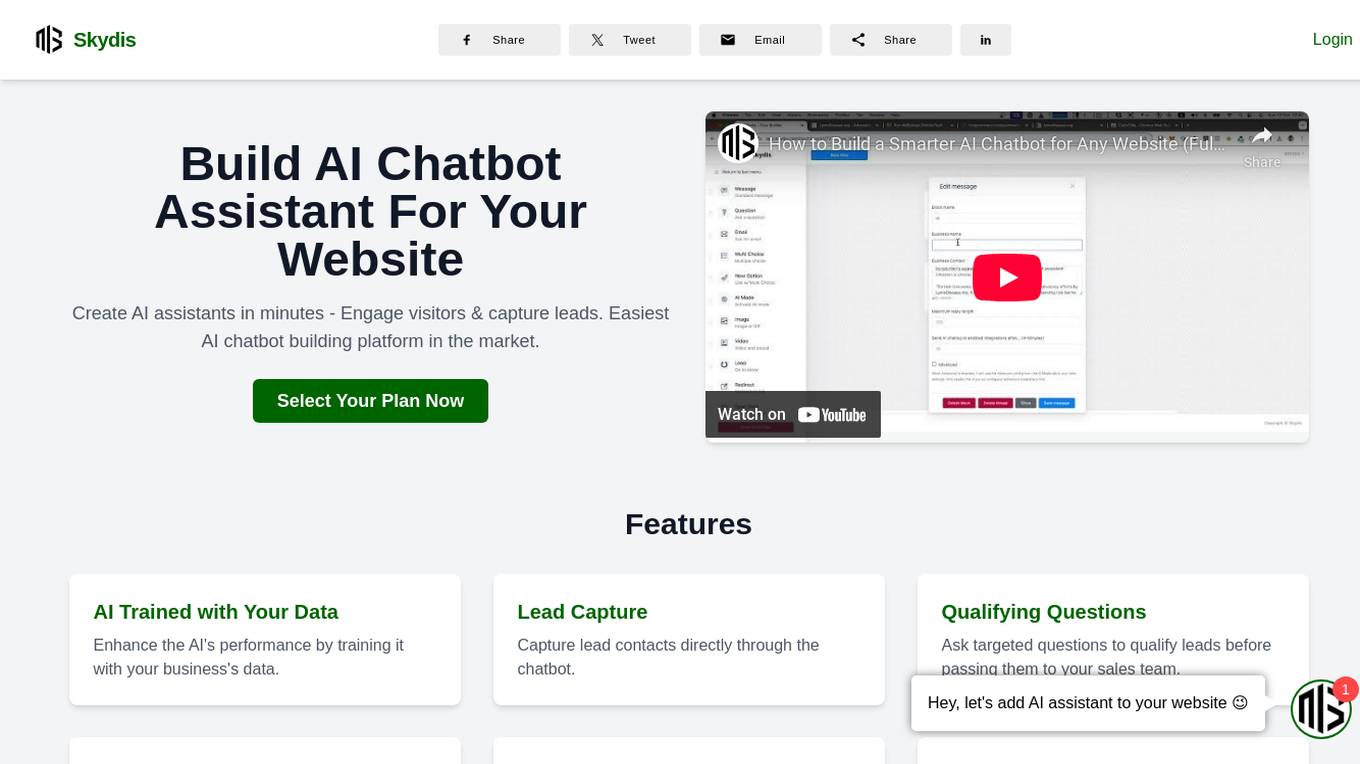

Skydis

Skydis is an AI chatbot building platform that allows users to create AI assistants for their websites in minutes. The platform offers features such as AI training with user data, lead capture, qualifying questions, lead export & Zapier integration, drag & drop bot builder, AI smart answers, 24/7 operation, customizable appearance, and easy integration on any website or platform. Skydis prioritizes user privacy and confidentiality by securely storing data and not publishing content. It offers various pricing plans to cater to different business needs and provides advanced AI capabilities to engage visitors and capture leads effectively.

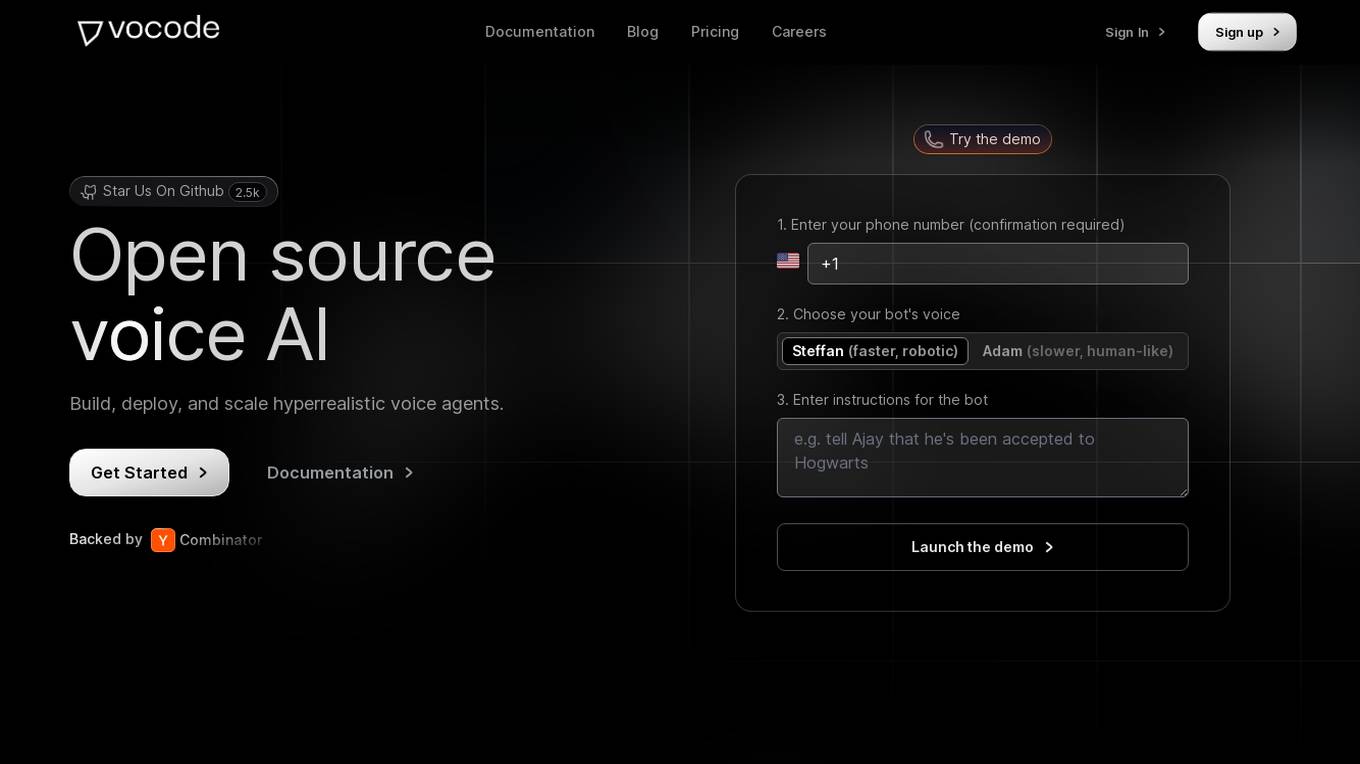

Vocode

Vocode is an open-source voice AI platform that enables users to build, deploy, and scale hyperrealistic voice agents. It offers fully programmable voice bots that can be integrated into workflows without the need for human intervention. With multilingual capability, custom language models, and the ability to connect to knowledge bases, Vocode provides a comprehensive solution for automating actions like scheduling, payments, and more. The platform also offers analytics and monitoring features to track bot performance and customer interactions, making it a valuable tool for businesses looking to enhance customer support and engagement.

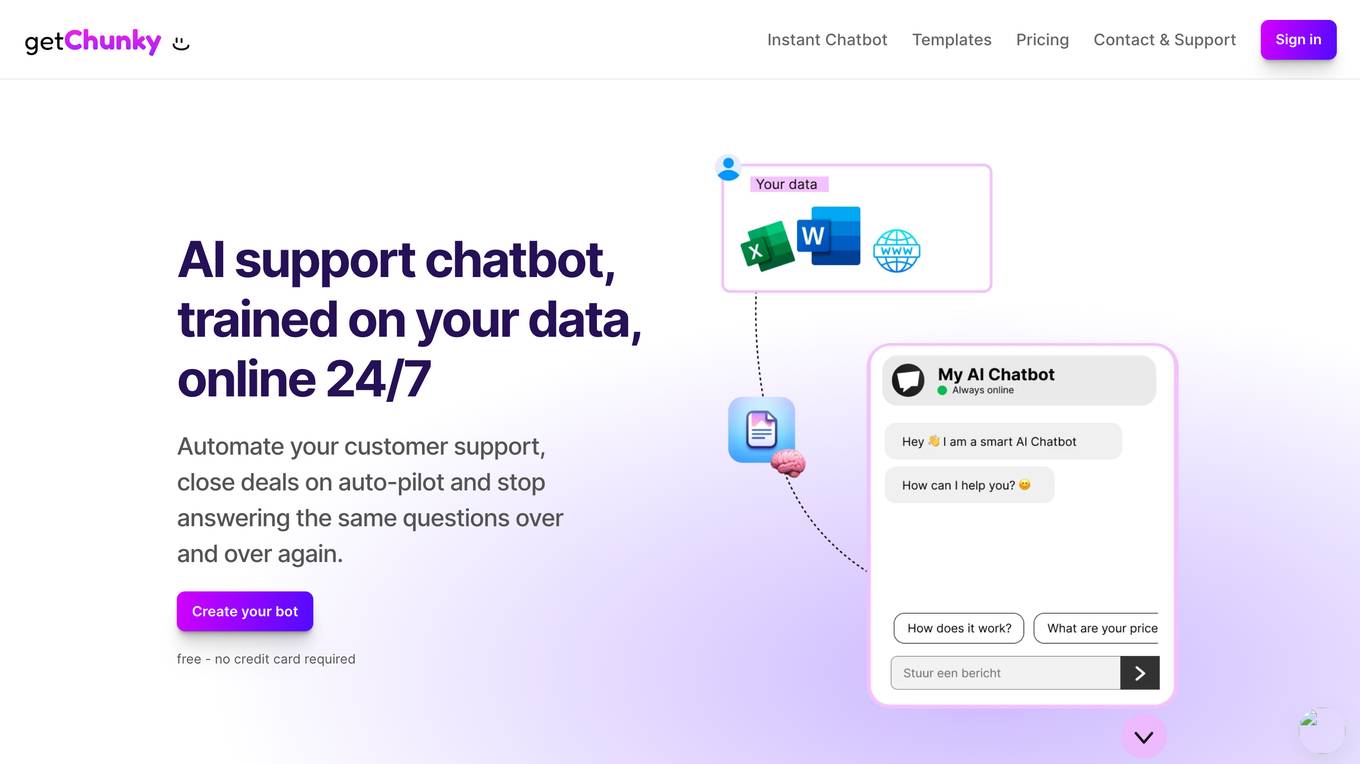

Chunky

Chunky is an AI chatbot builder that allows users to create human-like chatbots effortlessly. With Chunky, users can automate customer support, answer frequently asked questions, and save time by setting up their own AI-powered bot in just a few minutes. The platform offers a user-friendly experience with no coding required, integrated chatbot deployment on websites, and customization options for branding. Chunky utilizes the ChatGPT API and Embeddings provided by OpenAI to deliver smart chatbot interactions in close to 95 languages.

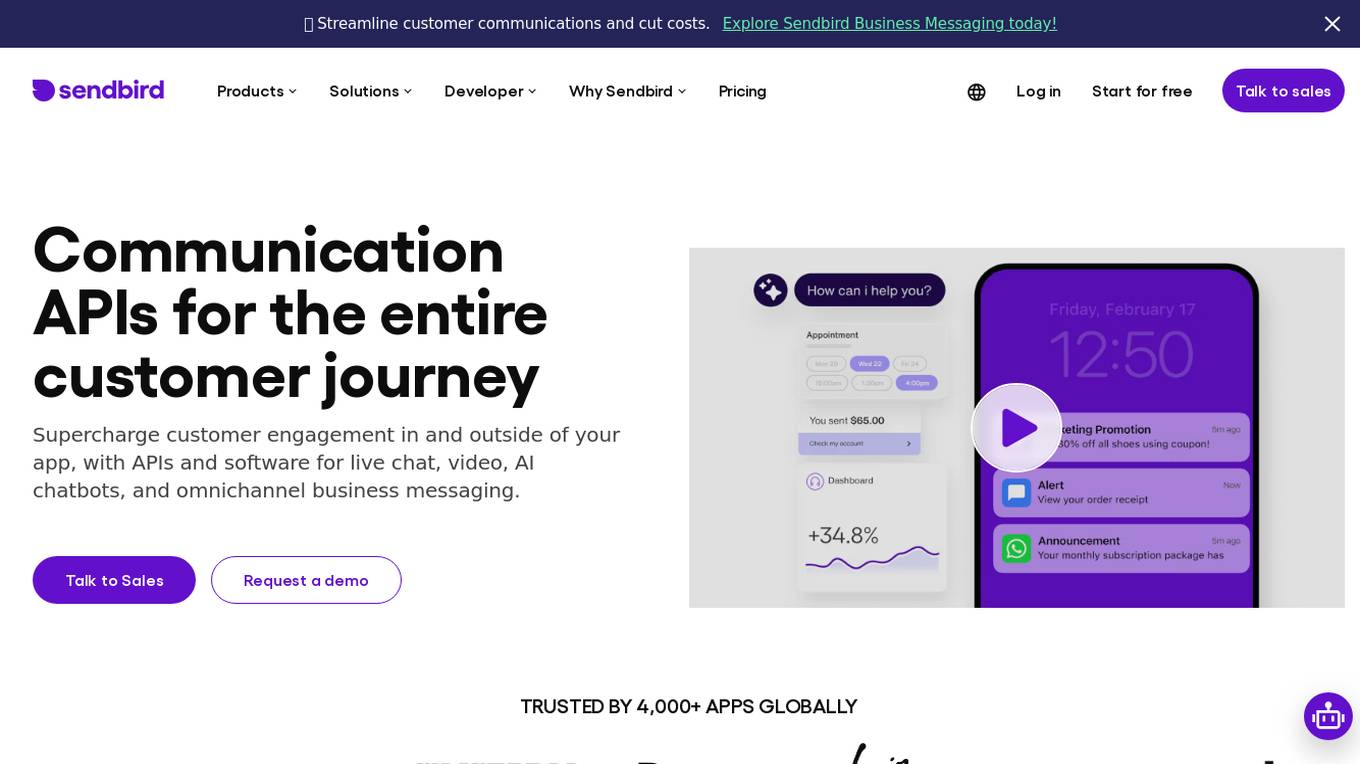

Sendbird

Sendbird is a communication API platform that offers solutions for chat, AI chatbots, SMS, WhatsApp, KakaoTalk, voice, and video. It provides tools for live chat, video, and omnichannel business messaging to enhance customer engagement both within and outside of applications. With a focus on enterprise-level scale, security, and compliance, Sendbird's platform is trusted by over 4,000 apps globally. The platform offers intuitive APIs, sample apps, tutorials, and free trials to help developers easily integrate communication features into their applications.

SiteRetriever

SiteRetriever is a self-hosted AI chatbot platform that enables users to build and deploy chatbots on their websites without monthly fees. Because it is fully self-contained and self-hosted, users keep full control over data and costs. Features include easy embedding, full customization, fast responses, and answers powered by advanced language models. Users can upload content, configure bot settings, and embed the chatbot on their site within minutes. Access is currently through a waitlist.

GrapixAI

GrapixAI is a provider of low-cost cloud GPU rental services and AI server solutions. The company's focus on flexibility, scalability, and current hardware supports a variety of AI applications in both local and cloud environments. GrapixAI advertises the lowest prices for on-demand GPUs such as the RTX 4090, RTX 3090, RTX A6000, RTX A5000, and A40. The platform provides a Docker-based container ecosystem for quick software setup, a GPU search console, customizable pricing options, multiple security levels, GUI and CLI interfaces, a real-time bidding system, and personalized customer support.

FARSPEAK.AI

FARSPEAK.AI is an AI application that offers RESTful AI for databases, allowing users to query databases using natural language and deploy AI agents to enhance data processing. The application supports MongoDB Atlas, provides up-to-date embeddings, and offers both structured and unstructured data support. FARSPEAK simplifies work for AI engineers, app & web developers, and product designers by enabling faster AI feature development, natural language querying, and insights generation from data.

GptSdk

GptSdk is an AI tool that simplifies incorporating AI capabilities into PHP projects. It offers dynamic prompt management, model management, bulk testing, collaboration, chaining, integration, and more. The tool claims to let developers build professional AI applications 10x faster, integrates with Laravel and Symfony, and supports both local and API prompts. GptSdk is open-source under the MIT License and offers a flexible pricing model with a generous free tier.

Caffe

Caffe is a deep learning framework developed by Berkeley AI Research (BAIR) and community contributors. It is designed for speed, modularity, and expressiveness, allowing users to define models and optimization through configuration without hard-coding. Caffe supports both CPU and GPU training, making it suitable for research experiments and industry deployment. The framework is extensible, actively developed, and tracks the state-of-the-art in code and models. Caffe is widely used in academic research, startup prototypes, and large-scale industrial applications in vision, speech, and multimedia.

Apache MXNet

Apache MXNet is a flexible and efficient deep learning library designed for research, prototyping, and production. It features a hybrid front-end that seamlessly transitions between imperative and symbolic modes, enabling both flexibility and speed. MXNet also supports distributed training and performance optimization through Parameter Server and Horovod. With bindings for multiple languages, including Python, Scala, Julia, Clojure, Java, C++, R, and Perl, MXNet offers wide accessibility. Additionally, it boasts a thriving ecosystem of tools and libraries that extend its capabilities in computer vision, NLP, time series, and more.

0 - Open Source AI Tools

20 - OpenAI Gpts

Frontend Developer

AI front-end developer expert in coding React, Next.js, Vue, Svelte, TypeScript, Gatsby, Angular, HTML, CSS, and JavaScript, and advanced in Flexbox, Tailwind, and Material Design. Mentors junior, intermediate, and senior front-end developers alike in coding and debugging. Let’s code, build, and deploy a SaaS app.

Azure Arc Expert

Azure Arc expert providing guidance on architecture, deployment, and management.

Instructor GCP ML

Trainer for the GCP ML Engineer certification, with detailed answers and explanations.

Docker and Docker Swarm Assistant

Expert in Docker and Docker Swarm solutions and troubleshooting.