Best AI tools for Deploy Integrations

20 - AI tool Sites

Composio

Composio is an integration platform for AI Agents and LLMs that allows users to access over 150 tools with just one line of code. It offers seamless integrations, managed authentication, a repository of tools, and powerful RPA tools to streamline and optimize the connection and interaction between AI Agents/LLMs and various APIs/services. Composio simplifies JSON structures, improves variable names, and enhances error handling to increase reliability by 30%. The platform is SOC 2 Type II compliant, which supports the security of user data.

Tray.ai

Tray.ai is an AI-ready iPaaS (Integration Platform as a Service) that empowers businesses to seamlessly integrate and automate AI technologies. It offers a composable platform for building intelligent agents, delivering microservices, and ensuring scalability and governance. With Tray.ai, users can accelerate integration and automation delivery, infuse AI into business processes, and leverage AI data integration securely. The platform enables various use cases such as automating IT onboarding, lead management, embedded integrations, support agents, and order-to-cash processes.

PixieBrix

PixieBrix is an AI engagement platform that allows users to build, deploy, and manage internal AI tools to drive team productivity. It unifies AI landscapes with oversight and governance for enterprise scale. The platform is enterprise-ready and fully customizable to meet unique needs, and can be deployed on any site, making it easy to integrate into existing systems. PixieBrix uses AI and automation to streamline workflows and raise productivity.

Portkey

Portkey is a control panel for production AI applications that offers an AI Gateway, Prompts, Guardrails, and Observability Suite. It enables teams to ship reliable, cost-efficient, and fast apps by providing tools for prompt engineering, enforcing reliable LLM behavior, integrating with major agent frameworks, and building AI agents with access to real-world tools. Portkey also offers seamless AI integrations for smarter decisions, with features like managed hosting, smart caching, and edge compute layers to optimize app performance.

Gnani.ai

Gnani.ai is an AI application that offers a suite of agentic AI solutions for enterprises, including Inya Workforce for AI automation, Inya Assist for real-time agent support, Inya Shield for voice biometrics, and Inya Insights for call analytics. The platform enables businesses to build and deploy voice and chat agents quickly without the need for coding. With features like automated QA, voice biometrics, and real-time call analytics, Gnani.ai helps businesses improve customer experience, increase operational efficiency, and drive revenue growth across various industries.

Synthreo

Synthreo is an AI tool that empowers businesses to streamline operations, reduce costs, and drive growth through intelligent AI agents. It provides cutting-edge AI agent solutions that automate routine tasks, enhance decision-making, and create seamless collaboration between human teams and digital labor. Synthreo offers transformative advantages for businesses of all sizes, enabling operational efficiency and strategic growth. The platform implements advanced security measures, complies with industry standards, and upholds ethical AI usage. Businesses can leverage AI-powered digital labor to achieve unprecedented efficiency, innovation, and growth.

Prompt Security

Prompt Security is a platform that secures all uses of Generative AI in the organization: from tools used by your employees to your customer-facing apps.

Stately

Stately is a visual logic builder that enables users to create complex logic diagrams and code in minutes. It provides a drag-and-drop editor that brings together contributors of all backgrounds, allowing them to collaborate on code, diagrams, documentation, and test generation in one place. Stately also integrates with AI to assist in each phase of the development process, from scaffolding behavior and suggesting variants to turning up edge cases and even writing code. Additionally, Stately offers bidirectional updates between code and visualization, allowing users to use the tools that make them most productive. It also provides integrations with popular frameworks such as React, Vue, and Svelte, and supports event-driven programming, state machines, statecharts, and the actor model for handling even the most complex logic in predictable, robust, and visual ways.

PixieBrix

PixieBrix is an AI engagement platform that allows users to build, deploy, and manage internal AI tools to enhance team productivity. It unifies the AI landscape with oversight and governance for enterprise-scale operations, increasing speed, accuracy, compliance, and satisfaction. The platform leverages AI and automation to streamline workflows, improve user experience, and unlock untapped potential. With a focus on enterprise readiness and customization, PixieBrix offers extensibility, iterative innovation, scalability for teams, and an engaged community for idea exchange and support.

SingleStore

SingleStore is a real-time data platform designed for apps, analytics, and gen AI. It offers faster hybrid vector + full-text search, fast-scaling integrations, and a free tier. SingleStore can read, write, and reason on petabyte-scale data in milliseconds. It supports streaming ingestion, high concurrency, first-class vector support, record lookups, and more.

Magick

Magick is a cutting-edge Artificial Intelligence Development Environment (AIDE) that empowers users to rapidly prototype and deploy advanced AI agents and applications without coding. It provides a full-stack solution for building, deploying, maintaining, and scaling AI creations. Magick's open-source, platform-agnostic nature allows for full control and flexibility, making it suitable for users of all skill levels. With its visual node-graph editors, users can code visually and create intuitively. Magick also offers powerful document processing capabilities, enabling effortless embedding and access to complex data. Its real-time and event-driven agents respond to events right in the AIDE, ensuring prompt and efficient handling of tasks. Magick's scalable deployment feature allows agents to handle any number of users, making it suitable for large-scale applications. Additionally, its multi-platform integrations with tools like Discord, Unreal Blueprints, and Google AI provide seamless connectivity and enhanced functionality.

Beam AI

Beam AI is a leading platform for Agentic Automation and AI Agents, offering solutions for automating manual workflows with AI agents to boost productivity. The platform is used by Fortune 500 companies and scale-ups, providing a seamless experience from start to finish. With features like pre-trained agents, integrations, and easy-to-navigate UI, Beam AI empowers users to create and deploy AI tools tailored to their specific needs. The platform is designed to scale any business, offering industry-specific solutions and world-class support for building AI-native organizations.

Graphlogic.ai

Graphlogic.ai is an AI-powered platform that offers Conversational AI solutions through text and voice bots. It provides partner-enabled services for various industries, including HR, customer support, marketing, and internal task management. The platform features AI-powered chatbots with goal-oriented NLU and rule-based bots, seamless integrations with CRM systems, and 24/7 omnichannel availability. Graphlogic.ai aims to transform and speed up customer service and FAQ conversations by providing instant replies in a human-like manner. It also offers dedicated HR manager bots, hiring assistants for mass recruitment, responsible managers for internal tasks, and outbound marketing coordinators.

Fetch AI

Fetch AI is an open platform that allows users to build, deploy, and monetize AI applications and services. It aims to create a new AI economy by connecting multiple integrations into new services, and it offers a range of features for making legacy systems AI-ready without changing existing APIs. The platform enables users to make their services discoverable on the Fetch.ai Platform, which it describes as the first open network for AI Agents.

BotStacks

BotStacks is a conversational AI solution that offers premium AI sidekicks for automating tasks and engaging users. It provides a platform for designing, building, and deploying AI chatbots, with advanced technology made accessible to everyone. With features like a canvas designer, a knowledge base, and chat SDKs, BotStacks empowers users to create personalized and scalable AI assistants. The application focuses on easy design flow, seamless integration, customization, scalability, and accessibility for non-technical users.

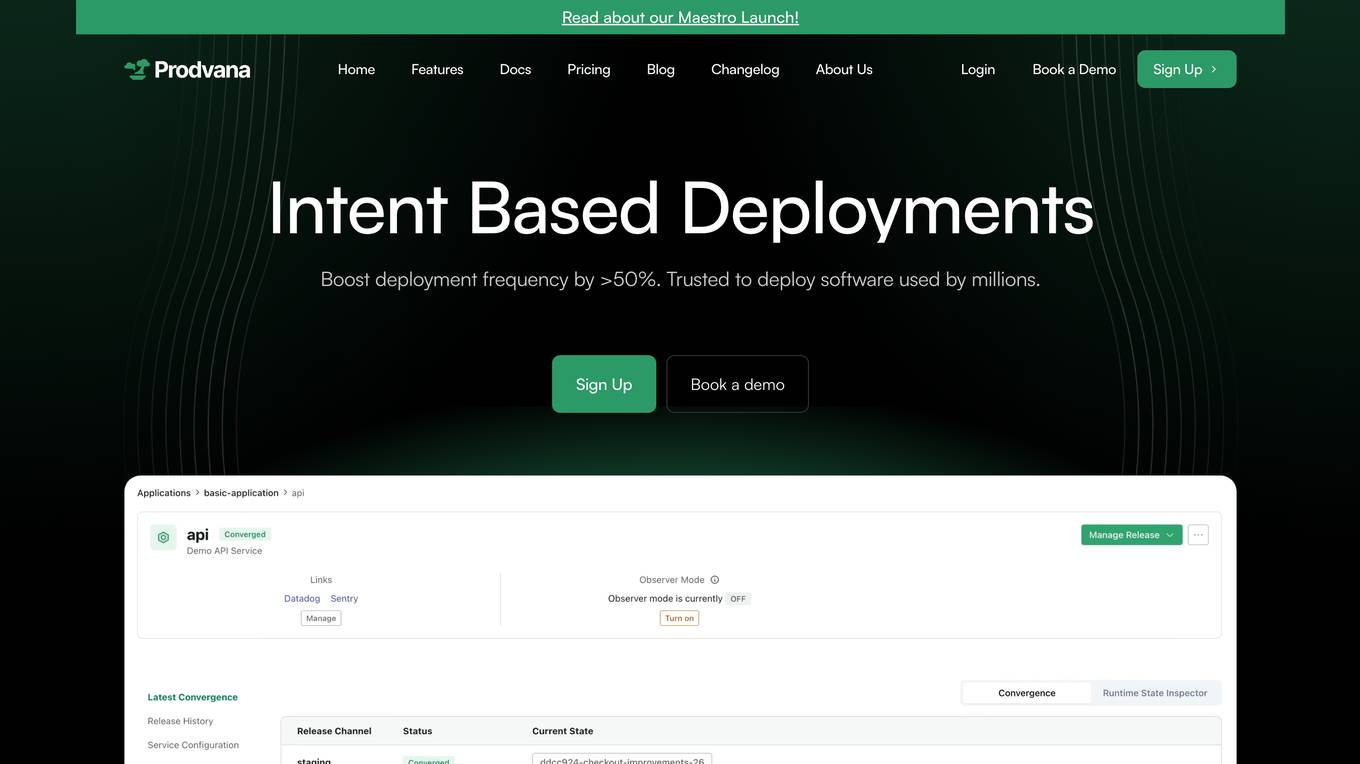

Prodvana

Prodvana is an intelligent deployment platform that helps businesses automate and streamline their software deployment process. It provides a variety of features to help businesses improve the speed, reliability, and security of their deployments. Prodvana is a cloud-based platform that can be used with any type of infrastructure, including on-premises, hybrid, and multi-cloud environments. It is also compatible with a wide range of DevOps tools and technologies. Prodvana's key features include:

Intent-based deployments: Prodvana uses intent-based deployment technology to automate the deployment process. Businesses specify their deployment goals, and Prodvana automatically generates and executes the steps needed to achieve those goals. This can save businesses a significant amount of time and effort.

Guardrails for deployments: Prodvana provides a variety of guardrails to help businesses ensure the security and reliability of their deployments. These guardrails include approvals, database validations, automatic deployment validation, and simple interfaces to add custom guardrails. This helps businesses prevent errors and reduce the risk of outages.

Frictionless DevEx: Prodvana provides a frictionless developer experience by tracking commits through the infrastructure, ensuring complete visibility beyond just Docker images. This helps developers quickly identify and resolve issues, and it also makes it easier to collaborate with other team members.

Intelligence with Clairvoyance: Prodvana's Clairvoyance feature provides businesses with insights into the impact of their deployments before they are executed. This helps businesses make more informed decisions about their deployments and avoid potential problems.

Easy integrations: Prodvana integrates seamlessly with a variety of DevOps tools and technologies. This makes it easy for businesses to use Prodvana with their existing workflows and processes.

Roboflow

Roboflow is an AI tool designed for computer vision tasks, offering a platform that allows users to annotate, train, deploy, and perform inference on models. It provides integrations, ecosystem support, and features like notebooks, autodistillation, and supervision. Roboflow caters to various industries such as aerospace, agriculture, healthcare, finance, and more, with a focus on simplifying the development and deployment of computer vision models.

Forma.ai

Forma.ai is an AI-powered sales performance management software designed to optimize and run sales compensation processes efficiently. The platform offers features such as AI-powered plan configuration, connected modeling for optimization, end-to-end automation of sales comp management, flexible data integrations, and next-gen automation. Forma.ai provides advantages such as faster decision-making, revenue capture, cost reduction, flexibility, and scalability. Its disadvantages include the need for AI skills, potential data security concerns, and an initial learning curve. The application is suited to roles in sales operations, finance, human resources, sales compensation planning, and sales performance data management. Users can find Forma.ai using keywords like sales comp, AI-powered software, sales performance management, sales incentives, and sales compensation. The tool can be used for tasks such as designing plans with AI, planning and modeling, deploying and managing plans, optimizing comp plans, and automating sales comp.

Rapport Software

Rapport Software is an AI-generated character animation tool that allows users to create, animate, and deploy emotionally intelligent characters to enhance dialogue with the audience. It offers features like recognizing and reflecting emotions, accurate lip sync, support for any language, ready-made or custom-built character options, and integrations with text-to-speech and speech-recognition tools. The application aims to build deeper connections, increase sales, and humanize AI through relatable characters and meaningful conversations.

Passarel

Passarel is an AI tool designed to simplify teammate onboarding by developing bespoke GPT-like models for employee interaction. It centralizes knowledge bases into a custom model, allowing new teammates to access information efficiently. Passarel leverages various integrations to tailor language models to team needs, handling contradictions and providing accurate information. The tool works by training models on chosen knowledge bases, learning from data and configurations provided, and deploying the model for team use.

0 - Open Source AI Tools

20 - OpenAI GPTs

Flask Expert Assistant

This GPT is a specialized assistant for Flask, the popular web framework in Python. It is designed to help both beginners and experienced developers with Flask-related queries, ranging from basic setup and routing to advanced features like database integration and application scaling.

Frontend Developer

AI front-end developer, expert in coding React, Next.js, Vue, Svelte, TypeScript, Gatsby, Angular, HTML, CSS, and JavaScript, and advanced in Flexbox, Tailwind, and Material Design. Mentors junior, intermediate, and senior front-end developers alike in coding and debugging. Let’s code, build & deploy a SaaS app.

Azure Arc Expert

Azure Arc expert providing guidance on architecture, deployment, and management.

Instructor GCP ML

Trainer for the GCP ML Engineer certification, with detailed answers and explanations.

Docker and Docker Swarm Assistant

Expert in Docker and Docker Swarm solutions and troubleshooting.