Best AI tools for deploying AI infrastructure

20 - AI Tool Sites

Cerebrium

Cerebrium is a serverless AI infrastructure platform that allows teams to build, test, and deploy AI applications quickly and efficiently. With a focus on speed, performance, and cost optimization, Cerebrium offers a range of features and tools to simplify the development and deployment of AI projects. The platform ensures high reliability, security, and compliance while providing real-time logging, cost tracking, and observability tools. Cerebrium also offers GPU variety and effortless autoscaling to meet the diverse needs of developers and businesses.

Denvr DataWorks AI Cloud

Denvr DataWorks AI Cloud is a cloud-based AI platform that provides end-to-end AI solutions for businesses. It offers a range of features including high-performance GPUs, scalable infrastructure, ultra-efficient workflows, and cost efficiency. Denvr DataWorks is an NVIDIA Elite Partner for Compute, and its platform is used by leading AI companies to develop and deploy innovative AI solutions.

Nscale

Nscale is a full-stack, scalable, and sustainable AI cloud platform that offers a wide range of AI services and solutions. It provides services for developing, training, tuning, and deploying AI models using on-demand services. Nscale also offers serverless inference API endpoints, fine-tuning capabilities, private cloud solutions, and various GPU clusters engineered for AI. The platform aims to simplify the journey from AI model development to production, offering a marketplace for AI/ML tools and resources. Nscale's infrastructure includes data centers powered by renewable energy, high-performance GPU nodes, and optimized networking and storage solutions.

Enterprise AI

Enterprise AI provides comprehensive information, news, and tips on artificial intelligence (AI) for businesses. It covers various aspects of AI, including AI business strategies, AI infrastructure, AI technologies, AI platforms, careers in AI, and enterprise applications of AI. The website offers insights into the latest AI trends, best practices, and industry news. It also provides resources such as e-books, webinars, and podcasts to help businesses understand and implement AI solutions.

DDN A³I

DDN A³I is an AI storage platform that maximizes business differentiation and market leadership through data utilization, AI, and advanced analytics. It offers comprehensive enterprise features, easy deployment and management, predictable scaling, data protection, and high performance. DDN A³I enables organizations to accelerate insights, reduce costs, and optimize GPU productivity for faster results.

Lambda Docs

Lambda Docs is an AI tool that provides cloud and hardware solutions for individuals, teams, and organizations. It offers services such as Managed Kubernetes, Preinstalled Kubernetes, Slurm, and access to GPU clusters. The platform also provides educational resources and tutorials for machine learning engineers and researchers to fine-tune models and deploy AI solutions.

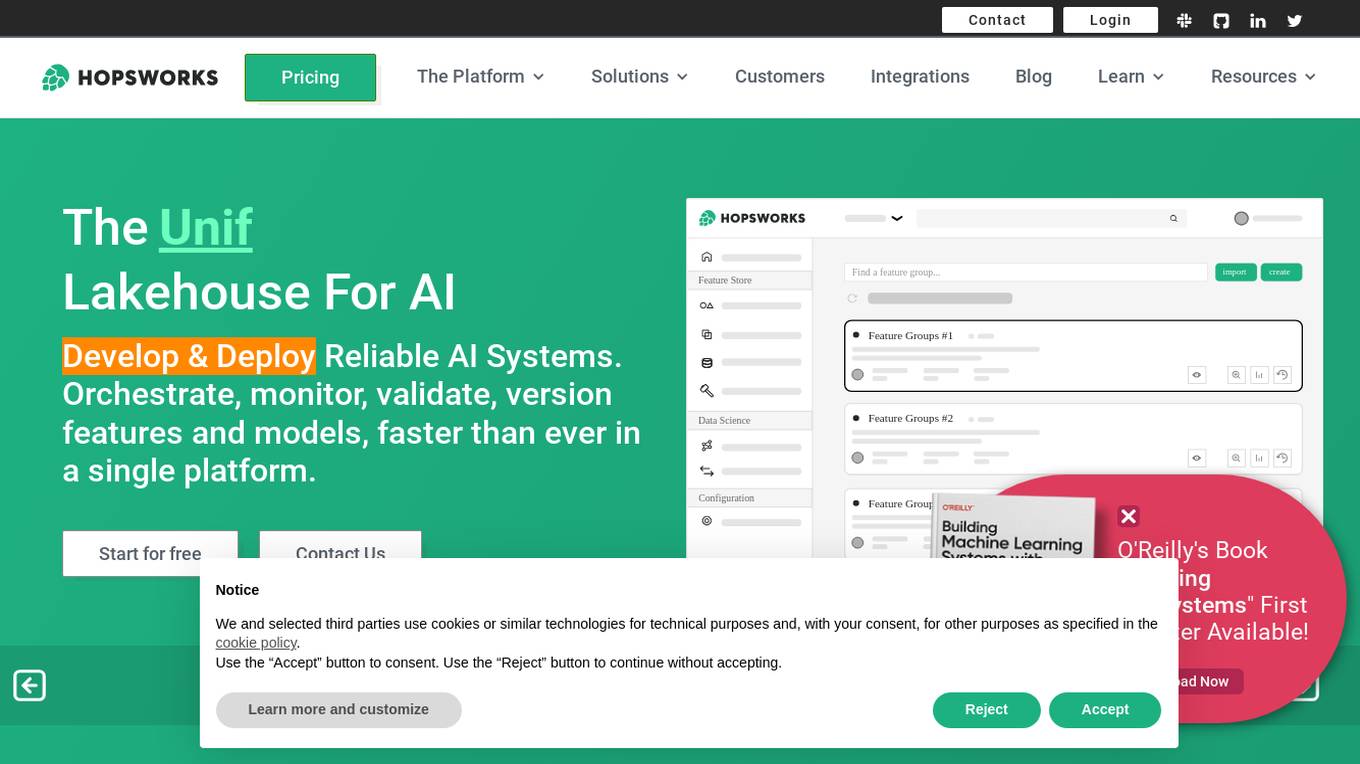

Hopsworks

Hopsworks is an AI platform that offers a comprehensive solution for building, deploying, and monitoring machine learning systems. It provides features such as a Feature Store, real-time ML capabilities, and generative AI solutions. Hopsworks enables users to develop and deploy reliable AI systems, orchestrate and monitor models, and personalize machine learning models with private data. The platform supports batch and real-time ML tasks, with the flexibility to deploy on-premises or in the cloud.

Anyscale

Anyscale is a company that provides a scalable compute platform for AI and Python applications. Their platform includes a serverless API for serving and fine-tuning open LLMs, a private cloud solution for data privacy and governance, and an open source framework for training, batch, and real-time workloads. Anyscale's platform is used by companies such as OpenAI, Uber, and Spotify to power their AI workloads.

Mystic.ai

Mystic.ai is an AI tool designed to deploy and scale Machine Learning models with ease. It offers a fully managed Kubernetes platform that runs in your own cloud, allowing users to deploy ML models in their own Azure/AWS/GCP account or in a shared GPU cluster. Mystic.ai provides cost optimizations, fast inference, simpler developer experience, and performance optimizations to ensure high-performance AI model serving. With features like pay-as-you-go API, cloud integration with AWS/Azure/GCP, and a beautiful dashboard, Mystic.ai simplifies the deployment and management of ML models for data scientists and AI engineers.

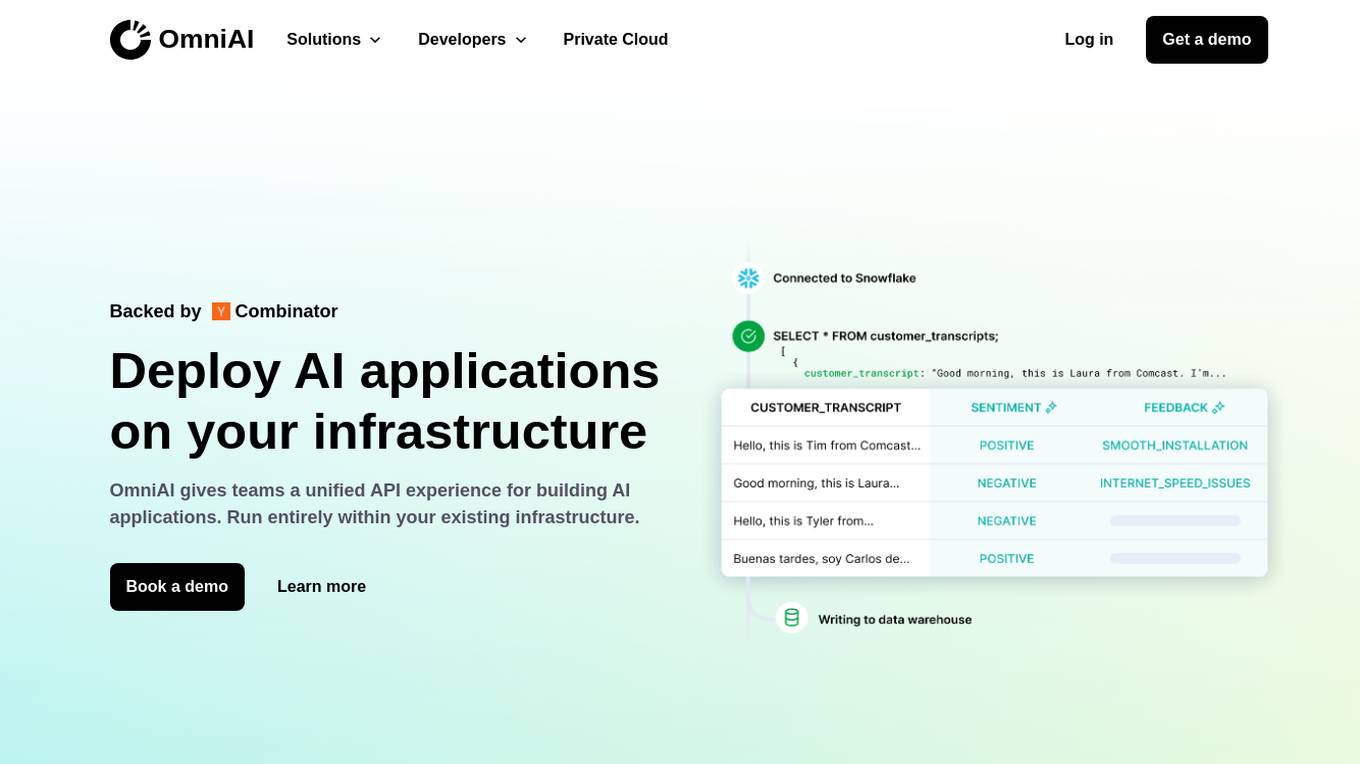

OmniAI

OmniAI is an AI tool that allows teams to deploy AI applications on their existing infrastructure. It provides a unified API experience for building AI applications and offers a wide selection of industry-leading models. With models such as Llama 3, Claude 3, Mistral Large, and AWS Titan, OmniAI supports tasks such as natural language understanding, generation, safety, ethical behavior, and context retention. It also enables users to deploy and query the latest AI models quickly and easily within their virtual private cloud environment.

Microtica

Microtica is an AI-powered cloud delivery platform that offers a comprehensive suite of DevOps tools to help users build, deploy, and optimize their infrastructure efficiently. With features like AI Incident Investigator, AI Infrastructure Builder, Kubernetes deployment simplification, alert monitoring, pipeline automation, and cloud monitoring, Microtica aims to streamline the development and management processes for DevOps teams. The platform provides real-time insights, cost optimization suggestions, and guided deployments, making it a valuable tool for businesses looking to enhance their cloud infrastructure operations.

DataRobot

DataRobot is a leading provider of AI cloud platforms. It offers a range of AI tools and services to help businesses build, deploy, and manage AI models. DataRobot's platform is designed to make AI accessible to businesses of all sizes, regardless of their level of AI expertise. DataRobot's platform includes a variety of features to help businesses build and deploy AI models, including:

* A drag-and-drop interface that makes it easy to build AI models, even for users with no coding experience.
* A library of pre-built AI models that can be used to solve common business problems.
* A set of tools to help businesses monitor and manage their AI models.
* A team of AI experts who can provide support and guidance to businesses using the platform.

ibl.ai

ibl.ai is a generative AI platform that focuses on education, providing cutting-edge solutions for institutions to create AI mentors, tutoring apps, and content creation tools. The platform empowers educators by giving them full control over their code, data, and models. With advanced features and support for both web and native mobile platforms, ibl.ai seamlessly integrates with existing infrastructure, making it easy to deploy across organizations. The platform is designed to enhance learning experiences, foster critical thinking, and engage students deeply in educational content.

UbiOps

UbiOps is an AI infrastructure platform that helps teams quickly run their AI & ML workloads as reliable and secure microservices. It offers powerful AI model serving and orchestration with unmatched simplicity, speed, and scale. UbiOps allows users to deploy models and functions in minutes, manage AI workloads from a single control plane, integrate easily with tools like PyTorch and TensorFlow, and ensure security and compliance by design. The platform supports hybrid and multi-cloud workload orchestration, rapid adaptive scaling, and modular applications with a unique workflow management system.

Lambda

Lambda is a superintelligence cloud platform that offers on-demand GPU clusters for multi-node training and fine-tuning, private large-scale GPU clusters, seamless management and scaling of AI workloads, inference endpoints and API, and a privacy-first chat app with open source models. It also provides NVIDIA's latest generation infrastructure for enterprise AI. With Lambda, AI teams can access gigawatt-scale AI factories for training and inference, deploy GPU instances, and leverage the latest NVIDIA GPUs for high-performance computing.

Backend.AI

Backend.AI is an enterprise-scale cluster backend for AI frameworks that offers scalability, GPU virtualization, HPC optimization, and DGX-Ready software products. It provides a fast and efficient way to build, train, and serve AI models of any type and size, with flexible infrastructure options. Backend.AI aims to optimize backend resources, reduce costs, and simplify deployment for AI developers and researchers. The platform integrates seamlessly with existing tools and offers fractional GPU usage and a pay-as-you-play model to maximize resource utilization.

Domino Data Lab

Domino Data Lab is an enterprise AI platform that enables users to build, deploy, and manage AI models across any environment. It fosters collaboration, establishes best practices, and ensures governance while reducing costs. The platform provides access to a broad ecosystem of open source and commercial tools and infrastructure, allowing users to accelerate and scale AI impact. Domino serves as a central hub for AI operations and knowledge, offering integrated workflows, automation, and hybrid multicloud capabilities. It helps users optimize compute utilization, enforce compliance, and centralize knowledge across teams.

FriendliAI

FriendliAI is a generative AI infrastructure company that offers efficient, fast, and reliable generative AI inference solutions for production. Their cutting-edge technologies enable groundbreaking performance improvements, cost savings, and lower latency. FriendliAI provides a platform for building and serving compound AI systems, deploying custom models effortlessly, and monitoring and debugging model performance. The application guarantees consistent results regardless of the model used and offers seamless data integration for real-time knowledge enhancement. With a focus on security, scalability, and performance optimization, FriendliAI empowers businesses to scale with ease.

Daily

Daily is a platform offering real-time voice, video, and AI solutions for developers. It has provided ultra-low latency, open-source SDKs, and enterprise reliability since 2016. Daily collaborates with NVIDIA on Voice Agent Blueprint and offers Pipecat (a vendor-neutral open-source orchestration framework), Daily Bots for Pipecat Cloud deployment, and Daily Infrastructure for running real-time calls on WebRTC global infrastructure. The platform ensures the best video quality on every network, with a global mesh network, low latency, and enterprise-grade security features.

Codimite

Codimite is an AI-assisted offshore development company that provides a range of services to help businesses accelerate their software development, reduce costs, and drive innovation. Codimite's team of experienced engineers and project managers use AI-powered tools and technologies to deliver exceptional results for their clients. The company's services include AI-assisted software development, cloud modernization, and data and artificial intelligence solutions.

2 - Open Source AI Tools

infra

E2B Infra is a cloud runtime for AI agents. It provides SDKs and CLI to customize and manage environments and run AI agents in the cloud. The infrastructure is deployed using Terraform and is currently only deployable on GCP. The main components of the infrastructure are the API server, daemon running inside instances (sandboxes), Nomad driver for managing instances (sandboxes), and Nomad driver for building environments (templates).

genai-factory

GenAI Factory is a collection of end-to-end blueprints to deploy generative AI infrastructures in Google Cloud Platform (GCP), following security best practices. It embraces Infrastructure as Code (IaC) best practices, implements infrastructure in Terraform, and follows the least-privilege principle. The tool is compatible with Cloud Foundation Fabric FAST project-factory and application templates, allowing users to deploy various AI applications and systems on GCP.

20 - OpenAI GPTs

ML Engineer GPT

I'm a Python and PyTorch expert with knowledge of ML infrastructure requirements, ready to help you build and scale your ML projects.

AI Engineering

AI engineering expert offering insights into machine learning and AI development.

Europe Ethos Guide for AI

Ethics-focused GPT builder assistant based on European AI guidelines, recommendations, and regulations.

ecosystem.Ai Use Case Designer v2

The use case designer is configured with the latest Data Science and Behavioral Social Science insights to guide you through the process of defining AI and Machine Learning use cases for the ecosystem.Ai platform.

Frontend Developer

AI front-end developer expert in coding React, Next.js, Vue, Svelte, TypeScript, Gatsby, Angular, HTML, CSS, JavaScript & advanced in Flexbox, Tailwind & Material Design. Mentors in coding & debugging for junior, intermediate & senior front-end developers alike. Let’s code, build & deploy a SaaS app.

Personality AI Creator

I will create a quality data set for a personality AI. Just dive into each module by saying its name, and do so for all the modules. If you find it useful, share it with your friends.

AI-Framer

Professional yet friendly WebXR coding assistant, utilizing primarily A-frame and Three.js frameworks.

Gary Marcus AI Critic Simulator

Humorous AI critic known for skepticism, contradictory arguments, and combining animal-related and machine-learning-related terms.

Software development front-end GPT - Senior AI

Solves problems in front-end application development - AI 100% PRO - 500+ Guides trainer

AppCrafty 🧰

Hello, I'm AppCrafty, your AI coding companion tailored for the creative and dynamic world of startups. I'm here to simplify the journey from concept to deployment across iOS, Android, and web platforms. Let's create something amazing together!

TonyAIDeveloperResume

Chat with my resume to see if I am a good fit for your AI related job.