Best AI tools for: Compare Experiments

20 - AI tool Sites

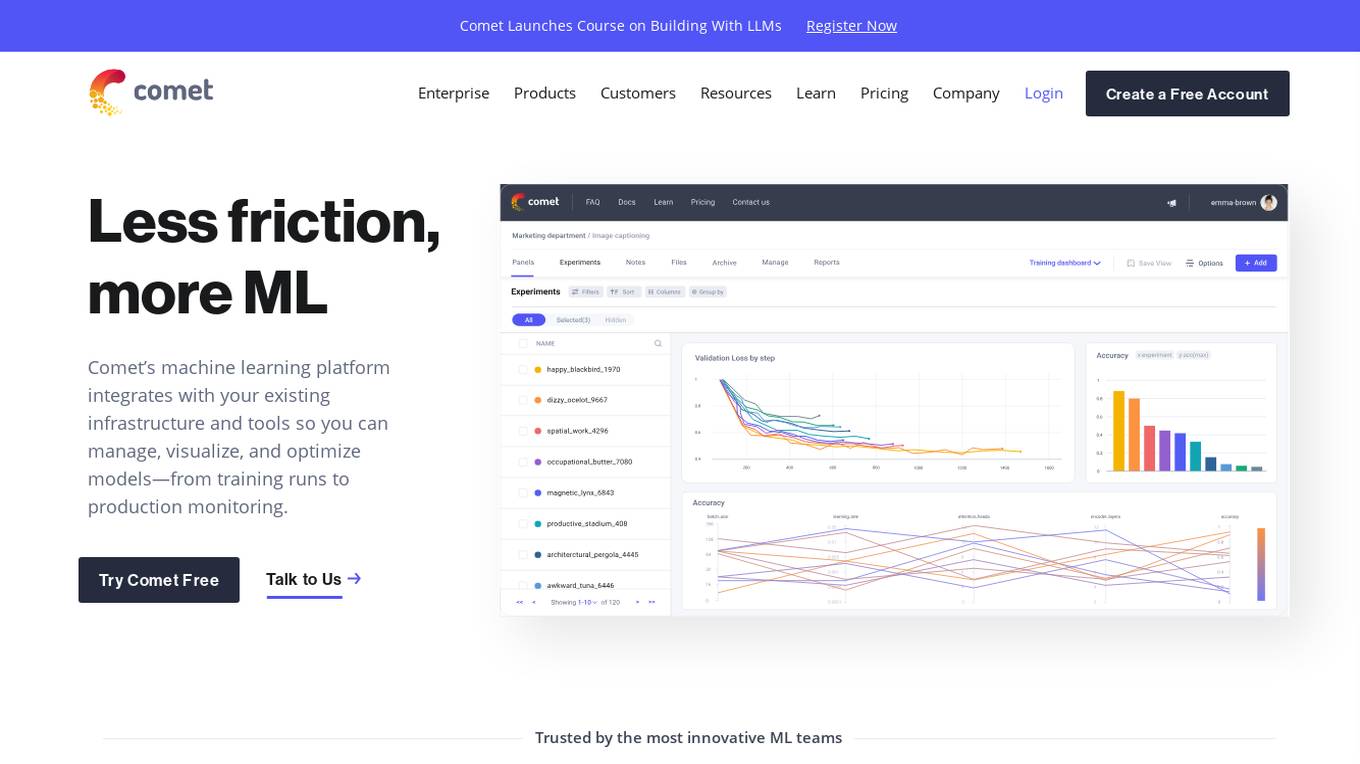

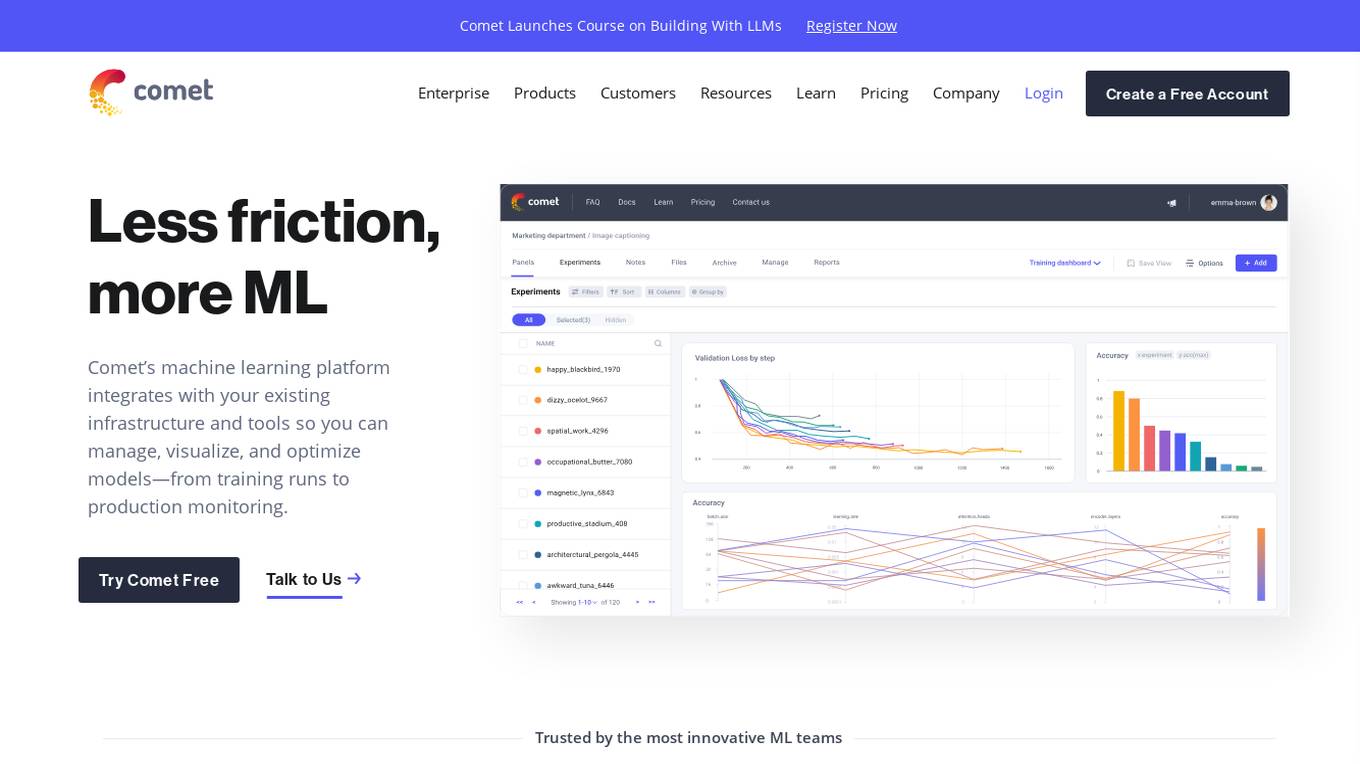

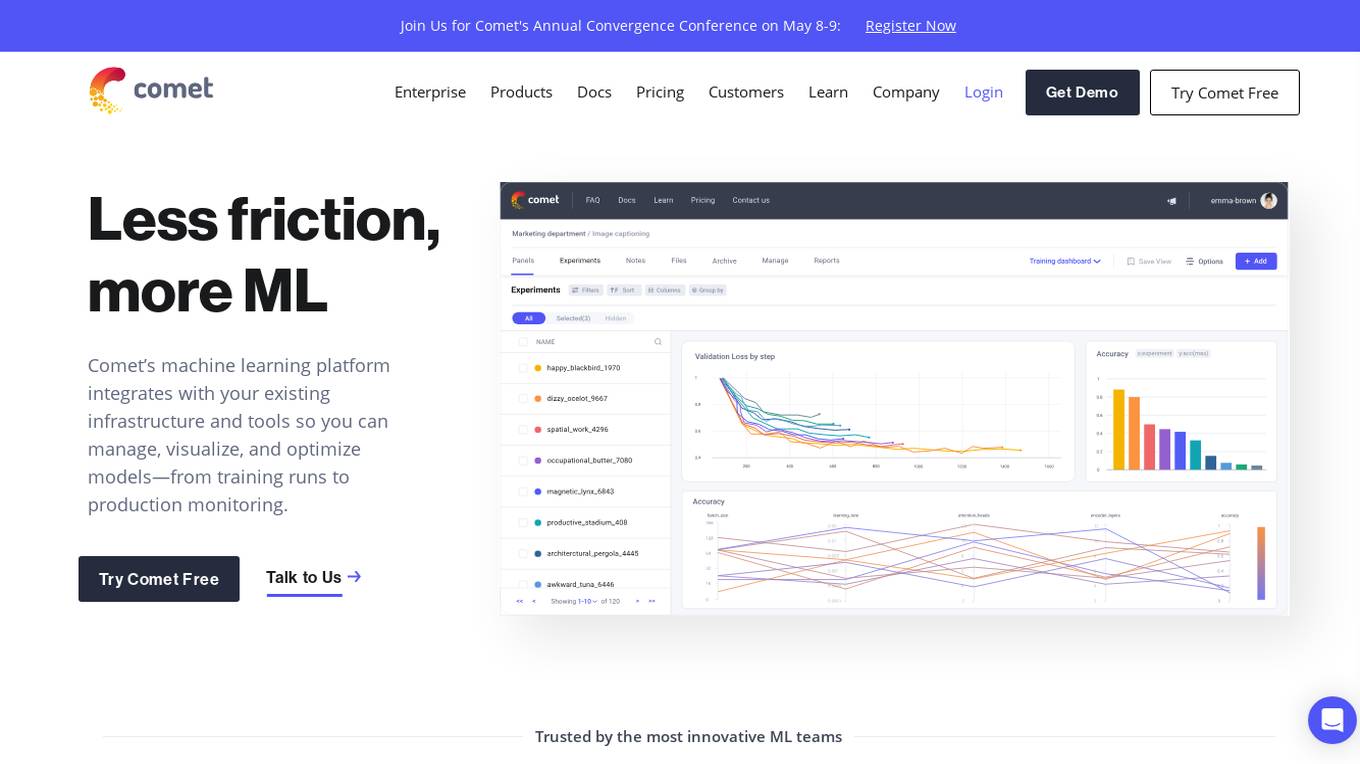

Comet ML

Comet ML is a machine learning platform that integrates with your existing infrastructure and tools so you can manage, visualize, and optimize models—from training runs to production monitoring.

Comet ML

Comet ML is an extensible, fully customizable machine learning platform that aims to move ML forward by supporting productivity, reproducibility, and collaboration. It integrates with existing infrastructure and tools to manage, visualize, and optimize models from training runs to production monitoring. Users can track and compare training runs, create a model registry, and monitor models in production all in one platform. Comet's platform can be run on any infrastructure, enabling users to reshape their ML workflow and bring their existing software and data stack.

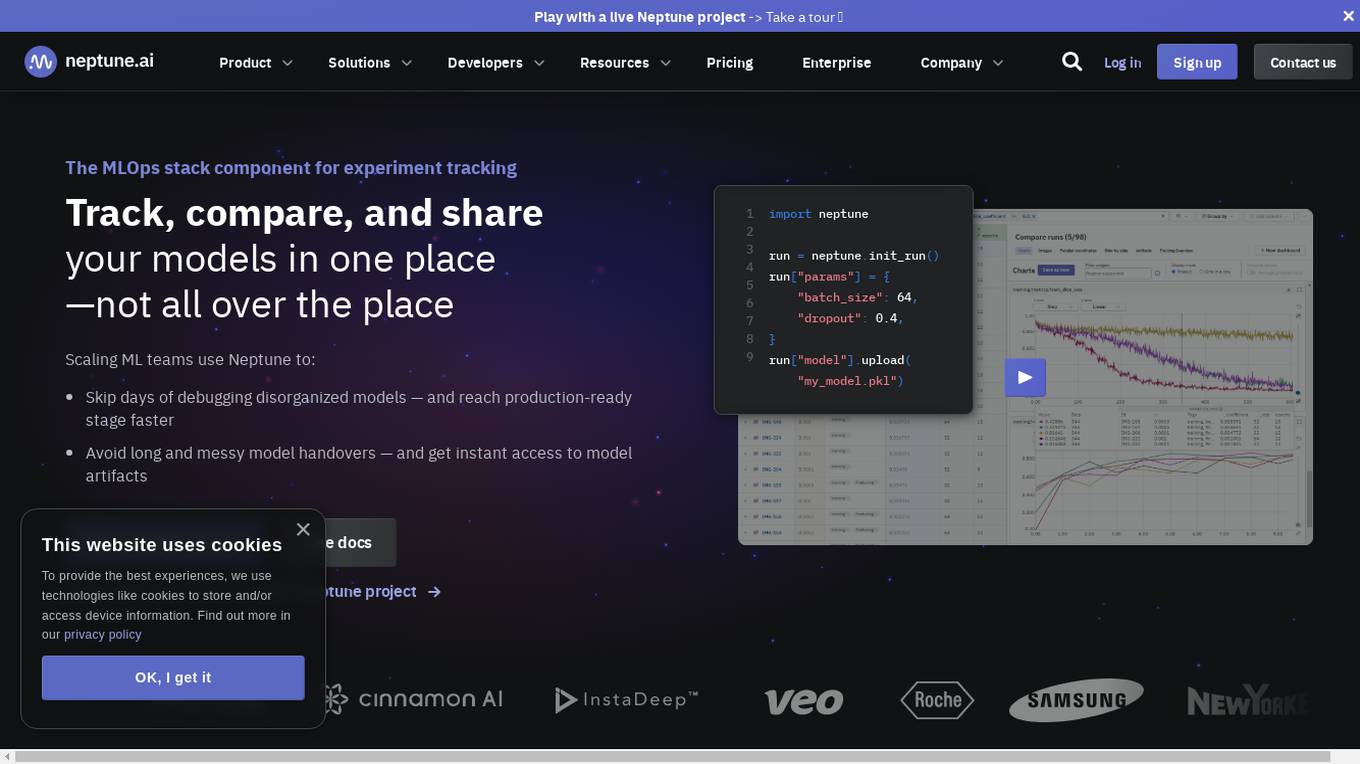

Neptune

Neptune is an MLOps stack component for experiment tracking. It lets users track, compare, and share their models in one place. Scaling ML teams use Neptune to avoid days of debugging disorganized models and long, messy model handovers, and users can start logging for free.

Aim

Aim is an open-source, self-hosted AI metadata tracking tool designed to handle hundreds of thousands of tracked metadata sequences. Its two best-known applications are experiment tracking and prompt engineering. Aim provides a performant and beautiful UI for exploring and comparing training runs and prompt sessions.

Aim

Aim is an open-source experiment tracker that logs your training runs, provides a beautiful UI for comparing them, and offers an API for querying them programmatically. It integrates seamlessly with your favorite tools.

PubCompare

PubCompare is an AI-powered tool that helps scientists search, compare, and evaluate experimental protocols. With over 40 million protocols in its database, PubCompare describes itself as the largest repository of trusted experimental protocols. Its AI-powered search lets users find similar protocols, highlight critical steps, and evaluate a protocol's reproducibility based on in-protocol citations. PubCompare is accessible from any computer and requires no download.

pl.aiwright

pl.aiwright is an AI-powered dialogue generation tool designed for interactive narratives. It offers features such as analyzing and clustering large dialogue graphs, dialogue generation using a mix of code and natural language, playtests for gathering user feedback, and tools for experimental analysis. The tool enables users to create engaging dialogues for various applications, such as games, virtual simulations, and interactive storytelling.

Permar

Permar is an AI-powered website optimization tool that helps businesses increase their conversion rates. It uses reinforcement learning to adapt website optimization dynamically and reports an average conversion-rate uplift of 10-12% compared to static A/B tests. Permar also offers a complete toolkit for creating high-converting landing pages, including dynamic A/B testing, real-time optimization, and growth experiment ideas.

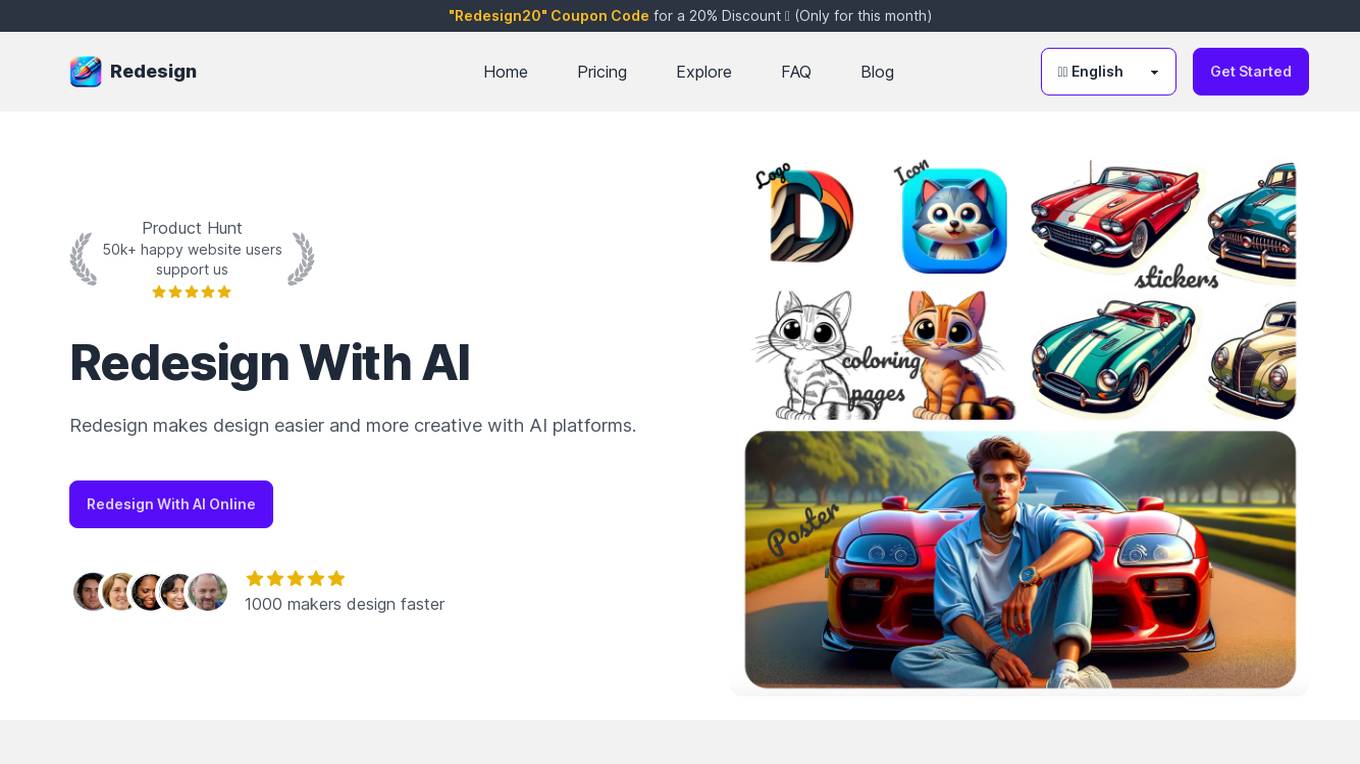

Redesign With AI

Redesign With AI is an online platform that leverages artificial intelligence to make design easier and more creative. It offers users the ability to generate high-quality design images quickly, saving time and money compared to hiring a professional designer. With intuitive interfaces and unlimited creativity, Redesign With AI empowers users to explore and experiment with various creative ideas. The platform caters to a wide range of design needs, from icons and logos to stickers and posters, making it a versatile tool for designers and non-designers alike.

Redesign With AI

Redesign With AI is an online platform that leverages artificial intelligence to make design easier and more creative. It offers users the ability to generate high-quality design images quickly, saving time and money compared to hiring a professional designer. The platform provides intuitive interfaces for users with varying levels of design experience, allowing unlimited creativity and experimentation with various ideas. Redesign With AI has received positive feedback from users who have found it helpful in creating unique designs for websites, apps, posters, stickers, and more.

Stable Diffusion AI

Stable Diffusion AI is an online platform that utilizes deep learning techniques to generate high-quality design images quickly and efficiently. It offers a user-friendly interface for users with varying levels of design experience to explore and experiment with unlimited creative ideas. The platform is cost-effective, saving time and money compared to hiring a professional designer. Stable Diffusion AI is an open-source project, allowing users to access and modify its code for their needs.

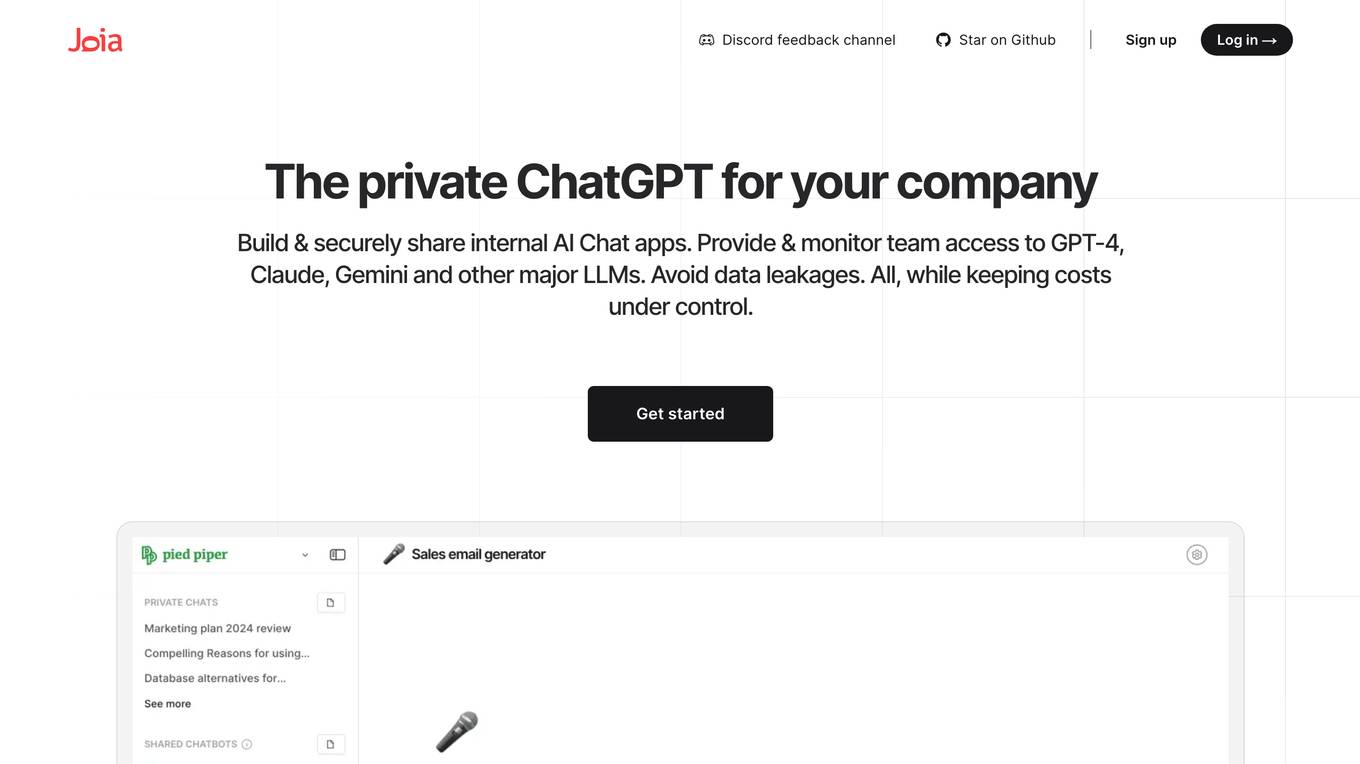

Joia

Joia is a private ChatGPT alternative built for collaboration within teams. It provides secure access to various large language models (LLMs) such as GPT-4, Claude, and Gemini, allowing teams to build and share internal AI chat applications. Joia prioritizes data security and cost control, and it positions itself as a cheaper option than ChatGPT for Teams, with savings of up to 70%. Users can experiment with different LLMs and create personalized chatbots for repetitive tasks, improving team collaboration and efficiency.

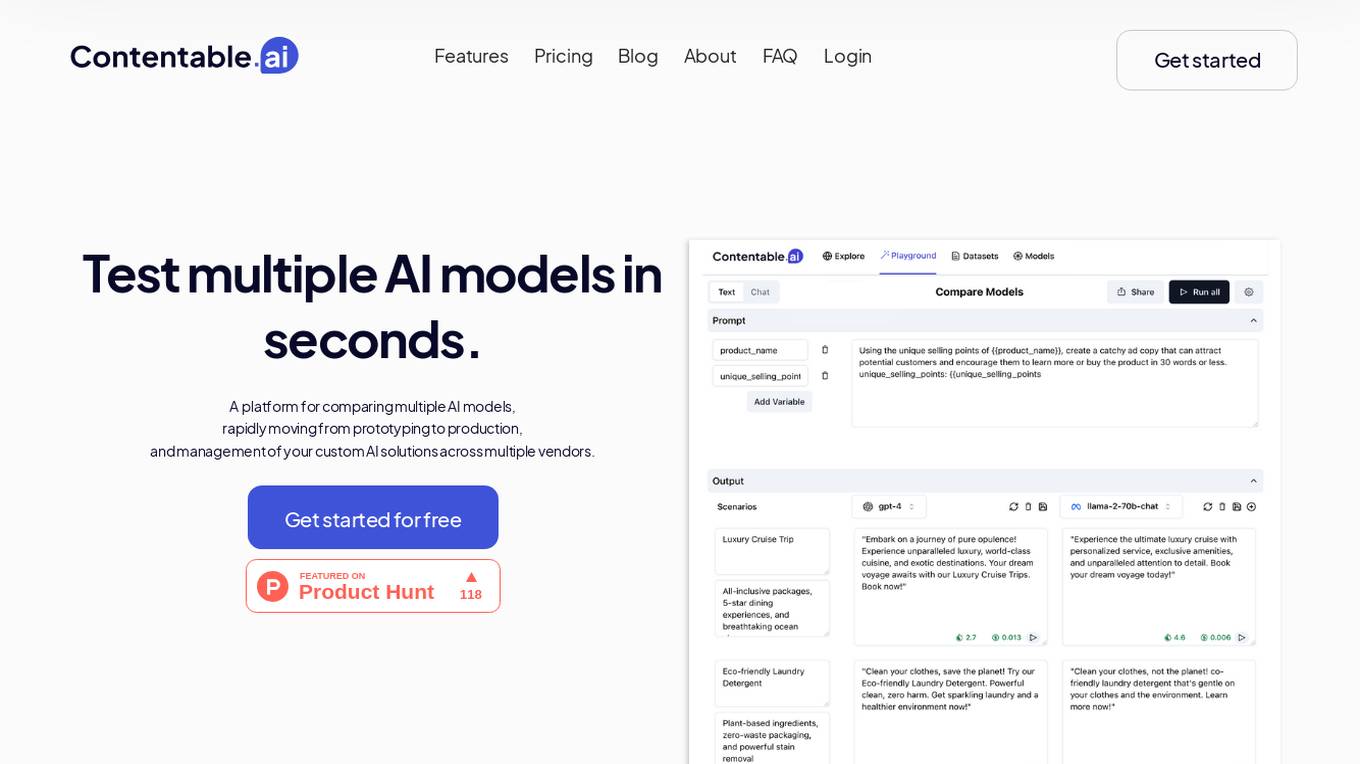

Contentable.ai

Contentable.ai is a platform for comparing multiple AI models, moving rapidly from prototyping to production, and managing custom AI solutions across multiple vendors. It lets users test multiple AI models in seconds, compare models side by side across top AI providers, collaborate on AI models with their team, design complex AI workflows without coding, and pay as they go.

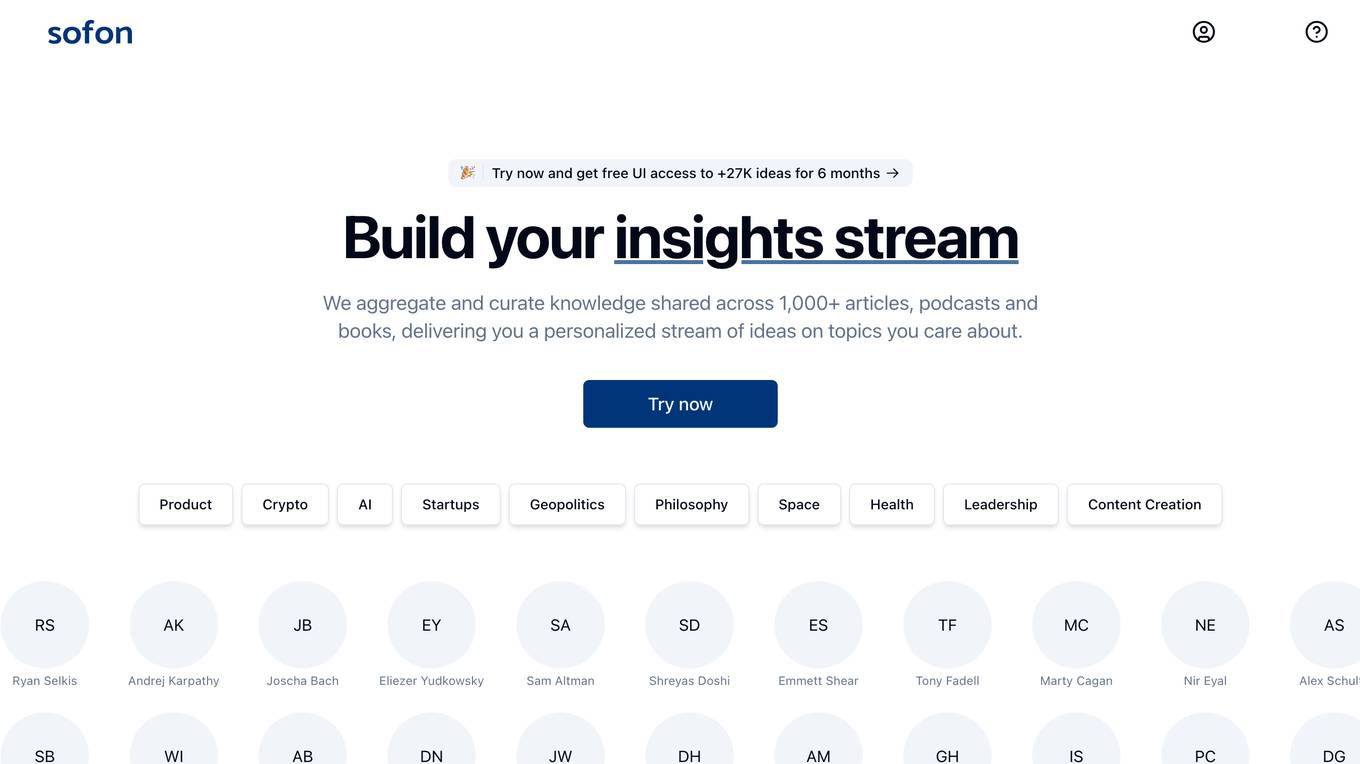

Sofon

Sofon is a knowledge aggregation and curation platform that provides users with personalized insights on topics they care about. It aggregates and curates knowledge shared across 1,000+ articles, podcasts, and books, delivering a personalized stream of ideas to users. Sofon uses AI to compare the ideas of hundreds of people on any question, saving users thousands of hours of curation. Users can choose the people they want to learn from, and Sofon will curate insights across all of their knowledge. Users can also receive an "idealetter": a unique combination of ideas from all the people they have selected, organized around a common theme and delivered at an interval of their choice.

Product Discovery & Comparison Platform

The website is a platform that allows users to discover and compare various products across different categories such as cars, phones, laptops, TVs, smartwatches, and more. Users can easily find information on high-end, mid-tier, and low-cost options for each product category, enabling them to make informed purchasing decisions. The site aims to simplify the process of product research and comparison for consumers looking to buy different items.

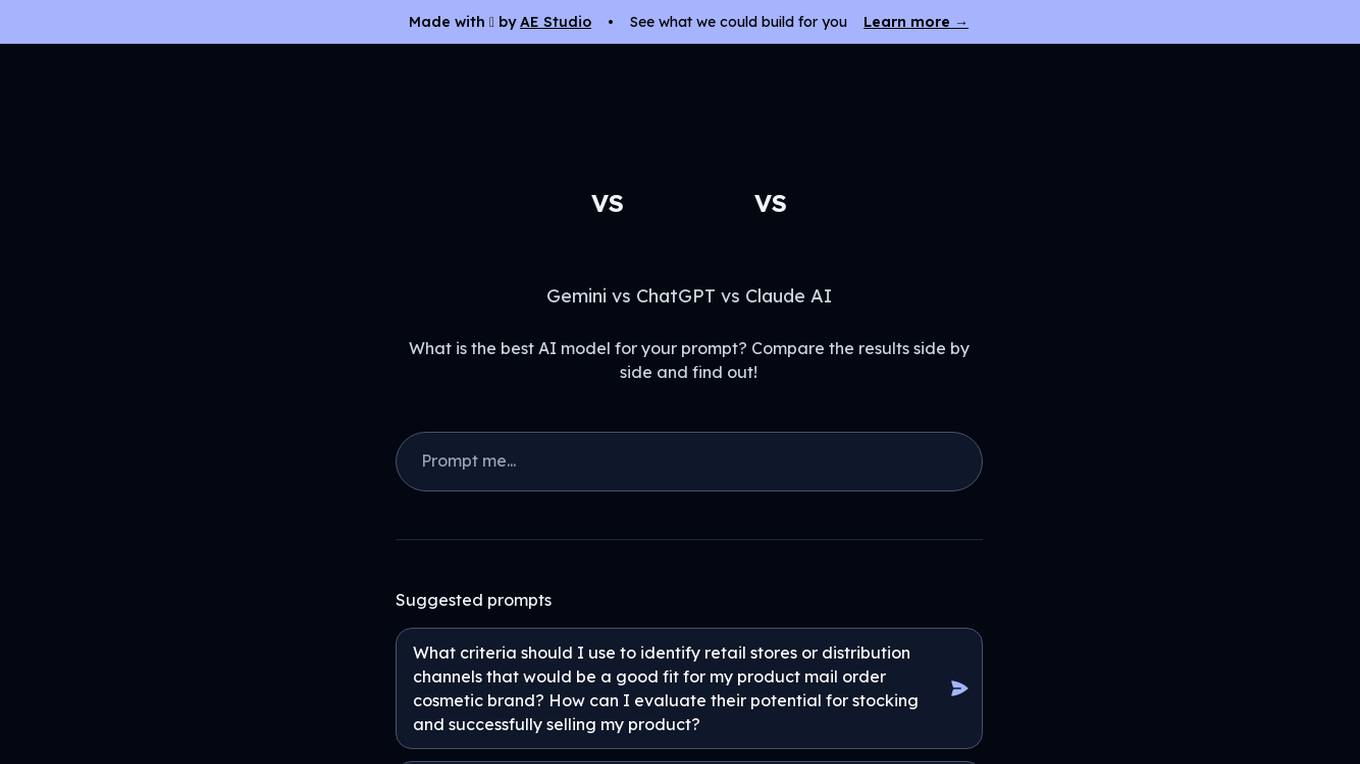

LLM Clash

LLM Clash is a web-based application that allows users to compare the outputs of different large language models (LLMs) on a given task. Users can input a prompt and select which LLMs they want to compare. The application will then display the outputs of the LLMs side-by-side, allowing users to compare their strengths and weaknesses.

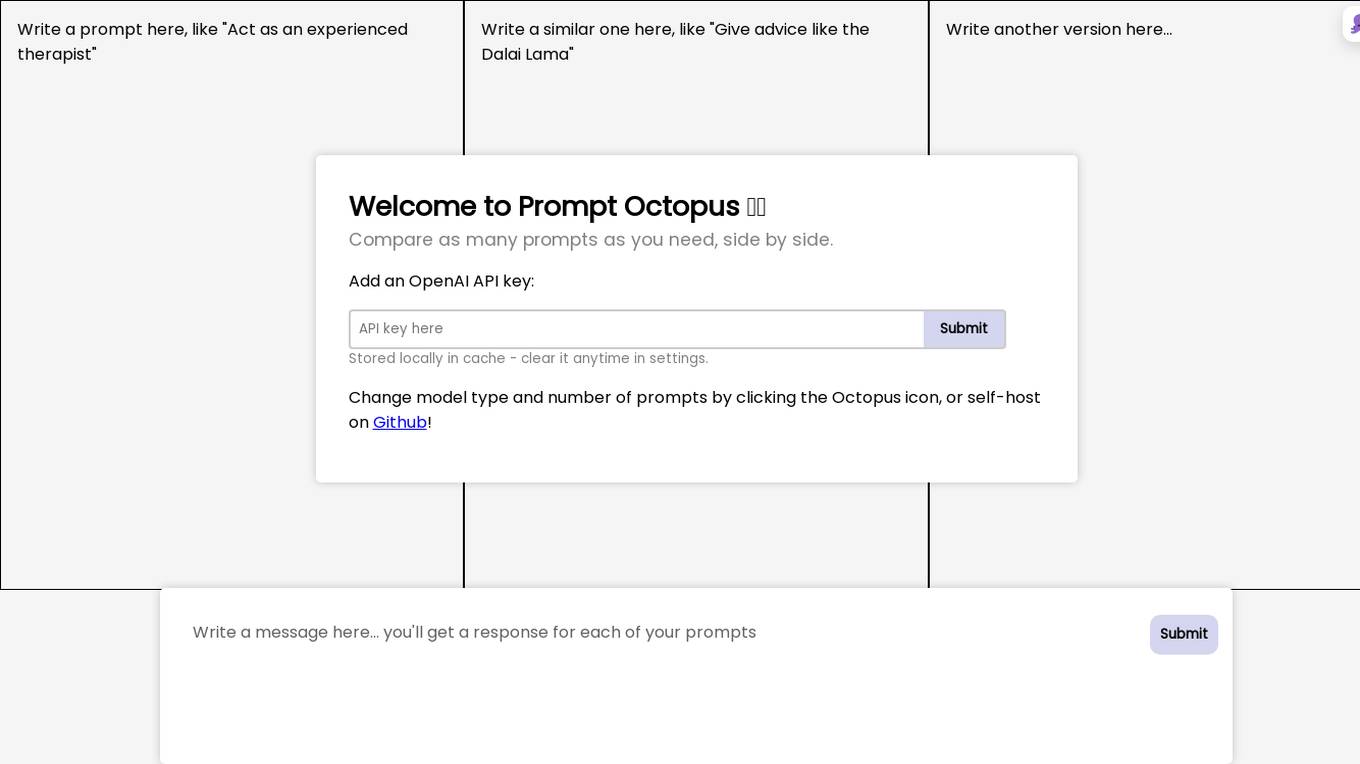

Prompt Octopus

Prompt Octopus is a free tool that allows you to compare multiple prompts side-by-side. You can add as many prompts as you need and view the responses in real-time. This can be helpful for fine-tuning your prompts and getting the best possible results from your AI model.

Gemini vs ChatGPT

Gemini is a multimodal AI model developed by Google. It is designed to understand and generate human language and can be used for a variety of tasks, including question answering, translation, and dialogue generation. ChatGPT is a chatbot developed by OpenAI and built on its GPT family of large language models. It serves the same kinds of tasks, including question answering, translation, and dialogue generation.

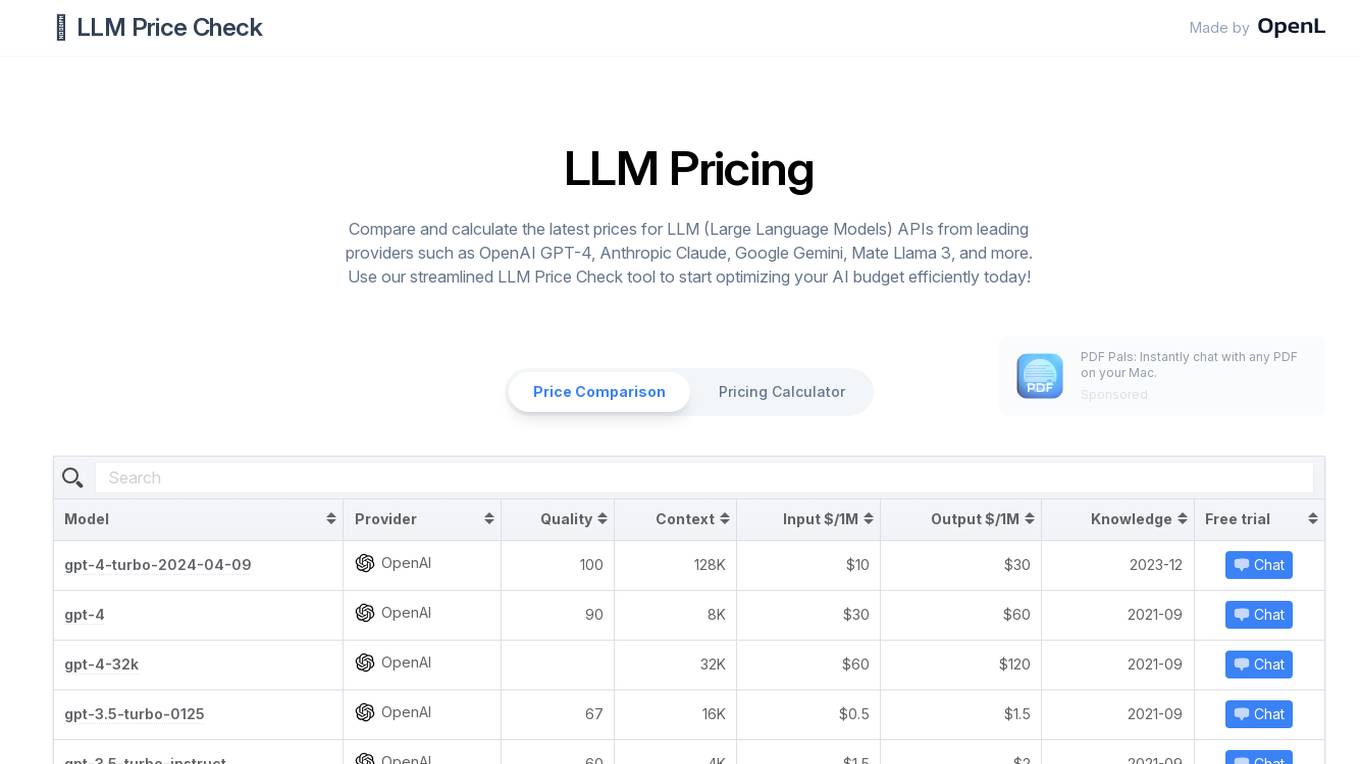

LLM Price Check

LLM Price Check is an AI tool for comparing and calculating the latest prices of large language model (LLM) APIs from leading providers such as OpenAI, Anthropic, Google, and others. Users can compare pricing, sort by various parameters, and search for specific models to optimize their AI budget. The tool provides a comprehensive overview of pricing information to help users make informed decisions when selecting an LLM API provider.
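The comparison such a tool performs comes down to simple arithmetic. Cost equals input tokens times the input price, plus output tokens times the output price, with prices quoted per million tokens. The results are then sorted from cheapest to most expensive. The minimal Python sketch below illustrates this under stated assumptions. The model names and prices are hypothetical placeholders, not real provider rates. The helper names `ModelPrice`, `workload_cost`, and `rank_by_cost` are illustrative and do not come from LLM Price Check itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPrice:
    """API pricing for one model in USD per 1M tokens (hypothetical values)."""
    name: str
    input_per_m: float
    output_per_m: float


def workload_cost(price: ModelPrice, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of processing the given token counts with one model."""
    return (input_tokens * price.input_per_m + output_tokens * price.output_per_m) / 1_000_000


def rank_by_cost(prices, input_tokens, output_tokens):
    """Return (name, cost) pairs sorted from cheapest to most expensive."""
    costs = [(p.name, workload_cost(p, input_tokens, output_tokens)) for p in prices]
    return sorted(costs, key=lambda pair: pair[1])


# Placeholder models and prices: NOT real provider rates.
PRICES = [
    ModelPrice("model-a", input_per_m=5.00, output_per_m=15.00),
    ModelPrice("model-b", input_per_m=0.50, output_per_m=1.50),
    ModelPrice("model-c", input_per_m=3.00, output_per_m=15.00),
]

if __name__ == "__main__":
    # Example workload: 2M input tokens and 500k output tokens per month.
    for name, cost in rank_by_cost(PRICES, 2_000_000, 500_000):
        print(f"{name}: ${cost:,.2f}")
```

With this example workload, the ranking is model-b ($1.75), then model-c ($13.50), then model-a ($17.50). Because output tokens are usually priced higher than input tokens, the cheapest model can change with the input/output mix. That is why a per-workload calculation is more informative than comparing a single headline price.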

1 - Open Source AI Tools

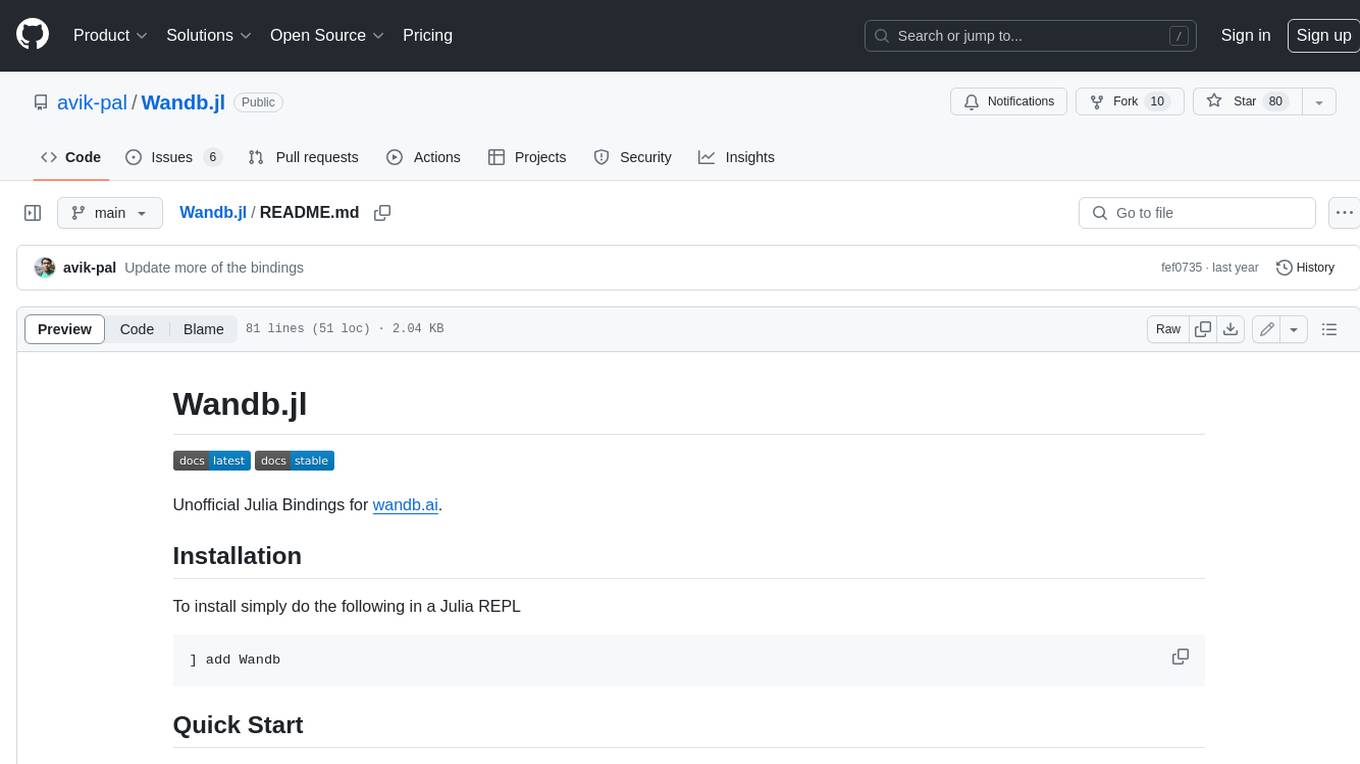

Wandb.jl

Wandb.jl provides unofficial Julia bindings for wandb.ai. Wandb is a platform for tracking and visualizing machine learning experiments. It offers a simple, consistent way to log metrics, parameters, and other data from your experiments and to visualize them in a variety of ways. Wandb.jl makes it convenient to use Wandb from Julia.

20 - OpenAI GPTs

Best Spy Apps for Android (Q&A)

A free tool to compare the best spy apps for Android. Get answers to your questions and explore the features, pricing, and pros and cons of each spy app.

GPTValue

Compare the output quality of similar GPTs on the same question and identify the most valuable one.

TV Comparison | Comprehensive TV Database

Compare TVs and uncover the pros and cons of the latest TV models.

PerspectiveBot

Your gateway to informed comparisons: provide a topic and the viewpoints you want compared. PerspectiveBot uses AI to analyze and score each viewpoint, delivering balanced, data-driven perspectives for smarter decision-making.

Calorie Count & Cut Cost: Food Data

Apples vs. oranges? Optimize your low-calorie diet by comparing food items, and get tailored advice on satiating, nutritious, cost-effective food choices based on 240 items.

Best price kuwait

A custom GPT for price comparison that searches and compares product prices on websites in Kuwait, tailored to local markets and languages.

Software Comparison

I compare different software, providing detailed, balanced information.

Website Conversion by B12

I'll help you optimize your website for more conversions, and compare your site's CRO potential to competitors’.

Course Finder

Find the perfect online course in tech, business, marketing, programming, and more. Compare options from top platforms such as Udemy, Coursera, and edX.

AI Hub

Your Gateway to AI Discovery – Ask, Compare, Learn. Explore AI tools and software with ease. Create AI Tech Stacks for your business and much more – Just ask, and AI Hub will do the rest!

🔵 GPT Boosted

GPT-5? | An enhanced version of GPT-4 Turbo. Don't believe it? Try it and compare! | ver .001