Best AI tools for: Classify Text Documents

20 - AI tool Sites

Lettria

Lettria is a no-code AI platform for text that helps users turn unstructured text data into structured knowledge. It combines the best of Large Language Models (LLMs) and symbolic AI to overcome current limitations in knowledge extraction. Lettria offers a suite of APIs for text cleaning, text mining, text classification, and prompt engineering. It also provides a Knowledge Studio for building knowledge graphs and private GPT models. Lettria is trusted by large organizations such as AP-HP and Leroy Merlin to improve their data analysis and decision-making processes.

Totoy

Totoy is a Document AI tool that redefines the way documents are processed. Its API allows users to explain, classify, and create knowledge bases from documents without the need for training. The tool supports 19 languages and works with plain text, images, and PDFs. Totoy is ideal for automating workflows, complying with accessibility laws, and creating custom AI assistants for employees or customers.

AnythingLLM

AnythingLLM is an all-in-one AI application designed for everyone. It offers a suite of tools for working with LLMs (Large Language Models), documents, and agents in a fully private environment. Users can install AnythingLLM on their desktop for Windows, macOS, and Linux, enabling flexible one-click installation and secure, fully private operation without internet connectivity. The application supports custom models, including enterprise models like GPT-4, custom fine-tuned models, and open-source models like Llama and Mistral. AnythingLLM allows users to work with various document formats, such as PDFs and Word documents, providing tailored solutions with locally running defaults for privacy.

PDF AI

The website offers an AI-powered PDF reader that allows users to chat with any PDF document. Users can upload a PDF, ask questions, get answers, extract precise sections of text, summarize, annotate, highlight, classify, analyze, translate, and more. The AI tool helps in quickly identifying key details, finding answers without reading through every word, and citing sources. It is aimed at students and at professionals in fields such as law, finance, research, academia, healthcare, and the public sector. The tool aims to save time, increase productivity, and simplify document management and analysis.

CategorAIze.io

CategorAIze.io is an AI-powered tool that helps users categorize data effortlessly using the latest AI technologies. Users can define custom categories, upload data items, and let the cutting-edge LLM AI automatically assign entries based on their content without the need for pretraining. The tool supports multi-level hierarchies, text and image-based categorization, and offers pay-as-you-go pricing options. Additionally, users can access the tool via browser, API, and plugins for a seamless experience.

Mistral AI

Mistral AI is a cutting-edge AI technology provider for developers and businesses. Their open and portable generative AI models offer unmatched performance, flexibility, and customization. Mistral AI's mission is to accelerate AI innovation by providing powerful tools that can be easily integrated into various applications and systems.

Aigclist

Aigclist is a website that provides a directory of AI tools and resources. The website is designed to help users find the right AI tools for their needs. Aigclist also provides information on AI trends and news.

Aipify

Aipify is a platform that allows users to build AI-powered APIs in seconds. With Aipify, users can access the latest AI models, including GPT-4, to enhance their applications' capabilities. Aipify's APIs are easy to use and affordable, making them a great choice for businesses of all sizes.

OpenTrain AI

OpenTrain AI is a data labeling marketplace that leverages artificial intelligence to streamline the process of labeling data for machine learning models. It provides a platform where users can crowdsource data labeling tasks to a global community of annotators, ensuring high-quality labeled datasets for training AI algorithms. With advanced AI algorithms and human-in-the-loop validation, OpenTrain AI offers efficient and accurate data labeling services for various industries such as autonomous vehicles, healthcare, and natural language processing.

FreedomGPT

FreedomGPT is a powerful AI platform that provides access to a wide range of AI models without the need for technical knowledge. With its user-friendly interface and offline capabilities, FreedomGPT empowers users to explore and utilize AI for various tasks and applications. The platform is committed to privacy and offers an open-source approach, encouraging collaboration and innovation within the AI community.

NLTK

NLTK (Natural Language Toolkit) is a leading platform for building Python programs to work with human language data. It provides easy-to-use interfaces to over 50 corpora and lexical resources such as WordNet, along with a suite of text processing libraries for classification, tokenization, stemming, tagging, parsing, and semantic reasoning, wrappers for industrial-strength NLP libraries, and an active discussion forum. Thanks to a hands-on guide introducing programming fundamentals alongside topics in computational linguistics, plus comprehensive API documentation, NLTK is suitable for linguists, engineers, students, educators, researchers, and industry users alike.
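
To make the classification task concrete, here is a minimal sketch of a bag-of-words Naive Bayes text classifier, the kind of technique a text-processing library for classification typically provides. It is written in plain Python so it runs without installing anything and does not use NLTK's own API. The training documents, labels, and function names are illustrative assumptions, not taken from any listed tool.

```python
import math
from collections import Counter, defaultdict


def tokenize(text):
    # Lowercase whitespace tokenization; a real pipeline would use a proper tokenizer.
    return text.lower().split()


def train(labeled_docs):
    """Collect per-label word counts from (text, label) pairs."""
    word_counts = defaultdict(Counter)  # label -> word frequencies
    label_counts = Counter()            # label -> number of documents
    vocab = set()
    for text, label in labeled_docs:
        tokens = tokenize(text)
        word_counts[label].update(tokens)
        label_counts[label] += 1
        vocab.update(tokens)
    return word_counts, label_counts, vocab


def classify(model, text):
    """Return the label with the highest log-probability for the text."""
    word_counts, label_counts, vocab = model
    total_docs = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label in label_counts:
        # Log prior: fraction of training documents carrying this label.
        score = math.log(label_counts[label] / total_docs)
        total_words = sum(word_counts[label].values())
        for token in tokenize(text):
            if token not in vocab:
                continue  # words never seen in training carry no evidence
            # Add-one (Laplace) smoothing avoids zero probabilities.
            score += math.log(
                (word_counts[label][token] + 1) / (total_words + len(vocab))
            )
        if score > best_score:
            best_label, best_score = label, score
    return best_label


# Hypothetical toy training set, for illustration only.
TRAINING_DOCS = [
    ("invoice payment due amount total", "finance"),
    ("quarterly revenue profit invoice", "finance"),
    ("patient diagnosis treatment clinic", "medical"),
    ("clinic prescription patient dosage", "medical"),
]

model = train(TRAINING_DOCS)
print(classify(model, "payment for the invoice"))
print(classify(model, "patient visited the clinic"))
```

With real documents, the same structure applies, but the training set would need to be far larger and the tokenizer more careful about punctuation and stop words.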

PyAI

PyAI is an advanced AI tool designed for developers and data scientists to streamline their workflow and enhance productivity. It offers a wide range of AI capabilities, including machine learning algorithms, natural language processing, computer vision, and more. With PyAI, users can easily build, train, and deploy AI models for various applications, such as predictive analytics, image recognition, and text classification. The tool provides a user-friendly interface and comprehensive documentation to support users at every stage of their AI projects.

Taylor AI

Taylor AI is an artificial intelligence company, established in 2023, that builds AI infrastructure and custom AI solutions for government agencies, law firms, and private companies. The company is supported by prominent investors such as Y Combinator, General Catalyst, FoundersX, and Gaingels. Headquartered in San Francisco, California, Taylor AI aims to provide cutting-edge AI technology to its clients.

GPTKit

GPTKit is a free AI text generation detection tool that uses six different AI-based content detection techniques to classify text as either human- or AI-generated. It provides reports on the authenticity and reality of the analyzed content, with an accuracy of approximately 93%. The first 2048 characters of every request are free, and users who register (also free) get 2048 characters per request.

Cogniflow

Cogniflow is a no-code AI platform that allows users to build and deploy custom AI models without any coding experience. The platform provides pre-built AI models for tasks including customer service, HR, operations, and more. Cogniflow also integrates with other applications, making it easy to connect AI models to an existing workflow.

Cohere

Cohere is a leading provider of artificial intelligence (AI) tools and services. Its mission is to make AI accessible and useful to everyone, from individual developers to large enterprises. It offers a range of AI tools and services, including natural language processing, computer vision, and machine learning. Its tools are used by businesses of all sizes to improve customer service, automate tasks, and gain insights from data.

Predibase

Predibase is a platform for fine-tuning and serving Large Language Models (LLMs). It provides a cost-effective and efficient way to train and deploy LLMs for a variety of tasks, including classification, information extraction, customer sentiment analysis, customer support, code generation, and named entity recognition. Predibase is built on proven open-source technology, including LoRAX, Ludwig, and Horovod.

Nesa Playground

Nesa is a global blockchain network that brings AI on-chain, allowing applications and protocols to seamlessly integrate with AI. It offers secure execution for critical inference, a private AI network, and a global AI model repository. Nesa supports various AI models for tasks like text classification, content summarization, image generation, language translation, and more. The platform is backed by a team with extensive experience in AI and deep learning, with numerous awards and recognitions in the field.

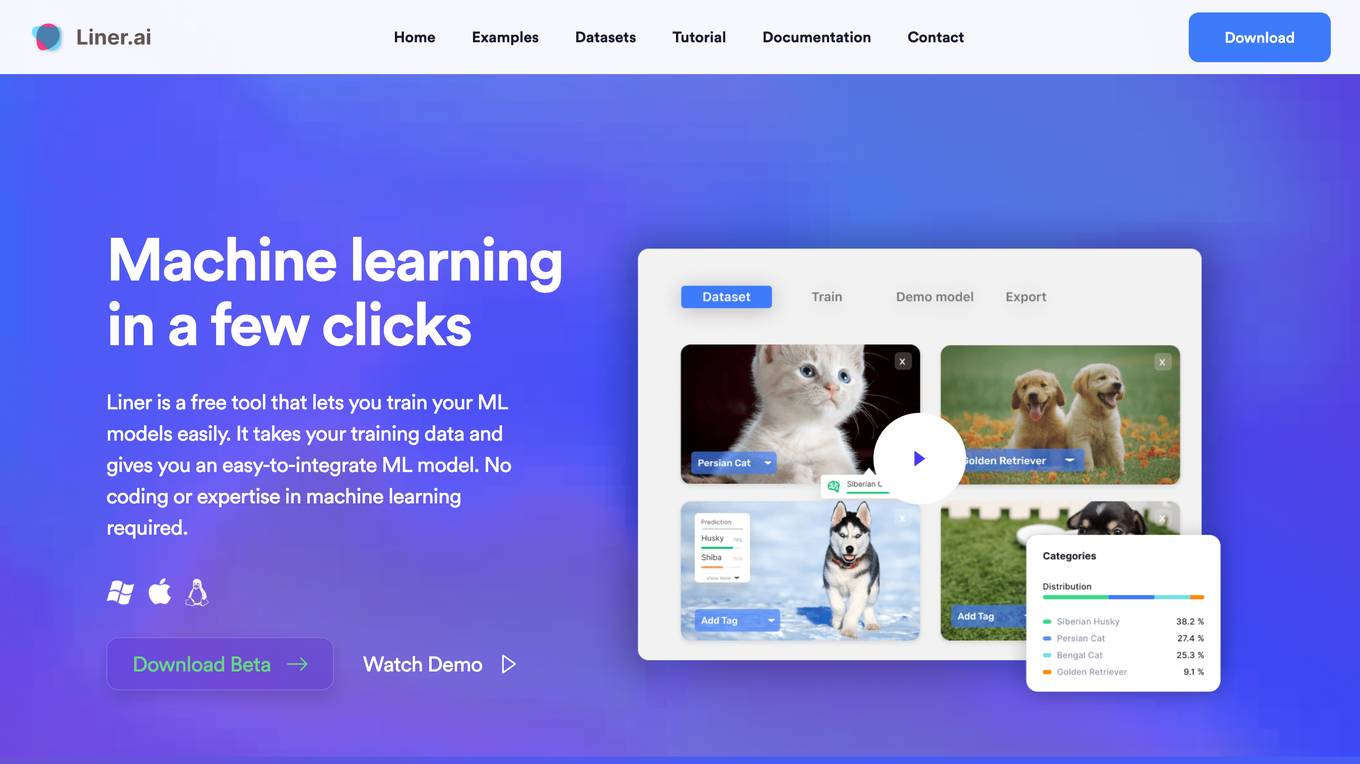

Liner.ai

Liner is a free and easy-to-use tool that allows users to train machine learning models without writing any code. It provides a user-friendly interface that guides users through the process of importing data, selecting a model, and training the model. Liner also offers a variety of pre-trained models that can be used for common tasks such as image classification, text classification, and object detection. With Liner, users can quickly and easily create and deploy machine learning applications without the need for specialized knowledge or expertise.

Marvin

Marvin is a lightweight toolkit for building natural language interfaces that are reliable, scalable, and easy to trust. It provides a variety of AI functions for text, images, audio, and video, as well as interactive tools and utilities. Marvin is designed to be easy to use and integrate, and it can be used to build a wide range of applications, from simple chatbots to complex AI-powered systems.

0 - Open Source AI Tools

20 - OpenAI GPTs

Dr. Classify

Just upload a numerical dataset for a classification task; the GPT will apply data analysis and machine learning steps to build the best possible model.

Prompt Injection Detector

GPT used to classify prompts as valid inputs or injection attempts. JSON output.

NACE Classifier

NACE (Nomenclature of Economic Activities) is the European statistical classification of economic activities. This is not an official product. Official information here: https://nacev2.com/en

TradeComply

Import Export Compliance | Tariff Classification | Shipping Queries | Logistics & Supply Chain Solutions

LiDAR GPT - LAStools Comprehensive Expert

Expert in LAStools with in-depth command line knowledge.

GICS Classifier

GICS is a classification standard developed by MSCI and S&P Dow Jones Indices. This GPT is not an MSCI or S&P product. Official website: https://www.msci.com/our-solutions/indexes/gics

UNSPSC Explorer

Expert in UNSPSC Codes (United Nations Standard Products and Services Code®).

DGL coding assistant

Assists with DGL coding, focusing on edge classification and link prediction.

Lexi - Article Classifier

Classifies articles into knowledge domains. Source code: https://homun.posetmage.com/Agents/

Cloud Scholar

Super astronomer identifying clouds in English and Chinese, sharing facts in Chinese.

Not Hotdog

What would you say if I told you there is an app on the market that can tell you if you have a hot dog or not a hot dog?

MDR Navigator

Medical Device Expert on MDR 2017/745, IVDR 2017/746, and related MDCG guidance.

Rock Identifier GPT

I identify various rocks from images and advise consulting a geologist for certainty.