Best AI Tools for Building Streaming AI Pipelines

20 AI Tool Sites

SingleStore

SingleStore is a real-time data platform designed for apps, analytics, and generative AI. It offers hybrid vector and full-text search, integrations that scale quickly, and a free tier, and it can read, write, and reason over petabyte-scale data in milliseconds. It also supports streaming ingestion, high concurrency, first-class vector support, and record lookups.
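The description does not say how SingleStore combines its two kinds of search. One widely used, generic way to merge a vector-similarity ranking with a full-text ranking is reciprocal rank fusion (RRF), where each document scores the sum of 1 / (k + rank) across the lists it appears in. The TypeScript sketch below assumes two already-ranked lists of hypothetical document IDs and the conventional constant k = 60; it illustrates the technique, not SingleStore's implementation.

```typescript
// Generic reciprocal rank fusion (RRF) for hybrid search.
// Illustrative only; not SingleStore's internal ranking logic.

type Ranking = string[]; // document IDs, best match first

function reciprocalRankFusion(rankings: Ranking[], k = 60): [string, number][] {
  const scores = new Map<string, number>();
  for (const ranking of rankings) {
    ranking.forEach((id, index) => {
      // Ranks are 1-based, so the top result contributes 1 / (k + 1).
      const contribution = 1 / (k + index + 1);
      scores.set(id, (scores.get(id) ?? 0) + contribution);
    });
  }
  // Highest fused score first; ties broken by ID so the output is deterministic.
  return Array.from(scores.entries()).sort(
    (a, b) => b[1] - a[1] || a[0].localeCompare(b[0]),
  );
}

// Hypothetical result lists from a vector search and a full-text search.
const vectorHits: Ranking = ["doc-a", "doc-b", "doc-c"];
const fullTextHits: Ranking = ["doc-b", "doc-d", "doc-a"];

const fused = reciprocalRankFusion([vectorHits, fullTextHits]);
console.log(fused.map(([id]) => id)); // fused order: doc-b, doc-a, doc-d, doc-c
```

Documents that rank well in both lists (here `doc-b`) rise to the top, while a document found by only one method still appears, just lower down.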

Workflow DevKit

Workflow DevKit is an AI tool for making any TypeScript function durable: it brings reliability and observability to async JavaScript so that apps and AI agents can suspend, resume, and keep their state. A simple declarative API lets users define workflows with a few directives instead of hand-rolled queues and custom retry logic. Every run can be inspected end to end, and users can pause, replay, and time-travel through steps, with traces, logs, and metrics captured automatically. The DevKit works with a range of frameworks and can power anything from real-time streaming agents to CI/CD pipelines or multi-day email subscription workflows, with automatic retries, state persistence, and observability built in rather than added through custom plumbing.
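The durability described above rests on a simple idea: record each completed step so that a re-run replays the recorded results instead of repeating side effects, and retry steps that fail. The TypeScript sketch below illustrates that idea with an in-memory `Map` standing in for persistent storage. `runStep`, `signupWorkflow`, and the step names are hypothetical; this is not Workflow DevKit's API or directive syntax, and a real engine would persist the journal and add backoff between retries.

```typescript
// Sketch of durable steps with replay and retries.
// Hypothetical helpers; NOT the Workflow DevKit API. An in-memory Map stands in
// for the persistent journal a real durable-execution engine would use.

type Journal = Map<string, unknown>;

function runStep<T>(journal: Journal, name: string, fn: () => T, maxAttempts = 3): T {
  // Replay: if this step already completed, return its recorded result
  // instead of executing its side effects again.
  if (journal.has(name)) return journal.get(name) as T;

  let lastError: unknown;
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    try {
      const result = fn();
      journal.set(name, result); // record the result before moving on
      return result;
    } catch (err) {
      lastError = err; // remember the failure and retry (no backoff in this sketch)
    }
  }
  throw lastError; // all attempts failed
}

const journal: Journal = new Map();
let flakyCalls = 0;

// Hypothetical two-step workflow: the second step fails once, then succeeds.
function signupWorkflow(): string {
  const user = runStep(journal, "create-user", () => ({ id: 42 }));
  return runStep(journal, "send-welcome", () => {
    flakyCalls++;
    if (flakyCalls < 2) throw new Error("transient email failure");
    return `sent to user ${user.id}`;
  });
}

console.log(signupWorkflow()); // succeeds on the second attempt of "send-welcome"
console.log(signupWorkflow()); // both steps replayed from the journal
console.log(flakyCalls); // 2: the email step did not run again
```

Recording the result before returning is what makes the second call safe: the welcome email is sent once even though the workflow function runs twice.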

Encord

Encord is a complete data development platform for AI applications, tailored to computer vision and multimodal AI teams. It offers tools to manage, clean, and curate data intelligently, to streamline labeling and workflow management, and to evaluate model performance. By simplifying data-centric AI pipelines, Encord aims to help organizations build better models and deploy high-quality production AI faster.

Lume AI

Lume AI is an AI-powered data mapping suite that automates the process of mapping, cleaning, and validating data in various workflows. It offers a comprehensive solution for building pipelines, onboarding customer data, and more. With AI-driven insights, users can streamline data analysis, mapper generation, deployment, and maintenance. Lume AI provides both a no-code platform and API integration options for seamless data mapping. Trusted by market leaders and startups, Lume AI ensures data security with enterprise-grade encryption and compliance standards.

Microtica

Microtica is an AI-powered cloud delivery platform that offers a suite of DevOps tools to help users build, deploy, and optimize their infrastructure efficiently. With features such as an AI Incident Investigator, an AI Infrastructure Builder, simplified Kubernetes deployment, alert monitoring, pipeline automation, and cloud monitoring, Microtica aims to streamline development and infrastructure management for DevOps teams. The platform provides real-time insights, cost optimization suggestions, and guided deployments for businesses looking to improve their cloud infrastructure operations.

Glozo

Glozo is an AI-powered sourcing platform designed to streamline recruitment processes by matching job requirements to suitable candidates efficiently. The platform utilizes AI to enhance sourcing accuracy, reduce time-to-hire, and minimize costs for recruiters. Glozo offers features such as AI-powered candidate search, automated screening, and access to a vast talent database from various sources. The platform caters to recruiters in the tech industry, finance sector, and other fields, helping them build stronger talent pipelines and make informed hiring decisions.

Prophecy

Prophecy is an AI data preparation and analysis tool that uses AI-driven visual workflows to turn raw data into trusted insights. It is designed for self-serve use by business users and is built to scale on platforms like Databricks, Snowflake, and BigQuery. Users can prompt AI agents to generate visual workflows, discover and explore datasets, and document their analysis, which streamlines data preparation and analytics.

Recrew AI

Recrew AI is an AI-powered recruitment tool that uses artificial intelligence to parse resumes, match candidates, and build a diverse candidate pipeline in minutes. It helps recruiters sort profiles, extract accurate data, reduce lead time, identify top talent, expand talent pools, and make data-driven hiring decisions. Recrew AI aims to streamline recruitment, eliminate manual errors, reduce bias, and improve overall efficiency in the recruitment industry.

Momentic

Momentic is a purpose-built AI tool for modern software testing, offering automation for E2E, UI, API, and accessibility testing. It leverages AI to streamline testing processes, from element identification to test generation, helping users shorten development cycles and enhance productivity. With an intuitive editor and the ability to describe elements in plain English, Momentic simplifies test creation and execution. It supports local testing without the need for a public URL, smart waiting for in-flight requests, and integration with CI/CD pipelines. Momentic is trusted by numerous companies for its efficiency in writing and maintaining end-to-end tests.

RunPod

RunPod is a cloud platform specifically designed for AI development and deployment. It offers a range of features to streamline the process of developing, training, and scaling AI models, including a library of pre-built templates, efficient training pipelines, and scalable deployment options. RunPod also provides access to a wide selection of GPUs, allowing users to choose the optimal hardware for their specific AI workloads.

Fetcher

Fetcher is an AI candidate sourcing tool designed for recruiters to streamline the talent acquisition process. It leverages advanced AI technology and expert teams to efficiently source high-quality candidate profiles that match specific hiring requirements. The tool also provides smart recruitment analytics, personalized diversity search criteria, and verified contact information to enhance candidate engagement and streamline the recruitment process. With robust technology integrations and a focus on automating processes, Fetcher aims to help companies of all sizes attract top talent and optimize their recruitment strategies.

Papnox ERP

Papnox ERP is an AI-powered ERP software designed specifically for paper distributors. It offers cloud-based accounting, invoicing, and inventory management solutions. The platform includes modules for CRM, payroll management, website building, and report generation. Papnox ERP is known for its early adoption of disruptive technologies and provides unique insights into the paper distribution industry. The software aims to streamline business operations and enhance efficiency for paper distributors.

Bizware AI

Bizware AI is an all-in-one AI-powered platform designed to help businesses automate their sales and marketing processes, attract and close more customers, and build long-lasting client relationships on autopilot. The platform offers a wide range of features such as social media management, website chat widget, forms & surveys, automated nurture sequences, email & SMS marketing, phone system & autodialer, CRM & sales pipeline management, invoicing & payments, review & reputation management, and reporting & analytics. Bizware AI aims to streamline sales, generate more leads, build brand reputation, impress existing customers, and automate various tasks to help businesses grow efficiently.

HireList.io

HireList.io is an AI-driven recruitment software designed to streamline the hiring process for startups. It offers a range of features to help businesses target the right talent, connect with the right candidates, and build their dream team quickly and efficiently. HireList's AI-powered candidate filter narrows down the pool of applicants, highlighting only those who fit the bill. The platform also provides a streamlined hiring pipeline, taking businesses from job posting to welcoming their new hire. With HireList, businesses can create and tailor job postings, review and comment on candidates, and track their progress through the hiring process. The platform also offers communication automation, structured interviews, and performance tracking to help businesses refine their hiring practices.

Valohai

Valohai is a scalable MLOps platform that enables Continuous Integration/Continuous Deployment (CI/CD) for machine learning and pipeline automation on-premises and across various cloud environments. It helps streamline complex machine learning workflows by offering framework-agnostic ML capabilities, automatic versioning with complete lineage of ML experiments, hybrid and multi-cloud support, scalability and performance optimization, streamlined collaboration among data scientists, IT, and business units, and smart orchestration of ML workloads on any infrastructure. Valohai also provides a knowledge repository for storing and sharing the entire model lifecycle, facilitating cross-functional collaboration, and allowing developers to build with total freedom using any libraries or frameworks.

Wedo AI

Wedo AI is an all-in-one AI-powered platform designed to help businesses attract customers, convert leads, and manage various aspects of online marketing, sales, and delivery. It offers a range of tools such as AI ads, chat bots, social media planner, websites, ecommerce store, memberships, CRM, email marketing, analytics, and more. Wedo AI aims to streamline processes, increase efficiency, and drive revenue growth for entrepreneurs, startups, influencers, non-profits, coaches, contractors, freelancers, and consultants. The platform provides features for managing finances, automating billing, creating funnels, building websites, selling products, engaging with customers, and analyzing data to make informed decisions.

SubSync

SubSync, which bills itself as the #1 CRM for home services, uses AI to help home service businesses grow revenue by generating, engaging, and converting new leads. It offers an all-in-one platform for lead prospecting, marketing automation, and sales management, streamlining how teams find and connect with potential customers so they can focus on quality interactions. With SubSync, users can automate time-consuming workflows, track campaign performance, and optimize their sales funnel to improve efficiency and ROI.

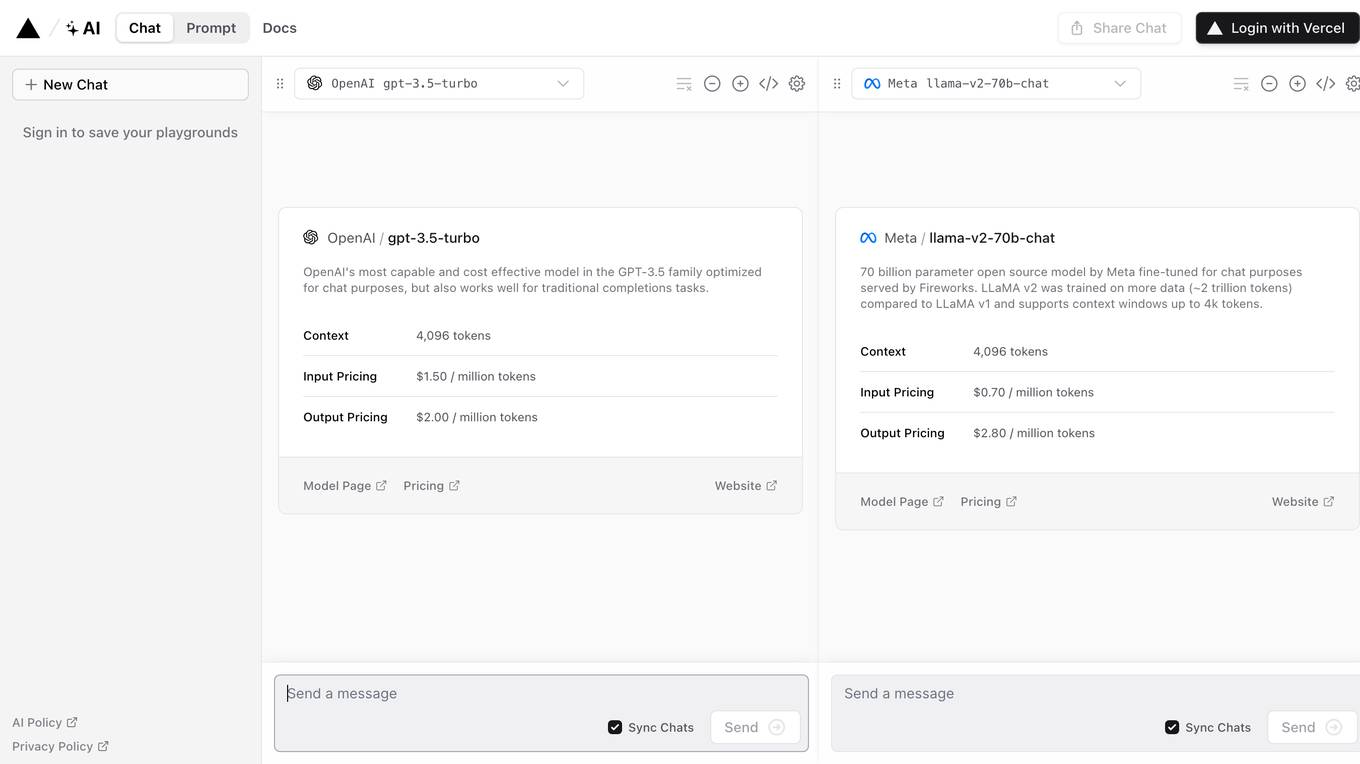

AI SDK

The AI SDK is a free, open-source library that helps developers build AI-powered products. It offers a unified provider API, generative UI capabilities, framework-agnostic support, and streaming AI responses. Developers have praised the SDK for its ease of use, speed of development, and comprehensive documentation.

AI SDK 6

AI SDK 6 is an AI toolkit for TypeScript from the creators of Next.js. It is a free, open-source library that provides the tools needed to build AI-powered products: users can create dynamic, AI-powered user interfaces, switch between AI providers easily, and stream AI responses as they are generated. It is framework-agnostic and works with popular frameworks such as React, Next.js, Vue, Nuxt, and SvelteKit. Developers have responded positively to its ease of use, fast deployment, and powerful features.

Chat SDK

Chat SDK is a unified TypeScript SDK for building chat bots across various platforms such as Slack, Microsoft Teams, Google Chat, Discord, and more. It allows users to write bot logic once and deploy it everywhere. The SDK provides features like actions, cards, direct messages, emoji support, file uploads, modals, slash commands, streaming, error handling, and platform adapters. With full TypeScript support and AI streaming capabilities, Chat SDK simplifies the development of chatbots for different communication channels.

1 Open Source AI Tool

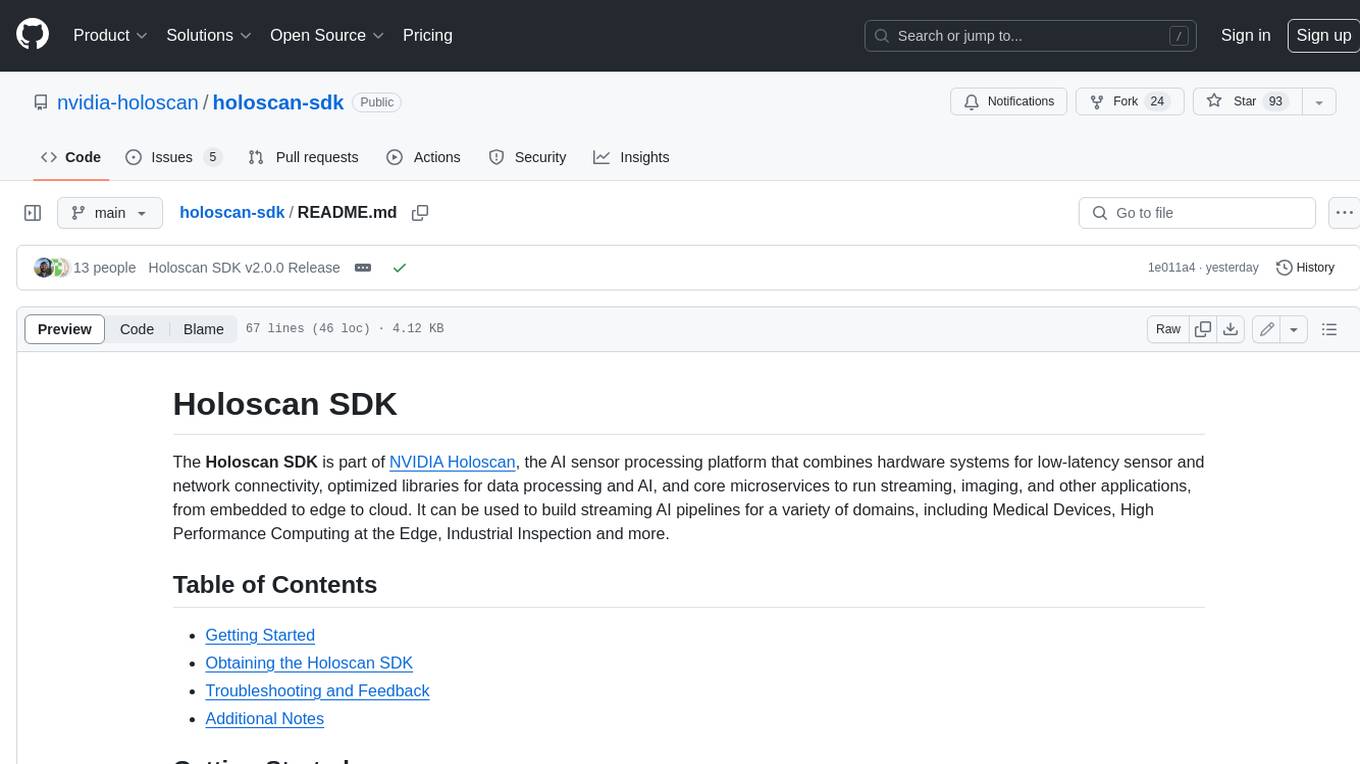

holoscan-sdk

The Holoscan SDK is part of NVIDIA Holoscan, the AI sensor processing platform that combines hardware systems for low-latency sensor and network connectivity, optimized libraries for data processing and AI, and core microservices to run streaming, imaging, and other applications, from embedded to edge to cloud. It can be used to build streaming AI pipelines for a variety of domains, including Medical Devices, High Performance Computing at the Edge, Industrial Inspection and more.
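A streaming AI pipeline of the kind described here chains stages so that each sensor frame flows from a source through preprocessing and inference to a sink. The TypeScript sketch below shows that shape with chained generators. The frame format, the 8-bit normalization, and the mean-intensity "model" are hypothetical stand-ins, and the code is a generic illustration rather than the Holoscan SDK's API.

```typescript
// Streaming pipeline sketch: source -> normalize -> infer -> sink.
// Generic illustration only; NOT the Holoscan SDK API.

interface SensorFrame { seq: number; pixels: number[] }
interface Detection { seq: number; score: number }

// Source: emits synthetic frames (stand-in for a camera or network input).
function* sensorSource(count: number): Generator<SensorFrame> {
  for (let seq = 0; seq < count; seq++) {
    yield { seq, pixels: [seq, seq + 1, seq + 2] };
  }
}

// Preprocess: scale pixel values into [0, 1], assuming 8-bit input.
function* normalize(frames: Iterable<SensorFrame>): Generator<SensorFrame> {
  for (const f of frames) {
    yield { seq: f.seq, pixels: f.pixels.map((p) => p / 255) };
  }
}

// Inference: hypothetical "model" that scores a frame by its mean intensity.
function* infer(frames: Iterable<SensorFrame>): Generator<Detection> {
  for (const f of frames) {
    const mean = f.pixels.reduce((a, b) => a + b, 0) / f.pixels.length;
    yield { seq: f.seq, score: mean };
  }
}

// Sink: collect the sequence numbers of frames scoring above a threshold.
function collectAlerts(detections: Iterable<Detection>, threshold: number): number[] {
  const alerts: number[] = [];
  for (const d of detections) {
    if (d.score > threshold) alerts.push(d.seq);
  }
  return alerts;
}

// Pull-based: the sink requests one detection at a time, which pulls one
// frame through every upstream stage before the next frame is produced.
const alerts = collectAlerts(infer(normalize(sensorSource(5))), 0.01);
console.log(alerts); // frames 2, 3, and 4 exceed the threshold
```

Because generators are pull-based, only one frame is in flight at a time; a production pipeline might instead run stages concurrently and pass data between them through bounded queues.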

20 OpenAI GPTs

Relume

An interface for Relume's AI Site Builder, designed to streamline the web design and development process.

Centesimus Annus Pro Pontifice GPT

Expert in Church's social doctrine, aiding in understanding and spreading teachings.

Build a Brand

Creates unique custom brand images from your input: just type your ideas and the brand image is generated.

Beam Eye Tracker Extension Copilot

Build extensions using the Eyeware Beam eye tracking SDK.

Business Model Canvas Strategist

Business Model Canvas Creator: build and evaluate your business model.

League Champion Builder GPT

Build your own League of Legends-style champion, with abilities, a backstory, and splash art.

RenovaTecno

Your tech buddy helping you refurbish or build a PC from scratch, tailored to your needs, budget, and language.

Gradle Expert

Your expert in Gradle build configuration, offering clear, practical advice.

XRPL GPT

Build on the XRP Ledger with assistance from this GPT trained on extensive documentation and code samples.