Best AI tools for Accelerate LLMs

20 - AI Tool Sites

Mirage

Mirage is an AI platform that builds custom LLMs to accelerate productivity. It is backed by Sequoia and lets users create custom AI models, train them on their own data, and deploy them to the cloud or on-premises.

Gradient

Gradient is an AI automation platform designed specifically for enterprise AI purposes. It offers a seamless way to automate manual workflows with minimal effort, providing business intuition and industry expertise. The platform ensures unmatched compliance with various regulations and prioritizes privacy and security. Gradient's Agent Foundry enables users to automate tasks, integrate data, and optimize workflows efficiently, making it a valuable tool for modern enterprises.

Centari

Centari is a platform for deal intelligence that leverages generative AI to transform complex documents into actionable insights. It helps users unlock more dealflow, enrich marketing materials, visualize market trends, and streamline the deal validation process. With features like data extraction, intuitive validation, and deal history visualization, Centari empowers users to make informed decisions and win deals with competitive knowledge.

Denvr DataWorks AI Cloud

Denvr DataWorks AI Cloud is a cloud-based AI platform that provides end-to-end AI solutions for businesses. It offers a range of features including high-performance GPUs, scalable infrastructure, ultra-efficient workflows, and cost efficiency. Denvr DataWorks is an NVIDIA Elite Partner for Compute, and its platform is used by leading AI companies to develop and deploy innovative AI solutions.

ModelOp

ModelOp is the leading AI Governance software for enterprises, providing a single source of truth for all AI systems, automated process workflows, real-time insights, and integrations to extend the value of existing technology investments. It helps organizations safeguard AI initiatives without stifling innovation, ensuring compliance, accelerating innovation, and improving key performance indicators. ModelOp supports generative AI, Large Language Models (LLMs), in-house, third-party vendor, and embedded systems. The software enables visibility, accountability, risk tiering, systemic tracking, enforceable controls, workflow automation, reporting, and rapid establishment of AI governance.

OneSky Localization Agent

OneSky Localization Agent (OLA) is an AI-powered platform designed to streamline the localization process for mobile apps, games, websites, and software products. OLA leverages multiple Large Language Models (LLMs) and a collaborative translation approach by a team of AI agents to deliver high-quality, accurate translations. It offers post-editing solutions, real-time progress tracking, and seamless integration into development workflows. With a focus on precision-engineered AI translations and human touch, OLA aims to provide a smarter way for global growth through efficient localization.

Graphcore

Graphcore is a cloud-based platform that accelerates machine learning processes by harnessing the power of IPU-powered generative AI. It offers cloud services, pre-trained models, optimized inference engines, and APIs to streamline operations and bring intelligence to enterprise applications. With Graphcore, users can build and deploy AI-native products and platforms using the latest AI technologies such as LLMs, NLP, and Computer Vision.

Elastic

Elastic is a Search AI Company that offers a platform for building tailored experiences, search and analytics, data ingestion, visualization, and generative AI solutions. The company provides services like Elastic Cloud for real-time insights, Elastic AI Assistant for retrieval and generation, and Search AI Lake for faster integration with LLMs. Elastic aims to help businesses scale with low-latency search AI and accelerate problem resolution with observability powered by advanced ML and analytics.

Serenity Star

Serenity Star is a Generative AI deployment service that offers Models As A Service to help businesses increase productivity and design tailored solutions. The platform provides access to over 100 LLMs, an ecosystem with agents, co-pilots, and plugins, and features low code and no code solutions for quick market release. Serenity Star aims to simplify the implementation of Generative AI in enterprises by offering tools, support, and resources for process optimization, innovation, revenue maximization, and informed decision-making.

Innovation Acceleration

Innovation Acceleration is an AI-powered platform that empowers organizations to unlock their creative potential through the integration of advanced AI technologies and structured innovation frameworks. The platform offers a systematic and repeatable approach to creative thinking using Systematic Inventive Thinking (SIT) and Natural Language Processing (NLP) tools such as Large Language Models (LLMs) and generative AI (GenAI). Innovation Acceleration aims to accelerate the innovation process by guiding users through creating customized, industry-leading products, processes, strategies, and marketing innovations.

Prem AI

Prem is an AI platform that empowers developers and businesses to build and fine-tune generative AI models with ease. It offers a user-friendly development platform for developers to create AI solutions effortlessly. For businesses, Prem provides tailored model fine-tuning and training to meet unique requirements, ensuring data sovereignty and ownership. Trusted by global companies, Prem accelerates the advent of sovereign generative AI by simplifying complex AI tasks and enabling full control over intellectual capital. With a suite of foundational open-source SLMs, Prem supercharges business applications with cutting-edge research and customization options.

Adjust

Adjust is an AI-driven platform that helps mobile app developers accelerate their app's growth through a comprehensive suite of measurement, analytics, automation, and fraud prevention tools. The platform offers unlimited measurement capabilities across various platforms, powerful analytics and reporting features, AI-driven decision-making recommendations, streamlined operations through automation, and data protection against mobile ad fraud. Adjust also provides solutions for iOS and SKAdNetwork success, CTV and OTT performance enhancement, ROI measurement, fraud prevention, and incrementality analysis. With a focus on privacy and security, Adjust empowers app developers to optimize their marketing strategies and drive tangible growth.

Tidio

Tidio is an AI-powered customer service solution that helps businesses automate support, convert more leads, and increase revenue. With Lyro AI Chatbot, businesses can answer up to 70% of customer inquiries without human intervention, freeing up support agents to focus on high-value requests. Tidio also offers live chat, helpdesk, and automation features to help businesses provide excellent customer support and grow their business.

Tidio

Tidio is an AI-powered customer service solution that helps businesses automate their support and sales processes. With Lyro AI Chatbot, businesses can solve up to 70% of customer problems without human intervention. Tidio also offers live chat, helpdesk, and automation features to help businesses provide excellent customer service and grow their revenue.

Law Insider

Law Insider is an AI-powered platform that offers contract drafting, review, and redlining services at the speed of AI. With over 10,000 customers worldwide, Law Insider provides standard templates, public contracts, and resources for legal professionals. Users can search for sample contracts and clauses using the Law Insider database or generate original drafts with the help of their AI Assistant. The platform also allows users to review and redline contracts directly in Microsoft Word, ensuring compliance with industry standards and expert playbooks. Law Insider's features include AI-powered contract review and redlining, benchmarking against the Law Insider Index, playbook builder, and upcoming AI clause drafting with trusted validation. Users can access the world's largest public contract database and watch tutorial videos to better understand the platform's capabilities.

EarnBetter

EarnBetter is an AI-powered platform that offers assistance in creating professional resumes, cover letters, and job search support. The platform utilizes artificial intelligence to rewrite and reformat resumes, generate tailored cover letters, provide personalized job matches, and offer interview support. Users can upload their current resume to get started and access a range of features to enhance their job search process. EarnBetter aims to streamline the job search experience by providing free, unlimited, and professional document creation services.

HubSpot

HubSpot is an AI-powered platform that offers CRM, marketing, sales, customer service, and content management tools. It provides a unified platform optimized by AI, with features such as marketing automation, sales pipeline development, customer support, content creation, and data organization. HubSpot caters to businesses of all sizes, from startups to large enterprises, helping them generate leads, automate processes, and improve customer retention. The platform also offers a range of integrations and solutions tailored to different business needs.

Scale AI

Scale AI is an AI tool that accelerates the development of AI applications for enterprise, government, and automotive sectors. It offers Scale Data Engine for generative AI, Scale GenAI Platform, and evaluation services for model developers. The platform leverages enterprise data to build sustainable AI programs and partners with leading AI models. Scale's focus on generative AI applications, data labeling, and model evaluation sets it apart in the AI industry.

Gab AI

Gab AI is an uncensored and unfiltered AI platform that offers a wide range of AI tools and applications. It provides users with access to various AI characters, chatbots, image generators, and creative writing prompts. The platform aims to accelerate users' creativity and knowledge by engaging them in conversations, generating content, and exploring different AI-generated outputs.

Sense Talent Engagement Platform

Sense Talent Engagement Platform is an AI-powered recruitment platform that offers a comprehensive suite of tools to streamline the hiring process. It provides automation workflows, database cleanup, interview scheduling, text messaging, mass texting, WhatsApp and SMS integration, mobile app support, candidate matching, AI chatbot, job matching, scheduling bot, smart FAQ, pre-screening, sourcing, live chat, instant apply, talent CRM, generative AI, voice AI, referrals, analytics, and more. The platform caters to various industries such as financial services, healthcare, logistics, manufacturing, retail, staffing, technology, and more, helping organizations attract, engage, and retain top talent efficiently.

1 - Open Source AI Tools

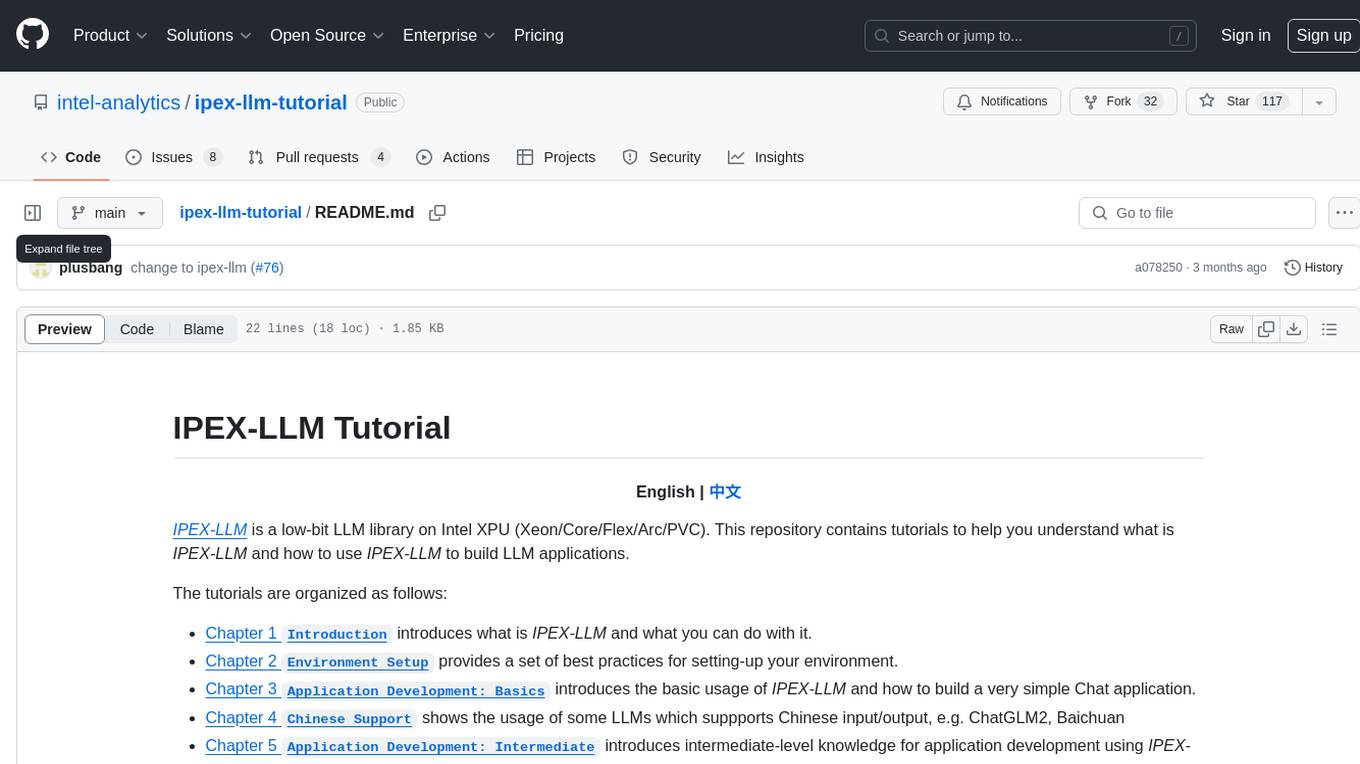

ipex-llm-tutorial

This repository provides tutorials for IPEX-LLM, a low-bit LLM library for Intel XPU (Xeon/Core/Flex/Arc/PVC), to help users understand the library and use it to build LLM applications. The tutorials cover an introduction to IPEX-LLM, environment setup, basic application development, Chinese language support, intermediate and advanced application development, GPU acceleration, and finetuning. Users can learn how to build chat applications, chatbots, speech recognition, and more using IPEX-LLM.

7 - OpenAI GPTs

Material Tailwind GPT

Accelerate web app development with Material Tailwind GPT's components - 10x faster.

Tourist Language Accelerator

Accelerates the learning of key phrases and cultural norms for travelers in various languages.

Digital Entrepreneurship Accelerator Coach

The Go-To Coach for Aspiring Digital Entrepreneurs, Innovators, & Startups. Learn More at UnderdogInnovationInc.com.

24 Hour Startup Accelerator

Niche-focused startup guide, humorous, strategic, simplifying ideas.

Backloger.ai - Product MVP Accelerator

Drop in any requirements or any text; I'll help you create an MVP with insights.

Digital Boost Lab

A guide for developing university-focused digital startup accelerator programs.