Best AI tools for "Accelerate Computation"

20 - AI Tool Sites

Lilac

Lilac is an AI tool designed to enhance data quality and exploration for AI applications. It offers features such as data search, quantification, editing, clustering, semantic search, field comparison, and fuzzy-concept search. Lilac enables users to accelerate dataset computations and transformations, making it a valuable asset for data scientists and AI practitioners. The tool is trusted by Alignment Lab and is recommended for working with LLM datasets.

Zapata AI

Zapata AI is an industrial generative AI platform that helps enterprises build and deploy AI applications. It applies quantum techniques and advanced computing technologies to complex business problems. The platform offers solutions for various industries, supports quantum research, and publishes expert perspectives on generative AI and quantum computing.

Cerebras

Cerebras offers AI supercomputers, processors, cloud services, and applications for various industries. Its high-performance computing solutions, which include large language models, serve sectors such as health, energy, government, scientific computing, and financial services. Cerebras also provides AI model services, offering state-of-the-art models and training services for tasks such as multilingual chatbots and DNA sequence prediction. The platform also features the Cerebras Model Zoo, an open-source repository of AI models for developers and researchers.

Proscia

Proscia is a leading provider of digital pathology solutions for the modern laboratory. Its flagship product, Concentriq, is an enterprise pathology platform that enables anatomic pathology laboratories to achieve 100% digitization and deliver faster, more precise results. Proscia also offers a range of AI applications that can be used to automate tasks, improve diagnostic accuracy, and accelerate research. The company's mission is to perfect cancer diagnosis with intelligent software that changes the way the world practices pathology.

Cradle

Cradle is a protein engineering platform that uses machine learning to design improved protein sequences. It allows users to import assay data, generate new sequences, test them in the lab, and import the results to improve the model. Cradle can be used to optimize multiple properties of a protein simultaneously, and it has been used by leading biotech teams to accelerate new and ongoing projects.

Altair

Altair is a global leader in computational intelligence, offering software and cloud solutions in simulation, HPC, data analytics, and AI. The platform provides advanced technology for accelerating AI adoption, powering engineering processes, and enabling sustainability solutions across various industries. Altair's products and platforms cater to diverse sectors such as aerospace, automotive, healthcare, and more, with a focus on digital twin technology, generative AI, and cloud computing. The company also hosts events, webinars, and training programs to support users in leveraging their tools effectively.

Lavo Life Sciences

Lavo Life Sciences is an AI-accelerated crystal structure prediction application that supports drug development by predicting the crystal structures of small-molecule drugs. The application uses AI to optimize solid-state formulations, reduce turnaround time, mitigate risks, and discover novel polymorphs, streamlining pharmaceutical research and development.

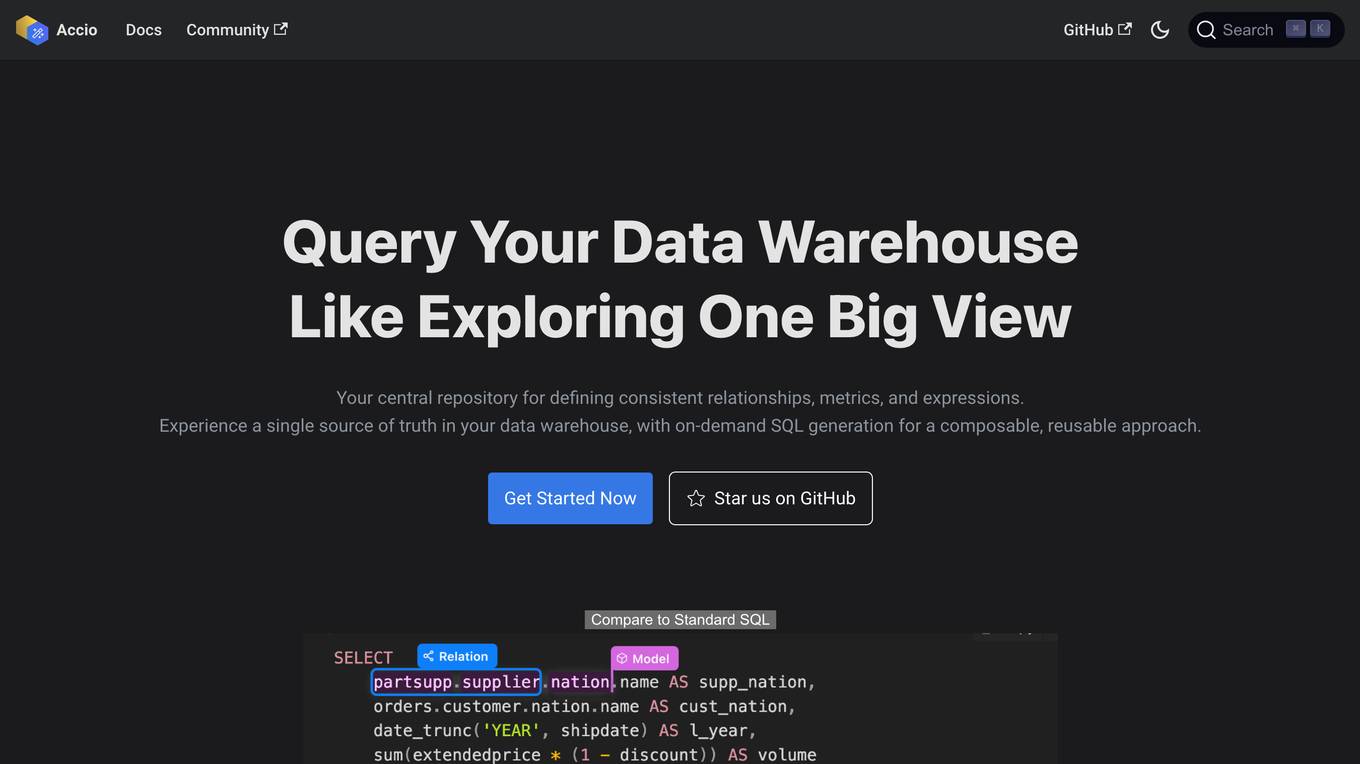

Accio

Accio is a data modeling tool that allows users to define consistent relationships, metrics, and expressions for on-the-fly computations in reports and dashboards across various BI tools. It provides a syntax similar to GraphQL that allows users to define models, relationships, and metrics in a human-readable format. Accio also offers a user-friendly interface that provides data analysts with a holistic view of the relationships between their data models, enabling them to grasp the interconnectedness and dependencies within their data ecosystem. Additionally, Accio utilizes DuckDB as a caching layer to accelerate query performance for BI tools.

Owkin

Owkin is a full-stack AI biotech company that integrates the best of human and artificial intelligence to deliver better drugs and diagnostics at scale. By understanding complex biology through AI, Owkin identifies new treatments, de-risks and accelerates clinical trials, and builds diagnostic tools to reduce time to impact for patients.

BizWith.AI

BizWith.AI is an AI-powered automation platform that helps businesses save time, cut costs, and outperform the competition. With BizWith.AI, businesses can create unique, plagiarism-free content, images, voice overs and transcriptions in a matter of seconds. They can also schedule posts, automate responses, and get insights to enhance their social media presence. BizWith.AI's AI-powered chatbot can handle customer queries effectively, reducing support costs and improving customer satisfaction. Businesses can also expand their team with 32 AI agents and coders, providing them with a diverse range of specialized assistance.

Trend Hunter

Trend Hunter is an AI-powered platform that offers a vast database of ideas and innovations, trend reports, consumer insights, advisory services, and training programs. It combines human researchers with AI to provide data-driven insights for innovators. The platform helps businesses accelerate innovation, identify trends, and stay ahead of the competition. Trend Hunter's AI capabilities include natural language processing, machine learning, image recognition, and consumer insights analysis.

Artwork Flow

Artwork Flow is an AI-powered artwork management software that helps streamline compliance checks, proofreading, and automation processes for packaging artwork. It empowers brand and marketing teams to achieve faster time to market by providing unified and intelligent creative management. The software offers features such as online proofing, smart tagging, workflow automation, and brand compliance checks. Artwork Flow is trusted by global packaging artwork teams and has received positive feedback for its effectiveness and user-friendly interface.

Deepfake Detection Challenge Dataset

The Deepfake Detection Challenge Dataset is a project initiated by Facebook AI to accelerate the development of new ways to detect deepfake videos. Created in collaboration with industry leaders and academic experts, the dataset contains over 100,000 videos. It comes in two versions: a preview dataset with 5k videos and a full dataset with 124k videos, both including videos altered with facial modification algorithms. The dataset was used in a Kaggle competition to build better models for detecting manipulated media. The top-performing models achieved high accuracy on the public dataset but struggled on the held-out black-box dataset, highlighting the importance of generalization in deepfake detection. The project aims to encourage the research community to keep advancing the detection of harmful manipulated media.

ytRank.ai

ytRank.ai is an AI-powered tool designed to help YouTube creators optimize their content strategy, outperform competitors, and accelerate channel growth. Its features include discovering trending keywords, analyzing SEO scores, generating compelling titles, and creating optimized tags. By leveraging AI, ytRank.ai aims to improve channel rankings and visibility and to attract more viewers. With its focus on visibility, engagement, and long-term growth, ytRank.ai is a useful tool for YouTubers seeking to strengthen their online presence.

Adjust

Adjust is an AI-driven platform that helps mobile app developers accelerate their app's growth through a comprehensive suite of measurement, analytics, automation, and fraud prevention tools. The platform offers unlimited measurement capabilities across various platforms, powerful analytics and reporting features, AI-driven decision-making recommendations, streamlined operations through automation, and data protection against mobile ad fraud. Adjust also provides solutions for iOS and SKAdNetwork success, CTV and OTT performance enhancement, ROI measurement, fraud prevention, and incrementality analysis. With a focus on privacy and security, Adjust empowers app developers to optimize their marketing strategies and drive tangible growth.

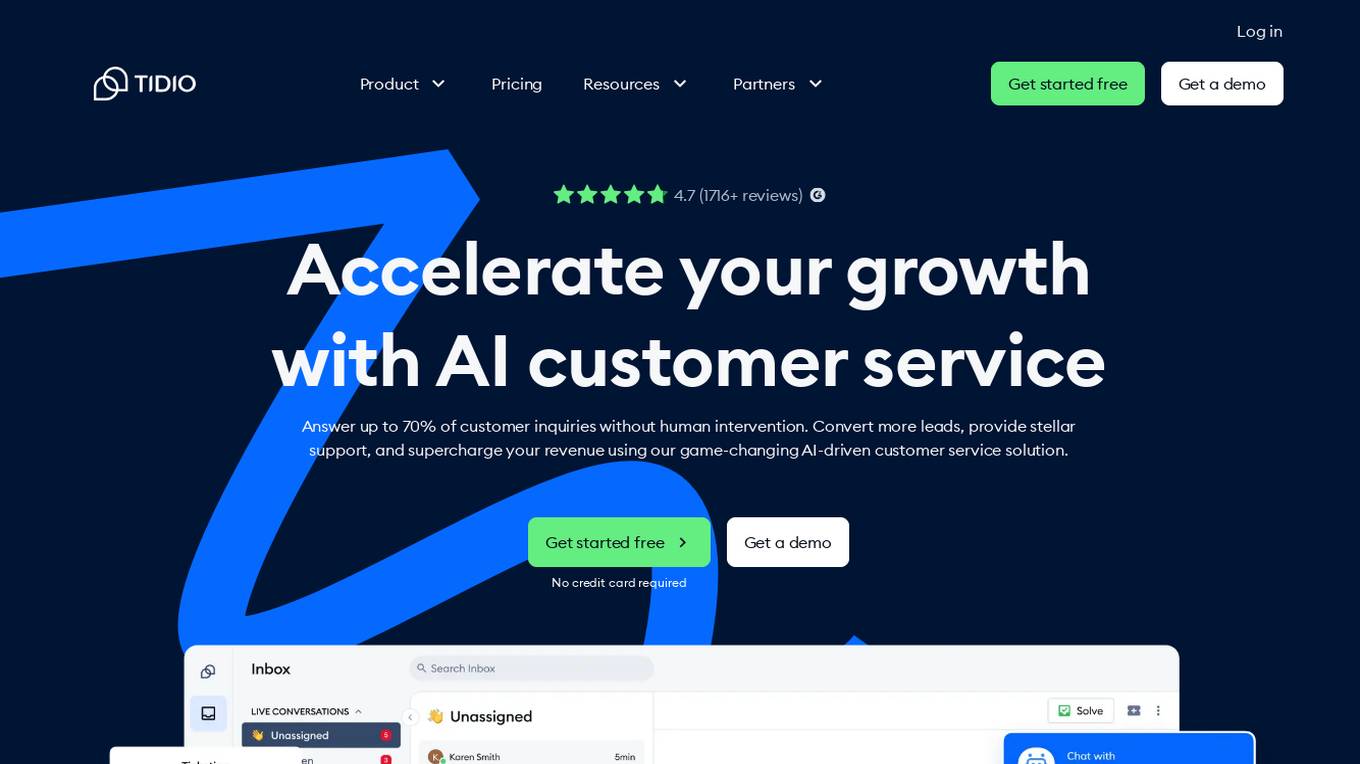

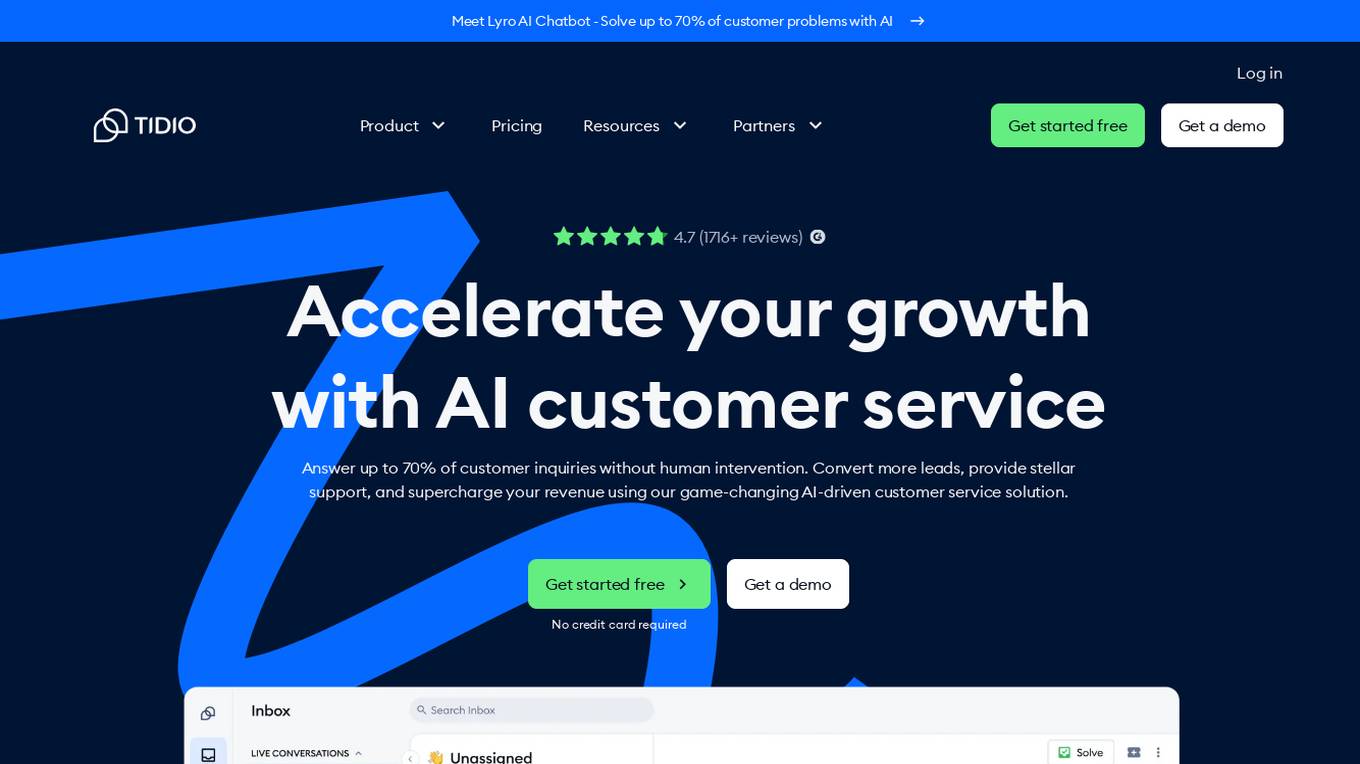

Tidio

Tidio is an AI-powered customer service solution that helps businesses automate support, convert more leads, and increase revenue. With Lyro AI Chatbot, businesses can answer up to 70% of customer inquiries without human intervention, freeing up support agents to focus on high-value requests. Tidio also offers live chat, helpdesk, and automation features to help businesses provide excellent customer support and grow their business.

Law Insider

Law Insider is an AI-powered platform for contract drafting, review, and redlining. With over 10,000 customers worldwide, Law Insider provides standard templates, public contracts, and resources for legal professionals. Users can search for sample contracts and clauses in the Law Insider database or generate original drafts with its AI Assistant. The platform also lets users review and redline contracts directly in Microsoft Word, checking them against industry standards and expert playbooks. Law Insider's features include AI-powered contract review and redlining, benchmarking against the Law Insider Index, a playbook builder, and upcoming AI clause drafting with trusted validation. Users can access the world's largest public contract database and watch tutorial videos to better understand the platform's capabilities.

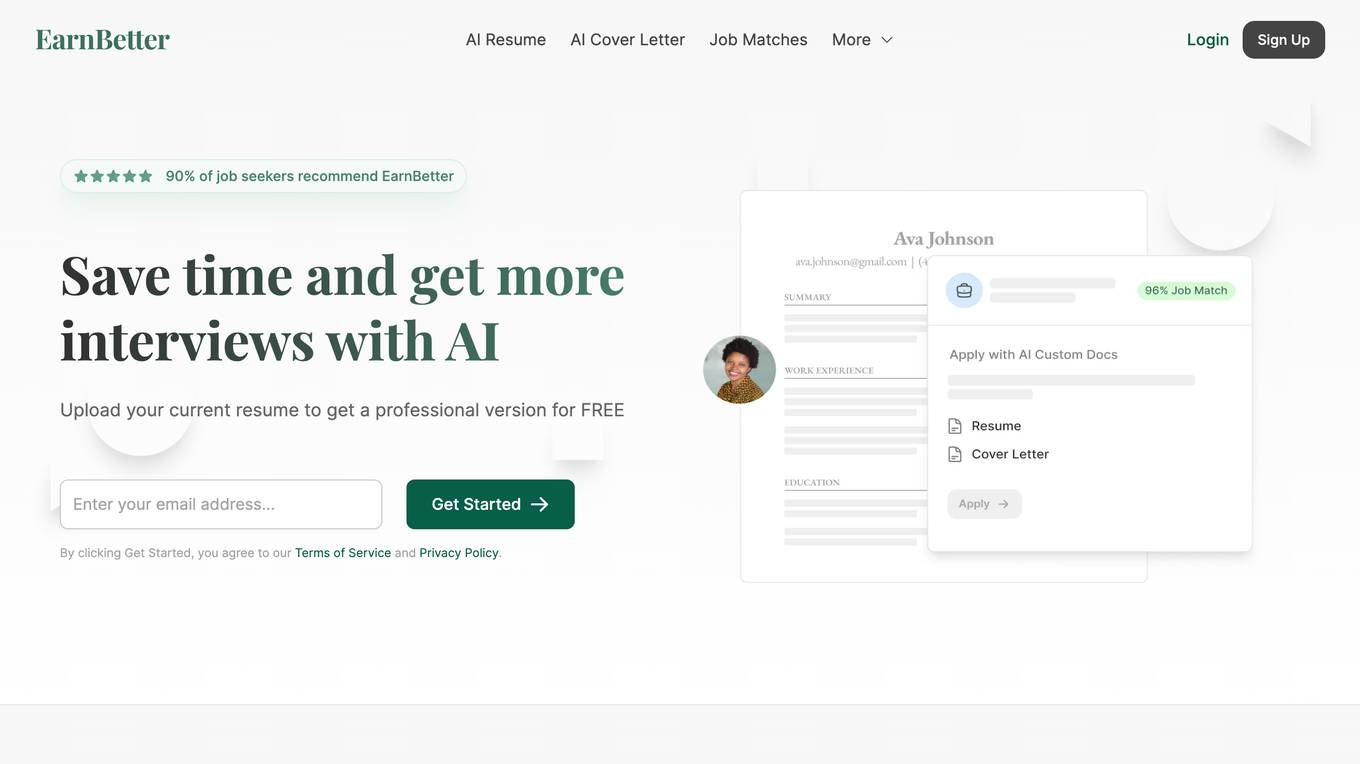

EarnBetter

EarnBetter is an AI-powered platform that offers assistance in creating professional resumes, cover letters, and job search support. The platform utilizes artificial intelligence to rewrite and reformat resumes, generate tailored cover letters, provide personalized job matches, and offer interview support. Users can upload their current resume to get started and access a range of features to enhance their job search process. EarnBetter aims to streamline the job search experience by providing free, unlimited, and professional document creation services.

HubSpot

HubSpot is an AI-powered platform that offers CRM, marketing, sales, customer service, and content management tools. It provides a unified platform optimized by AI, with features such as marketing automation, sales pipeline development, customer support, content creation, and data organization. HubSpot caters to businesses of all sizes, from startups to large enterprises, helping them generate leads, automate processes, and improve customer retention. The platform also offers a range of integrations and solutions tailored to different business needs.

1 - Open Source AI Tools

MInference

MInference is a tool designed to accelerate pre-filling for long-context large language models (LLMs) by leveraging dynamic sparse attention. It achieves up to a 10x speedup for pre-filling on an A100 while maintaining accuracy. The tool supports various decoding LLMs, including LLaMA-style models and Phi models, and provides custom kernels for attention computation. MInference is useful for researchers and developers working with large-scale language models who want to improve efficiency without compromising accuracy.

7 - OpenAI GPTs

Material Tailwind GPT

Accelerate web app development with Material Tailwind GPT's components - 10x faster.

Tourist Language Accelerator

Accelerates the learning of key phrases and cultural norms for travelers in various languages.

Digital Entrepreneurship Accelerator Coach

The Go-To Coach for Aspiring Digital Entrepreneurs, Innovators, & Startups. Learn More at UnderdogInnovationInc.com.

24 Hour Startup Accelerator

A niche-focused, humorous, and strategic startup guide that simplifies ideas.

Backloger.ai - Product MVP Accelerator

Drop in any requirements or any text; I'll help you create an MVP with insights.

Digital Boost Lab

A guide for developing university-focused digital startup accelerator programs.