Best AI tools for Generate Inferences

20 - AI tool Sites

Tensoic AI

Tensoic AI is an AI tool for fine-tuning and running inference on custom large language models (LLMs). It offers ultra-fast fine-tuning and inference for enterprise-grade LLMs, with a focus on use case-specific tasks. The tool is efficient, cost-effective, and easy to use, and it uses synthetic data to help task-specific models outperform general-purpose LLMs. Tensoic AI generates small, powerful models that can run on consumer-grade hardware, which suits a wide range of applications.

Salad

Salad is a distributed GPU cloud platform that offers fully managed, massively scalable services for AI applications. It advertises the lowest-priced AI transcription on the market and supports workloads such as image generation, voice AI, computer vision, data collection, and batch processing. Salad democratizes cloud computing by using consumer GPUs to deliver cost-effective AI/ML inference at scale. The platform is trusted by hundreds of machine learning and data science teams for its affordability, scalability, and ease of deployment.

ONNX Runtime

ONNX Runtime is a production-grade AI engine designed to accelerate machine learning training and inferencing in various technology stacks. It supports multiple languages and platforms, optimizing performance for CPU, GPU, and NPU hardware. ONNX Runtime powers AI in Microsoft products and is widely used in cloud, edge, web, and mobile applications. It also enables large model training and on-device training, offering state-of-the-art models for tasks like image synthesis and text generation.

Modular

Modular is a fast, scalable Gen AI inference platform that offers a comprehensive suite of tools and resources for AI development and deployment. It provides solutions for AI model development, deployment options, AI inference, research, and resources like documentation, models, tutorials, and step-by-step guides. Modular supports GPU and CPU performance, intelligent scaling to any cluster, and offers deployment options for various editions. The platform enables users to build agent workflows, utilize AI retrieval and controlled generation, develop chatbots, engage in code generation, and improve resource utilization through batch processing.

fal

fal is an AI platform that offers cutting-edge AI models and tools for image and video generation, editing, and audio processing. It partners with leading AI companies to bring state-of-the-art technology to its users, enabling them to create stunning visual and audio content with ease. fal is at the forefront of the AI-driven media creation revolution, providing developers and creators with advanced tools to push the boundaries of creativity.

Outspeed

Outspeed is a platform for real-time voice and video AI applications, providing the networking and inference infrastructure needed to build them. It offers tools for use cases across industries, including Voice AI, Streaming Avatars, Visual Intelligence, and Meeting Copilot, as well as the ability to build custom multimodal AI solutions. Outspeed is designed by engineers from Google and MIT and offers robust streaming infrastructure, low-latency inference, instant deployment, and enterprise-ready compliance with standards and regulations such as SOC 2, GDPR, and HIPAA.

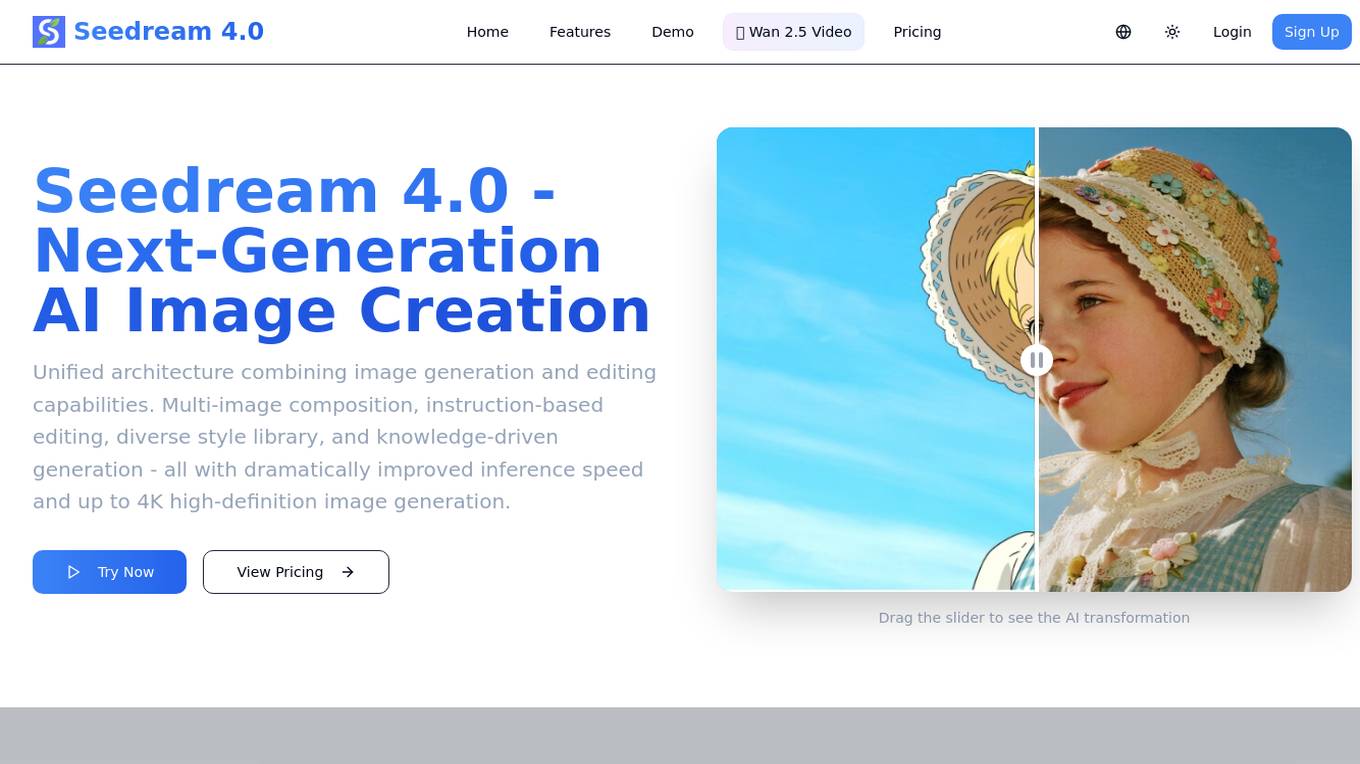

Seedream 4.0

Seedream 4.0 is an AI image generator and editor that offers a unified architecture combining image generation and editing capabilities. It provides features such as multi-image composition, instruction-based editing, diverse style library, and knowledge-driven generation. Users can experience the next generation of AI image creation with dramatically improved inference speed and up to 4K high-definition image generation.

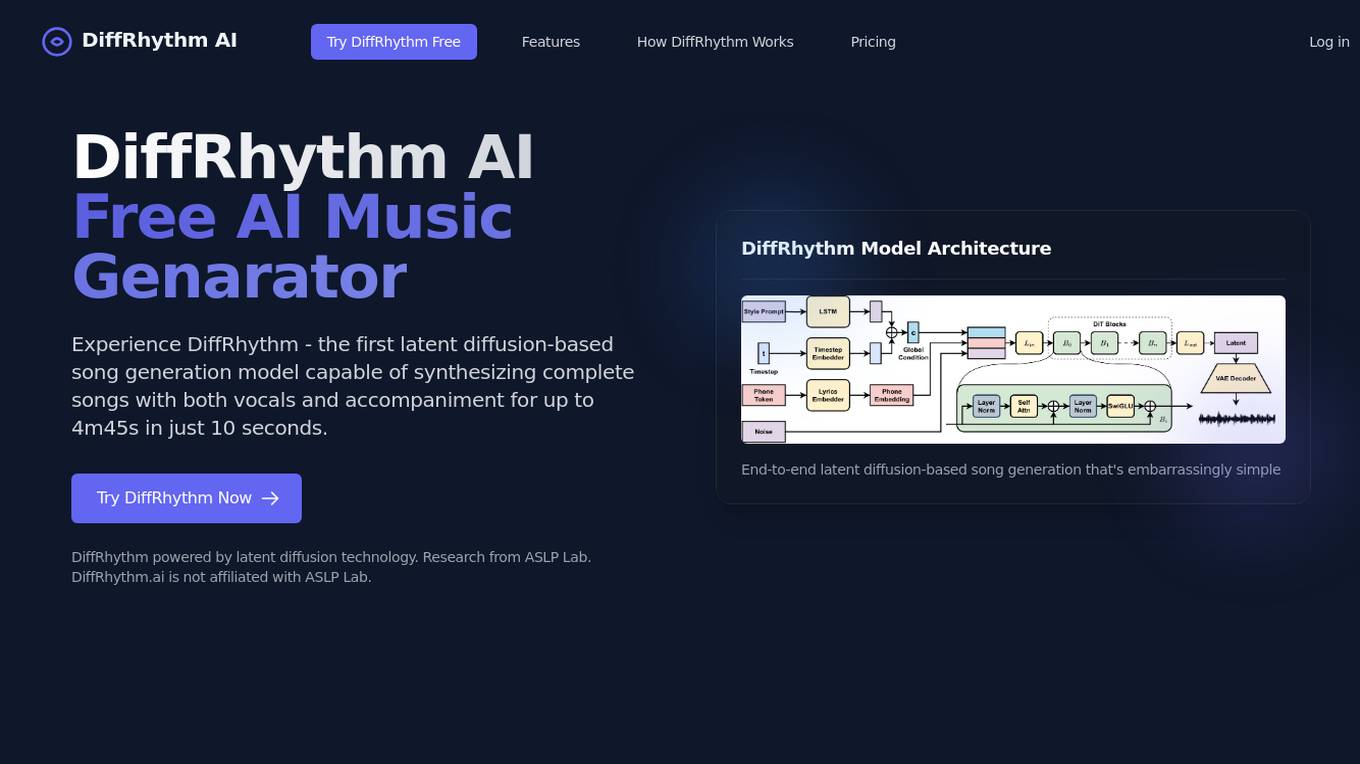

DiffRhythm

DiffRhythm is a free AI music generator that utilizes latent diffusion technology to create complete songs with vocals and accompaniment in just seconds. It offers a simple yet powerful approach to music generation, allowing users to input lyrics and a style prompt to generate professional-sounding music across various genres. With a non-autoregressive structure, DiffRhythm ensures rapid inference speeds and high musicality. The application is scalable, easy to use, and suitable for musicians, creators, and businesses looking to bring their lyrics to life.

Together AI

Together AI is an AI tool that offers a variety of generative AI services, including serverless models, fine-tuning, dedicated endpoints, and GPU clusters. Users can run or fine-tune leading open-source models with only a few lines of code. The platform covers tasks such as chat, vision, text-to-speech, code, language, reranking, and more. Together AI aims to simplify the use of AI models across applications.

Awan LLM

Awan LLM is an LLM inference API platform for power users and developers that is positioned as unlimited, unrestricted, and cost-effective. Users can generate unlimited tokens, use LLM models without constraints, and pay per month instead of per token. The platform supports use cases such as an AI assistant, AI agents, roleplay with AI companions, data processing, code completion, and building profitable AI-powered applications.

vLLM

vLLM is a fast and easy-to-use library for LLM inference and serving. It offers state-of-the-art serving throughput, efficient management of attention key and value memory, continuous batching of incoming requests, fast model execution with CUDA/HIP graphs, and various decoding algorithms. It is also flexible, with seamless integration with popular Hugging Face models, tensor parallelism support, and streaming outputs. It supports NVIDIA and AMD GPUs, prefix caching, and multi-LoRA. vLLM is designed to provide fast and efficient LLM serving for everyone.
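To make "continuous batching of incoming requests" concrete, here is a toy scheduler simulation in plain Python. It is only a sketch of the general idea, not vLLM's implementation: the `Request` class, the `continuous_batching` function, and the demo workload are all hypothetical names and values introduced for illustration. The point it shows is that a free batch slot is refilled after every decode step rather than after the whole batch finishes.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Request:
    # Hypothetical request record, for illustration only.
    name: str
    tokens_left: int  # decode steps this request still needs (must be > 0)


def continuous_batching(requests, max_batch_size):
    """Simulate a scheduler that refills free batch slots after every step.

    Returns a list of (step, finished_request_name) pairs.
    """
    waiting = deque(requests)
    running = []
    finished = []
    step = 0
    while waiting or running:
        # Admit waiting requests as soon as a slot is free, instead of
        # waiting for the whole batch to drain.
        while waiting and len(running) < max_batch_size:
            running.append(waiting.popleft())
        step += 1
        # One decode step produces one token for every running request.
        for req in running:
            req.tokens_left -= 1
        # Record requests that just completed, then free their slots.
        for req in running:
            if req.tokens_left == 0:
                finished.append((step, req.name))
        running = [req for req in running if req.tokens_left > 0]
    return finished


if __name__ == "__main__":
    demo = [Request("a", 2), Request("b", 5), Request("c", 1), Request("d", 2)]
    for step, name in continuous_batching(demo, max_batch_size=2):
        print(f"step {step}: finished {name}")
```

With this demo workload and a batch size of 2, all four requests finish within 5 steps. A static batcher that waits for each pair to drain before starting the next would need 7 steps (5 for the first pair, 2 for the second).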

pplx-api

pplx-api is an API for fast LLM inference, backed by documentation and examples. Its reference material helps developers integrate AI capabilities into their applications efficiently, with a focus on natural language processing tasks that use advanced models. Users can access detailed guides, API references, and changelogs, and take part in discussions about AI technologies.

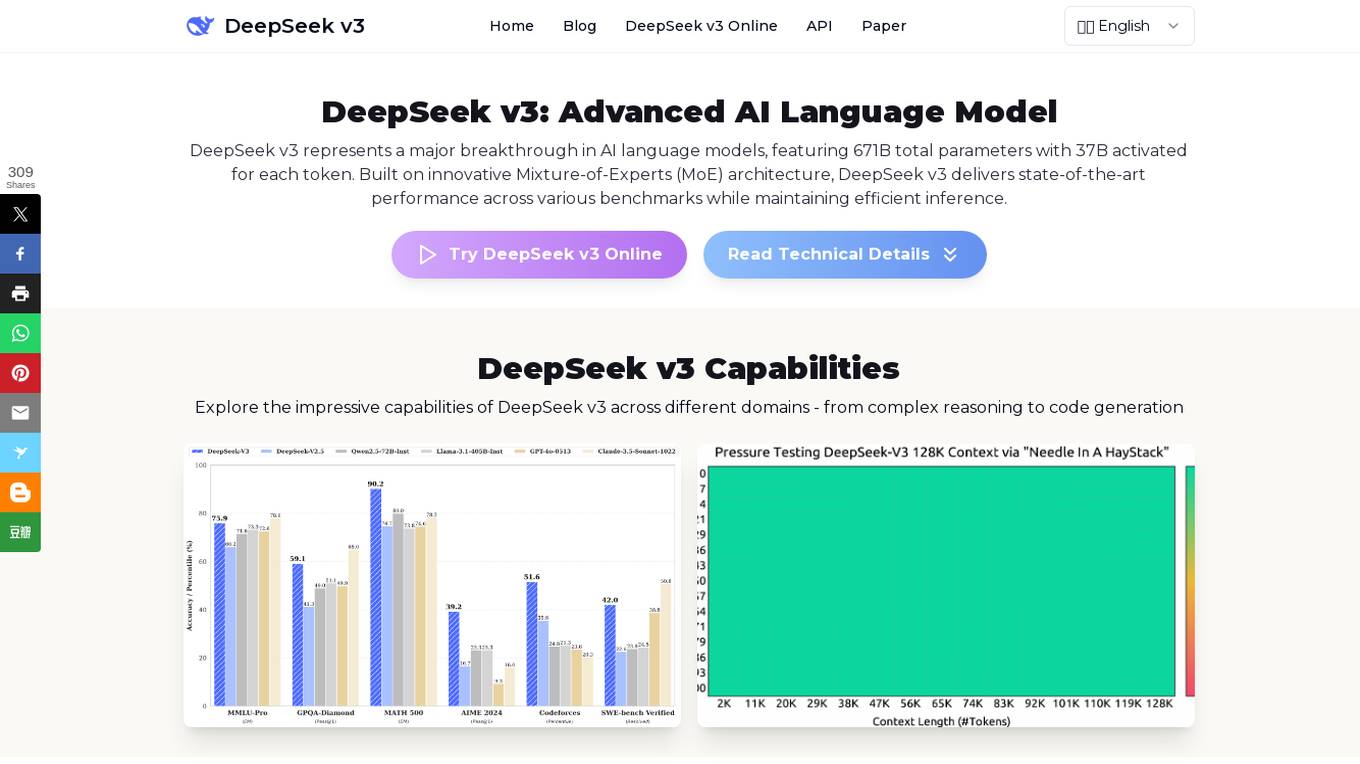

DeepSeek v3

DeepSeek v3 is an advanced AI language model built on a Mixture-of-Experts (MoE) architecture with 671B total parameters. It delivers state-of-the-art performance across various benchmarks while maintaining efficient inference. DeepSeek v3 is pre-trained on 14.8 trillion high-quality tokens and excels at tasks such as text generation, code completion, and mathematical reasoning. It also offers a 128K context window and Multi-Token Prediction.
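A Mixture-of-Experts model can have a very large total parameter count and still be efficient at inference because each token is routed to only a few experts. The sketch below illustrates generic top-k routing in plain Python. It is not DeepSeek v3's actual router: the functions `softmax`, `route_top_k`, and `moe_layer`, the toy experts, and the router scores are all assumptions made for illustration.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_scores, k):
    """Pick the k highest-scoring experts and renormalize their weights."""
    probs = softmax(router_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return {i: probs[i] / norm for i in top}


def moe_layer(x, experts, router_scores, k):
    """Weighted sum of the outputs of only the selected experts.

    Experts that are not selected are never called, which is where the
    inference savings come from.
    """
    weights = route_top_k(router_scores, k)
    return sum(w * experts[i](x) for i, w in weights.items())


if __name__ == "__main__":
    # Four toy "experts"; a real model would use neural sub-networks.
    experts = [lambda x: x + 1, lambda x: 2 * x, lambda x: x * x, lambda x: -x]
    scores = [0.1, 2.0, 0.3, 2.0]  # hypothetical router scores for one token
    print(route_top_k(scores, k=2))
    print(moe_layer(3.0, experts, scores, k=2))
```

Here only 2 of the 4 experts run for the token, so the compute per token scales with the number of selected experts rather than with the total number of experts.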

AIMLAPI.com

AIMLAPI.com is an AI tool that provides access to over 200 AI models through a single AI API. It offers a wide range of AI features for tasks such as chat, code, image generation, music generation, video, voice embedding, language, genomic models, and 3D generation. The platform ensures fast inference, top-tier serverless infrastructure, high data security, 99% uptime, and 24/7 support. Users can integrate AI features easily into their products and test API models in a sandbox environment before deployment.

TextSynth

TextSynth is an AI tool that provides access to large language models such as Mistral and Llama, along with text-to-image, text-to-speech, and speech-to-text models such as Stable Diffusion and Whisper, via a REST API and a playground. It employs custom inference code for faster inference on standard GPUs and CPUs. Founded in 2020, TextSynth was among the first to offer access to the GPT-2 language model. The service is free with rate limitations, and users can opt for custom support at a small cost per request. All servers are located in France.

Tavus

Tavus is an AI tool that offers digital twin APIs for video generation and conversational video interfaces. It allows users to create immersive AI-generated video experiences using cutting-edge AI technology. Tavus provides best-in-class models like Phoenix-2 for creating realistic digital replicas with natural face movements. The platform offers rapid training, instant inference, support for 30+ languages, and built-in security features to ensure user privacy and safety. Tavus is preferred by developers and product teams for its developer-first approach, ease of integration, and exceptional customer service.

Stable Fast 3D

Stable Fast 3D is a cutting-edge tool that rapidly generates high-quality 3D assets from a single 2D image in just 0.5 seconds. It offers features such as high-quality UV unwrapped mesh, material parameters, albedo colors with reduced illumination bake-in, and optional quad or triangle remeshing. The tool is versatile and can be used by game developers, virtual reality professionals, architects, designers, and others in graphic-intensive fields. Stable Fast 3D revolutionizes workflows by providing fast inference speeds and enhanced capabilities, making it a valuable asset for various industries.

ZeroBot

ZeroBot is the internet's leading voice-enabled chatbot. It allows users to have conversations with AI agents that are tailored to their specific needs. ZeroBot is powered by the Groq LPU™ Inference Engine, which provides instant and smooth chat experiences. With ZeroBot, users can create and speak with AI agents anywhere, anytime.

Nesa Playground

Nesa is a global blockchain network that brings AI on-chain, allowing applications and protocols to seamlessly integrate with AI. It offers secure execution for critical inference, a private AI network, and a global AI model repository. Nesa supports various AI models for tasks like text classification, content summarization, image generation, language translation, and more. The platform is backed by a team with extensive experience in AI and deep learning, with numerous awards and recognitions in the field.

MonsterImage.AI

MonsterImage.AI is an AI-powered tool for creating pattern images. Users sign in to the platform and receive a login link by email. They can write a prompt describing the image they want, select a pattern, specify negative prompts, set a seed for reproducibility, and adjust the guidance scale, ControlNet conditioning scale, and number of inference steps. The tool offers advanced options for creating images and lets users save their creations in a public collection.

0 - Open Source AI Tools

20 - OpenAI Gpts

Neo Pop Art

Generates mixed media collage art with Dadaism, pop art, and street influences.

Angular Architect AI: Generate Angular Components

Generates Angular components based on requirements, with a focus on code-first responses.

🖌️ Line to Image: Generate The Evolved Prompt!

Transforms lines into detailed prompts for visual storytelling.

Generate text imperceptible to detectors.

Discover how your writing can shine with a unique and human style. This prompt guides you to create rich and varied texts, surprising with original twists and maintaining coherence and originality. Transform your writing and challenge AI detection tools!

Fantasy Banter Bot - Special Teams

I generate witty trash talk for fantasy football leagues.

Product StoryBoard Director

Helps you generate script keyframes. For a better experience, visit museclip.ai.

Visual Storyteller

Extracts the key scenes of a novel according to the requested number of images and generates the corresponding images. The images can be used directly to create novel videos. Images for novel promotion posts are batch-generated automatically, with a consistent style across images.

CodeGPT

This GPT can generate code for you. For now, it creates full-stack apps using TypeScript. Just describe the feature you want, and you will get a link to the GitHub pull request and the deployed live app.