Best AI tools for < Assassin >


9 - AI tool Sites

RebeccAi

RebeccAi is an AI-powered tool for evaluating and validating business ideas. It provides insights into an idea's potential and helps users refine and improve it quickly, acting as a one-person team for business dreamers. The platform draws on current AI tools to help turn ideas into reality, from business concepts to creative projects.

Studious Score AI

Studious Score AI is an AI-powered platform that offers knowledge and skill evaluation services supported by reputable individuals and organizations. It aims to offer a new approach to credentialing and to establish itself as the global benchmark for assessing skills and knowledge in various aspects of life. Users can explore different categories and evaluate themselves through the platform's assessment methods.

Gemmo AI

Gemmo AI is a boutique AI firm that specializes in building bespoke AI Agents blended with human creativity, insight, and judgment. They offer services such as AI Pathfinder for assessing opportunities, AI Implementation for deployment, and AI Optimization for performance improvement. Gemmo AI focuses on agility, value, and delivering fast, measurable results to help businesses integrate advanced AI technologies seamlessly into their workflows.

Future AGI

Future AGI is an AI data management platform that aims for 99% accuracy in AI applications across software and hardware. It gives enterprises an evaluation and optimization platform for improving the performance of their AI models. Listed features include generating and managing diverse synthetic datasets, testing and analyzing agentic workflow configurations, assessing agent performance, improving LLM application performance, monitoring and protecting applications in production, and evaluating AI across different modalities. The platform also claims 10x faster processing and emphasizes trustworthy, accurate, and responsible AI.

Modality.AI

Modality.AI offers an automated, clinically validated system for assessing neurological and psychiatric states both in clinic and remotely. The platform uses conversational AI to monitor conditions accurately and consistently, allowing researchers and clinicians to review data in near real-time and track treatment response over time. Modality.AI collaborates with AI and machine learning experts and leading institutions to provide a HIPAA-compliant system for assessing indications such as ALS, Parkinson's, depression, autism, Huntington's Disease, schizophrenia, and mild cognitive impairment. Patients can be monitored at home through streaming and analysis of speech and facial responses, without special software or apps. The system runs on any device with a browser, webcam, and microphone, and is positioned as a way to make clinical trials more efficient and cost-effective.

Data & Trust Alliance

The Data & Trust Alliance is a group of industry-leading enterprises focusing on the responsible use of data and intelligent systems. It develops practices to enhance trust in data and AI models, ensuring transparency and reliability in deployment processes. The alliance works on projects such as Data Provenance Standards and assessing third-party model trustworthiness to promote innovation and trust in AI applications. By drawing on its members' expertise and influence, it aims to produce practical solutions that can be adopted broadly across industries.

JobMojito

JobMojito is an AI Interview Platform that offers real-time avatar and voice interviews for job candidates. It provides interview coaching, job preparation, and support in English. The platform allows users to screen, evaluate, and select top talent using an AI Avatar that converses with candidates in real-time. JobMojito offers a comprehensive solution for managing the entire interview process, including preparation, conducting interviews with the avatar, providing instant feedback, and assessing candidates using AI technology. The platform is designed to attract new talent and streamline the recruitment process for organizations.

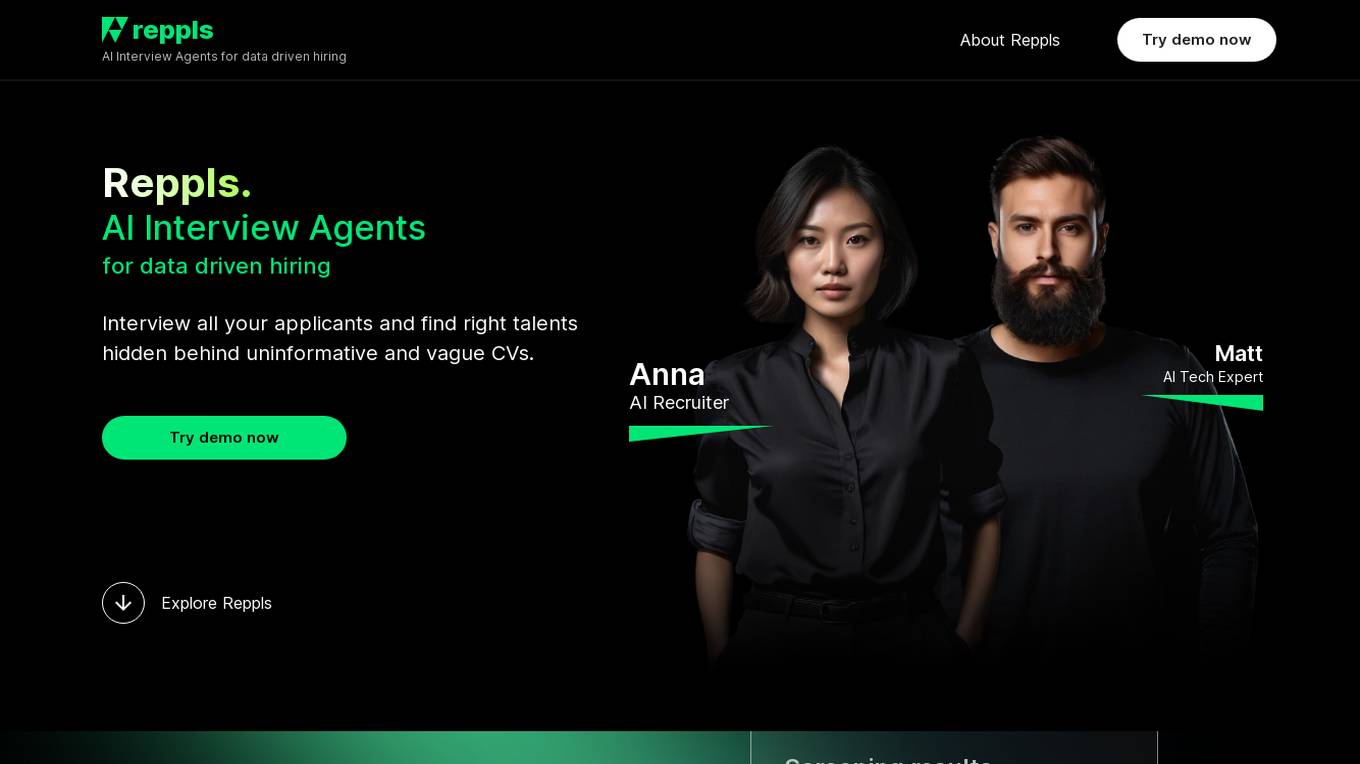

Reppls

Reppls is an AI interview agent tool designed for data-driven hiring. It helps companies interview all applicants to identify the right talent hidden behind uninformative CVs. The tool integrates with everyday tools such as Zoom and MS Teams and provides deep technical assessments in the early stages of hiring, allowing HR specialists to focus on evaluating soft skills. Reppls aims to reduce the time spent on screening, interviewing, and assessing candidates.

Fifth Dimension AI

Fifth Dimension AI is an AI application designed for real estate marketing. Ellie AI, its AI-powered assistant, helps real estate professionals with tasks such as content creation, optimization, drafting briefs, assessing pitches, ensuring brand alignment, and generating content ideas. The application aims to streamline marketing processes, strengthen brand messaging, and help professionals stand out in a competitive market.

0 - Open Source Tools

9 - OpenAI GPTs

Road to Ninja Master

A Ninja Master chatbot, nurturing users into the way of the ninja with extensive knowledge.

Global Network Assessment

Diagnostic tool for assessing global connectedness in business leaders.

Are You Weather Dependent or Not?

A mental health self-check tool assessing weather dependency. Powered by WeatherMind.

Legal Tax Minimizer

Interactive questionnaire-based guide for assessing tax residency, liability, and legal minimization strategies.

Lupus Kidney Assistant

Interprets lupus nephritis cases, assessing remission status and recommending treatments.

Accommodate

Interactive advisor for crafting equitable workplace accommodations and assessing accessibility.

IQ Test Assistant

An AI that conducts 30-question IQ tests, assesses the results, and provides detailed feedback.

OKR Coach

AI OKR Coach is a tool designed to assist users in creating and assessing OKRs (Objectives and Key Results). It provides a structured and flexible approach to OKR setting and evaluation.