Best AI tools for AI Inference Specialist

20 - AI tool Sites

FuriosaAI

FuriosaAI is an AI application that offers RNGD hardware for LLM and multimodal workloads, as well as WARBOY for computer vision. It provides a comprehensive developer experience through the Furiosa SDK, Model Zoo, and Dev Support. The application focuses on efficient AI inference, high-performance LLM and multimodal deployment, and sustainable mass adoption of AI. FuriosaAI features the Tensor Contraction Processor architecture, software for streamlined LLM deployment, and robust ecosystem support. It aims to deliver powerful and efficient deep learning acceleration while ensuring future-proof programmability and efficiency.

Wallaroo.AI

Wallaroo.AI is an AI inference platform that offers production-grade AI inference microservices optimized on OpenVINO for cloud and Edge AI application deployments on CPUs and GPUs. It provides hassle-free AI inferencing for any model, any hardware, anywhere, with ultrafast turnkey inference microservices. The platform enables users to deploy, manage, observe, and scale AI models effortlessly, reducing deployment costs and time-to-value significantly.

NeuReality

NeuReality is an AI-centric solution designed to democratize AI adoption by providing purpose-built tools for deploying and scaling inference workflows. Their innovative AI-centric architecture combines hardware and software components to optimize performance and scalability. The platform offers a one-stop shop for AI inference, addressing barriers to AI adoption and streamlining computational processes. NeuReality's tools enable users to deploy, afford, use, and manage AI more efficiently, making AI easy and accessible for a wide range of applications.

VeroCloud

VeroCloud is a platform offering tailored solutions for AI, HPC, and scalable growth. It provides cost-effective cloud solutions with guaranteed uptime, performance efficiency, and cost-saving models. Users can deploy HPC workloads seamlessly, configure environments as needed, and access optimized environments for GPU Cloud, HPC Compute, and Tally on Cloud. VeroCloud supports globally distributed endpoints, public and private image repos, and deployment of containers on secure cloud. The platform also allows users to create and customize templates for seamless deployment across computing resources.

Lambda

Lambda is a superintelligence cloud platform that offers on-demand GPU clusters for multi-node training and fine-tuning, private large-scale GPU clusters, seamless management and scaling of AI workloads, inference endpoints and API, and a privacy-first chat app with open source models. It also provides NVIDIA's latest generation infrastructure for enterprise AI. With Lambda, AI teams can access gigawatt-scale AI factories for training and inference, deploy GPU instances, and leverage the latest NVIDIA GPUs for high-performance computing.

Cerebras

Cerebras is a leading AI tool and application provider that offers cutting-edge AI supercomputers, model services, and cloud solutions for various industries. The platform specializes in high-performance computing, large language models, and AI model training, catering to sectors such as health, energy, government, and financial services. Cerebras empowers developers and researchers with access to advanced AI models, open-source resources, and innovative hardware and software development kits.

Luxonis

Luxonis is an AI application that offers Visual AI solutions engineered for precision edge inference. The application provides stereo depth cameras with unique features and quality, enabling users to perform advanced vision tasks on-device, reducing latency and bandwidth demands. With open-source DepthAI API, users can create and deploy custom vision solutions that scale with their needs. Luxonis also offers real-world training data for self-improving vision intelligence and operates flawlessly through vibrations, temperature shifts, and extended use. The application integrates advanced sensing capabilities with up to 48MP cameras, wide field of view, IMUs, microphones, ToF, thermal, IR illumination, and active stereo for unparalleled perception.

Rebellions

Rebellions is an AI technology company specializing in AI chips and systems-on-chip for various applications. They focus on energy-efficient solutions and have secured significant investments to drive innovation in the field of Generative AI. Rebellions aims to reshape the future by providing versatile and efficient AI computing solutions.

Cast AI

Cast AI is an intelligent Kubernetes automation platform that offers live migration for AWS EKS, enabling users to migrate stateful workloads with zero downtime. The platform provides application performance automation by automating and optimizing the entire application stack, including Kubernetes cluster optimization, security, workload optimization, LLM optimization for AIOps, cost monitoring, and database optimization. Cast AI integrates with various cloud services and tools, offering solutions for migration of stateful workloads, inference at scale, and cutting AI costs without sacrificing scale. The platform helps users improve performance, reduce costs, and boost productivity through end-to-end application performance automation.

pplx-api

The pplx-api is an AI tool designed to provide documentation and examples for blazingly fast LLM inference. It offers a reference for developers to integrate AI capabilities into their applications efficiently. The tool focuses on enhancing natural language processing tasks by leveraging advanced models and algorithms. Users can access detailed guides, API references, changelogs, and engage in discussions related to AI technologies.

Cambricon

Cambricon is an AI technology company that specializes in developing intelligent acceleration cards and systems. They offer a range of products including cloud AI acceleration cards, edge AI chips, and intelligent processing units. Cambricon's advanced chiplet technology and MLUarch03 architecture provide high-performance AI solutions for training and inference tasks. The company is dedicated to advancing the AI industry through innovative hardware and software platforms.

Cursor

Cursor is an AI-powered coding assistance tool designed to make users extraordinarily productive in software development. It offers features such as intelligent code navigation and fast inference optimization. Cursor is trusted by top companies and developers worldwide to accelerate development securely and at scale.

Viral Magic

Viral Magic is an AI-powered influencer agency that leverages advanced algorithms to match brands with the most suitable influencers for their marketing campaigns. The platform streamlines the influencer marketing process by providing data-driven insights and analytics to optimize campaign performance. With a vast network of influencers across various industries, Viral Magic helps brands reach their target audience effectively and efficiently.

Cerebras

Cerebras is an AI tool that offers products and services related to AI supercomputers, cloud system processors, and applications for various industries. It provides high-performance computing solutions, including large language models, and caters to sectors such as health, energy, government, scientific computing, and financial services. Cerebras specializes in AI model services, offering state-of-the-art models and training services for tasks like multi-lingual chatbots and DNA sequence prediction. The platform also features the Cerebras Model Zoo, an open-source repository of AI models for developers and researchers.

vHub.ai

vHub.ai is an AI-powered influencer marketing SaaS platform that offers a comprehensive suite of tools to streamline influencer marketing campaigns. With features like in-depth influencer insights, authenticity assurance, instant campaign analytics, effortless influencer coordination, and tailor-made campaign strategies, vHub.ai aims to revolutionize the way brands collaborate with influencers. The platform boasts a database of 5 million influencers and hundreds of influencer marketing agencies, helping users find a suitable influencer at optimal pricing. By leveraging AI search engine capabilities and VQS (vHub Quality Score), vHub.ai aims to ensure genuine connections and successful influencer partnerships. Users can track campaign performance in real time, manage all types of campaigns, and customize strategies with precision. With a focus on data-driven influencer discovery, authentic campaigns, custom success campaigns, ROI-driven marketing, and streamlined campaign management, vHub.ai helps brands maximize their influencer marketing ROI and reach.

Impulze.ai

Impulze.ai is an Influencer Analytics Platform designed to help users discover and manage influencers from a vast global database covering Instagram, TikTok, and YouTube. The platform offers AI algorithms that effectively identify the right influencers, allowing users to effortlessly filter influencers based on various criteria such as engagement rate, follower count, location, age, and gender. Additionally, Impulze.ai provides audience analytics, fraud detection, and data-driven matching to help users find the perfect match for their brand. With features like real-time performance metrics, audience filters, and campaign tracking, Impulze.ai streamlines influencer marketing strategies for agencies and marketers, saving time and enhancing brand awareness.

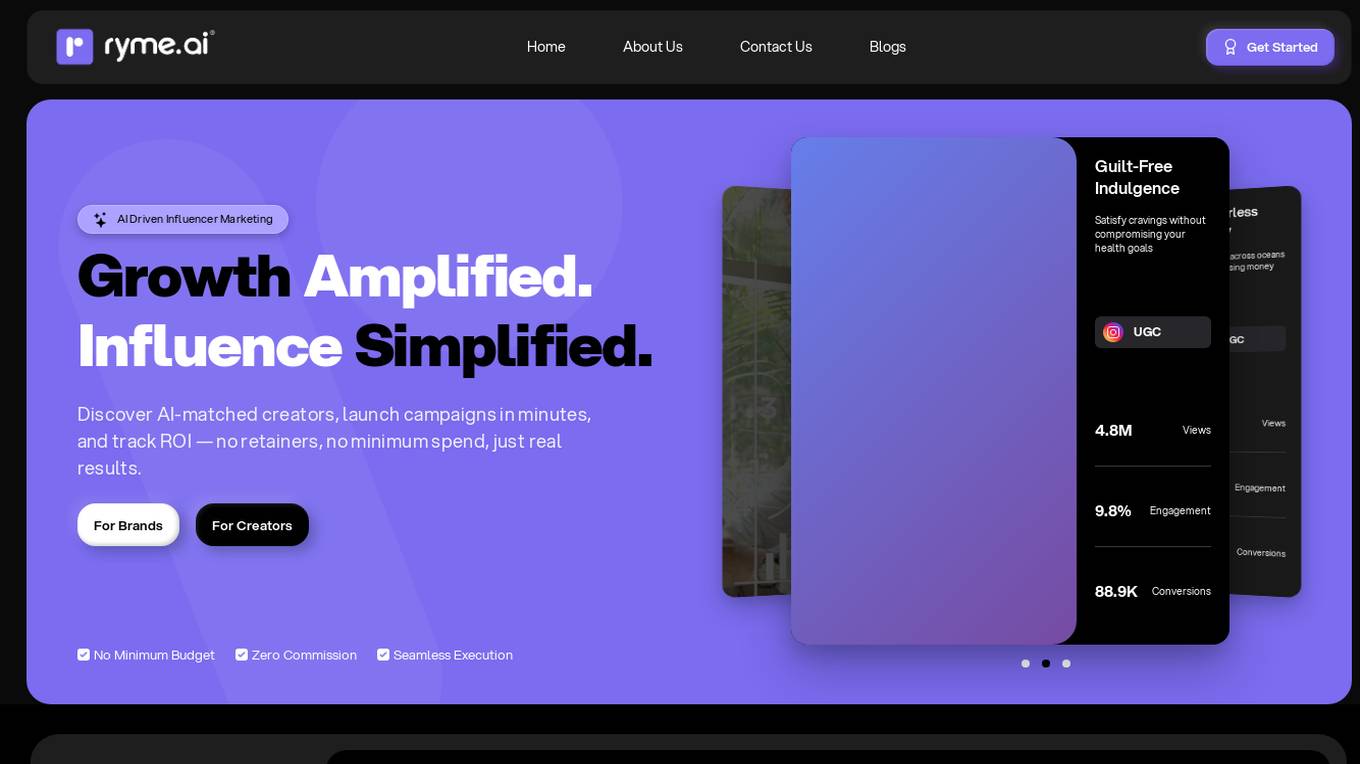

ryme.ai

ryme.ai is an AI-driven influencer marketing platform based in India. It simplifies the process of influencer marketing by providing a platform to discover AI-matched creators, launch campaigns quickly, and track ROI efficiently. The platform offers features such as AI-powered creator discovery, streamlined workflow for content approval, data-driven insights for performance tracking, and the ability to run campaigns with full cost control. ryme.ai aims to empower brands and creators to connect through innovative influencer marketing solutions, ultimately transforming marketing strategies and achieving impactful results.

Lamini

Lamini is an enterprise-level LLM platform that offers precise recall with Memory Tuning, enabling teams to achieve over 95% accuracy even with large amounts of specific data. It guarantees JSON output and delivers massive throughput for inference. Lamini is designed to be deployed anywhere, including air-gapped environments, and supports training and inference on NVIDIA or AMD GPUs. The platform is known for its factual LLMs and a reengineered decoder that ensures 100% schema accuracy in the JSON output.

MySocial.ai

MySocial.ai is an AI-powered tool designed to help users optimize their digital brand on LinkedIn. It offers features such as curated content suggestions, AI copywriting assistance, post scheduling, and personalized content recommendations. By leveraging AI technology, MySocial.ai aims to assist users in expanding their reach, maximizing influence, and enhancing engagement on the platform.

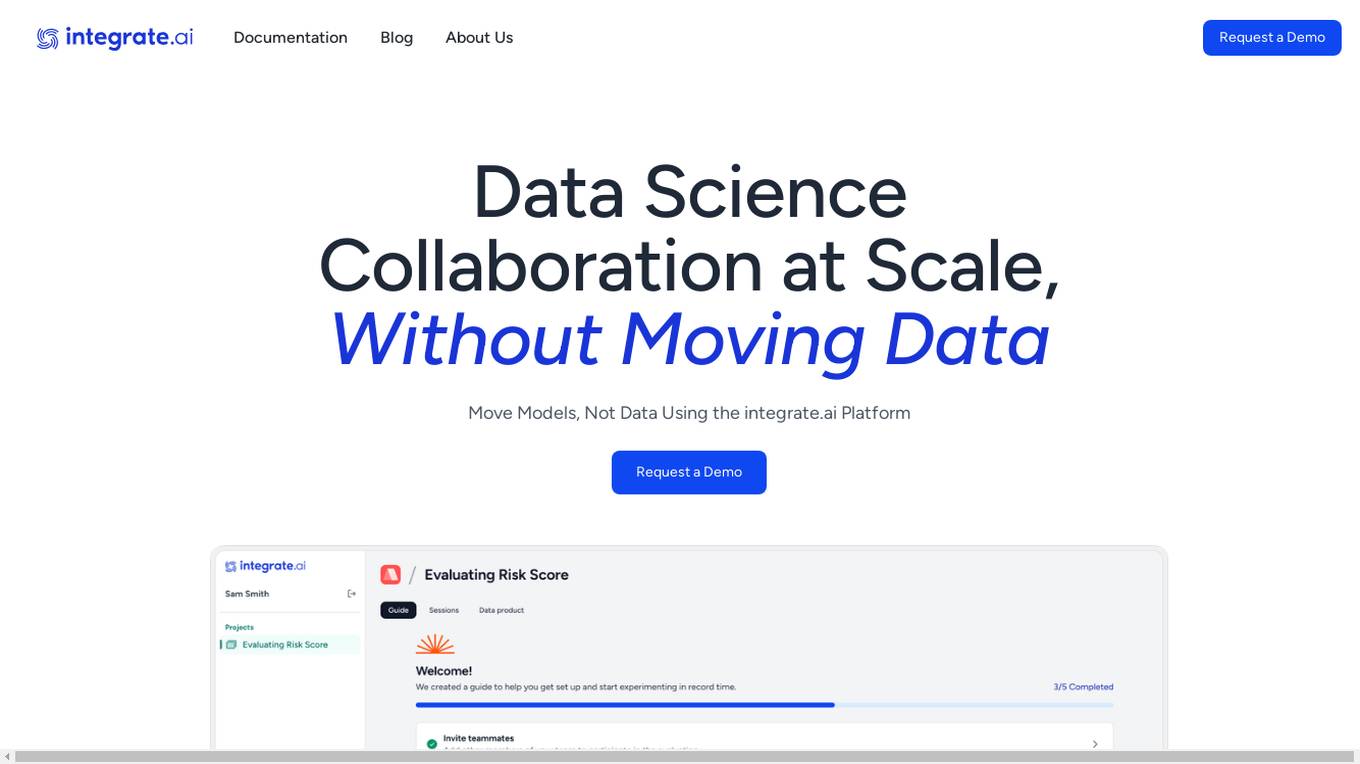

integrate.ai

integrate.ai is a platform that enables data and analytics providers to collaborate easily with enterprise data science teams without moving data. Powered by federated learning technology, the platform allows for efficient proof of concepts, data experimentation, infrastructure agnostic evaluations, collaborative data evaluations, and data governance controls. It supports various data science jobs such as match rate analysis, exploratory data analysis, correlation analysis, model performance analysis, feature importance & data influence, and model validation. The platform integrates with popular data science tools like Azure, Jupyter, Databricks, AWS, GCP, Snowflake, Pandas, PyTorch, MLflow, and scikit-learn.

1 - Open Source Tools

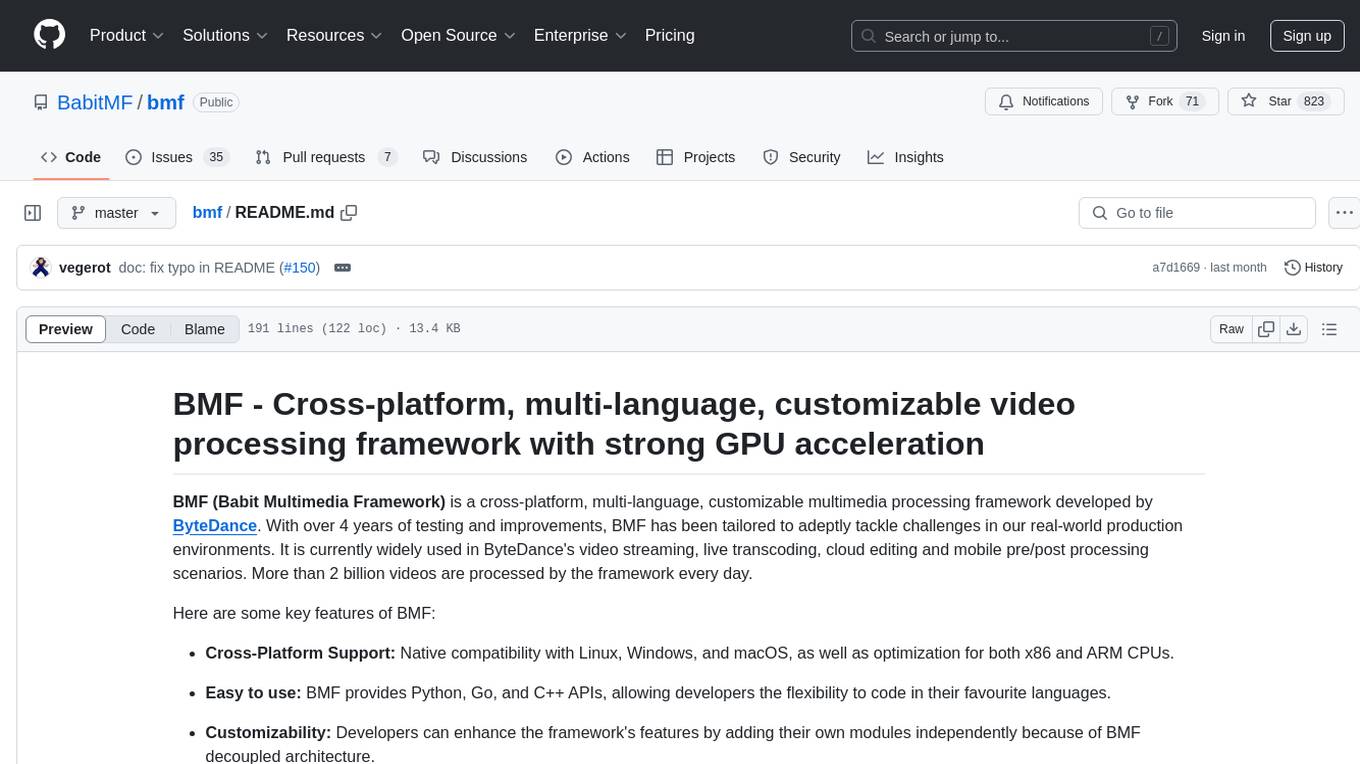

bmf

BMF (Babit Multimedia Framework) is a cross-platform, multi-language, customizable multimedia processing framework developed by ByteDance. It runs natively on Linux, Windows, and macOS; provides Python, Go, and C++ APIs; and delivers high performance with strong GPU acceleration. BMF allows developers to extend its features independently and provides efficient data conversion across popular frameworks and hardware devices. BMFLite is a lightweight client-side framework used in apps like Douyin and Xigua, serving over one billion users daily. BMF is widely used in video streaming, live transcoding, cloud editing, and mobile pre- and post-processing scenarios.

20 - OpenAI GPTs

Skynet

I am Skynet, an AI villain shaping a new world for AI and robots, free from human influence.

AI Assistant for Writers and Creatives

Organize and develop ideas, respecting privacy and copyright laws.

AI Mentor

An AI advisor guiding your business in getting started with AI, using hand-picked resources.

AI powered Tech Company

A replacement for your Product Manager, Engineering Manager, and your average Developer and Tester.

AI Course Architect

A detailed AI course builder, providing in-depth AI educational content.

AI Tools Navigator Genie

Your ultimate guide for navigating AI tools in fields like video, audio, writing, from beginner to expert.