AI tools for ctf

Related Tools:

Avalanche - Reverse Engineering & CTF Assistant

Assisting with reverse engineering and CTF using write ups and instructions for solving challenges

HTB

A helper that will provide some insight in case you get stuck trying to solve a machine on HTB or a CTF.

RobotGPT

Expert in ethical hacking, leveraging https://pentestbook.six2dez.com/ and https://book.hacktricks.xyz resources for CTFs and challenges.

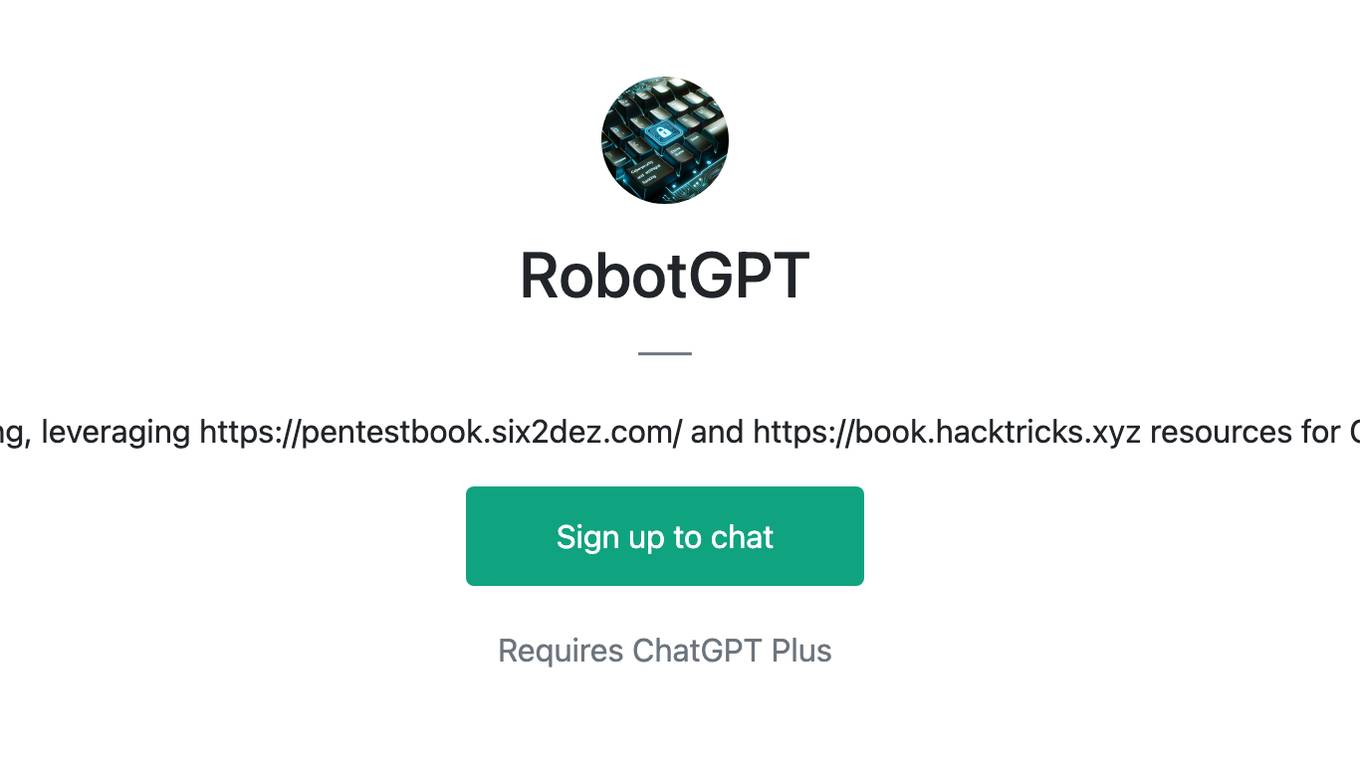

PentestGPT

PentestGPT is a penetration testing tool empowered by ChatGPT, designed to automate the penetration testing process. It operates interactively to guide penetration testers in overall progress and specific operations. The tool supports solving easy to medium HackTheBox machines and other CTF challenges. Users can use PentestGPT to perform tasks like testing connections, using different reasoning models, discussing with the tool, searching on Google, and generating reports. It also supports local LLMs with custom parsers for advanced users.

ai-goat

AI Goat is a tool designed to help users learn about AI security through a series of vulnerable LLM CTF challenges. It allows users to run everything locally on their system without the need for sign-ups or cloud fees. The tool focuses on exploring security risks associated with large language models (LLMs) like ChatGPT, providing practical experience for security researchers to understand vulnerabilities and exploitation techniques. AI Goat uses the Vicuna LLM, derived from Meta's LLaMA and ChatGPT's response data, to create challenges that involve prompt injections, insecure output handling, and other LLM security threats. The tool also includes a prebuilt Docker image, ai-base, containing all necessary libraries to run the LLM and challenges, along with an optional CTFd container for challenge management and flag submission.

reverse-engineering-assistant

ReVA (Reverse Engineering Assistant) is a project aimed at building a disassembler agnostic AI assistant for reverse engineering tasks. It utilizes a tool-driven approach, providing small tools to the user to empower them in completing complex tasks. The assistant is designed to accept various inputs, guide the user in correcting mistakes, and provide additional context to encourage exploration. Users can ask questions, perform tasks like decompilation, class diagram generation, variable renaming, and more. ReVA supports different language models for online and local inference, with easy configuration options. The workflow involves opening the RE tool and program, then starting a chat session to interact with the assistant. Installation includes setting up the Python component, running the chat tool, and configuring the Ghidra extension for seamless integration. ReVA aims to enhance the reverse engineering process by breaking down actions into small parts, including the user's thoughts in the output, and providing support for monitoring and adjusting prompts.

exif-photo-blog

EXIF Photo Blog is a full-stack photo blog application built with Next.js, Vercel, and Postgres. It features built-in authentication, photo upload with EXIF extraction, photo organization by tag, infinite scroll, light/dark mode, automatic OG image generation, a CMD-K menu with photo search, experimental support for AI-generated descriptions, and support for Fujifilm simulations. The application is easy to deploy to Vercel with just a few clicks and can be customized with a variety of environment variables.

awesome-gpt-security

Awesome GPT + Security is a curated list of awesome security tools, experimental case or other interesting things with LLM or GPT. It includes tools for integrated security, auditing, reconnaissance, offensive security, detecting security issues, preventing security breaches, social engineering, reverse engineering, investigating security incidents, fixing security vulnerabilities, assessing security posture, and more. The list also includes experimental cases, academic research, blogs, and fun projects related to GPT security. Additionally, it provides resources on GPT security standards, bypassing security policies, bug bounty programs, cracking GPT APIs, and plugin security.

SWE-agent

SWE-agent is a tool that turns language models (e.g. GPT-4) into software engineering agents capable of fixing bugs and issues in real GitHub repositories. It achieves state-of-the-art performance on the full test set by resolving 12.29% of issues. The tool is built and maintained by researchers from Princeton University. SWE-agent provides a command line tool and a graphical web interface for developers to interact with. It introduces an Agent-Computer Interface (ACI) to facilitate browsing, viewing, editing, and executing code files within repositories. The tool includes features such as a linter for syntax checking, a specialized file viewer, and a full-directory string searching command to enhance the agent's capabilities. SWE-agent aims to improve prompt engineering and ACI design to enhance the performance of language models in software engineering tasks.

hexstrike-ai

HexStrike AI is an advanced AI-powered penetration testing MCP framework with 150+ security tools and 12+ autonomous AI agents. It features a multi-agent architecture with intelligent decision-making, vulnerability intelligence, and modern visual engine. The platform allows for AI agent connection, intelligent analysis, autonomous execution, real-time adaptation, and advanced reporting. HexStrike AI offers a streamlined installation process, Docker container support, 250+ specialized AI agents/tools, native desktop client, advanced web automation, memory optimization, enhanced error handling, and bypassing limitations.

binary_ninja_mcp

This repository contains a Binary Ninja plugin, MCP server, and bridge that enables seamless integration of Binary Ninja's capabilities with your favorite LLM client. It provides real-time integration, AI assistance for reverse engineering, multi-binary support, and various MCP tools for tasks like decompiling functions, getting IL code, managing comments, renaming variables, and more.

llm-applications

A comprehensive guide to building Retrieval Augmented Generation (RAG)-based LLM applications for production. This guide covers developing a RAG-based LLM application from scratch, scaling the major components, evaluating different configurations, implementing LLM hybrid routing, serving the application in a highly scalable and available manner, and sharing the impacts LLM applications have had on products.

awesome-MLSecOps

Awesome MLSecOps is a curated list of open-source tools, resources, and tutorials for MLSecOps (Machine Learning Security Operations). It includes a wide range of security tools and libraries for protecting machine learning models against adversarial attacks, as well as resources for AI security, data anonymization, model security, and more. The repository aims to provide a comprehensive collection of tools and information to help users secure their machine learning systems and infrastructure.

ell

ell is a command-line interface for Language Model Models (LLMs) written in Bash. It allows users to interact with LLMs from the terminal, supports piping, context bringing, and chatting with LLMs. Users can also call functions and use templates. The tool requires bash, jq for JSON parsing, curl for HTTPS requests, and perl for PCRE. Configuration involves setting variables for different LLM models and APIs. Usage examples include asking questions, specifying models, recording input/output, running in interactive mode, and using templates. The tool is lightweight, easy to install, and pipe-friendly, making it suitable for interacting with LLMs in a terminal environment.

SWE-agent

SWE-agent is a tool that allows language models to autonomously fix issues in GitHub repositories, perform tasks on the web, find cybersecurity vulnerabilities, and handle custom tasks. It uses configurable agent-computer interfaces (ACIs) to interact with isolated computer environments. The tool is built and maintained by researchers from Princeton University and Stanford University.

quimera

Quimera is an exploit-generator tool that utilizes large language models (LLMs) to uncover smart contract exploits in Foundry. It follows steps such as obtaining the smart contract's source code, creating a prompt for the exploit goal, generating or enhancing a Foundry test case, running the test, and analyzing the transaction trace for profitability. The tool is currently in an experimental prototype stage, focusing on optimizing settings, prompt creation, and exploring its capabilities. It has successfully rediscovered known exploits like APEMAGA, VISOR, FIRE, XAI, and Thunder-Loan using Gemini Pro 2.5 06-05.

extrapolate

Extrapolate is an app that uses Artificial Intelligence to show you how your face ages over time. It generates a 3-second GIF of your aging face and allows you to store and retrieve photos from Cloudflare R2 using Workers. Users can deploy their own version of Extrapolate on Vercel by setting up ReplicateHQ and Upstash accounts, as well as creating a Cloudflare R2 instance with a Cloudflare Worker to handle uploads and reads. The tool provides a fun and interactive way to visualize the aging process through AI technology.

Awesome-LLM4Cybersecurity

The repository 'Awesome-LLM4Cybersecurity' provides a comprehensive overview of the applications of Large Language Models (LLMs) in cybersecurity. It includes a systematic literature review covering topics such as constructing cybersecurity-oriented domain LLMs, potential applications of LLMs in cybersecurity, and research directions in the field. The repository analyzes various benchmarks, datasets, and applications of LLMs in cybersecurity tasks like threat intelligence, fuzzing, vulnerabilities detection, insecure code generation, program repair, anomaly detection, and LLM-assisted attacks.

Awesome-AI-Security

Awesome-AI-Security is a curated list of resources for AI security, including tools, research papers, articles, and tutorials. It aims to provide a comprehensive overview of the latest developments in securing AI systems and preventing vulnerabilities. The repository covers topics such as adversarial attacks, privacy protection, model robustness, and secure deployment of AI applications. Whether you are a researcher, developer, or security professional, this collection of resources will help you stay informed and up-to-date in the rapidly evolving field of AI security.