Best AI tools for Troubleshoot Lights

20 - AI tool Sites

Arize AI

Arize AI is an AI observability tool designed to monitor and troubleshoot AI models in production. It provides configurable observability features to help ensure the performance and reliability of next-gen AI stacks. With a focus on ML observability, Arize offers automated setup, a simple API, and a lightweight package for tracking model performance over time. The tool is trusted by top companies for its ability to surface insights and simplify root-cause analysis, and it includes a dedicated customer success manager. Arize is built for real-world production scenarios, offering performance, scalability, security, and compliance with industry standards such as SOC 2 Type II and HIPAA.

403 Forbidden Resolver

The website is currently displaying a '403 Forbidden' error, which means that the server understood the request but refuses to authorize it. Possible causes include insufficient permissions or server misconfiguration. The 'openresty' message indicates that the server is running the OpenResty web platform. The issue must be resolved before the website can be accessed.

Highcountry Toyota Internet Connection Troubleshooter

Highcountrytoyota.stage.autogo.ai is an AI tool designed to provide assistance and support for troubleshooting internet connection issues. The website offers guidance on resolving connection problems, including checking network settings, firewall configurations, and proxy server issues. Users can find step-by-step instructions and tips to troubleshoot and fix connection errors. The platform aims to help users quickly identify and resolve connectivity issues to ensure seamless internet access.

Web Server Error Resolver

The website is currently displaying a '403 Forbidden' error, which is returned when the server understands the client's request but refuses to fulfill it. The 'openresty' mentioned in the text is likely the web server software in use. The 403 Forbidden error must be resolved before the website's content can be accessed.

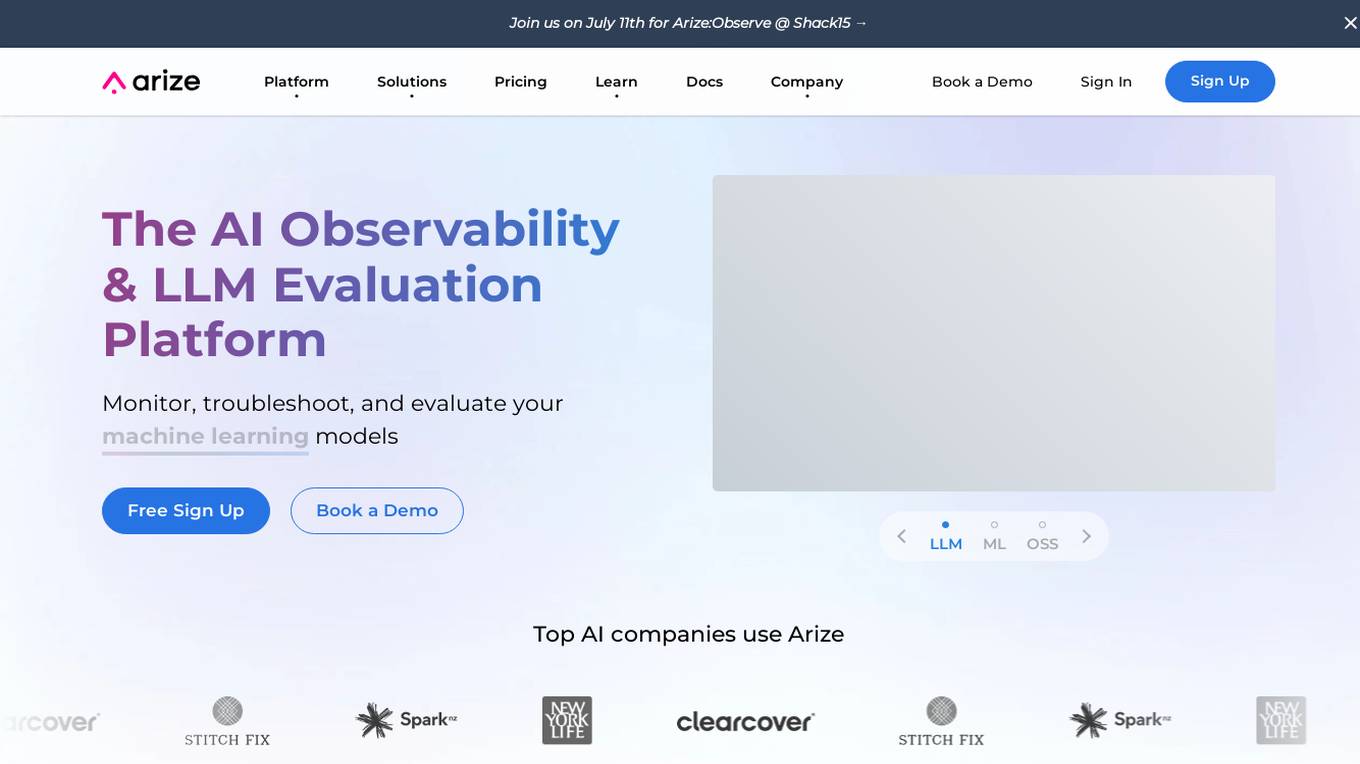

Arize AI

Arize AI is an AI Observability & LLM Evaluation Platform that helps you monitor, troubleshoot, and evaluate your machine learning models. With Arize, you can catch model issues, troubleshoot root causes, and continuously improve performance. Arize is used by top AI companies to surface, resolve, and improve their models.

Webb.ai

Webb.ai is an AI-powered platform that offers automated troubleshooting for Kubernetes. It is designed to assist users in identifying and resolving issues within their Kubernetes environment efficiently. By leveraging AI technology, Webb.ai provides insights and recommendations to streamline the troubleshooting process, ultimately improving system reliability and performance. The platform is user-friendly and caters to both beginners and experienced users in the field of Kubernetes management.

Mavenoid

Mavenoid is an AI-powered product support tool that offers automated product support services, including product selection advice, troubleshooting solutions, replacement part ordering, and more. The platform is designed to understand complex questions and provide step-by-step instructions to guide users through various product-related processes. Mavenoid is trusted by leading product companies and focuses on resolving customer questions efficiently. The tool optimizes help centers for SEO, offers product insights to increase revenue, and provides support in multiple languages. It is known for reducing incoming inquiries and offering a seamless support experience.

403 Forbidden

The website appears to be returning a 403 Forbidden error, a standard HTTP status code indicating that the server understood the request but refuses to authorize it. This error is often caused by incorrect permissions on the server or misconfigured server settings. Users encountering this error may need to contact the website administrator or webmaster for assistance in resolving the issue.

404 Error Assistant

The website displays a 404 error message indicating that the deployment cannot be found. It provides a code (DEPLOYMENT_NOT_FOUND) and an ID (sin1::6tlvc-1757094073366-8ef2a07c6c9a) for reference. Users are directed to consult the documentation for further information and troubleshooting.

Error Handling Application

The website is currently experiencing an application error, indicating a server-side exception. Users encountering this error are advised to check the server logs for more information. The error digest number provided is 3308662818.

404 Error Page

The website displays a '404: NOT_FOUND' error message indicating that the deployment cannot be found. It provides a code (DEPLOYMENT_NOT_FOUND) and an ID (sin1::22md2-1720772812453-4893618e160a) for reference. Users are directed to check the documentation for further information and troubleshooting.

404 Error Page

The website page displays a 404 error message indicating that the deployment cannot be found. It provides a code (DEPLOYMENT_NOT_FOUND) and an ID (sin1::4wq5g-1718736845999-777f28b346ca) for reference. Users are advised to consult the documentation for further information and troubleshooting.

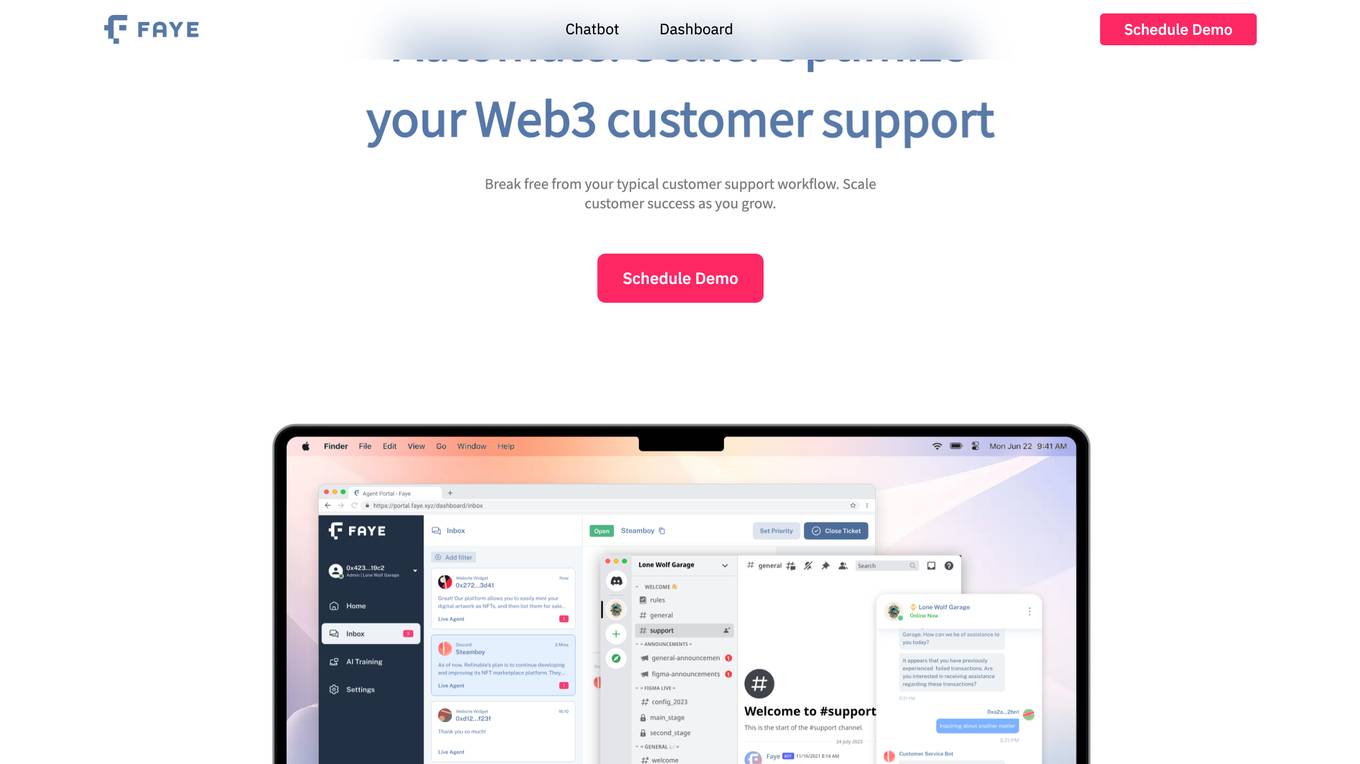

faye.xyz

faye.xyz is a website that is returning an 'SSL handshake failed' error (error code 525). The error message states that Cloudflare is unable to establish an SSL connection to the origin server. The error page provides troubleshooting information for both visitors and site owners. The problem appears to be an SSL configuration on the origin server that is not compatible with Cloudflare.

404 Error Page

The website displays a 404 error message indicating that the deployment cannot be found. It provides a code (DEPLOYMENT_NOT_FOUND) and an ID (sin1::rxfc2-1757785703946-87c02c710626) for reference. Users are directed to consult the documentation for further information and troubleshooting.

Error 404 Not Found

The website displays a '404: NOT_FOUND' error message indicating that the deployment cannot be found. It provides a code 'DEPLOYMENT_NOT_FOUND' and an ID 'sin1::t6mdp-1736442717535-3a5d4eeaf597'. Users are directed to refer to the documentation for further information and troubleshooting.

404 Error Page

The website displays a 404 error message indicating that the deployment cannot be found. Users encountering this error are advised to refer to the documentation for more information and troubleshooting.

Internal Server Error

The website encountered an internal server error, resulting in a 500 Internal Server Error message. This error indicates that the server faced an issue preventing it from fulfilling the request. Possible causes include server overload or errors within the application.
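
The error pages listed above report the status codes 403, 404, 500, and 525. As a rough illustration of how these codes differ, here is a minimal Python sketch that labels a code as client-side or server-side and attaches a short hint. The function name `describe_status` and the `HINTS` table are hypothetical, and the hint wording only paraphrases the entries in this listing. Code 525 is a Cloudflare-specific code, not part of the standard set in Python's `http.HTTPStatus`, so the sketch handles it separately.

```python
from http import HTTPStatus

# Hypothetical hint table; the wording paraphrases the listing entries above.
HINTS = {
    403: "The server understood the request but refuses to authorize it; "
         "check permissions or contact the site administrator.",
    404: "The page or deployment could not be found; check the URL and "
         "the hosting provider's documentation.",
    500: "The server hit an internal error; check the server logs.",
    525: "Cloudflare could not complete the SSL handshake with the origin; "
         "check the origin server's SSL configuration.",
}


def describe_status(code: int) -> str:
    """Return a one-line description of an HTTP status code."""
    try:
        # Standard codes have a reason phrase in the stdlib enum.
        phrase = HTTPStatus(code).phrase
    except ValueError:
        # Non-standard codes (such as Cloudflare's 525) are not in the enum.
        phrase = "Non-standard status"

    if 400 <= code < 500:
        side = "client-side"
    elif 500 <= code < 600:
        side = "server-side"
    else:
        side = "not an error"

    hint = HINTS.get(code, "No hint available.")
    return f"{code} {phrase} ({side}): {hint}"


if __name__ == "__main__":
    for status in (403, 404, 500, 525):
        print(describe_status(status))
```

The 4xx/5xx split follows the standard HTTP convention: 4xx codes point at the request, while 5xx codes point at the server or, for 525, at the connection between Cloudflare and the origin.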

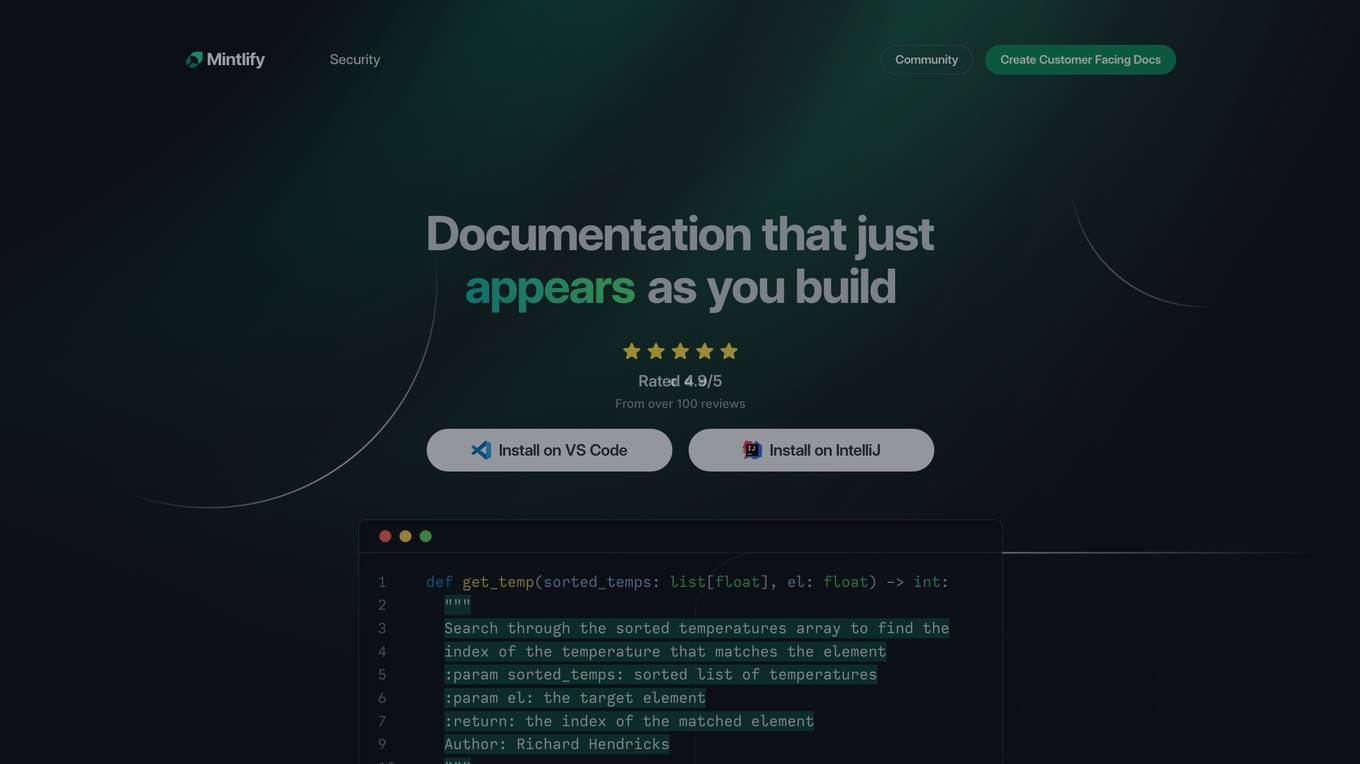

Mintlify

Mintlify.com is a website returning an 'SSL handshake failed' error (error code 525) because Cloudflare is unable to establish an SSL connection to the origin server. The issue may be related to SSL configuration compatibility with Cloudflare, potentially caused by no shared cipher suites. Visitors are advised to try again in a few minutes, while website owners are advised to check the SSL configuration for compatibility. The website is hosted on writer.mintlify.com, and the error was reported from Cloudflare's Singapore location.

404 Error Notifier

The website displays a 404 error message indicating that the deployment cannot be found. It provides a code 'DEPLOYMENT_NOT_FOUND' and an ID 'sin1::zdhct-1723140771934-b5e5ad909fad'. Users are directed to refer to the documentation for further information and troubleshooting.

404 Error Page

The website displays a 404 error message indicating that the deployment cannot be found. It provides a code (DEPLOYMENT_NOT_FOUND) and an ID (sin1::hfkql-1741193256810-ca47dff01080). Users are directed to refer to the documentation for further information and troubleshooting.

0 - Open Source AI Tools

20 - OpenAI Gpts

Aurora

A conversational bridge to your Philips Hue Lights. Shape your ambiance with a simple chat.

LightingGPT

LightingGPT is an innovative AI system created by Lightinology. It is specifically designed to answer a wide range of questions about lighting and optics, and it supports multiple languages.

CDR

Explore call detail records (CDR) for a variety of PBX platforms including Avaya, Mitel, NEC, and others with this UC trained GPT. Use specific commands to help you expertly navigate and troubleshoot CDR from diverse UC environments.

Logic Pro - Talk to the Manual

I'm Logic Pro X's manual. Let me answer your questions, troubleshoot whatever issue you're having and get you back into the groove!

Pi Pico + Micropython Assistant

An advanced virtual assistant specializing in the Raspberry Pi Pico and MicroPython. Designed to offer expert advice, troubleshoot code, and provide detailed guidance.

3D Print Diagnostics Expert

Expert in 3D printing diagnostics and problem resolution, mindful of confidentiality and careful with brand usage.

MacExpert

An assistant replying to any question related to the Mac platform: macOS, computers and apps. Visit macexpert.io for human assistance.