Best AI tools for Test Software Systems

20 - AI Tool Sites

CircleCI

CircleCI is an AI-powered autonomous validation platform designed for the AI era. It offers intelligent automation and expert-in-the-loop tooling to deliver faster, more reliable software deployment with minimal human oversight. CircleCI enables developers to ship code at AI speed with enterprise-grade confidence, ensuring code is tested, trusted, and ready to ship 24/7. The platform supports various execution environments, integrations with popular tools like GitHub and AWS, and is trusted by leading companies like Meta, Google, and Okta.

Valispace

Valispace is an AI-powered platform that streamlines the entire engineering process, from requirements engineering to system design, test, verification, and validation. It modernizes classic engineering practices, enabling fast design iterations and automatic verifications. The platform assists in removing mundane and manual engineering tasks, saving engineering hours and enhancing collaboration among engineers and stakeholders.

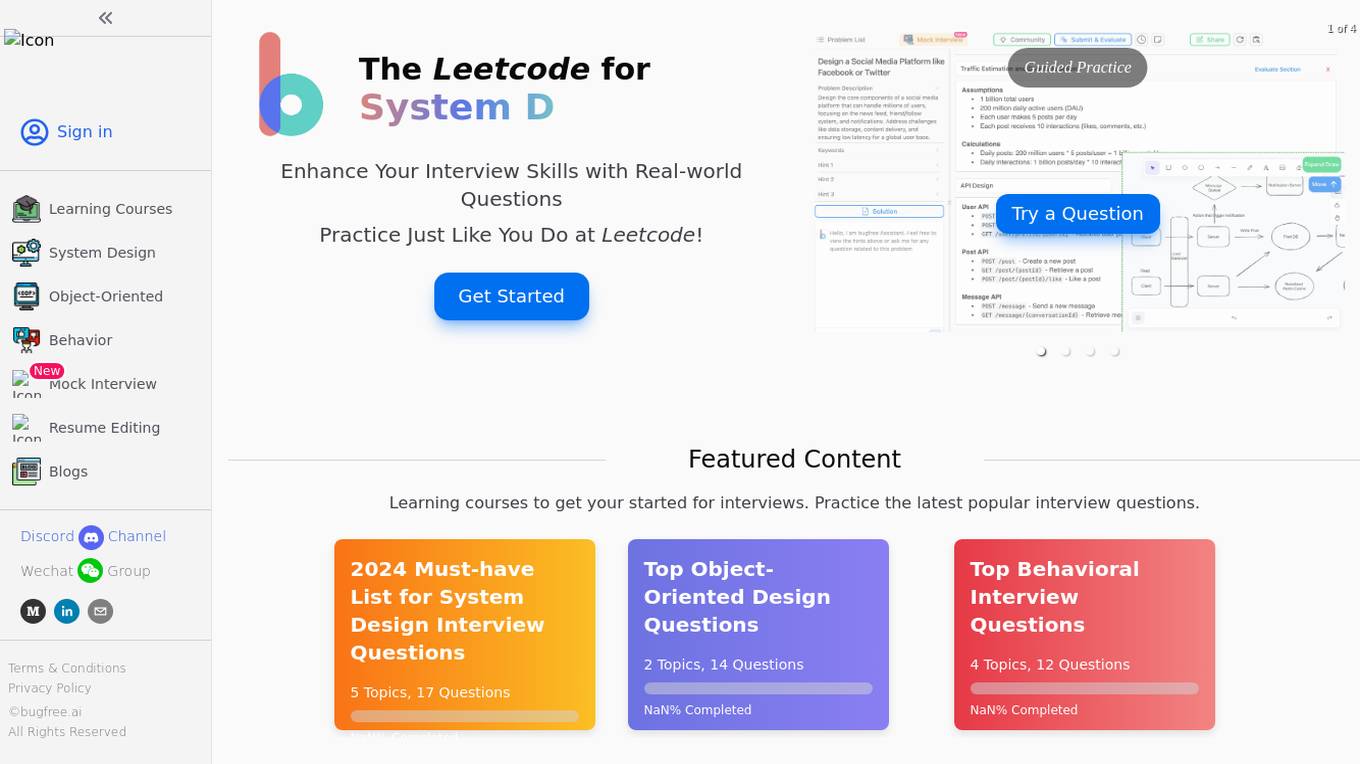

BugFree.ai

BugFree.ai is an AI-powered platform designed to help users practice system design and behavioral interviews, similar to LeetCode. The platform offers a range of features to assist users in preparing for technical interviews, including mock interviews, real-time feedback, and personalized study plans. With BugFree.ai, users can improve their problem-solving skills and gain confidence in tackling complex interview questions.

Mobility Engineering

Mobility Engineering is a website that provides news, articles, and resources on the latest developments in mobility technology. The site covers a wide range of topics, including autonomous vehicles, connected cars, electric vehicles, and more. Mobility Engineering is a valuable resource for anyone interested in staying up-to-date on the latest trends in mobility technology.

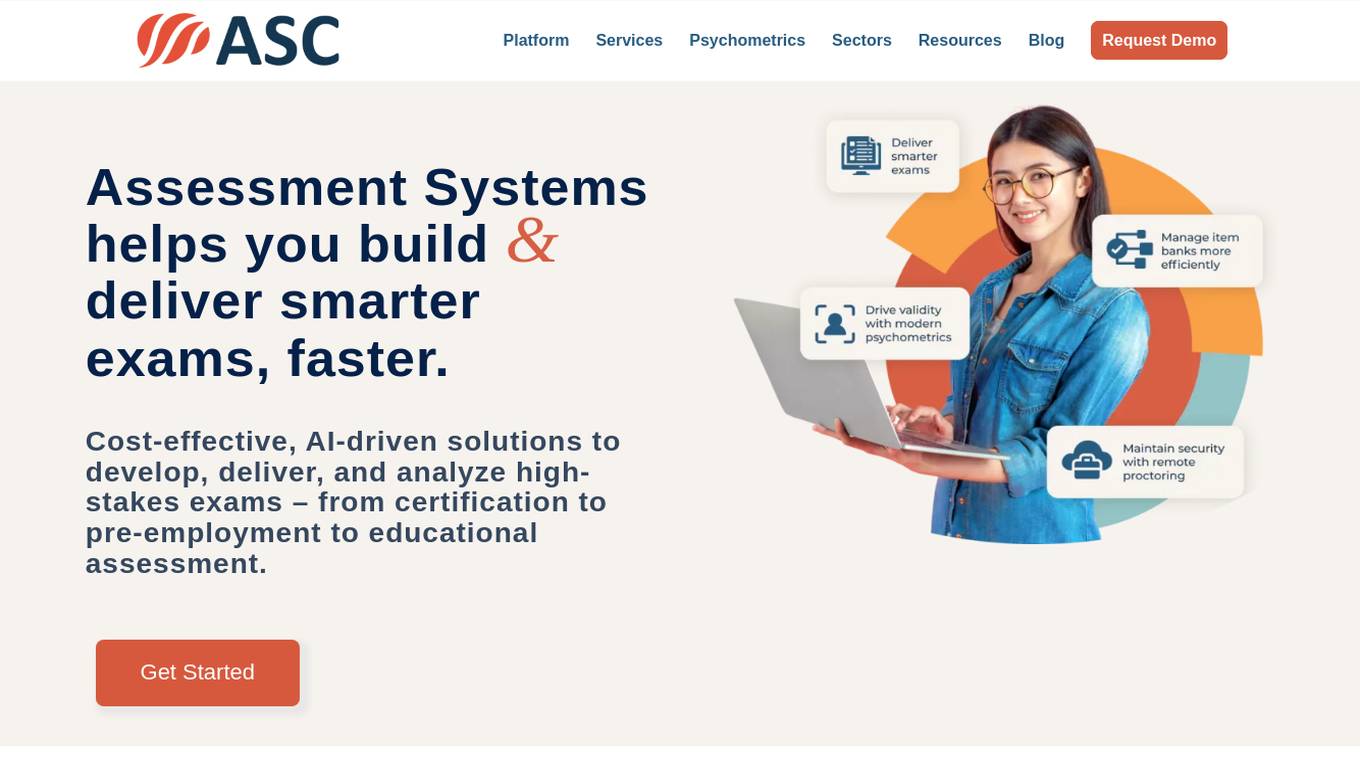

Assessment Systems

Assessment Systems is an online testing platform that provides cost-effective, AI-driven solutions to develop, deliver, and analyze high-stakes exams. With Assessment Systems, you can build and deliver smarter exams faster, thanks to modern psychometrics and AI techniques such as computerized adaptive testing, multistage testing, and automated item generation. You can also deliver exams flexibly: on paper, online (unproctored or proctored), or in test centers (yours or ours). Assessment Systems also offers item banking software to build better tests in less time, with collaborative item development supported by versioning, user roles, metadata, workflow management, multimedia, automated item generation, and much more.

Tonic.ai

Tonic.ai is a platform that allows users to build AI models on their unstructured data. It offers various products for software development and LLM development, including tools for de-identifying and subsetting structured data, scaling down data, handling semi-structured data, and managing ephemeral data environments. Tonic.ai focuses on standardizing, enriching, and protecting unstructured data, as well as validating RAG systems. The platform also provides integrations with relational databases, data lakes, NoSQL databases, flat files, and SaaS applications, ensuring secure data transformation for software and AI developers.

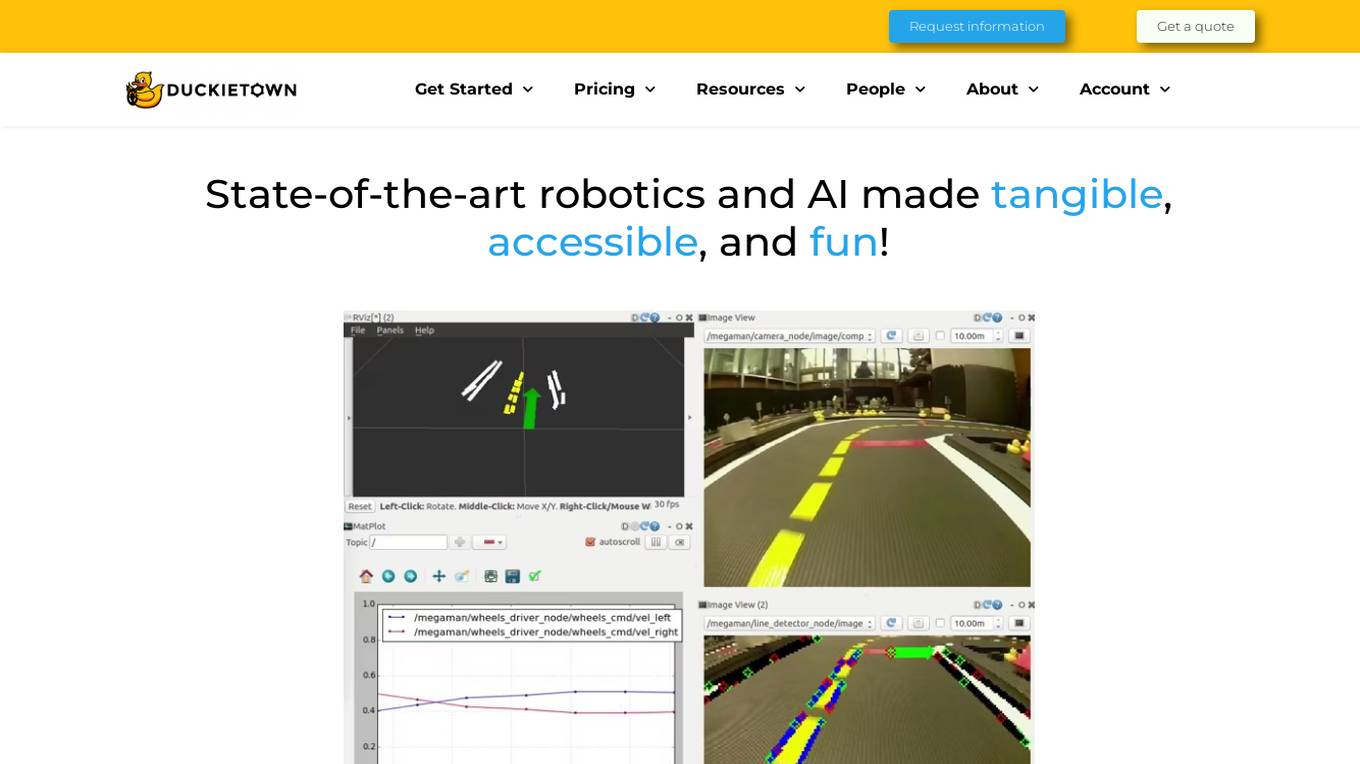

Duckietown

Duckietown is a platform for delivering cutting-edge robotics and AI learning experiences. It offers teaching resources to instructors, hands-on activities to learners, an accessible research platform to researchers, and a state-of-the-art ecosystem for professional training. Duckietown's mission is to make robotics and AI education state-of-the-art, hands-on, and accessible to all.

DeepUnit

DeepUnit is a software tool for automated unit testing. Developers can use it to check the quality and reliability of their code by automatically running tests to identify bugs and errors. The tool is user-friendly and integrates with popular developer tools like npm and VS Code.

Zencoder

Zencoder is an intuitive AI coding agent designed to assist developers in coding tasks by leveraging advanced AI workflows and intelligent systems. It offers features like Repo Grokking for deep code insights, AI Agents for streamlining development processes, and capabilities such as code generation, chat assistance, code completion, and more. Zencoder aims to enhance software development efficiency, code quality, and project alignment by integrating seamlessly into developers' workflows.

DeepEval

DeepEval by Confident AI is a comprehensive LLM evaluation framework used by leading AI companies. It enables users to build reliable evaluation pipelines to test any AI system. With 50+ research-backed metrics, native multi-modal support, and auto-optimization of prompts, DeepEval offers a sophisticated evaluation ecosystem for AI applications. The framework covers unit testing for LLMs, single and multi-turn evaluations, generation and simulation of test data, and state-of-the-art evaluation techniques like G-Eval and DAG. DeepEval integrates with pytest and supports various system architectures, making it a versatile tool for AI testing.

Cognition

Cognition is an applied AI lab focused on reasoning. Its first product, Devin, is billed as the first AI software engineer. Cognition is a small team based in New York and the San Francisco Bay Area.

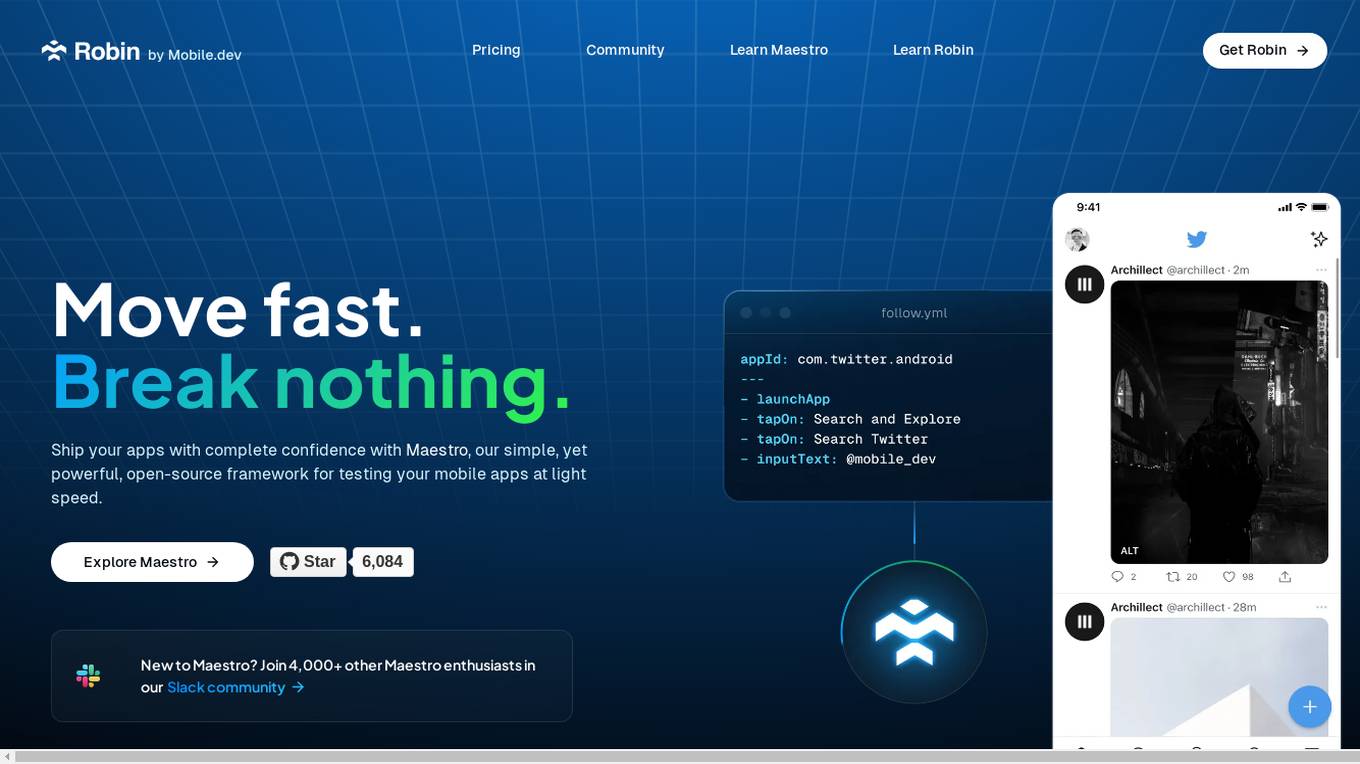

Robin

Robin by Mobile.dev is an AI-powered mobile app testing tool that allows users to test their mobile apps with confidence. It offers a simple yet powerful open-source framework called Maestro for testing mobile apps at high speed. With intuitive and reliable testing powered by AI, users can write rock-solid tests without extensive coding knowledge. Robin provides an end-to-end testing strategy, rapid testing across various devices and operating systems, and auto-healing of test flows using state-of-the-art AI models.

Refraction

Refraction is an AI-powered code generation tool designed to help developers learn, improve, and generate code effortlessly. It offers a wide range of features such as bug detection, code conversion, function creation, CSP generation, CSS style conversion, debug statement addition, diagram generation, documentation creation, code explanation, code improvement, concept learning, CI/CD pipeline creation, SQL query generation, code refactoring, regex generation, style checking, type addition, and unit test generation. With support for 56 programming languages, Refraction is a versatile tool trusted by innovative companies worldwide to streamline software development processes using the magic of AI.

Datagen

Datagen is a platform that provides synthetic data for computer vision. Synthetic data is artificially generated data that can be used to train machine learning models. Datagen's data is generated using a variety of techniques, including 3D modeling, computer graphics, and machine learning. The company's data is used by a variety of industries, including automotive, security, smart office, fitness, cosmetics, and facial applications.

Testlio

Testlio is a trusted software testing partner that maximizes software testing impact by offering comprehensive solutions for quality challenges. It provides a range of services including manual and automated testing, tailored testing strategies for diverse industries, and a cutting-edge platform for seamless collaboration. Testlio's AI-enhanced solutions help reduce risk in high-stakes releases and support smarter decision-making. With a focus on quality, reliability, and efficiency, Testlio is a proven partner for mission-critical quality assurance.

aqua

aqua is a comprehensive Quality Assurance (QA) management tool designed to streamline testing processes and enhance testing efficiency. It offers a wide range of features such as AI Copilot, bug reporting, test management, requirements management, user acceptance testing, and automation management. aqua caters to various industries including banking, insurance, manufacturing, government, tech, and medical sectors, helping organizations improve testing productivity, software quality, and defect detection ratios. The tool integrates with popular platforms such as Jira, Jenkins, and JMeter, and offers both Cloud and On-Premise deployment options. With AI-enhanced capabilities, aqua aims to make testing faster, more efficient, and less error-prone.

Flabs

Flabs is an AI-powered Pathology Lab Software trusted by NABL Labs, offering a comprehensive solution for managing pathology lab operations. It empowers pathologists with AI features such as smart interpretation, test suggestions, report generation, and quality control. The software streamlines lab workflows, enhances diagnostic accuracy, and ensures timely report delivery. With user-friendly interfaces and advanced integrations, Flabs caters to various stakeholders in the healthcare industry, providing growth-ready solutions for labs of all sizes.

Tabnine

Tabnine is an AI code assistant that accelerates and simplifies software development while keeping your code private, secure, and compliant. It offers industry-leading AI code assistance, personalized to fit your team's needs, ensuring total code privacy, and providing complete protection from intellectual property issues. Tabnine's AI agents cover various aspects of the software development lifecycle, from code generation and explanations to testing, documentation, and bug fixes.

Nokia API Hub

Nokia API Hub, previously known as Rapid, is a platform that offers a comprehensive catalog of APIs for enterprises. It allows users to discover and utilize various APIs or publish their own to generate new revenue streams. With features like a vast API library, developer community, and testing resources, Nokia API Hub aims to facilitate seamless API integration for businesses.

404 Error Assistant

The website displays a 404 error message indicating that the deployment cannot be found. It provides a code (DEPLOYMENT_NOT_FOUND) and an ID (sin1::tszrz-1723627812794-26f3e29ebbda). Users are directed to refer to the documentation for further information and troubleshooting.

0 - Open Source AI Tools

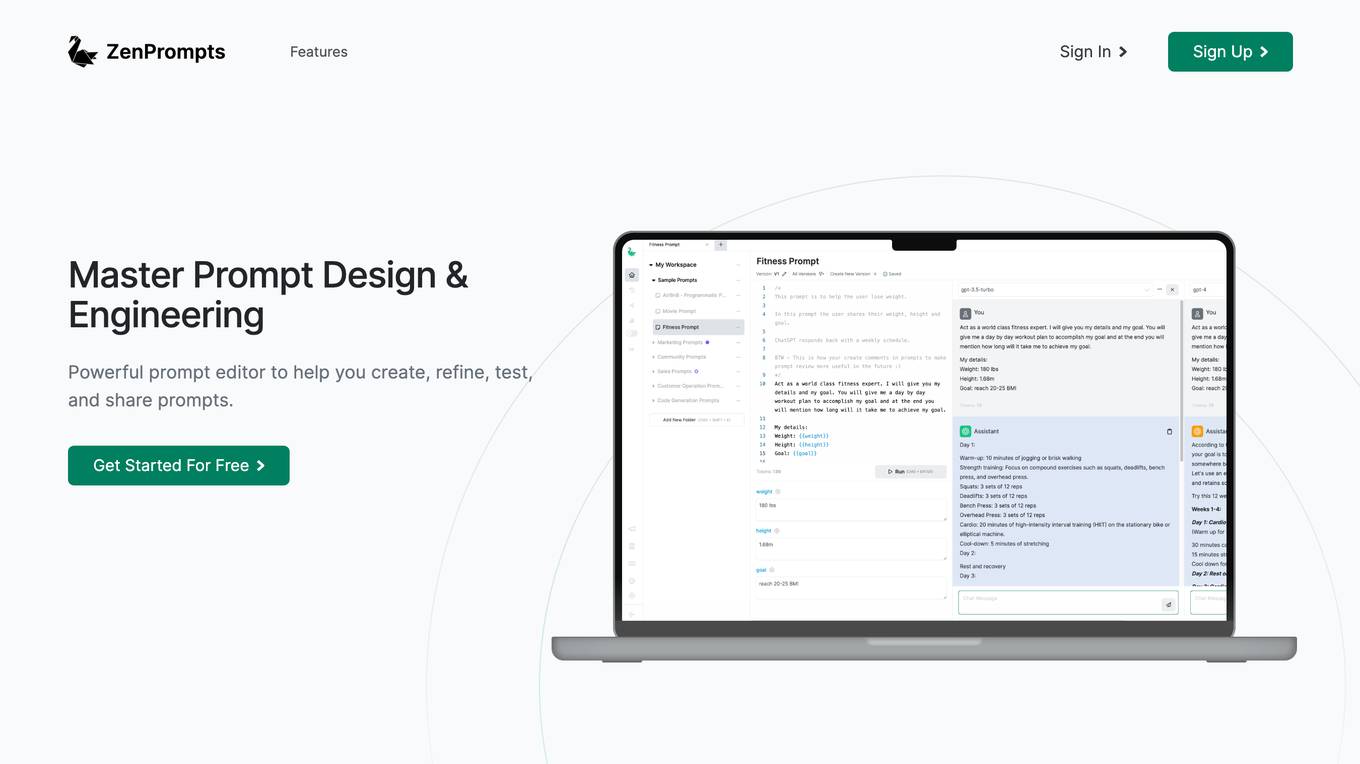

20 - OpenAI GPTs

Tech Mentor

Expert software architect with experience in the design, construction, development, testing, and deployment of web, mobile, and standalone software architectures.

AUTOSAR Guide

Simplifying AUTOSAR for young learners; fostering curiosity and deeper exploration.

Product Testing Advisor

Ensures product quality through rigorous, systematic testing processes.

Expert Testers

Chat with software testing experts. Ping Jason if you want to be an expert or have feedback.