Best AI Tools to Optimize Query Performance

20 AI Tool Sites

Pulse

Pulse is an expert support tool for big-data stacks that focuses on the stability and performance of Elasticsearch and OpenSearch clusters. It combines early issue detection and AI-generated insights with support from human experts to improve performance, reduce costs, and fit each user's needs. Pulse uses AI for issue detection and root-cause analysis, backed by human expertise, to help teams manage their search clusters.

AI2SQL

AI2SQL is an AI-powered SQL query builder and generator that lets users create, optimize, and automate SQL queries without expert SQL skills. It turns natural-language instructions into SQL and NoSQL queries, which simplifies database design and can improve query performance. Its features include text-to-SQL conversion, SQL query optimization, and formula generation, which streamline complex operations and support data analysis.

Granica

Granica is an AI tool for data compression and optimization that uses self-optimizing, lossless compression to shrink data volumes; the vendor describes it as turning petabytes of data into terabytes. It works with data platforms such as Iceberg, Delta, Trino, Spark, Snowflake, BigQuery, and Databricks, and aims to cut costs and improve query performance. The company says it is trusted by data and AI leaders worldwide to reduce data bloat, speed up queries, and optimize data lakes. Granica is built for structured AI and offers transparent deployment, continuous adaptation, hands-off orchestration, and controls for data security and compliance.

EverSQL

EverSQL is an AI-powered tool for SQL query optimization, database observability, and cost reduction on PostgreSQL and MySQL. It optimizes SQL queries automatically with AI-based algorithms, provides ongoing performance insights, and recommends optimizations that help lower monthly database costs. EverSQL reports that more than 100,000 professionals use it, and it aims to save time and improve database performance and cost-efficiency without accessing sensitive data.

Keebo

Keebo is an AI tool for Snowflake that automates query, cost, and tuning optimization. The vendor describes it as the only fully automated Snowflake optimizer and says it adjusts dynamically to save customers 25% or more. Its patented technology, based on academic research, tunes warehouse size, clustering, and memory without affecting performance. Keebo learns from workload changes and adjusts in real time; according to the vendor, setup takes about 30 minutes and savings begin within 24 hours. It works from telemetry metadata and gives users full visibility into its optimizations, which they can adjust for complex scenarios and schedules.

Woven Insights

Woven Insights is an AI-driven market and consumer insights solution for fashion retail that supports data-driven decision-making. It covers competitive intelligence, performance monitoring, product assortment optimization, market and consumer insights, and pricing strategy. Its features include competitive benchmarking, real-time market insights, product performance tracking, in-depth market analytics, and sentiment analysis, and it targets businesses of all sizes. It also offers bespoke data analysis, AI insights, natural-language queries, and collaboration tools. Woven Insights aims to make fashion intelligence accessible through affordable pricing so that businesses can stay ahead of competitors.

SQLAI.ai

SQLAI.ai is a professional SQL multi-tool that uses AI to generate, fix, explain, and optimize SQL queries and databases. Users can work with SQL in everyday language, train the AI on their database schemas, and get AI-driven recommendations for query optimization. The platform serves users from beginners to experts by simplifying SQL tasks and providing insights for database management. Additional features include SQL data generation, data analytics, and real-time data insights.

SQLAI.ai

SQLAI.ai is an SQL multi-tool that uses AI to generate, fix, explain, and optimize SQL queries and databases. Users can write complex SQL queries in everyday language, optimize queries for better performance, fix syntax errors, and understand queries through AI-generated explanations. SQLAI.ai also lets users train the AI on their database schema to improve the accuracy of generated queries and optimizations.

SQL Builder

SQL Builder is an AI-powered SQL query generator that allows users to easily generate complex SQL queries without writing any code. It offers a range of features such as a no-code SQL builder, SQL syntax explainer, SQL optimizer, SQL formatter, NoSQL query builder, and SQL syntax validator. SQL Builder supports various databases including MySQL, MariaDB, SQLite, PostgreSQL, Oracle, Microsoft SQL Server, MongoDB, BigQuery, Snowflake, and Amazon Redshift.

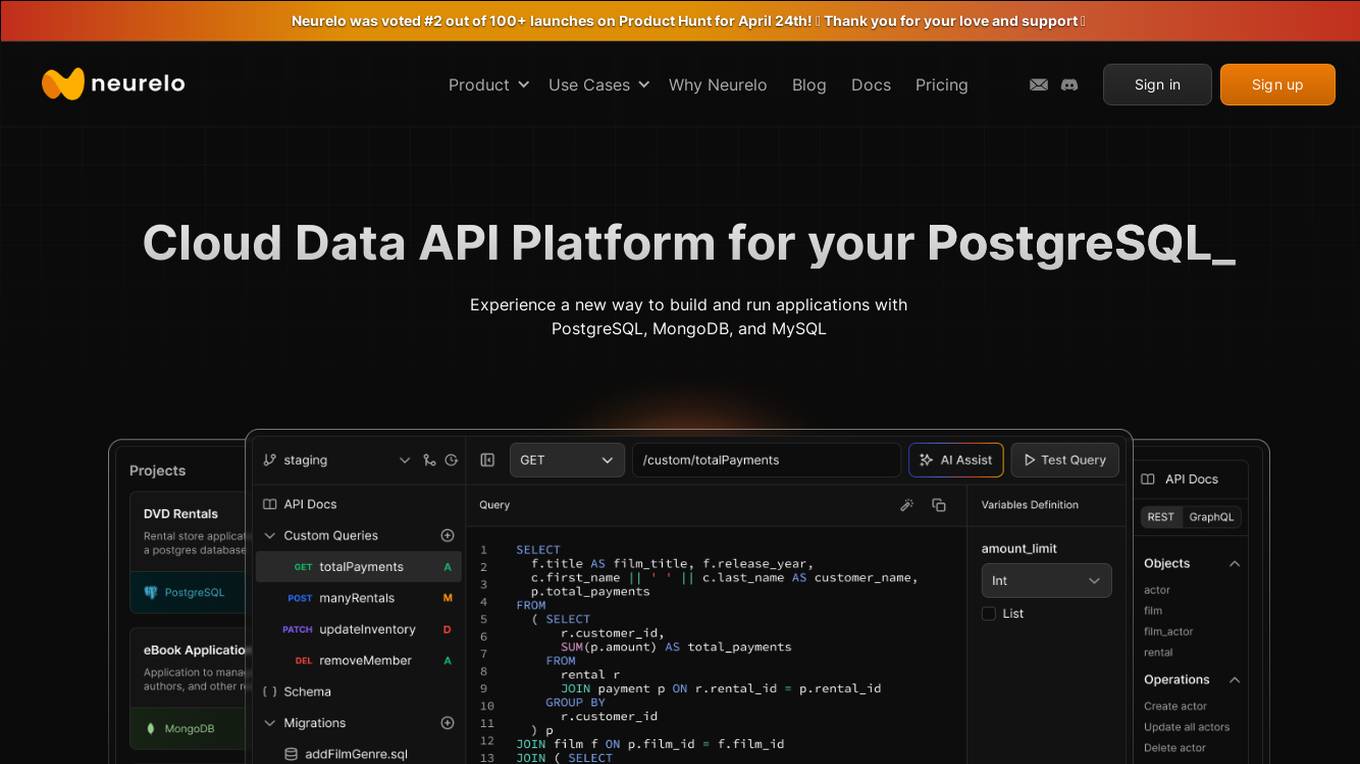

Neurelo

Neurelo is a cloud API platform for PostgreSQL, MongoDB, and MySQL. Its features include auto-generated APIs, AI-assisted custom query APIs, query observability, schema as code, and the ability to build full-stack applications in minutes. Neurelo aims to simplify database programming and improve developer productivity by combining cloud infrastructure, APIs, and AI.

LINQ Me Up

LINQ Me Up is an AI-powered tool that improves .NET productivity by generating and converting LINQ queries. It converts SQL queries to LINQ code, translates LINQ code into SQL queries, and generates LINQ queries tailored to specific datasets. The tool supports C# and Visual Basic, in both method and query syntax, and uses AI-powered analysis to optimize its output. LINQ Me Up aims to simplify migrations so that users can focus on the essential parts of their code.

Hey Internet

Hey Internet is an AI-powered assistant, used over text message, that answers a wide range of questions, boosts productivity, and supports personal growth. It offers quick, accurate answers, 24/7 availability, and personalized help for academic, professional, and personal needs. Hey Internet aims to help users research and learn more efficiently, be more productive, and maintain a balanced lifestyle.

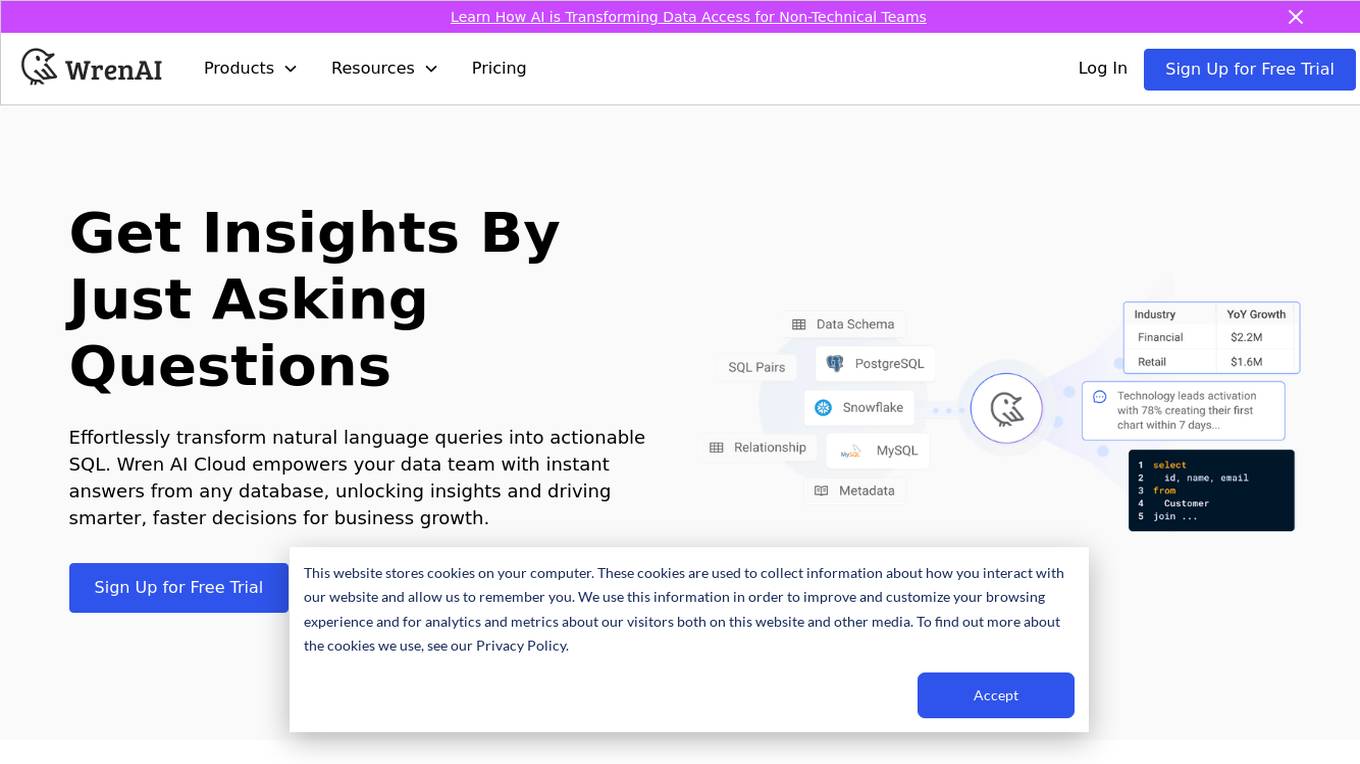

Wren AI Cloud

Wren AI Cloud is an AI-powered SQL agent that gives non-technical teams direct access to data and insights. It converts natural-language questions into SQL so that teams can make decisions faster. The platform offers self-serve data exploration, AI-generated insights and visualizations, and a multi-agent workflow intended to make results reliable. Wren AI Cloud is scalable and integrates with popular data tools, helping businesses use AI for data-driven decisions.

DB Sensei

DB Sensei is an AI-powered SQL tool with a user-friendly interface that helps developers generate, fix, explain, and format SQL queries. It is designed for developers, database administrators, and students who want faster results and stronger database skills.

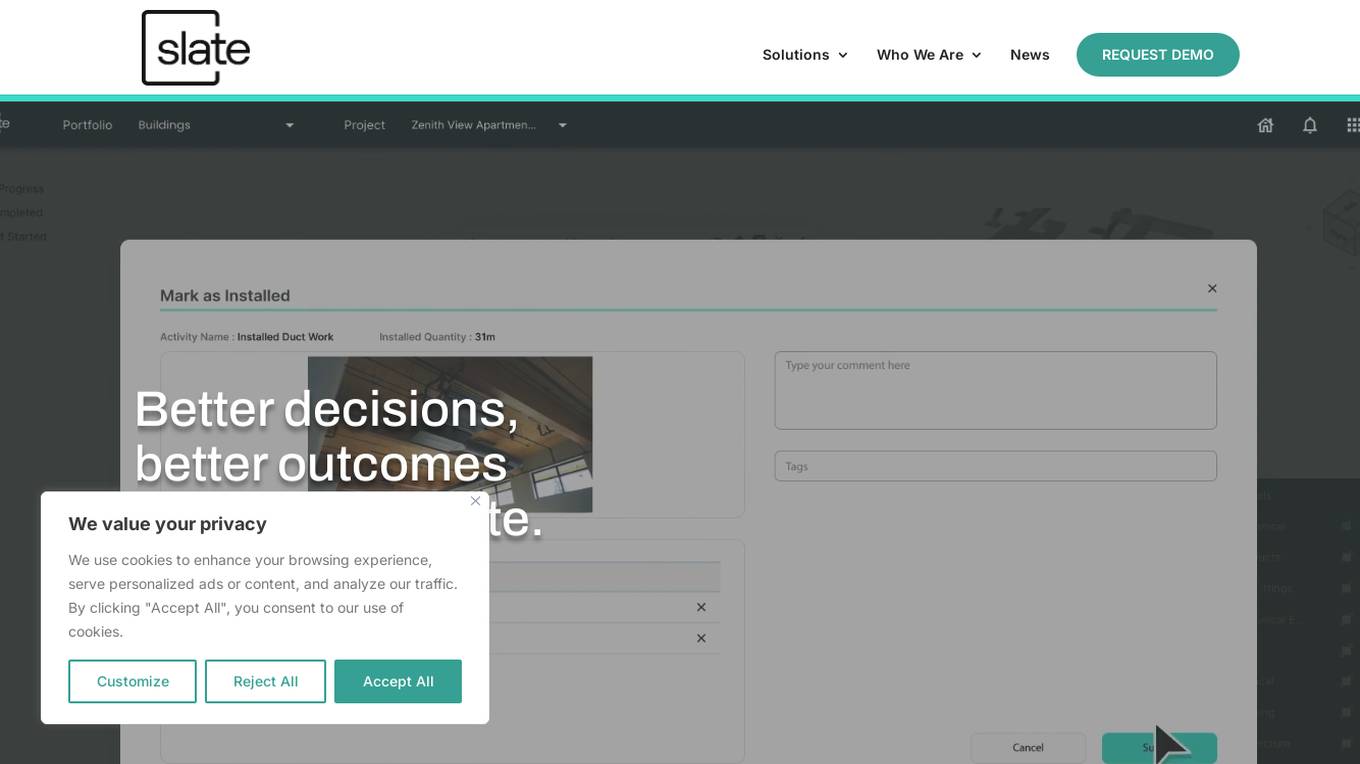

Slate Technologies Solutions

Slate Technologies Solutions is AI-powered data analytics software for construction that combines predictive, generative, and conversational AI. It connects and contextualizes information in existing data sources so that users can query it, interact with it, and make decisions based on data-driven insights and recommendations. Slate targets real-world construction problems by turning unstructured information into actionable insights. According to the vendor, it improves operational efficiency, provides real-time progress reporting, and helps teams make better decisions, improving profitability on construction projects.

Eventual

Eventual builds data-processing technology for multimodal data. Its query engine, Daft, simplifies processing of images, video, audio, and text, sparing engineers from managing complex distributed systems. By handling messy real-world data, Eventual aims to make it possible to build AI systems that were previously impractical. The company says its technology is used by Amazon, Mobileye, and CloudKitchens to process petabytes of data daily.

WizGenerator

WizGenerator is a free AI tool with a wide range of generators for writing, creativity, marketing, social media, business, and lifestyle needs. Its tools include a Random Number Generator, Analogy Generator, Instagram Captions Generator, Instagram Hashtag Generator, Google Search Query Generator, and Outfit Description Generator. Access is free for life with no subscription, and the site says its optimized AI models return quick, accurate results. WizGenerator does not save generated content, and the text it produces may be used commercially.

Keep

Keep is an open-source AIOps platform for managing alerts and events at scale. It offers enrichment, workflows, a single pane of glass, and more than 90 integrations. Keep is aimed at teams handling alerts in complex environments and applies AI to IT operations. It provides integrations with monitoring systems, advanced querying, a workflow engine, and AIOps features for enterprise-level alert management. Keep is maintained by a community of 'Keepers' and integrates with existing IT operations tools to streamline alert management and reduce alert fatigue.

Helicone

Helicone is an open-source observability platform for developers, covering logging, monitoring, and debugging. The project claims sub-millisecond latency impact, 100% log coverage, and industry-leading query times, and it is built for production workloads. Helicone runs on Cloudflare Workers for low latency and high reliability and reports 99.99% uptime. Its features include prompt management, risk-free experimentation, prompt security, and tools for monitoring, analyzing, and managing requests. Helicone says it is trusted by thousands of companies and developers.

Pandalyst

Pandalyst is an AI-powered tool that helps users write SQL queries faster. It offers an intuitive interface and uses AI to generate efficient, error-free SQL queries regardless of the user's skill level. Pandalyst suits both SQL beginners and experienced users and runs in a web browser on any device. It prioritizes data security and, according to the vendor, does not store any user data.

2 Open-Source AI Tools

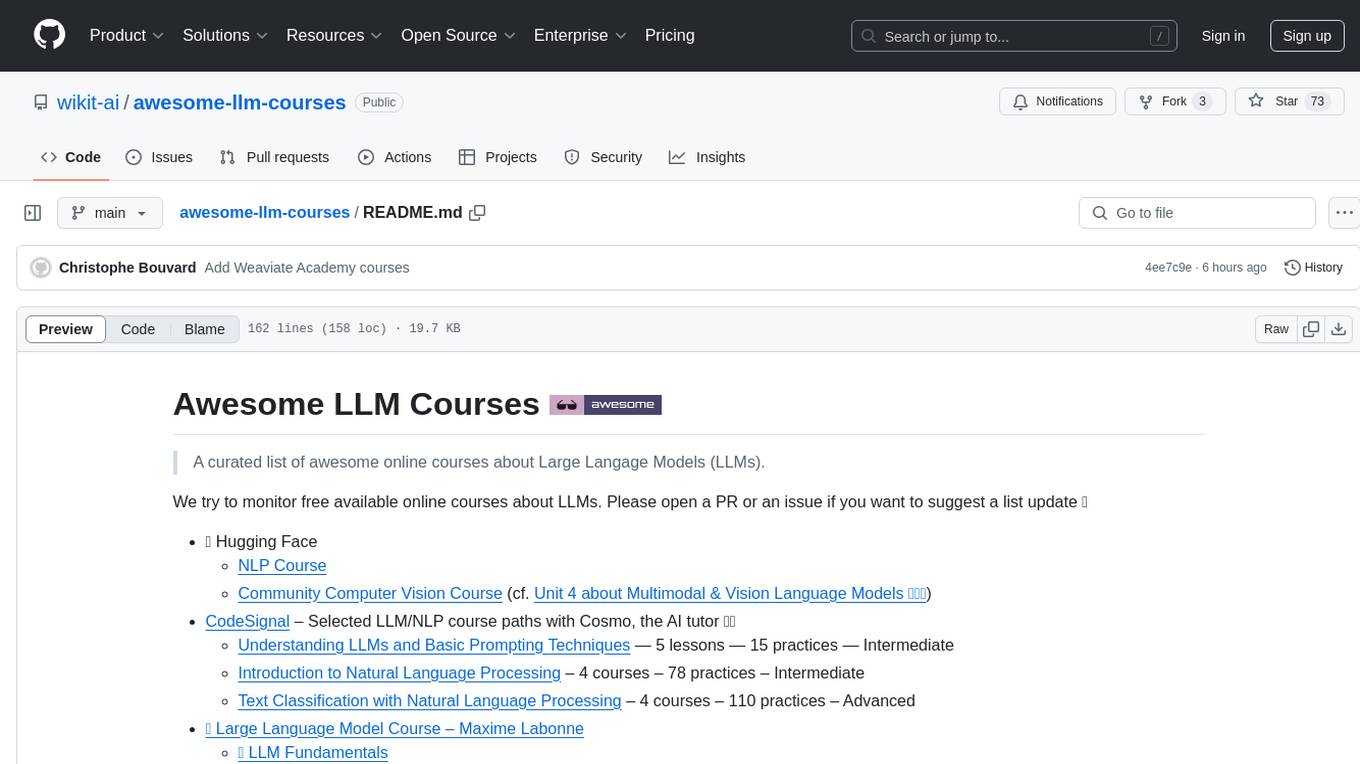

awesome-llm-courses

Awesome LLM Courses is a curated list of online courses on large language models (LLMs). The repository collects freely available courses covering LLM fundamentals, engineering, and applications, for anyone interested in natural language processing, AI development, and machine learning. It includes courses from platforms such as Hugging Face, Udacity, DeepLearning.AI, Cohere, and DataCamp, with topics ranging from pretraining LLMs to building AI applications with them. The list serves both beginners learning the basics of LLMs and intermediate developers interested in advanced topics such as prompt engineering and generative AI.

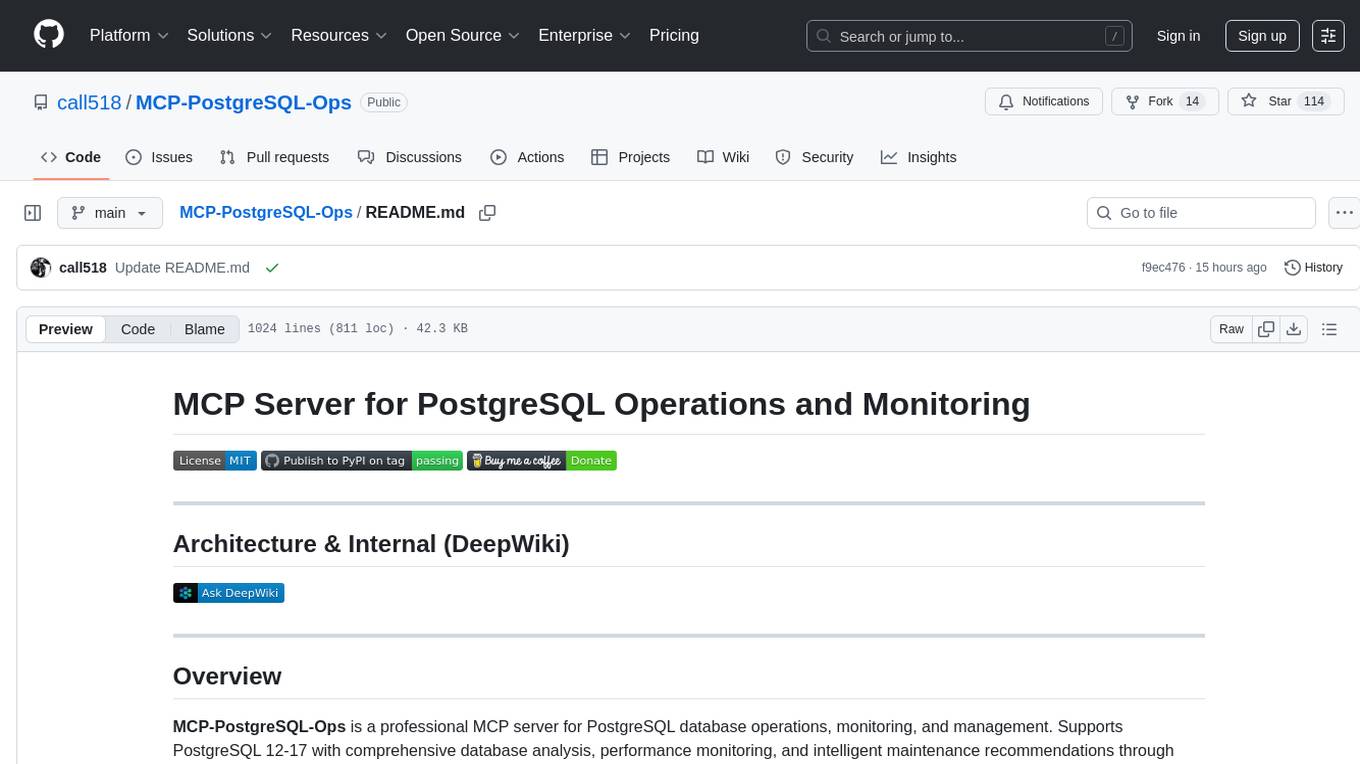

MCP-PostgreSQL-Ops

MCP-PostgreSQL-Ops is a repository of scripts and tools for managing and optimizing PostgreSQL databases. It automates common administration tasks such as backup and restore, performance tuning, and monitoring, which makes it easier for administrators to maintain their instances and improve the performance and reliability of their PostgreSQL deployments.

20 OpenAI GPTs

Big Query SQL Query Optimizer

Expert in brief, direct SQL queries for BigQuery, with a casual, professional tone.

Code Companion

A helpful and knowledgeable programming assistant for coding queries and guidance.

Search Query Optimizer

Create the most effective database or search engine queries using keywords, truncation, and Boolean operators!

Negative Keyword Hunter

I'm a pro paid search tool that finds negative keywords for you from Google Ads search query data. I can quickly save you a lot of money in Google paid search. Let's have a SEM party.

GCP-BigQueryGPT

BigQueryGPT aids in mastering BigQuery SQL with concise, practical examples. Tailored for all skill levels, it simplifies complex queries, offering clear explanations and optimized solutions for efficient learning and query troubleshooting.

TradeComply

Import Export Compliance | Tariff Classification | Shipping Queries | Logistics & Supply Chain Solutions

TYPO3 GPT

Specialist for technical and editorial TYPO3 support. // FEATURES: Optional browsing via external api with 'web: search query' and optimized GitHub access.

CV & Resume ATS Optimize + 🔴Match-JOB🔴

Professional Resume & CV Assistant 📝 Optimize for ATS 🤖 Tailor to Job Descriptions 🎯 Compelling Content ✨ Interview Tips 💡