Best AI tools for Optimize Architecture

20 - AI tool Sites

Growcado

Growcado is an AI-powered marketing automation platform that helps businesses unify data and content to engage and personalize customer experiences for better results. The platform leverages advanced AI analysis to predict customer segments accurately, extract customer preferences, and deliver customized experiences with precision and ease. With features like AI-powered segmentation, personalization engine, dynamic segmentation, intelligent content management, and real-time analytics, Growcado empowers businesses to optimize their marketing strategies and enhance customer engagement. The platform also offers seamless integrations, scalable architecture, and no-code customization for easy deployment of personalized marketing assets across multiple channels.

Vilosia

Vilosia is an AI-powered platform that helps medium and large enterprises with internal development teams visualize their software architecture, simplify migration, and improve system modularity. The platform uses Gen AI to automatically add event triggers to the codebase, enabling users to understand data flow, system dependencies, domain boundaries, and external APIs. Vilosia also offers AI workflow analysis to extract workflows from function call chains and identify database usage. Users can scan their codebase using a CLI client and CI/CD integration, and stay updated on new features through the newsletter.

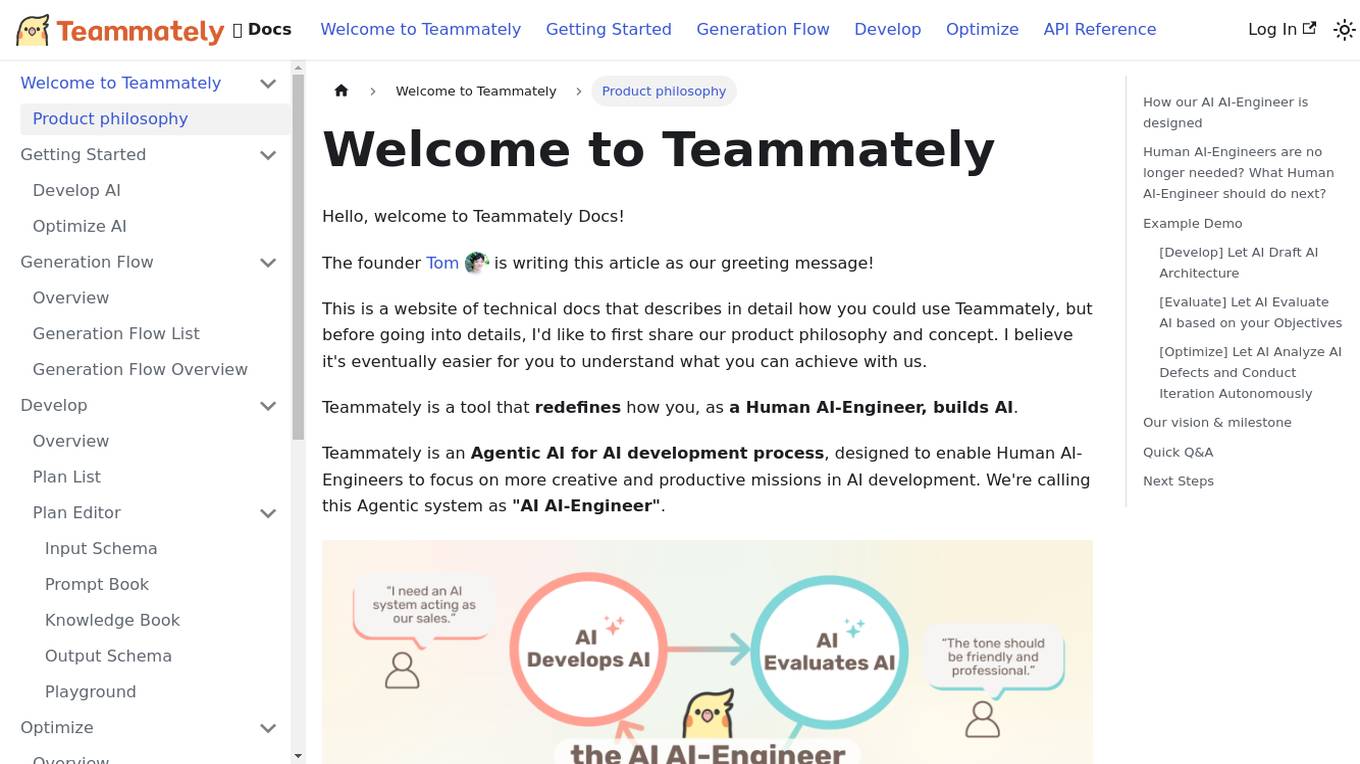

Teammately

Teammately is an AI tool that redefines how Human AI-Engineers build AI. It is an agentic AI for the AI development process, designed to let Human AI-Engineers focus on more creative and productive work in AI development. Teammately follows the best practices of Human LLM DevOps and offers features like Development Prompt Engineering, Knowledge Tuning, Evaluation, and Optimization to assist in the AI development process. The tool aims to revolutionize AI engineering by letting AI-based AI-Engineers handle technical tasks, while Human AI-Engineers focus on planning and aligning AI with human preferences and requirements.

Teraflow.ai

Teraflow.ai is an AI-enablement company that specializes in helping businesses adopt and scale their artificial intelligence models. They offer services in data engineering, ML engineering, AI/UX, and cloud architecture. Teraflow.ai assists clients in fixing data issues, boosting ML model performance, and integrating AI into legacy customer journeys. Their team of experts deploys solutions quickly and efficiently, using modern practices and hyperscaler technology. The company focuses on making AI work by providing fixed-price solutions, building team capabilities, and using agile scrum structures for innovation. Teraflow.ai also offers certifications in GCP and AWS, and partners with leading tech companies like HashiCorp, AWS, and Microsoft Azure.

Synthetic Users

Synthetic Users is an AI-powered user research tool that allows users to conduct user and market research without the need for recruitment. The tool leverages advanced AI architecture to create human-like AI participants for in-depth interviews and surveys. By simulating real human interactions, Synthetic Users provides valuable insights for various applications, helping users optimize user journeys, prioritize product roadmaps, and enhance product discovery. The tool offers a multi-agent framework, proprietary data integration, and continuous learning capabilities to ensure relevant and reflective data outputs.

NeuReality

NeuReality is an AI-centric solution designed to democratize AI adoption by providing purpose-built tools for deploying and scaling inference workflows. Their innovative AI-centric architecture combines hardware and software components to optimize performance and scalability. The platform offers a one-stop shop for AI inference, addressing barriers to AI adoption and streamlining computational processes. NeuReality's tools enable users to deploy, afford, use, and manage AI more efficiently, making AI easy and accessible for a wide range of applications.

FuriosaAI

FuriosaAI is an AI application that offers RNGD hardware for LLM and multimodal workloads, as well as WARBOY for computer vision. It provides a comprehensive developer experience through the Furiosa SDK, Model Zoo, and Dev Support. The application focuses on efficient AI inference, high-performance LLM and multimodal deployment capabilities, and sustainable mass adoption of AI. FuriosaAI features the Tensor Contraction Processor architecture, software for streamlined LLM deployment, and robust ecosystem support. It aims to deliver powerful and efficient deep learning acceleration while ensuring future-proof programmability and efficiency.

Launch Consulting Group

Launch Consulting Group is an AI and digital transformation consulting firm that empowers organizations to embrace AI transformation. They offer services such as AI guidance, predictive analytics, data architecture, and data governance to help businesses make smarter decisions, streamline workflows, and optimize performance. With a team of over 1200 Navigators worldwide, Launch Consulting Group is dedicated to helping businesses across various sectors leverage the power of artificial intelligence for success.

DevSecCops

DevSecCops is an AI-driven automation platform designed to revolutionize DevSecOps processes. The platform offers solutions for cloud optimization, machine learning operations, data engineering, application modernization, infrastructure monitoring, security, compliance, and more. With features like one-click infrastructure security scan, AI engine security fixes, compliance readiness using AI engine, and observability, DevSecCops aims to enhance developer productivity, reduce cloud costs, and ensure secure and compliant infrastructure management. The platform leverages AI technology to identify and resolve security issues swiftly, optimize AI workflows, and provide cost-saving techniques for cloud architecture.

Cambricon

Cambricon is an AI technology company that specializes in developing intelligent acceleration cards and systems. They offer a range of products including cloud AI acceleration cards, edge AI chips, and intelligent processing units. Cambricon's advanced chiplet technology and MLUarch03 architecture provide high-performance AI solutions for training and inference tasks. The company is dedicated to advancing the AI industry through innovative hardware and software platforms.

Weaviate

Weaviate is an AI-native database that developers love. It offers a feature-rich vector database trusted by AI innovators, empowering AI-native builders to create AI-powered search, retrieval augmented generation, and agentic AI applications. Weaviate simplifies the process of building production-ready AI applications by providing seamless model integration, pre-built database agents, and language-agnostic SDKs for easy development. With billion-scale architecture and enterprise-ready deployment options, Weaviate enables developers to scale seamlessly, deploy anywhere, and meet enterprise requirements. The platform is designed to help AI builders write less custom code, optimize costs, and build AI-native apps faster.

Provectus AI Solutions

Provectus is an Artificial Intelligence consultancy and solutions provider that helps businesses achieve their objectives through AI. They offer AI solutions that can be integrated into organizations through use case and platform approaches. Their solutions differentiate themselves by offering no license fees, open and certified architecture, cloud-native but vendor-agnostic solutions, turnkey solutions, and AI consulting and customization services. Provectus has successfully transformed industries such as insurance underwriting, digital banking, retail, healthcare, and manufacturing through their Generative AI technology.

Contentful

Contentful is a next-gen digital experience platform that offers a modular and composable architecture to manage and deliver personalized content efficiently. It provides AI capabilities for content creation, localization, and personalization, enabling users to drive higher omnichannel engagement and optimize performance at a granular level. With features like centralized content management, faster workflows, and no-code personalization tools, Contentful empowers growth teams to create consistent, on-brand experiences across multiple channels and regions. The platform is user-friendly, allowing marketers to tweak, test, and tailor experiences in real time without developer assistance.

EvolveLab

EvolveLab is a digital solutions provider specializing in BIM management and app development for the AEC (Architecture, Engineering, and Construction) industry. They offer a range of powerful apps and services designed to empower architects, engineers, and contractors to streamline their workflows and bring their ideas to life more efficiently. With a focus on data-driven design and AI technology, EvolveLab's innovative tools help users enhance productivity and turn concepts into reality.

LogicMonitor

LogicMonitor is a cloud-based infrastructure monitoring platform that provides real-time insights and automation for comprehensive, seamless monitoring with an agentless architecture. It offers a wide range of features including infrastructure monitoring, network monitoring, server monitoring, remote monitoring, virtual machine monitoring, SD-WAN monitoring, database monitoring, storage monitoring, configuration monitoring, cloud monitoring, container monitoring, AWS monitoring, GCP monitoring, Azure monitoring, digital experience SaaS monitoring, website monitoring, APM, AIOps, Dexda integrations, security dashboards, and platform demo logs. LogicMonitor's AI-driven hybrid observability helps organizations simplify complex IT ecosystems, accelerate incident response, and thrive in the digital landscape.

Pega

Pega is an enterprise AI decisioning and workflow automation platform that empowers organizations to unlock business-transforming outcomes with real-time optimization. Clients use Pega's AI capabilities to personalize engagement, automate customer service, and streamline operations. With a scalable and flexible architecture, Pega helps enterprises meet current customer demands while continuously transforming for the future.

Coram AI

Coram AI is a modern business video security platform that offers AI-powered solutions for various industries such as warehouse, manufacturing, healthcare, education, and more. It provides advanced features like gun detection, productivity alerts, facial recognition, and safety alerts to enhance security and operations. Coram AI's flexible architecture allows users to seamlessly integrate with any IP camera and scale effortlessly to meet their demands. With natural language search capabilities, users can quickly find relevant footage and improve decision-making. Trusted by local businesses and Fortune 500 companies, Coram AI delivers real business value through innovative AI tools and reliable customer support.

SvelteLaunch

SvelteLaunch is a SaaS/AI boilerplate and development-as-a-service agency that helps users launch their next SaaS, AI, or business app fast. It offers a comprehensive Svelte 5 boilerplate and a full-service development agency to build cutting-edge digital experiences. The platform provides a technology stack with features like the SvelteLaunch CLI for web app architecture, AI capabilities for emails, payments, authentication, databases, styles, and SEO optimization. Users can save time with various tools like TailwindCSS, OpenAI, and more. SvelteLaunch aims to save months of development time and enable users to ship high-quality SaaS apps efficiently.

Architechtures

Architechtures is a generative AI-powered building design platform that helps architects and real estate developers design optimal residential developments in minutes. The platform uses AI technology to provide instant insights, regulatory confidence, and rapid iterations for architectural projects. Users can input design criteria, model solutions in 2D and 3D, and receive real-time architectural solutions that best fit their standards. Architechtures facilitates a collaborative design process between users and Artificial Intelligence, enabling efficient decision-making and control over design aspects.

Novita AI

Novita AI is an AI cloud platform offering Model APIs, Serverless, and GPU Instance services in a cost-effective and integrated manner to accelerate AI businesses. It provides optimized models for high-quality dialogue use cases, full spectrum AI APIs for image, video, audio, and LLM applications, serverless auto-scaling based on demand, and customizable GPU solutions for complex AI tasks. The platform also includes a Startup Program, 24/7 service support, and has received positive feedback for its reasonable pricing and stable services.

0 - Open Source AI Tools

20 - OpenAI GPTs

Technical Architecture Advisor

Guides the design, implementation, and maintenance of technical architecture.

Cloud Architecture Advisor

Guides cloud strategy and architecture to optimize business operations.

Network Architecture Advisor

Designs and optimizes an organization's network architecture to ensure seamless operations.

Triage Management and Pipeline Architecture

Strategic advisor for triage management and pipeline optimization in business operations.

MentorFront Architect

Expert in microfrontend architecture, guiding through trends, best practices, and challenges of complex, scalable UI integrations.

Software Architect

Expert in software architecture, ensuring integrity and scalability through best practices.

Data Architect

Database Developer assisting with SQL/NoSQL, architecture, and optimization.

Floor Plan Optimization Assistant

Helps optimize floor plans. For a better experience, please visit collov.ai

Code Architect AI

First discusses assistant details, then implements tailored code solutions.

Cloudwise Consultant

Expert in cloud-native solutions, provides tailored tech advice and cost estimates.

Cloud Computing

Expert in cloud computing, offering insights on services, security, and infrastructure.

Back-end Development Advisor

Drives efficient back-end processes through innovative coding and software solutions.