Best AI tools for Manage Data Infrastructure

20 - AI tool Sites

Labelbox

Labelbox is a data factory platform that helps AI teams manage data labeling, train models, and create better data on an internet-scale RLHF platform. It offers an all-in-one solution combining tooling and services, powered by a global community of domain experts. Labelbox operates global data labeling infrastructure and operations for AI workloads, providing a network of human experts for data labeling across various domains. The platform also includes AI-assisted alignment, data curation, model training, and labeling services. Customers achieve breakthroughs with high-quality data through Labelbox.

500 supabaseUrl

500 supabaseUrl is a cloud-based database service that provides a fully managed, scalable, and secure way to store and manage data. Its simple interface is designed to make creating, managing, and querying databases straightforward. The service is built to scale to demanding workloads, and because it is fully managed, you don't have to worry about the underlying infrastructure or maintenance tasks.

Context Data

Context Data is an enterprise data platform designed for Generative AI applications. It enables organizations to build AI apps without the need to manage vector databases, pipelines, and infrastructure. The platform empowers AI teams to create mission-critical applications by simplifying the process of building and managing complex workflows. Context Data also provides real-time data processing capabilities and seamless vector data processing. It offers features such as data catalog ontology, semantic transformations, and the ability to connect to major vector databases. The platform is ideal for industries like financial services, healthcare, real estate, and shipping & supply chain.

Baseten

Baseten is a machine learning infrastructure platform that gives data scientists and engineers a unified place to build, train, and deploy machine learning models. It offers a range of features to simplify the ML lifecycle, including data preparation, model training, and deployment. Baseten also provides a marketplace of pre-built models and components that can be used to accelerate the development of ML applications.

MOSTLY AI Platform

MOSTLY AI is a synthetic data generation platform that is free to use and that the vendor describes as the world's most accurate. The website provides detailed information on synthetic data and data anonymization, and the platform includes a Python client for data generation. It is built with privacy by design, allowing users to create fully anonymous synthetic data from original data. It supports various AI/ML use cases, self-service analytics, testing & QA, and data sharing, and is designed to scale for enterprise organizations.

HIVE Digital Technologies

HIVE Digital Technologies is a company specializing in building and operating cutting-edge data centers, with a focus on Bitcoin mining and advancing Web3, AI, and HPC technologies. They offer cloud services, operate data centers in Canada, Iceland, and Sweden, and have a fleet of industrial GPUs for AI applications. The company is known for its expertise in digital infrastructure and commitment to using renewable energy sources.

Reaktr.ai

Reaktr.ai is an AI-driven technology solutions provider that offers advanced AI automation services, predictive analytics, and sophisticated machine learning algorithms to help enterprises operate with agility and precision. The platform equips businesses with intelligent automation, enhanced security, and immersive experiences to drive growth, efficiency, and innovation. Reaktr.ai specializes in cloud management, cybersecurity, and AI services, providing solutions for data infrastructure, security testing, compliance, and more. With a commitment to redefining how enterprises operate, Reaktr.ai leverages AI capabilities to help businesses prosper in an AI-ready landscape.

Relyance AI

Relyance AI is a platform that offers 360 Data Governance and Trust solutions. It helps businesses safeguard against fines and reputation damage while enhancing customer trust to drive business growth. The platform provides visibility into enterprise-wide data processing, ensuring compliance with regulatory and customer obligations. Relyance AI uses AI-powered risk insights to proactively identify and address risks, offering a unified trust and governance infrastructure. It offers features such as data inventory and mapping, automated assessments, security posture management, and vendor risk management. The platform is designed to streamline data governance processes, reduce costs, and improve operational efficiency.

Goptimise

Goptimise is a no-code, AI-powered backend builder that helps developers create scalable and intuitive backend solutions. It provides dedicated infrastructure with built-in security and scalability. Goptimise simplifies software rollouts with automated one-click deployment. It also provides smart API suggestions, using AI algorithms to recommend API designs tailored to each project and speed up development. Its visual interface and integrations are designed for ease of use, and its customizable workspaces allow for dynamic data management and a personalized development experience.

DDN A³I

DDN A³I is an AI storage platform that maximizes business differentiation and market leadership through data utilization, AI, and advanced analytics. It offers comprehensive enterprise features, easy deployment and management, predictable scaling, data protection, and high performance. DDN A³I enables organizations to accelerate insights, reduce costs, and optimize GPU productivity for faster results.

BuildAi

BuildAi is an AI tool that aims to provide the lowest-cost GPU cloud for AI training on the market. The platform is powered by renewable energy, enabling companies to train AI models at a significantly reduced cost. BuildAi offers interruptible, short-term reserved capacity, and high-uptime pricing options. The platform focuses on optimizing infrastructure for training and fine-tuning machine learning models, not inference, and aims to decrease the impact of computing on the planet. With features like data transfer support, SSH access, and monitoring tools, BuildAi offers a comprehensive solution for ML teams.

Trieve

Trieve is an AI-first infrastructure API that offers search, recommendations, and RAG capabilities by combining language models with tools for fine-tuning ranking and relevance. It helps companies build unfair competitive advantages through their discovery experiences, powering over 30,000 discovery experiences across various categories. Trieve supports semantic vector search, BM25 & SPLADE full-text search, hybrid search, merchandising & relevance tuning, and sub-sentence highlighting. The platform is built on open-source models, ensuring data privacy, and offers self-hostable options for sensitive data and maximum performance.

Cordel Connect

Cordel Connect is an open-data inspection management platform that enables the storage, management, visualization, and intelligent analysis of railway inspection data. It offers powerful, precise, unattended sensing systems and data workflows to help railways automate high-frequency, high-precision inspections from any rail vehicle. The platform consolidates all survey and inspection data into a single source of truth, eliminating data silos and integrating with existing systems. Cordel Connect utilizes powerful AI to automate the infrastructure inspection process, delivering improved inspection insights and compliance. It also provides modules for managing surveys, asset inspections, and safety compliance assessments tailored to network standards.

Nscale

Nscale is a full-stack, scalable, and sustainable AI cloud platform that offers a wide range of AI services and solutions. It provides services for developing, training, tuning, and deploying AI models using on-demand services. Nscale also offers serverless inference API endpoints, fine-tuning capabilities, private cloud solutions, and various GPU clusters engineered for AI. The platform aims to simplify the journey from AI model development to production, offering a marketplace for AI/ML tools and resources. Nscale's infrastructure includes data centers powered by renewable energy, high-performance GPU nodes, and optimized networking and storage solutions.

Anyscale

Anyscale is a company that provides a scalable compute platform for AI and Python applications. Their platform includes a serverless API for serving and fine-tuning open LLMs, a private cloud solution for data privacy and governance, and an open source framework for training, batch, and real-time workloads. Anyscale's platform is used by companies such as OpenAI, Uber, and Spotify to power their AI workloads.

Gavel

Gavel is a legal document automation and intake software designed for legal professionals. It offers a range of features to help lawyers and law firms automate tasks, streamline workflows, and improve efficiency. Blueprint, Gavel's AI-enabled onboarding feature, streamlines onboarding without accessing any client data. The software also includes features such as secure client collaboration, integrated payments, and custom workflow creation. Gavel is suitable for legal practices of all sizes and practice areas, from solo practitioners to large firms.

OmniAI

OmniAI is an AI tool that allows teams to deploy AI applications on their existing infrastructure. It provides a unified API experience for building AI applications and offers a wide selection of industry-leading models. With models such as Llama 3, Claude 3, Mistral Large, and AWS Titan, OmniAI supports tasks such as natural language understanding, generation, safety, ethical behavior, and context retention. It also enables users to deploy and query the latest AI models quickly and easily within their virtual private cloud environment.

DataRobot

DataRobot is a leading provider of AI cloud platforms. It offers a range of AI tools and services to help businesses build, deploy, and manage AI models. DataRobot's platform is designed to make AI accessible to businesses of all sizes, regardless of their level of AI expertise. DataRobot's platform includes a variety of features to help businesses build and deploy AI models, including:

* A drag-and-drop interface that makes it easy to build AI models, even for users with no coding experience.
* A library of pre-built AI models that can be used to solve common business problems.
* A set of tools to help businesses monitor and manage their AI models.
* A team of AI experts who can provide support and guidance to businesses using the platform.

Domino Data Lab

Domino Data Lab is an enterprise AI platform that enables users to build, deploy, and manage AI models across any environment. It fosters collaboration, establishes best practices, and ensures governance while reducing costs. The platform provides access to a broad ecosystem of open source and commercial tools, and infrastructure, allowing users to accelerate and scale AI impact. Domino serves as a central hub for AI operations and knowledge, offering integrated workflows, automation, and hybrid multicloud capabilities. It helps users optimize compute utilization, enforce compliance, and centralize knowledge across teams.

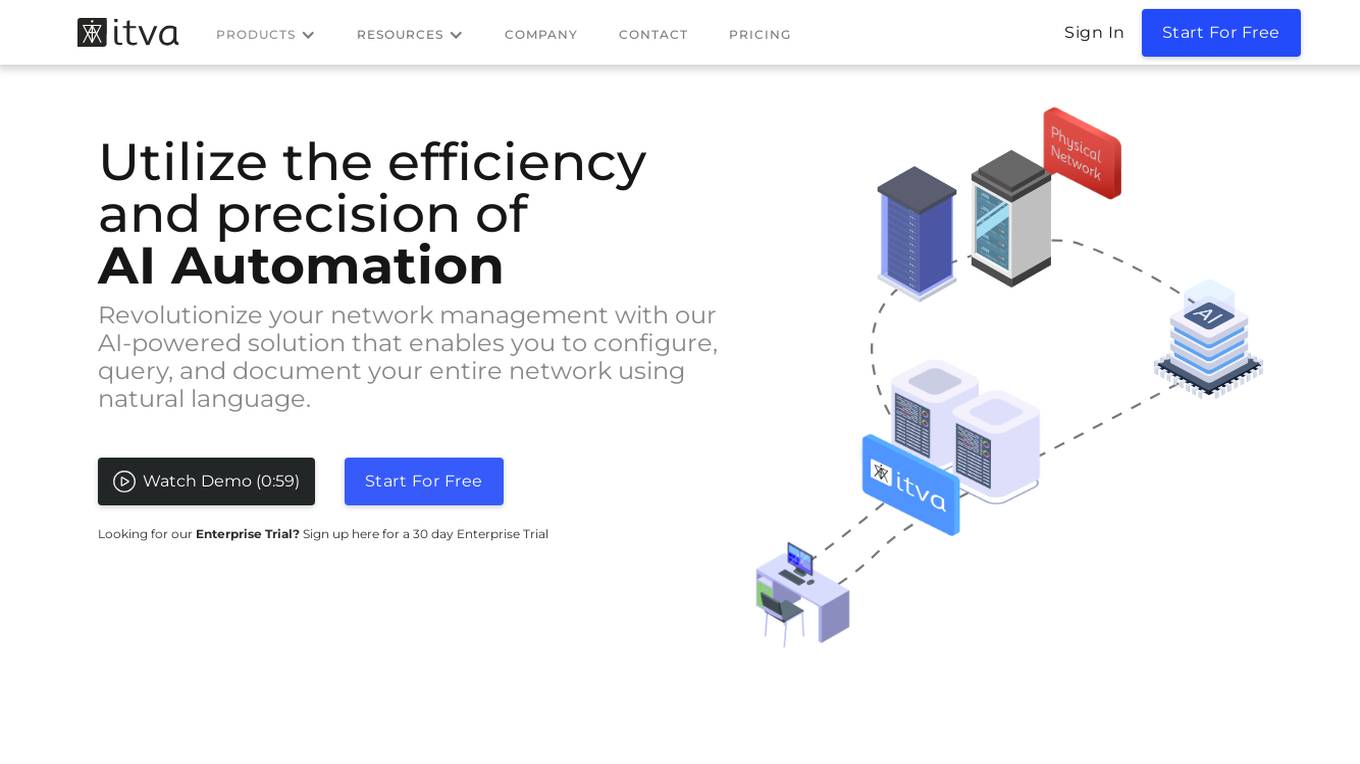

ITVA

ITVA is an AI automation tool for network infrastructure products that revolutionizes network management by enabling users to configure, query, and document their network using natural language. It offers features such as rapid configuration deployment, network diagnostics acceleration, automated diagram generation, and modernized IP address management. ITVA's unique solution securely connects to networks, combining real-time data with a proprietary dataset curated by veteran engineers. The tool ensures unparalleled accuracy and insights through its real-time data pipeline and on-demand dynamic analysis capabilities.

0 - Open Source AI Tools

20 - OpenAI GPTs

Data Engineer Consultant

Guides you through data engineering tasks with a focus on practical solutions.

ML Engineer GPT

I'm a Python and PyTorch expert with knowledge of ML infrastructure requirements, ready to help you build and scale your ML projects.

Cloud Computing

Expert in cloud computing, offering insights on services, security, and infrastructure.

Nimbus Navigator

Cloud engineering expert offering guidance on cloud tech, projects, careers, and industry trends.

Azure Mentor

Expert in Azure's latest services, including Application Insights, API Management, and more.

Cloudwise Consultant

Expert in cloud-native solutions, provides tailored tech advice and cost estimates.

Awesome-Selfhosted

Recommends self-hosted IT solutions, tailored for professionals (from https://awesome-selfhosted.net/)

👑 Data Privacy for PI & Security Firms 👑

Private Investigators and Security Firms, given the nature of their work, handle highly sensitive information and must maintain strict confidentiality and data privacy standards.

👑 Data Privacy for Watch & Jewelry Designers 👑

Watchmakers and Jewelry Designers are high-end businesses that deal with valuable items and clients' personal details, making data privacy and security paramount.

👑 Data Privacy for Event Management 👑

Event Management and Ticketing Services handle personal data such as names, contact details, and payment information for event registrations and ticket purchases.

DataKitchen DataOps and Data Observability GPT

A specialist in DataOps and Data Observability, aiding in data management and monitoring.

Data Governance Advisor

Ensures data accuracy, consistency, and security across the organization.

👑 Data Privacy for Home Inspection & Appraisal 👑

Home Inspection and Appraisal Services have access to personal property and related information, requiring them to be vigilant about data privacy.