Best AI tools for "Interoperate With PyTorch"

6 - AI Tool Sites

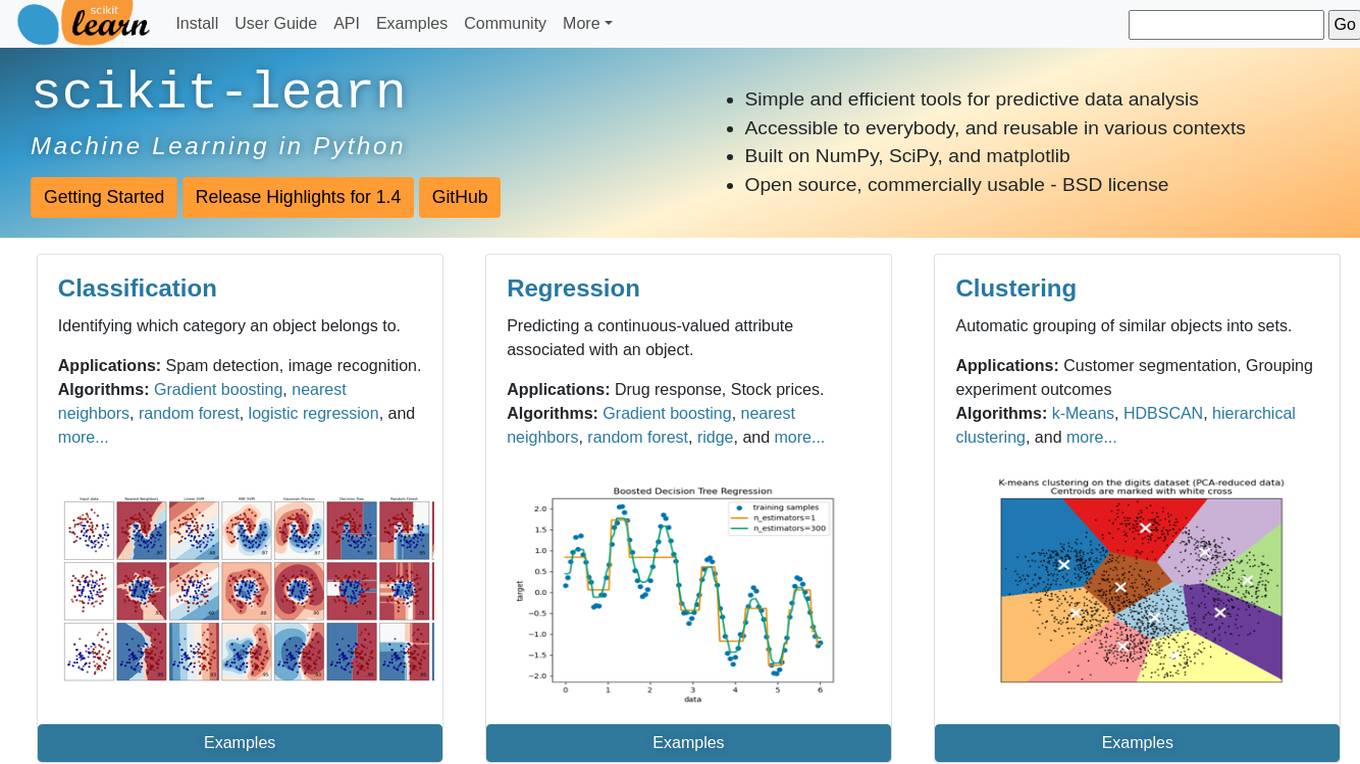

scikit-learn

Scikit-learn is a free software machine learning library for the Python programming language. It features various classification, regression and clustering algorithms including support vector machines, random forests, gradient boosting, k-means and DBSCAN, and is designed to interoperate with the Python numerical and scientific libraries NumPy and SciPy.
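As a rough illustration of that NumPy interoperability, the sketch below passes a plain NumPy array to one of the clustering algorithms mentioned above (k-means). The toy data and the variable names are assumptions made for this example, not something taken from the scikit-learn documentation.

```python
import numpy as np
from sklearn.cluster import KMeans

# Toy data (hypothetical): two well-separated groups of 2-D points,
# stored as an ordinary NumPy array.
points = np.array([
    [0.0, 0.1],
    [0.2, 0.0],
    [10.0, 10.1],
    [10.2, 9.9],
])

# The estimator accepts the NumPy array directly.
model = KMeans(n_clusters=2, n_init=10, random_state=0)
labels = model.fit_predict(points)

# Both the labels and the fitted cluster centers come back as NumPy arrays.
centers = model.cluster_centers_
print(type(labels).__name__, labels.shape, centers.shape)
```

Which cluster gets which integer label is arbitrary, so only the grouping matters: the first two points should share a label, and the last two should share the other.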

MagicSchool.ai

MagicSchool.ai is an AI-powered platform designed specifically for educators and students. It offers a comprehensive suite of 60+ AI tools to help teachers with lesson planning, differentiation, assessment writing, IEP writing, clear communication, and more. MagicSchool.ai is easy to use, with an intuitive interface and built-in training resources. It is also interoperable with popular LMS platforms and offers easy export options. MagicSchool.ai is committed to responsible AI for education, with a focus on safety, privacy, and compliance with FERPA and state privacy laws.

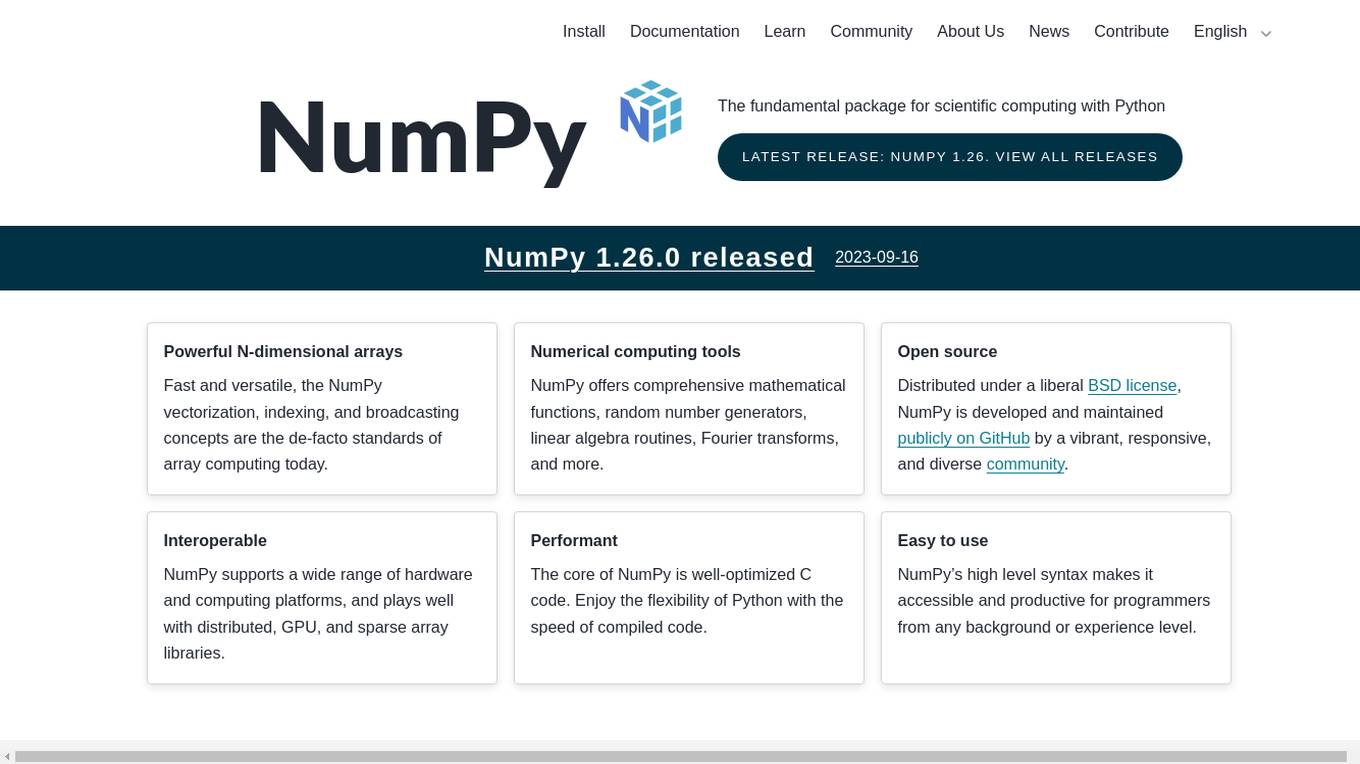

NumPy

NumPy is a library for the Python programming language, adding support for large, multi-dimensional arrays and high-level mathematical functions to perform operations on these arrays. It is the fundamental package for scientific computing with Python and is used in a wide range of applications, including data science, machine learning, and image processing. NumPy is open source and distributed under a liberal BSD license, and is developed and maintained publicly on GitHub by a vibrant, responsive, and diverse community.
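A small sketch of what "multi-dimensional arrays and high-level mathematical functions" can look like in practice. The array contents and variable names here are illustrative assumptions.

```python
import numpy as np

# A 3x4 array holding the values 0..11 as floats.
grid = np.arange(12, dtype=np.float64).reshape(3, 4)

# Mean of each column (reduces over axis 0, leaving 4 values).
col_means = grid.mean(axis=0)

# Broadcasting: the length-4 vector is subtracted from every row.
centered = grid - col_means

# Euclidean norm of each centered row.
norms = np.linalg.norm(centered, axis=1)
print(centered.shape, norms)
```

The subtraction works without an explicit loop because NumPy broadcasts the column means across the rows of the array.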

AnthologyAI

AnthologyAI is an artificial intelligence consumer insights and predictions platform that provides access to compliant consumer data with real-time context sourced directly from consumers. The platform offers predictive models intended to help businesses forecast market dynamics and consumer behavior. It is available to both enterprises and startups through an API storefront for real-time interactions and through various data platforms for model aggregations. The platform is aimed at businesses across industries that want to understand consumer behavior, make data-driven decisions, and drive business outcomes.

Genies

Genies is an Avatar Technology Company that offers a platform for creating customizable, persistent, and interoperable avatars. The company leverages machine learning and computer graphics to enable limitless compatibility and customization for fashion, items, and avatars. Genies provides an Interoperable Framework and Traits Framework for developers to build personalized experiences based on user behaviors. Users can unlock virtual items and take them through the network of experiences. The platform aims to revolutionize communication by making avatars a daily method of interaction.

Futureverse

Futureverse is an AI and metaverse technology platform for developers building open, scalable, and interoperable apps, games, and experiences. The platform includes tools such as the FuturePass smart wallet SDK for user onboarding, the D.O.T. Asset Pipeline for instant 3D character generation, and an AI Gaming Platform for strategy sports games, among others. Futureverse also leads the development of The Root Network, a modular toolkit for scalable and secure metaverse experiences. Users can own, train, and trade unique artificial intelligence via digital Brains for use in content creation and world building.

1 - Open Source AI Tools

GrAIdient

GrAIdient is a framework designed to enable the development of deep learning models using the internal GPU of a Mac. It provides access to the graph of layers, allowing for unique model design with greater understanding, control, and reproducibility. The goal is to challenge the understanding of deep learning models, transitioning from black box to white box models. Key features include direct access to layers, native Mac GPU support, Swift language implementation, gradient checking, PyTorch interoperability, and more. The documentation covers main concepts, architecture, and examples. GrAIdient is MIT licensed.
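Gradient checking, one of the features listed above, generally means comparing a hand-derived gradient against a numerical estimate. The sketch below shows that general idea in plain Python using central finite differences. It is not GrAIdient's API (GrAIdient is implemented in Swift), and the loss function, helper names, and tolerance are all assumptions chosen for illustration.

```python
def loss(w, x, y):
    # Squared error of a tiny linear model: (w . x - y)^2
    pred = sum(wi * xi for wi, xi in zip(w, x))
    return (pred - y) ** 2


def analytic_grad(w, x, y):
    # Hand-derived gradient: d/dw_i = 2 * (w . x - y) * x_i
    pred = sum(wi * xi for wi, xi in zip(w, x))
    return [2.0 * (pred - y) * xi for xi in x]


def numeric_grad(w, x, y, eps=1e-6):
    # Central finite difference, perturbing one weight at a time.
    grads = []
    for i in range(len(w)):
        w_plus = list(w)
        w_minus = list(w)
        w_plus[i] += eps
        w_minus[i] -= eps
        grads.append((loss(w_plus, x, y) - loss(w_minus, x, y)) / (2.0 * eps))
    return grads


def max_relative_error(a, b):
    # Largest relative difference between two gradient vectors.
    return max(
        abs(ai - bi) / max(abs(ai) + abs(bi), 1e-12)
        for ai, bi in zip(a, b)
    )


w, x, y = [0.5, -1.5], [2.0, 3.0], 1.0
error = max_relative_error(analytic_grad(w, x, y), numeric_grad(w, x, y))
print("gradient check passed:", error < 1e-6)
```

A small relative error suggests the analytic gradient is implemented correctly; a large one usually points to a bug in the backward pass.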