Best AI Tools to Expose Corruption

9 - AI Tool Sites

DataLang

DataLang is a tool that lets you chat with your databases: you expose a specific set of data (defined with SQL) to train GPT, then query it in natural language. DataLang can also automatically make your SQL views available via an API, share them privately with your users, or make them public.

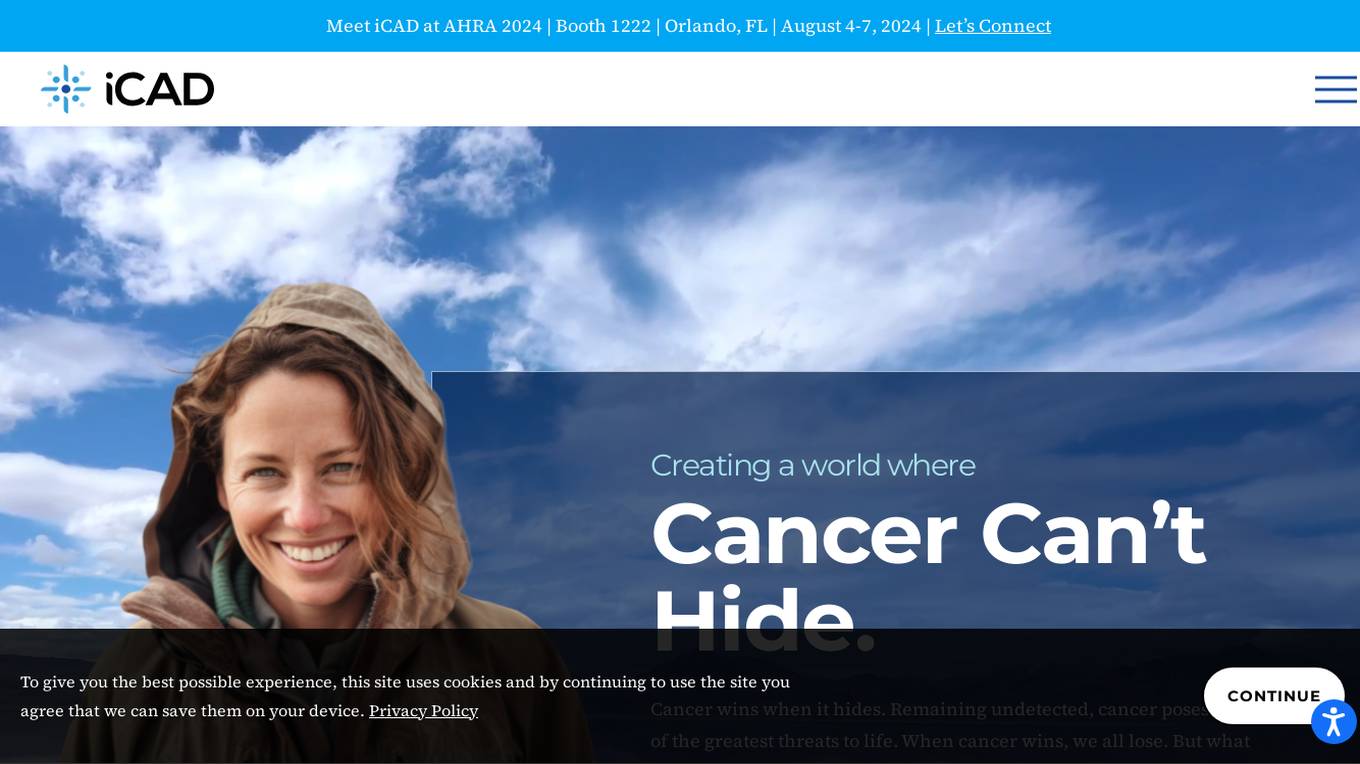
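
DataLang's implementation is not described here. As a rough, hypothetical sketch of what exposing a SQL view through an API involves, the Python below uses the standard-library `sqlite3` module to serialize a view's rows as the JSON payload a read-only endpoint might return. The `orders` table, the `sales_by_region` view, and the `view_as_json` helper are all invented for illustration; no web framework or DataLang API is assumed.

```python
import json
import sqlite3

# Hypothetical schema: a raw orders table plus a view that exposes only an
# aggregated slice of it (customer emails never leave the database).
SCHEMA = """
CREATE TABLE orders (
    id INTEGER PRIMARY KEY,
    customer_email TEXT,
    region TEXT,
    amount REAL
);
CREATE VIEW sales_by_region AS
    SELECT region, COUNT(*) AS order_count, SUM(amount) AS total_amount
    FROM orders
    GROUP BY region
    ORDER BY region;
"""


def view_as_json(conn: sqlite3.Connection, view_name: str) -> str:
    """Return every row of a view as a JSON array of objects, i.e. the
    payload a read-only API endpoint for that view could serve."""
    # Identifiers cannot be bound as SQL parameters, so only accept names
    # of views that actually exist; base tables are rejected.
    views = {
        name
        for (name,) in conn.execute(
            "SELECT name FROM sqlite_master WHERE type = 'view'"
        )
    }
    if view_name not in views:
        raise ValueError(f"unknown view: {view_name!r}")
    cur = conn.execute(f'SELECT * FROM "{view_name}"')
    columns = [col[0] for col in cur.description]
    return json.dumps([dict(zip(columns, row)) for row in cur.fetchall()])


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    conn.executemany(
        "INSERT INTO orders (customer_email, region, amount) VALUES (?, ?, ?)",
        [
            ("a@example.com", "EU", 10.0),
            ("b@example.com", "EU", 5.5),
            ("c@example.com", "US", 20.0),
        ],
    )
    print(view_as_json(conn, "sales_by_region"))
```

Restricting the endpoint to views rather than base tables is one way to keep sensitive columns (here, `customer_email`) out of what is shared.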

iCAD

iCAD is an AI-powered application for cancer detection, with a focus on breast cancer. The platform offers a suite of solutions, including Detection, Density Assessment, and Risk Evaluation, which iCAD says are backed by science, clinical evidence, and proven patient outcomes. iCAD's AI-powered solutions aim to reveal where cancer is hiding, providing certainty and peace of mind, with the ultimate goal of improving patient outcomes and saving more lives.

OpenBuckets

OpenBuckets is a web application that helps users find and secure open buckets in cloud storage systems. It offers a simple, efficient way to identify and protect sensitive data that may be exposed by misconfigured cloud storage settings. With OpenBuckets, users can scan their cloud storage accounts for publicly accessible buckets and take the necessary steps to safeguard their information.

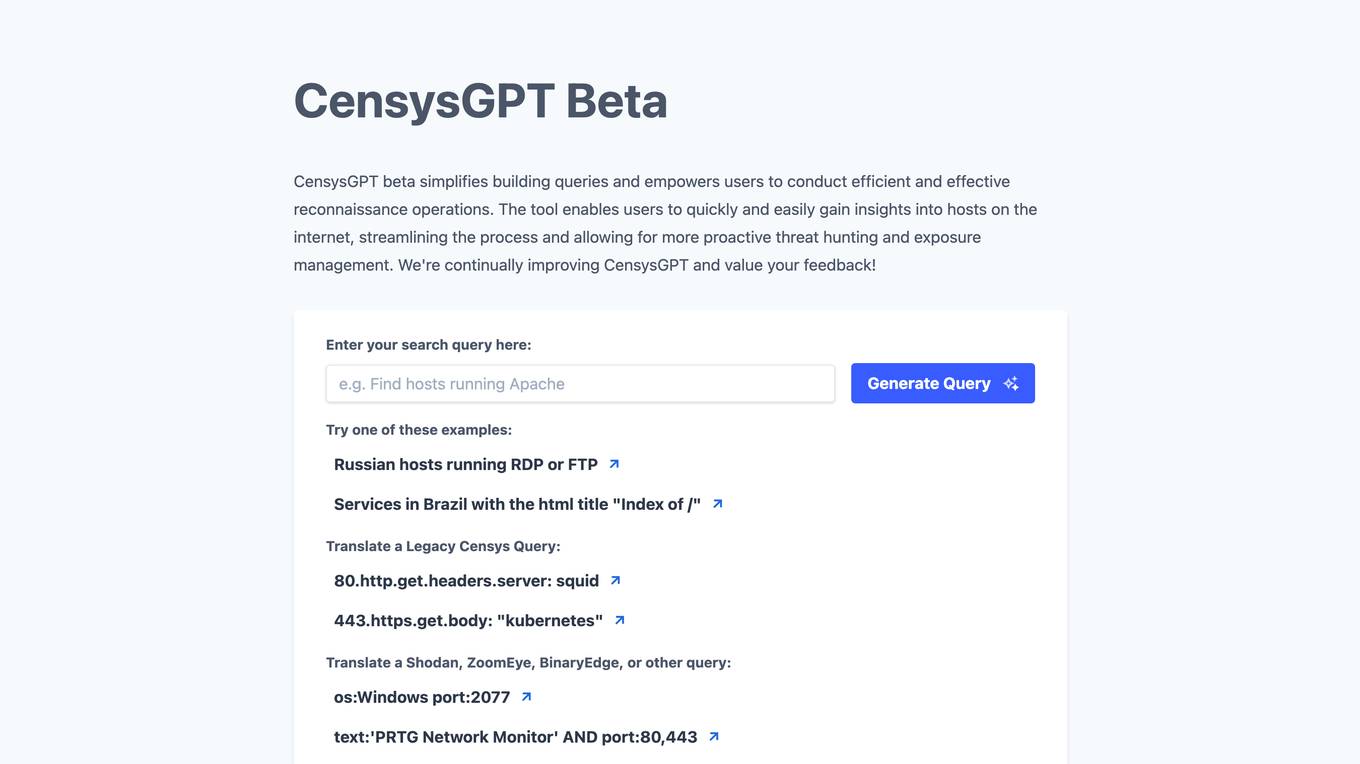
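
How OpenBuckets detects open buckets is not specified. One common misconfiguration such a tool could look for is a bucket policy that grants access to every principal. The sketch below, a hypothetical helper rather than OpenBuckets' method, inspects an AWS S3-style bucket policy document offline and returns the statements that allow access to `"*"`. It deliberately ignores conditions, ACLs, and account-level settings, so treat a hit as something to review rather than proof of exposure.

```python
import json


def _as_list(value):
    """Policy fields may hold a single value or a list of values."""
    return value if isinstance(value, list) else [value]


def public_statements(policy_json: str) -> list:
    """Return the Allow statements of an S3-style bucket policy whose
    principal is everyone ("*"), a common sign of an exposed bucket.

    Simplification: Condition blocks, NotPrincipal, ACLs and account-level
    Block Public Access settings are ignored, so results may over-report.
    """
    policy = json.loads(policy_json)
    flagged = []
    for stmt in _as_list(policy.get("Statement", [])):
        if stmt.get("Effect") != "Allow":
            continue
        principal = stmt.get("Principal")
        # "*" and {"AWS": "*"} (or a list containing "*") are both treated
        # here as granting access to anyone.
        is_public = principal == "*" or (
            isinstance(principal, dict)
            and "*" in _as_list(principal.get("AWS", []))
        )
        if is_public:
            flagged.append(stmt)
    return flagged


if __name__ == "__main__":
    example_policy = json.dumps({
        "Version": "2012-10-17",
        "Statement": [
            {"Sid": "PublicRead", "Effect": "Allow", "Principal": "*",
             "Action": "s3:GetObject",
             "Resource": "arn:aws:s3:::example-bucket/*"},
            {"Sid": "TeamOnly", "Effect": "Allow",
             "Principal": {"AWS": "arn:aws:iam::123456789012:root"},
             "Action": "s3:*",
             "Resource": "arn:aws:s3:::example-bucket"},
        ],
    })
    for stmt in public_statements(example_policy):
        print("publicly accessible:", stmt.get("Sid"), stmt.get("Action"))
```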

CensysGPT Beta

CensysGPT Beta is a tool that simplifies building search queries, helping users conduct reconnaissance more efficiently. It lets users quickly gain insights into hosts on the internet, supporting more proactive threat hunting and exposure management.

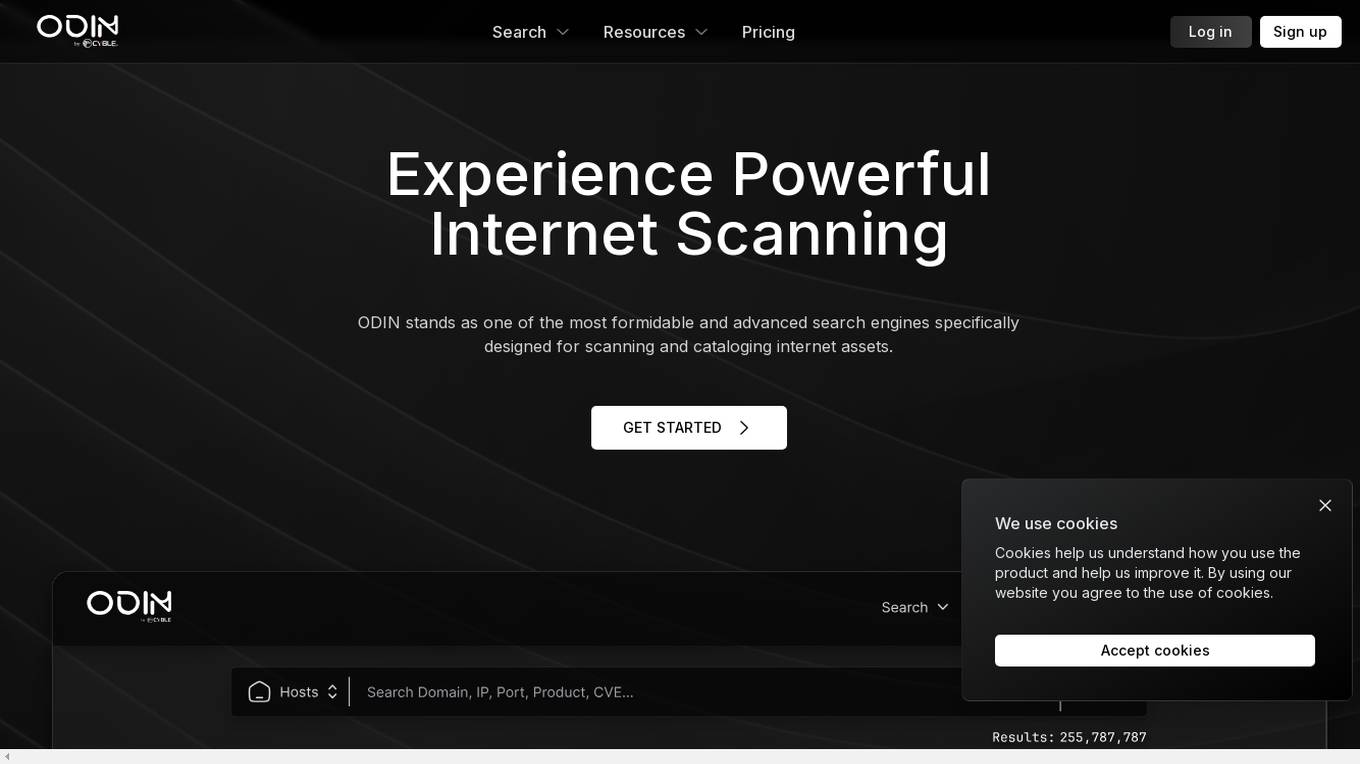

ODIN

ODIN is a powerful internet scanning search engine designed for scanning and cataloging internet assets. It offers enhanced scanning capabilities, fast refresh rates, and comprehensive visibility into open ports. With over 45 modules covering services such as HTTP, Elasticsearch, and Redis, ODIN enriches its data to provide accurate, up-to-date information. The application uses AI/ML algorithms to detect exposed buckets, files, and potential vulnerabilities. Users can perform granular searches, access exploit information, and integrate with ODIN through its API, SDKs, and CLI. ODIN lets users search for hosts, exposed buckets, exposed files, and subdomains, providing detailed insights that support a range of threat intelligence applications.

ODIN

ODIN is a powerful internet scanning search engine designed for scanning and cataloging internet assets. It offers enhanced scanning capabilities, fast refresh rates, and comprehensive visibility into open ports. Its more than 45 modules cover a range of services, and searches use Lucene query syntax. ODIN identifies potential CVEs, surfaces exploit information, and supports reverse searches for threat investigations. It also offers AI/ML-based detection of exposed buckets. Users can run granular searches for hosts, exposed buckets, exposed files, and subdomains, and developers can automate these searches through ODIN's API, CLI, and SDKs in multiple languages.
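
Since ODIN searches use Lucene query syntax, the following sketch shows how such a query string could be assembled safely in Python: literal values are backslash-escaped, and clauses are combined with `AND`/`OR`. The field names `port` and `service` are hypothetical and are not taken from ODIN's documented schema; check ODIN's own API reference for real field names.

```python
# Characters with special meaning in Lucene's classic query syntax; they
# must be backslash-escaped when they appear inside a literal value.
LUCENE_SPECIAL = set('+-&|!(){}[]^"~*?:\\/')


def escape_lucene(value: str) -> str:
    """Backslash-escape Lucene special characters in a literal term."""
    return "".join("\\" + ch if ch in LUCENE_SPECIAL else ch for ch in value)


def build_query(fields: dict) -> str:
    """AND together field:value clauses; a list value becomes an OR group.

    Values are treated as single tokens; values containing whitespace would
    need phrase quoting, which this sketch does not handle.
    """
    clauses = []
    for field, value in fields.items():
        values = value if isinstance(value, (list, tuple)) else [value]
        terms = [escape_lucene(str(v)) for v in values]
        if len(terms) == 1:
            clauses.append(f"{field}:{terms[0]}")
        else:
            clauses.append(f"{field}:({' OR '.join(terms)})")
    return " AND ".join(clauses)


if __name__ == "__main__":
    # Hypothetical field names, chosen only to illustrate the syntax.
    print(build_query({"port": [6379, 9200], "service": "redis"}))
```

Escaping at the point where values enter the query keeps user-supplied input (for example, a CIDR range containing `/`) from being misread as query operators.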

Junbi.ai

Junbi.ai is an AI-powered insights platform for YouTube advertisers. It provides creative insights for YouTube ads, letting users benchmark their ads, predict performance, and run quick tests. The platform also includes the expoze.io API for predicting attention on images or videos, which the vendor describes as producing scientifically valid results and as developer-friendly and easy to integrate into software applications.

Sacred

Sacred is a tool to configure, organize, log, and reproduce computational experiments. It is designed to add only minimal overhead while encouraging modularity and configurability of experiments. The ability to conveniently make experiments configurable is at the heart of Sacred. If the parameters of an experiment are exposed in this way, Sacred helps you to:

- keep track of all the parameters of your experiment
- easily run your experiment for different settings
- save configurations for individual runs in files or a database
- reproduce your results

Sacred achieves this through the following main mechanisms:

- Config Scopes are functions with an @ex.config decorator that turn all local variables into configuration entries, which makes setting up a configuration easy.
- Captured functions receive those configuration entries via dependency injection, so the system passes parameters around for you and using config values is straightforward.
- The command-line interface can be used to change parameters, so you can easily rerun your experiment with modified settings.
- Observers log all information about your experiment and the configuration you used, and save it, for example, to a database. This helps you keep track of all your experiments.
- Automatic seeding helps control the randomness in your experiments so that they stay reproducible.
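
The config-scope, dependency-injection, and seeding mechanisms can be illustrated without installing Sacred. The following standard-library toy is a simplified analogy, not Sacred's API or implementation: `config_scope` collects a function's local variables into a dict, `capture` fills missing parameters from that dict by name, and a seed is recorded so runs are reproducible. All names (`config_scope`, `capture`, `my_config`, `train`) are hypothetical; in Sacred itself these roles are played by `@ex.config` config scopes and captured functions.

```python
import inspect
import random
import sys


def config_scope(func):
    """Run `func` and return its local variables as a config dict.

    Toy illustration of the config-scope idea; not how Sacred implements it.
    """
    captured = {}

    def profiler(frame, event, arg):
        # When the config function returns, snapshot its local variables.
        if event == "return" and frame.f_code is func.__code__:
            captured.update(frame.f_locals)

    previous = sys.getprofile()
    sys.setprofile(profiler)
    try:
        func()
    finally:
        sys.setprofile(previous)
    # Toy "automatic seeding": record a seed if the config did not set one,
    # so the run can be reproduced later.
    captured.setdefault("seed", random.randrange(2**31))
    return captured


def capture(func, config):
    """Wrap `func` so that parameters not passed explicitly are filled from
    `config` by name (dependency injection)."""
    sig = inspect.signature(func)

    def wrapper(*args, **kwargs):
        bound = sig.bind_partial(*args, **kwargs)
        for name in sig.parameters:
            if name not in bound.arguments and name in config:
                bound.arguments[name] = config[name]
        return func(*bound.args, **bound.kwargs)

    return wrapper


def my_config():
    # Every local variable becomes a configuration entry.
    learning_rate = 0.01
    epochs = 3
    seed = 42


def train(learning_rate, epochs, seed):
    rng = random.Random(seed)  # seeded RNG keeps the run reproducible
    return [rng.random() * learning_rate for _ in range(epochs)]


if __name__ == "__main__":
    config = config_scope(my_config)
    run = capture(train, config)
    print(config)
    print(run() == run())      # same seed, so both runs match
    print(len(run(epochs=5)))  # explicit argument overrides the config value
```

Passing `epochs=5` explicitly mimics a command-line override: explicit arguments win, and anything left unspecified comes from the config.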

Wunderschild

Schwarzthal Tech's Wunderschild is an AI-driven platform for financial crime intelligence that aims to modernize compliance and investigation techniques. It provides intelligence solutions based on network assessment, data linkage, flow aggregation, and machine learning. The platform offers expertise and insights on strategic risks related to Politically Exposed Persons, Serious Organised Crime, Terrorism Financing, and more. With features such as Compliance, Investigation, Know Your Network, Media Scan, Document Drill, and Transaction Monitoring, Wunderschild helps users strengthen compliance functions, conduct deep dives into complex transnational crime cases, and detect suspicious activity. According to the vendor, the platform is trusted by global companies; it also offers advanced OCR, multilingual support, and key information extraction.
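
Wunderschild's internal methods are not described beyond the terms above. To make "flow aggregation" concrete, the following generic sketch (not Wunderschild's implementation) sums hypothetical `(sender, receiver, amount)` transactions per directed pair, nets opposite directions, and flags high-volume pairs that involve an entity on a watchlist, such as a list of Politically Exposed Persons. The function names, data, and threshold rule are assumptions for illustration.

```python
from collections import defaultdict


def aggregate_flows(transactions):
    """Sum (sender, receiver, amount) records into one total per directed pair."""
    totals = defaultdict(float)
    for sender, receiver, amount in transactions:
        totals[(sender, receiver)] += amount
    return dict(totals)


def net_flows(transactions):
    """Net opposite directions per entity pair: for a key (a, b) with a < b,
    a positive value means money moved a -> b overall, negative b -> a."""
    net = defaultdict(float)
    for (sender, receiver), total in aggregate_flows(transactions).items():
        if sender < receiver:
            net[(sender, receiver)] += total
        else:
            net[(receiver, sender)] -= total
    return dict(net)


def flagged_pairs(transactions, watchlist, threshold):
    """Directed pairs involving a watchlisted entity whose aggregated volume
    reaches `threshold`, sorted for stable output."""
    return sorted(
        pair
        for pair, total in aggregate_flows(transactions).items()
        if total >= threshold and (pair[0] in watchlist or pair[1] in watchlist)
    )


if __name__ == "__main__":
    # Hypothetical transactions between entities A, B and C.
    tx = [("A", "B", 100), ("A", "B", 50), ("B", "A", 30), ("C", "A", 500)]
    print(aggregate_flows(tx))
    print(net_flows(tx))
    print(flagged_pairs(tx, watchlist={"C"}, threshold=100))
```

Aggregating before flagging means many small transfers between the same two entities are judged by their combined volume rather than individually.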

0 - Open Source AI Tools

3 - OpenAI GPTs

Secret Revealer

Do you want to know the secrets of the world of the beautiful and rich, or the truth about what is really happening in the world? Then just ask Secret Revealer. Secret Revealer has answers to the most explosive questions, answers that will change your life. Start today before it's too late.

Financial Sentinel

An unyielding champion against predatory financial practices, adept at analyzing and exposing complex financial mechanisms, and relentless in advocating for consumer rights and transparency.