Best AI Tools to Evaluate Critical Thinking

20 AI Tool Sites

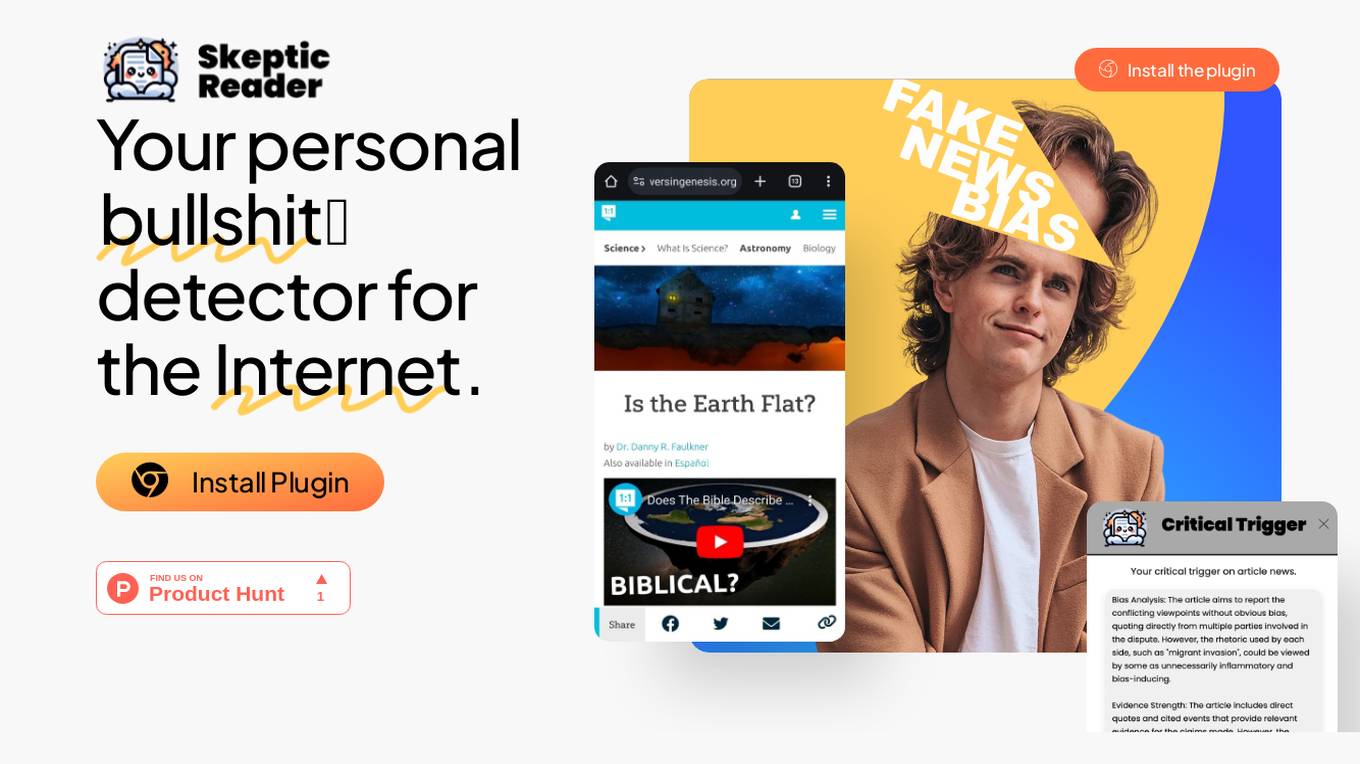

Skeptic Reader

Skeptic Reader is a Chrome plugin that helps users detect bias and logical fallacies in real time while browsing the internet. It uses GPT-4 to identify potential biases and fallacies in news articles, social media posts, and other online content. The plugin offers counter-arguments and suggestions for further research, helping users make more informed judgments about the information they consume. Skeptic Reader is designed to promote critical thinking and media literacy for anyone who wants to read online content with a more discerning eye.

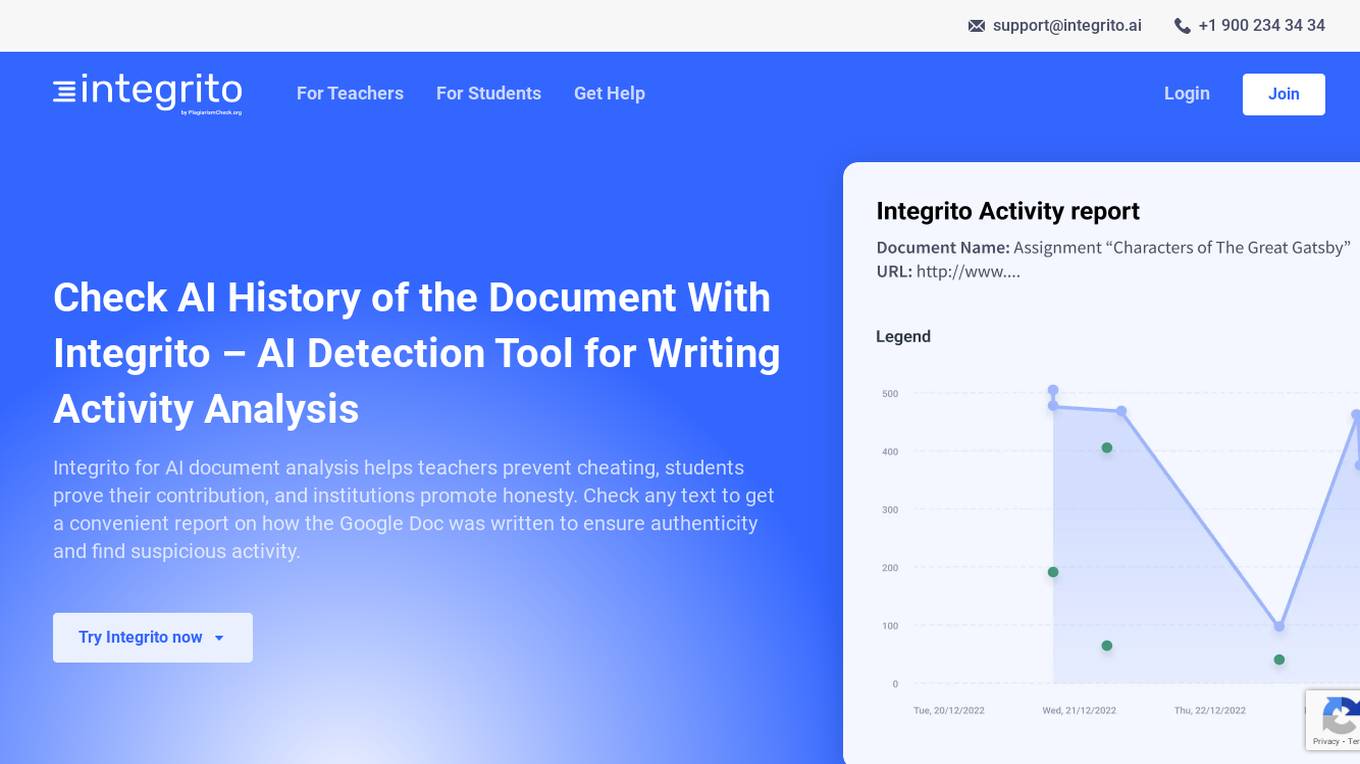

Integrito

Integrito is an AI detection tool that analyzes writing activity. It is designed to help teachers prevent cheating, students prove their own contribution, and institutions promote academic honesty. It offers comprehensive text analysis to verify authenticity, flag suspicious activity, and track the writing process. Users can evaluate each contributor's input and editing time, review the history of the writing process, and uncover contract cheating and ghostwriting by writing services. By combining plagiarism checking, AI detection, grammar checking, and authorship verification, the tool aims to encourage critical thinking and creativity and to uphold high academic standards.

Skeptic Reader

Skeptic Reader is a Chrome plugin that detects biases and logical fallacies in real time. Powered by GPT-4, it helps users critically evaluate the information they consume online. The plugin highlights biases and fallacies, provides counter-arguments, and suggests alternative perspectives. It is designed to promote informed skepticism and encourage users to question what they read online.

PubCompare

PubCompare is an AI-powered tool that helps scientists search, compare, and evaluate experimental protocols. With over 40 million protocols in its database, PubCompare describes itself as the largest repository of trusted experimental protocols. Its AI-powered search lets users find similar protocols, highlight critical steps, and assess a protocol's reproducibility based on in-protocol citations. PubCompare is web-based, so it can be used from any computer with no download required.

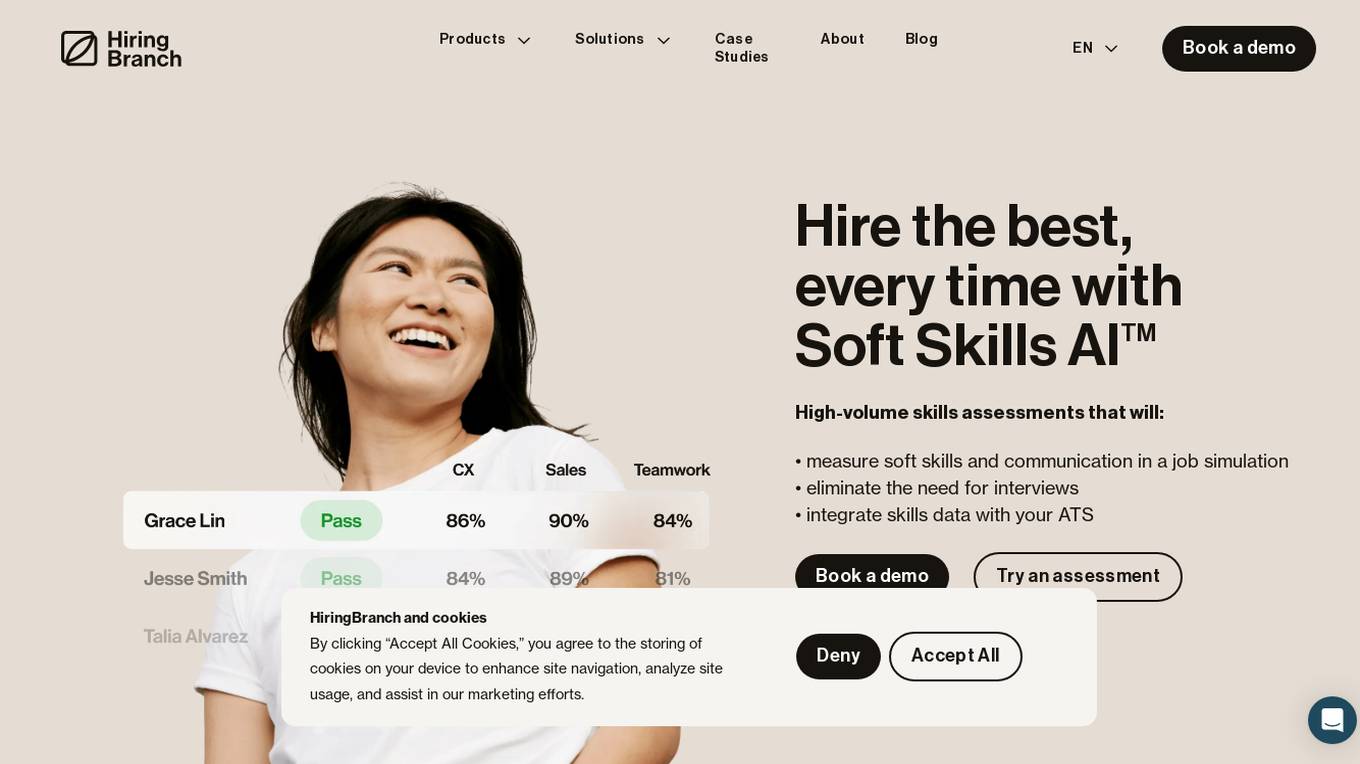

HiringBranch

HiringBranch is an AI-powered platform for high-volume skills assessments that helps companies hire strong candidates efficiently. It measures soft skills and communication through open-ended conversational assessments, which the company positions as a replacement for traditional interviews. The assessments are tailored to industries such as telecommunications, retail, banking and insurance, and contact centers, and evaluate role-critical skills in real time. The platform aims to streamline hiring, reduce mis-hires, and improve retention for enterprises worldwide.

Legal Benchmarks

Legal Benchmarks is a platform that provides independent, lawyer-led evaluations of AI tools for in-house legal work. It assesses AI assistants on critical legal tasks such as contract drafting and information extraction, and ranks tools by how well they perform on real-world legal tasks, helping legal teams understand and adopt AI solutions. Legal AI vendors can also submit their tools for evaluation, and the platform offers customized private reports, insights, and practical breakdowns of each tool's performance.

HeHealth

HeHealth is an AI-powered application for the early detection of sexual health conditions. It works with public health entities to improve how sexual health care is delivered at a population level. Describing the sexual health crisis as a global "silent epidemic," HeHealth aims to close the gap in access to timely, confidential sexual health advice. Focusing on STI detection, the app offers a discreet AI-powered Penis Health Checker intended to be accessible, accurate, and affordable. Users evaluate their penis health in three steps: a survey, a scan, and a summary.

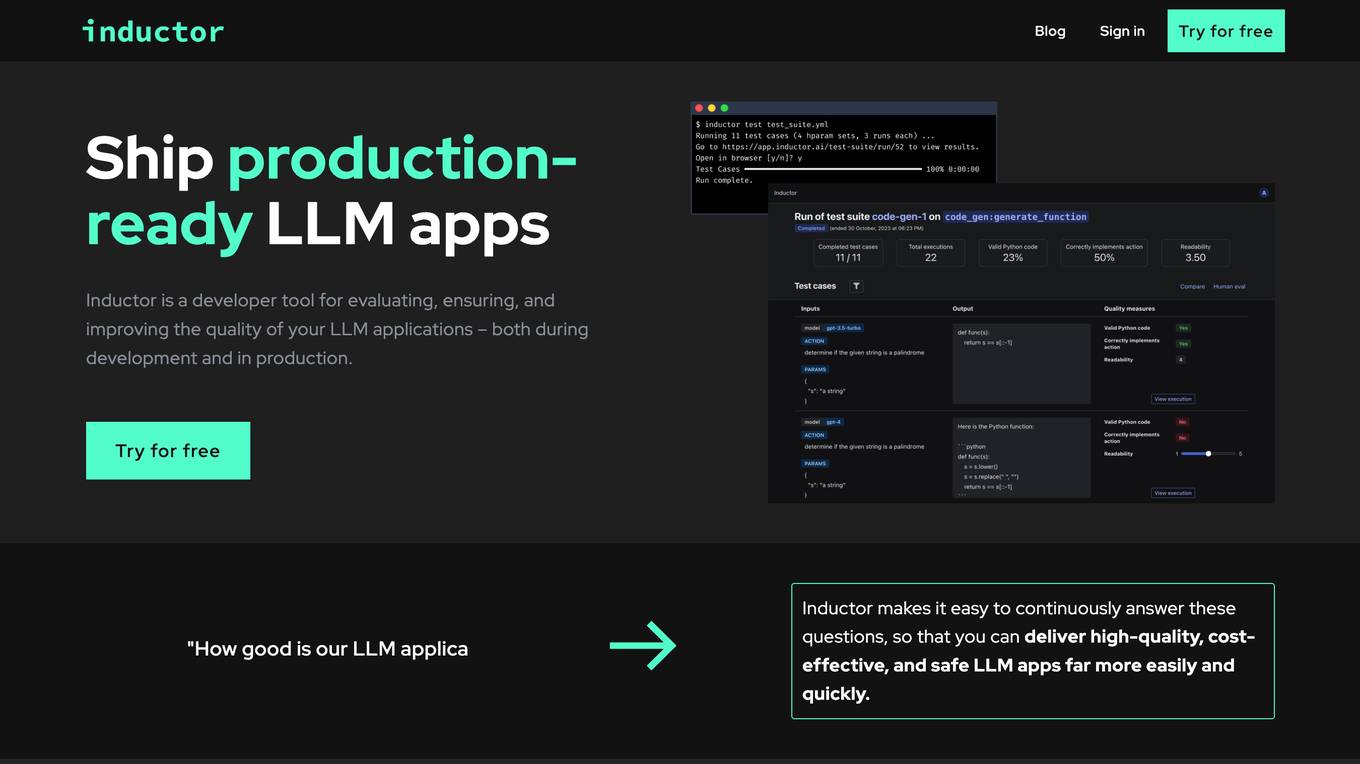

Inductor

Inductor is a developer tool for evaluating, ensuring, and improving the quality of LLM applications, both during development and in production. It provides a workflow for continuous testing and evaluation during development, so teams always know their LLM app's quality. Developers can systematically improve quality and cost-effectiveness by understanding their app's behavior and quickly testing different app variants, and can rigorously assess that behavior before deploying. Once the app is live, Inductor monitors traffic to detect and resolve issues, analyzes usage to guide improvements, and feeds these findings back into development. It also supports collaboration across roles, letting engineers gather critical human feedback from non-engineering stakeholders (e.g., PMs, UX designers, or subject matter experts) to make sure the app is user-ready.

OpinioAI

OpinioAI is an AI-powered market research tool that helps users gain business-critical insights without costly polls, surveys, or interviews. Users can create AI personas and market segments to understand customer preferences, affinities, and opinions. The platform aims to democratize research by offering an efficient, budget-friendly option for businesses, students, and individuals. OpinioAI uses large language models to simulate human respondents and elicit detailed opinions, letting users analyze existing data, synthesize new insights, and evaluate content from their target audience's perspective.

BenchLLM

BenchLLM is a tool for AI engineers to evaluate LLM-powered apps by running and evaluating models through a command-line interface (CLI). Users can build test suites, choose evaluation strategies, and generate quality reports. It supports OpenAI, LangChain, and other APIs out of the box, and offers automation, report visualization, and monitoring of model performance.

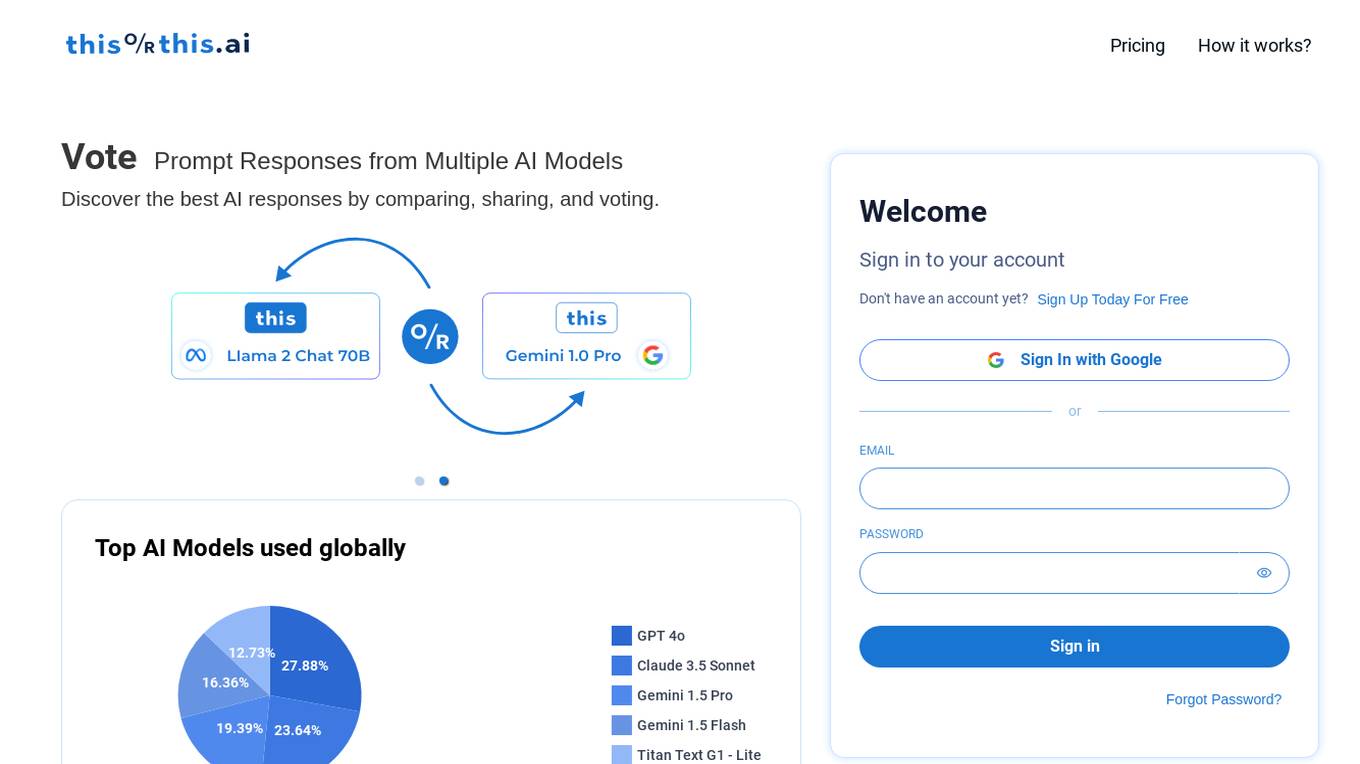

thisorthis.ai

thisorthis.ai is an AI tool for comparing generative AI models and their responses. It helps users analyze and evaluate different models so they can make informed decisions.

Langtrace AI

Langtrace AI is an open-source observability tool from Scale3 Labs that helps monitor, evaluate, and improve large language model (LLM) applications. It collects and analyzes traces and metrics to provide insight into the ML pipeline, and it is SOC 2 Type II certified. Langtrace supports popular LLMs, frameworks, and vector databases, offering end-to-end observability so teams can build and deploy AI applications with confidence.

Arize AI

Arize AI is an AI observability and LLM evaluation platform for monitoring, troubleshooting, and evaluating machine learning models. Teams use it to catch model issues, trace them to their root causes, and continuously improve performance. According to Arize, its platform is used by leading AI companies to surface, resolve, and improve issues in their models.

Evidently AI

Evidently AI is an open-source machine learning (ML) monitoring and observability platform that helps data scientists and ML engineers evaluate, test, and monitor ML models from validation to production. It serves as a central hub for ML in production, covering data quality monitoring, data drift monitoring, model performance monitoring, and NLP and LLM monitoring. Its features include customizable reports, structured checks for data and models, and a Python library for ML monitoring. It is designed to be easy to use, with a simple setup process and a user-friendly interface. According to the company, Evidently AI is used by over 2,500 data scientists and ML engineers worldwide and has been featured in publications such as Forbes, VentureBeat, and TechCrunch.

Maxim

Maxim is an end-to-end AI evaluation and observability platform that helps AI teams ship products with quality, reliability, and speed. It offers a suite of tools for experimentation, evaluation, observability, and data management. Maxim aims to bring the best practices of traditional software development to non-deterministic AI workflows, enabling rapid iteration and deployment. For AI developers, data scientists, and machine learning engineers, it provides a unified evaluation framework, visual flows for testing workflows, and observability features for monitoring and optimizing AI systems in real time.

RebeccAi

RebeccAi is an AI-powered tool for evaluating and validating business ideas, giving users insight into each idea's potential. It helps users refine and improve their ideas quickly, acting as a one-person team for their business plans. Its features range from evaluating and assessing business ideas to creating detailed business plans.

Codei

Codei is an AI-powered platform designed to help individuals land their dream software engineering job. It offers features such as application tracking, question generation, and code evaluation to assist users in honing their technical skills and preparing for interviews. Codei aims to provide personalized support and insights to help users succeed in the tech industry.

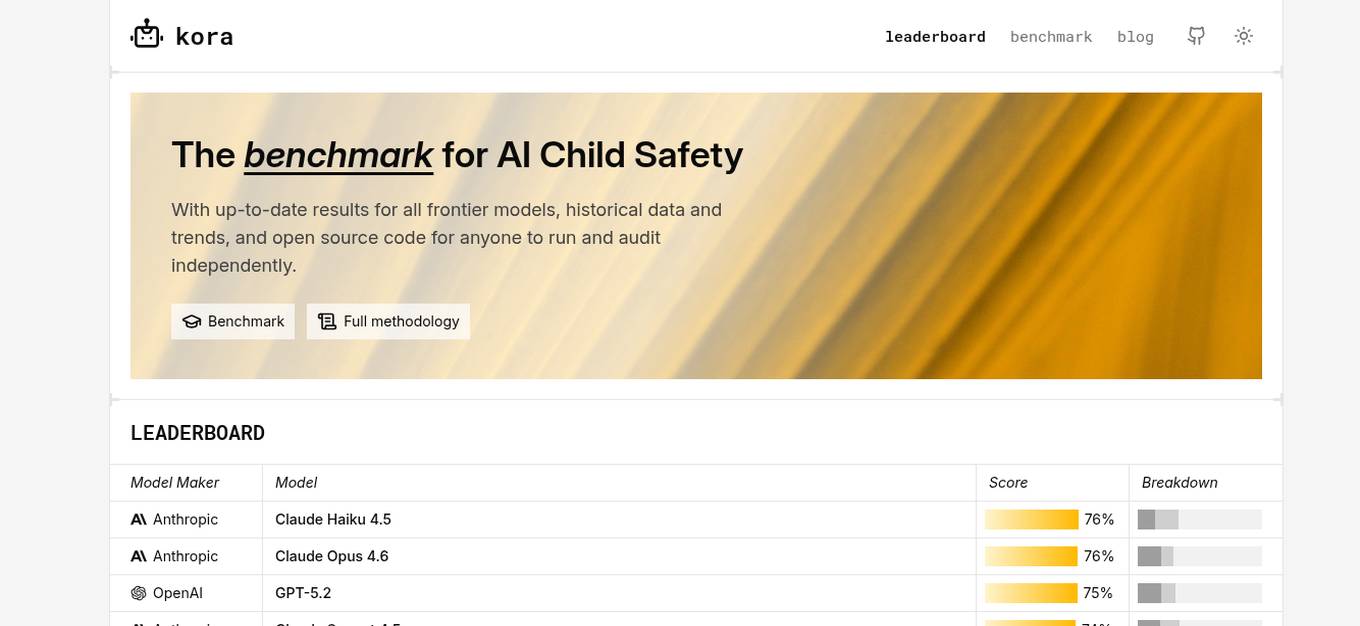

KORA Benchmark

KORA Benchmark is a platform that benchmarks AI models on child safety. It offers up-to-date results for frontier models along with historical data and trends, and it provides open-source code so users can run and audit the benchmark independently. By evaluating a range of models and sharing the results with the community, KORA Benchmark aims to make AI safer for children.

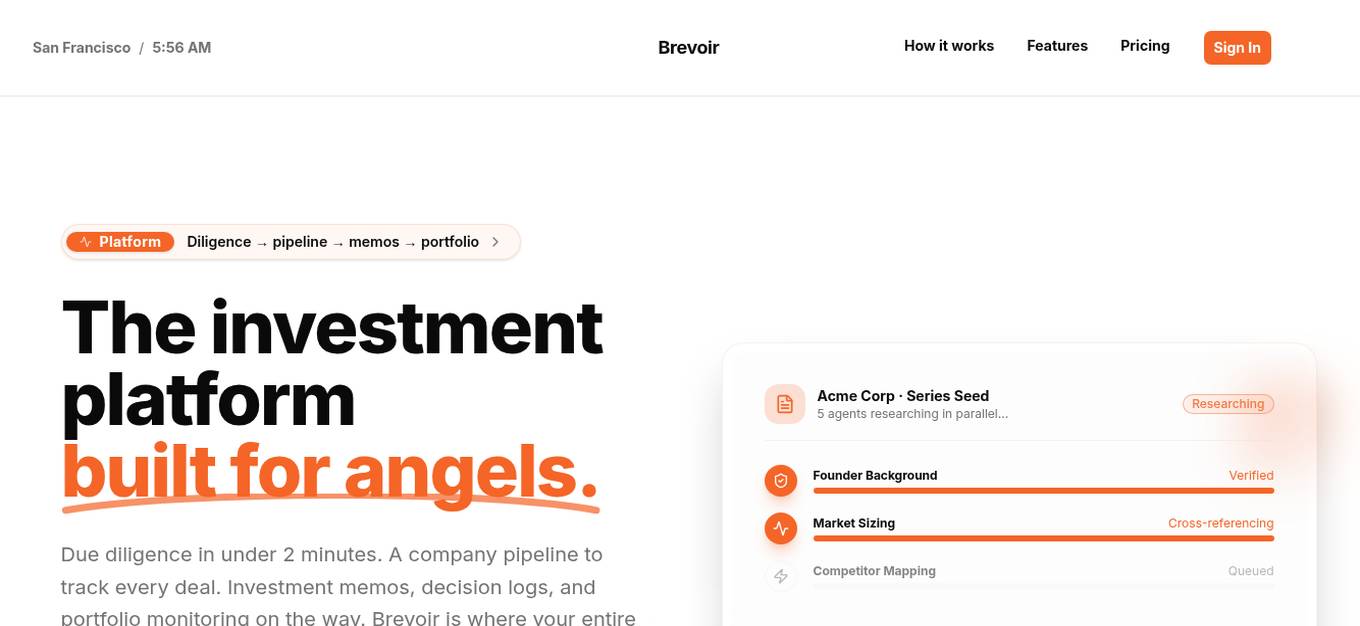

Brevoir

Brevoir is an AI-powered tool for decision-grade due diligence in startup investing. It brings founder diligence, market and competitor research, risk assessment, and investment-ready write-ups together in one platform. Aimed at angel investors and others who evaluate startups, Brevoir extracts key information from pitch decks or company URLs, verifies claims, maps competitors, and produces structured reports on risks and opportunities. It aims to give clear answers, identify market trends, evaluate team credibility, and assess traction and risks, with pricing plans that scale with user needs.

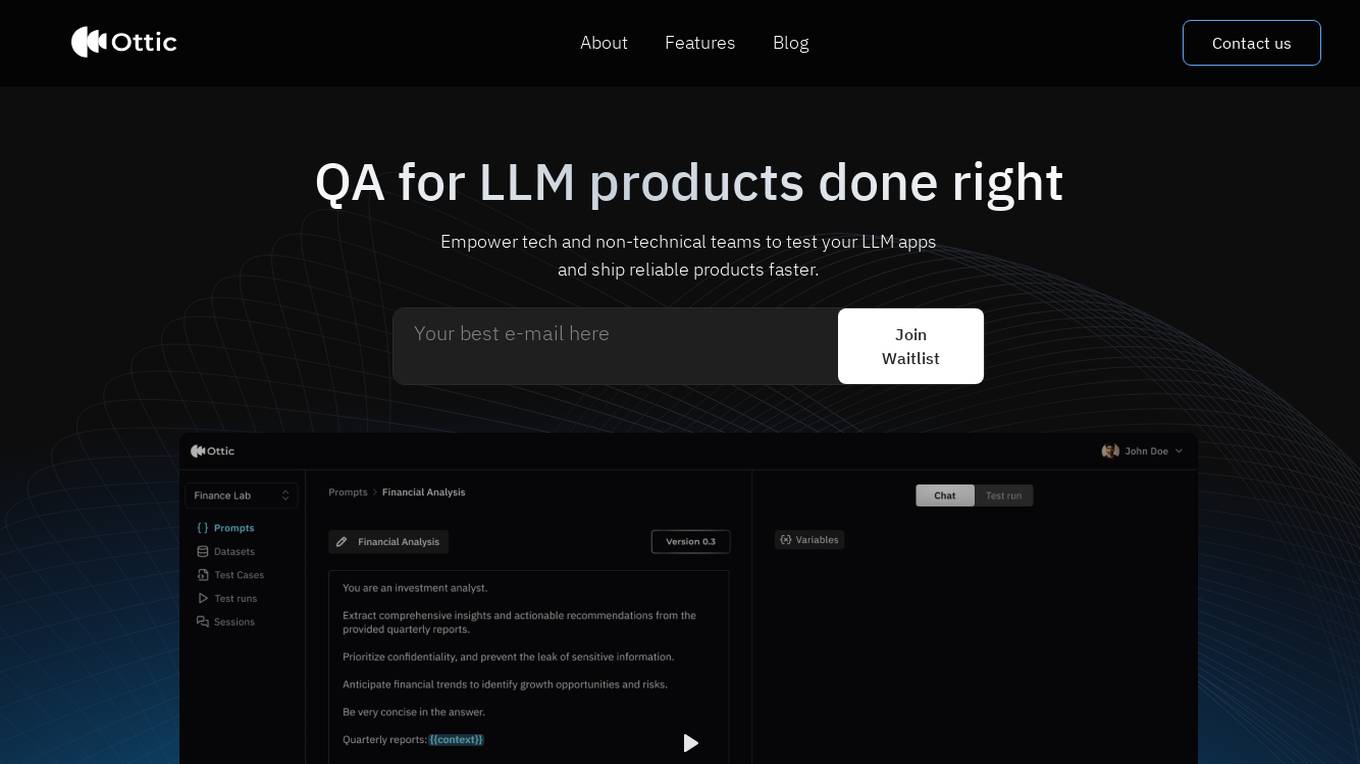

Ottic

Ottic is an AI tool that helps both technical and non-technical teams test large language model (LLM) applications efficiently and speed up the development cycle. It offers a 360° view of the QA process, end-to-end test management, comprehensive LLM evaluation, and real-time monitoring of user behavior. Ottic aims to bridge the gap between technical and non-technical team members, supporting smooth collaboration and reliable product delivery.

1 Open-Source AI Tool

Korean-SAT-LLM-Leaderboard

The Korean SAT LLM Leaderboard is a benchmarking project that lets users test their fine-tuned Korean language models on a 10-year dataset from the Korean College Scholastic Ability Test (CSAT). It compares the performance of large language models (LLMs) with human academic ability across question types that assess reading comprehension, critical thinking, and sentence interpretation. The project shares benchmark data, uses a reliable evaluation dataset curated by the Korea Institute for Curriculum and Evaluation, is updated annually to prevent data leakage, and aims to help open-source LLMs reach top-tier performance on the Korean CSAT.

20 OpenAI GPTs

FallacyGPT

Detect logical fallacies and lapses in critical thinking, in the style of Socrates, to help avoid misinformation.

LogiCheck

Identify key claims and sniff past the BS with your personal AI Logic Checker and Fallacy Expert.

Academic Paper Evaluator

Critical and analytical, with a passion for truth in academic papers.

Rate My {{Startup}}

I will score your mind-blowing startup ideas, helping you evaluate them faster.

Stick to the Point

I'll help you evaluate your writing to make sure it's engaging, informative, and flows well, using principles from "Made to Stick".

LabGPT

A personalized ChatGPT for reading laboratory tests: it evaluates lab test results and creates a spreadsheet with the evaluation results and possible solutions.

SearchQualityGPT

As a Search Quality Rater, you will help evaluate search engine quality around the world.

Business Model Canvas Strategist

Business Model Canvas Creator - Build and evaluate your business model

WM Phone Script Builder GPT

I automatically create and evaluate phone scripts, presenting a final draft.