Best AI tools for< Evaluate Agent Effectiveness >

20 - AI tool Sites

Coval

Coval is an AI tool designed to help users ship reliable AI agents faster by providing simulation and evaluations for voice and chat agents. It allows users to simulate thousands of scenarios from a few test cases, create prompts for testing, and evaluate agent interactions comprehensively. Coval offers AI-powered simulations, voice AI compatibility, performance tracking, workflow metrics, and customizable evaluation metrics to optimize AI agents efficiently.

Future AGI

Future AGI is a revolutionary AI data management platform that aims to achieve 99% accuracy in AI applications across software and hardware. It provides a comprehensive evaluation and optimization platform for enterprises to enhance the performance of their AI models. Future AGI offers features such as creating trustworthy, accurate, and responsible AI, 10x faster processing, generating and managing diverse synthetic datasets, testing and analyzing agentic workflow configurations, assessing agent performance, enhancing LLM application performance, monitoring and protecting applications in production, and evaluating AI across different modalities.

Vocera

Vocera is an AI voice agent testing tool that allows users to test and monitor voice AI agents efficiently. It enables users to launch voice agents in minutes, ensuring a seamless conversational experience. With features like testing against AI-generated datasets, simulating scenarios, and monitoring AI performance, Vocera helps in evaluating and improving voice agent interactions. The tool provides real-time insights, detailed logs, and trend analysis for optimal performance, along with instant notifications for errors and failures. Vocera is designed to work for everyone, offering an intuitive dashboard and data-driven decision-making for continuous improvement.

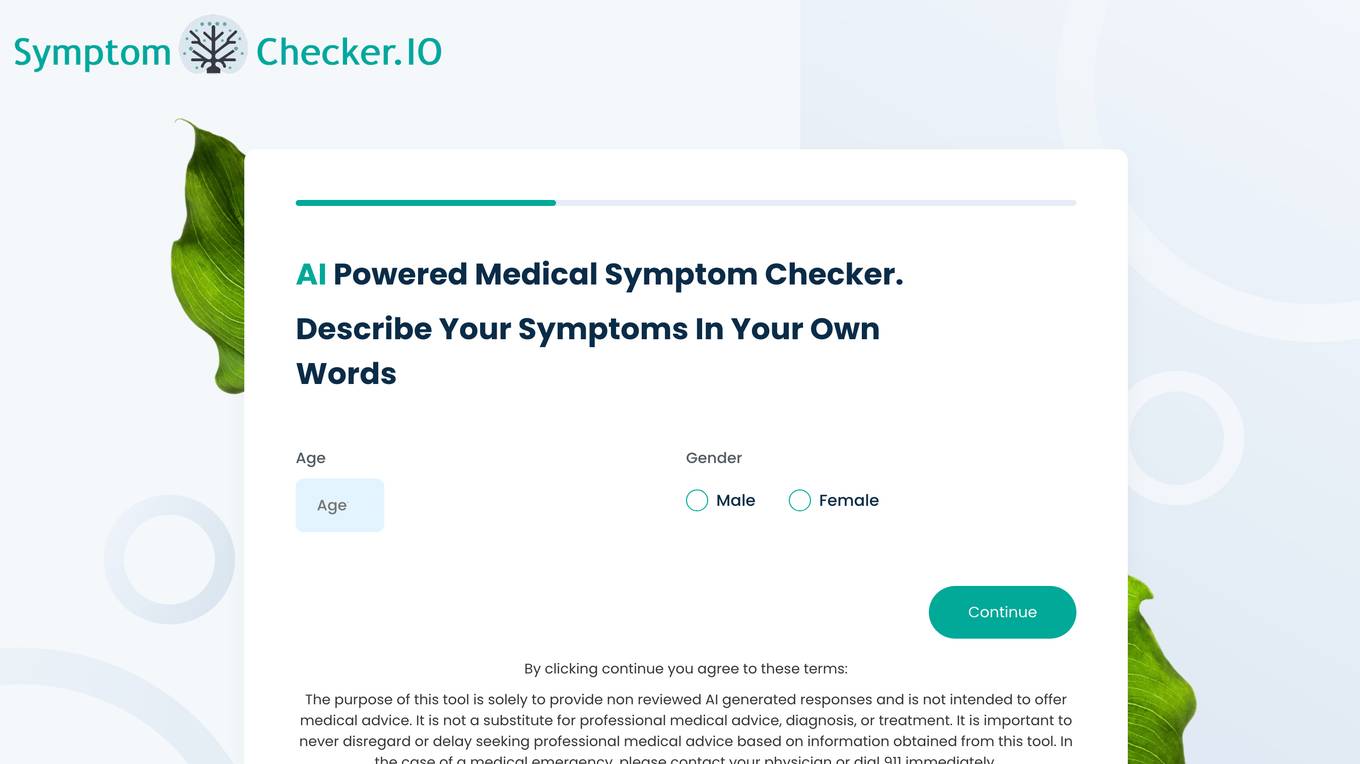

SymptomChecker.io

SymptomChecker.io is an AI-powered medical symptom checker that allows users to describe their symptoms in their own words and receive non-reviewed AI-generated responses. It is important to note that this tool is not intended to offer medical advice, diagnosis, or treatment and should not be used as a substitute for professional medical advice. In the case of a medical emergency, please contact your physician or dial 911 immediately.

Enhans AI Model Generator

Enhans AI Model Generator is an advanced AI tool designed to help users generate AI models efficiently. It utilizes cutting-edge algorithms and machine learning techniques to streamline the model creation process. With Enhans AI Model Generator, users can easily input their data, select the desired parameters, and obtain a customized AI model tailored to their specific needs. The tool is user-friendly and does not require extensive programming knowledge, making it accessible to a wide range of users, from beginners to experts in the field of AI.

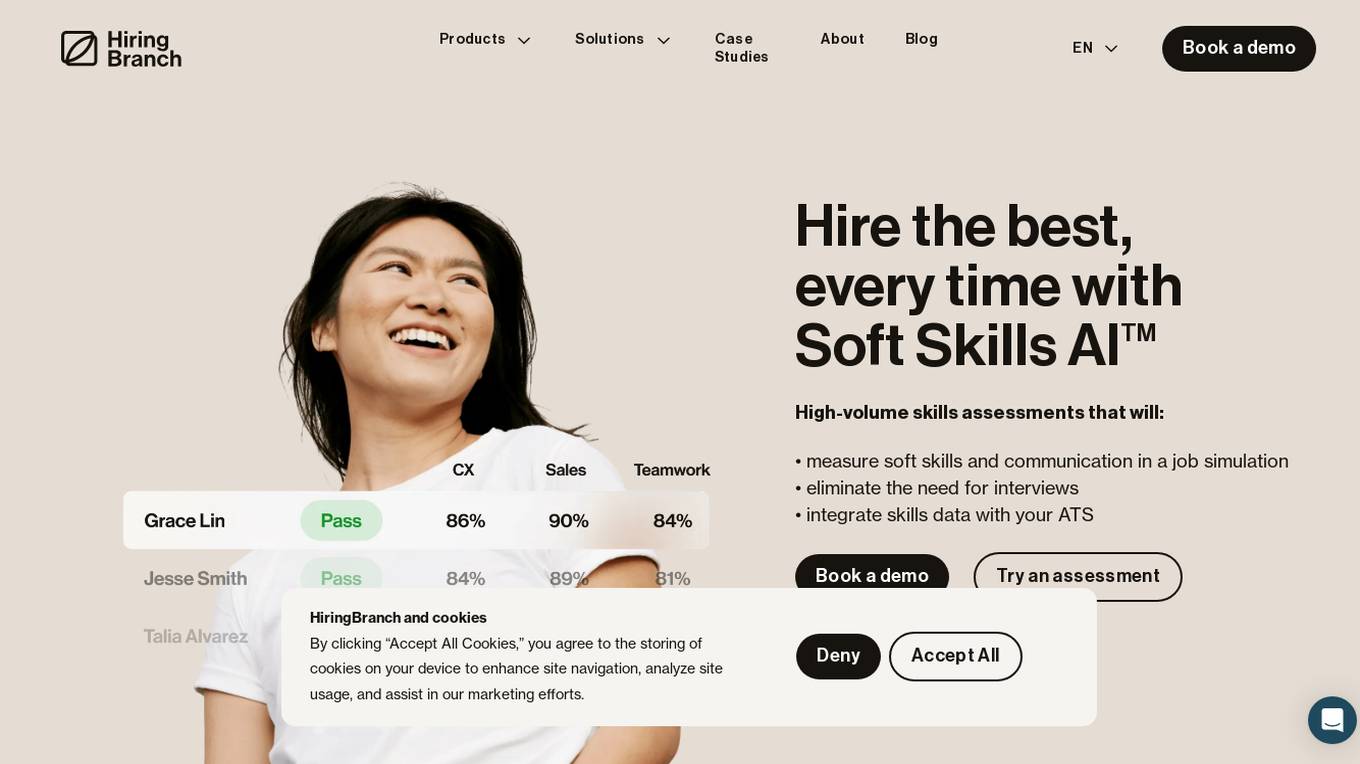

HiringBranch

HiringBranch is an AI-powered platform that offers high-volume skills assessments to help companies hire the best candidates efficiently. The platform accurately measures soft skills and communication through open-ended conversational assessments, eliminating the need for traditional interviews. HiringBranch's AI skills assessments are tailored to various industries such as Telecommunication, Retail, Banking & Insurance, and Contact Centers, providing real-time evaluation of role-critical skills. The platform aims to streamline the hiring process, reduce mis-hires, and improve retention rates for enterprises globally.

Convr

Convr is an AI-driven underwriting analysis platform that helps commercial P&C insurance organizations transform their underwriting operations. It provides a modularized AI underwriting and intelligent document automation workbench that enriches and expedites the commercial insurance new business and renewal submission flow with underwriting insights, business classification, and risk scoring. Convr's mission is to solve the last big problem of commercial insurance while improving profitability and increasing efficiency.

Convr

Convr is a modularized AI underwriting and intelligent document automation workbench that enriches and expedites the commercial insurance new business and renewal submission flow with underwriting insights, business classification and risk scoring. As a trusted technology partner and advisor with deep industry expertise, we help insurance organizations transform their underwriting operations through our AI-driven digital underwriting analysis platform.

Q, ChatGPT for Slack

The website offers 'Q, ChatGPT for Slack', an AI tool that functions like ChatGPT within your Slack workspace. It allows on-demand URL and file reading, custom instructions for tailored use, and supports various URLs and files. With Q, users can summarize, evaluate, brainstorm ideas, self-review, engage in Q&A, and more. The tool enables team-specific rules, guidelines, and templates, making it ideal for emails, translations, content creation, copywriting, reporting, coding, and testing based on internal information.

Lucida AI

Lucida AI is an AI-driven coaching tool designed to enhance employees' English language skills through personalized insights and feedback based on real-life call interactions. The tool offers comprehensive coaching in pronunciation, fluency, grammar, vocabulary, and tracking of language proficiency. It provides advanced speech analysis using proprietary LLM and NLP technologies, ensuring accurate assessments and detailed tracking. With end-to-end encryption for data privacy, Lucy AI is a cost-effective solution for organizations seeking to improve communication skills and streamline language assessment processes.

Galileo AI

Galileo AI is a platform that offers automated evaluations for AI applications, bringing automation and insight to AI evaluations to ensure reliable and confident shipping. It helps in eliminating 80% of evaluation time by replacing manual reviews with high-accuracy metrics, enabling rapid iteration, achieving real-time protection, and providing end-to-end visibility into agent completions. Galileo also allows developers to take control of AI complexity, de-risk AI in production, and deploy AI applications flexibly across different environments. The platform is trusted by enterprises and loved by developers for its accuracy, low-latency, and ability to run on L4 GPUs.

4'33"

4'33" is an AI agent designed to help students and researchers discover the people they need, such as students seeking professors in a specific field or city. The tool assists in asking better questions, connecting with individuals, and evaluating how well they align with the user's requirements and background. Powered by Perplexity, 4'33" offers a platform for connecting people and answering questions, alongside AI technology. The tool aims to facilitate easier and faster connections between users and relevant individuals, enabling knowledge sharing and collaboration.

RagaAI Catalyst

RagaAI Catalyst is a sophisticated AI observability, monitoring, and evaluation platform designed to help users observe, evaluate, and debug AI agents at all stages of Agentic AI workflows. It offers features like visualizing trace data, instrumenting and monitoring tools and agents, enhancing AI performance, agentic testing, comprehensive trace logging, evaluation for each step of the agent, enterprise-grade experiment management, secure and reliable LLM outputs, finetuning with human feedback integration, defining custom evaluation logic, generating synthetic data, and optimizing LLM testing with speed and precision. The platform is trusted by AI leaders globally and provides a comprehensive suite of tools for AI developers and enterprises.

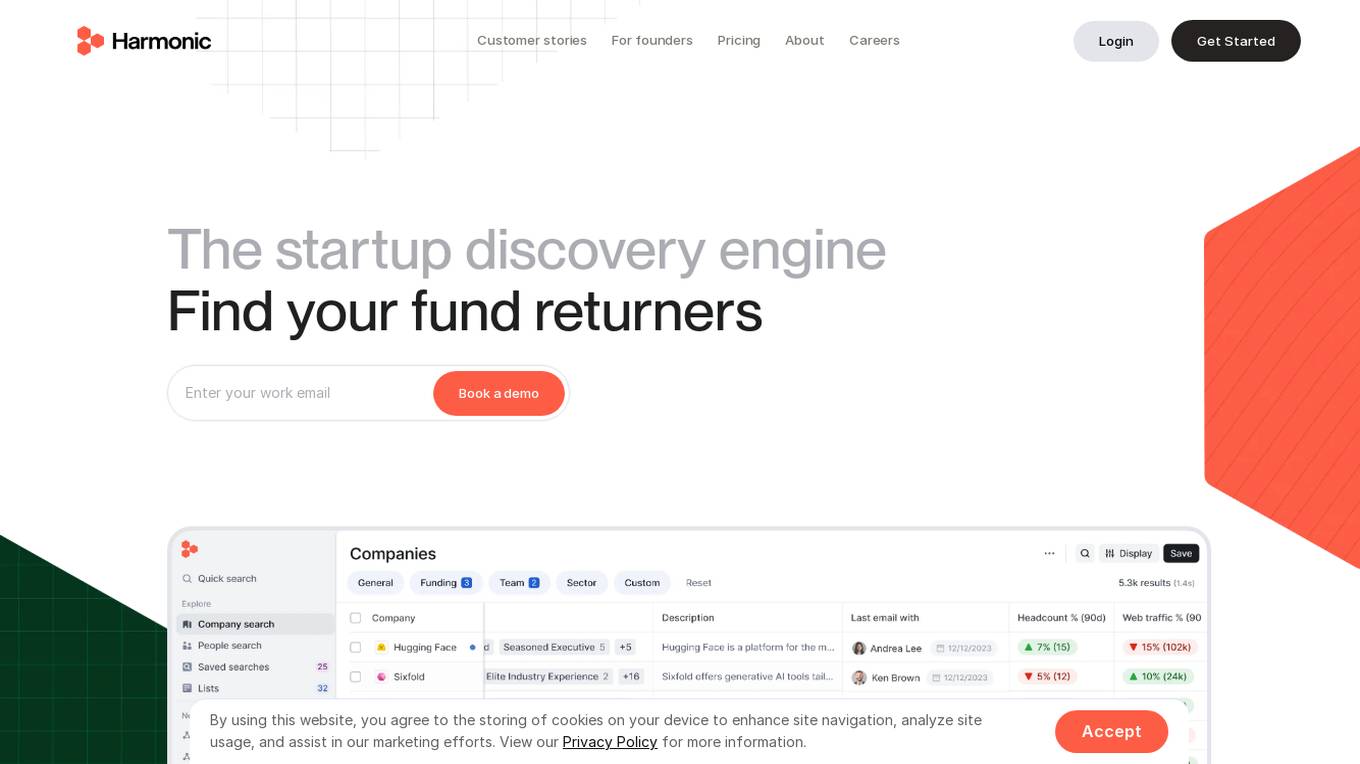

Harmonic.ai

Harmonic.ai is a startup database platform that offers a comprehensive database of companies and individuals, enabling users to identify new opportunities, scout for startups, and make informed investment decisions. The platform is powered by AI technology, providing users with sourcing superpowers and the ability to discover hidden gems in the startup ecosystem. Harmonic.ai offers features such as hyper-specific searches, industry or product descriptions to find companies, market maps, talent flows, and real-time alerts. Users can also evaluate founding team experience, analyze key competitors, and assess funding and investors. The platform is designed to help users stay up to date with the latest industry trends and make data-driven decisions.

Agenta.ai

Agenta.ai is a platform designed to provide prompt management, evaluation, and observability for LLM (Large Language Model) applications. It aims to address the challenges faced by AI development teams in managing prompts, collaborating effectively, and ensuring reliable product outcomes. By centralizing prompts, evaluations, and traces, Agenta.ai helps teams streamline their workflows and follow best practices in LLMOps. The platform offers features such as unified playground for prompt comparison, automated evaluation processes, human evaluation integration, observability tools for debugging AI systems, and collaborative workflows for PMs, experts, and developers.

Reppls

Reppls is an AI Interview Agents tool designed for data-driven hiring processes. It helps companies interview all applicants to identify the right talents hidden behind uninformative CVs. The tool offers seamless integration with daily tools, such as Zoom and MS Teams, and provides deep technical assessments in the early stages of hiring, allowing HR specialists to focus on evaluating soft skills. Reppls aims to transform the hiring process by saving time spent on screening, interviewing, and assessing candidates.

AlphaLens Intelligence Solutions

AlphaLens Intelligence Solutions is an AI-powered platform that offers a comprehensive suite of tools for deal origination, sourcing, enrichment, and CRM integrations. It provides users with the ability to extract data from pitch decks, find and evaluate companies, and enrich company profiles with deep market insights. The platform leverages generative AI, semantic search, and active monitoring to surface hidden opportunities, automate deal screening, and sync data to the user's pipeline. With a focus on private market data, AlphaLens enables users to access a vast universe of company and product information, including funding rounds, growth metrics, and target audience insights. The platform also offers REST API access, Chrome extension, and integration with CRM systems for seamless data management.

AI Alliance

The AI Alliance is a community dedicated to building and advancing open-source AI agents, data, models, evaluation, safety, applications, and advocacy to ensure everyone can benefit. They focus on various areas such as skills and education, trust and safety, applications and tools, hardware enablement, foundation models, and advocacy. The organization supports global AI skill-building, education, and exploratory research, creates benchmarks and tools for safe generative AI, builds capable tools for AI model builders and developers, fosters AI hardware accelerator ecosystem, enables open foundation models and datasets, and advocates for regulatory policies for healthy AI ecosystems.

Fairo

Fairo is a platform that facilitates Responsible AI Governance, offering tools for reducing AI hallucinations, managing AI agents and assets, evaluating AI systems, and ensuring compliance with various regulations. It provides a comprehensive solution for organizations to align their AI systems ethically and strategically, automate governance processes, and mitigate risks. Fairo aims to make responsible AI transformation accessible to organizations of all sizes, enabling them to build technology that is profitable, ethical, and transformative.

Athina AI Hub

Athina AI Hub is an ultimate resource for AI development teams, offering a wide range of AI development blogs, research papers, and original content. It provides valuable insights into cutting-edge technologies such as Large Language Models (LLMs), Retrieval-Augmented Generation (RAG), and AI agents. Athina AI Hub aims to empower AI engineers, researchers, data scientists, and product developers by offering comprehensive resources and fostering innovation in the field of Artificial Intelligence.

1 - Open Source AI Tools

agentUniverse

agentUniverse is a multi-agent framework based on large language models, providing flexible capabilities for building individual agents. It focuses on multi-agent collaborative patterns, integrating domain experience to help agents solve problems in various fields. The framework includes pattern components like PEER and DOE for event interpretation, industry analysis, and financial report generation. It offers features for agent construction, multi-agent collaboration, and domain expertise integration, aiming to create intelligent applications with professional know-how.

20 - OpenAI Gpts

WM Phone Script Builder GPT

I automatically create and evaluate phone scripts, presenting a final draft.

Wordon, World's Worst Customer | Divergent AI

I simulate tough Customer Support scenarios for Agent Training.

Supplier Evaluation Advisor

Assesses and recommends potential suppliers for organizational needs.

HomeScore

Assess a potential home's quality using your own photos and property inspection reports

Conversation Analyzer

I analyze WhatsApp/Telegram and email conversations to assess the tone of their emotions and read between the lines. Upload your screenshot and I'll tell you what they are really saying! 😀

Home Inspector

Upload a picture of your home wall, floor, window, driveway, roof, HVAC, and get an instant opinion.

US Zip Intel

Your go-to source for in-depth US zip code demographics and statistics, with easy-to-download data tables.

Rate My {{Startup}}

I will score your Mind Blowing Startup Ideas, helping your to evaluate faster.

Stick to the Point

I'll help you evaluate your writing to make sure it's engaging, informative, and flows well. Uses principles from "Made to Stick"

LabGPT

The main objective of a personalized ChatGPT for reading laboratory tests is to evaluate laboratory test results and create a spreadsheet with the evaluation results and possible solutions.