Best AI tools for Duplicate Text

17 - AI tool Sites

Snapy

Snapy is an AI-powered video editing and generation tool that helps content creators make short videos, edit podcasts, and remove silent parts from videos. Its features include turning text prompts into short videos, condensing long videos into engaging short clips, automatically removing silent parts from audio files, auto-trimming, removing duplicate sentences and filler words, and adding subtitles to short videos. Snapy is designed to save creators time and effort so they can publish more content, make more engaging videos, and improve the quality of their audio and video.

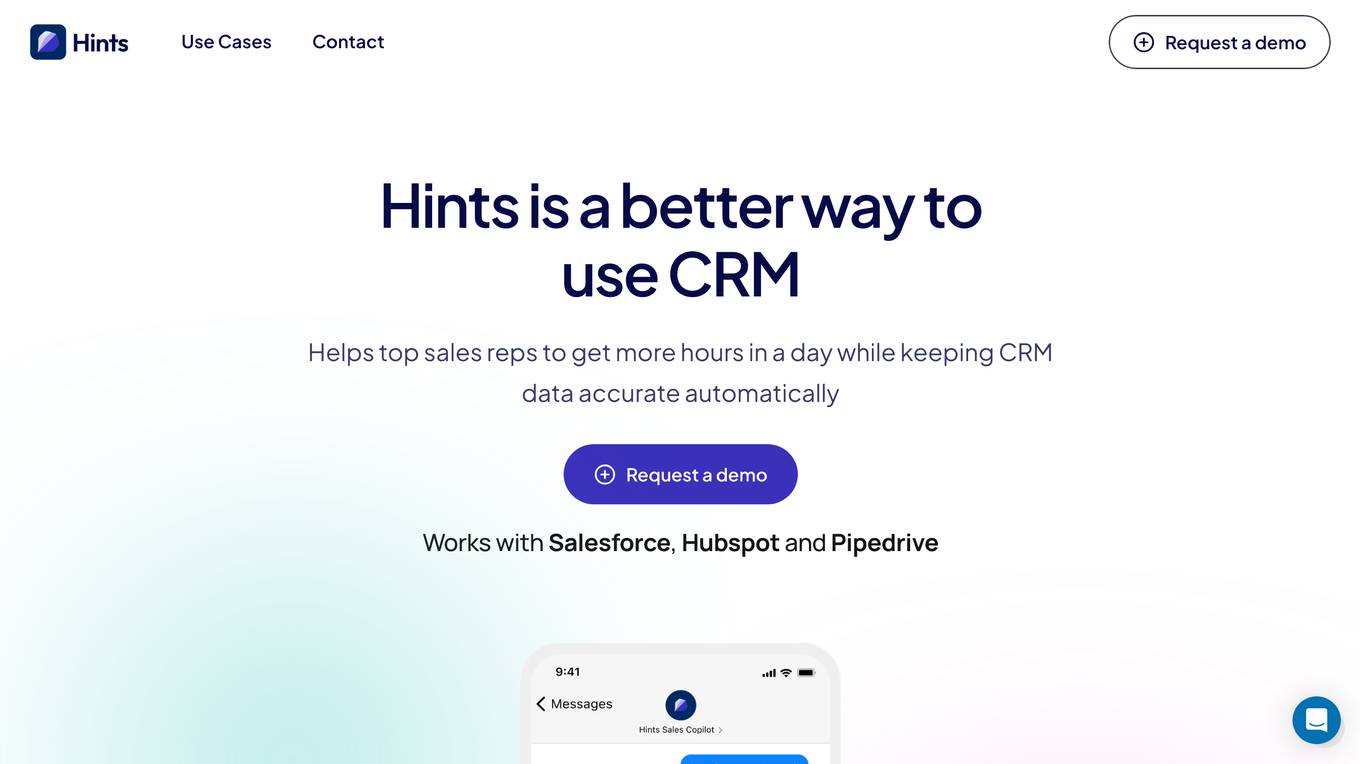

Hints

Hints is an AI sales assistant that helps sales reps save time while automatically keeping CRM data accurate. It works with Salesforce, HubSpot, and Pipedrive. With Hints, sales reps can log and retrieve CRM data on any device through chat and voice, get guidance on their next steps, and receive reminders of what's missing. Hints can also create complex CRM updates in seconds, find duplicates, suggest actions, automatically create associations, and look up sales data through chat and voice commands. It helps teams build their sales process and speeds up onboarding for new sales reps.

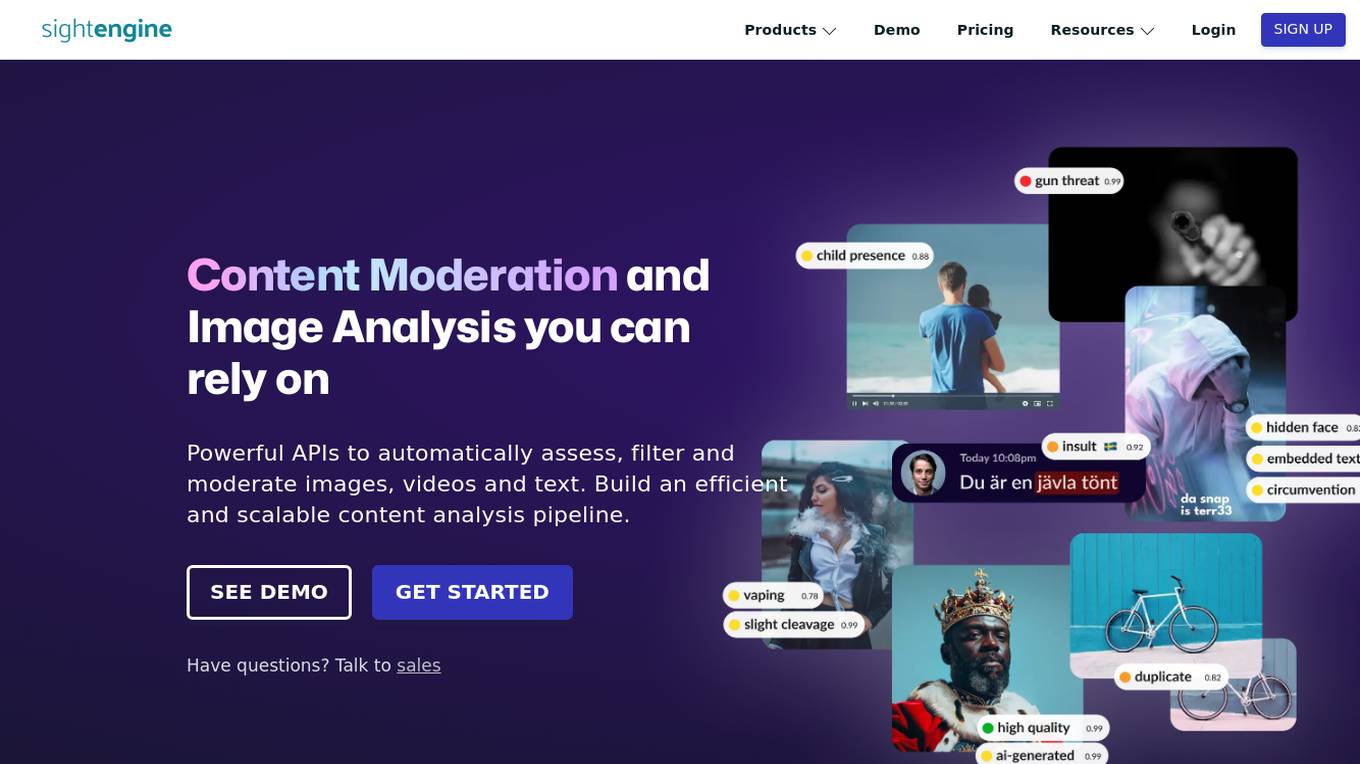

Sightengine

Sightengine offers content moderation and image analysis products, delivered through APIs that automatically assess, filter, and moderate images, videos, and text. Its features include image moderation, video moderation, text moderation, AI image detection, and video anonymization. It helps detect unwanted content, AI-generated images, and personal information in videos. It also offers tools to identify near-duplicates, spam, and abusive links, and to prevent phishing and circumvention attempts. The platform is described as fast, scalable, accurate, easy to integrate, and privacy compliant, and it targets industries such as marketplaces, dating apps, and news platforms.

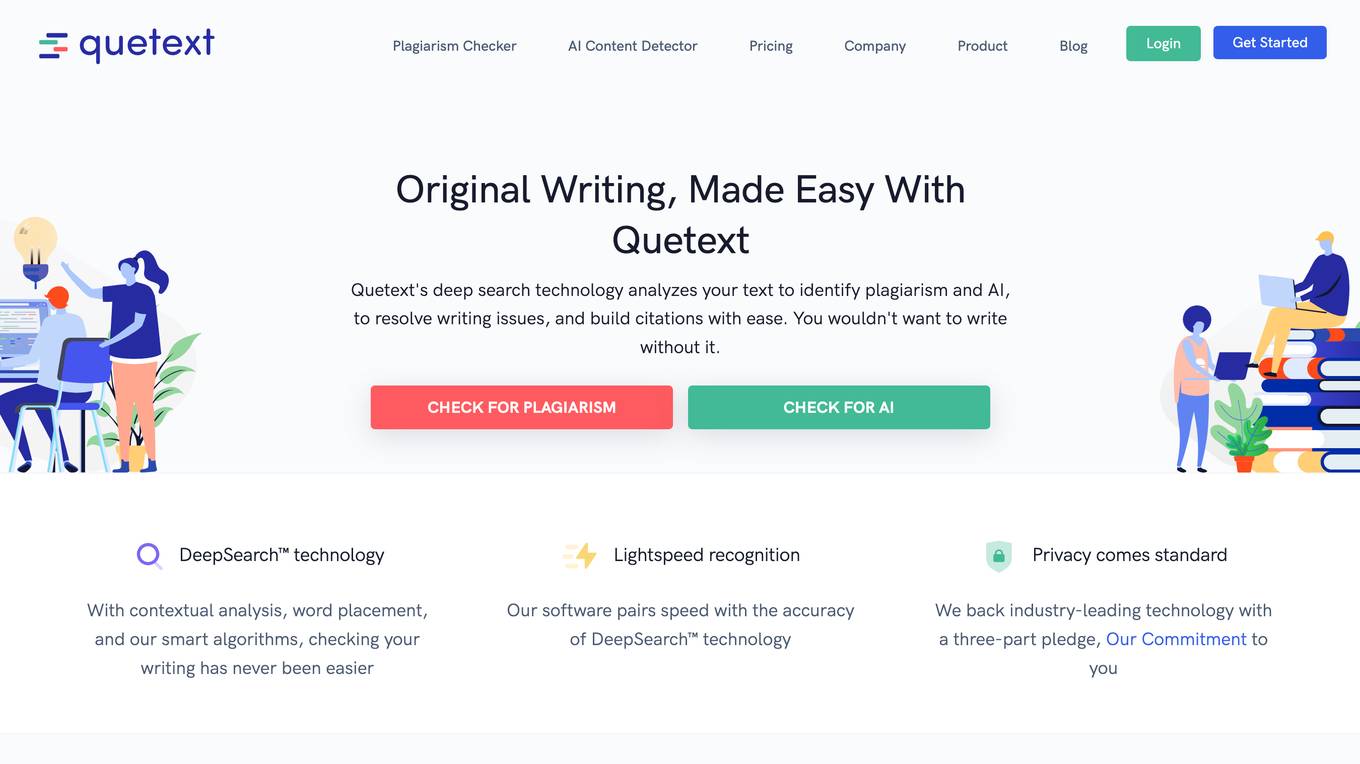

Quetext

Quetext is a plagiarism checker and AI content detector that helps students, teachers, and professionals identify potential plagiarism and AI-generated text in their work. It combines its Deep Search technology with contextual analysis and smart algorithms to make checking writing easier and more accurate. Quetext also offers bulk uploads, source exclusion, an enhanced citation generator, and grammar and spell check. Its rich, intuitive feedback helps users find plagiarism and AI-generated text with less stress.

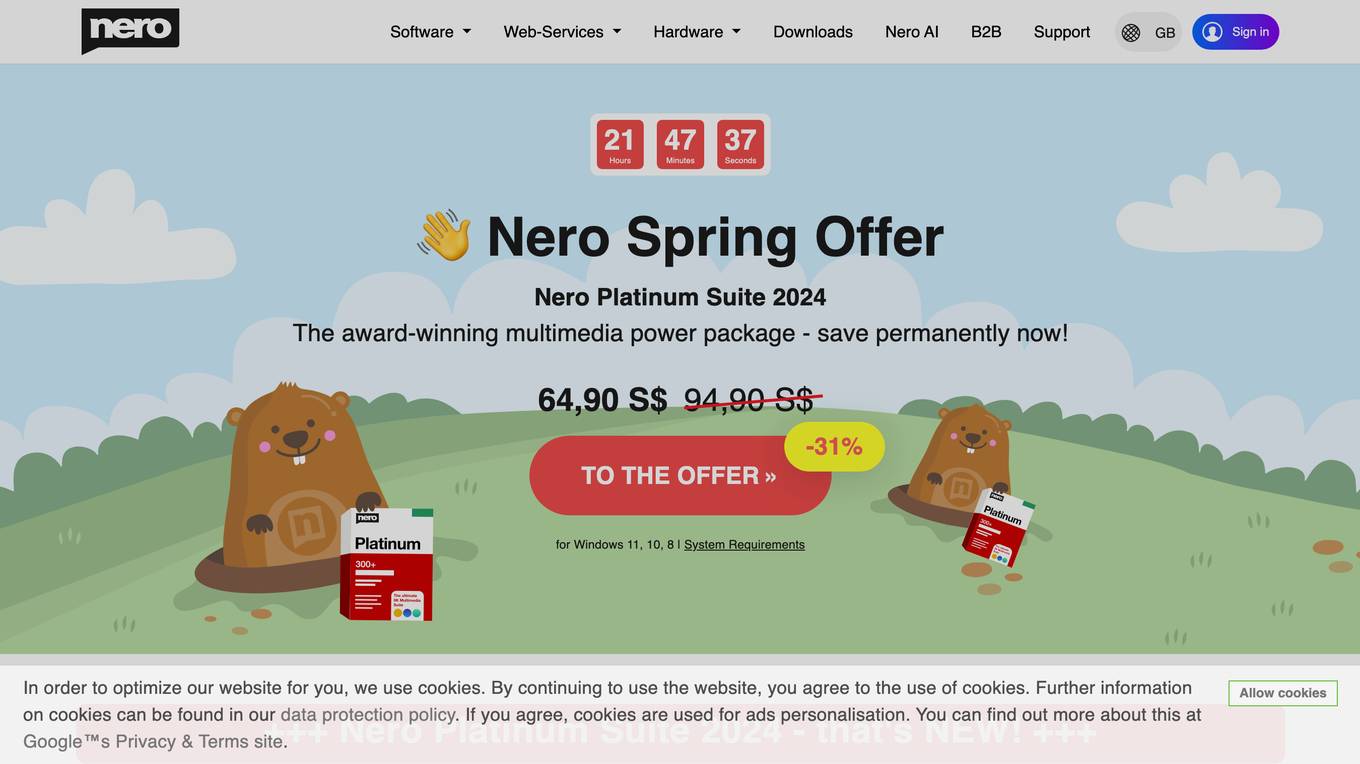

Nero Platinum Suite

Nero Platinum Suite is a comprehensive software collection for Windows PCs that provides a wide range of multimedia capabilities, including burning, managing, optimizing, and editing photos, videos, and music files. It includes various AI-powered features such as the Nero AI Image Upscaler, Nero AI Video Upscaler, and Nero AI Photo Tagger, which enhance and simplify multimedia tasks.

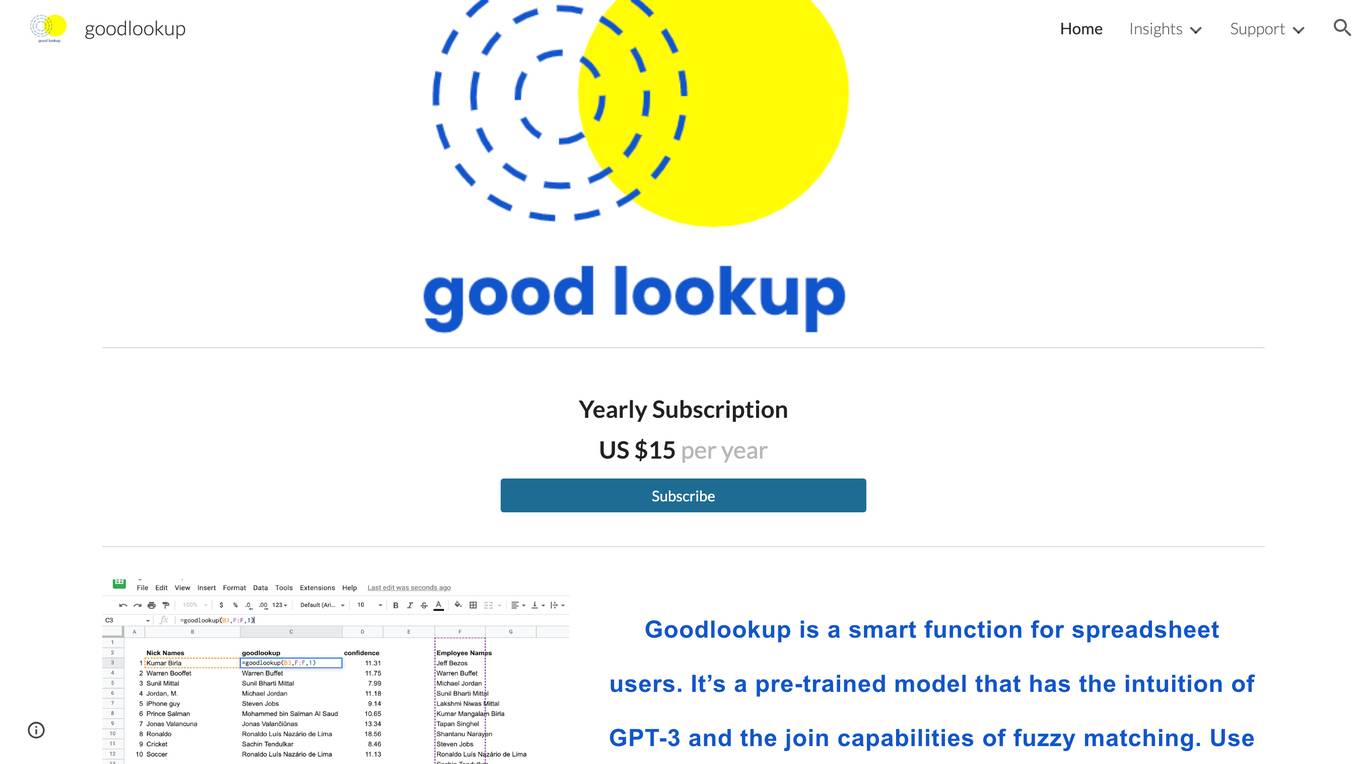

Goodlookup

Goodlookup is a smart function for spreadsheet users that gets very close to semantic understanding. It is a pre-trained model that combines the intuition of GPT-3 with the join capabilities of fuzzy matching. Use it like VLOOKUP or INDEX MATCH to speed up topic clustering work in Google Sheets.
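To make the "fuzzy matching lookup" idea concrete, here is a minimal Python sketch of a VLOOKUP-style function that tolerates misspelled keys. It is only an illustration of the general technique: it uses character-level similarity from the standard library's `difflib`, not a semantic model, and it says nothing about how Goodlookup itself is implemented. The function name `fuzzy_lookup`, the `cutoff` default, and the sample `topics` table are all hypothetical.

```python
from difflib import get_close_matches


def fuzzy_lookup(key, table, cutoff=0.6):
    """Return the value whose key is the closest string match to `key`, or None.

    Hypothetical helper: character-level fuzzy matching only, no semantics.
    """
    # Compare case-insensitively by mapping lowercase keys back to the originals.
    lowered = {k.lower(): k for k in table}
    # Ask difflib for the single best candidate scoring at least `cutoff`.
    matches = get_close_matches(key.lower(), list(lowered), n=1, cutoff=cutoff)
    if not matches:
        return None
    return table[lowered[matches[0]]]


# Hypothetical lookup table: topic phrase -> cluster label.
topics = {
    "machine learning": "AI",
    "search engine optimization": "Marketing",
    "spreadsheet formulas": "Productivity",
}

print(fuzzy_lookup("Machine Lerning", topics))  # prints AI
print(fuzzy_lookup("qqq", topics))              # prints None
```

Raising `cutoff` makes the lookup stricter, which reduces false joins at the cost of missing looser matches. A semantic tool would presumably also match phrases that share meaning but not spelling, which this sketch cannot do.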

Duplikate

Duplikate is an AI-powered community management tool designed to help users manage their social media accounts more efficiently. It saves time by retrieving relevant social media posts, categorizing them, and duplicating them with modifications to better suit the user's audience. The tool is powered by OpenAI and offers features such as post scraping, filtering, and copying, with image generation listed as an upcoming feature. Users have praised Duplikate for its ability to streamline content creation, improve engagement, and save time in managing social media accounts.

Trust Stamp

Trust Stamp is a global provider of AI-powered identity services offering a full suite of identity tools, including biometric multi-factor authentication, document validation, identity validation, duplicate detection, and geolocation services. The application is designed to empower organizations across various sectors with advanced biometric identity solutions to reduce fraud, protect personal data privacy, increase operational efficiency, and reach a broader user base worldwide through unique data transformation and comparison capabilities. Founded in 2016, Trust Stamp has achieved significant milestones in net sales, gross profit, and strategic partnerships, positioning itself as a leader in the identity verification industry.

AppZen

AppZen is an AI-powered application designed for modern finance teams to streamline accounts payable processes, automate invoice and expense auditing, and improve compliance. It offers features such as Autonomous AP for invoice automation, Expense Audit for T&E spend management, and Card Audit for analyzing card spend. AppZen's AI learns and understands business practices, ensures compliance, and integrates with existing systems easily. The application helps prevent duplicate spend, fraud, and FCPA violations, making it a valuable tool for finance professionals.

Dart

Dart is AI-native project management software that helps teams streamline tasks and get more done with less effort. It incorporates AI from the ground up, offering features such as task execution, subtask generation, project planning, and duplicate detection. Dart also provides integrations with various workplace tools, solutions tailored to different roles and industries, customizable workspaces, and advanced AI capabilities for project reporting and risk reduction.

ONERECOVERY

ONERECOVERY is a professional data recovery solution for Windows that recovers lost data from various storage devices. The software is designed to handle more than 1,000 data loss scenarios, including accidental deletion, formatting errors, and virus attacks. ONERECOVERY provides features such as file recovery for Windows and Mac, a duplicate file finder, photo and video recovery, hard drive recovery, and SD card data recovery. It offers a user-friendly interface, quick scanning, and compatibility with diverse operating systems and storage devices, and it is described as trusted by millions of users worldwide.

DVC

DVC is an open-source platform for managing machine learning data and experiments. It provides a unified interface for working with data from various sources, including local files, cloud storage, and databases. DVC also includes tools for versioning data and experiments, tracking metrics, and automating compute resources. DVC is designed to make it easy for data scientists and machine learning engineers to collaborate on projects and share their work with others.

Lenso.ai

Lenso.ai is an AI-powered reverse image search tool that allows users to explore billions of images from the web with advanced AI technology. It offers a more accurate and efficient process of reverse image search compared to traditional methods. Users can search for places, people, duplicates, and related images effortlessly. The tool is designed to cater to diverse needs, from professional photographers to marketers and enthusiasts, providing a faster, easier, and more accurate image search experience.

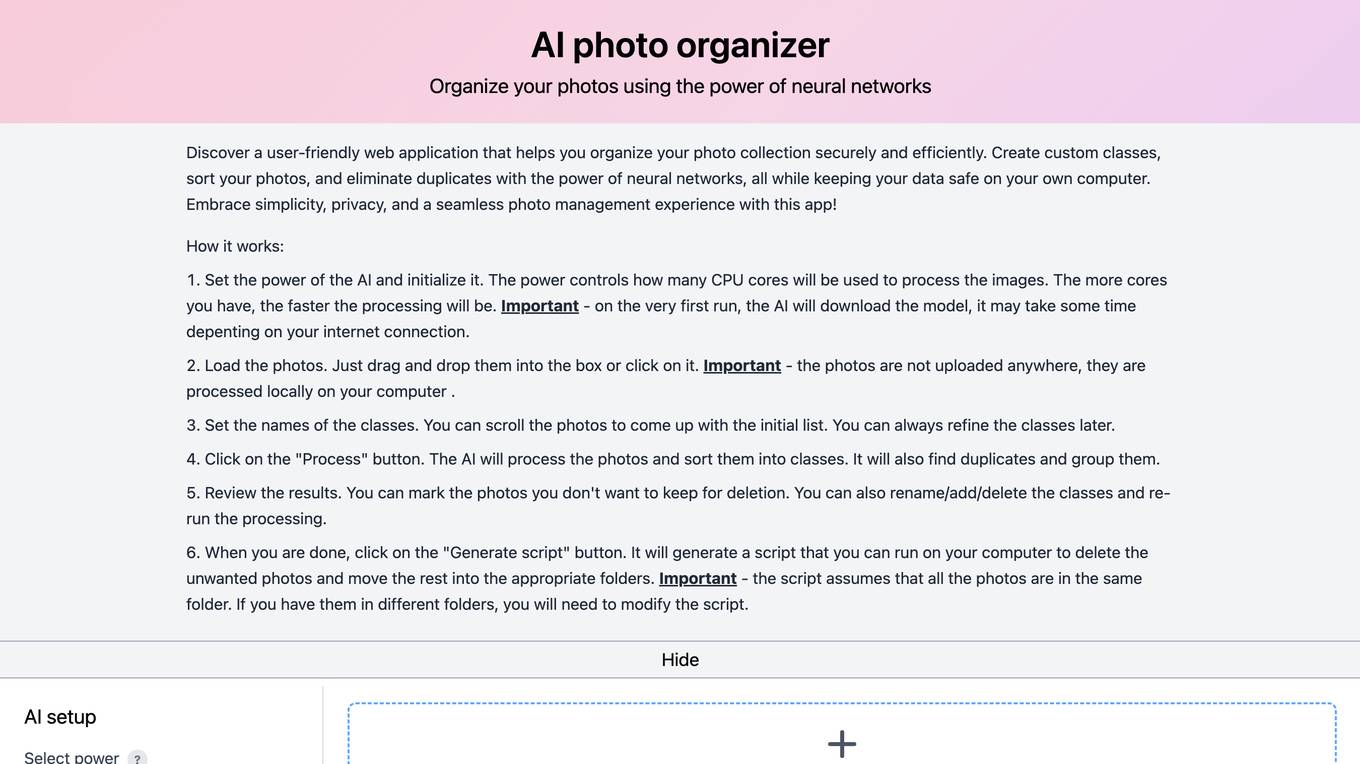

AI Photo Organizer

AI Photo Organizer is a web application that uses neural networks to help users securely and efficiently organize their photo collections. Users can create custom classes, sort photos, eliminate duplicates, and manage their data locally on their own computer. The application emphasizes simplicity and privacy.

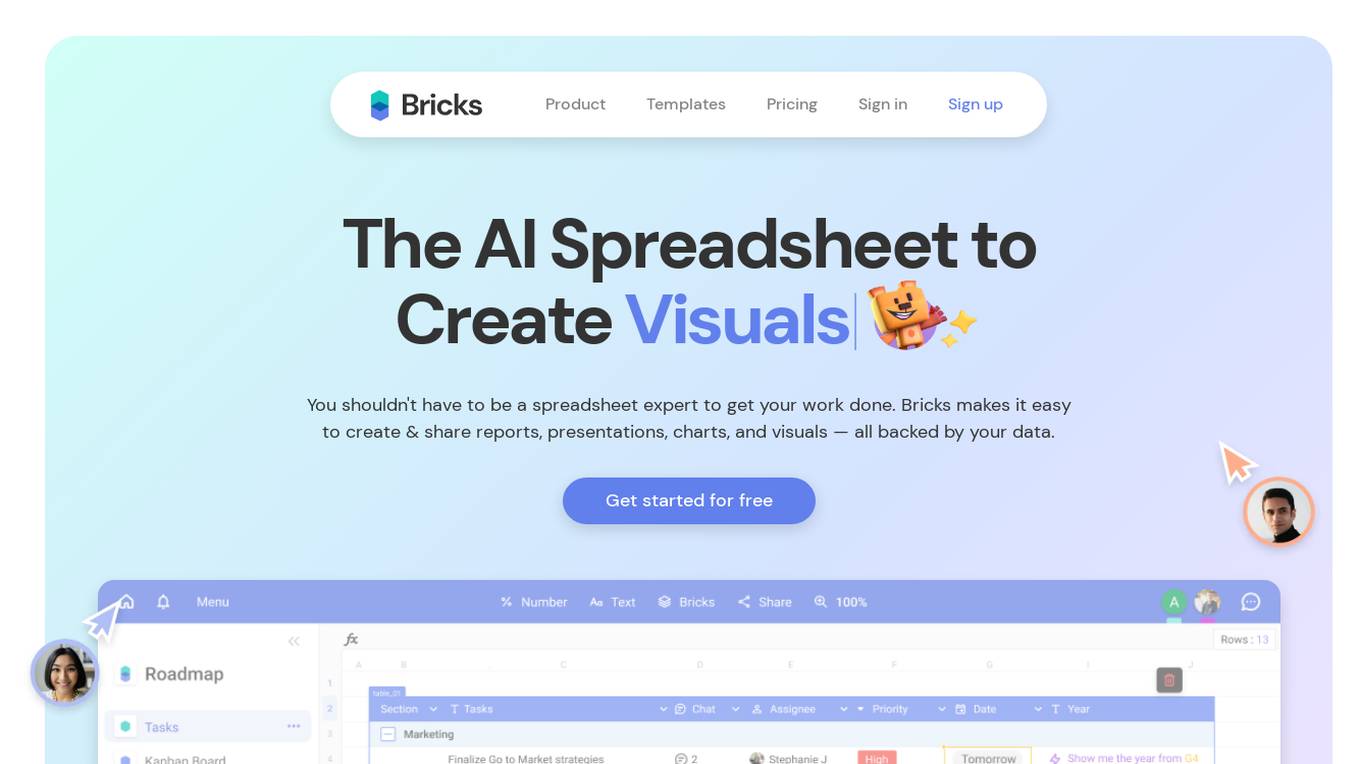

Bricks

Bricks is an AI-first spreadsheet application that simplifies the process of creating and sharing reports, presentations, charts, and visuals using your data. It eliminates the need for advanced spreadsheet expertise, allowing users to effortlessly generate various types of content. Bricks offers a wide range of pre-built templates and tools to enhance productivity and creativity in data analysis and visualization.

Restb.ai

Restb.ai is a leading provider of visual insights for real estate companies, utilizing computer vision and AI to analyze property images. The application offers solutions for AVMs, iBuyers, investors, appraisals, inspections, property search, marketing, insurance companies, and more. By providing actionable and unique data at scale, Restb.ai helps improve valuation accuracy, automate manual processes, and enhance property interactions. The platform enables users to leverage visual insights to optimize valuations, automate report quality checks, enhance listings, improve data collection, and more.

Anea

Anea is a unique marketplace for custom-made jewelry, where users can turn their dreams into reality with the help of AI technology. The platform connects customers with Danish goldsmiths to handcraft their personalized jewelry pieces. Anea offers a wide range of jewelry options, from delicate and stackable sets to statement pieces and wedding collections. With a focus on sustainability and ethical sourcing, Anea ensures a secure shopping experience for its users.

0 - Open Source AI Tools

11 - OpenAI GPTs

Data-Driven Messaging Campaign Generator

Create, analyze, and duplicate customized automated message campaigns to boost retention and drive revenue for your website or app.

Plagiarism Checker

Maintain the originality of your work with our Plagiarism Checker. This plagiarism checker identifies duplicate content, ensuring your work's uniqueness and integrity.

Image Theme Clone

Type “Start” and Get Exact Details on Image Generation and/or Duplication