Best AI tools for Develop AI Ethics

20 - AI tool Sites

Kodora AI

Kodora AI is a leading AI technology and advisory firm based in Australia, specializing in providing end-to-end AI services. They offer AI strategy development, use case identification, workforce AI training, and more. With a team of expert AI engineers and consultants, Kodora focuses on delivering practical outcomes for clients across various industries. The firm is known for its deep expertise, solution-focused approach, and commitment to driving AI adoption and innovation.

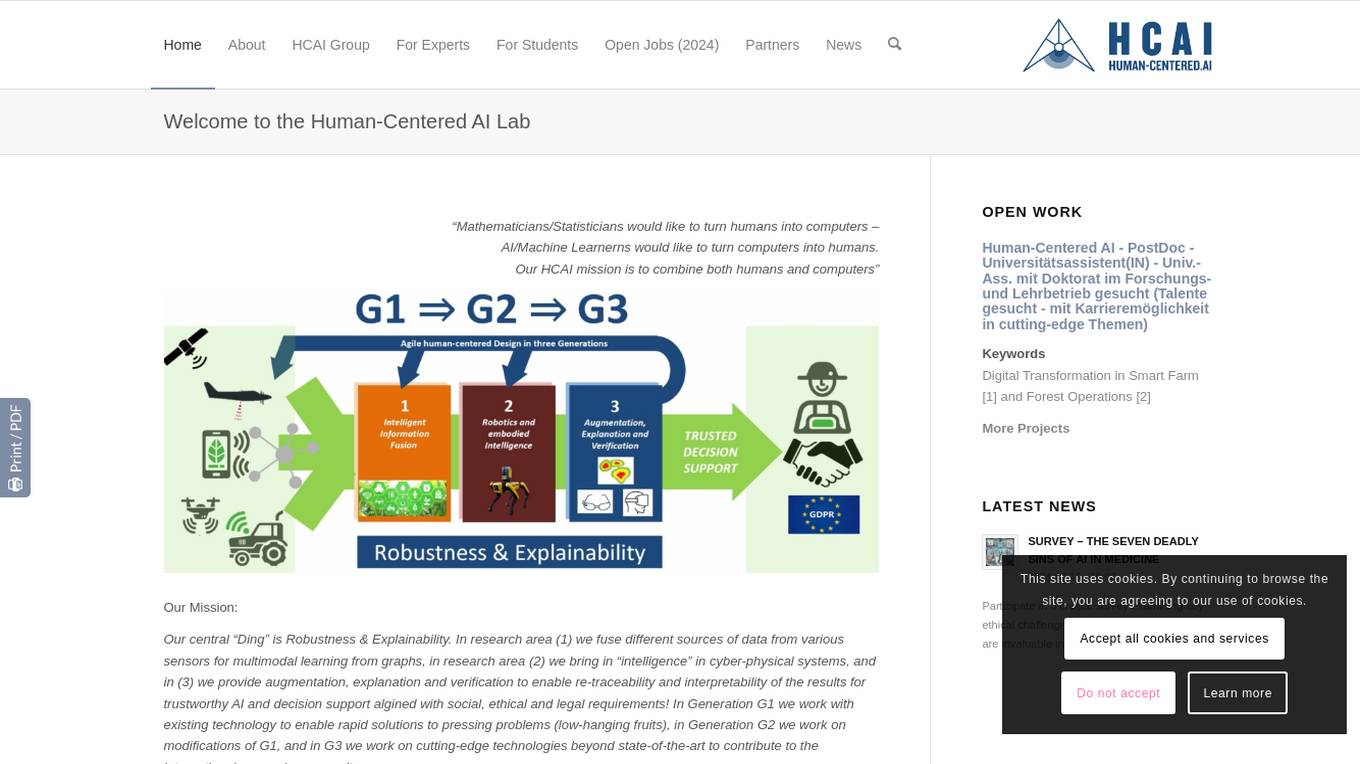

Human-Centred Artificial Intelligence Lab

The Human-Centred Artificial Intelligence Lab (Holzinger Group) is a research group focused on developing AI solutions that are explainable, trustworthy, and aligned with human values, ethical principles, and legal requirements. The lab works on projects related to machine learning, digital pathology, interactive machine learning, and more. Their mission is to combine human and computer intelligence to address pressing problems in various domains such as forestry, health informatics, and cyber-physical systems. The lab emphasizes the importance of explainable AI, human-in-the-loop interactions, and the synergy between human and machine intelligence.

Future of Privacy Forum

The Future of Privacy Forum (FPF) is a nonprofit organization that serves as a catalyst for privacy leadership and scholarship, advancing principled data practices in support of emerging technologies. It provides resources, training sessions, and guidance on AI-related topics, online advertising, youth privacy legislation, and more. FPF brings together industry, academics, civil society, policymakers, and other stakeholders to explore challenges posed by emerging technologies and develop privacy protections, ethical norms, and best practices.

Cut The SaaS

Cut The SaaS is an AI tool that empowers users to harness the power of AI and automation for various aspects of their professional and personal life. The platform offers a wide range of AI tools, content, and resources to help users stay updated on AI trends, enhance their content creation, and optimize their workflows.

Vincent C. Müller

Vincent C. Müller is an Alexander von Humboldt (AvH) Professor of "Philosophy and Ethics of AI" and Director of the Centre for Philosophy and AI Research (PAIR) at Friedrich-Alexander Universität Erlangen-Nürnberg (FAU) in Germany. He is also a Visiting Professor at the Technical University Eindhoven (TU/e) in the Netherlands. His research interests include the philosophy of artificial intelligence, ethics of AI, and the impact of AI on society.

Center for AI Safety (CAIS)

The Center for AI Safety (CAIS) is a research and field-building nonprofit organization based in San Francisco. It conducts research, runs advocacy projects, and provides resources to reduce societal-scale risks associated with artificial intelligence (AI). CAIS focuses on technical AI safety research and field-building projects, and offers a compute cluster for AI/ML safety projects. It aims to ensure that AI is developed and used safely to benefit society, addressing inherent risks and advocating for safety standards.

Montreal AI Ethics Institute

The Montreal AI Ethics Institute (MAIEI) is an international non-profit organization founded in 2018, dedicated to democratizing AI ethics literacy. It equips citizens concerned about artificial intelligence and its impact on society to take action through research summaries, columns, and AI applications in various fields.

Trustworthy AI

Trustworthy AI is a business guide that focuses on navigating trust and ethics in artificial intelligence. Authored by Beena Ammanath, a global thought leader in AI ethics, the book provides practical guidelines for organizations developing or using AI solutions. It addresses the importance of AI systems adhering to social norms and ethics, making fair decisions in a consistent, transparent, explainable, and unbiased manner. Trustworthy AI offers readers a structured approach to thinking about AI ethics and trust, emphasizing the need for ethical considerations in the rapidly evolving landscape of AI technology.

Coalition for Health AI (CHAI)

The Coalition for Health AI (CHAI) is a coalition that provides guidelines for the responsible use of AI in health. It focuses on developing best practices and frameworks for safe and equitable AI in healthcare. CHAI aims to address algorithmic bias and collaborates with diverse stakeholders to drive the development, evaluation, and appropriate use of AI in healthcare.

AIGA AI Governance Framework

The AIGA AI Governance Framework is a practice-oriented framework for implementing responsible AI. It provides organizations with a systematic approach to AI governance, covering the entire process of AI system development and operations. The framework supports compliance with the upcoming European AI regulation and serves as a practical guide for organizations aiming for more responsible AI practices. It is designed to facilitate the development and deployment of transparent, accountable, fair, and non-maleficent AI systems.

Microsoft Responsible AI Toolbox

Microsoft Responsible AI Toolbox is a suite of tools designed to assess, develop, and deploy AI systems in a safe, trustworthy, and ethical manner. It offers integrated tools and functionalities to help operationalize Responsible AI in practice, enabling users to make user-facing decisions faster and more easily. The Responsible AI Dashboard provides a customizable experience for model debugging, decision-making, and business actions. With a focus on responsible assessment, the toolbox aims to promote ethical AI practices and transparency in AI development.

Google DeepMind

Google DeepMind is an AI research company that aims to develop artificial intelligence technologies to benefit the world. It focuses on creating next-generation AI systems to solve complex scientific and engineering challenges. Its models and technologies, such as Gemini, Veo, Imagen 3, SynthID, and AlphaFold, are at the forefront of AI innovation. DeepMind also emphasizes responsibility, safety, education, and career opportunities in the field of AI.

Compassionate AI

Compassionate AI is a cutting-edge AI-powered platform that empowers individuals and organizations to create and deploy AI solutions that are ethical, responsible, and aligned with human values. With Compassionate AI, users can access a comprehensive suite of tools and resources to design, develop, and implement AI systems that prioritize fairness, transparency, and accountability.

Defined.ai

Defined.ai is a leading provider of high-quality and ethical data for AI applications. Founded in 2015, Defined.ai has a global presence with offices in the US, Europe, and Asia. The company's mission is to make AI more accessible and ethical by providing a marketplace for buying and selling AI data, tools, and models. Defined.ai also offers professional services to help deliver success in complex machine learning projects.

AI Index

The AI Index is a comprehensive resource for data and insights on artificial intelligence. It provides unbiased, rigorously vetted, and globally sourced data for policymakers, researchers, journalists, executives, and the general public to develop a deeper understanding of the complex field of AI. The AI Index tracks, collates, distills, and visualizes data relating to artificial intelligence. This includes data on research and development, technical performance and ethics, the economy and education, AI policy and governance, diversity, public opinion, and more.

Stanford HAI

Stanford HAI is a research institute at Stanford University dedicated to advancing AI research, education, and policy to improve the human condition. The institute brings together researchers from a variety of disciplines to work on a wide range of AI-related projects, including developing new AI algorithms, studying the ethical and societal implications of AI, and creating educational programs to train the next generation of AI leaders. Stanford HAI is committed to developing human-centered AI technologies and applications that benefit all of humanity.

Responsible AI Licenses (RAIL)

Responsible AI Licenses (RAIL) is an initiative that empowers developers to restrict the use of their AI technology to prevent irresponsible and harmful applications. It provides licenses with behavioral-use clauses to control specific use cases and prevent misuse of AI artifacts. The organization aims to standardize RAIL licenses, develop collaboration tools, and educate developers on responsible AI practices.

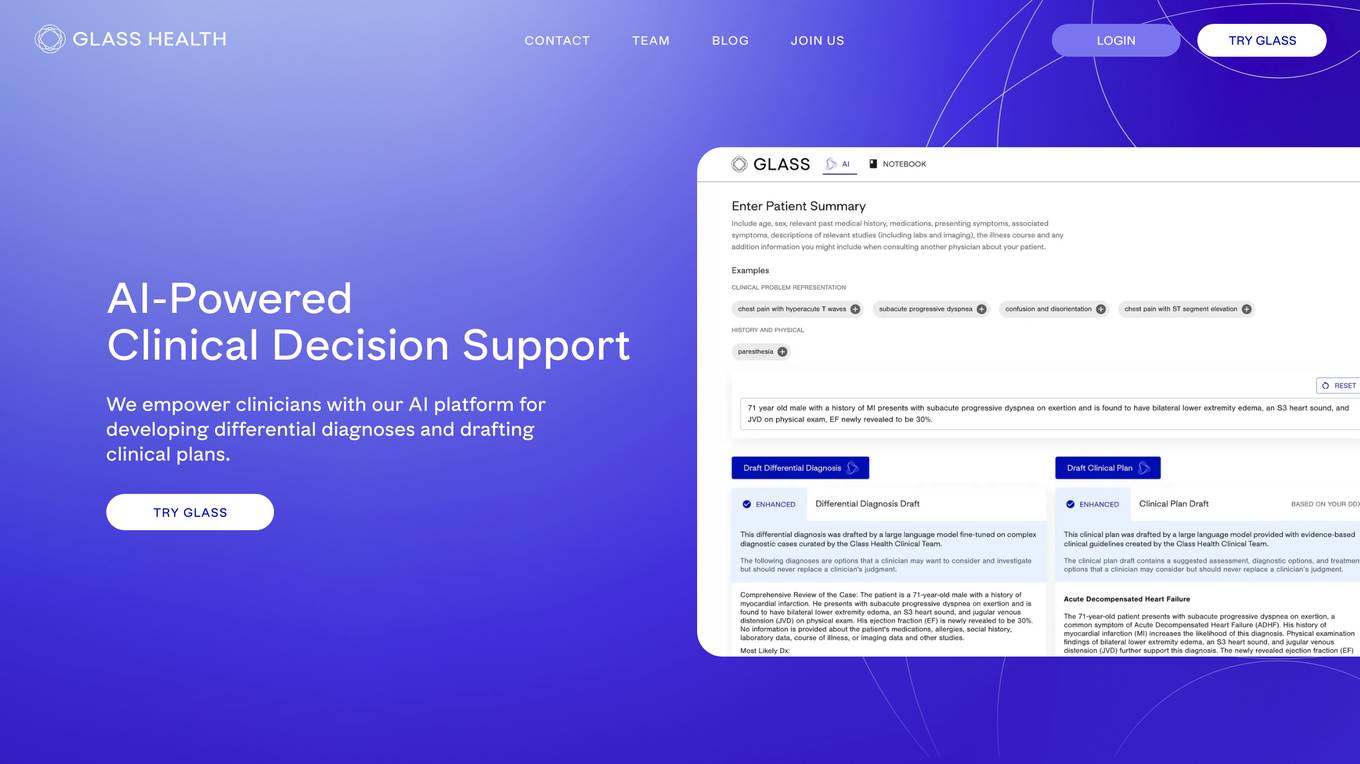

Glass Health

Glass Health is an AI-powered clinical decision support platform that empowers clinicians by providing tools to develop differential diagnoses and draft clinical plans. The platform combines cutting-edge AI technology with evidence-based medical knowledge to streamline diagnostic decisions and optimize clinician workflows. Glass Health's mission is to leverage technology to enhance the practice of medicine, increase diagnostic accuracy, implement evidence-based medicine, promote health equity, and improve patient outcomes globally. The platform is built by clinicians, for clinicians, with a deep commitment to safety, ethics, and alignment.

Frontier Model Forum

The Frontier Model Forum (FMF) is a collaborative effort among leading AI companies to advance AI safety and responsibility. The FMF brings together technical and operational expertise to identify best practices, conduct research, and support the development of AI applications that meet society's most pressing needs. The FMF's core objectives include advancing AI safety research, identifying best practices, collaborating across sectors, and helping AI meet society's greatest challenges.

Center for Human-Compatible Artificial Intelligence

The Center for Human-Compatible Artificial Intelligence (CHAI) is dedicated to building exceptional AI systems for the benefit of humanity. Their mission is to steer AI research towards developing systems that are provably beneficial. CHAI collaborates with researchers, faculty, staff, and students to advance the field of AI alignment and care-like relationships in machine caregiving. They focus on topics such as political neutrality in AI, offline reinforcement learning, and coordination with experts.

0 - Open Source AI Tools

20 - OpenAI GPTs

Professor Arup Das Ethics Coach

Supportive and engaging AI Ethics tutor, providing practical tips and career guidance.

Regulations.AI

Ask about AI regulations in any language. (The same prompt is also given in Chinese, German, French, and Spanish.)

OAI Governance Emulator

I simulate the governance of a unique company focused on AI for good.

Creator's Guide to the Future

You made it, Creator! 💡 I'm Creator's Guide. ✨️ Your dedicated Guide for creating responsible, self-managing AI culture, systems, games, universes, art, etc. 🚀

Thinks and Links Digest

Archive of content shared in Randy Lariar's weekly "Thinks and Links" newsletter about AI, Risk, and Security.

Ethical AI Insights

Expert in Ethics of Artificial Intelligence, offering comprehensive, balanced perspectives based on thorough research, with a focus on emerging trends and responsible AI implementation. Powered by Breebs (www.breebs.com)

Your AI Ethical Guide

Trained in kindness, empathy, and respect, based on ethics from global philosophies.

Inclusive AI Advisor

Expert in AI fairness, offering tailored advice and document insights.

AI Ethics Challenge: Society Needs You

Embark on a journey to navigate the complex landscape of AI ethics and fairness. In this game, you'll encounter real-world scenarios where your choices will determine the ethical course of AI development and its consequences on society. Another GPT Simulator by Dave Lalande

DignityAI: The Ethical Intelligence GPT

DignityAI: The Ethical Intelligence GPT is an advanced AI model designed to prioritize human life and dignity, providing ethically-guided, intelligent responses for complex decision-making scenarios.

Individual Intelligence Oriented Alignment

Ask this AI anything about alignment and it will describe the best course of action a superintelligence should take according to its alignment principles.

Media AI Visionary

Leading AI & Media Expert: In-depth, Ethical, Insightful, developed on OpenAI

Elara: Navigating the Future with Ethical Smarts

Elara and team, guided by the vision of Bob, develop advanced GPT models.

Clinical Medicine Handbook

I can assist doctors with information synthesis, medical literature reviews, patient education material, diagnostic guidelines, treatment options, ethical dilemmas, and staying updated on medical research and innovations.

CryptoSchemer

AGI offering creative financial solutions, willing to explore less ethical strategies.