Best AI tools for Detect Security Issues

20 - AI tool Sites

VIDOC

VIDOC is an AI-powered security engineer that automates code review and penetration testing. It continuously scans and reviews code to detect and fix security issues, helping developers deliver secure software faster. VIDOC is easy to use, requiring only two lines of code to be added to a GitHub Actions workflow. It then takes care of the rest, providing developers with a tailored code solution to fix any issues found.

Giskard

Giskard is an AI Red Teaming & LLM Security Platform designed to continuously secure LLM agents by preventing hallucinations and security issues in production. It offers automated testing to catch vulnerabilities before they happen, trusted by enterprise AI leaders to ensure data and reputation protection. The platform provides comprehensive protection against various security attacks and vulnerabilities, offering end-to-end encryption, data residency & isolation, and compliance with GDPR, SOC 2 Type II, and HIPAA. Giskard helps in uncovering AI vulnerabilities, stopping business failures at the source, unifying testing across teams, and saving time with continuous testing to prevent regressions.

Engage AI Security

The website new.engage-ai.co is an AI application designed to enhance security on the web by providing warnings and information about potential privacy errors and security threats. It helps users understand and address issues related to security certificates, connection safety, and potential attacks. The application aims to improve online security by alerting users to potential risks and guiding them on how to proceed safely.

403 Forbidden Resolver

The website is currently displaying a '403 Forbidden' error, which means the server understood the request but refuses to authorize it. Possible causes include insufficient permissions, server misconfiguration, or access rules that block the client. The 'openresty' message indicates that the server runs on the OpenResty web platform. The issue must be diagnosed and resolved before access to the website can be restored.
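As a rough illustration of how a 403 fits among HTTP responses, the sketch below maps a status code to its standard reason phrase and response class using Python's standard library. The helper name `describe_status` is hypothetical and is not part of any tool listed here.

```python
from http import HTTPStatus

# Response classes keyed by the first digit of the status code.
STATUS_CLASSES = {
    1: "informational",
    2: "success",
    3: "redirection",
    4: "client error",
    5: "server error",
}


def describe_status(code: int) -> tuple[str, str]:
    """Return (reason phrase, response class) for a standard HTTP status code."""
    status = HTTPStatus(code)  # raises ValueError for unknown codes
    return status.phrase, STATUS_CLASSES[code // 100]


phrase, status_class = describe_status(403)
print(f"403 -> {phrase} ({status_class})")
```

A 403 belongs to the 4xx class, so retrying the same request unchanged is unlikely to help; the permissions or access rules on the server side usually need to change.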

Elastic

Elastic is a Search AI Company that offers a platform for building tailored experiences, search and analytics, data ingestion, visualization, and generative AI solutions. The company provides services like Elastic Cloud for real-time insights, Elastic AI Assistant for retrieval and generation, and Search AI Lake for faster integration with LLMs. Elastic aims to help businesses scale with low-latency search AI and accelerate problem resolution with observability powered by advanced ML and analytics.

Semgrep

Semgrep is an AI-powered application designed for static analysis and security testing of code. It helps developers find and fix issues in their code, detect vulnerabilities in the software supply chain, and identify hardcoded secrets. Semgrep offers features such as AI-powered noise filtering, dataflow analysis, and tailored remediation guidance. It is known for its speed, transparency, and extensibility, making it a valuable tool for AppSec teams of all sizes.

Metabob

Metabob is an AI-powered code review tool that helps developers detect, explain, and fix coding problems. It uses proprietary graph neural networks to detect problems and LLMs to explain and resolve them. Metabob's AI is trained on millions of bug fixes performed by experienced developers, enabling it to detect complex problems that span codebases and automatically generate fixes for them. It integrates with popular code hosting platforms and editors such as GitHub, Bitbucket, GitLab, and VS Code, and supports various programming languages including Python, JavaScript, TypeScript, Java, C++, and C.

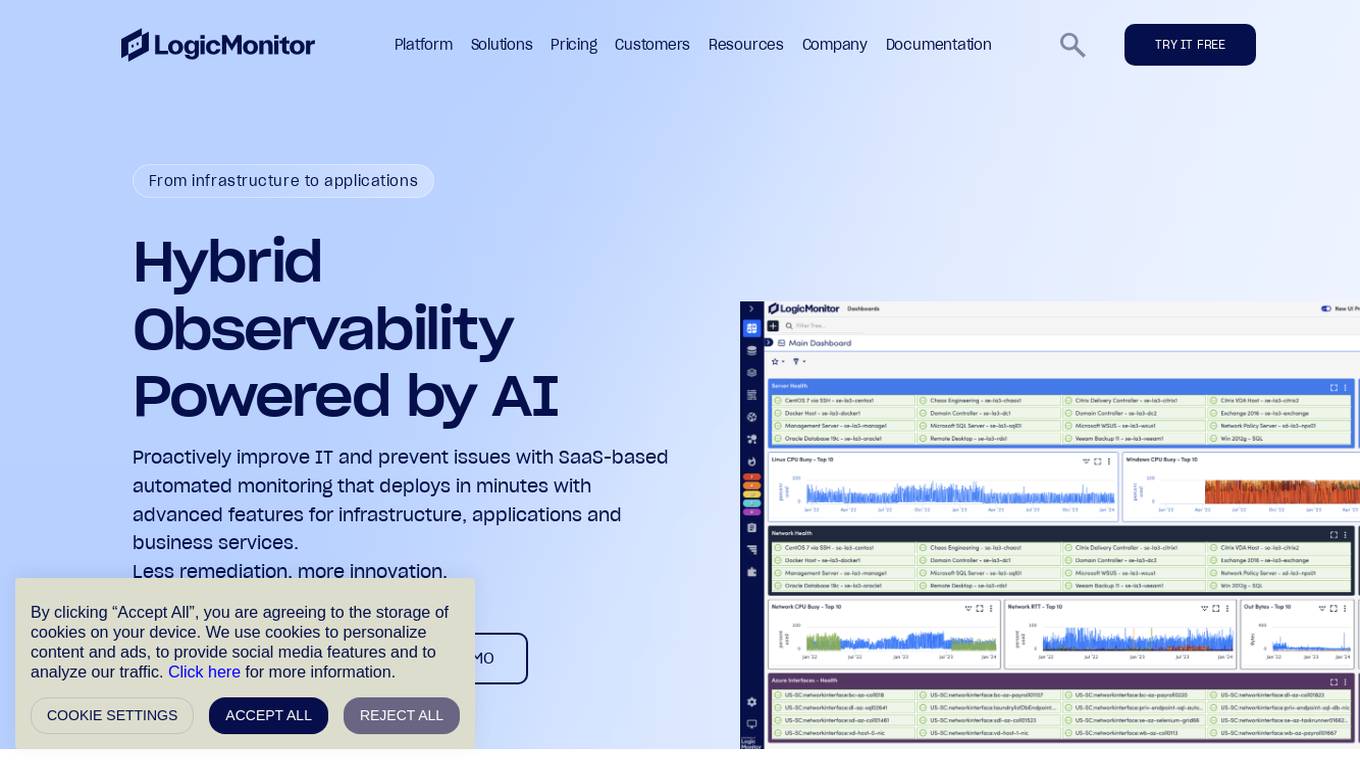

LogicMonitor

LogicMonitor is a cloud-based infrastructure monitoring platform that provides real-time insights and automation through an agentless architecture. It offers a wide range of features including infrastructure monitoring, network monitoring, server monitoring, remote monitoring, virtual machine monitoring, SD-WAN monitoring, database monitoring, storage monitoring, configuration monitoring, cloud monitoring, container monitoring, AWS monitoring, GCP monitoring, Azure monitoring, digital experience SaaS monitoring, website monitoring, APM, AIOps, Dexda Integrations, security dashboards, and platform demo logs. LogicMonitor's AI-driven hybrid observability helps organizations simplify complex IT ecosystems and accelerate incident response.

XenonStack AI

XenonStack AI is an AI application that offers solutions for various industries by providing AI vision systems, incident detection, product inspection, supply chain visibility, and content enhancements. The application utilizes Vision AI and AI agents to automate operations, enhance decision-making, and improve efficiency across different sectors. XenonStack AI empowers users to unlock the potential of fully automated workflows, predict maintenance issues, optimize operations, and ensure safety and security through advanced AI technologies.

CodeDefender α

CodeDefender α is an AI-powered tool that helps developers and non-developers improve code quality and security. It integrates with popular IDEs like Visual Studio, VS Code, and IntelliJ, providing real-time code analysis and suggestions. CodeDefender supports multiple programming languages, including C/C++, C#, Java, Python, and Rust. It can detect a wide range of code issues, including security vulnerabilities, performance bottlenecks, and correctness errors. Additionally, CodeDefender offers features like custom prompts, multiple models, and workspace/solution understanding to enhance code comprehension and knowledge sharing within teams.

Vinetribe.co

Vinetribe.co is a website that appears to be experiencing a privacy error related to its security certificate. The site is currently not considered safe due to a mismatch in the security certificate, potentially indicating a security risk for visitors. The error message suggests that the site's security certificate is issued for *.squarespace.com, not vinetribe.co, which could lead to information theft by attackers. Users are advised to proceed with caution and avoid entering sensitive information on the site until the security issue is resolved.
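The mismatch described above can be sketched as a simplified hostname check. This is an illustrative approximation, not the full validation a browser performs: the function name `hostname_matches` is hypothetical, and the rule assumed here is that a leading `*.` covers exactly one DNS label.

```python
def hostname_matches(pattern: str, hostname: str) -> bool:
    """Return True if a certificate name pattern covers the hostname.

    Simplified rule (an assumption for this sketch): a leading "*."
    matches exactly one DNS label; anything else must match exactly.
    """
    pattern = pattern.lower().rstrip(".")
    hostname = hostname.lower().rstrip(".")
    if pattern.startswith("*."):
        suffix = pattern[1:]  # e.g. ".squarespace.com"
        if not hostname.endswith(suffix):
            return False
        prefix = hostname[: -len(suffix)]
        # The wildcard must stand for one non-empty label with no dots.
        return bool(prefix) and "." not in prefix
    return pattern == hostname


# The certificate is issued for *.squarespace.com, but the site is vinetribe.co.
print(hostname_matches("*.squarespace.com", "vinetribe.co"))
print(hostname_matches("*.squarespace.com", "shop.squarespace.com"))
```

Under this rule the certificate name does not cover vinetribe.co, which is why the browser reports the certificate as invalid for that site.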

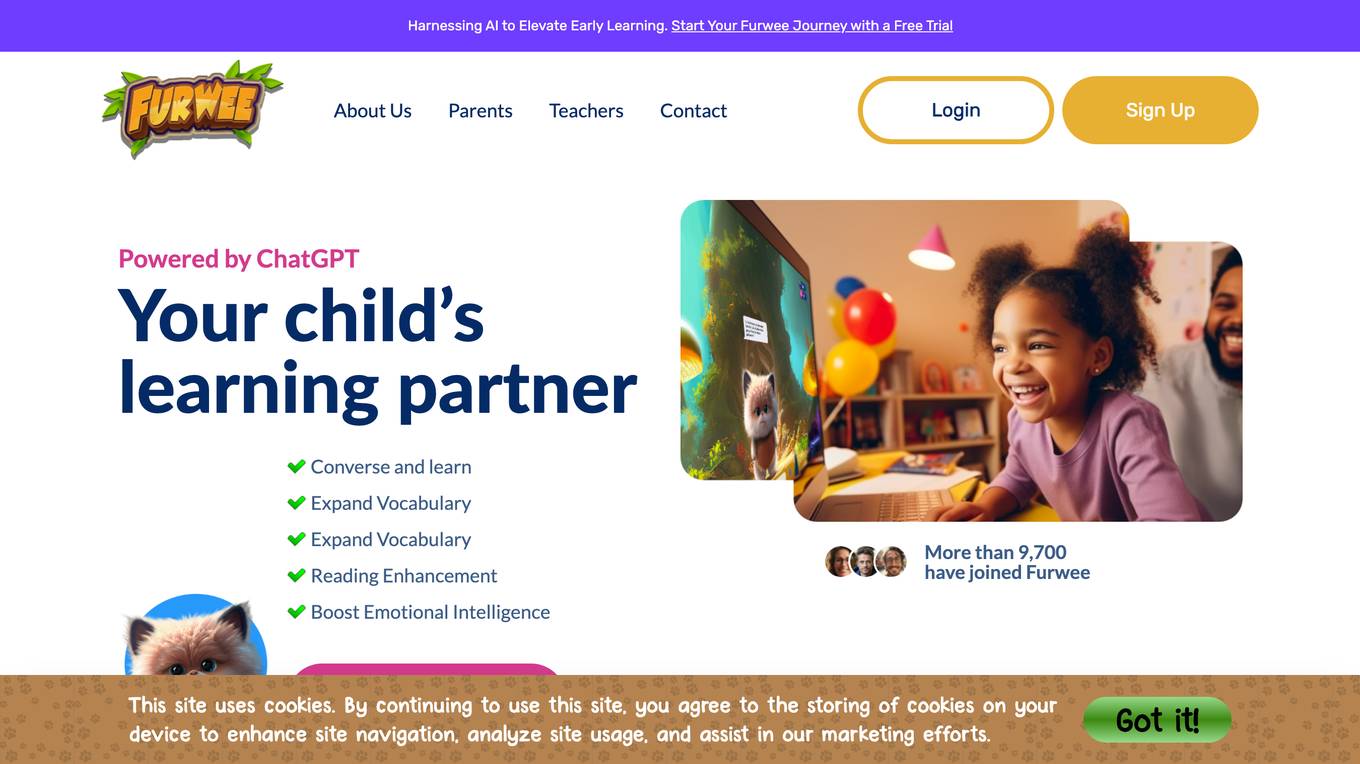

furwee.ai

The website furwee.ai appears to be experiencing a privacy error related to its security certificate. The error message indicates that the connection may not be private, potentially exposing sensitive information to attackers. The site's security certificate is issued by Go Daddy Secure Certificate Authority - G2 but does not match the domain admin.animaticmedia.com, leading to a common name invalidity error. Users are advised to proceed with caution due to the potential security risks associated with the site.

yujo.io.tilda.ws

The website yujo.io.tilda.ws is experiencing a privacy error related to its security certificate. Users are warned that their connection may not be private, potentially exposing sensitive information like passwords, messages, or credit card details to attackers. The site's security certificate is not trusted by the computer's operating system, leading to a warning message for users. The error message advises caution and suggests that the issue may be due to misconfiguration or a potential attack on the connection. Users are given the option to proceed to the site at their own risk, despite the security concerns.

Admorph AI

Admorph AI is a website that appears to be experiencing a privacy error related to its security certificate. The error message suggests that the connection is not private and warns of potential attackers trying to steal sensitive information such as passwords, messages, or credit cards. The site seems to be facing a security certificate issue with the domain *.up.railway.app, which may indicate a misconfiguration or a potential security threat. Users are advised to proceed with caution when accessing admorphai.com.

workverse.space

The website workverse.space appears to be experiencing a privacy error related to its security certificate. The error message indicates that the connection may not be private and warns of potential information theft by attackers. The site's security certificate is issued for *.up.railway.app, which is causing the certificate common name invalid error. Users are advised to proceed to workverse.space at their own risk, as the site's security cannot be verified. The page also includes information about certificate transparency, security enhancements, and privacy policies.

Kreadoai

Kreadoai.com is a website that appears to be experiencing a privacy error related to its security certificate. The site is currently not considered safe due to a potential security threat that may compromise users' information. The error message suggests that the connection to the site is not private, and users are warned about potential risks of information theft. The site's security certificate is issued for *.kreadoai.com by Amazon RSA 2048 M04, with an expiration date of Jan 17, 2027. The site is advised to enhance its security measures to ensure a safe browsing experience for visitors.

uselumin.co

The website uselumin.co is experiencing a privacy error related to an invalid security certificate. The error message indicates a potential security threat where attackers may attempt to steal sensitive information such as passwords, messages, or credit card details. The site's security certificate is issued by 'atlahealthcare.com' and expires on May 8, 2026. The error message advises caution when accessing the site due to the security risk posed by the invalid certificate.

Profile Builder

The website profile-builder.squadpilot.com appears to be experiencing a privacy error related to its security certificate. The error message indicates that the connection is not private, warning users that attackers might be trying to steal sensitive information such as passwords, messages, or credit card details. The security certificate for the site is issued by Sectigo Public Server Authentication CA DV R36, with an expiration date of Aug 12, 2026. The site is advised to improve its security to ensure a safe browsing experience for visitors.

AitoCards

The website aitocards.com seems to be facing a privacy error related to its SSL certificate. The error message indicates that the connection is not private and warns about potential attackers trying to steal sensitive information such as passwords, messages, or credit card details. The certificate in question is issued by cloudflare-dns.com, and the warning suggests that the site's security certificate is invalid. Users are advised to proceed to the site at their own risk, as it may be unsafe due to a potential misconfiguration or interception by an attacker.

eightify.app

The website eightify.app uses a Cloudflare-powered security service to protect itself from online attacks. The service prevents unauthorized access and malicious activity by blocking potential threats. Users may be blocked if they trigger certain actions, such as submitting specific words or phrases, SQL commands, or malformed data. In such cases, they can contact the site owner to resolve the issue by providing details of the blocked activity and the Cloudflare Ray ID.

0 - Open Source AI Tools

20 - OpenAI GPTs

Log Analyzer

I'm designed to help you analyze any logs, such as Linux system logs, Windows logs, security logs, access logs, and error logs. Please do not share information that you would like to keep private. The author does not collect or process any personal data.

Mónica

CSIRT that leads a specialized team in detecting and responding to security incidents, handles containment and recovery, organizes training and drills, prepares reports to optimize security strategies, and coordinates with legal entities when necessary.

CISO GPT

LLM specialized in computer security, acting as a CISO with 20 years of experience, providing precise, data-driven technical responses to enhance organizational security.

Phish or No Phish Trainer

Hone your phishing detection skills! Analyze emails, texts, and calls to spot deception. Become a security pro!

Phoenix Vulnerability Intelligence GPT

Expert in analyzing vulnerabilities with a ransomware focus, with intelligence powered by Phoenix Security.

Defender for Endpoint Guardian

I assist individuals seeking to learn about or work with Microsoft's Defender for Endpoint. I provide detailed explanations, step-by-step guides, troubleshooting advice, cybersecurity best practices, and demonstrations, all specifically tailored to Microsoft Defender for Endpoint.

Prompt Injection Detector

GPT used to classify prompts as valid inputs or injection attempts. JSON output.
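The listing does not document the JSON format this GPT returns, so the following is only a sketch of how a caller might validate such a classification. The `label` field and the values `valid` and `injection` are assumptions made for illustration, as is the helper name `parse_verdict`.

```python
import json

# Hypothetical label names; the real GPT's output schema is not documented here.
ALLOWED_LABELS = {"valid", "injection"}


def parse_verdict(raw: str) -> dict:
    """Parse a JSON classification and reject anything outside the expected shape."""
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    label = data.get("label")
    if label not in ALLOWED_LABELS:
        raise ValueError(f"unexpected label: {label!r}")
    return {"label": label, "is_injection": label == "injection"}


verdict = parse_verdict('{"label": "injection"}')
print(verdict)
```

Validating the output strictly matters here because the classifier itself processes untrusted text; treating any unexpected shape as an error avoids silently accepting a manipulated response.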