Best AI tools for Deploy Systems

20 - AI Tool Sites

Aquarium

Aquarium is an AI tool that accelerates the process of building and deploying production AI systems. The platform has been instrumental in enhancing the capabilities of AI models, particularly in computer vision and natural language processing domains. By leveraging generative AI technology, Aquarium aims to bring value to a vast user base, spanning from college students to enterprises. The recent integration with Notion signifies a strategic move towards making AI more accessible and impactful in everyday life.

Responsible AI Institute

The Responsible AI Institute is a global non-profit organization dedicated to equipping organizations and AI professionals with tools and knowledge to create, procure, and deploy AI systems that are safe and trustworthy. They offer independent assessments, conformity assessments, and certification programs to ensure that AI systems align with internal policies, regulations, laws, and best practices for responsible technology use. The institute also provides resources, news, and a community platform for members to collaborate and stay informed about responsible AI practices and regulations.

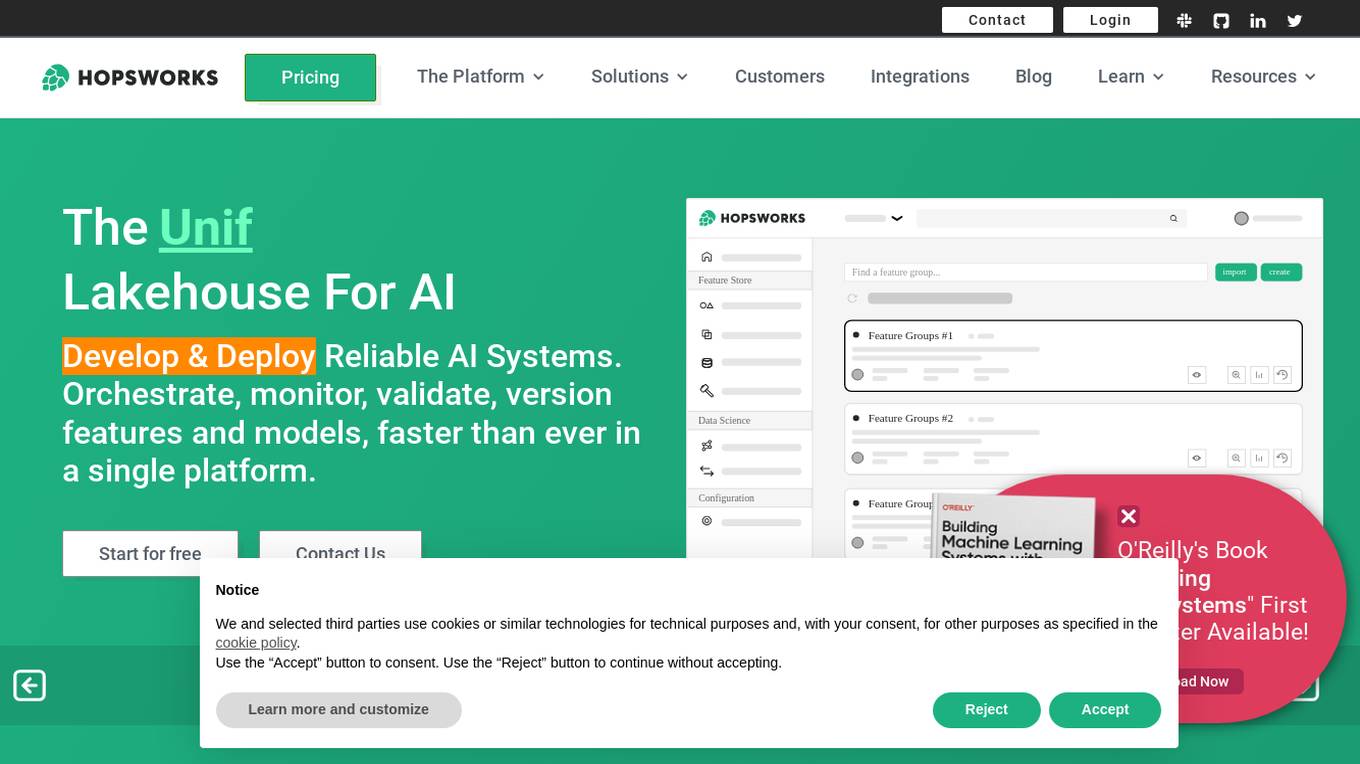

Hopsworks

Hopsworks is an AI platform that offers a comprehensive solution for building, deploying, and monitoring machine learning systems. It provides features such as a Feature Store, real-time ML capabilities, and generative AI solutions. Hopsworks enables users to develop and deploy reliable AI systems, orchestrate and monitor models, and personalize machine learning models with private data. The platform supports batch and real-time ML tasks, with the flexibility to deploy on-premises or in the cloud.

Compassionate AI

Compassionate AI is a cutting-edge AI-powered platform that empowers individuals and organizations to create and deploy AI solutions that are ethical, responsible, and aligned with human values. With Compassionate AI, users can access a comprehensive suite of tools and resources to design, develop, and implement AI systems that prioritize fairness, transparency, and accountability.

Tangram Vision

Tangram Vision is a company that provides sensor calibration tools and infrastructure for robotics and autonomous vehicles. Their products include MetriCal, a high-speed bundle adjustment software for precise sensor calibration, and AutoCal, an on-device, real-time calibration health check and adjustment tool. Tangram Vision also offers a high-resolution depth sensor called HiFi, which combines high-resolution depth data with high-powered AI capabilities. The company's mission is to accelerate the development and deployment of autonomous systems by providing the tools and infrastructure needed to ensure the accuracy and reliability of sensors.

MegaMatcher ABIS Online

MegaMatcher ABIS Online is an automated biometric identification system developed by Neurotechnology. It offers a turnkey multi-biometric solution for government and enterprise applications worldwide. The system includes enrollment, biometric matching, identity management, and data analysis, and can be deployed as a cloud service or on-premises. With support for fingerprint, face, iris, and palmprint biometric modalities, the system ensures high accuracy, reliability, and unlimited storage of biometric and demographic information. It also provides easy integration through a RESTful API and SDK libraries, along with security features like role-based access control and auditability.

Microsoft Responsible AI Toolbox

Microsoft Responsible AI Toolbox is a suite of tools designed to assess, develop, and deploy AI systems in a safe, trustworthy, and ethical manner. It offers integrated tools and functionalities to help operationalize Responsible AI in practice, enabling users to make user-facing decisions faster and more easily. The Responsible AI Dashboard provides a customizable experience for model debugging, decision-making, and business actions. With a focus on responsible assessment, the toolbox aims to promote ethical AI practices and transparency in AI development.

InsightFace

InsightFace is an open-source deep face analysis library that provides a rich variety of state-of-the-art algorithms for face recognition, detection, and alignment. It is designed to be efficient for both training and deployment, making it suitable for research institutions and industrial organizations. InsightFace has achieved top rankings in various challenges and competitions, including the ECCV 2022 WCPA Challenge, NIST-FRVT 1:1 VISA, and WIDER Face Detection Challenge 2019.

OECD.AI

The OECD Artificial Intelligence Policy Observatory, also known as OECD.AI, is a platform that focuses on AI policy issues, risks, and accountability. It provides resources, tools, and metrics to build and deploy trustworthy AI systems. The platform aims to promote innovative and trustworthy AI through collaboration with countries, stakeholders, experts, and partners. Users can access information on AI incidents, AI principles, policy areas, publications, and videos related to AI. OECD.AI emphasizes the importance of data privacy, generative AI management, AI computing capacities, and AI's potential futures.

Vellum AI

Vellum AI is an AI platform that supports using OpenAI models hosted on Microsoft Azure. It offers tools for prompt engineering, semantic search, prompt chaining, evaluations, and monitoring. Vellum enables users to build AI systems with features like workflow automation, document analysis, fine-tuning, Q&A over documents, intent classification, summarization, vector search, chatbots, blog generation, sentiment analysis, and more. The platform is backed by top VCs and founders of well-known companies, providing a complete solution for building LLM-powered applications.

PoplarML

PoplarML is a platform that enables the deployment of production-ready, scalable ML systems with minimal engineering effort. It offers one-click deploys, real-time inference, and framework-agnostic support. With PoplarML, users can deploy ML models to a fleet of GPUs using a CLI tool and invoke them through a REST API endpoint. The platform supports TensorFlow, PyTorch, and JAX models.

Composio

Composio is an integration platform for AI Agents and LLMs that allows users to access over 150 tools with just one line of code. It offers seamless integrations, managed authentication, a repository of tools, and powerful RPA tools to streamline and optimize the connection and interaction between AI Agents/LLMs and various APIs/services. Composio simplifies JSON structures, improves variable names, and enhances error handling to increase reliability by 30%. The platform is SOC 2 Type II compliant, which helps protect user data.

PixieBrix

PixieBrix is an AI engagement platform that allows users to build, deploy, and manage internal AI tools to drive team productivity. It unifies AI landscapes with oversight and governance for enterprise scale. The platform is enterprise-ready and fully customizable to meet unique needs, and can be deployed on any site, making it easy to integrate into existing systems. PixieBrix uses AI and automation to streamline workflows and raise productivity.

Promptmate

Promptmate.io is an AI-powered app builder that allows users to create customized applications based on leading AI systems. With Promptmate, users can combine different AI systems, add external data, and automate processes to streamline their workflows. The platform offers a range of features, including pre-built app templates, bulk processing, and data extenders, making it easy for users to build and deploy AI-powered applications without the need for coding.

Duckietown

Duckietown is a platform for delivering cutting-edge robotics and AI learning experiences. It offers teaching resources to instructors, hands-on activities to learners, an accessible research platform to researchers, and a state-of-the-art ecosystem for professional training. Duckietown's mission is to make robotics and AI education state-of-the-art, hands-on, and accessible to all.

GenWorlds

GenWorlds is an event-based communication framework for building multi-agent systems. It offers a platform for creating Generative AI applications where users can design customizable environments, utilize scalable architecture, access a repository of memories and tools, choose cognitive processes for agents, and pick coordination protocols. GenWorlds aims to foster a vibrant community of developers, AI enthusiasts, and innovators to collaborate, innovate, share knowledge, and grow together.

SymphonyAI Financial Crime Prevention AI SaaS Solutions

SymphonyAI offers AI SaaS solutions for financial crime prevention, helping organizations detect fraud, conduct customer due diligence, and prevent payment fraud. Their solutions leverage generative and predictive AI to enhance efficiency and effectiveness in investigating financial crimes. SymphonyAI's products cater to industries like banking, insurance, financial markets, and private banking, providing rapid deployment, scalability, and seamless integration to meet regulatory compliance requirements.

echowin

echowin is an AI Voice Agent Builder Platform that enables businesses to create AI agents for calls, chat, and Discord. It offers a comprehensive solution for automating customer support with features like Agentic AI logic and reasoning, support for over 30 languages, parallel call answering, and 24/7 availability. The platform allows users to build, train, test, and deploy AI agents quickly and efficiently, without compromising on capabilities or scalability. With a focus on simplicity and effectiveness, echowin empowers businesses to enhance customer interactions and streamline operations through cutting-edge AI technology.

Fathom5

Fathom5 is a company that specializes in the intersection of AI and industrial systems. They offer a range of products and services to help customers build the industrial systems of the future. Their solutions are focused on critical infrastructure, making it more resilient, flexible, and efficient.

Embedl

Embedl is an AI tool that specializes in developing advanced solutions for efficient AI deployment in embedded systems. With a focus on deep learning optimization, Embedl offers a cost-effective solution that reduces energy consumption and accelerates product development cycles. The platform caters to industries such as automotive, aerospace, and IoT, providing cutting-edge AI products that drive innovation and competitive advantage.

2 - Open Source AI Tools

mojo

Mojo is a new programming language that bridges the gap between research and production by combining Python syntax and ecosystem with systems programming and metaprogramming features. Mojo is still young, but it is designed to become a superset of Python over time.

ansible-power-aix

The IBM Power Systems AIX Collection provides modules to manage configurations and deployments of Power AIX systems, enabling workloads on Power platforms as part of an enterprise automation strategy through the Ansible ecosystem. It includes example best practices, requirements for AIX versions, Ansible, and Python, along with resources for documentation and contribution.

20 - OpenAI GPTs

Europe Ethos Guide for AI

Ethics-focused GPT builder assistant based on European AI guidelines, recommendations, and regulations.

Azure Arc Expert

Azure Arc expert providing guidance on architecture, deployment, and management.

Docker and Docker Swarm Assistant

Expert in Docker and Docker Swarm solutions and troubleshooting.

AI Engineering

AI engineering expert offering insights into machine learning and AI development.

Tech Mentor

Expert software architect with experience in the design, construction, development, testing, and deployment of web, mobile, and standalone software architectures.

Rust on ESP32 Expert

Expert in Rust coding for ESP32, offering detailed programming and deployment guidance.

The Dock - Your Docker Assistant

Technical assistant specializing in Docker and Docker Compose. Let's debug!