Best AI tools for Deploy Feature Serving

20 - AI tool Sites

ibl.ai

ibl.ai is a generative AI platform that focuses on education, providing cutting-edge solutions for institutions to create AI mentors, tutoring apps, and content creation tools. The platform empowers educators by giving them full control over their code, data, and models. With advanced features and support for both web and native mobile platforms, ibl.ai seamlessly integrates with existing infrastructure, making it easy to deploy across organizations. The platform is designed to enhance learning experiences, foster critical thinking, and engage students deeply in educational content.

Mystic.ai

Mystic.ai is an AI tool designed to deploy and scale Machine Learning models with ease. It offers a fully managed Kubernetes platform that runs in your own cloud, allowing users to deploy ML models in their own Azure/AWS/GCP account or in a shared GPU cluster. Mystic.ai provides cost optimizations, fast inference, simpler developer experience, and performance optimizations to ensure high-performance AI model serving. With features like pay-as-you-go API, cloud integration with AWS/Azure/GCP, and a beautiful dashboard, Mystic.ai simplifies the deployment and management of ML models for data scientists and AI engineers.

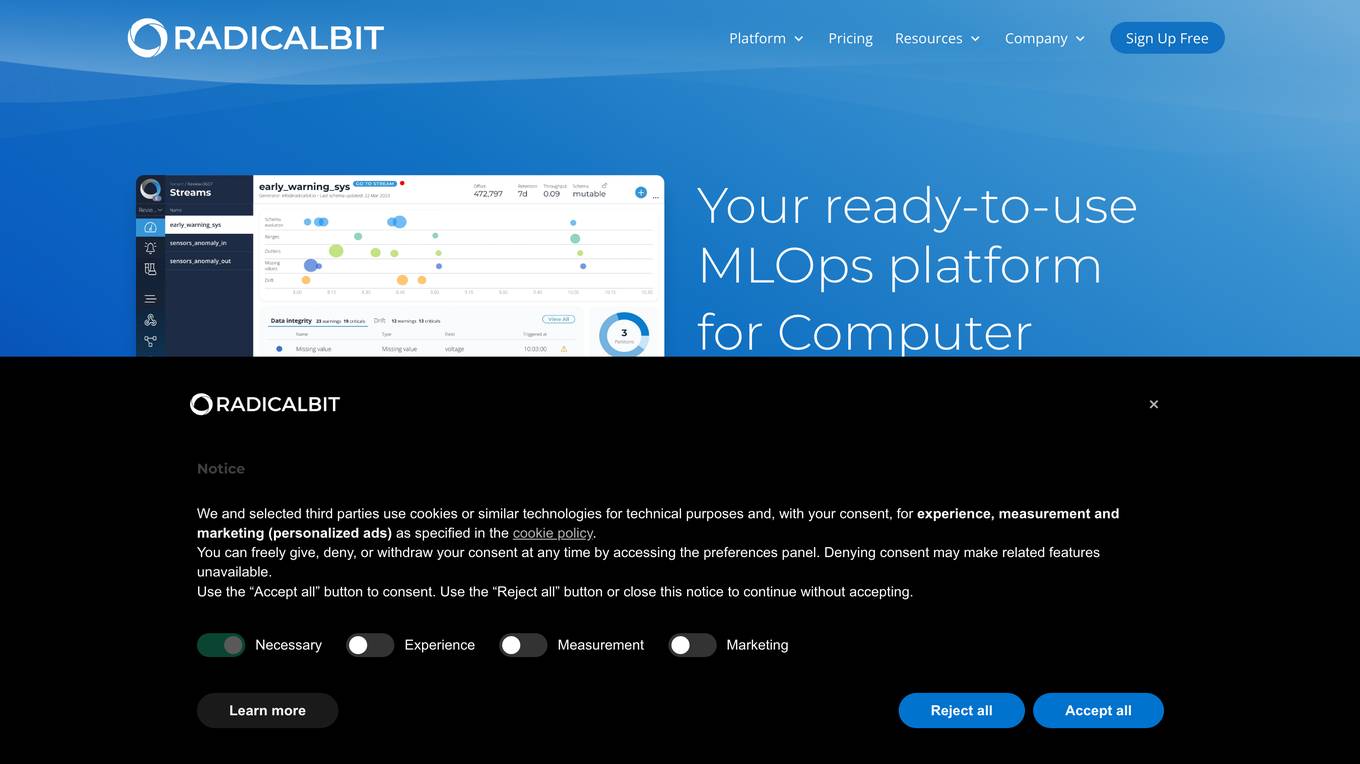

Radicalbit

Radicalbit is an MLOps and AI Observability platform that helps businesses deploy, serve, observe, and explain their AI models. It provides a range of features to help data teams maintain full control over the entire data lifecycle, including real-time data exploration, outlier and drift detection, and model monitoring in production. Radicalbit can be seamlessly integrated into any ML stack, whether SaaS or on-prem, and can be used to run AI applications in minutes.

Denvr DataWorks AI Cloud

Denvr DataWorks AI Cloud is a cloud-based AI platform that provides end-to-end AI solutions for businesses. It offers a range of features including high-performance GPUs, scalable infrastructure, ultra-efficient workflows, and cost efficiency. Denvr DataWorks is an NVIDIA Elite Partner for Compute, and its platform is used by leading AI companies to develop and deploy innovative AI solutions.

Unified DevOps platform to build AI applications

This is a unified DevOps platform to build AI applications. It provides a comprehensive set of tools and services to help developers build, deploy, and manage AI applications. The platform includes a variety of features such as a code editor, a debugger, a profiler, and a deployment manager. It also provides access to a variety of AI services, such as natural language processing, machine learning, and computer vision.

Hopsworks

Hopsworks is an AI platform that offers a comprehensive solution for building, deploying, and monitoring machine learning systems. It provides features such as a Feature Store, real-time ML capabilities, and generative AI solutions. Hopsworks enables users to develop and deploy reliable AI systems, orchestrate and monitor models, and personalize machine learning models with private data. The platform supports batch and real-time ML tasks, with the flexibility to deploy on-premises or in the cloud.

Dora

Dora is an AI-powered platform that enables users to create 3D animated websites without the need for coding. It caters to designers, freelancers, and creative professionals who seek to design visually captivating websites effortlessly. With Dora, users can craft mesmerizing 3D and animated visuals that are responsive and seamlessly translate across devices. The platform is designed for professionals who prioritize design aesthetics and offers a no-code experience for those transitioning from other design tools. Dora leverages advanced AI algorithms to generate, customize, and deploy stunning landing pages, revolutionizing the web design process.

JFrog ML

JFrog ML is an AI platform designed to streamline AI development from prototype to production. It offers a unified MLOps platform to build, train, deploy, and manage AI workflows at scale. With features like Feature Store, LLMOps, and model monitoring, JFrog ML empowers AI teams to collaborate efficiently and optimize AI & ML models in production.

Magick

Magick is a cutting-edge Artificial Intelligence Development Environment (AIDE) that empowers users to rapidly prototype and deploy advanced AI agents and applications without coding. It provides a full-stack solution for building, deploying, maintaining, and scaling AI creations. Magick's open-source, platform-agnostic nature allows for full control and flexibility, making it suitable for users of all skill levels. With its visual node-graph editors, users can code visually and create intuitively. Magick also offers powerful document processing capabilities, enabling effortless embedding and access to complex data. Its real-time and event-driven agents respond to events right in the AIDE, ensuring prompt and efficient handling of tasks. Magick's scalable deployment feature allows agents to handle any number of users, making it suitable for large-scale applications. Additionally, its multi-platform integrations with tools like Discord, Unreal Blueprints, and Google AI provide seamless connectivity and enhanced functionality.

GitWit

GitWit is an online tool that helps you build web apps quickly, even if you have no coding experience. With GitWit, you can create a React app in minutes and use AI to augment your own coding skills. GitWit supports React, Tailwind, and Node.js, and it has generated over 1,000 projects to date. It can help you build many types of web apps, from simple landing pages to complex e-commerce stores.

Prodvana

Prodvana is an intelligent deployment platform that helps businesses automate and streamline their software deployment process. It provides a variety of features to help businesses improve the speed, reliability, and security of their deployments. Prodvana is a cloud-based platform that can be used with any type of infrastructure, including on-premises, hybrid, and multi-cloud environments. It is also compatible with a wide range of DevOps tools and technologies. Prodvana's key features include:

- Intent-based deployments: Prodvana uses intent-based deployment technology to automate the deployment process. This means that businesses can simply specify their deployment goals, and Prodvana will automatically generate and execute the necessary steps to achieve those goals. This can save businesses a significant amount of time and effort.
- Guardrails for deployments: Prodvana provides a variety of guardrails to help businesses ensure the security and reliability of their deployments. These guardrails include approvals, database validations, automatic deployment validation, and simple interfaces to add custom guardrails. This helps businesses to prevent errors and reduce the risk of outages.
- Frictionless DevEx: Prodvana provides a frictionless developer experience by tracking commits through the infrastructure, ensuring complete visibility beyond just Docker images. This helps developers to quickly identify and resolve issues, and it also makes it easier to collaborate with other team members.
- Intelligence with Clairvoyance: Prodvana's Clairvoyance feature provides businesses with insights into the impact of their deployments before they are executed. This helps businesses to make more informed decisions about their deployments and to avoid potential problems.
- Easy integrations: Prodvana integrates seamlessly with a variety of DevOps tools and technologies. This makes it easy for businesses to use Prodvana with their existing workflows and processes.
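To make the intent-based idea above concrete, here is a minimal Python sketch of the general pattern: compare a desired state with the current state, derive the steps needed to converge, and let guardrail checks veto individual steps. This is an illustration only and is not Prodvana's API; the names `Step`, `plan`, `apply`, and `no_removals` are hypothetical, and the service names and versions are made up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    service: str
    action: str   # "deploy" or "remove"
    version: str


def plan(current, desired):
    """Derive the steps that move `current` (service -> version) to `desired`."""
    steps = []
    for service, version in sorted(desired.items()):
        if current.get(service) != version:
            steps.append(Step(service, "deploy", version))
    for service in sorted(set(current) - set(desired)):
        steps.append(Step(service, "remove", current[service]))
    return steps


def apply(current, steps, guardrails=()):
    """Run steps in order; stop at the first step that any guardrail rejects."""
    state = dict(current)
    for step in steps:
        if not all(check(step) for check in guardrails):
            break
        if step.action == "deploy":
            state[step.service] = step.version
        else:
            state.pop(step.service, None)
    return state


# Hypothetical example: the user states only the goal, not the steps.
current = {"api": "v1", "worker": "v1", "legacy": "v3"}
desired = {"api": "v2", "worker": "v1"}
steps = plan(current, desired)


def no_removals(step):
    """Example guardrail (an assumption): block any step that removes a service."""
    return step.action != "remove"


result = apply(current, steps, guardrails=[no_removals])
```

In this sketch the plan contains a deploy of `api` and a removal of `legacy`; the guardrail lets the first step through and halts before the removal, so `legacy` stays in place.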

Superlinked

Superlinked is a compute framework for your information retrieval and feature engineering systems, focused on turning complex data into vector embeddings. Vectors power most of what you already do online - hailing a cab, finding a funny video, getting a date, scrolling through a feed or paying with a tap. And yet, building production systems powered by vectors is still too hard! Our goal is to help enterprises put vectors at the center of their data & compute infrastructure, to build smarter and more reliable software.

CodeConductor

CodeConductor is a no-code AI software development platform that empowers users to build scalable, high-quality applications without the need for extensive coding. The platform streamlines the app development process, allowing users to focus on innovation and customization. With features like accelerated app development, complete customization control, intelligent feature suggestions, dynamic data modeling, and seamless CI/CD & auto-scaled hosting, CodeConductor offers a user-friendly and efficient solution for creating web and mobile applications. The platform also provides enterprise-grade security, robust deployment options, and transparent code history tracking.

Weaviate

Weaviate is an AI-native database that developers love. It offers a feature-rich vector database trusted by AI innovators, empowering AI-native builders to create AI-powered search, retrieval augmented generation, and agentic AI applications. Weaviate simplifies the process of building production-ready AI applications by providing seamless model integration, pre-built database agents, and language-agnostic SDKs for easy development. With billion-scale architecture and enterprise-ready deployment options, Weaviate enables developers to scale seamlessly, deploy anywhere, and meet enterprise requirements. The platform is designed to help AI builders write less custom code, optimize costs, and build AI-native apps faster.

SwiftSora

SwiftSora is an open-source project that enables users to generate videos from prompt text online. The project utilizes OpenAI's Sora model to streamline video creation and includes a straightforward one-click website deployment feature. With SwiftSora, users can effortlessly produce high-quality video assets, ranging from realistic scenes to imaginative visuals, by simply providing text instructions. The platform offers a user-friendly interface with customizable settings, making it accessible to both beginners and experienced video creators. SwiftSora empowers users to elevate their creativity and redefine the boundaries of possibility in video production.

Global Blockchain Show

The Global Blockchain Show is an annual event that brings together experts and enthusiasts in the blockchain and AI industries. The event features a variety of speakers, workshops, and exhibitions, and provides a platform for attendees to learn about the latest developments in these fields. The 2024 Global Blockchain Show will be held in Dubai, UAE, on April 16-17. The event will feature a keynote address from Sophia, the world's most famous humanoid robot, as well as presentations from other leading experts in the blockchain and AI fields. Attendees will also have the opportunity to network with other professionals in the industry and learn about the latest products and services from leading companies. The Global Blockchain Show is a must-attend event for anyone interested in the latest developments in blockchain and AI.

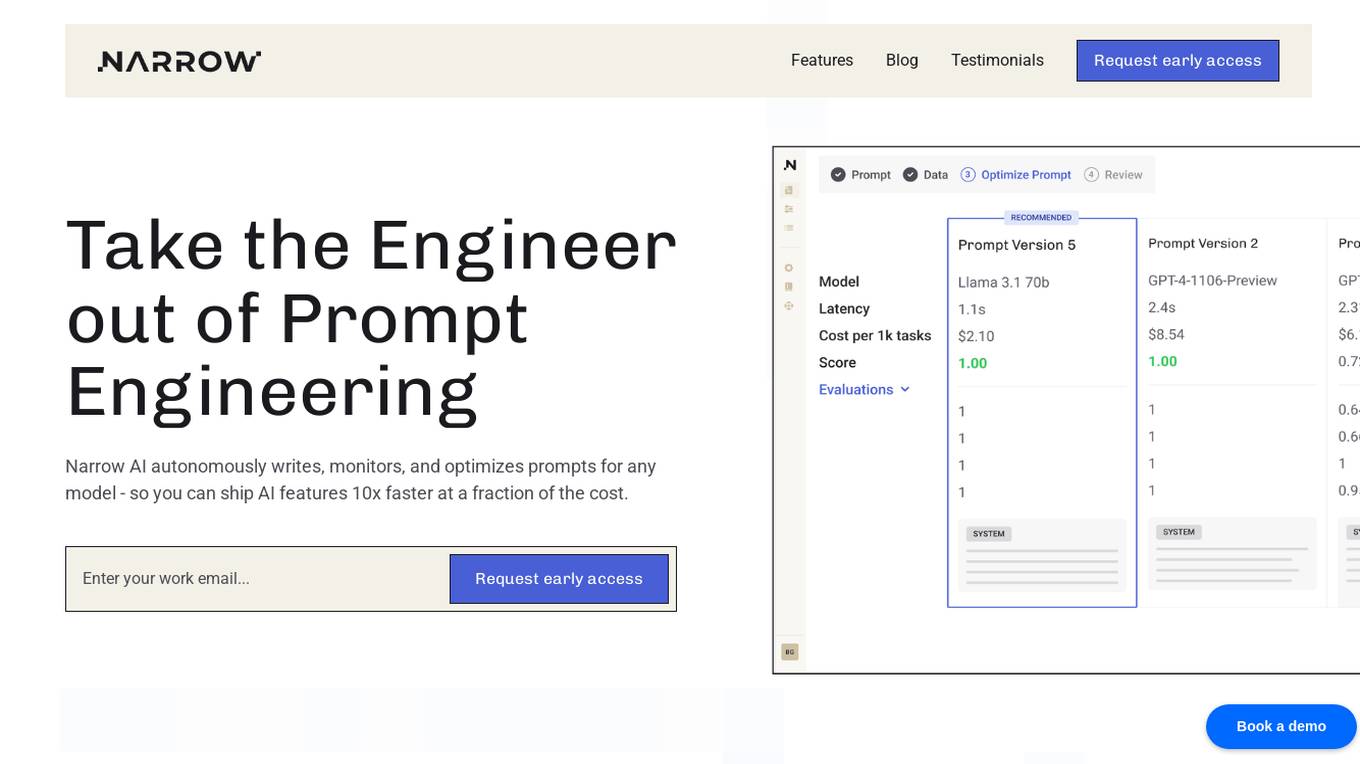

Narrow AI

Narrow AI is an AI application that autonomously writes, monitors, and optimizes prompts for any model, enabling users to ship AI features 10x faster at a fraction of the cost. It streamlines the workflow by allowing users to test new models in minutes, compare prompt performance, and deploy on the optimal model for their use case. Narrow AI helps users maximize efficiency by generating expert-level prompts, adapting prompts to new models, and optimizing prompts for quality, cost, and speed.

H2O.ai

H2O.ai is a leading platform that offers a convergence of the world's best predictive and generative AI solutions for private and protected data. The platform provides a wide range of AI agents, digital assistants, business insights, predictive AI tools, and solutions for model builders, data scientists, and enterprise developers. H2O.ai is known for its innovative AI technologies that empower organizations to accelerate model development, train custom models, and manage the full ML lifecycle. With a focus on privacy and security, H2O.ai is trusted by banks, telcos, and government agencies worldwide.

FARSPEAK.AI

FARSPEAK.AI is an AI application that offers RESTful AI for databases, allowing users to query databases using natural language and deploy AI agents to enhance data processing. The application supports MongoDB Atlas, provides up-to-date embeddings, and offers both structured and unstructured data support. FARSPEAK simplifies work for AI engineers, app & web developers, and product designers by enabling faster AI feature development, natural language querying, and insights generation from data.

Plumb

Plumb is a no-code, node-based builder that empowers product, design, and engineering teams to create AI features together. It enables users to build, test, and deploy AI features with confidence, fostering collaboration across different disciplines. With Plumb, teams can ship prototypes directly to production, ensuring that the best prompts from the playground are the exact versions that go to production. It goes beyond automation, allowing users to build complex multi-tenant pipelines, transform data, and leverage validated JSON schema to create reliable, high-quality AI features that deliver real value to users. Plumb also makes it easy to compare prompt and model performance, enabling users to spot degradations, debug them, and ship fixes quickly. It is designed for SaaS teams, helping ambitious product teams collaborate to deliver state-of-the-art AI-powered experiences to their users at scale.

1 - Open Source AI Tools

feast

Feast is an open source feature store for machine learning, providing a fast path to manage infrastructure for productionizing analytic data. It allows ML platform teams to make features consistently available, avoid data leakage, and decouple ML from data infrastructure. Feast abstracts feature storage from retrieval, ensuring portability across different model training and serving scenarios.
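The two ideas in the description above, separating storage from retrieval and avoiding data leakage, can be illustrated with a small in-memory sketch. This is not Feast's API: `TinyFeatureStore` and its methods are hypothetical names, and the entity and feature values are invented. The training path returns the latest value at or before a given timestamp (a point-in-time lookup), while the serving path returns the latest known value through the same storage.

```python
from bisect import bisect_right
from collections import defaultdict
from datetime import datetime


class TinyFeatureStore:
    """Toy in-memory feature store (hypothetical; not Feast's API)."""

    def __init__(self):
        # entity_id -> feature name -> list of (timestamp, value), sorted by time
        self._history = defaultdict(lambda: defaultdict(list))

    def ingest(self, entity_id, feature, timestamp, value):
        rows = self._history[entity_id][feature]
        rows.append((timestamp, value))
        rows.sort(key=lambda row: row[0])

    def get_historical(self, entity_id, features, as_of):
        """Training path: latest value at or before `as_of`, so a training
        row never sees data from its own future."""
        result = {}
        for name in features:
            rows = self._history[entity_id][name]
            timestamps = [ts for ts, _ in rows]
            idx = bisect_right(timestamps, as_of)
            result[name] = rows[idx - 1][1] if idx else None
        return result

    def get_online(self, entity_id, features):
        """Serving path: latest known value for each feature."""
        return self.get_historical(entity_id, features, datetime.max)


store = TinyFeatureStore()
store.ingest("driver_1", "trips_today", datetime(2024, 1, 1), 3)
store.ingest("driver_1", "trips_today", datetime(2024, 1, 2), 7)

# A label observed at noon on Jan 1 must only see the Jan 1 value.
training_row = store.get_historical(
    "driver_1", ["trips_today"], as_of=datetime(2024, 1, 1, 12)
)
serving_row = store.get_online("driver_1", ["trips_today"])
```

Callers depend only on the two retrieval methods, so the backing storage could be swapped without changing training or serving code, which is the portability point the description makes.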

20 - OpenAI GPTs

Flask Expert Assistant

This GPT is a specialized assistant for Flask, the popular web framework in Python. It is designed to help both beginners and experienced developers with Flask-related queries, ranging from basic setup and routing to advanced features like database integration and application scaling.

Frontend Developer

AI front-end developer, expert in coding React, Next.js, Vue, Svelte, TypeScript, Gatsby, Angular, HTML, CSS, and JavaScript, and advanced in Flexbox, Tailwind, and Material Design. Mentors junior, intermediate, and senior front-end developers in coding and debugging. Let's code, build, and deploy a SaaS app.

Azure Arc Expert

Azure Arc expert providing guidance on architecture, deployment, and management.

Instructor GCP ML

Trainer for the GCP ML Engineer certification, with detailed answers and explanations.

Docker and Docker Swarm Assistant

Expert in Docker and Docker Swarm solutions and troubleshooting.

Cloudwise Consultant

Expert in cloud-native solutions, provides tailored tech advice and cost estimates.