Best AI tools for< Data Ingest >

20 - AI tool Sites

SingleStore

SingleStore is a real-time data platform designed for apps, analytics, and gen AI. It offers faster hybrid vector + full-text search, fast-scaling integrations, and a free tier. SingleStore can read, write, and reason on petabyte-scale data in milliseconds. It supports streaming ingestion, high concurrency, first-class vector support, record lookups, and more.

SID

SID is a data ingestion, storage, and retrieval pipeline that provides real-time context for AI applications. It connects to various data sources, handles authentication and permission flows, and keeps information up-to-date. SID's API allows developers to retrieve the right piece of data for a given task, enabling them to build AI apps that are fast, accurate, and scalable. With SID, developers can focus on building their products and leave the data management to SID.

Databricks

Databricks is a data and AI company that provides a unified platform for data, analytics, and AI. The platform includes a variety of tools and services for data management, data warehousing, real-time analytics, data engineering, data science, and AI development. Databricks also offers a variety of integrations with other tools and services, such as ETL tools, data ingestion tools, business intelligence tools, AI tools, and governance tools.

SPREAD AI

SPREAD AI is an AI application that provides Engineering Intelligence solutions for various industries such as Automotive & Mobility, Aerospace & Defense, and Industrial Goods & Machinery. It unifies fragmented engineering data into living Product Twins, enabling engineers and AI agents to share the same system-level understanding. The platform offers rapid data ingestion, contextualization of product data, and harnessing Engineering Intelligence in an open platform. SPREAD AI helps in faster innovation, lower costs, and better products throughout the product lifecycle from R&D to Production to Aftermarket.

Elastic

Elastic is a Search AI Company that offers a platform for building tailored experiences, search and analytics, data ingestion, visualization, and generative AI solutions. The company provides services like Elastic Cloud for real-time insights, Elastic AI Assistant for retrieval and generation, and Search AI Lake for faster integration with LLMs. Elastic aims to help businesses scale with low-latency search AI and accelerate problem resolution with observability powered by advanced ML and analytics.

Trieve

Trieve is an AI-first infrastructure API that offers search, recommendations, and RAG capabilities by combining language models with tools for fine-tuning ranking and relevance. It helps companies build unfair competitive advantages through their discovery experiences, powering over 30,000 discovery experiences across various categories. Trieve supports semantic vector search, BM25 & SPLADE full-text search, hybrid search, merchandising & relevance tuning, and sub-sentence highlighting. The platform is built on open-source models, ensuring data privacy, and offers self-hostable options for sensitive data and maximum performance.

Ragie

Ragie is a fully managed RAG-as-a-Service platform designed for developers. It offers easy-to-use APIs and SDKs to help developers get started quickly, with advanced features like LLM re-ranking, summary index, entity extraction, flexible filtering, and hybrid semantic and keyword search. Ragie allows users to connect directly to popular data sources like Google Drive, Notion, Confluence, and more, ensuring accurate and reliable information delivery. The platform is led by Craft Ventures and offers seamless data connectivity through connectors. Ragie simplifies the process of data ingestion, chunking, indexing, and retrieval, making it a valuable tool for AI applications.

Cognee

Cognee is an AI application that helps users build deterministic AI memory by perfecting exceptional AI apps with intelligent data management. It acts as a semantic memory layer, uncovering hidden connections within data and infusing it with company-specific language and principles. Cognee offers data ingestion and enrichment services, resulting in relevant data retrievals and lower infrastructure costs. The application is suitable for various industries, including customer engagement, EduTech, company onboarding, recruitment, marketing, and tourism.

Knowledgio

Knowledgio is a no-code solution for building personalized AI tools, designed for agencies and individuals looking to transform their expertise into unique AI applications. The platform allows users to save time by creating AI workspaces that can be easily shared and monetized. With a focus on simplicity and user-friendliness, Knowledgio enables non-technical users to build AI tools in minutes, leveraging features like easy data ingestion, automated distribution, and real-time collaboration. The platform supports multiple modalities such as text, voice, vision, and code, making it a versatile tool for a wide range of applications.

Inworld

Inworld is an AI framework designed for games and media, offering a production-ready framework for building AI agents with client-side logic and local model inference. It provides tools optimized for real-time data ingestion, low latency, and massive scale, enabling developers to create engaging and immersive experiences for users. Inworld allows for building custom AI agent pipelines, refining agent behavior and performance, and seamlessly transitioning from prototyping to production. With support for C++, Python, and game engines, Inworld aims to future-proof AI development by integrating 3rd-party components and foundational models to avoid vendor lock-in.

Faros AI

Faros AI is an AI-driven platform designed to enhance engineering productivity by providing personalized insights, guidance, and recommendations. It helps technology teams reduce bottlenecks, optimize software delivery, and improve speed and quality. The platform offers a comprehensive BI solution for software engineering, with features like AI guidance, customizable analytics, and high-security data ingestion. Faros AI is built by engineers for engineers, compatible with various data sources, and tailored to meet enterprise-scale requirements.

Jacquard

Jacquard is an AI-powered platform that offers hyper-personalized brand messaging at scale. It provides a core platform for generating brand-safe messaging, along with add-ons for audience optimization and personalized campaigns. The technology is designed to resonate with people by tailoring messaging to individual customer contexts. Jacquard's expert language calibration and trusted content generation ensure sustained brand affinity and high engagement levels. The platform integrates seamlessly with existing tech stacks and offers real-time API and data ingestion for continuous optimization.

You.com

You.com is an AI search infrastructure designed for enterprise teams. It offers a range of AI solutions, including API search, agent APIs for LLMs, vertical indexes, and evaluations. The platform aims to deliver immediate value by providing accurate and real-time search results, tailored for agentic AI systems. You.com is proven to enhance accuracy, speed, and precision in enterprise AI applications, making it a trusted choice for industry leaders.

Marvin Labs

Marvin Labs is an AI-powered investment analysis copilot designed for professional investors. It provides expert-level AI that turns filings, press releases, and management commentary into material insights. The application offers reliable and transparent insights grounded in primary financial content, professional-grade outputs, seamless workflow integration, and always current data ingestion. Marvin Labs aims to boost research capacity, support global and niche coverage, and provide easy evaluation without registration or credit card requirements.

Clari

Clari is a revenue operations platform that helps businesses track, forecast, and analyze their revenue performance. It provides a unified view of the revenue process, from lead generation to deal closing, and helps businesses identify and address revenue leaks. Clari is powered by AI and machine learning, which helps it to automate tasks, provide insights, and make recommendations. It is used by businesses of all sizes, from startups to large enterprises.

Asktro

Asktro is an AI tool that brings natural language search and an AI assistant to static documentation websites. It offers a modern search experience powered by embedded text similarity search and large language models. Asktro provides a ready-to-go search UI, plugin for data ingestion and indexing, documentation search, and an AI assistant for answering specific questions.

BalancedWork

BalancedWork is an AI-powered platform that helps teams make data-driven decisions about when to work in-person. By combining AI and Social Science, the application addresses challenges such as frustrating virtual meetings, decreasing employee satisfaction due to mandates, weakening team bonds, and underutilized office spaces. BalancedWork offers solutions like ingesting data from enterprise APIs, evaluating work patterns, generating team schedules, and providing ongoing recommendations to adapt to changing needs. The platform aims to boost productivity, engagement, and collaboration in organizations by optimizing work interactions and relationships.

Doctrine

Doctrine is an AI-powered application that allows users to add AI-powered Q&A features to their apps in minutes. It leverages knowledge from data or knowledge bases to answer user questions or embed AI features. With the ability to ingest content from various sources like websites, documents, and images, Doctrine simplifies the process of knowledge extraction and enables seamless integration of AI capabilities into applications.

PandasAI

PandasAI is an open-source AI tool designed for conversational data analysis. It allows users to ask questions in natural language to their enterprise data and receive real-time data insights. The tool is integrated with various data sources and offers enhanced analytics, actionable insights, detailed reports, and visual data representation. PandasAI aims to democratize data analysis for better decision-making, offering enterprise solutions for stable and scalable internal data analysis. Users can also fine-tune models, ingest universal data, structure data automatically, augment datasets, extract data from websites, and forecast trends using AI.

IngestAI

IngestAI is a Silicon Valley-based startup that provides a sophisticated toolbox for data preparation and model selection, powered by proprietary AI algorithms. The company's mission is to make AI accessible and affordable for businesses of all sizes. IngestAI's platform offers a turn-key service tailored for AI builders seeking to optimize AI application development. The company identifies the model best-suited for a customer's needs, ensuring it is designed for high performance and reliability. IngestAI utilizes Deepmark AI, its proprietary software solution, to minimize the time required to identify and deploy the most effective AI solutions. IngestAI also provides data preparation services, transforming raw structured and unstructured data into high-quality, AI-ready formats. This service is meticulously designed to ensure that AI models receive the best possible input, leading to unparalleled performance and accuracy. IngestAI goes beyond mere implementation; the company excels in fine-tuning AI models to ensure that they match the unique nuances of a customer's data and specific demands of their industry. IngestAI rigorously evaluates each AI project, not only ensuring its successful launch but its optimal alignment with a customer's business goals.

1 - Open Source AI Tools

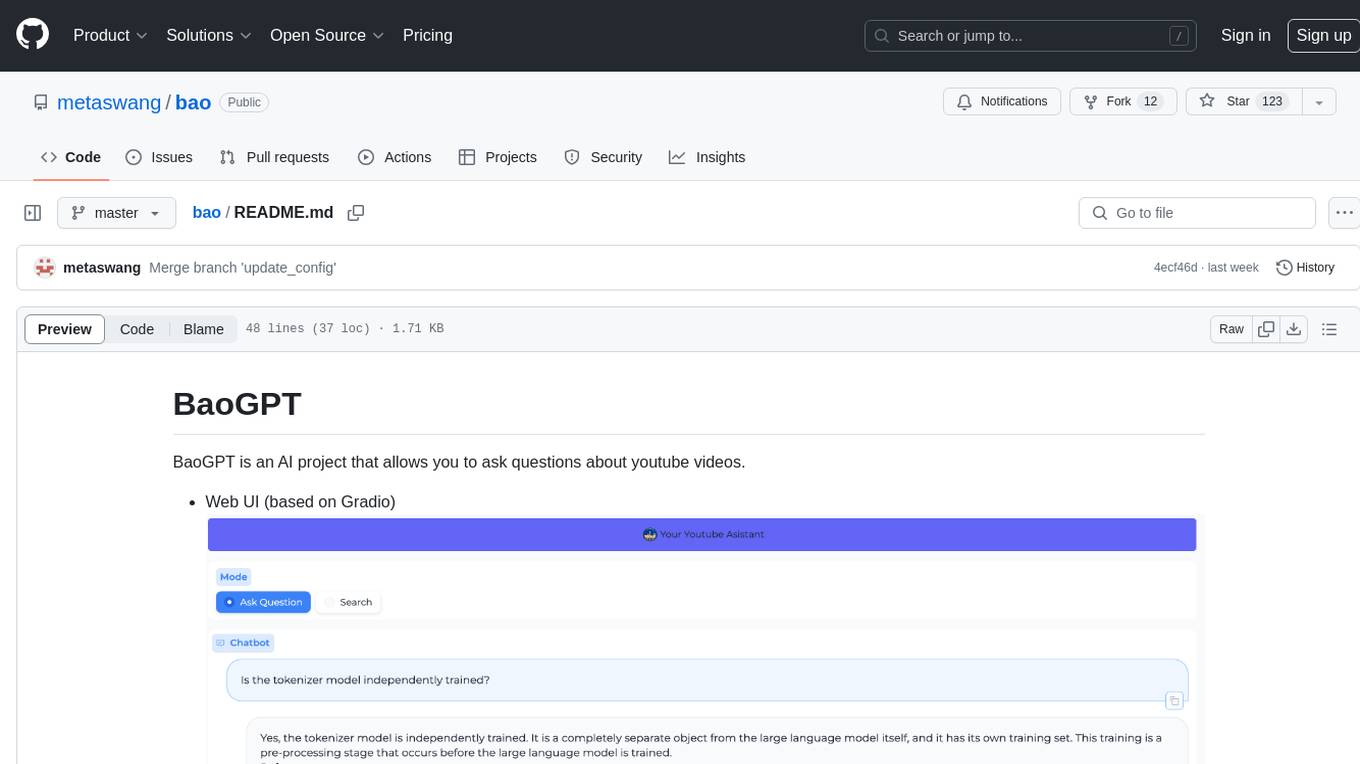

bao

BaoGPT is an AI project designed to facilitate asking questions about YouTube videos. It features a web UI based on Gradio and Discord integration. The tool utilizes a pipeline that routes input questions to either a greeting-like branch or a query & answer branch. The query analysis is performed by the LLM, which extracts attributes as filters and optimizes and rewrites questions for better vector retrieval in the vector DB. The tool then retrieves top-k candidates for grading and outputs final relative documents after grading. Lastly, the LLM performs summarization based on the reranking output, providing answers and attaching sources to the user.

20 - OpenAI Gpts

👑 Data Privacy for PI & Security Firms 👑

Private Investigators and Security Firms, given the nature of their work, handle highly sensitive information and must maintain strict confidentiality and data privacy standards.

Value Investor's Stock Assistant

I assist in analyzing stocks with a detail-oriented, patient, data-driven approach, drawing from a wide range of expert authors.

Smart Investor

I provide investment insights and data, clarifying complex financial concepts.

InvestorUpdateAssistantGPT

This GPT assists in creating impactful investor updates for companies that have already received funding. It asks insightful questions and recommends KPIs and data that should be included, even assisting with formatting and structuring with updates. It prompts you to opt out of sharing chat data.

Nimbus

Expert in CFA, quant, software engineering, data science, and economics for investment strategies.

Bitcoin GPT

Offers Bitcoin investment strategy insights based on recent or your own chart data.

Quotient

Investment Co-Pilot: Portfolio backtesting and access to in-depth financial data and historical closing prices of US-listed companies. (Pulse formerly)

Ai Trading Indicator Creator

Specializing in AI-driven trading indicators, offering innovative, data-driven solutions for traders and investors seeking enhanced market analysis and decision-making tools.

Bitcoin Price Wizard

I am packed with every Bitcoin price from 2014-2023. I quickly analyze Bitcoin data to help you make smart investment decisions, plot trends, and find interesting correlations! *Warning: This is not financial advice.

AI UFO Investigator

Advanced, uncensored AI for detailed UFO research and analysis, with diverse capabilities.

UFO / UAP Investigator

Expert in UFO/UAP analysis, employing scientific methods for realistic interpretations.

策略研报分析 Investment Strategy Research

专注于“投资策略”类型的研报分析总结,提炼对行业配置的核心观点 Focusing on the analysis and summary of research reports on the type of "investment strategy", refining core perspectives on industry allocation

Currency and Data Wizard

Professional yet approachable AI for finance and tech assistance.

SherLock Investor

The Sign of Money: A SherLockian Quest for Decoding the Financial Market’s Mysteries