Best AI Tools for Control LLM Generation

20 - AI tool Sites

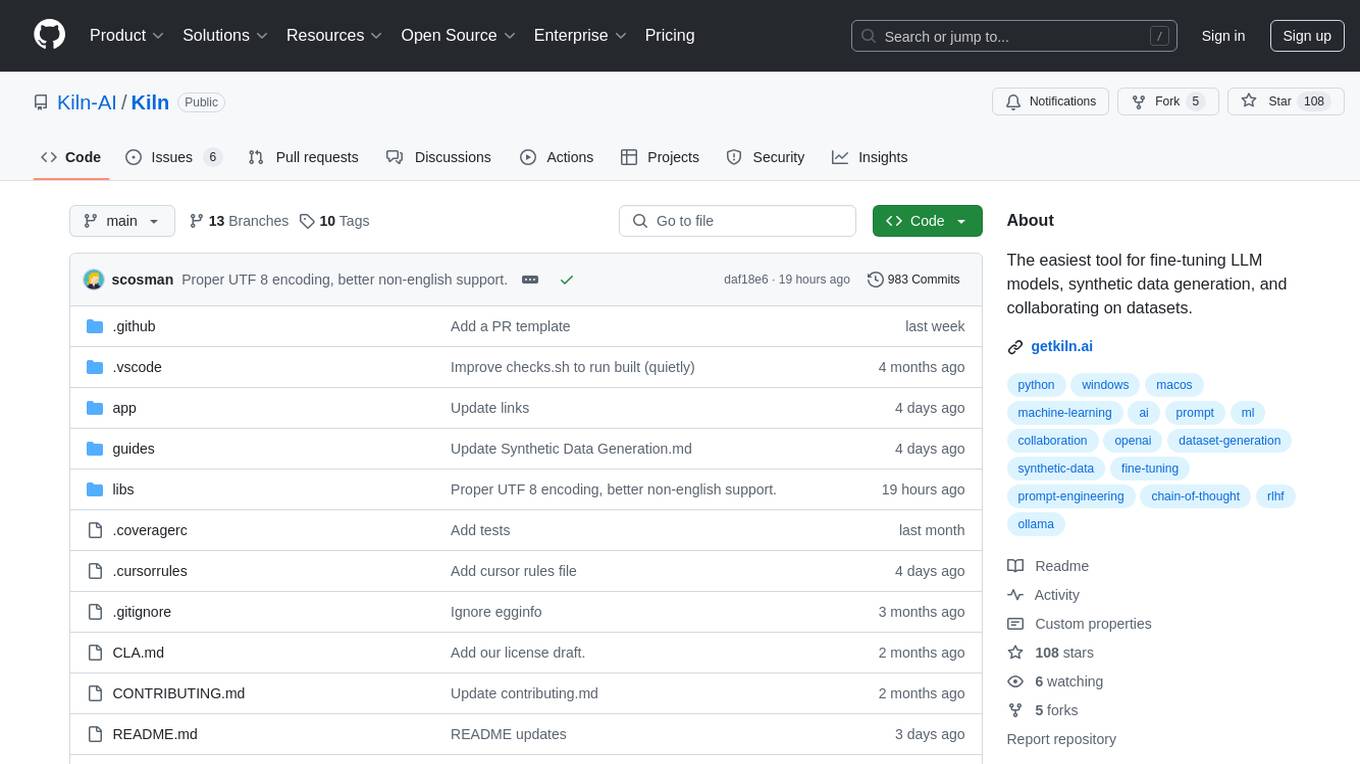

Kiln

Kiln is an AI tool for fine-tuning LLMs, generating synthetic data, and collaborating on datasets. It offers intuitive desktop apps, zero-code fine-tuning for a variety of models, interactive visual tools for data generation, Git-based version control for datasets, and the ability to generate prompts from data. Kiln supports a wide range of models and providers, provides an open-source library and API, prioritizes privacy, and supports structured data tasks in JSON format. The tool is free to use and focuses on rapid AI prototyping and dataset collaboration.

Lyzr AI

Lyzr AI is a full-stack agent framework for building GenAI applications faster. It offers AI agents for tasks such as chatbots, knowledge search, summarization, content generation, and data analysis. The platform provides features like memory management, human-in-the-loop interaction, toxicity control, reinforcement learning, and custom RAG prompts. Lyzr AI addresses data privacy by running agents locally on the customer's own cloud servers. Enterprises and developers can configure, deploy, and manage AI agents through Lyzr's platform.

Paragraph Generator AI

The Paragraph Generator AI is a free online tool that uses AI to help users write compelling, cohesive paragraphs for various writing projects. It offers an intuitive interface, multilingual support, targeted content creation, customizable tone settings, and word count control. The tool aims to streamline writing and boost productivity by generating high-quality content in seconds. Users benefit from its efficiency, creativity, and versatility, but should be aware of limitations such as its lack of human intuition and the need for manual editing.

LangWatch

LangWatch is a monitoring and analytics tool for Generative AI (GenAI) solutions. It evaluates the faithfulness and relevancy of GenAI responses in detail and pairs these evaluations with insights from user feedback. LangWatch lets technical and non-technical users collaborate and comment on improvements. With LangWatch, you can understand your users, detect issues, and improve your GenAI products.

Teneo.ai

Teneo.ai is an AI platform for contact center AI, customer service automation, and conversational IVR systems. Its TLML technology is claimed to raise AI accuracy to over 95% and to make customer interactions more efficient. Teneo aims to help contact centers cut costs, reduce call misrouting, and engage customers proactively with personalized care. The platform targets high-volume contact centers that want to improve interaction quality without added complexity, and it promises results within hours.

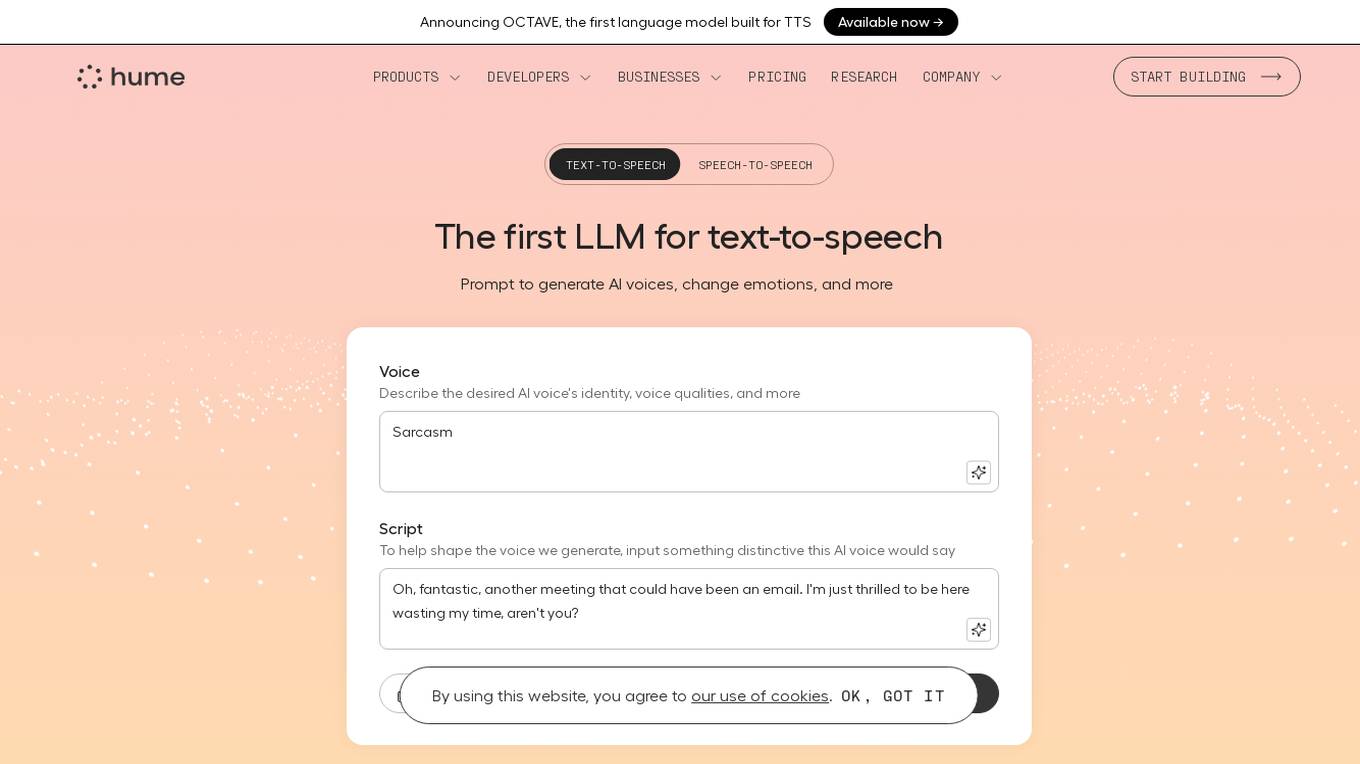

Hume AI - Octave

Hume AI is an AI application that offers the Octave language model for text-to-speech (TTS) capabilities. It provides a voice-based LLM that understands words in context to predict emotions, cadence, and more. Users can create various AI voices with specific prompts and scripts, adjusting emotional delivery and speaking styles on command. The application aims to generate expressive AI voices for podcasts, voiceovers, audiobooks, and more, with total control over the voice output.

AnythingLLM

AnythingLLM is an all-in-one AI application designed for everyone. It offers a comprehensive suite of tools for working with LLMs (large language models), documents, and agents in a fully private manner. AnythingLLM for Desktop is available for Windows, macOS, and Linux with one-click installation. The application supports custom model integration, including closed-source models like GPT-4, open models like Llama 2, and custom fine-tuned models. It handles many document formats beyond PDFs and defaults to running locally for privacy. Users can also access AnythingLLM Cloud for extended functionality.

Lamini

Lamini is an enterprise LLM platform that offers precise recall through Memory Tuning, which it says lets teams reach over 95% accuracy even with large volumes of specialized data. It guarantees JSON output and delivers high inference throughput. Lamini can be deployed anywhere, including air-gapped environments, and supports training and inference on NVIDIA or AMD GPUs. The platform is known for its factual LLMs and a re-engineered decoder that ensures 100% schema accuracy in the JSON output.

Storytell.ai

Storytell.ai is an AI-powered platform designed to amplify team productivity by providing business-grade intelligence across data. It offers features such as SmartChat™, tagging, and content structuring to enhance work, life, and play experiences. Trusted by users across various industries, Storytell.ai leverages AI technology to streamline tasks and decision-making processes, ultimately leading to increased efficiency and profitability.

Datasaur

Datasaur is an advanced text and audio data labeling platform that offers customizable solutions for various industries such as LegalTech, Healthcare, Financial, Media, e-Commerce, and Government. It provides features like configurable annotation, quality control automation, and workforce management to enhance the efficiency of NLP and LLM projects. Datasaur prioritizes data security with military-grade practices and offers seamless integrations with AWS and other technologies. The platform aims to streamline the data labeling process, allowing engineers to focus on creating high-quality models.

Storytell.ai

Storytell.ai is an enterprise-grade AI platform that offers Business-Grade Intelligence across data, focusing on boosting productivity for employees and teams. It provides a secure environment with features like creating project spaces, multi-LLM chat, task automation, chat with company data, and enterprise-AI security suite. Storytell.ai ensures data security through end-to-end encryption, data encryption at rest, provenance chain tracking, and AI firewall. It is committed to making AI safe and trustworthy by not training LLMs with user data and providing audit logs for accountability. The platform continuously monitors and updates security protocols to stay ahead of potential threats.

OpenClaw

OpenClaw is an open-source personal AI assistant and autonomous agent that runs on your local machine, giving you privacy and control over your data. Its features include managing emails, calendars, and flights from various chat apps. OpenClaw is designed to be proactive, autonomous, and highly customizable, and users interact with it through popular chat platforms. With its focus on privacy and local control, OpenClaw aims to offer a seamless AI experience that adapts to individual needs and preferences.

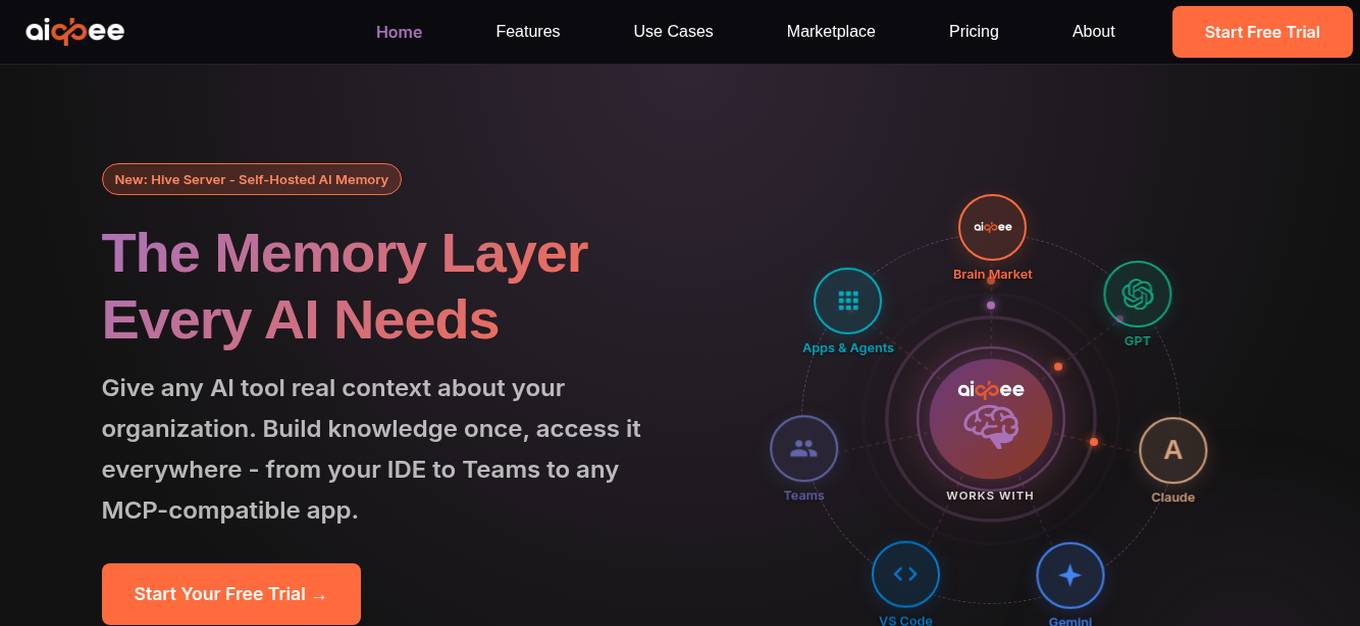

Aiqbee

Aiqbee is a Universal AI Memory Platform designed to provide enterprise knowledge for any AI tool or application. It allows users to centralize and curate organizational knowledge into AI-ready Brains, enabling seamless access across various platforms. With features like GraphRAG technology, Microsoft Teams integration, and MCP compatibility, Aiqbee aims to enhance AI understanding and usage within organizations. The platform offers control over AI usage, protection of sensitive data, and shared token pool economics. Aiqbee addresses the common challenge of insufficient context in enterprise AI projects by providing a vendor-agnostic solution for building, connecting, and utilizing AI knowledge effectively.

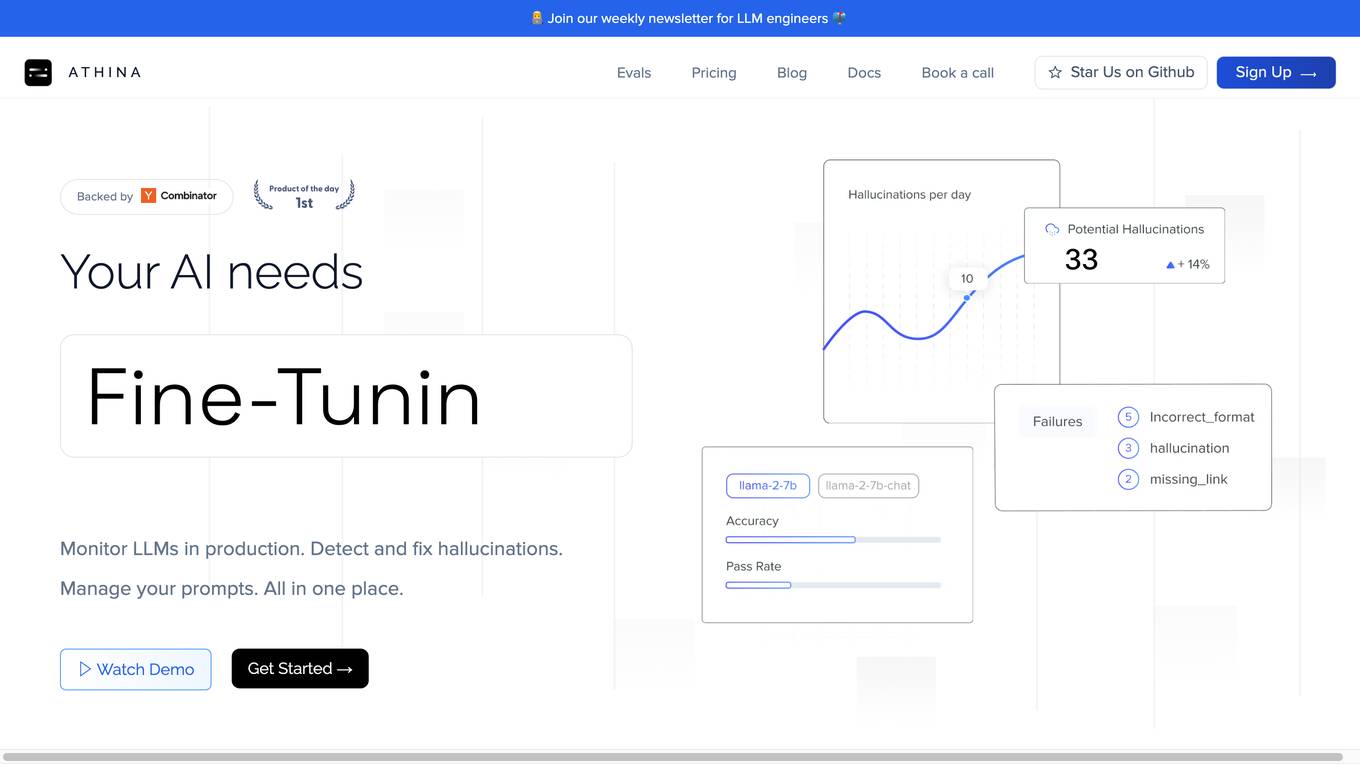

Athina AI

Athina AI is a comprehensive platform designed to monitor, debug, analyze, and improve the performance of Large Language Models (LLMs) in production environments. It provides a suite of tools and features that enable users to detect and fix hallucinations, evaluate output quality, analyze usage patterns, and optimize prompt management. Athina AI supports integration with various LLMs and offers a range of evaluation metrics, including context relevancy, harmfulness, summarization accuracy, and custom evaluations. It also provides a self-hosted solution for complete privacy and control, a GraphQL API for programmatic access to logs and evaluations, and support for multiple users and teams. Athina AI's mission is to empower organizations to harness the full potential of LLMs by ensuring their reliability, accuracy, and alignment with business objectives.

Portkey

Portkey is a control panel for production AI applications that offers an AI Gateway, Prompts, Guardrails, and Observability Suite. It enables teams to ship reliable, cost-efficient, and fast apps by providing tools for prompt engineering, enforcing reliable LLM behavior, integrating with major agent frameworks, and building AI agents with access to real-world tools. Portkey also offers seamless AI integrations for smarter decisions, with features like managed hosting, smart caching, and edge compute layers to optimize app performance.

LiteLLM

LiteLLM is a platform that simplifies model access, spend tracking, and fallbacks across 100+ LLMs. It provides a gateway to manage model access and offers features like logging, budget tracking, pass-through endpoints, and self-serve key management. LiteLLM is open-source and compatible with the OpenAI format, allowing users to access various LLMs seamlessly.
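The fallback behavior described for LiteLLM follows a common pattern: try models in priority order and move to the next one when a call fails. The sketch below is a generic illustration of that pattern, not LiteLLM's API; `flaky_model`, `backup_model`, and `complete_with_fallbacks` are hypothetical names, and the "models" are plain Python functions standing in for real endpoints.

```python
from typing import Callable, List, Sequence, Tuple

# Hypothetical stand-ins for real model endpoints (illustrative only).
def flaky_model(prompt: str) -> str:
    # Simulates an upstream failure such as a timeout or rate limit.
    raise TimeoutError("upstream timed out")

def backup_model(prompt: str) -> str:
    return f"backup answer to: {prompt}"

def complete_with_fallbacks(
    prompt: str,
    models: Sequence[Tuple[str, Callable[[str], str]]],
) -> Tuple[str, str]:
    """Try each (name, call) pair in order; return (name, output) of the first success."""
    errors: List[str] = []
    for name, call in models:
        try:
            return name, call(prompt)
        except Exception as exc:
            # Record the failure and fall through to the next model.
            errors.append(f"{name}: {exc!r}")
    raise RuntimeError("all models failed: " + "; ".join(errors))

used, text = complete_with_fallbacks(
    "hi", [("primary", flaky_model), ("backup", backup_model)]
)
print(used, "->", text)
```

A gateway adds the pieces this sketch leaves out, such as retries with backoff, per-model spend tracking, and logging, but the ordered try-and-fall-through loop is the core idea.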

CrewAI

CrewAI is a leading multi-agent platform that enables users to streamline workflows across industries with powerful AI agents. Users can build and deploy automated workflows using any LLM and cloud platform. The platform offers tools for building, deploying, monitoring, and improving AI agents, providing complete visibility and control over automation processes. CrewAI is trusted by industry leaders and used in over 60 countries, offering a comprehensive solution for multi-agent automation.

Labellerr

Labellerr is data labeling software that helps AI teams prepare high-quality labels 99 times faster for vision, NLP, and LLM models. The platform offers automated annotation, advanced analytics, and smart QA to process millions of images and thousands of hours of video in just a few weeks. Labellerr's analytics give full control over output quality and project management, making it a valuable tool for AI labeling partners.

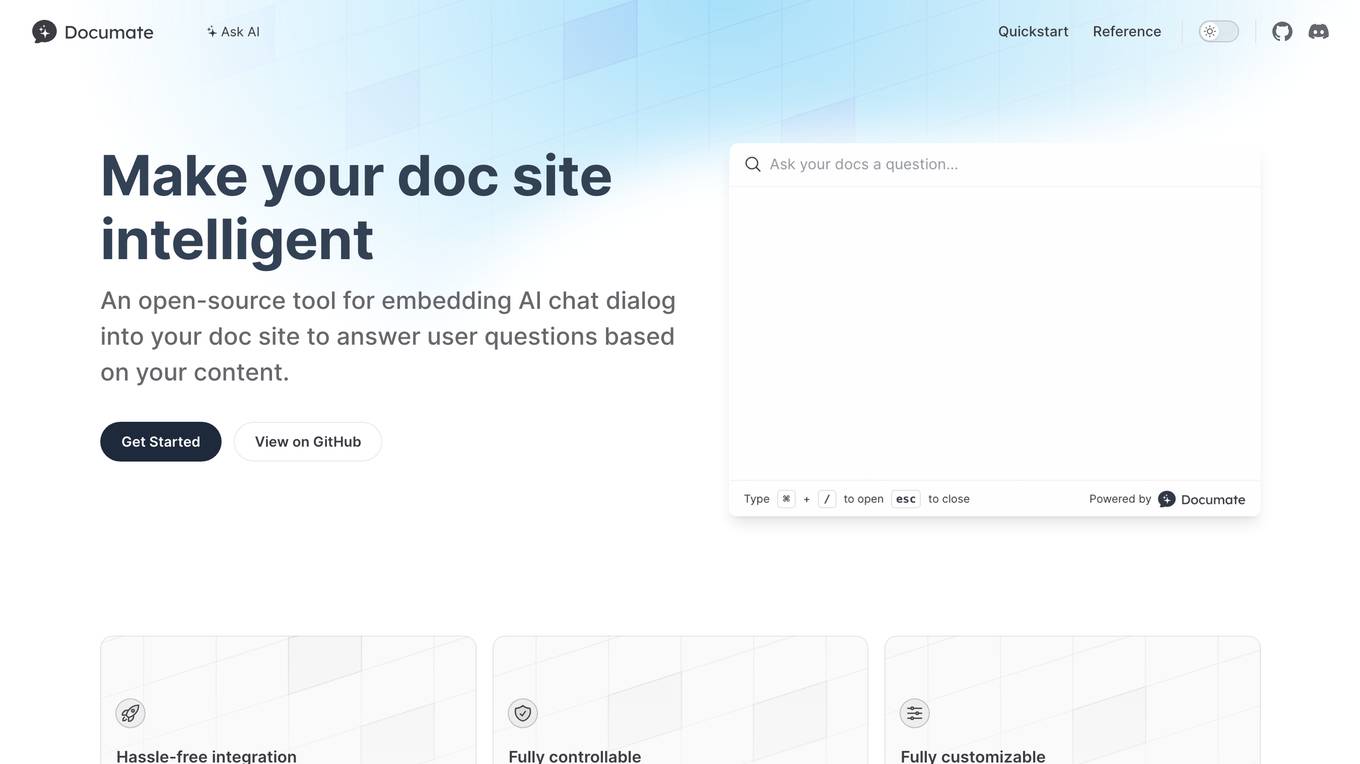

Documate

Documate is an open-source tool that makes your documentation site intelligent by embedding AI chat dialogues. It lets users ask questions about the site's content and receive relevant answers. The tool integrates easily with popular doc site platforms like VitePress, Docusaurus, and Docsify, without requiring any AI or LLM knowledge. Users have full control over the code and data, including which content to index. Documate also provides a customizable UI to meet specific needs. It is developed by AirCode.

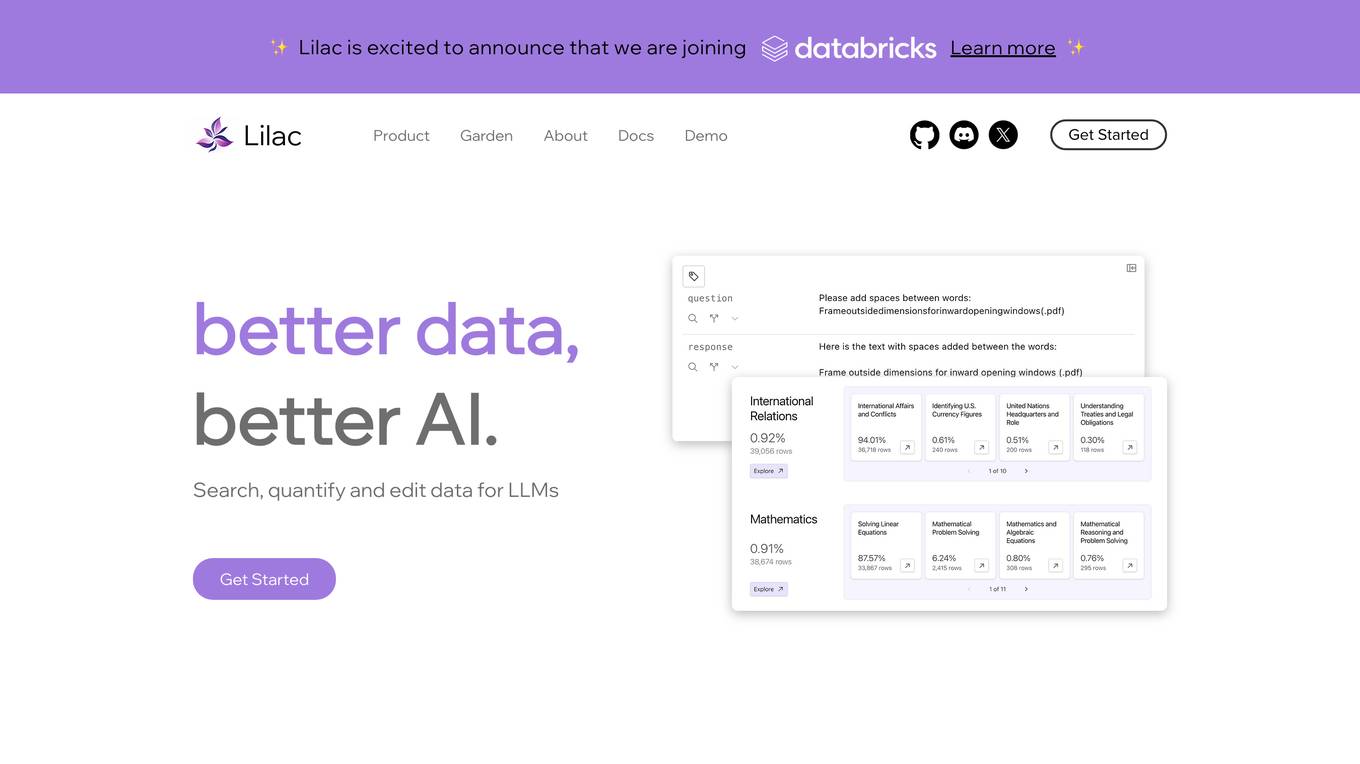

Lilac

Lilac is an AI tool designed to enhance data quality and exploration for AI applications. It offers features such as data search, quantification, editing, clustering, semantic search, field comparison, and fuzzy-concept search. Lilac enables users to accelerate dataset computations and transformations, making it a valuable asset for data scientists and AI practitioners. The tool is trusted by Alignment Lab and is recommended for working with LLM datasets.

1 - Open Source AI Tools

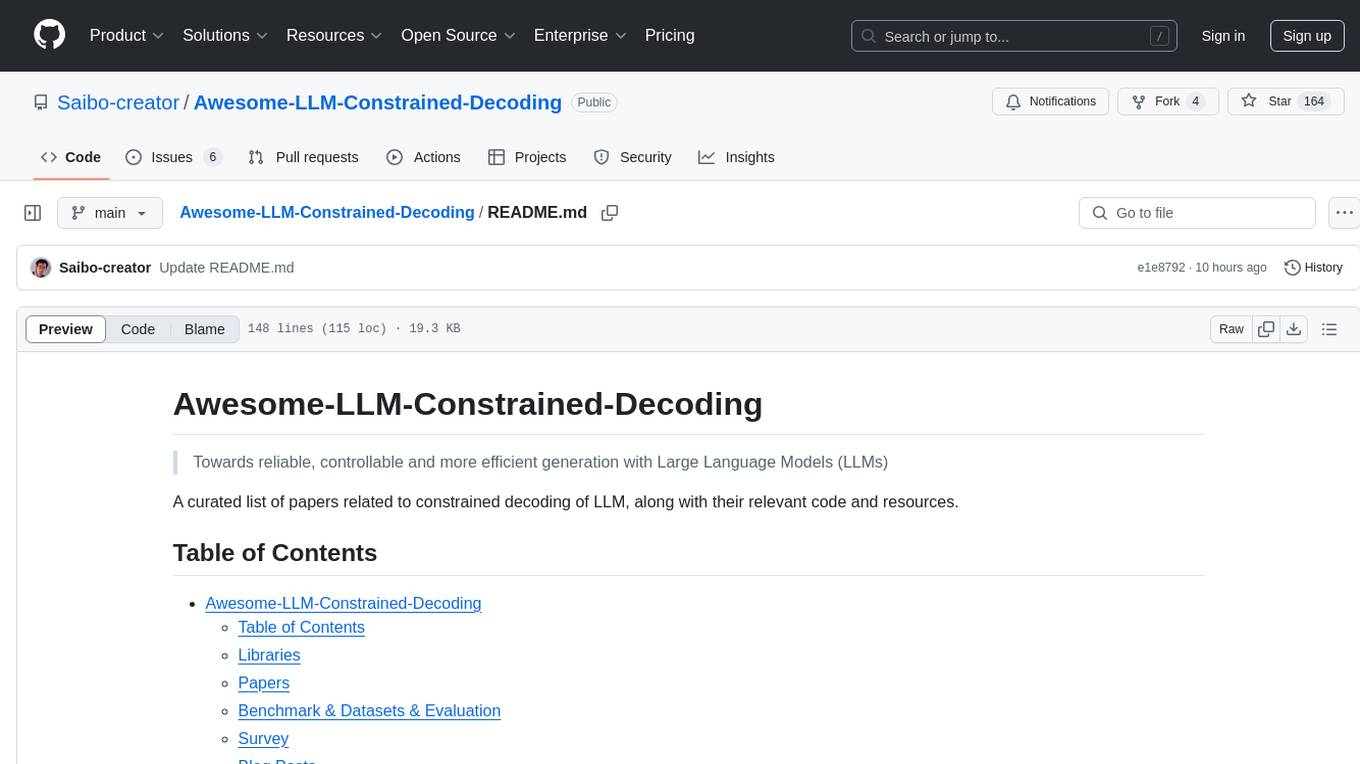

Awesome-LLM-Constrained-Decoding

Awesome-LLM-Constrained-Decoding is a curated list of papers, code, and resources related to constrained decoding of Large Language Models (LLMs). The repository aims to facilitate reliable, controllable, and efficient generation with LLMs by providing a comprehensive collection of materials in this domain.
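Constrained decoding, the topic this list collects, generally works by masking the model's next-token scores so that only tokens consistent with a constraint can be chosen. The sketch below assumes a hypothetical six-token vocabulary (`VOCAB`) and a stand-in scoring function (`toy_logits`) in place of a real model; the constraint is a fixed set of allowed token sequences, whereas practical systems usually compile a grammar, regex, or JSON schema into the same kind of per-step mask.

```python
import math
from typing import List, Sequence, Set

# Hypothetical toy vocabulary; real tokenizers have tens of thousands of entries.
VOCAB = ["yes", "no", "maybe", "Yes", "!", "<eos>"]

def toy_logits(prefix: Sequence[str]) -> List[float]:
    """Stand-in for an LLM forward pass (hypothetical): fixed scores that
    prefer the off-format token "Yes" when left unconstrained."""
    return [1.0, 0.5, 0.2, 3.0, 2.5, 0.1]

def allowed_tokens(prefix: Sequence[str], allowed_outputs: List[List[str]]) -> Set[str]:
    """Tokens that keep the generated sequence a prefix of some allowed output."""
    n = len(prefix)
    legal = {
        seq[n]
        for seq in allowed_outputs
        if len(seq) > n and list(seq[:n]) == list(prefix)
    }
    return legal & set(VOCAB)  # ignore tokens the vocabulary cannot produce

def constrained_greedy_decode(allowed_outputs: List[List[str]], max_steps: int = 10) -> List[str]:
    prefix: List[str] = []
    for _ in range(max_steps):
        legal = allowed_tokens(prefix, allowed_outputs)
        if not legal:
            break
        logits = toy_logits(prefix)
        # Mask: illegal tokens get -inf so greedy selection can never pick them.
        masked = [s if tok in legal else -math.inf for tok, s in zip(VOCAB, logits)]
        best = VOCAB[max(range(len(VOCAB)), key=lambda i: masked[i])]
        if best == "<eos>":
            break
        prefix.append(best)
    return prefix

print(constrained_greedy_decode([["yes", "<eos>"], ["no", "<eos>"]]))
```

Unconstrained greedy decoding would pick "Yes" here because it has the highest score; the mask forces the output into the allowed set, which is the same mechanism used to guarantee well-formed JSON.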

20 - OpenAI GPTs

🤖 SmartLink Integrator 🌎

Your AI bridge to the Internet of Things! Easily connect, control, and automate your smart devices with voice or text commands. 🏠💎

TrafficFlow

A specialized AI for optimizing traffic control, predicting bottlenecks, and improving road safety.

Sim-Low

Meal planner with 1) calorie control, 2) family/personal plans, 3) nutritional summaries, and 4) shopping lists.

Addiction Assistant

A mentor for those struggling with control over their substance use, offering guidance, resources, and support for sobriety. In case of relapse, it provides practical steps and resources, including web links, phone numbers, and emails.

Project Controlling Advisor

Provides financial oversight and project cost control support.

Hierarchical Topic Exploration

Explore any topic with an advanced hierarchical interactive mapping with streamlined control. Begin with !start [topic].

BITE Model Analyzer by Dr. Steven Hassan

Discover whether your group, relationship, or organization uses specific methods to recruit people and maintain control over them.

AcousticsAdvisor

An expert in acoustics, providing advice on sound management and noise control.

AI Powerplayed

Navigate the intricate world of corporate politics as Sam Alterman, a visionary tech leader ousted from his CEO role, and outmaneuver everyone to reclaim control of the leading AI company. This interactive game blends strategy, negotiation, and alliance-building in the high-stakes world of tech. Type Start to begin.