Best AI tools for Build Image

20 - AI Tool Sites

SentiSight.ai

SentiSight.ai is a machine learning platform for image recognition solutions, offering services such as object detection, image segmentation, image classification, image similarity search, image annotation, computer vision consulting, and intelligent automation consulting. Users can access pre-trained models, background removal, NSFW detection, text recognition, and image recognition API. The platform provides tools for image labeling, project management, and training tutorials for various image recognition models. SentiSight.ai aims to streamline the image annotation process, empower users to build and train their own models, and deploy them for online or offline use.

PhotoTravel AI

PhotoTravel AI is an innovative AI application that allows users to take photos of themselves at famous landmarks worldwide without physically traveling to those locations. Users can upload their images to build their AI model, which then generates realistic photos of them at iconic tourist spots. The application provides an affordable and convenient alternative to traditional travel, enabling users to create and share memories from the comfort of their homes.

Imagga

Imagga is a leading provider of image recognition solutions for developers and businesses. Its API empowers intelligent apps with customizable machine learning technology. Imagga's solutions include tagging, categorization, cropping, color extraction, visual search, facial recognition, custom training, and content moderation. These solutions are used by over 30K startups, developers, and students, and trusted by over 200 business customers in more than 82 countries worldwide.

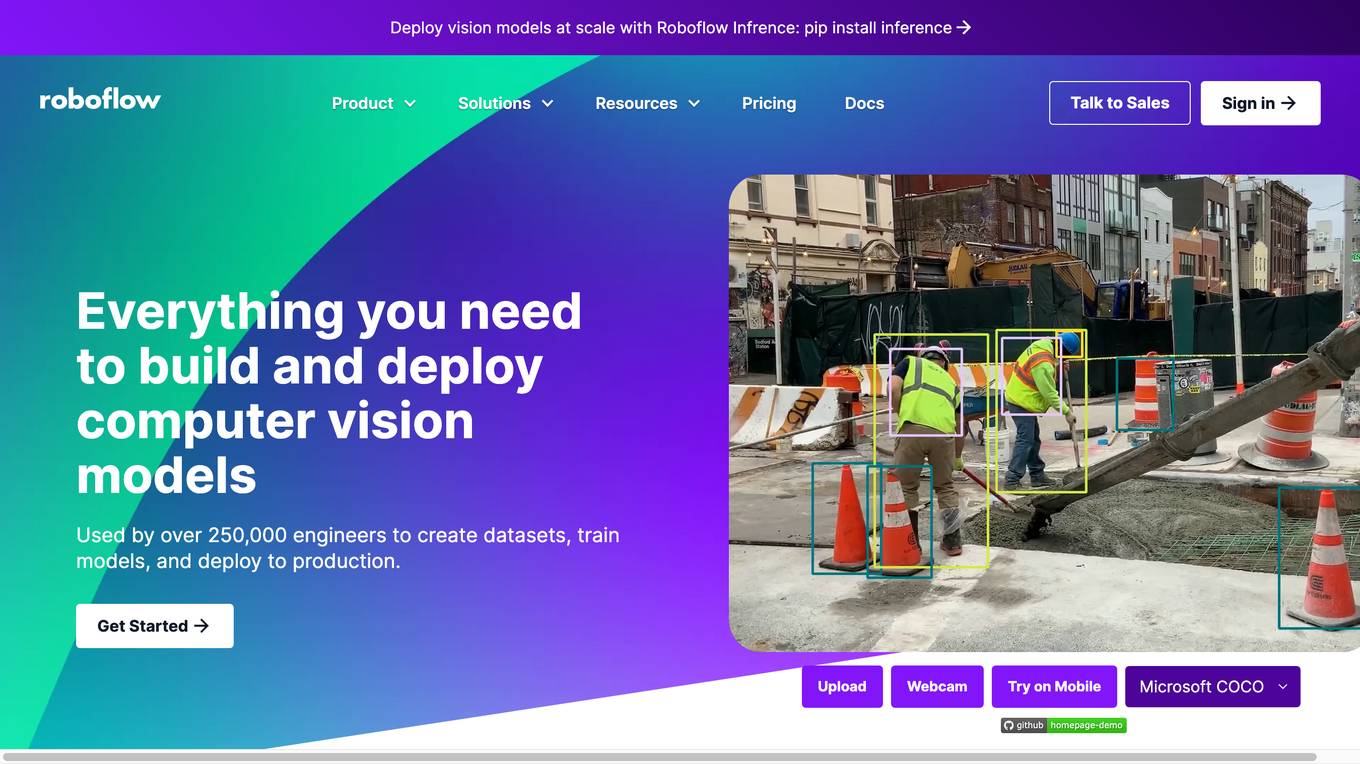

Roboflow

Roboflow is a platform that provides tools for building and deploying computer vision models. It offers a range of features, including data annotation, model training, and deployment. Roboflow is used by over 250,000 engineers to create datasets, train models, and deploy to production.

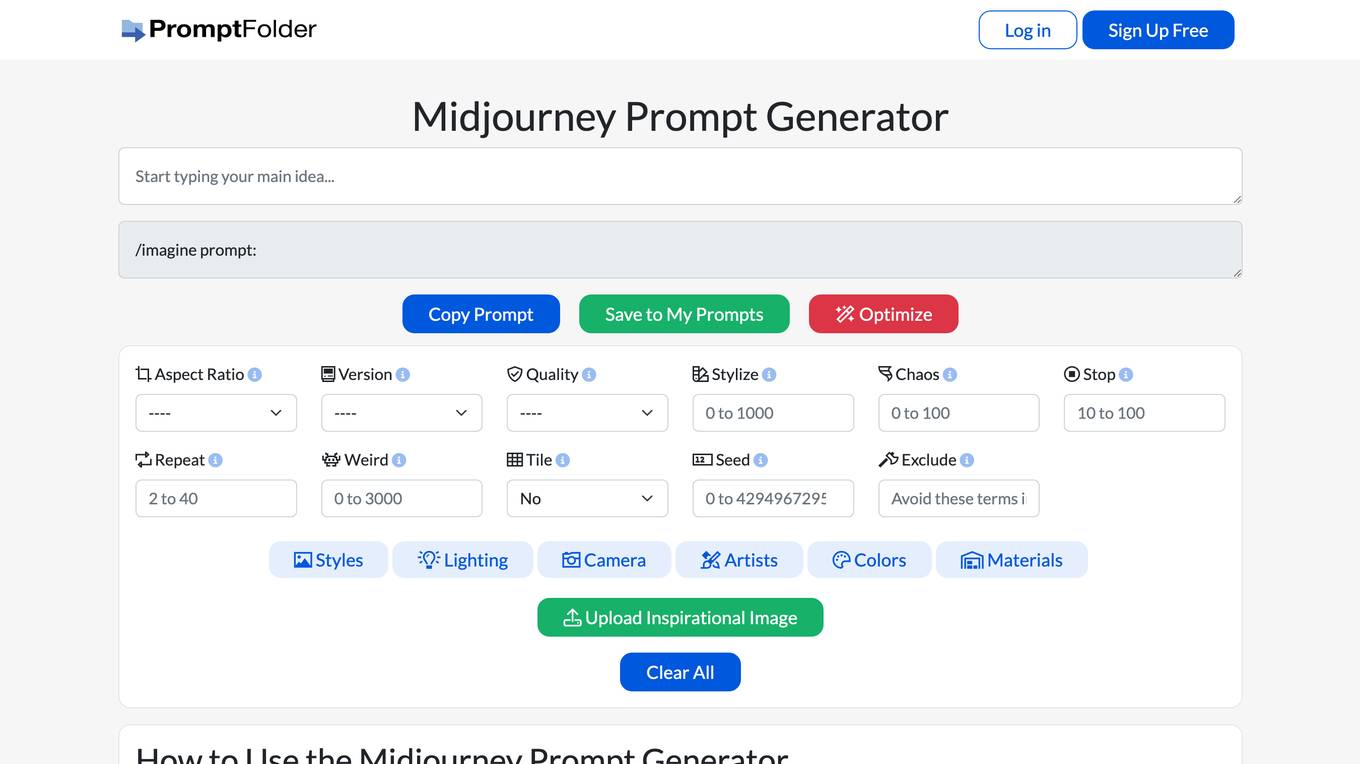

Midjourney Prompt Generator

Midjourney Prompt Generator is a tool that helps users create prompts for Midjourney, an AI-powered image generation bot. It provides a user-friendly interface for setting parameters, applying style presets, and weighting parts of the prompt. The tool also allows users to save and share their prompts. Midjourney Prompt Generator is a valuable resource for anyone who wants to get the most out of Midjourney.
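As a rough illustration of what such a generator assembles, the sketch below joins weighted prompt parts with Midjourney's `::` multi-prompt separator and appends an aspect-ratio parameter. The function name `build_prompt` and its arguments are hypothetical; this is not the tool's actual code, only a minimal sketch of the prompt format.

```python
from typing import Optional, Sequence, Tuple


def build_prompt(
    parts: Sequence[Tuple[str, float]],
    aspect_ratio: Optional[str] = None,
) -> str:
    """Join (text, weight) pairs into a weighted multi-prompt string.

    Hypothetical helper: each part becomes "text::weight", and an optional
    "--ar" parameter is appended at the end.
    """
    if not parts:
        raise ValueError("at least one prompt part is required")
    # ":g" drops trailing zeros, so 2.0 renders as "2" and 0.5 as "0.5".
    weighted = [f"{text.strip()}::{weight:g}" for text, weight in parts]
    prompt = " ".join(weighted)
    if aspect_ratio:
        prompt += f" --ar {aspect_ratio}"
    return prompt


example = build_prompt([("steampunk city", 2), ("fog", 0.5)], aspect_ratio="16:9")
print(example)
```

Keeping the parts as structured pairs until the last step makes it easy to save, share, or re-weight a prompt before turning it into a string.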

Create

Create is a free-to-use AI app builder that lets you code using plain text and images. With Create, you can design and build apps like a pro, without having to write a single line of code. Create is perfect for building internal tools, prototypes, and even full-fledged applications.

Toolmark.ai

Toolmark.ai is a no-code AI tool builder that allows users to create AI tools without any coding knowledge. With Toolmark.ai, users can build AI tools that generate text, images, voice, and more using GPT, Dall-E, Google Gemini, and other AI models. Toolmark.ai also offers a marketplace where creators can design and sell their AI tools.

CGDream

CGDream is an AI image generator that allows users to visualize their ideas by generating images from text prompts. It offers various features such as text-to-image, image-to-image, and 3D model-to-image generation. Users can also apply filters to enhance the quality and style of the generated images. The tool is particularly useful for creative professionals, designers, and anyone looking to explore their imagination and bring their ideas to life.

Clevis

Clevis is a platform that allows users to build and sell AI-powered applications without the need for coding. With a variety of pre-built processing steps, users can create apps with features like text generation, image generation, and web scraping. Clevis provides an easy-to-use editor, templates, sharing options, and API integration to help users launch their AI-powered apps quickly. The platform also offers customization options, data upload capabilities, and the ability to schedule app runs. Clevis offers a free trial period and flexible pricing plans to cater to different user needs.

PageAI

PageAI is an AI website builder aimed at professionals who want production-ready websites in minutes. Instead of manual coding, it uses AI agents to design, code, and customize websites from user descriptions. Professional templates, customization options, and one-click deployment shorten the website building process and save developers time. The platform emphasizes SEO optimization, clean code, and a user-friendly experience, and it covers website development from design through deployment.

Sink In

Sink In is a cloud-based platform that provides access to Stable Diffusion AI image generation models. It offers a variety of models to choose from, including majicMIX realistic, MeinaHentai, AbsoluteReality, DreamShaper, and more. Users generate images by entering a text prompt and selecting the desired model. Sink In charges $0.0015 for each 512x512 image generated, and it reports 99.9% reliability over the last 30 days.
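To put the per-image price in perspective, here is a small worked estimate based only on the $0.0015 figure quoted for 512x512 images. The function name is hypothetical, and pricing for other resolutions is not stated here, so the sketch does not attempt to cover it.

```python
from decimal import Decimal

# Price quoted in the listing for one 512x512 image, in US dollars.
PRICE_PER_512_IMAGE = Decimal("0.0015")


def estimate_cost(num_images: int) -> Decimal:
    """Return the estimated cost in dollars for num_images 512x512 images."""
    if num_images < 0:
        raise ValueError("num_images must be non-negative")
    # Decimal avoids the rounding noise that binary floats add to money math.
    return PRICE_PER_512_IMAGE * num_images


for count in (1, 1_000, 100_000):
    print(f"{count} images: ${estimate_cost(count)}")
```

At this rate, 1,000 images come to $1.50 and 100,000 images to $150.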

Bibit AI

Bibit AI is a real estate marketing AI designed to make real estate marketing and sales more efficient and effective. It helps create listings, descriptions, and other property content, alongside a range of additional features. Bibit AI bills itself as the world's first AI for real estate, with the stated goal of transforming the industry by boosting efficiency and simplifying tasks such as listing creation and content generation.

Build Club

Build Club is an AI tool designed to help individuals learn and explore various aspects of artificial intelligence. The platform offers a wide range of courses, challenges, hackathons, and community projects to enhance users' AI skills. Users can build AI models for tasks like image and video generation, AI marketing, and creating AI agents. Build Club aims to create a collaborative learning environment for AI enthusiasts to grow their knowledge and skills in the field of artificial intelligence.

SitesGPT

SitesGPT is a premier AI Website Builder that leverages Artificial Intelligence (AI) technology to revolutionize website creation. It offers a user-friendly platform where individuals and businesses can effortlessly build dynamic, responsive websites with just a few clicks. With features like mobile optimization, unparalleled flexibility, zero cost to start, robust cloud infrastructure, and round-the-clock operation, SitesGPT stands out as a cost-effective and efficient solution for website development. The fusion of AI and website building not only enhances speed and efficiency but also ensures scalability and customization, making professional website creation accessible to a broader audience.

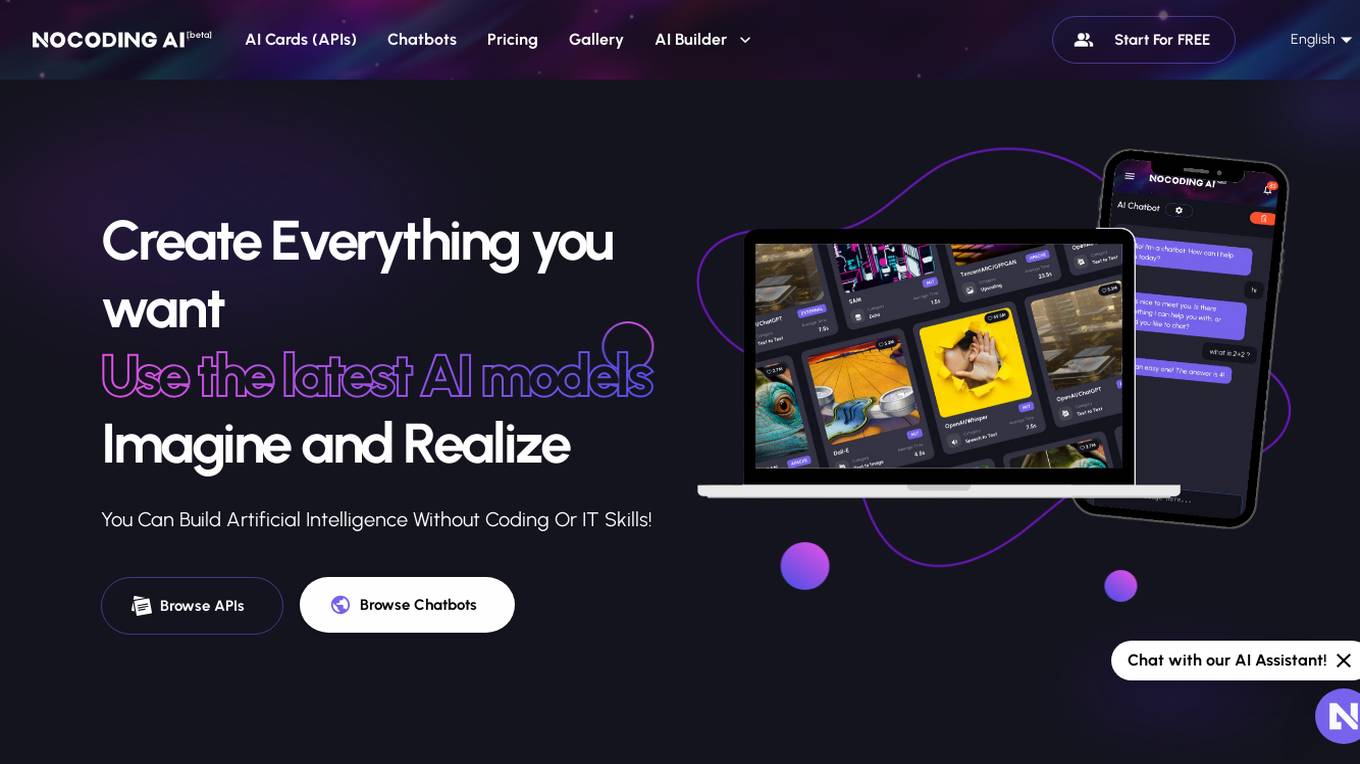

NOCODING AI

NOCODING AI is an innovative AI tool that allows users to create advanced applications without the need for coding skills. The platform offers a user-friendly interface with drag-and-drop functionality, making it easy for individuals and businesses to develop custom solutions. With NOCODING AI, users can build chatbots, automate workflows, analyze data, and more, all without writing a single line of code. The tool leverages machine learning algorithms to streamline the development process and empower users to bring their ideas to life quickly and efficiently.

Z.ai

Z.ai is a free AI chatbot and agent powered by GLM-5 and GLM-4.7. It provides users with an interactive platform to engage with AI technology for various purposes. The chatbot is designed to assist users in answering questions, providing information, and offering personalized recommendations. With advanced algorithms and machine learning capabilities, Z.ai aims to enhance user experience and streamline communication processes.

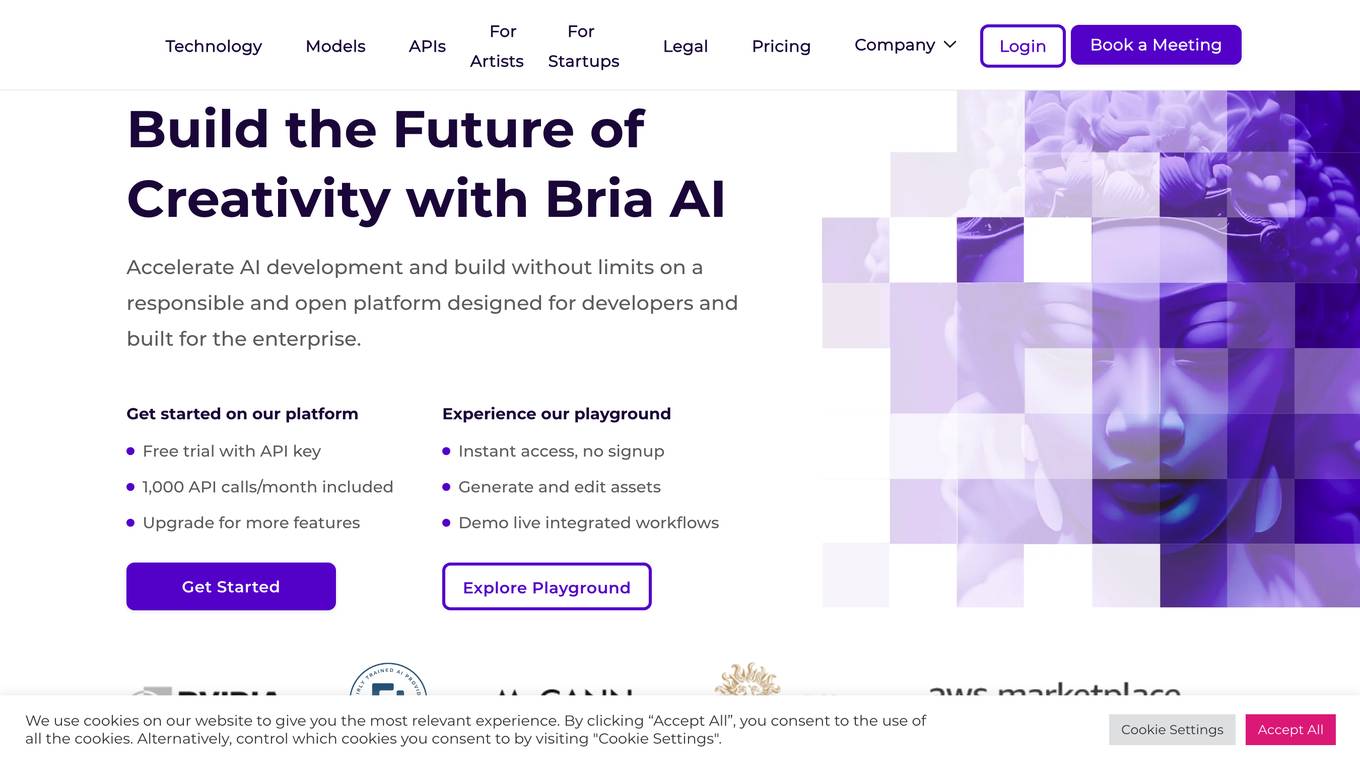

BRIA.ai

BRIA.ai is a visual generative AI platform that provides developers and businesses with the tools they need to build and deploy AI-powered applications. The platform includes a suite of pre-trained foundation models, APIs, and tools that can be used to generate and modify images, videos, and other visual content. BRIA.ai is committed to responsible AI practices and ensures that all of its models are trained on licensed and safe-to-use data.

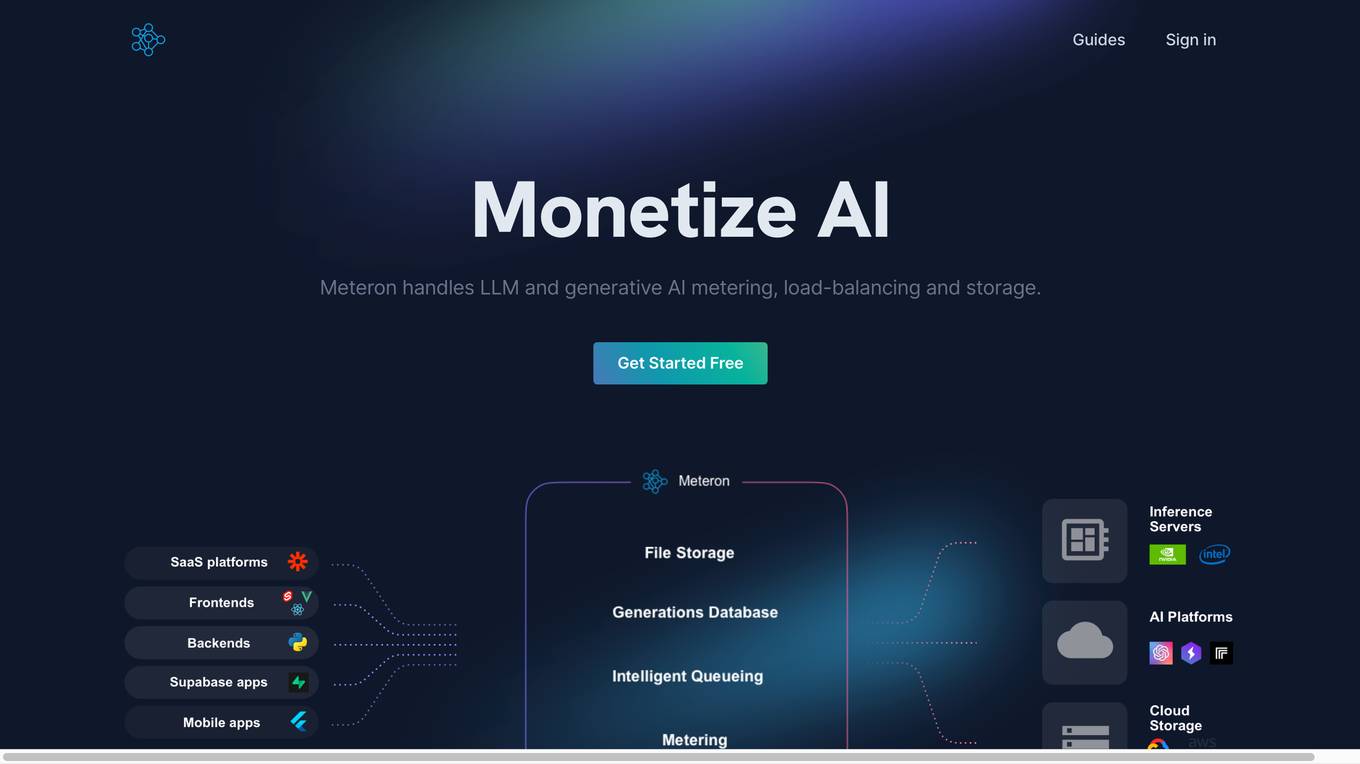

Meteron AI

Meteron AI is an all-in-one AI toolset that helps developers build AI-powered products faster and easier. It provides a simple, yet powerful metering mechanism, elastic scaling, unlimited storage, and works with any model. With Meteron, developers can focus on building AI products instead of worrying about the underlying infrastructure.

Gradio

Gradio is a tool that allows users to quickly and easily create web-based interfaces for their machine learning models. With Gradio, users can share their models with others, allowing them to interact with and use the models remotely. Gradio is easy to use and can be integrated with any Python library. It can be used to create a variety of different types of interfaces, including those for image classification, natural language processing, and time series analysis.
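A minimal sketch of that workflow might look like the following. The `toy_sentiment` function is a made-up stand-in for a real model, and `build_demo` is a hypothetical helper name. The `gradio` import sits inside the helper so that the scoring function can be tried without the package installed.

```python
from typing import Dict

# Tiny word list standing in for a trained model (illustrative only).
POSITIVE_WORDS = {"good", "great", "love"}


def toy_sentiment(text: str) -> Dict[str, float]:
    """Return label confidences based on the share of positive words."""
    words = text.lower().split()
    hits = sum(word in POSITIVE_WORDS for word in words)
    score = hits / len(words) if words else 0.0
    return {"positive": score, "negative": 1.0 - score}


def build_demo():
    """Wrap toy_sentiment in a web interface (requires `pip install gradio`)."""
    import gradio as gr  # third-party; imported lazily on purpose

    # "text" and "label" are Gradio's shortcut names for the Textbox input
    # and the Label output, which displays a dict of label -> confidence.
    return gr.Interface(fn=toy_sentiment, inputs="text", outputs="label")
```

Calling `build_demo().launch()` starts a local web server for the interface. Passing `share=True` to `launch()` requests a temporary public link so others can try the model remotely.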

Helix AI

Helix AI is a private GenAI platform that enables users to build AI applications using open source models. The platform offers tools for RAG (Retrieval-Augmented Generation) and fine-tuning, allowing deployment on-premises or in a Virtual Private Cloud (VPC). Users can access curated models, utilize Helix API tools to connect internal and external APIs, embed Helix Assistants into websites/apps for chatbot functionality, write AI application logic in natural language, and benefit from the innovative RAG system for Q&A generation. Additionally, users can fine-tune models for domain-specific needs and deploy securely on Kubernetes or Docker in any cloud environment. Helix Cloud offers free and premium tiers with GPU priority, catering to individuals, students, educators, and companies of varying sizes.

1 - Open Source AI Tools

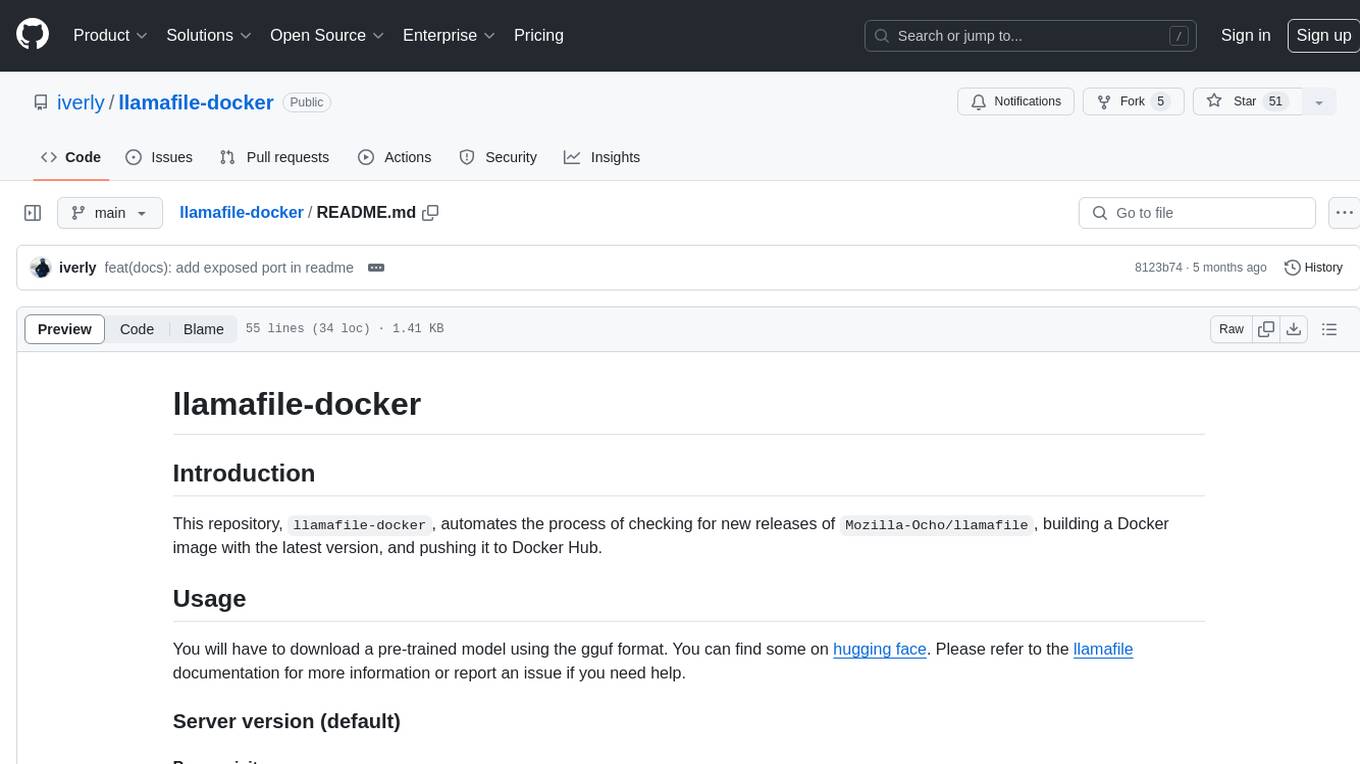

llamafile-docker

This repository, llamafile-docker, automates checking for new releases of Mozilla-Ocho/llamafile, building a Docker image with the latest version, and pushing it to Docker Hub. Users can download a pre-trained model in GGUF format and use the Docker image to interact with the model through either the server or the CLI version. Contributions are welcome under the Apache 2.0 license.
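Once the server version is running, a client could talk to it roughly as sketched below. This assumes the container's port is published on `localhost:8080` and that the server exposes an OpenAI-style `/v1/chat/completions` endpoint, as the llamafile server does. The base URL, the `"local"` model name, and both function names are assumptions for illustration, not taken from this repository.

```python
import json
import urllib.request

# Assumption: the container's server port is mapped to localhost:8080.
BASE_URL = "http://localhost:8080"


def build_chat_request(prompt: str, base_url: str = BASE_URL) -> urllib.request.Request:
    """Build a POST request carrying an OpenAI-style chat completion payload."""
    payload = {
        "model": "local",  # placeholder name; the server runs the loaded GGUF model
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the prompt to the running server and return the reply text."""
    with urllib.request.urlopen(build_chat_request(prompt)) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

With the container running, `print(ask("Say hello in one sentence."))` would print the model's reply. Building the request separately from sending it lets the payload be inspected without a live server.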

20 - OpenAI GPTs

Build a Brand

Unique custom images based on your input. Just type ideas and the brand image is created.

psy_self

Welcome to 'SelfEsteemSculptor' – your digital guide for enhancing self-esteem. Discover strategies to build your confidence, overcome self-doubt, and cultivate a strong, positive self-image.

Abundance

A guide for self-sufficiency and nature awareness, with internet search and image generation.

There's An API For That - The #1 API Finder

The most advanced API finder, available for over 2000 manually curated tasks. Chat with me to find the best AI tools for any use case.

SteamMaster: Inventor of Ages

Enter a richly detailed steampunk universe in 'SteamMaster: Inventor of Ages'. As an inventor, design and build imaginative steam-powered devices, navigate through a world of Victorian elegance mixed with futuristic technology, and invent solutions to challenges. Another AI Game by Dave Lalande.