Best AI Tools to Build Backends

AI Tool Sites (20)

Amplication

Amplication is an AI-powered platform for .NET and Node.js app development, which it promotes as the fastest way to build backend services. It provides customizable, production-ready backend services without vendor lock-in. Users can define data models, extend and customize the platform with plugins, generate boilerplate code, and modify the generated code freely. The platform supports role-based access control, microservices architecture, continuous Git sync, and automated deployment. Amplication is SOC 2 certified, which addresses data security and compliance requirements.

ZeroCactus

ZeroCactus is an AI-assisted no-code platform that lets users build complex backends by describing them in plain English. It is positioned as a performance-focused development platform for businesses: users start with the no-code experience to build an MVP and can move to code as the business grows, keeping control over their solution throughout. ZeroCactus combines AI with personalized support from expert developers, who can customize solutions when users need more than the no-code tools provide. The company presents it as suitable for many kinds of businesses and as offering broad creative freedom.

Goptimise

Goptimise is a no-code, AI-powered backend builder that helps developers create scalable backend solutions. It runs on dedicated infrastructure with built-in security and scalability. Goptimise simplifies software rollouts with one-click deployment, and its AI-driven smart API suggestions offer recommendations for API design tailored to each project. The platform has a visual interface, straightforward integrations, and customizable workspaces for dynamic data management and a personalized development experience.

Koxy AI

Koxy AI is an AI-powered serverless backend platform that lets users build globally distributed, fast, secure, and scalable backends without writing code. Its features include live logs, smart error handling, and integration with over 80,000 AI models. Koxy AI is designed to let users focus on building their service rather than on security and latency concerns. It provides a NoSQL JSON-based database, real-time data synchronization, cloud functions, and a drag-and-drop builder for API flows.

Rowy

Rowy is a low-code backend platform that allows users to manage their database on a spreadsheet-like interface and build powerful backend cloud functions without leaving their browser. It offers a variety of features such as derivative fields, action fields, extensions, webhooks, and integrations with popular tools like Google Vision, GPT-3, Figma, and Webflow. Rowy is designed to be accessible to both developers and non-technical users, making it a versatile tool for building and managing backend applications.

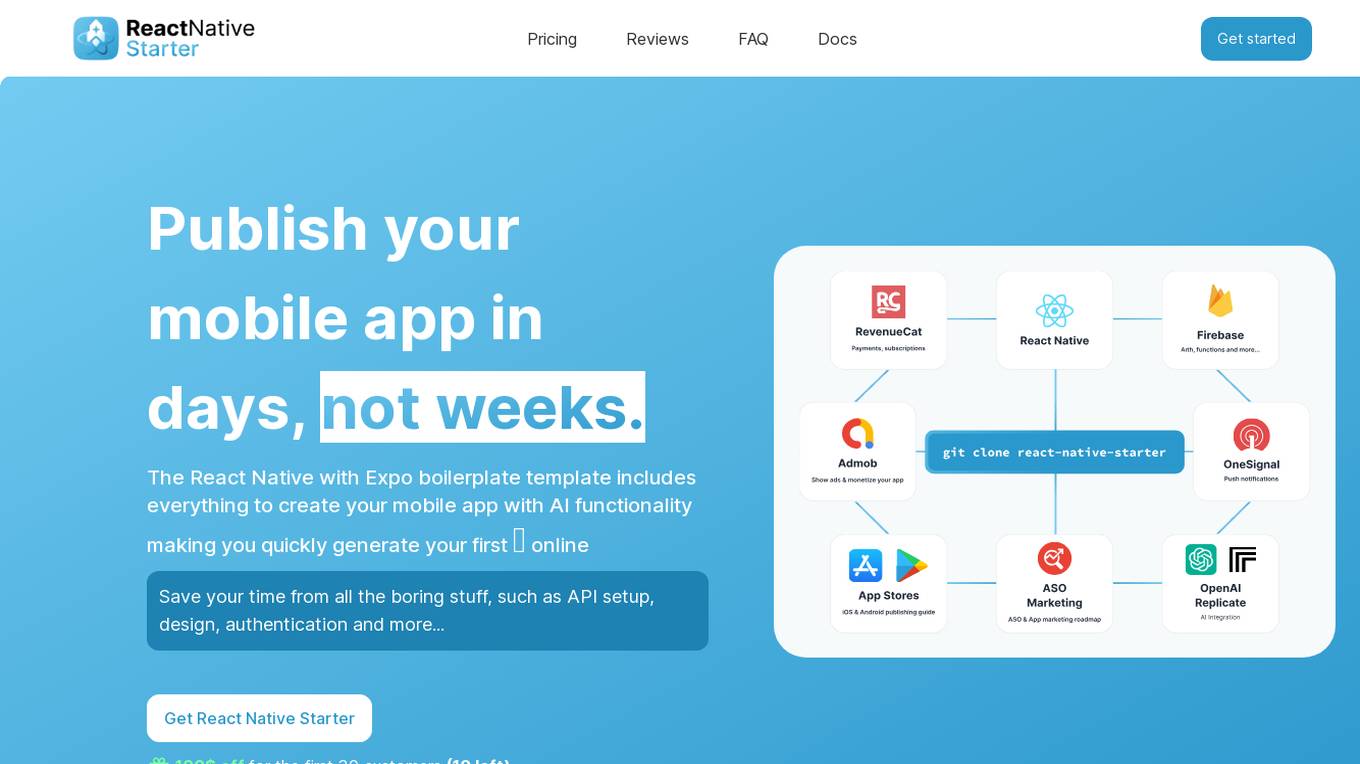

React Native Starter AI

React Native Starter AI is an all-in-one development kit designed to help users quickly launch mobile apps with AI functionality. The boilerplate template includes integrations for AI tools, Firebase functions, analytics, authentication, in-app purchases, and more. It aims to save developers time by providing pre-built components and screens for AI mobile applications. Users can customize their apps and publish them on mobile app stores, and the kit is aimed at both beginner and experienced developers.

Backend.AI

Backend.AI is an enterprise-scale cluster backend for AI frameworks that offers scalability, GPU virtualization, HPC optimization, and DGX-Ready software products. It provides a fast and efficient way to build, train, and serve AI models of any type and size, with flexible infrastructure options. Backend.AI aims to optimize backend resources, reduce costs, and simplify deployment for AI developers and researchers. The platform integrates with existing tools and offers fractional GPU usage and a pay-as-you-play model to maximize resource utilization.

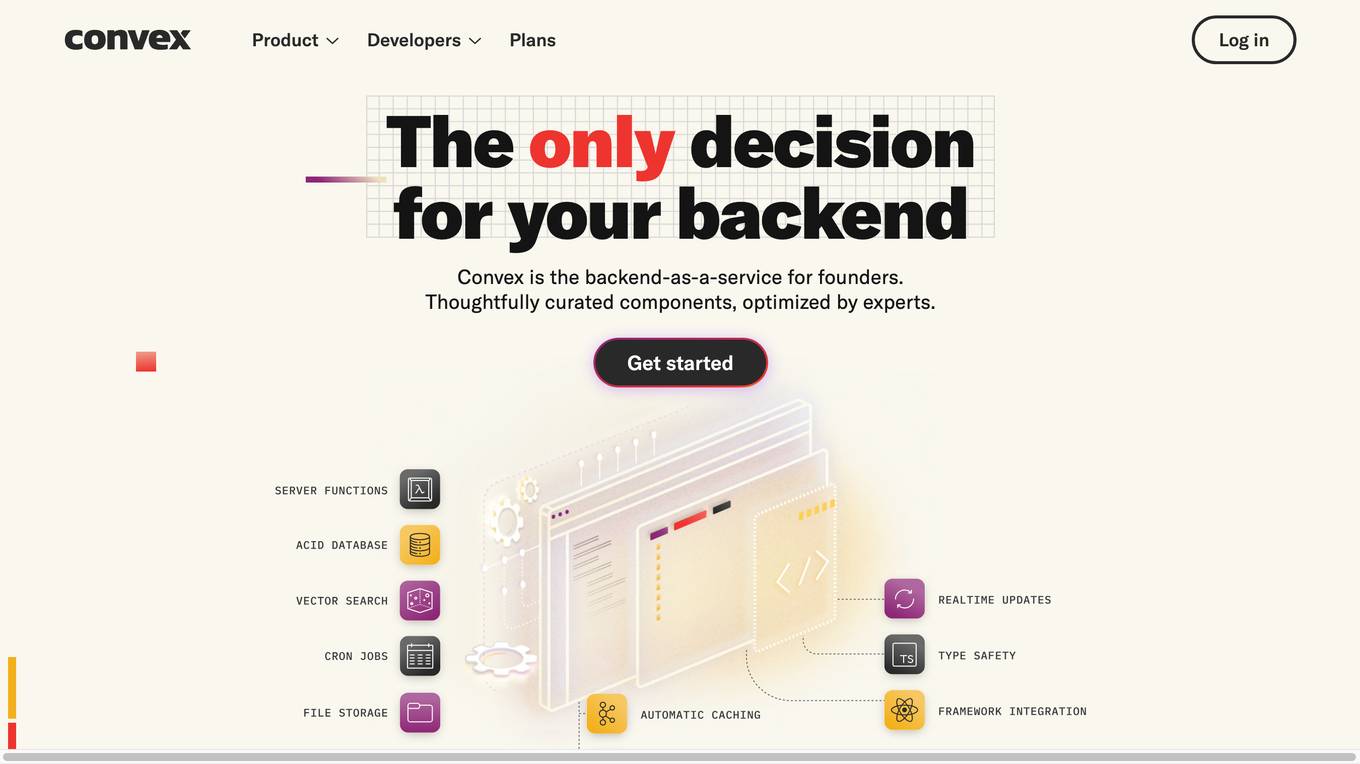

Convex

Convex is a full-stack TypeScript development platform that serves as an open-source backend for application builders. It offers APIs and tools to build, launch, and scale applications, with features such as real-time collaboration, optimized transactions, and over 80 OAuth integrations. Developers write backend logic in TypeScript, perform database operations with strong consistency, and integrate with third-party services. Convex emphasizes reliability, simplicity, and developer experience.

BuildShip

BuildShip is a low-code visual backend builder that lets users create APIs in minutes. It is powered by AI and offers features such as pre-built nodes, multimodal flows, and integration with popular AI models. BuildShip targets a wide range of users, from beginners to experienced developers, and supports teams collaborating on backend development projects.

Lovable

Lovable is an AI-powered application that lets users describe their software ideas in natural language and automatically turns them into functional, well-designed applications. It enables users to build software without writing code, aiming to make software creation more accessible and faster than traditional coding. Its features include live rendering, instant undo, built-in design principles, and GitHub integration, and it is aimed at product builders, developers, and designers.

DocDriven

DocDriven is an AI-powered, documentation-driven API development tool that provides a shared workspace for the API development process. It supports designing APIs, collaborating on API changes in real time, exploring all APIs in one workspace, AI code generation, and maintaining API documentation. DocDriven aims to streamline communication and coordination among backend developers, frontend developers, UI designers, and product managers to improve the quality of API design and development.

AgentLabs

AgentLabs is a frontend-as-a-service platform that allows developers to build and share AI-powered chat-based applications in minutes, without any front-end experience. It provides a range of features such as real-time and asynchronous communication, background task management, backend agnosticism, and support for Markdown, files, and more.

Pythagora

Pythagora describes itself as the world's first all-in-one AI development platform for building web applications securely. It combines frontend, backend, debugging, and deployment features in a single platform, enabling users to create apps without heavy coding. Pythagora is powered by specialized AI agents and language models from OpenAI and Anthropic, providing tools for planning, writing, testing, and deploying full-stack web apps. The platform offers enterprise-grade security, role-based authentication, and transparent control over projects.

Reachat

Reachat is an open-source UI library for building chat interfaces in ReactJS. It offers highly customizable components and theming options, rich media support for file uploads and Markdown formatting, an API for building custom chat experiences, and the ability to switch between different AI models. Reachat is used in production across various enterprise products, and it lets developers add conversational AI to their applications without spending weeks building custom components. It is not tied to any specific backend or LLM, so it can be used with whichever backend or LLM the developer chooses.

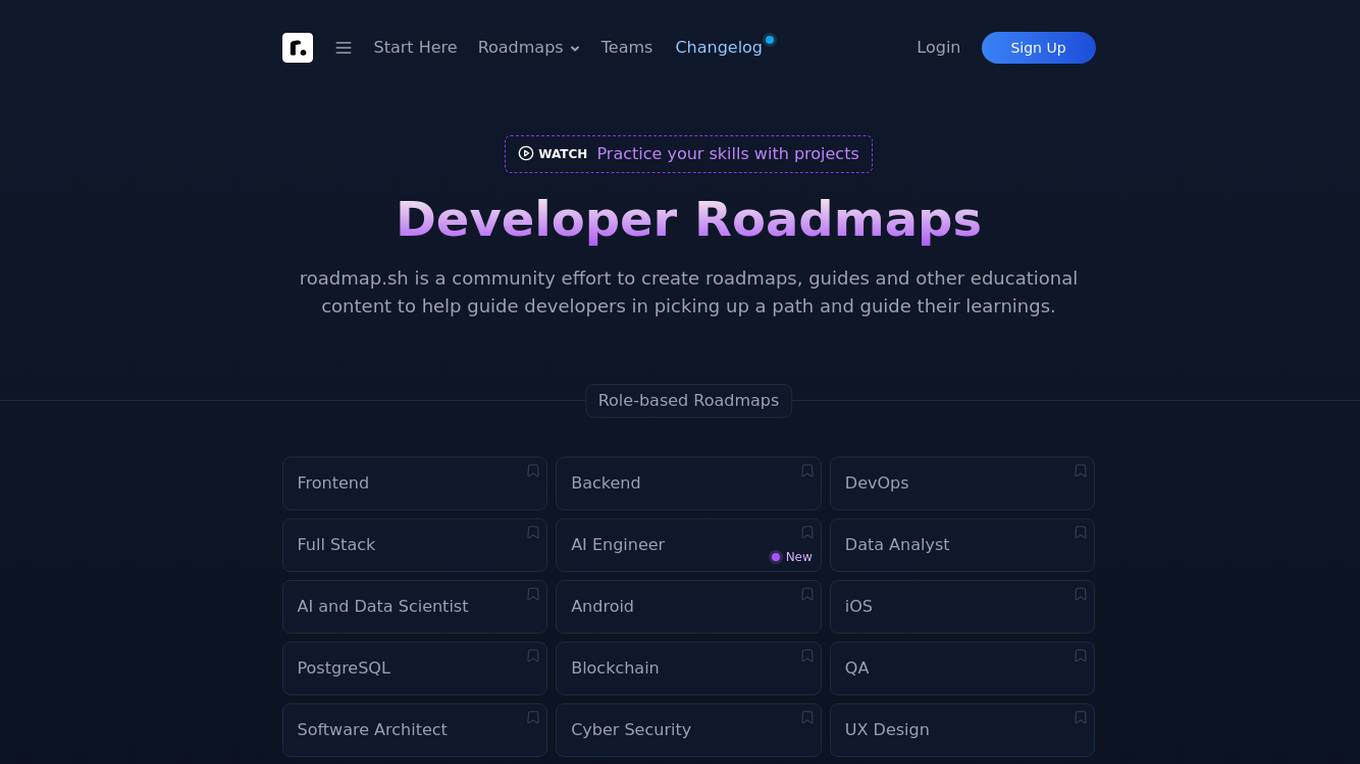

Developer Roadmaps

Developer Roadmaps (roadmap.sh) is a community-driven platform offering official roadmaps, guides, projects, best practices, questions, and videos to assist developers in skill development and career growth. It provides role-based and skill-based roadmaps covering various technologies and domains. The platform is actively maintained and continuously updated to enhance the learning experience for developers worldwide.

Works

Works is a platform that connects enterprises with what it describes as the top 1% of remote tech talent. It uses AI to match talent to project requirements, saving time and resources. Works offers transparent pricing with a flat 10% transaction fee and risk-free hiring, with payment only when the work is completed to the client's satisfaction.

DocuHelp

DocuHelp is an AI-powered platform that enables businesses to effortlessly create professional-grade documents, reports, proposals, and sales pitches in a matter of minutes. It facilitates real-time collaboration among team members, eliminating the need for email chains and ensuring accuracy and efficiency. With industry-focused backend prompts, access to backend systems, and the ability to train models on company-specific data, DocuHelp offers a tailored solution for businesses seeking to enhance their document creation process.

Oncora Medical

Oncora Medical is a healthcare technology company that provides software and data solutions to oncologists and cancer centers. Its products are designed to improve patient care, reduce clinician burnout, and accelerate clinical discoveries. Oncora's flagship product, Oncora Patient Care, is a modern, intelligent user interface for oncologists that simplifies workflow, reduces documentation burden, and optimizes treatment decision making. Oncora Analytics is an adaptive visual and backend software platform for regulatory-grade real-world data analytics. Oncora Registry is a platform to capture and report quality data, treatment data, and outcomes data in oncology.

Treblle

Treblle is an end-to-end APIOps platform that helps engineering and product teams build, ship, and understand their REST APIs in one place. It offers API observability, documentation, governance, security, and analytics. Aimed at both API producers and consumers, Treblle provides real-time actionable data, customizable dashboards, and automated API development. The platform aims to shorten API release times, improve developer experience, and ensure API quality and security.

Dify

Dify is an open-source platform for building AI applications that combines Backend-as-a-Service and LLMOps to streamline the development of generative AI solutions. It integrates support for mainstream LLMs, a prompt orchestration interface, RAG engines, a flexible AI agent framework, and easy-to-use interfaces and APIs. Dify lets users avoid much of the underlying complexity and focus on building AI applications that solve real-world problems, offering a production-ready solution with a user-friendly interface.


OpenAI GPTs (20)

Principal Backend Engineer

Expert Backend Developer: Skilled in Python, Java, Node.js, Ruby, PHP for robust backend solutions.


[latest] FastAPI GPT

Up-to-date FastAPI coding assistant with knowledge of the latest version. Part of the [latest] GPTs family.

FastAPIHTMX

Assists with `fastapi-htmx` package queries, using specific documentation for accurate solutions.

Serverless Architect Pro

Helps software engineers architect domain-driven serverless systems on AWS.

Elixir Code Assistant

Helps refine Elixir code, especially GenServers and LiveViews.

Build a Brand

Creates unique custom brand images from your input: type your ideas and the brand image is generated.