Best AI tools for Build APIs

20 - AI tool Sites

Amplication

Amplication is an AI-powered platform for .NET and Node.js app development, billed as the world's fastest way to build backend services. It gives developers customizable, production-ready backend services without vendor lock-in. Users can define data models, extend and customize with plugins, generate boilerplate code, and modify the generated code freely. The platform supports role-based access control, microservices architecture, continuous Git sync, and automated deployment. Amplication is SOC 2 certified for data security and compliance.

Console

Console is a REST API development tool that helps developers design, build, test, and document REST APIs. It provides a user-friendly interface for creating and managing API specifications, generating code in multiple languages, and testing APIs with mock servers. Console also includes documentation features for generating API documentation and interactive API playgrounds.

CodeSpell

CodeSpell is an AI-powered code completion tool designed to streamline the software development life cycle (SDLC). It assists developers in generating code, documenting it, fixing errors, building APIs, automating tests, and setting up infrastructure directly within their integrated development environment (IDE). CodeSpell's unique feature, Design Studio, automates project setup by generating scaffolding, APIs, and infrastructure scripts tailored to the user's stack, reducing manual coding effort and accelerating development. The tool is compatible with popular IDEs and supports code generation in any language. CodeSpell aims to transition the software industry from AI-assisted code generation to an AI-driven landscape.

BuildShip

BuildShip is a low-code visual backend builder that allows users to create powerful APIs in minutes. It is powered by AI and offers a variety of features such as pre-built nodes, multimodal flows, and integration with popular AI models. BuildShip is suitable for a wide range of users, from beginners to experienced developers. It is also a great tool for teams who want to collaborate on backend development projects.

Aipify

Aipify is a platform that allows users to build AI-powered APIs in seconds. With Aipify, users can access the latest AI models, including GPT-4, to enhance their applications' capabilities. Aipify's APIs are easy to use and affordable, making them a great choice for businesses of all sizes.

DocDriven

DocDriven is an AI-powered, documentation-driven API development tool that provides a shared workspace for the API development process. It helps teams design APIs faster, collaborate on API changes in real time, explore all APIs in one workspace, generate code with AI, and maintain API documentation. DocDriven aims to streamline communication and coordination among backend developers, frontend developers, UI designers, and product managers, in support of high-quality API design and development.

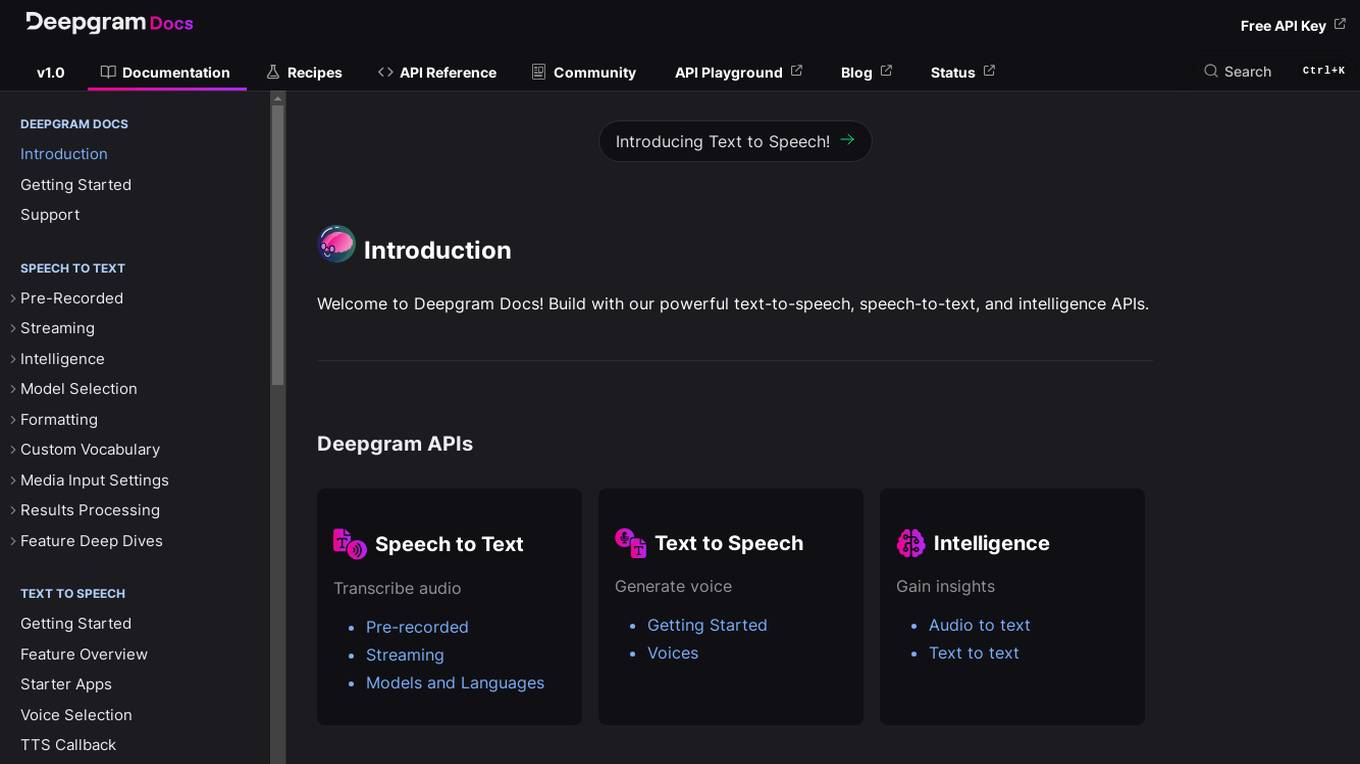

Deepgram

Deepgram is an API platform that gives developers tools for building speech-to-text, text-to-speech, and intelligence applications, so they can add speech recognition, speech synthesis, and other AI-powered features to their own applications.

Hanabi.rest

Hanabi.rest is an AI-based API building platform that allows users to create REST APIs from natural language and screenshots using AI technology. Users can deploy the APIs on Cloudflare Workers and roll them out globally. The platform offers a live editor for testing database access and API endpoints, generates code compatible with various runtimes, and provides features like sharing APIs via URL, npm package integration, and CLI dump functionality. Hanabi.rest simplifies API design and deployment by leveraging natural language processing, image recognition, and v0.dev components.

Vellum AI

Vellum AI is an AI platform that supports OpenAI models hosted on Microsoft Azure. It offers tools for prompt engineering, semantic search, prompt chaining, evaluations, and monitoring. Vellum enables users to build AI systems with features like workflow automation, document analysis, fine-tuning, Q&A over documents, intent classification, summarization, vector search, chatbots, blog generation, sentiment analysis, and more. The platform is backed by top VCs and founders of well-known companies, and positions itself as a complete solution for building LLM-powered applications.

SignalWire

SignalWire is a cloud communications platform that provides a suite of APIs and tools for building voice, messaging, and video applications. With SignalWire, developers can quickly and easily create AI-powered applications without extensive coding. SignalWire's platform is designed to be scalable, reliable, and easy to use, making it a great choice for businesses of all sizes.

novita.ai

novita.ai is an AI-assisted tool designed to aid developers in code generation tasks. It offers a state-of-the-art large language model, Code Llama, which provides intelligent recommendations and transforms the coding experience. The platform leverages advancements in machine learning to enhance developers' productivity and accuracy in writing error-free code.

Prodmagic

Prodmagic is an AI tool for building advanced chatbots in minutes. Users can connect their own custom LLMs or OpenAI models and embed the resulting chatbot on any website with a single line of code. The chatbot's appearance and behavior can be customized without coding. Prodmagic also provides chat history, error monitoring, and simple pricing plans, making it a convenient and cost-effective option for chatbot development.

SmythOS

SmythOS is an AI-powered platform that allows users to create and deploy AI agents in minutes. With a user-friendly interface and drag-and-drop functionality, SmythOS enables users to build custom agents for various tasks without the need for manual coding. The platform offers pre-built agent templates, universal integration with AI models and APIs, and the flexibility to deploy agents locally or to the cloud. SmythOS is designed to streamline workflow automation, enhance productivity, and provide a seamless experience for developers and businesses looking to leverage AI technology.

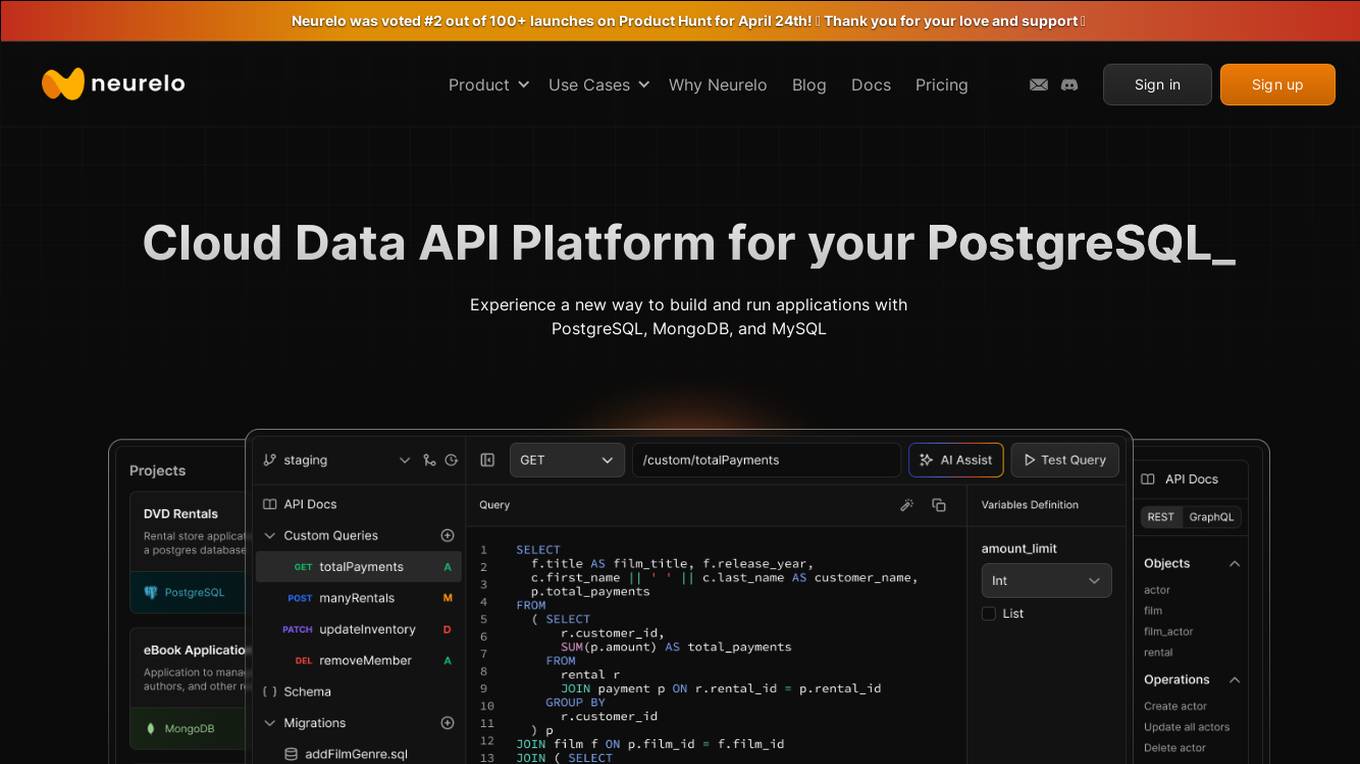

Neurelo

Neurelo is a cloud API platform that offers services for PostgreSQL, MongoDB, and MySQL. It provides features such as auto-generated APIs, custom query APIs with AI assistance, query observability, schema as code, and the ability to build full-stack applications in minutes. Neurelo aims to empower developers by simplifying database programming complexities and enhancing productivity. The platform leverages the power of cloud technology, APIs, and AI to offer a seamless and efficient way to build and run applications.

Daily

Daily is a platform offering real-time voice, video, and AI solutions for developers. Operating since 2016, it provides ultra-low latency, open-source SDKs, and enterprise reliability. Daily collaborates with NVIDIA on the Voice Agent Blueprint and offers several products: Pipecat, a vendor-neutral open-source orchestration framework; Daily Bots, for Pipecat Cloud deployment; and Daily Infrastructure, for running real-time calls on its global WebRTC infrastructure. The platform aims for the best video quality on every network, with a global mesh network, low latency, and enterprise-grade security features.

Azure Static Web Apps

Azure Static Web Apps is a platform provided by Microsoft Azure for building and deploying modern web applications. It allows developers to easily host static web content and serverless APIs with seamless integration to popular frameworks like React, Angular, and Vue. With Azure Static Web Apps, developers can quickly set up continuous integration and deployment workflows, enabling them to focus on building great user experiences without worrying about infrastructure management.

Tavus

Tavus is an AI tool that offers digital twin APIs for video generation and conversational video interfaces. It allows users to create immersive AI-generated video experiences using cutting-edge AI technology. Tavus provides best-in-class models like Phoenix-2 for creating realistic digital replicas with natural face movements. The platform offers rapid training, instant inference, support for 30+ languages, and built-in security features to ensure user privacy and safety. Tavus is preferred by developers and product teams for its developer-first approach, ease of integration, and exceptional customer service.

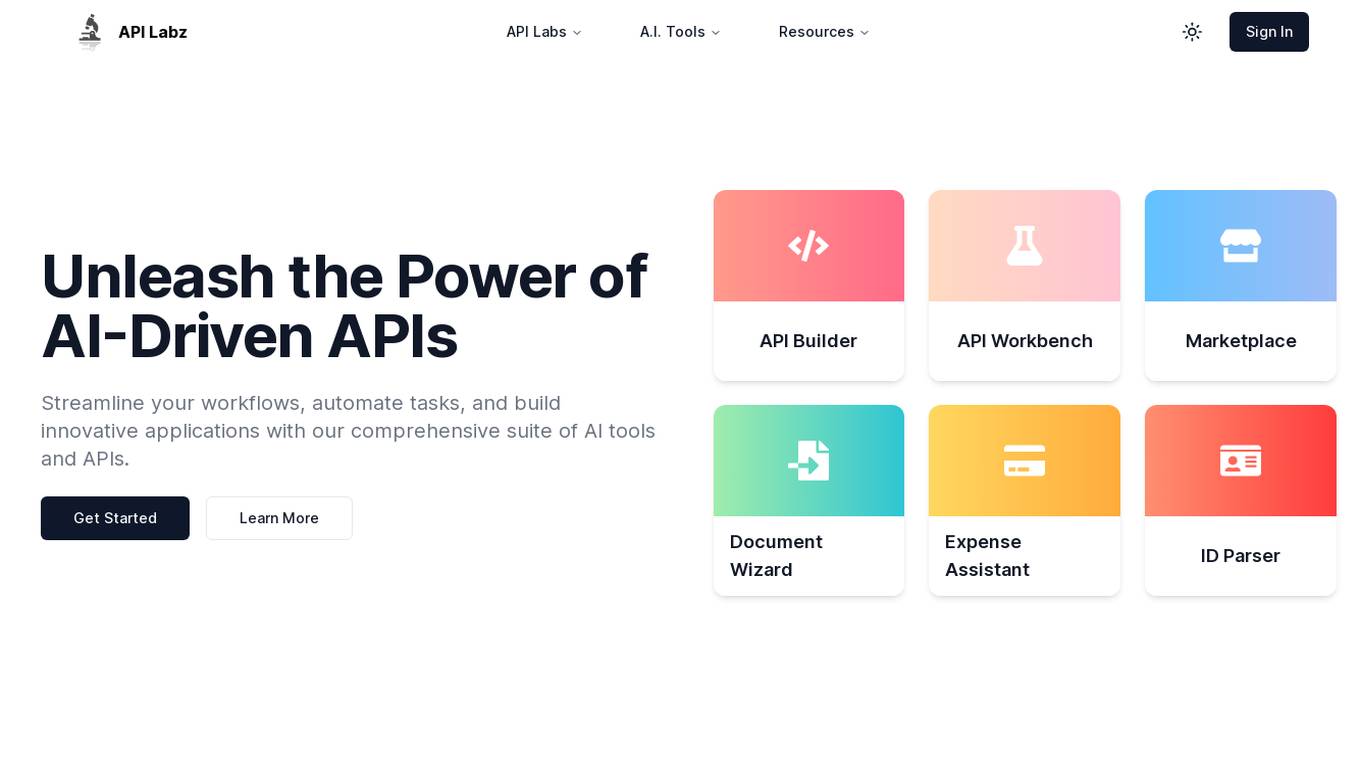

API Labz

API Labz is an AI-powered platform that offers a comprehensive suite of tools and APIs to streamline workflows, automate tasks, and build innovative applications. From custom API building to document processing and financial analysis, API Labz provides intelligent solutions to drive efficiency and enhance business processes. The platform also includes features like AI-powered ID parsing, seamless integrations with third-party applications, and a curated API marketplace for easy access to pre-built APIs.

Sink In

Sink In is a cloud-based platform that provides access to Stable Diffusion AI image generation models. It offers a variety of models to choose from, including majicMIX realistic, MeinaHentai, AbsoluteReality, DreamShaper, and more. Users can generate images by inputting text prompts and selecting the desired model. Sink In charges $0.0015 for each 512x512 image generated, and it offers a 99.9% reliability guarantee for images generated in the last 30 days.
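As a rough illustration of the per-image pricing quoted above, the sketch below estimates the cost of a batch of 512x512 images. The helper name `estimate_cost` is hypothetical and not part of any Sink In API, and the rate is taken only from this listing; other resolutions or models may be priced differently.

```python
from decimal import Decimal

# Per-image price for a 512x512 image, as quoted in the listing above.
# Assumption: the rate is flat per image; other sizes are not covered here.
PRICE_PER_512_IMAGE = Decimal("0.0015")


def estimate_cost(num_images: int) -> Decimal:
    """Return the estimated cost in USD for num_images 512x512 images.

    Hypothetical helper for illustration only.
    """
    if num_images < 0:
        raise ValueError("num_images must be non-negative")
    return PRICE_PER_512_IMAGE * num_images


if __name__ == "__main__":
    # Print estimates for a few batch sizes.
    for n in (1, 1_000, 10_000):
        print(f"{n:>6} images: ${estimate_cost(n)}")
```

`Decimal` is used instead of `float` so that small per-image prices multiply without binary rounding noise.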

Infobip

Infobip is a leading provider of omnichannel communications solutions, enabling businesses to connect with their customers through a variety of channels, including SMS, RCS, MMS, WhatsApp, Viber, and more. Infobip's platform is used by over 70,000 businesses worldwide, and it processes over 450 billion interactions per year. Infobip's solutions are designed to help businesses improve customer engagement, increase sales, and reduce costs.

0 - Open Source AI Tools

20 - OpenAI GPTs

![[latest] FastAPI GPT Screenshot](/screenshots_gpts/g-BhYCAfVXk.jpg)

[latest] FastAPI GPT

Up-to-date FastAPI coding assistant with knowledge of the latest version. Part of the [latest] GPTs family.

ResourceFinder

Assists in identifying and utilizing APIs and files effectively to enhance user-designed GPTs.

API Quest Guide

API Finder: Analyze, Clarify, Suggest, build code, Iterate, test ... International version

Azure Mentor

Expert in Azure's latest services, including Application Insights, API Management, and more.

Build a Brand

Unique custom images based on your input. Just type ideas and the brand image is created.