Best AI tools for "Attack Golf Greens"

20 - AI Tool Sites

Vectra AI

Vectra AI is a leading AI security platform that helps organizations stop advanced cyber attacks by providing an integrated signal for extended detection and response (XDR). The platform arms security analysts with real-time intelligence to detect, prioritize, investigate, and respond to threats across network, identity, cloud, and managed services. Vectra AI's AI-driven detections and Attack Signal Intelligence enable organizations to protect against various attack types and emerging threats, enhancing cyber resilience and reducing risks in critical infrastructure, cloud environments, and remote workforce scenarios. Trusted by over 1100 enterprises worldwide, Vectra AI is recognized for its expertise in AI security and its ability to stop sophisticated attacks that other technologies may miss.

Cleerly

Cleerly is a digital healthcare company transforming how clinicians approach the treatment of heart disease. Its clinically proven, AI-based digital care platform works with coronary computed tomography angiography (CCTA) imaging to help clinicians precisely identify and define atherosclerosis earlier, so they can provide personalized, life-saving treatment plans for patients throughout their care continuum. Cleerly measures atherosclerosis (plaque build-up in the heart's arteries) directly, rather than relying on indirect markers such as risk factors and symptoms of disease. Its AI-enabled digital care pathway offers simpler, faster, more accurate heart disease evaluation and reporting tailored to each stakeholder, improving overall clinical and financial outcomes.

NodeZero™ Platform

Horizon3.ai Solutions offers the NodeZero™ Platform, an AI-powered autonomous penetration testing tool designed to enhance cybersecurity measures. The platform combines expert human analysis by Offensive Security Certified Professionals with automated testing capabilities to streamline compliance processes and proactively identify vulnerabilities. NodeZero empowers organizations to continuously assess their security posture, prioritize fixes, and verify the effectiveness of remediation efforts. With features like internal and external pentesting, rapid response capabilities, AD password audits, phishing impact testing, and attack research, NodeZero is a comprehensive solution for large organizations, ITOps, SecOps, security teams, pentesters, and MSSPs. The platform provides real-time reporting, integrates with existing security tools, reduces operational costs, and helps organizations make data-driven security decisions.

Legit

Legit is an Application Security Posture Management (ASPM) platform that helps organizations manage and mitigate application security risks from code to cloud. It offers features such as Secrets Detection & Prevention, Continuous Compliance, Software Supply Chain Security, and AI Security Posture Management. Legit provides a unified view of AppSec risk, deep context for prioritizing issues, and proactive remediation to prevent future risks. It automates security processes, works alongside DevOps teams, and ensures continuous compliance. Legit is trusted by Fortune 500 companies such as Kraft Heinz to secure the modern software factory.

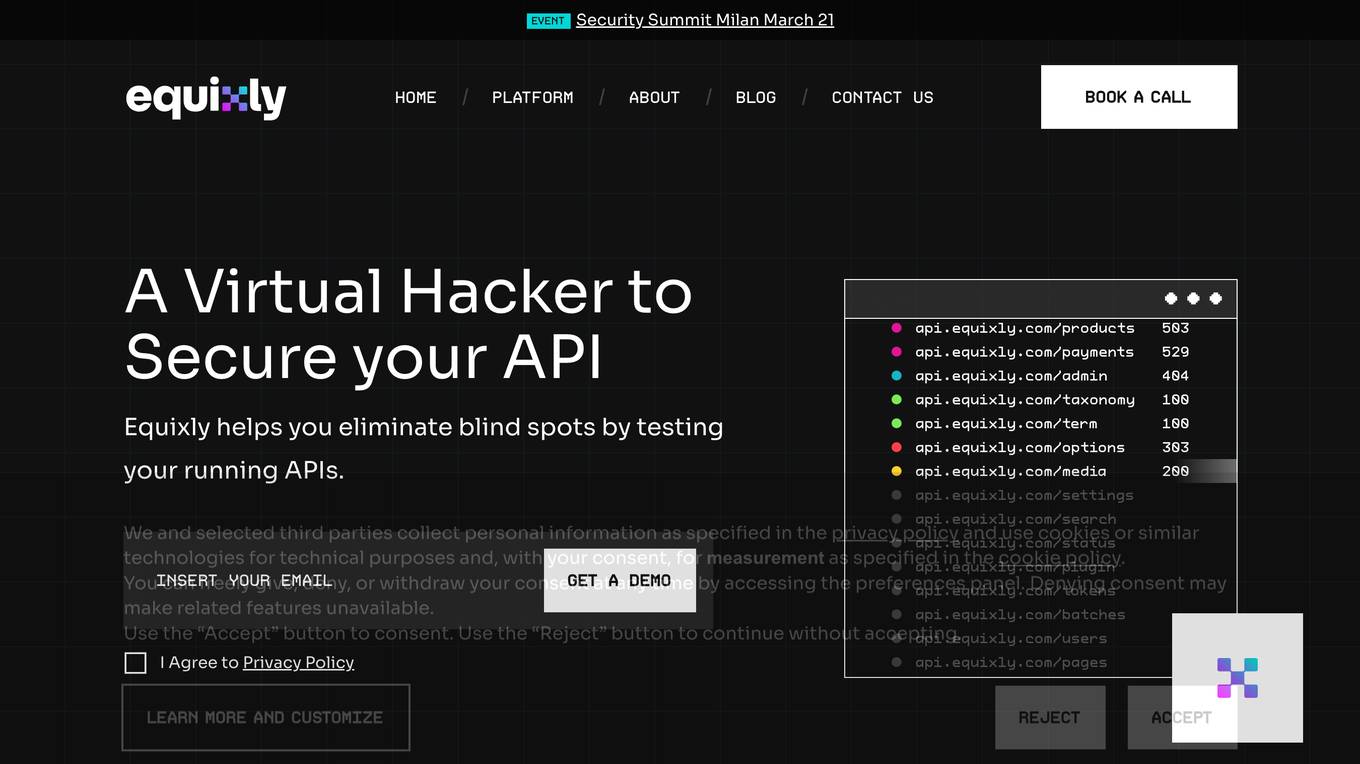

Equixly

Equixly is an AI-powered application designed to help users secure their APIs by identifying vulnerabilities and weaknesses through continuous security testing. The platform offers features such as scalable API PenTesting, attack simulation, mapping of attack surfaces, compliance simplification, and data exposure minimization. Equixly aims to streamline the process of identifying and fixing API security risks, ultimately enabling users to release secure code faster and reduce their attack surface.

Cyguru

Cyguru is an all-in-one, cloud-based AI Security Operation Center (SOC) offering a broad range of features for securing digital infrastructure. The SOC is the core of its service and provides AI-powered attack detection, continuous monitoring for vulnerabilities and misconfigurations, compliance assurance, advanced ML and AI detection, and SecPedia, a cybersecurity knowledge hub. Cyguru's AI-powered analyst promptly alerts users to any suspicious behavior or activity that needs attention. The platform is accessible to everyone: up to three servers are free, and pricing beyond that is more than 85% below the industry average.

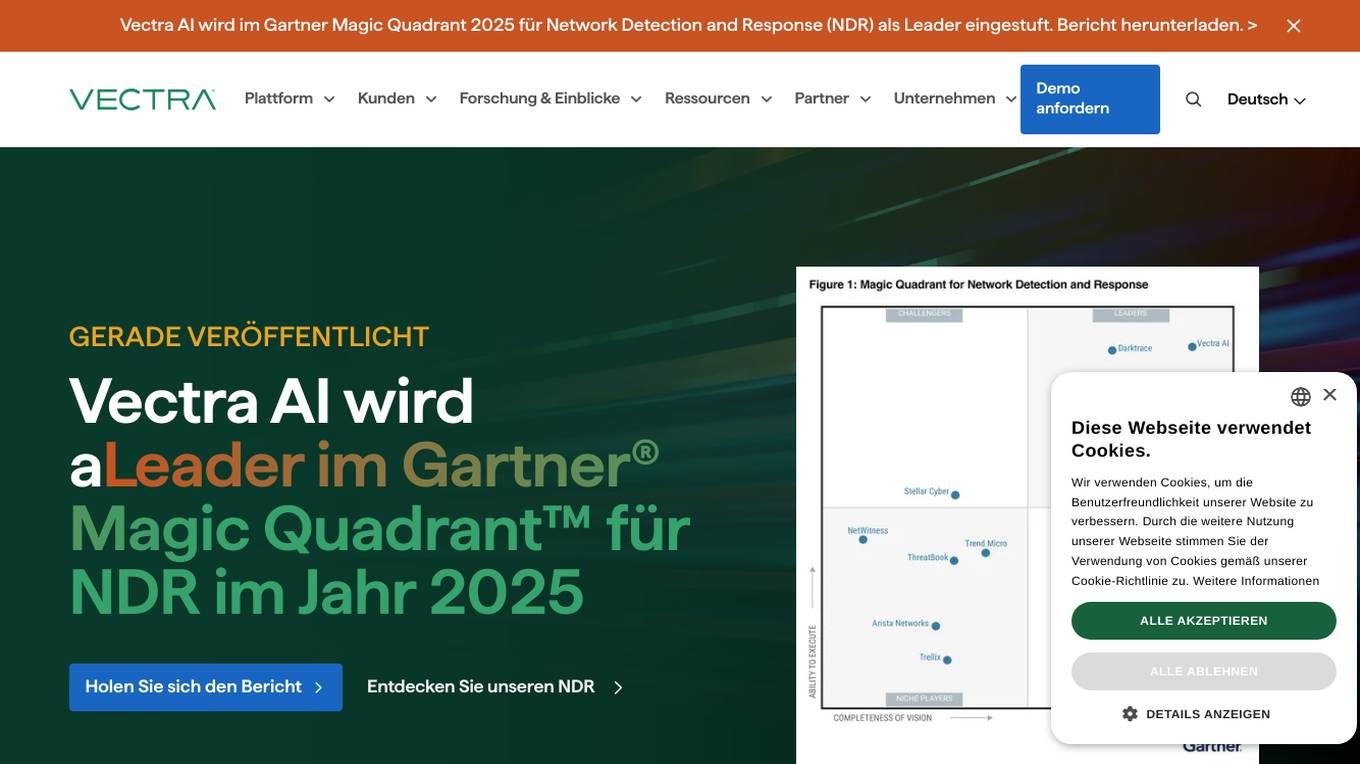

Vectra AI

Vectra AI is a leading cybersecurity AI application built to stop attacks that other tools miss. It was named a Leader in the 2025 Gartner Magic Quadrant for Network Detection and Response (NDR). Vectra AI's platform protects modern networks from advanced threats with real-time attack signal intelligence and AI-driven detections, giving security analysts the information they need to stop attacks quickly. Its use cases span SOC modernization, SIEM optimization, IDS replacement, EDR extension, cloud resilience, and more.

Cyble

Cyble is a leading threat intelligence platform offering products and services recognized by top industry analysts. It provides AI-driven cyber threat intelligence solutions for enterprises, governments, and individuals. Cyble's offerings include attack surface management, brand intelligence, dark web monitoring, vulnerability management, takedown and disruption services, third-party risk management, incident management, and more. The platform leverages cutting-edge AI technology to enhance cybersecurity efforts and stay ahead of cyber adversaries.

Traceable

Traceable is an intelligent API security platform designed for enterprise-scale security. It offers API discovery, attack detection, threat hunting, and what it describes as infinite scalability. The platform protects against API attacks, fraud, and malicious bots, and also provides API testing capabilities. It is powered by Traceable's OmniTrace Engine, which supports security outcomes, remediation, and pre-production testing. Security teams rely on Traceable for its speed and effectiveness in protecting API infrastructure.

SafeWaters.ai

SafeWaters.ai is an AI-powered application that provides shark risk forecasts for beaches globally. The app utilizes predictive AI technology trained on 200+ years of shark attack and marine weather data to deliver accurate 7-day forecasts. Users can search for any beach in the world, save favorites, and receive current and future risk assessments. Additionally, SafeWaters.ai offers features like Shark Spotting Drones Live Feed, Chatbot assistance, and Shark Tracking & Pattern Predictions based on tagged shark data.

Vectra AI

Vectra AI is an advanced AI-driven cybersecurity platform that helps organizations detect, prioritize, investigate, and respond to sophisticated cyber threats in real time. Its Attack Signal Intelligence gives security analysts the intel they need to stop attacks fast. Vectra AI provides an integrated signal for extended detection and response (XDR) across network, identity, cloud, and endpoint security. Trusted by 1,500 enterprises worldwide, Vectra AI is known for its patented AI security solutions, which the company describes as delivering the best attack signal intelligence on the planet.

Stellar Cyber

Stellar Cyber is an AI-driven unified security operations platform powered by Open XDR. It combines NG-SIEM, NDR, and Open XDR in a single platform, giving teams the capabilities to take control of their security operations. The platform uses AI to help organizations detect, correlate, and respond to threats quickly. Stellar Cyber is designed to protect the entire attack surface, improve the performance of security operations, and reduce costs while simplifying those operations.

MagicBid

MagicBid LLC is a web, mobile app, and CTV monetization platform that uses modern technology and AI-driven strategies to increase profits for app and web publishers. It offers app, web, and CTV monetization services, with tools such as Auto AdPilot, in-app bidding, growth intelligence, power ad servers, a demand control center, and privacy and fraud protection. MagicBid aims to maximize ad revenue through a single SDK integration that connects to 200+ top ad demand sources, promising high fill rates with zero latency and zero battery drain. The platform also provides attack protection and complies with industry standards and regulations such as IAB, GDPR, COPPA, and CCPA.

Dialogly

The website dialogly.ai currently returns a privacy error related to its security certificate. Browsers warn users that their connection may not be private and that attackers could steal sensitive information such as passwords, messages, or credit card details. The site's certificate is not trusted by the user's operating system, which may be due to a misconfiguration or an attack on the connection. Users can proceed to the site only at their own risk, as the browser deems it unsafe.

Aimons.xyz

Aimons.xyz is an AI tool that allows users to generate unique AI creatures by pressing the 'Generate' button and waiting a few seconds. Users can mint their created creatures as NFTs on the Polygon network. The website also provides information about the generated creature's level, HP, attack, defense, speed, and special attributes. Aimons.xyz is a fun and creative platform for users to explore AI-generated creatures and mint them as NFTs.

yujo.io.tilda.ws

The website yujo.io.tilda.ws currently returns a privacy error related to its security certificate. Browsers warn users that their connection may not be private, potentially exposing passwords, messages, or credit card details to attackers. Because the certificate is not trusted by the computer's operating system, the browser displays a warning; the cause may be a misconfiguration or an attack on the connection. Users can still proceed to the site at their own risk.

DDoS-Guard

DDoS-Guard is a web security service that protects websites from distributed denial-of-service (DDoS) attacks. It checks the user's browser before granting access to the website, ensuring a secure browsing experience. The service provides automatic protection against DDoS attacks and ensures the smooth functioning of websites. DDoS-Guard is trusted by many websites to safeguard their online presence and maintain uninterrupted service for their users.

glasp.co

The website glasp.co uses Cloudflare's security service to protect itself from online attacks. Users may be blocked by actions that trigger the service, such as submitting certain words or phrases, SQL commands, or malformed data. In that case, users can email the site owner to resolve the issue, citing the Cloudflare Ray ID shown on the block page.

AnimalAI.co

AnimalAI.co is a website that uses a security service to protect itself from online attacks. Users may be blocked when they trigger certain actions, such as submitting specific words or phrases, SQL commands, or malformed data; in that case, they can contact the site owner to resolve the issue. The site's protection is provided by Cloudflare, a performance and security service.

eightify.app

The website eightify.app uses Cloudflare's security service to protect itself from online attacks, blocking suspicious activity to prevent unauthorized access. Users may be blocked if they trigger certain actions, such as submitting specific words or phrases, SQL commands, or malformed data. To resolve a block, users can contact the site owner with details of what they were doing and the Cloudflare Ray ID shown on the page.

0 - Open Source AI Tools

20 - OpenAI Gpts

CardioRescue Expert

Specialized assistant for managing cardiorespiratory arrest according to the recommendations of the ERC (2021) and ILCOR (2023).

Crypto Tax Calculator

Attach transaction reports from exchanges to calculate capital gains. It can even generate a file you can upload to TurboTax.

DayTraderGPT

Provides technical analysis and trading insights. Attach a TradingView chart to get started!

Solidity Sage

Your personal Ethereum magician. Simply ask a question or provide a code sample for insights into vulnerabilities, gas optimizations, and best practices. Feel free to ask about tooling and legendary attacks.

MITREGPT

Feed me any input and I'll match it with the relevant MITRE ATT&CK techniques and tactics (@mthcht)