Best AI Tools to Accelerate Software Releases

20 - AI Tool Sites

Webomates

Webomates is an AI-powered test automation platform that helps users release software faster by providing comprehensive AI-enhanced testing services. It offers solutions for DevOps, code coverage, media & telecom, small and medium businesses, cross-browser testing, and intelligent test automation. The platform uses AI and machine learning to predict defects, reduce false positives, and accelerate software releases. Webomates also features intelligent automation, smart reporting, and scalable payment options. It integrates with popular development tools and processes, and provides analytics and support for both manual and AI-driven automated testing.

Narratize

Narratize is a generative AI storytelling platform designed for innovative enterprises to accelerate R&D, product innovation, and marketing. It helps enterprise teams and individuals distill scientific, technical, and medical insights into impactful content that scales. With Narratize, users can quickly generate various types of content without the need for prompt engineering, improving communication and collaboration within the organization.

Warestack

Warestack is an AI-powered cloud workflow automation platform that helps users manage all daily workflow operations with AI-powered observability. It allows users to monitor workflow runs from a single dashboard, speed up releases with one-click resolutions, and gain actionable insights. Warestack streamlines workflow runs, eliminates the complexity of manual processes, automates workflow operations with a copilot, and accelerates runs with self-hosted runners offered at infrastructure cost. The platform uses generative AI and deep tech to enhance and automate workflow processes, ensuring consistent documentation and team productivity.

DigestDiff

DigestDiff is an AI-driven tool that helps users analyze and understand the commit history of their codebases. It produces detailed narratives based on commit history, accelerates onboarding by summarizing codebases, generates standup updates, and creates release notes. It prioritizes privacy by accessing only commit history and storing no code. The tool uses AI to automate tasks and improve efficiency in software development workflows.

Serenity Star

Serenity Star is a generative AI deployment service that offers Models as a Service to help businesses increase productivity and design tailored solutions. The platform provides access to over 100 LLMs, an ecosystem of agents, copilots, and plugins, and low-code and no-code solutions for quick market release. Serenity Star aims to simplify the implementation of generative AI in enterprises by offering tools, support, and resources for process optimization, innovation, revenue maximization, and informed decision-making.

AI Generated Test Cases

AI Generated Test Cases is an innovative tool that leverages artificial intelligence to automatically generate test cases for software applications. By utilizing advanced algorithms and machine learning techniques, this tool can efficiently create a comprehensive set of test scenarios to ensure the quality and reliability of software products. With AI Generated Test Cases, software development teams can save time and effort in the testing phase, leading to faster release cycles and improved overall productivity.

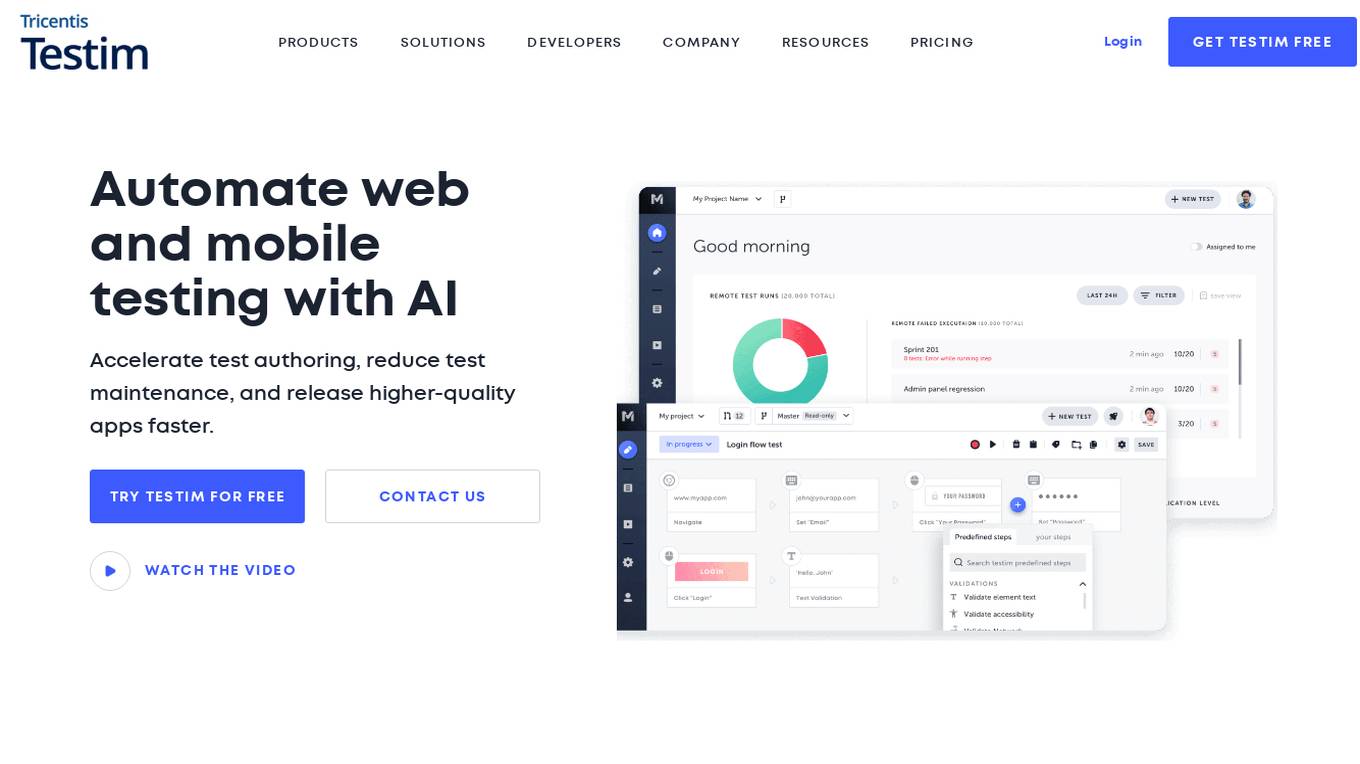

Testim

Testim is an AI-powered UI and functional testing platform that helps teams accelerate test authoring, reduce test maintenance, and release higher-quality apps faster. Its features include fast test authoring, test stability, root cause analysis, and TestOps, making it an efficient and effective solution for product development teams.

Rainforest QA

Rainforest QA is an AI-powered test automation platform designed for SaaS startups to streamline and accelerate their testing processes. It offers AI-accelerated testing, no-code test automation, and expert QA services to help teams achieve reliable test coverage and faster release cycles. Rainforest QA's platform integrates with popular tools, provides detailed insights for easy debugging, and ensures visual-first testing for a seamless user experience. With a focus on automating end-to-end tests, Rainforest QA aims to eliminate QA bottlenecks and help teams ship bug-free code with confidence.

Katalon

Katalon is a modern, comprehensive quality management platform that helps teams of any size deliver the highest quality digital experiences. It offers a range of features including test authoring, test management, test execution, reporting & analytics, and AI-powered testing. Katalon is suitable for testers of all backgrounds, providing a single platform for testing web, mobile, API, desktop, and packaged apps. With AI capabilities, Katalon simplifies test automation, streamlines testing operations, and scales testing programs for enterprise teams.

Parasoft

Parasoft is an intelligent automated testing and quality platform that offers a range of tools covering every stage of the software development lifecycle. It provides solutions for compliance standards, automated software testing, and the needs of various industries. Parasoft helps users accelerate software delivery, ensure quality, and comply with safety and security standards.

Scopilot AI

Scopilot AI is an AI-powered tool designed to accelerate software project scoping by generating the project scope, features, user stories, and clarification questions from an initial project description. It helps software agencies and individuals define a clear scope and facilitates the product discovery and specification phase. The tool also automates the generation of UI screens, user actions, components, and database schema, making project scoping 10x faster and more efficient.

Tricentis

Tricentis is an AI-powered testing tool that offers a comprehensive set of test automation capabilities to address various testing challenges. It provides end-to-end test automation for a wide range of applications and testing needs, including Salesforce, mobile, performance, and data integrity testing. Tricentis uses advanced ML technologies to enable faster and smarter testing, ensuring quality at speed with reduced risk, time, and cost. The platform also offers continuous performance testing, change and data intelligence, and model-based, codeless test automation for mobile applications.

HatchWorks

HatchWorks is an AI development partner that specializes in building AI-native solutions and using AI to enhance software development processes. The company offers services such as Gen AI Product Development, Data & AI/ML Software Development, Product Strategy, and UX & UI Design. HatchWorks leverages cutting-edge technologies like Generative AI, Machine Learning, and AI-Powered Software Development to deliver custom software solutions efficiently. The company's proprietary Generative-Driven Development™ methodology enables faster software development, increased productivity, and cost-effectiveness. HatchWorks is trusted by leading brands for delivering exceptional AI-driven outcomes and impactful solutions.

PDF Pals

PDF Pals is an AI-powered application designed for Mac users to interact with PDF documents efficiently. It allows users to chat with PDFs, extract key information, and gain insights from documents instantly. With features like powerful OCR, secure document handling, and privacy-friendly data storage, PDF Pals is a versatile tool suitable for researchers, software developers, legal professionals, and more. The application prioritizes user privacy, offers flexible API integration, and supports multiple languages and document types.

Codimite

Codimite is an AI-assisted offshore development company that provides a range of services to help businesses accelerate their software development, reduce costs, and drive innovation. Codimite's team of experienced engineers and project managers uses AI-powered tools and technologies to deliver results for its clients. The company's services include AI-assisted software development, cloud modernization, and data and artificial intelligence solutions.

Tabnine

Tabnine is an AI code assistant that accelerates and simplifies software development while keeping your code private, secure, and compliant. It offers AI code assistance personalized to your team's needs, with total code privacy and complete protection from intellectual property issues. Tabnine's AI agents cover various stages of the software development lifecycle, from code generation and explanations to testing, documentation, and bug fixes.

GitStart

GitStart is an AI-powered platform that offers Elastic Engineering Capacity: teams assign tickets and GitStart delivers high-quality production code. It combines AI agents with a global developer community to increase engineering capacity without hiring more staff. GitStart is backed by top tech leaders and handles bug fixes, tech debt, and frontend and backend development. The platform aims to empower developers, create economic opportunities, and grow the world's future software talent.

Teste.ai

Teste.ai is an AI-powered platform that allows users to create software testing scenarios and test cases using top-notch artificial intelligence technology. The platform offers a variety of tools based on AI to accelerate the software quality testing journey, helping testers cover a wide range of requirements with a vast array of test scenarios efficiently. Teste.ai's intelligent features enable users to save time and enhance efficiency in creating, executing, and managing software tests. With advanced AI integration, the platform provides automatic generation of test cases based on software documentation or specific requirements, ensuring comprehensive test coverage and precise responses to testing queries.

Infinity Call Analytics Software

Infinity Call Analytics Software is a cloud-based solution that helps businesses track, analyze, and improve their phone calls. It provides a variety of features, including call tracking, conversation analytics, and smart match. Infinity Call Analytics Software is designed to help businesses make more informed decisions about their marketing and sales efforts, and to improve the customer experience.

Diligen

Diligen is a machine-learning-powered contract analysis tool that helps teams streamline their contract review process. It can identify key provisions, generate contract summaries, and help teams manage the review process. Diligen is used by law firms, legal service providers, and corporations around the world to make high-quality contract review faster, more efficient, and more cost-effective.

0 - Open Source AI Tools

7 - OpenAI GPTs

Material Tailwind GPT

Accelerate web app development with Material Tailwind GPT's components - 10x faster.

Backloger.ai - Product MVP Accelerator

Drop in any requirements or text; I'll help you create an MVP with insights.

Tourist Language Accelerator

Accelerates the learning of key phrases and cultural norms for travelers in various languages.

Digital Entrepreneurship Accelerator Coach

The Go-To Coach for Aspiring Digital Entrepreneurs, Innovators, & Startups. Learn More at UnderdogInnovationInc.com.

24 Hour Startup Accelerator

A niche-focused startup guide that is humorous and strategic and simplifies ideas.

Digital Boost Lab

A guide for developing university-focused digital startup accelerator programs.