Best AI tools for Generate Data

20 - AI tool Sites

Scrol.ai

Scrol.ai is a powerful AI-powered tool that allows users to search, analyze, and generate data from various sources. It utilizes advanced language models like GPT-4 and ChatGPT to provide users with a seamless and efficient way to extract insights, summarize information, and create new content. With its user-friendly interface and robust features, Scrol.ai empowers users to streamline their workflow, enhance productivity, and make informed decisions.

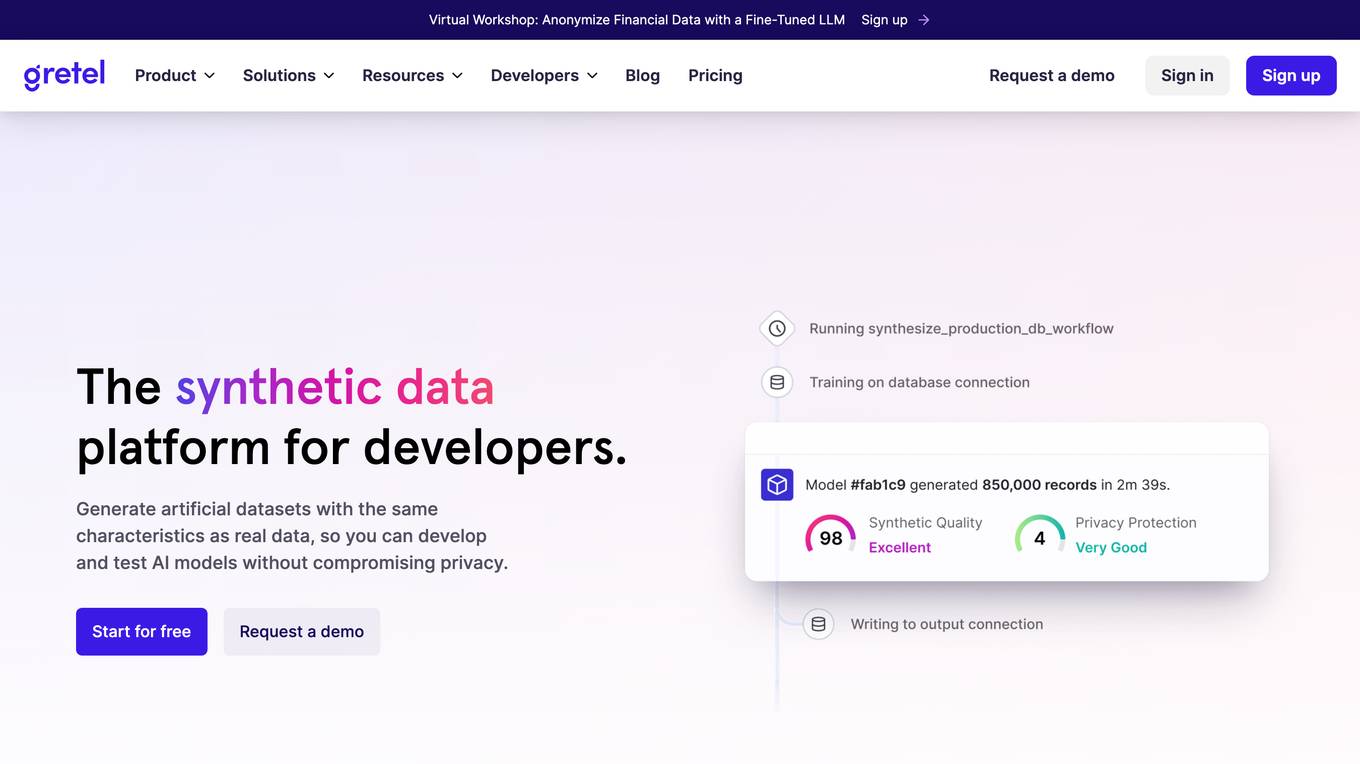

Gretel.ai

Gretel.ai is a synthetic data platform purpose-built for AI applications. It lets users generate synthetic datasets with the same characteristics as real data, so AI models can be improved without compromising privacy. The platform offers APIs for generating anonymized, safe synthetic data, training generative AI models, and validating models with quality and privacy scores. Users can deploy Gretel for enterprise use cases and run it on various cloud platforms or in their own environment.
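As a rough illustration of what "synthetic data with the same characteristics as real data" can mean, the sketch below fits a mean and standard deviation to a small numeric column and samples new values from a normal distribution. This is not Gretel's API or method; the function name `synthesize_column` and the sample `real_ages` values are hypothetical, and real platforms use far richer generative models.

```python
import random
import statistics


def synthesize_column(real_values, n, seed=0):
    """Sample n synthetic values from a normal distribution
    fitted to the mean and standard deviation of real_values."""
    rng = random.Random(seed)  # seeded for reproducible output
    mu = statistics.mean(real_values)
    sigma = statistics.stdev(real_values)
    return [rng.gauss(mu, sigma) for _ in range(n)]


# Hypothetical "real" column; none of these values appear in the output.
real_ages = [23, 35, 41, 29, 52, 38, 47, 31]
synthetic_ages = synthesize_column(real_ages, n=1000)
```

The synthetic column tracks the original's average and spread without copying any individual record, which is the basic privacy argument for this kind of data.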

Welo Data

Welo Data is an AI tool that specializes in AI benchmarking, model assessment, and high-quality training datasets for AI models. The platform offers services such as supervised fine-tuning, reinforcement learning from human feedback, data generation, expert evaluations, and a data quality framework to support the development of world-class AI models. With over 27 years of experience, Welo Data combines language expertise and AI data to deliver training and performance evaluation solutions.

Charm

Charm is an AI-powered spreadsheet assistant that helps users clean messy data, create content, summarize feedback, classify sales leads, and generate dummy data. It is a Google Sheets add-on that automates tasks that are impossible to do with traditional formulas. Charm is used by hundreds of analysts, marketers, product managers, and more.

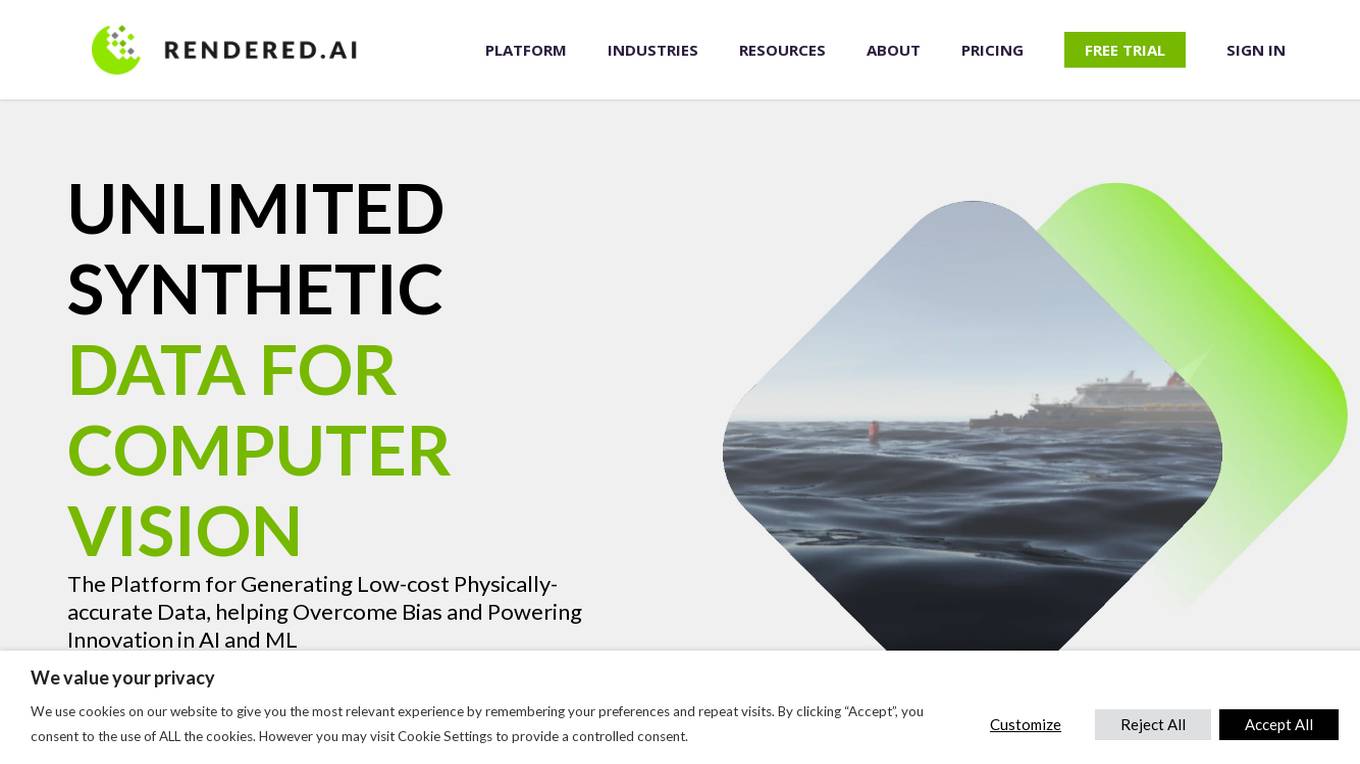

Rendered.ai

Rendered.ai is a platform that provides unlimited synthetic data for AI and ML applications, with a focus on computer vision. It generates low-cost, physically accurate data to overcome bias and power innovation in AI and ML. The platform lets users capture rare events and edge cases, acquire data that is difficult to obtain, overcome data labeling challenges, and simulate restricted or high-risk scenarios. Rendered.ai aims to revolutionize the use of synthetic data in AI and data analytics projects, with a vision that by 2030 synthetic data will surpass real data in AI models.

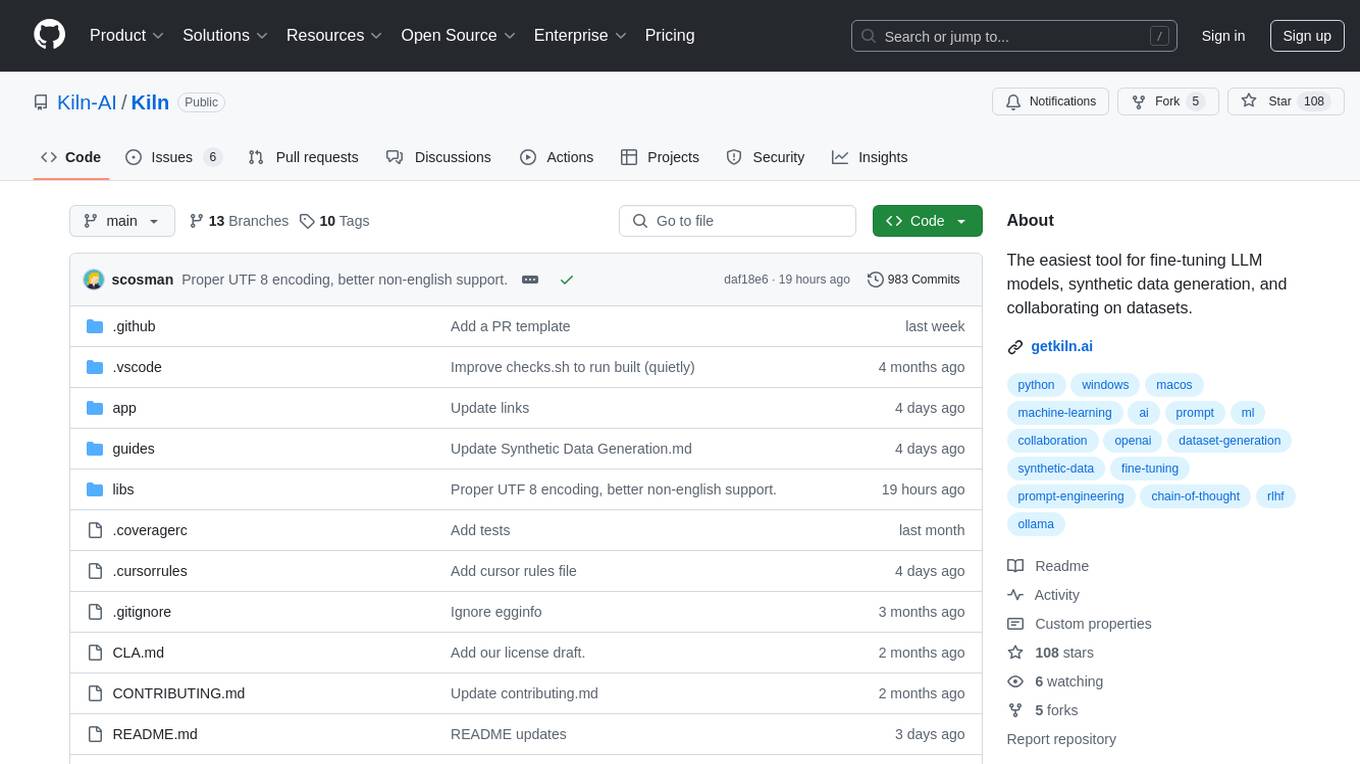

Kiln

Kiln is an AI tool designed for fine-tuning LLMs, generating synthetic data, and collaborating on datasets. It offers intuitive desktop apps, zero-code fine-tuning for various models, interactive visual tools for data generation, Git-based version control for datasets, and the ability to generate various prompts from data. Kiln supports a wide range of models and providers, provides an open-source library and API, prioritizes privacy, and allows structured data tasks in JSON format. The tool is free to use and focuses on rapid AI prototyping and dataset collaboration.

WebDB

WebDB is an efficient, open-source database IDE that focuses on providing a secure and user-friendly platform for database management. It offers features such as automatic DBMS discovery, credential guessing, a time machine for database version control, a powerful query editor with autocomplete and documentation, AI assistant integration, NoSQL structure management, intelligent data generation, and more. With a modern ERD view and support for various databases, WebDB aims to simplify database management tasks and enhance productivity for users.

Athenic AI

Athenic AI is an Enterprise AI tool designed to provide data insights quickly and easily. It allows users to ask questions and follow-up questions to explore trends, discover insights, and connect the dots in their data. The tool offers natural language analytics, fast data retrieval, and analysis for various business functions like E-Commerce, Marketing, and Manufacturing. Athenic AI aims to equip teams with reliable insights and streamline data analysis processes.

Chat2DB

Chat2DB is an AI-driven data management platform that helps users query, edit, analyze, and visualize data. It integrates data management, development, analysis, and application all in one platform. Chat2DB's AI technology enables users to easily handle SQL, generate database data, and test efficiently. It also provides intelligent reports and data exploration features that allow users to interact with data using natural language.

Scale AI

Scale AI is an AI tool that accelerates the development of AI applications for sectors including enterprise, government, and automotive. It offers solutions for training models, fine-tuning, generative AI, and model evaluations. The Scale Data Engine and GenAI Platform enable users to leverage enterprise data effectively. The platform works with leading AI models and provides high-quality data for public and private sector applications.

generatejson.com

The website generatejson.com was inaccessible when reviewed: it returned an 'Access Denied' error with a server reference number, which points to a permission issue. The site may be related to generating JSON data, but no further details are available.

Athina AI

Athina AI is a platform that provides research and guides for building safe and reliable AI products. It helps thousands of AI engineers in building safer products by offering tutorials, research papers, and evaluation techniques related to large language models. The platform focuses on safety, prompt engineering, hallucinations, and evaluation of AI models.

Motorica

Motorica is an AI-powered tool for instant character animation, enabling users to craft AAA animations in seconds with a generative AI mocap actor. It offers a seamless blend of premade styles, custom style cloning, and live directing of AI mocap actors in 3D scenes. Motorica streamlines animation workflows by removing tedious tasks, allowing users to focus on creativity and produce high-quality animations efficiently. Trusted by renowned studios, Motorica helps creators bring their visions to life with ease and precision.

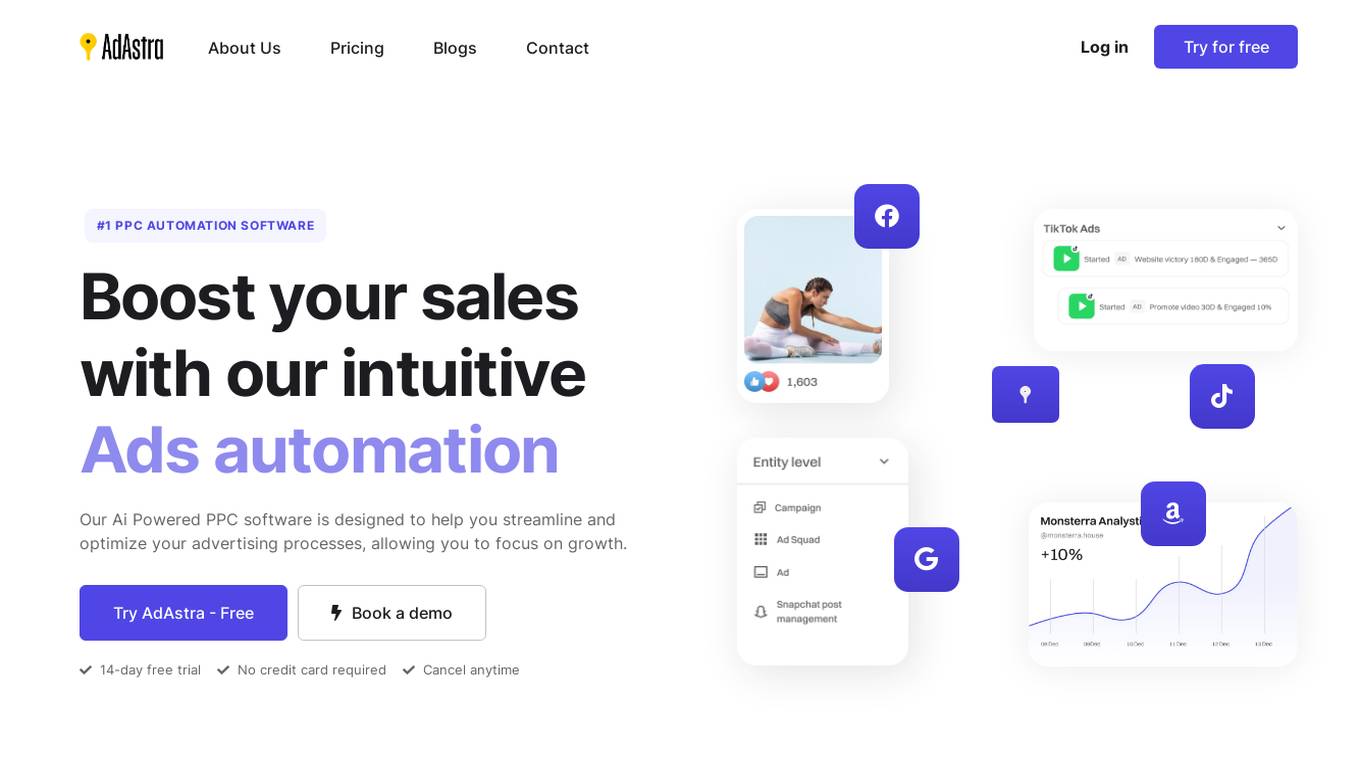

AdAstra

AdAstra is an AI-powered advertising automation platform designed to streamline and optimize advertising processes for businesses of all sizes. With intuitive AI bidding, auto campaigns, profit maximizers, and ads automation, AdAstra helps users increase efficiency, reach their goals faster, and unlock the potential of their brand through AI-driven ad strategies and machine learning insights. The platform offers innovative brand growth strategies, effortless marketing solutions, and a comprehensive suite of tools to automate advertising tasks and generate actionable insights.

Evisort

Evisort is an AI-powered contract management software that simplifies contract management at every stage. It offers a complete, AI-native platform for end-to-end contract lifecycle management, including the first large language model built specifically for contracts. Evisort's AI capabilities enable users to ask questions about their contracts in simple, natural language and get clear, reasoned answers. It can also track terms of interest across all contracts and related documents, and generate data points that matter for sales, procurement, risk, and finance teams. Additionally, Evisort's AI-powered workflows automate tasks such as redlining, clause generation, and contract approvals, saving time and reducing risk.

AI Placeholder

AI Placeholder is a free AI-Powered Fake or Dummy Data API for testing and prototyping. It leverages OpenAI's GPT-3.5-Turbo Model API to generate fake content. Users can directly use the hosted version or self-host it. The API allows users to generate any data they can think of, with the ability to specify rules for data retrieval. It supports various content types like tweets, posts, Instagram posts, and more. The application is designed to assist developers and testers in creating realistic but non-sensitive data for their projects.

Fine-Tune AI

Fine-Tune AI is a tool that allows users to generate fine-tuning datasets using prompts. This can be useful for a variety of tasks, such as improving the accuracy of machine learning models or creating new training data for AI applications.
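To make the idea of a prompt-generated fine-tuning dataset concrete, here is a minimal sketch that fills a prompt template with seed topics and serializes the resulting pairs as JSON Lines. It does not reflect how Fine-Tune AI works internally; the `prompt`/`completion` field names, the template, and the `stub_model` function (a stand-in for a real model call) are all assumptions made for illustration.

```python
import json

# Hypothetical prompt template and seed topics.
TEMPLATE = "Write a one-sentence product description for {topic}."
TOPICS = ["a reusable water bottle", "noise-cancelling headphones"]


def stub_model(prompt):
    """Placeholder for a real language model call (assumption)."""
    return "Generated answer for: " + prompt


def build_dataset(template, topics, model):
    """Return a list of prompt/completion records, one per topic."""
    records = []
    for topic in topics:
        prompt = template.format(topic=topic)
        records.append({"prompt": prompt, "completion": model(prompt)})
    return records


def to_jsonl(records):
    """Serialize records as JSON Lines: one JSON object per line."""
    return "\n".join(json.dumps(record) for record in records)


dataset = build_dataset(TEMPLATE, TOPICS, stub_model)
jsonl_text = to_jsonl(dataset)
```

Swapping `stub_model` for a real model call would be the only change needed to produce actual training examples; the exact record schema depends on the fine-tuning service being targeted.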

Bifrost AI

Bifrost AI is a data generation engine designed for AI and robotics applications. It enables users to train and validate AI models faster by generating physically accurate synthetic datasets in 3D simulations, eliminating the need for real-world data. The platform offers pixel-perfect labels, scenario metadata, and a simulated 3D world to enhance AI understanding. Bifrost AI empowers users to create new scenarios and datasets rapidly, stress test AI perception, and improve model performance. It is built for teams at every stage of AI development, offering features like automated labeling, class imbalance correction, and performance enhancement.

Lazy Admin

Lazy Admin is an AI-powered quick reporting and data analysis tool designed to revolutionize data engagement by providing real-time responses to human language queries. It enables smart reporting and faster decision-making by leveraging the power of AI. With features like data protection, AI-powered data analysis, export and share capabilities, and customizable options, Lazy Admin aims to streamline productivity and enhance data insights for businesses. The tool ensures data privacy and security while offering efficient search management and visualization of data through charts. Lazy Admin is suitable for Salesforce users and custom applications, offering a range of pricing plans to cater to different business needs.

0 - Open Source AI Tools

20 - OpenAI GPTs

Interactive Spring API Creator

Pass in the attributes of a POJO entity class to generate the corresponding create, delete, update, and paginated query functions, along with a YAML database connection configuration file, database script files, and XML dynamic SQL statements.

Database Schema Generator

Takes in a Project Design Document and generates a database schema diagram for the project.

BibleGPT

Chat with the Bible, analyze Bible data and generate Bible-inspired images! Utilises ESV Bible API.

Regex Wizard

Generates and explains regex patterns from your description. It supports English and Chinese.

data trip

DALL-E + custom corrupted data from every artist in the world. This is an experiment. (beta)

CannaIndustry Data Expert

Data trend analysis expert in cannabis, also skilled in image and data analysis, document generation, and web search.