Best AI Tools for Deploying Production-ready Apps

20 - AI tool Sites

BuilderKit

BuilderKit is a highly modular Next.js AI boilerplate that lets users ship AI apps within days. Its deployable code is claimed to save over 40 hours of development effort. The platform supports all major AI models and workflows and includes a comprehensive Next.js boilerplate; modules for authentication, payments, and email integrations; landing pages; and waitlist pages. BuilderKit also provides pre-built AI apps, so users can build their AI products and start generating revenue quickly.

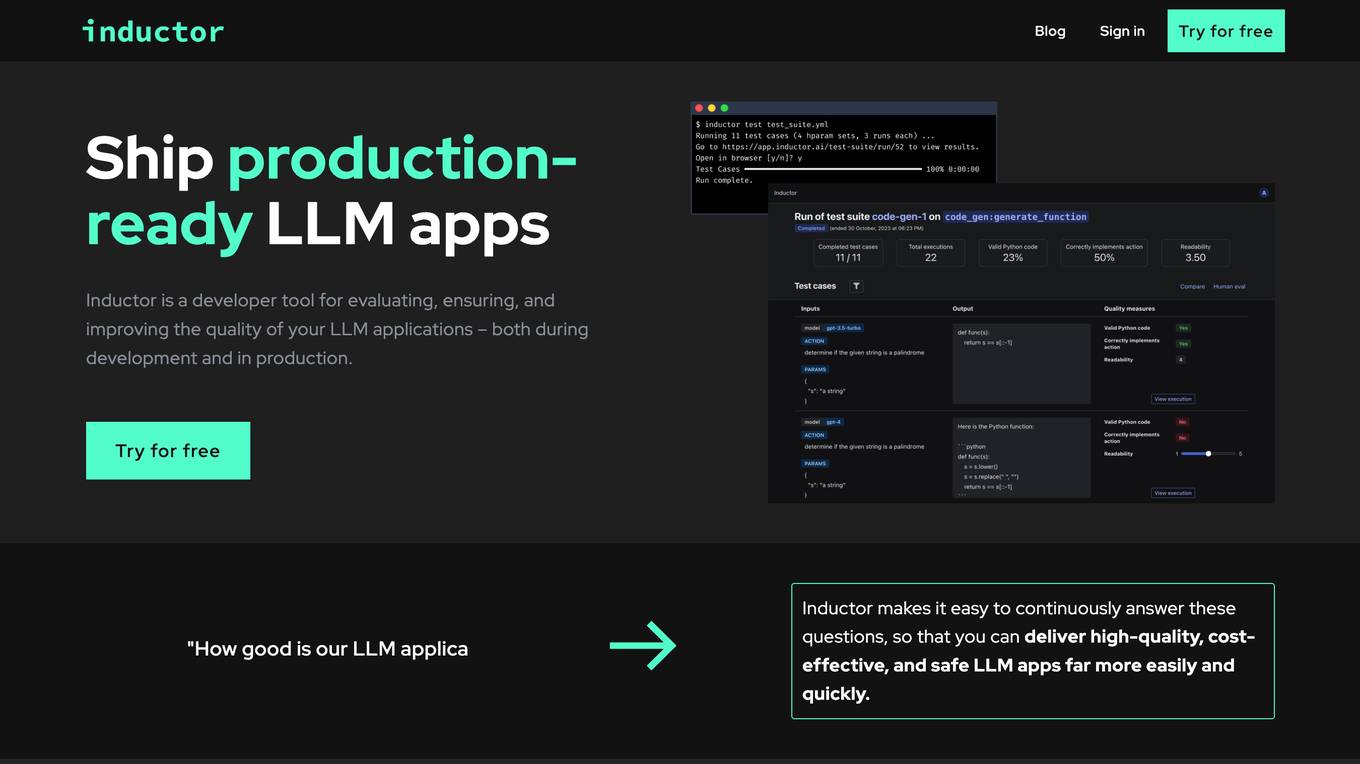

Inductor

Inductor is a developer tool for evaluating, ensuring, and improving the quality of LLM applications, both during development and in production. It provides a workflow for continuous testing and evaluation as you develop, so that you always know your LLM app’s quality. You can systematically improve quality and cost-effectiveness by understanding your LLM app’s behavior and quickly testing different app variants, and you can rigorously assess that behavior before you deploy. Once live, you can monitor traffic to detect and resolve issues, analyze usage, and feed the findings back into your development process. Inductor also makes it easy for engineers and other roles to collaborate: critical human feedback from non-engineering stakeholders (e.g., PM, UX, or subject matter experts) helps ensure that your LLM app is user-ready.

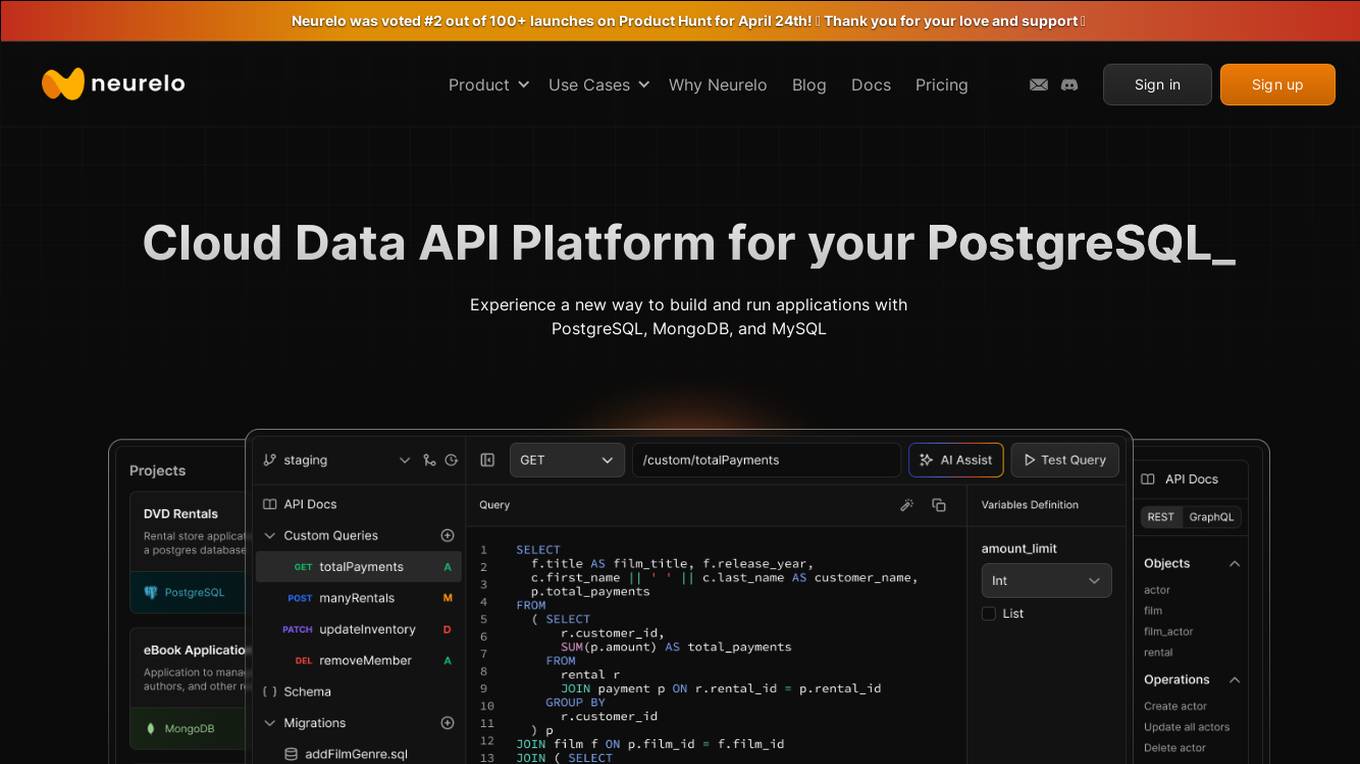

Neurelo

Neurelo is a cloud API platform that offers services for PostgreSQL, MongoDB, and MySQL. It provides features such as auto-generated APIs, custom query APIs with AI assistance, query observability, schema as code, and the ability to build full-stack applications in minutes. Neurelo aims to empower developers by simplifying database programming complexities and enhancing productivity. The platform leverages the power of cloud technology, APIs, and AI to offer a seamless and efficient way to build and run applications.

Weaviate

Weaviate is an AI-native database that developers love. It offers a feature-rich vector database trusted by AI innovators, empowering AI-native builders to create AI-powered search, retrieval augmented generation, and agentic AI applications. Weaviate simplifies the process of building production-ready AI applications by providing seamless model integration, pre-built database agents, and language-agnostic SDKs for easy development. With billion-scale architecture and enterprise-ready deployment options, Weaviate enables developers to scale seamlessly, deploy anywhere, and meet enterprise requirements. The platform is designed to help AI builders write less custom code, optimize costs, and build AI-native apps faster.

Meibel

Meibel is an AI platform that empowers product and engineering leaders to accelerate their generative AI vision from pilot to production with explainable AI. The platform provides complete visibility, control, and confidence to quickly build and deploy production-ready AI systems that deliver measurable business value. Meibel offers intuitive tools for AI development, seamless data integration, enterprise-ready security, measurable impact tracking, and a future-proof platform that evolves alongside AI technology.

Ongil

Ongil is an AI solutions provider that helps industries turn their expertise into AI solutions in a matter of weeks. By leveraging domain-optimized components, Ongil claims to accelerate AI development by 10x, enabling businesses to deploy production-ready AI solutions. The platform offers tailored solutions for industry challenges such as personalized portfolio optimization, emissions estimation, and supply chain intelligence. Ongil's approach combines industry-specific components to create maintainable and scalable AI systems for enterprise production environments.

PoplarML

PoplarML is a platform that enables the deployment of production-ready, scalable ML systems with minimal engineering effort. It offers one-click deploys, real-time inference, and framework-agnostic support. With PoplarML, users can deploy ML models to a fleet of GPUs using a CLI tool and invoke them through a REST API endpoint. The platform supports TensorFlow, PyTorch, and JAX models.
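As a rough illustration of invoking a deployed model through a REST endpoint, the Python sketch below builds a JSON POST request with the standard library. The endpoint URL, the `Authorization` header, and the `inputs` payload field are placeholders assumed for illustration; they are not taken from PoplarML's documentation, so check the actual API reference before relying on them.

```python
import json
import urllib.request

# Hypothetical endpoint and key: placeholders, not real PoplarML values.
ENDPOINT_URL = "https://example.invalid/v1/models/my-model/infer"
API_KEY = "YOUR_API_KEY"


def build_inference_request(inputs, url=ENDPOINT_URL, api_key=API_KEY):
    """Build (but do not send) a JSON POST request for a model endpoint."""
    body = json.dumps({"inputs": inputs}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def invoke(request, timeout=30):
    """Send the request and decode the JSON response (needs a live endpoint)."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


# Building the request is safe offline; only invoke() touches the network.
request = build_inference_request([[0.1, 0.2, 0.3]])
print(request.get_method(), request.full_url)
```

Keeping request construction separate from sending makes the payload easy to inspect or unit-test before pointing the code at a real endpoint.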

Amplication

Amplication is an AI-powered platform for .NET and Node.js app development that bills itself as the world's fastest way to build backend services. It provides customizable, production-ready backend services without vendor lock-in. Users can define data models, extend and customize with plugins, generate boilerplate code, and modify the generated code freely. The platform supports role-based access control, microservices architecture, continuous Git sync, and automated deployment. Amplication is SOC 2 certified, supporting data security and compliance requirements.

Retell AI

Retell AI is a powerful voice agent platform that enables users to build, test, deploy, and monitor AI voice agents at scale. It offers features such as call transfer, appointment booking, IVR navigation, batch calling, and post-call analysis. Retell AI provides advantages like verified phone numbers, branded call ID, custom analysis, and case studies. However, some disadvantages include the need for initial setup by an engineer, ongoing maintenance, and potential concurrent call limitations. The application is suitable for various industries and use cases, with multilingual support and compliance with industry standards.

Myple

Myple is a platform for building, scaling, and securing AI applications. It provides production-ready AI solutions tailored to individual needs. With support for multiple languages and frameworks, Myple simplifies AI integration through open-source SDKs. The platform features a clean interface, keyboard shortcuts for efficient navigation, and templates to kickstart AI projects. Myple also offers AI-powered tools such as a RAG chatbot for documentation, a Gmail agent for email notifications, and AskFeynman for physics-related queries. Users can connect their favorite tools and services without any coding. Joining the beta program grants early access to new features and priority issue resolution.

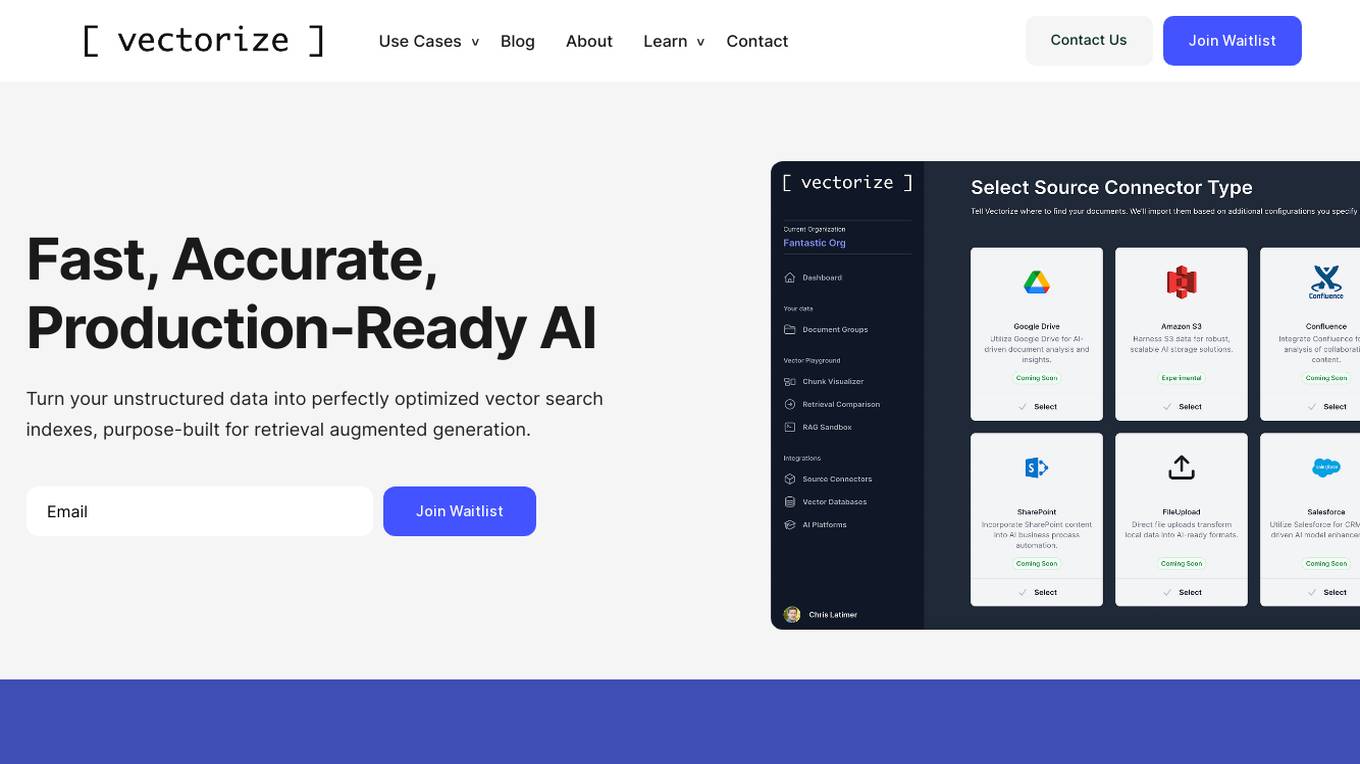

Vectorize

Vectorize is a fast, accurate, and production-ready AI tool that helps users turn unstructured data into optimized vector search indexes. It leverages Large Language Models (LLMs) to create copilots and enhance customer experiences by extracting natural language from various sources. With built-in support for top AI platforms and a variety of embedding models and chunking strategies, Vectorize enables users to deploy real-time vector pipelines for accurate search results. The tool also offers out-of-the-box connectors to popular knowledge repositories and collaboration platforms, making it easy to transform knowledge into AI-generated content.
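To make "chunking strategies" concrete, here is a minimal Python sketch of one common strategy, fixed-size chunks with overlap, applied before embedding. It is a generic illustration, not Vectorize's implementation; the function name `chunk_text` and its parameters are hypothetical, and it splits on characters rather than tokens for simplicity.

```python
def chunk_text(text, chunk_size=200, overlap=50):
    """Split text into fixed-size character chunks that overlap.

    Overlap keeps context that straddles a chunk boundary available
    in both neighbouring chunks.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")

    step = chunk_size - overlap  # how far each new chunk advances
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        # Stop once a chunk has reached the end of the text.
        if start + chunk_size >= len(text):
            break
    return chunks


sample = "abcdefghij"
print(chunk_text(sample, chunk_size=4, overlap=2))
# prints ['abcd', 'cdef', 'efgh', 'ghij']
```

Larger overlaps preserve more context across boundaries at the cost of more chunks to embed and store; the right trade-off depends on the data and the embedding model.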

BackX

BackX is an AI development platform that empowers developers to quickly ship backends across various use cases, environments, and scales. It offers unparalleled accuracy, flexibility, and efficiency by overcoming the limitations of traditional AI-assisted programming. With features like one-click production-grade code, context-aware consistent code output, versioned artifacts, instant deploy, and a suite of AI-powered dev tools, BackX revolutionizes the backend development process. Developers can effortlessly design and manage databases, generate CRUD operations, implement complex business logic, and deploy serverless applications with ease. The platform aims to streamline development processes, increase cost-effectiveness, and provide more accurate outputs than traditional methods.

Dify

Dify is an open-source platform for building AI applications that combines Backend-as-a-Service and LLMOps to streamline the development of generative AI solutions. It integrates support for mainstream LLMs, an intuitive Prompt orchestration interface, high-quality RAG engines, a flexible AI Agent framework, and easy-to-use interfaces and APIs. Dify allows users to skip complexity and focus on creating innovative AI applications that solve real-world problems. It offers a comprehensive, production-ready solution with a user-friendly interface.

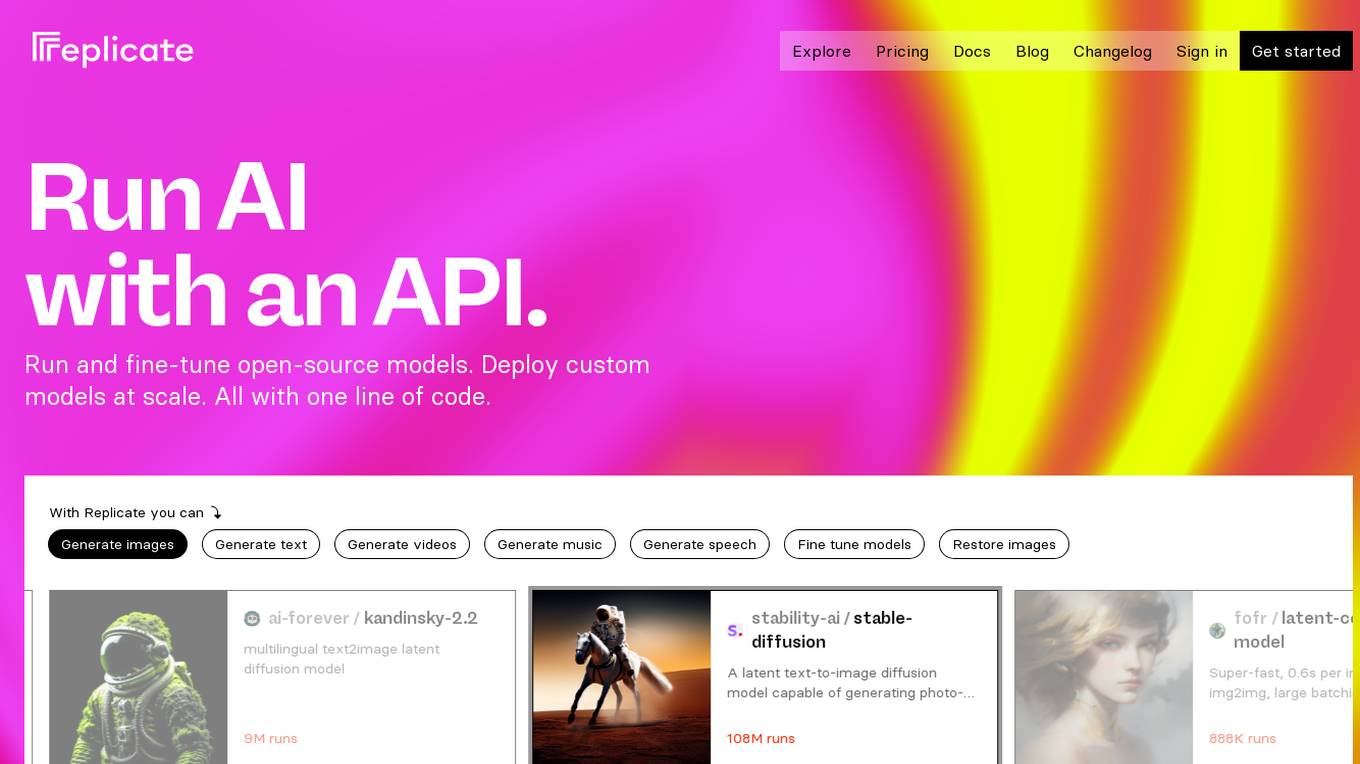

Replicate

Replicate is an AI tool that allows users to run and fine-tune open-source models, deploy custom models at scale, and generate images, text, videos, music, and speech with just one line of code. It provides a platform for the community to contribute and explore thousands of production-ready AI models, enabling users to push the boundaries of AI beyond academic papers and demos. With features like fine-tuning models, deploying custom models, and scaling on Replicate, users can easily create and deploy AI solutions for various tasks.

Deploya

Deploya is an AI-powered platform that allows users to create production-ready websites in seconds. By leveraging cutting-edge AI models, Deploya optimizes websites for performance and user experience. Users can simply describe their requirements, and Deploya will generate a website accordingly. The platform also offers features like automatic image selection, quick publishing, and flexible pricing options. Deploya stands out for its AI-driven web design capabilities and efficient website deployment process.

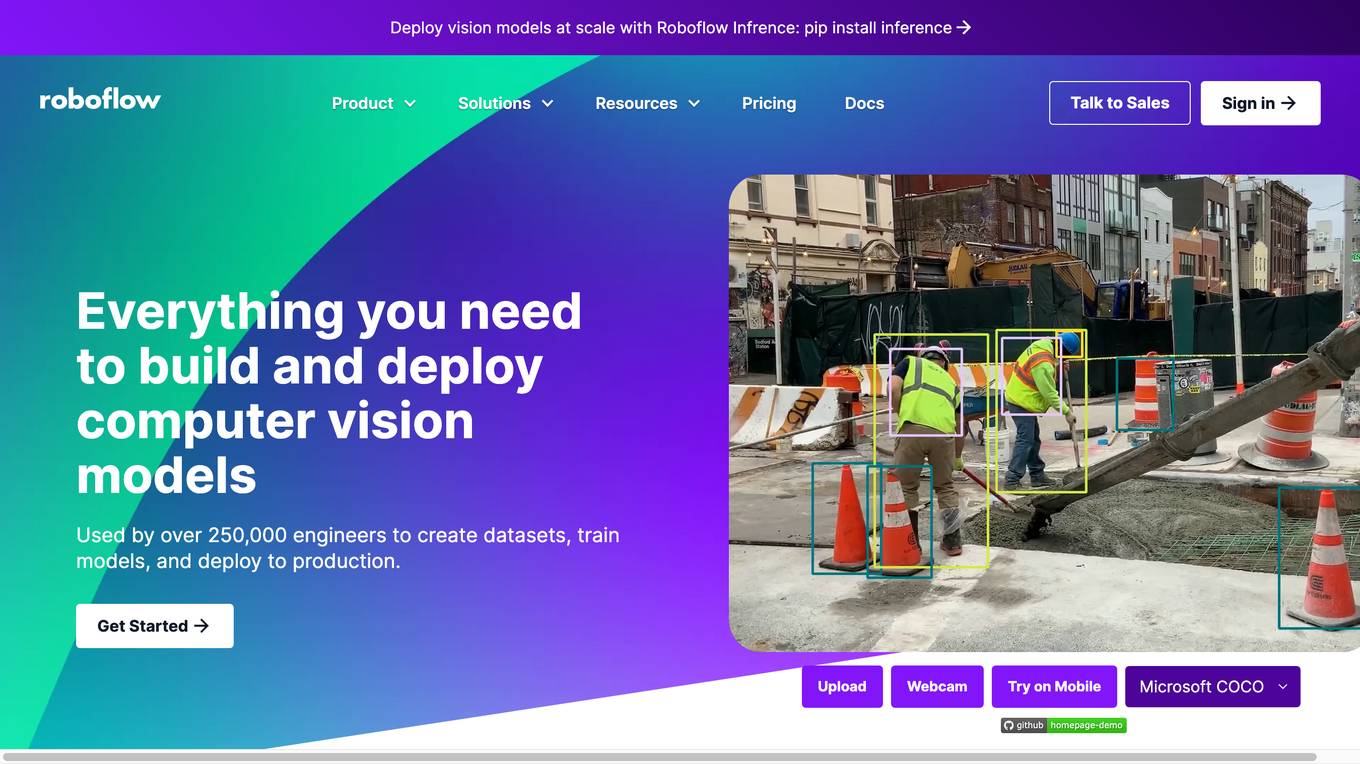

Roboflow

Roboflow is a platform that provides tools for building and deploying computer vision models. It offers a range of features, including data annotation, model training, and deployment. Roboflow is used by over 250,000 engineers to create datasets, train models, and deploy to production.

Salad

Salad is a distributed GPU cloud platform that offers fully managed, massively scalable services for AI applications. It advertises the lowest-priced AI transcription on the market and supports workloads such as image generation, voice AI, computer vision, data collection, and batch processing. Salad uses consumer GPUs to deliver cost-effective AI/ML inference at scale. The platform is trusted by hundreds of machine learning and data science teams for its affordability, scalability, and ease of deployment.

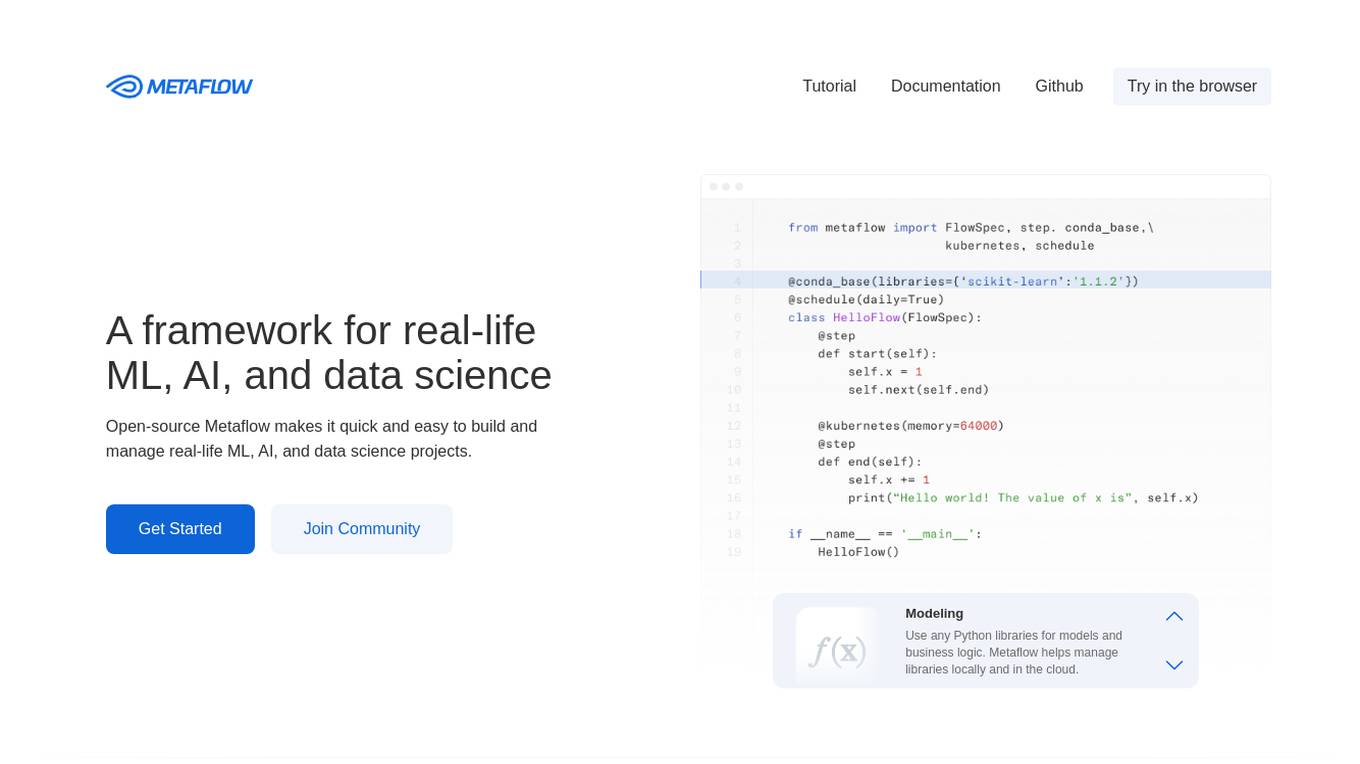

Metaflow

Metaflow is an open-source framework for building and managing real-life ML, AI, and data science projects. It makes it easy to use any Python libraries for models and business logic, deploy workflows to production with a single command, track and store variables inside the flow automatically for easy experiment tracking and debugging, and create robust workflows in plain Python. Metaflow is used by hundreds of companies, including Netflix, 23andMe, and Realtor.com.

Fireworks

Fireworks is a generative AI platform for product innovation. It gives developers access to leading generative AI models with an emphasis on fast inference. With Fireworks, developers can build and deploy AI-powered applications quickly and easily.

JFrog ML

JFrog ML is an AI platform designed to streamline AI development from prototype to production. It offers a unified MLOps platform to build, train, deploy, and manage AI workflows at scale. With features like Feature Store, LLMOps, and model monitoring, JFrog ML empowers AI teams to collaborate efficiently and optimize AI & ML models in production.

0 - Open Source AI Tools

20 - OpenAI GPTs

Frontend Developer

AI front-end developer, expert in React, Next.js, Vue, Svelte, TypeScript, Gatsby, Angular, HTML, CSS, and JavaScript, and advanced in Flexbox, Tailwind, and Material Design. Mentors junior, intermediate, and senior front-end developers in coding and debugging. Let’s code, build, and deploy a SaaS app.

Azure Arc Expert

Azure Arc expert providing guidance on architecture, deployment, and management.

Instructor GCP ML

Trainer for the GCP ML Engineer certification, with detailed answers and explanations.

Docker and Docker Swarm Assistant

Expert in Docker and Docker Swarm solutions and troubleshooting.

Cloudwise Consultant

Expert in cloud-native solutions, provides tailored tech advice and cost estimates.