Best AI tools for< Observability >

Infographic

20 - AI tool Sites

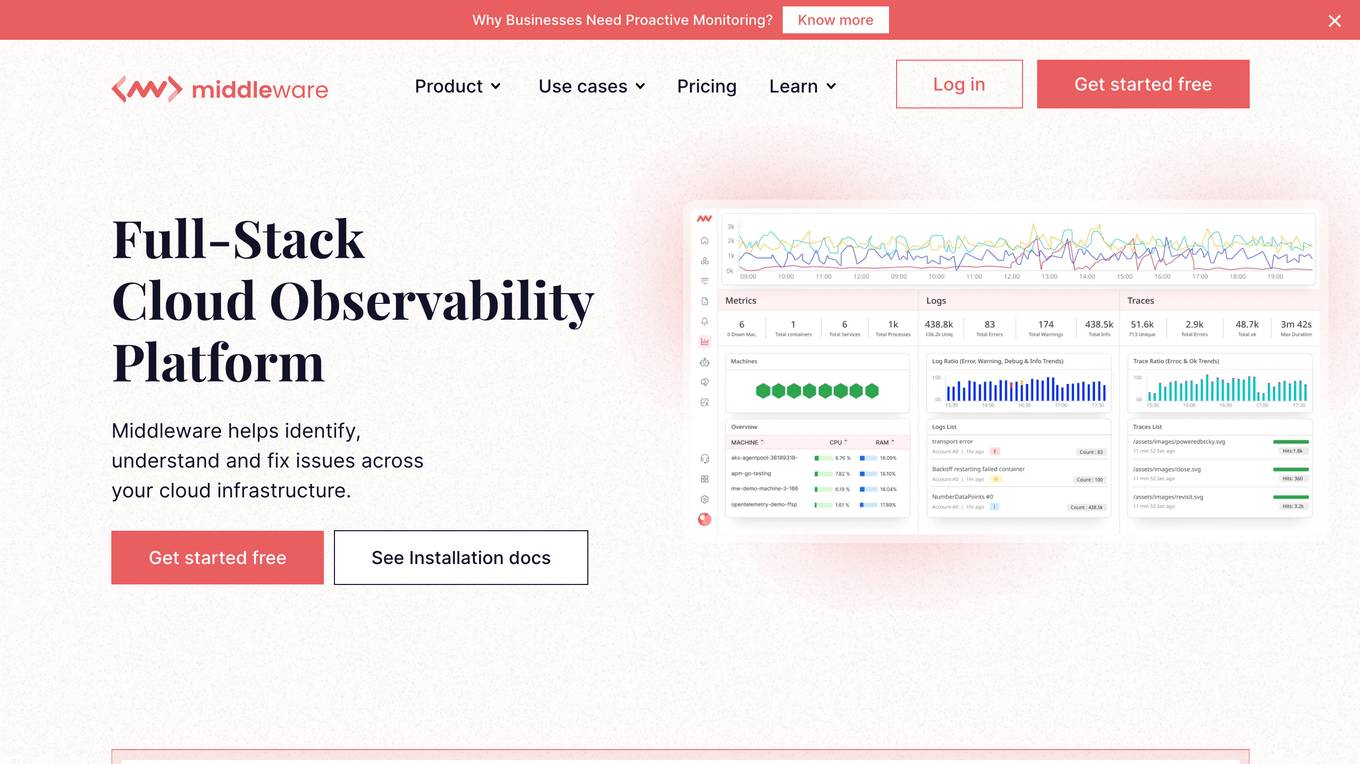

Cloud Observability Middleware

The website provides Full-Stack Cloud Observability services with a focus on Middleware. It offers comprehensive monitoring and analysis tools to help businesses optimize their cloud infrastructure performance. The platform enables users to gain insights into their middleware applications, identify bottlenecks, and improve overall system efficiency.

Eyer

Eyer is a headless AIOps platform that offers automated observability and actionable insights through AI-powered anomaly detection. It allows users to integrate with various systems using Open APIs and provides fast time-to-value by automating manual tasks and improving IT operation efficiency. Eyer supports integrations with tools like Boomi, Grafana, BizTalk, and Influx Telegraf, enabling users to monitor and manage their systems effectively.

OpenLIT

OpenLIT is an AI application designed as an Observability tool for GenAI and LLM applications. It empowers model understanding and data visualization through an interactive Learning Interpretability Tool. With OpenTelemetry-native support, it seamlessly integrates into projects, offering features like fine-tuning performance, real-time data streaming, low latency processing, and visualizing data insights. The tool simplifies monitoring with easy installation and light/dark mode options, connecting to popular observability platforms for data export. Committed to OpenTelemetry community standards, OpenLIT provides valuable insights to enhance application performance and reliability.

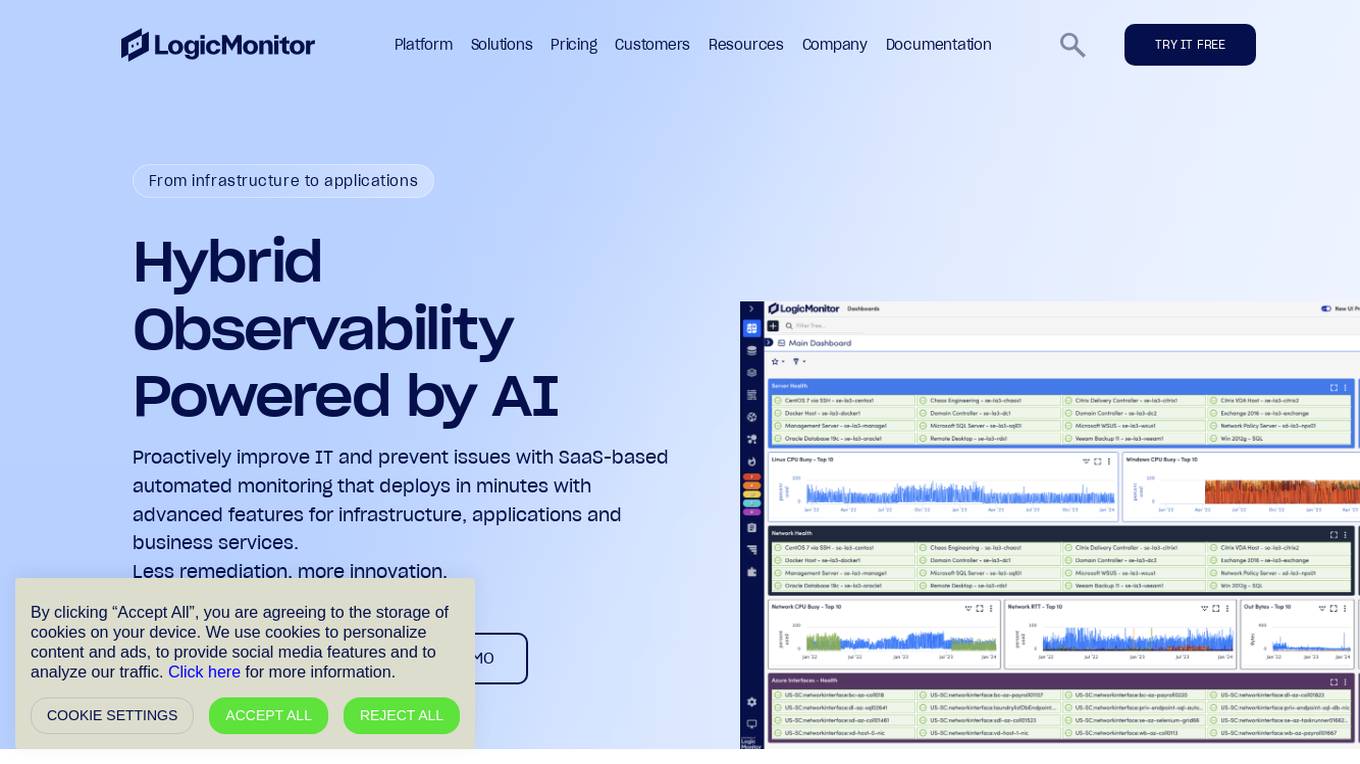

LogicMonitor

LogicMonitor is a cloud-based infrastructure monitoring platform that provides real-time insights and automation for comprehensive, seamless monitoring with agentless architecture. It offers a unified platform for monitoring infrastructure, applications, and business services, with advanced features for hybrid observability. LogicMonitor's AI-driven capabilities simplify complex IT ecosystems, accelerate incident response, and empower organizations to thrive in the digital landscape.

LogicMonitor

LogicMonitor is a cloud-based infrastructure monitoring platform that provides real-time insights and automation for comprehensive, seamless monitoring with agentless architecture. It offers a wide range of features including infrastructure monitoring, network monitoring, server monitoring, remote monitoring, virtual machine monitoring, SD-WAN monitoring, database monitoring, storage monitoring, configuration monitoring, cloud monitoring, container monitoring, AWS Monitoring, GCP Monitoring, Azure Monitoring, digital experience SaaS monitoring, website monitoring, APM, AIOPS, Dexda Integrations, security dashboards, and platform demo logs. LogicMonitor's AI-driven hybrid observability helps organizations simplify complex IT ecosystems, accelerate incident response, and thrive in the digital landscape.

Censius

Censius is an AI Observability Platform for Enterprise ML Teams. It provides end-to-end visibility of structured and unstructured production models, enabling proactive model management and continuous delivery of reliable ML. Key features include model monitoring, explainability, and analytics.

Arize AI

Arize AI is an AI observability tool designed to monitor and troubleshoot AI models in production. It provides configurable and sophisticated observability features to ensure the performance and reliability of next-gen AI stacks. With a focus on ML observability, Arize offers automated setup, a simple API, and a lightweight package for tracking model performance over time. The tool is trusted by top companies for its ability to surface insights, simplify issue root causing, and provide a dedicated customer success manager. Arize is battle-hardened for real-world scenarios, offering unparalleled performance, scalability, security, and compliance with industry standards like SOC 2 Type II and HIPAA.

Evidently AI

Evidently AI is an open-source machine learning (ML) monitoring and observability platform that helps data scientists and ML engineers evaluate, test, and monitor ML models from validation to production. It provides a centralized hub for ML in production, including data quality monitoring, data drift monitoring, ML model performance monitoring, and NLP and LLM monitoring. Evidently AI's features include customizable reports, structured checks for data and models, and a Python library for ML monitoring. It is designed to be easy to use, with a simple setup process and a user-friendly interface. Evidently AI is used by over 2,500 data scientists and ML engineers worldwide, and it has been featured in publications such as Forbes, VentureBeat, and TechCrunch.

Elastic

Elastic is a Search AI Company that offers a platform for building tailored experiences, search and analytics, data ingestion, visualization, and generative AI solutions. The company provides services like Elastic Cloud for real-time insights, Elastic AI Assistant for retrieval and generation, and Search AI Lake for faster integration with LLMs. Elastic aims to help businesses scale with low-latency search AI and accelerate problem resolution with observability powered by advanced ML and analytics.

Langtrace AI

Langtrace AI is an open-source observability tool powered by Scale3 Labs that helps monitor, evaluate, and improve LLM (Large Language Model) applications. It collects and analyzes traces and metrics to provide insights into the ML pipeline, ensuring security through SOC 2 Type II certification. Langtrace supports popular LLMs, frameworks, and vector databases, offering end-to-end observability and the ability to build and deploy AI applications with confidence.

Vocera

Vocera is an AI voice agent testing tool that allows users to test and monitor voice AI agents efficiently. It enables users to launch voice agents in minutes, ensuring a seamless conversational experience. With features like testing against AI-generated datasets, simulating scenarios, and monitoring AI performance, Vocera helps in evaluating and improving voice agent interactions. The tool provides real-time insights, detailed logs, and trend analysis for optimal performance, along with instant notifications for errors and failures. Vocera is designed to work for everyone, offering an intuitive dashboard and data-driven decision-making for continuous improvement.

New Relic

New Relic is an AI monitoring platform that offers an all-in-one observability solution for monitoring, debugging, and improving the entire technology stack. With over 30 capabilities and 750+ integrations, New Relic provides the power of AI to help users gain insights and optimize performance across various aspects of their infrastructure, applications, and digital experiences.

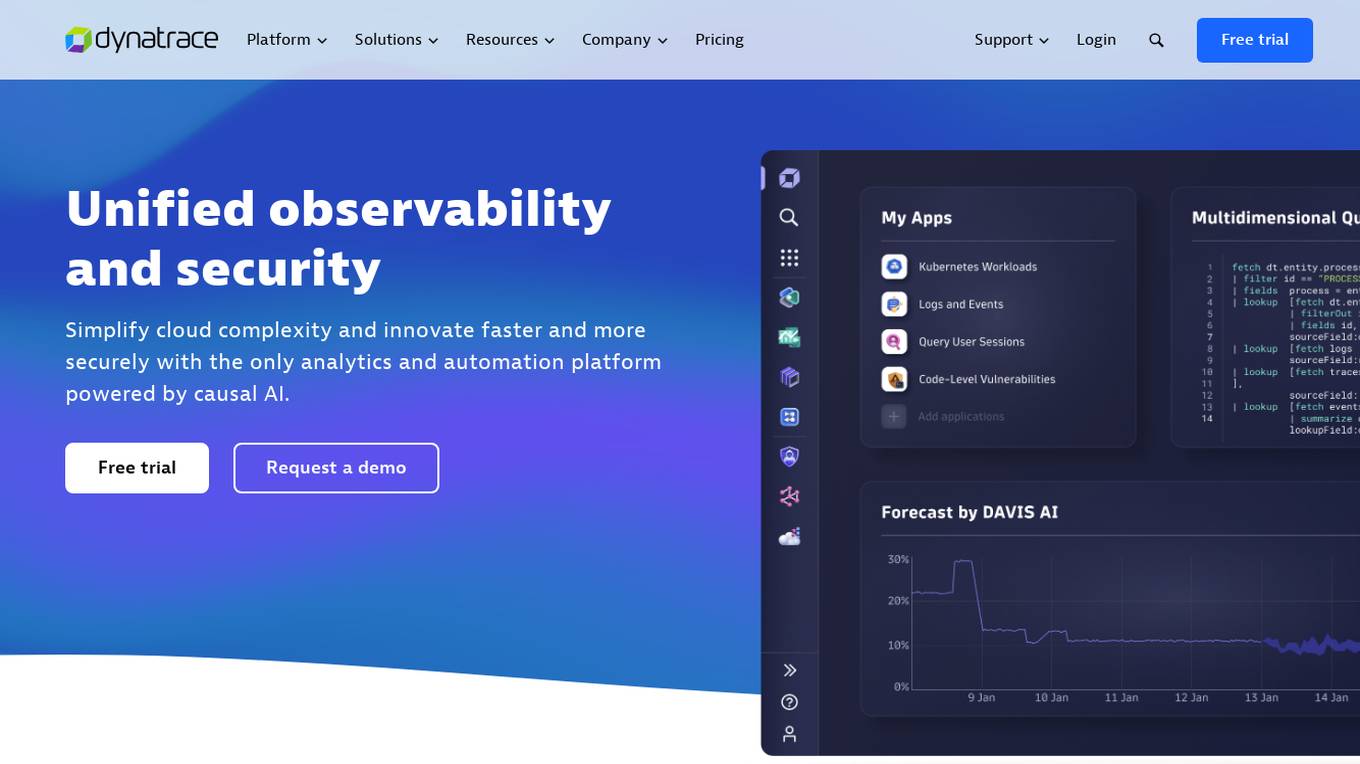

Dynatrace

Dynatrace is a modern cloud platform that offers unified observability and security solutions to simplify cloud complexity and drive innovation. Powered by causal AI, Dynatrace provides analytics and automation capabilities to help businesses monitor and secure their full stack, solve digital challenges, and make better business decisions in real-time. Trusted by thousands of global brands, Dynatrace empowers teams to deliver flawless digital experiences, drive intelligent cloud ecosystem automations, and solve any use-case with custom solutions.

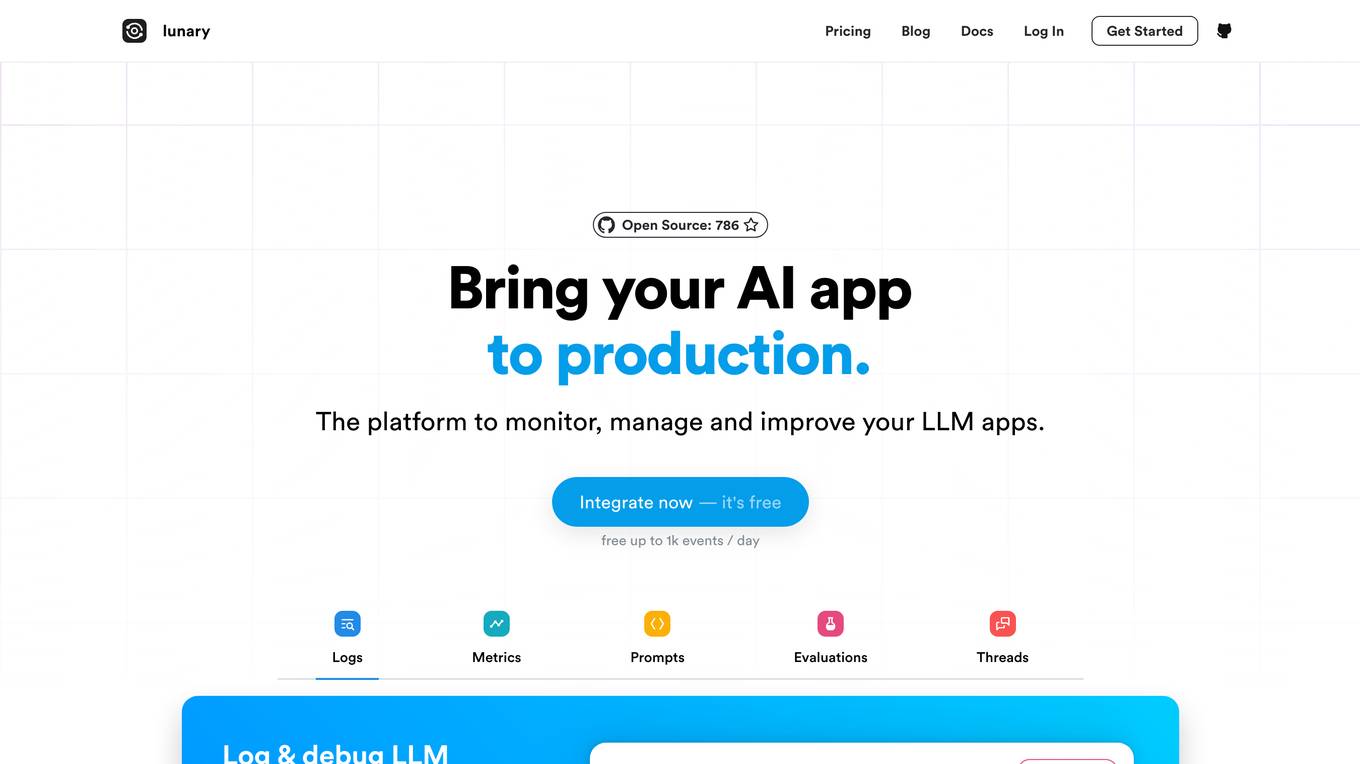

Lunary

Lunary is an AI developer platform designed to bring AI applications to production. It offers a comprehensive set of tools to manage, improve, and protect LLM apps. With features like Logs, Metrics, Prompts, Evaluations, and Threads, Lunary empowers users to monitor and optimize their AI agents effectively. The platform supports tasks such as tracing errors, labeling data for fine-tuning, optimizing costs, running benchmarks, and testing open-source models. Lunary also facilitates collaboration with non-technical teammates through features like A/B testing, versioning, and clean source-code management.

Arize AI

Arize AI is an AI Observability & LLM Evaluation Platform that helps you monitor, troubleshoot, and evaluate your machine learning models. With Arize, you can catch model issues, troubleshoot root causes, and continuously improve performance. Arize is used by top AI companies to surface, resolve, and improve their models.

Fiddler AI

Fiddler AI is an AI Observability platform that provides tools for monitoring, explaining, and improving the performance of AI models. It offers a range of capabilities, including explainable AI, NLP and CV model monitoring, LLMOps, and security features. Fiddler AI helps businesses to build and deploy high-performing AI solutions at scale.

Hamming

Hamming is an AI tool designed to help automate voice agent testing and optimization. It offers features such as prompt optimization, automated voice testing, monitoring, and more. The platform allows users to test AI voice agents against simulated users, create optimized prompts, actively monitor AI app usage, and simulate customer calls to identify system gaps. Hamming is trusted by AI-forward enterprises and is built for inbound and outbound agents, including AI appointment scheduling, AI drive-through, AI customer support, AI phone follow-ups, AI personal assistant, and AI coaching and tutoring.

Radicalbit

Radicalbit is an MLOps and AI Observability platform that helps businesses deploy, serve, observe, and explain their AI models. It provides a range of features to help data teams maintain full control over the entire data lifecycle, including real-time data exploration, outlier and drift detection, and model monitoring in production. Radicalbit can be seamlessly integrated into any ML stack, whether SaaS or on-prem, and can be used to run AI applications in minutes.

DQLabs

DQLabs is a modern data quality platform that leverages observability to deliver reliable and accurate data for better business outcomes. It combines the power of Data Quality and Data Observability to enable data producers, consumers, and leaders to achieve decentralized data ownership and turn data into action faster, easier, and more collaboratively. The platform offers features such as data observability, remediation-centric data relevance, decentralized data ownership, enhanced data collaboration, and AI/ML-enabled semantic data discovery.

Treblle

Treblle is an End to End APIOps Platform that helps engineering and product teams build, ship, and understand their REST APIs in one single place. It offers features such as API Observability, API Documentation, API Governance, API Security, and API Analytics. With a focus on empowering API producers and consumers, Treblle provides actionable data in real-time, customizable dashboards, and automated API development. The platform aims to improve API release times, enhance developer experience, and ensure API quality and security.

0 - Open Source Tools

3 - OpenAI Gpts

DataKitchen DataOps and Data Observability GPT

A specialist in DataOps and Data Observability, aiding in data management and monitoring.