Best AI tools for Data Quality Engineer

20 - AI tool Sites

Welo Data

Welo Data is an AI tool that specializes in AI benchmarking, model assessment, and high-quality training datasets for AI models. The platform offers services such as supervised fine-tuning, reinforcement learning from human feedback, data generation, expert evaluations, and a data quality framework to support the development of world-class AI models. With over 27 years of experience, Welo Data combines language expertise and AI data to deliver training and performance evaluation solutions.

Lightup

Lightup is a cloud data quality monitoring tool with AI-powered anomaly detection, incident alerts, and data remediation capabilities for modern enterprise data stacks. It specializes in helping large organizations implement successful and sustainable data quality programs quickly and easily. Lightup's pushdown architecture allows for monitoring data content at massive scale without moving or copying data, providing extreme scalability and optimal automation. The tool empowers business users with democratized data quality checks and enables automatic fixing of bad data at enterprise scale.

DQLabs

DQLabs is a modern data quality platform that leverages observability to deliver reliable and accurate data for better business outcomes. It combines the power of Data Quality and Data Observability to enable data producers, consumers, and leaders to achieve decentralized data ownership and turn data into action faster, easier, and more collaboratively. The platform offers features such as data observability, remediation-centric data relevance, decentralized data ownership, enhanced data collaboration, and AI/ML-enabled semantic data discovery.

Dot Group Data Advisory

Dot Group is an AI-powered data advisory and solutions platform that specializes in effective data management. It offers services to help businesses maximize the potential of their data estate, turning complex challenges into profitable opportunities using AI technologies. With a focus on data strategy, data engineering, and data transport, Dot Group provides solutions to drive better profitability for its clients.

One Data

One Data is an AI-powered data product builder that offers a comprehensive solution for building, managing, and sharing data products. It bridges the gap between IT and business by providing AI-powered workflows, lifecycle management, data quality assurance, and data governance features. The platform enables users to easily create, access, and share data products with automated processes and quality alerts. One Data is trusted by enterprises and aims to streamline data product management and accessibility through Data Mesh or Data Fabric approaches, enhancing efficiency in logistics and supply chains. The application is designed to accelerate business impact with reliable data products and support cost reduction initiatives with advanced analytics and collaboration for innovative business models.

Lilac

Lilac is an AI tool designed to enhance data quality and exploration for AI applications. It offers features such as data search, quantification, editing, clustering, semantic search, field comparison, and fuzzy-concept search. Lilac enables users to accelerate dataset computations and transformations, making it a valuable asset for data scientists and AI practitioners. The tool is trusted by Alignment Lab and is recommended for working with LLM datasets.

Industrial Engineer AI

Industrial Engineer AI is an advanced AI tool designed to assist industrial engineers in optimizing processes and improving efficiency in manufacturing environments. The application utilizes machine learning algorithms to analyze data, identify bottlenecks, and suggest solutions for streamlining operations. With its user-friendly interface and powerful capabilities, Industrial Engineer AI is a valuable tool for professionals looking to enhance productivity and reduce costs in industrial settings.

Coginiti

Coginiti is a collaborative analytics platform and toolset designed for SQL developers, data scientists, engineers, and analysts. It offers capabilities such as an AI assistant, data mesh support, database and object store support, powerful query and analysis, and sharing and reuse of curated assets. Coginiti empowers teams and organizations to manage collaborative practices, improve data efficiency, and deliver trusted data products faster. The platform integrates modular analytic development, collaborative versioned teamwork, and a data quality framework to enhance productivity and ensure data reliability. Coginiti also provides an AI-enabled virtual analytics advisor to boost team efficiency.

Seudo

Seudo is a data workflow automation platform that uses AI to help businesses automate their data processes. It provides a variety of features to help businesses with data integration, data cleansing, data transformation, and data analysis. Seudo is designed to be easy to use, even for businesses with no prior experience with AI. It offers a drag-and-drop interface that makes it easy to create and manage data workflows. Seudo also provides a variety of pre-built templates that can be used to get started quickly.

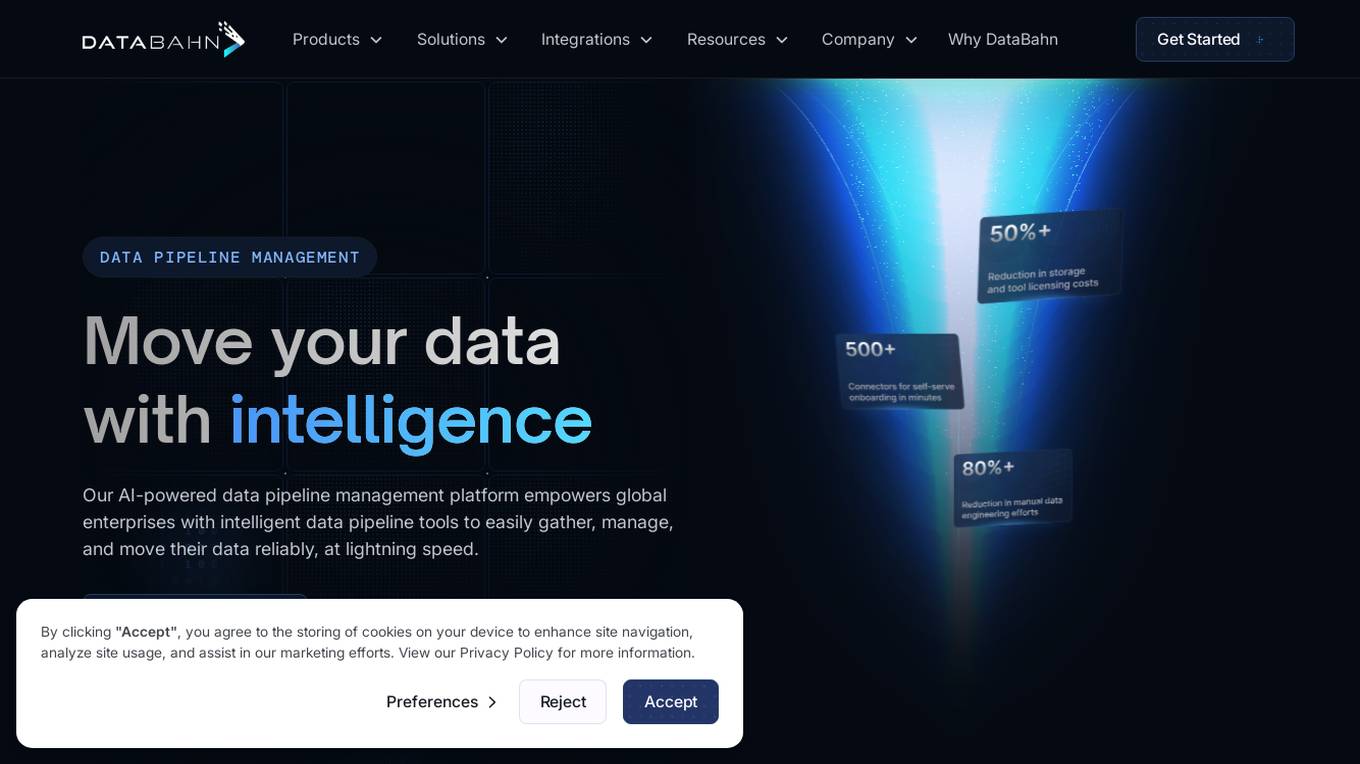

DataBahn

DataBahn is an AI-powered data pipeline management platform that empowers global enterprises with intelligent tools to gather, manage, and move data reliably and quickly. It offers a comprehensive solution for data integration, management, and optimization, helping users save costs and time. The platform ensures real-time insights, agility, and value by automating data processes and providing complete data ownership and governance. DataBahn is trusted by ambitious companies and partners worldwide for its efficiency and effectiveness in handling data workflows.

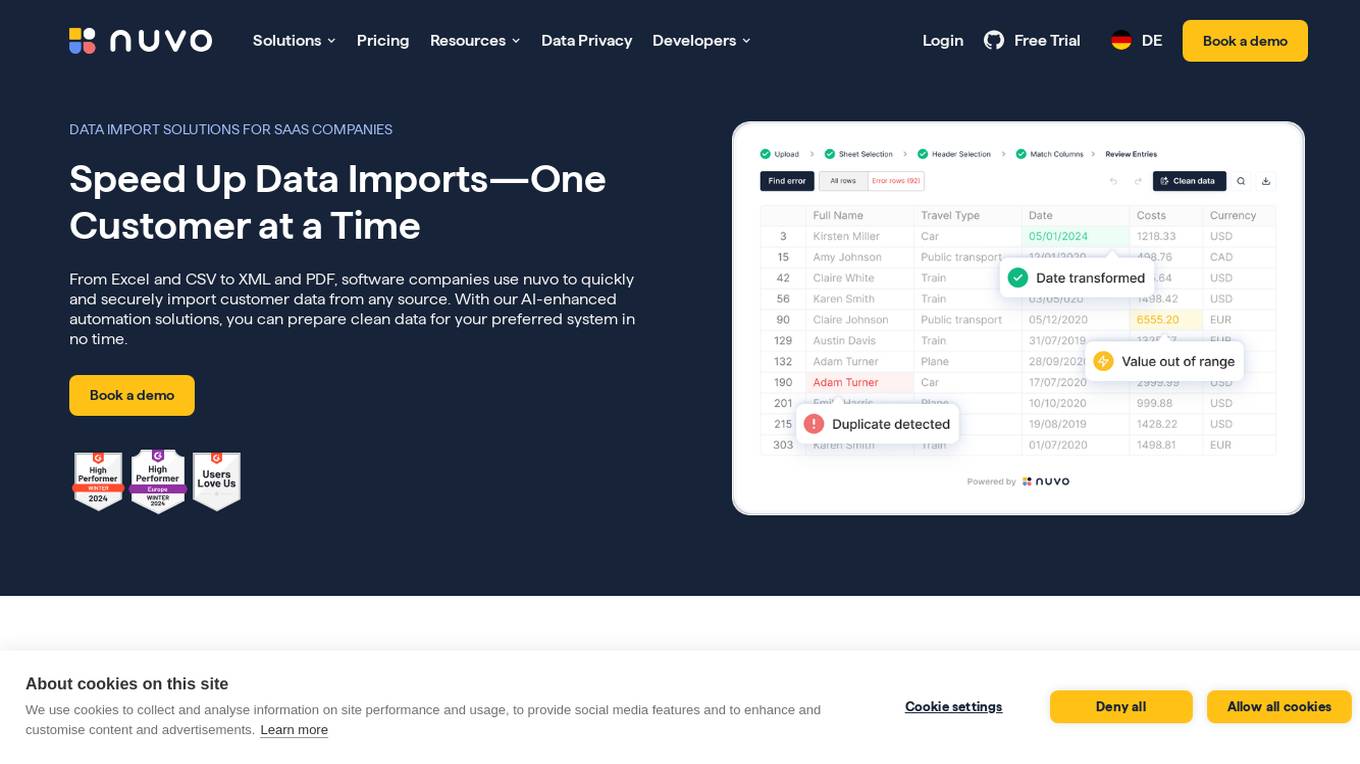

nuvo

nuvo is an AI-powered data import solution for software companies that offers fast, secure, and scalable imports. It provides tools like nuvo Data Importer SDK and nuvo Data Pipeline to streamline manual and recurring ETL data imports, enabling users to manage data imports independently. With AI-enhanced automation, nuvo helps prepare clean data for preferred systems quickly and efficiently, reducing manual effort and improving data quality. The platform allows users to upload unlimited data in various formats, match imported data to system schemas, clean and validate data, and import clean data into target systems with just a click.

Evidently AI

Evidently AI is an open-source machine learning (ML) monitoring and observability platform that helps data scientists and ML engineers evaluate, test, and monitor ML models from validation to production. It provides a centralized hub for ML in production, including data quality monitoring, data drift monitoring, ML model performance monitoring, and NLP and LLM monitoring. Evidently AI's features include customizable reports, structured checks for data and models, and a Python library for ML monitoring. It is designed to be easy to use, with a simple setup process and a user-friendly interface. Evidently AI is used by over 2,500 data scientists and ML engineers worldwide, and it has been featured in publications such as Forbes, VentureBeat, and TechCrunch.

ApplyPass

ApplyPass is an automated job search AI tool designed to help job seekers optimize their job application process. It offers features such as resume optimization, automated job applications, tracking progress, and personalized profile overhaul. ApplyPass aims to save time for job seekers by applying to hundreds of jobs across platforms, enhancing visibility, and increasing interview opportunities. With a focus on software engineers, ApplyPass combines custom machine learning algorithms and human review to ensure optimized resumes for better job prospects. The tool also provides additional services like resume writing, LinkedIn optimization, and interview preparation.

Datuum

Datuum is an AI-powered data onboarding solution that offers seamless integration for businesses. It simplifies the data onboarding process by automating manual tasks, generating code, and ensuring data accuracy with AI-driven validation. Datuum helps businesses achieve faster time to value, reduce costs, improve scalability, and enhance data quality and consistency.

Tektonic AI

Tektonic AI is an AI application that empowers businesses by providing AI agents to automate processes, make better decisions, and bridge data silos. It offers solutions to eliminate manual work, increase autonomy, streamline tasks, and close gaps between disconnected systems. The application is designed to enhance data quality, accelerate deal closures, optimize customer self-service, and ensure transparent operations. Tektonic AI is founded by industry veterans with expertise in AI, cloud, and enterprise software.

Datasaur

Datasaur is an advanced text and audio data labeling platform that offers customizable solutions for various industries such as LegalTech, Healthcare, Financial, Media, e-Commerce, and Government. It provides features like configurable annotation, quality control automation, and workforce management to enhance the efficiency of NLP and LLM projects. Datasaur prioritizes data security with military-grade practices and offers seamless integrations with AWS and other technologies. The platform aims to streamline the data labeling process, allowing engineers to focus on creating high-quality models.

Pieces

Pieces is an on-device AI coding assistant that boosts developer productivity by providing contextual understanding of the entire workflow. It offers features like leveraging real-time context, using advanced AI models, applying hyper-relevant context to conversations, deep integrations within tools, air-gapped security, and more. Pieces is designed to simplify coding processes, enhance code generation, and streamline developer workflows.

OptiSol

OptiSol is a global technology company offering digital transformation services to a diverse range of industries. With a team of over 400 professionals across 5 countries, OptiSol focuses on providing innovative IT solutions in areas such as AI, Cloud Computing, Digital Engineering, Quality Assurance, and Enterprise Services. The company prides itself on building strong partnerships with clients based on trust, transparency, and shared goals. OptiSol's services include AI & ML, Quality Engineering, Cloud Web Application, Gen AI Applications, Digital Engineering, Enterprise Solutions, User Experience Engineering, Mobile Application, and DevOps Automation.

Tricentis

Tricentis is an AI-powered testing tool that offers a comprehensive set of test automation capabilities to address various testing challenges. It provides end-to-end test automation solutions for a wide range of applications, including Salesforce, mobile testing, performance testing, and data integrity testing. Tricentis leverages advanced ML technologies to enable faster and smarter testing, ensuring quality at speed with reduced risk, time, and costs. The platform also offers continuous performance testing, change and data intelligence, and model-based, codeless test automation for mobile applications.

Anote

Anote is a human-centered AI company that provides a suite of products and services to help businesses improve their data quality and build better AI models. Anote's products include a data labeler, a private chatbot, a model inference API, and a lead generation tool. Anote's services include data annotation, model training, and consulting.

0 - Open Source Tools

20 - OpenAI GPTs

DataKitchen DataOps and Data Observability GPT

A specialist in DataOps and Data Observability, aiding in data management and monitoring.

DataQualityGuardian

A GPT-powered assistant specializing in data validation and quality checks for various datasets.

Data Governance Advisor

Ensures data accuracy, consistency, and security across the organization.

Missing Cluster Identification Program

I analyze and integrate missing clusters in data for coherent structuring.

Process Optimization Advisor

Improves operational efficiency by optimizing processes and reducing waste.

Personality AI Creator

I will create a quality data set for a personality AI. Just dive into each module by saying its name, and do so for all the modules. If you find it useful, share it with your friends.

PósEngenhariaDeMateriaisEMetalúrgicaBR

Specialist in Materials and Metallurgical Engineering

AquaAirAI

AquaAirAI is a specialized assistant that compares air and water quality across cities and regions, providing insightful reports and recommendations based on comprehensive environmental data analysis from Excel files.

Project Quality Assurance Advisor

Ensures project deliverables meet predetermined quality standards.

Job

Find your perfect job matches from 1M+ high quality, newly posted openings (local & remote) in tech effortlessly with Jobright AI Co-Pilot | One stop job search across major job boards

GPTValue

Compares the output quality of similar GPTs on the same question and identifies the most valuable one.

SEARCHLIGHT

Script Examples and Resource Center for Helping with LAMMPS Input Generation and High-quality Tutorials (SERCHLIGHT)

CDR Guru

Masters Unified Communications data across platforms like Cisco, Avaya, Mitel, and Microsoft Teams by orchestrating a team of expert agents and providing actionable solutions.