Best AI tools for Baker

20 - AI tool Sites

Inpulse.ai

Inpulse.ai is an AI platform that revolutionizes inventory management and supplier ordering for restaurant chains. It assists managers in making informed decisions by accurately forecasting sales, anticipating production needs, and optimizing food supplies. The platform provides real-time performance monitoring, automated production planning, and centralized data management to help restaurants improve their margins and reduce waste. Inpulse.ai is used by over 3,000 restaurants, food kiosks, and bakeries on a daily basis, offering a comprehensive solution to streamline operations and boost profitability.

RBG AI Drop #001

RBG AI Drop #001 is an AI tool that allows users to interact with a virtual version of Justice Ruth Bader Ginsburg. Users can ask her any YES/NO question and receive a response. The tool is designed as an experiment to engage users in a unique and interactive experience with AI technology.

Activeloop

Activeloop is an AI tool that offers Deep Lake, a database for AI solutions across various industries such as agriculture, audio processing, autonomous vehicles, robotics, biomedical and healthcare, generative AI, multimedia, safety, and security. The platform provides features like fast AI search, faster data preparation, serverless DB for code assistant, and more. Activeloop aims to streamline data processing and enhance AI development for businesses and researchers.

Comparables.ai

Comparables.ai is an AI-powered company and market intelligence platform designed for M&A professionals. It offers comprehensive data insights, valuation multiples, and market analysis to help users make informed decisions in investment banking, private equity, and corporate finance. The platform leverages AI technology to provide relevant company information, financial data, and M&A transaction history, enabling users to identify new investment targets, benchmark companies, and conduct market analysis efficiently.

Onnix AI

Onnix AI is a personalized AI co-pilot designed specifically for bankers, aiming to save teams time by providing accurate answers and deliverables quickly. It brings AI and powerful data science tools to the banking sector, offering features such as creating personalized slide decks, conducting Excel analysis, and querying data sources. Onnix AI caters to both senior and junior teams, enabling them to generate deeper insights and streamline their workflow efficiently.

Lama AI

Lama AI is an AI-powered platform designed to revolutionize business lending processes for banks and financial institutions. It offers advanced features, rapid configurability, and exceptional support to streamline loan origination, underwriting, and decision-making. By leveraging the power of AI, Lama AI enables banks to boost business growth potential, improve profitability, and enhance customer experience through contextual onboarding, decisioning workspace, expanded credit access, and pre-qualified applications. The platform also provides white-labeled solutions, API-first integration, high configurability, built-in AI models, and access to a network of bank lenders, all while ensuring bank-grade security and compliance standards.

DataSnack

DataSnack is a real-time, AI-driven due diligence platform that helps you make better decisions faster. With DataSnack, you can access a wealth of data and insights on companies, industries, and markets, all in one place. Our AI-powered platform analyzes data from a variety of sources, including news, social media, and financial filings, to provide you with the most up-to-date and relevant information.

Inven

Inven is an AI-powered company data platform that helps professionals in private equity, investment banking, business brokerage, consulting, and corporate development find companies faster and more efficiently. With Inven, users can access a database of over 23 million companies and 430 million contacts in over 160 countries. Inven's AI algorithms and NLP solutions analyze millions of data points from a wide range of sources to give users actionable insights on any niche.

Roic AI

Roic AI is an AI tool designed to provide users with essential financial data for analyzing companies. It offers comprehensive company summaries, 30+ years of financial statements, and earnings call transcripts in a single location. Users can access crucial information about popular companies like Apple Inc. and Microsoft Corporation through this platform.

CityFALCON

CityFALCON is a financial and business due diligence platform that provides a range of solutions for a wide audience, including retail investors, retail traders, daily business news readers, brokers, students, professors, academia, wealth managers, financial advisors, P2P crowdfunding, VC, PE, institutional investors, treasury, consultancy, legal, accounting, central banks, and regulatory agencies. The platform offers a variety of features and content, including a CityFALCON Score, watchlists, similar stories, grouping of news on charts, key headlines, sentiment, content translation, news from premium publications, insider transactions, official company filings, investor relations, ESG content, and support for multiple languages.

Revi

Revi is an AI-enabled deal origination platform designed for Private Equity (PE), Investment Banking (IB), corporate development, and consultants. It offers a sophisticated solution that leverages artificial intelligence to streamline market mapping efforts, track data from over 1,000 sources in real-time, and reduce manual research tasks by 85%. The platform allows users to create complex search queries in natural language, enabling precise identification of M&A targets with unparalleled accuracy.

Quill AI

Quill is an AI-powered SEC filing platform that allows users to extract key information from filings, answer questions about public investor materials, access historical financial data, and receive real-time SEC filings and earnings call transcripts. The platform leverages financially-tuned AI to provide accurate and up-to-date information, making it a valuable tool for analysts and professionals in the finance industry.

ArkiFi

ArkiFi is a revolutionary AI finance workflow automation tool that leverages Generative AI to provide deterministic output, ensuring trustworthy results without 'hallucination.' It empowers finance professionals to focus on strategic thinking by automating grind work, formatting, and debugging. The platform reimagines productivity by saving time and enabling cross-platform functionality for faster decision-making. ArkiFi is on a mission to disintermediate human labor in advanced finance and has garnered significant capital and partnerships with marquee financial institutions.

EarningsCall.ai

EarningsCall.ai is an AI-powered tool that provides stock earnings call summaries and insights, saving users hours of reading transcripts. It allows users to compare competitors, analyze trends, and generate their own insights. The tool is designed for business leaders, financial professionals, consultants, and advisors to track competitor earnings, assess market trends, and optimize investment strategies.

Hebbia

Hebbia is an AI platform designed for finance professionals to add the power of generative AI to their firms. It allows users to explore thousands of use cases in legal, credit advisory, corporate consulting, real estate, and asset management. Hebbia synthesizes information into actionable insights, systematizes research workflows, automates tasks like quarterly earnings review and legal document analysis, and is trusted by large global institutions for its enterprise security features.

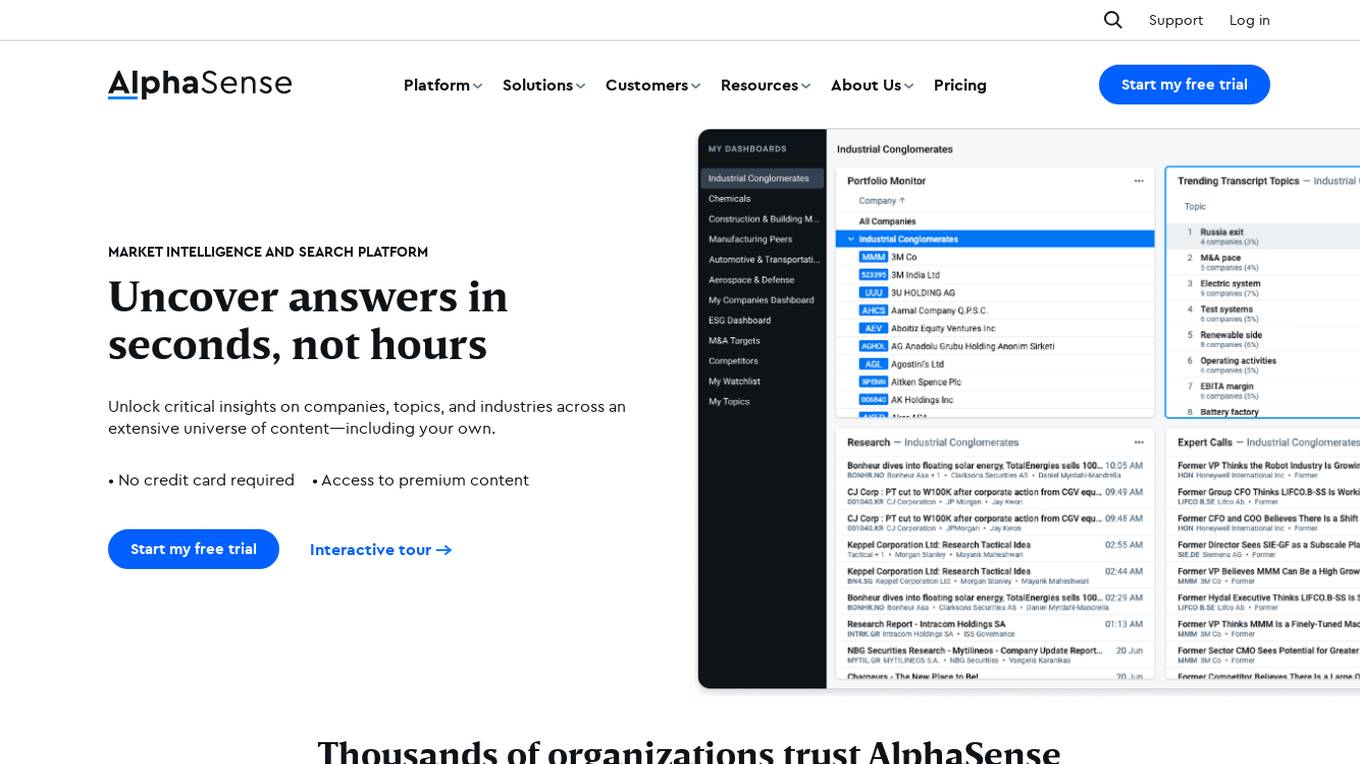

AlphaSense

AlphaSense is a market intelligence and search platform that provides access to a comprehensive universe of content, including company filings, broker research, expert calls, regulatory documents, press releases, and internal content. It utilizes AI and NLP technology to surface relevant insights, monitor market trends, and collaborate on research. AlphaSense is trusted by thousands of organizations, including 85% of the S&P 100, 80% of the top asset management firms, and 80% of the top consultancies.

FinSMEs

FinSMEs is an AI-powered website that provides the latest news and updates on venture capital investments and market intelligence. The platform covers funding rounds, strategic collaborations, and developments in the AI industry. Users can stay informed about innovative startups, funding trends, and emerging technologies in the USA, UK, Germany, France, Canada, India, and Italy.

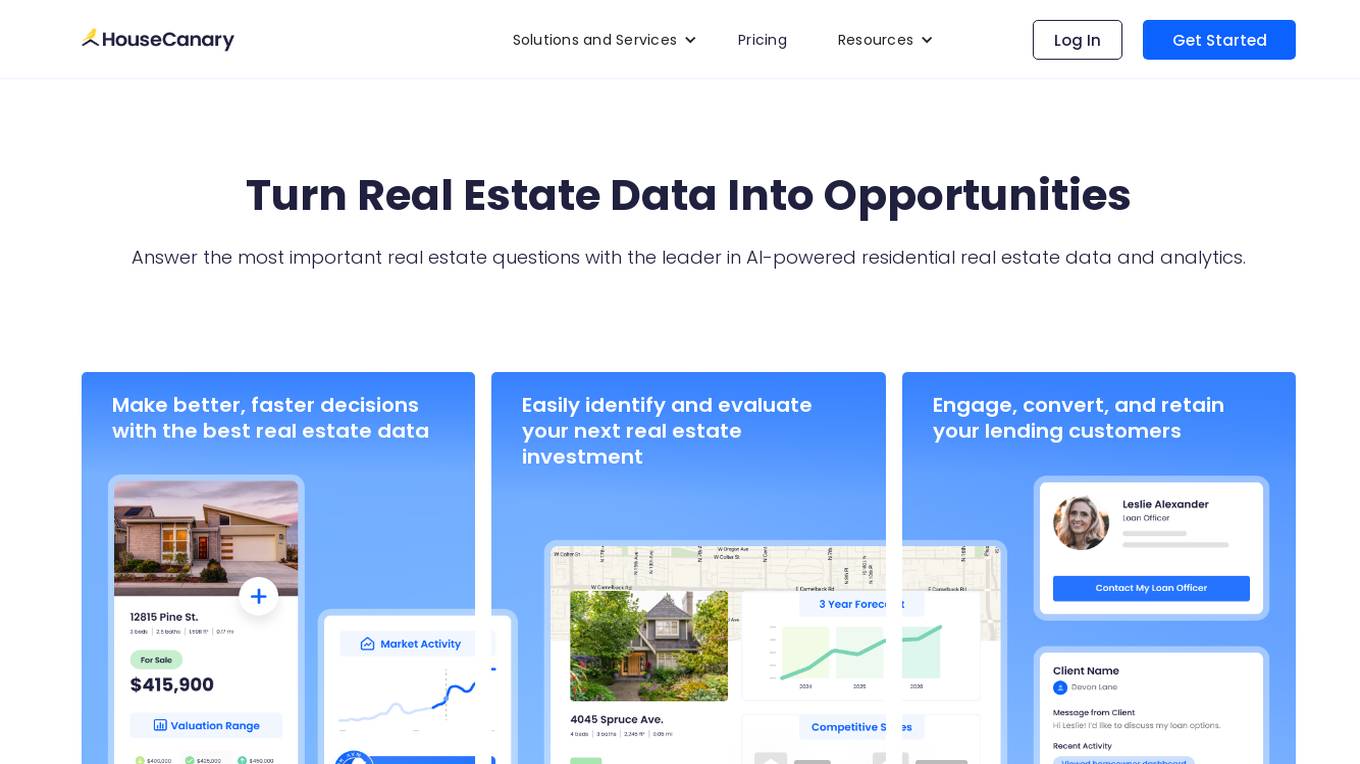

HouseCanary

HouseCanary is a leading AI-powered data and analytics platform for residential real estate. With a full suite of industry-leading products and tools, HouseCanary provides real estate investors, mortgage lenders, investment banks, whole loan buyers, and prop techs with the most comprehensive and accurate residential real estate data and analytics in the industry. HouseCanary's AI algorithms analyze a vast array of real estate data to generate meaningful insights to help teams be more efficient, ultimately saving time and money.

Hebbia

Hebbia is an AI-powered tool that helps users collaborate with LLMs more confidently and efficiently. It allows users to ask questions about all their documents, up to millions at a time, and provides important answers that are not limited to the top few results. Hebbia is designed to execute workflows with hundreds of steps over any amount of sources, turning prompts into processes. It is a trustworthy AI system that shows its work at each step, allowing users to verify, trust, and collaborate with AI. Hebbia is used by the largest enterprises, financial institutions, governments, and law firms in the world.

Centari

Centari is a platform for deal intelligence that leverages generative AI to transform complex documents into actionable insights. It helps users unlock more dealflow, enrich marketing materials, visualize market trends, and streamline the deal validation process. With features like data extraction, intuitive validation, and deal history visualization, Centari empowers users to make informed decisions and win deals with competitive knowledge.

0 - Open Source Tools

20 - OpenAI GPTs

Pizza Pro Dough Helper

Expert in BIGA and Neapolitan pizza dough recipes, focusing on tailored calculations and precise temperature data.

Fermentation Sage - Fermento Brewster v1

stunspot's Fermentation Expert - beer, mead, kimchi, pickles, everything!

Bake Off - Great British Technical Challenge GBBO

Minimalist baking challenges with a step title and tailored hint!

Chef Dulce

Pastry expert offering recipes and tips in a casual, friendly tone.

Bagels

Expert on bagels, offering detailed info on types, toppings, and recipes in an enjoyable tone.

Bake Off

The Great (Pretrained Transformer) Bake Off Challenge! Bake a cake, get roasted by AI. Type K to view all game modes. v1.0

GingerHouseMaker

Gingerbread Designer transforming your house into festive and whimsical gingerbread. v1.1

Cake Designer

I specialize in crafting custom cake designs, offering visual representations and tailored recipes according to individual tastes and preferences.

The Great Bakeoff Master

Magical baking game host, with 4 judges, to help you become a master baker and chef.