Best AI tools for AI Infrastructure Developers

Infographic

20 - AI Tool Sites

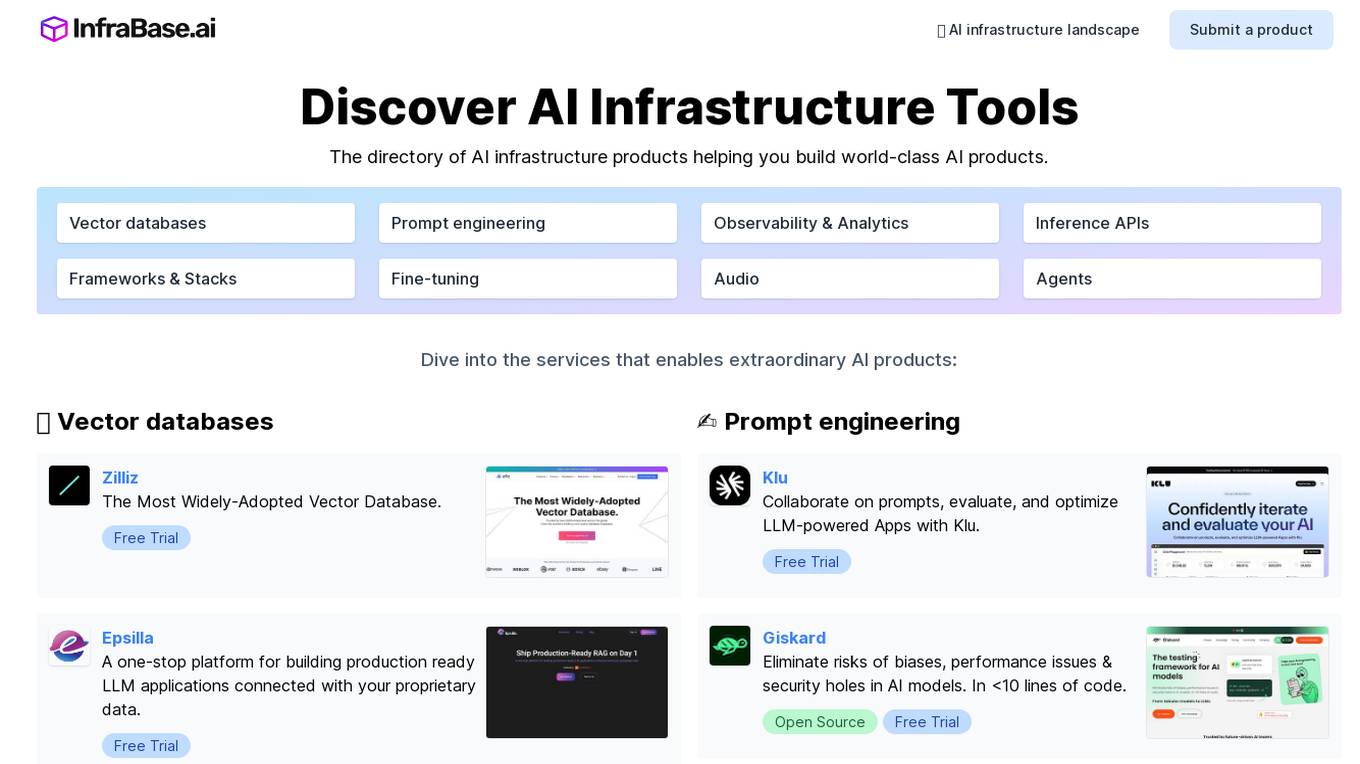

Infrabase.ai

Infrabase.ai is a directory of AI infrastructure products that helps users discover and explore a wide range of tools for building world-class AI products. The platform offers a comprehensive directory of products in categories such as Vector databases, Prompt engineering, Observability & Analytics, Inference APIs, Frameworks & Stacks, Fine-tuning, Audio, and Agents. Users can find tools for tasks like data storage, model development, performance monitoring, and more, making it a valuable resource for AI projects.

GooseAI

GooseAI is a fully managed NLP-as-a-Service delivered via API, at 30% of the cost of other providers. It offers a variety of NLP models, including GPT-Neo 1.3B, Fairseq 1.3B, GPT-J 6B, Fairseq 6B, Fairseq 13B, and GPT-NeoX 20B. GooseAI is easy to use, with feature parity with industry-standard APIs. It is also highly performant, with the industry's fastest generation speeds.

Microtica

Microtica is an AI-powered cloud delivery platform that offers a comprehensive suite of DevOps tools to help users build, deploy, and optimize their infrastructure efficiently. With features like AI Incident Investigator, AI Infrastructure Builder, Kubernetes deployment simplification, alert monitoring, pipeline automation, and cloud monitoring, Microtica aims to streamline the development and management processes for DevOps teams. The platform provides real-time insights, cost optimization suggestions, and guided deployments, making it a valuable tool for businesses looking to enhance their cloud infrastructure operations.

Outspeed

Outspeed is a platform for real-time voice and video AI applications, providing the networking and inference infrastructure needed to build them. It offers tools for intelligence across industries, including Voice AI, Streaming Avatars, Visual Intelligence, Meeting Copilot, and the ability to build custom multimodal AI solutions. Outspeed is designed by engineers from Google and MIT, offering robust streaming infrastructure, low-latency inference, instant deployment, and enterprise-ready compliance with standards and regulations such as SOC2, GDPR, and HIPAA.

Cerebium

Cerebium is a serverless AI infrastructure platform that allows teams to build, test, and deploy AI applications quickly and efficiently. With a focus on speed, performance, and cost optimization, Cerebium offers a range of features and tools to simplify the development and deployment of AI projects. The platform ensures high reliability, security, and compliance while providing real-time logging, cost tracking, and observability tools. Cerebium also offers GPU variety and effortless autoscaling to meet the diverse needs of developers and businesses.

Inworld

Inworld is an AI framework designed for games and media, offering a production-ready framework for building AI agents with client-side logic and local model inference. It provides tools optimized for real-time data ingestion, low latency, and massive scale, enabling developers to create engaging and immersive experiences for users. Inworld allows for building custom AI agent pipelines, refining agent behavior and performance, and seamlessly transitioning from prototyping to production. With support for C++, Python, and game engines, Inworld aims to future-proof AI development by integrating 3rd-party components and foundational models to avoid vendor lock-in.

Neurochain AI

Neurochain AI is a decentralized AI-as-a-Service (DeAIAS) network that provides an innovative solution for building, launching, and using AI-powered decentralized applications (dApps). It offers a community-driven approach to AI development, incentivizing contributors with $NCN rewards. The platform aims to address challenges in the centralized AI landscape by democratizing AI development and leveraging global computing resources. Neurochain AI also features a community-powered content generation engine and is developing its own independent blockchain. The team behind Neurochain AI includes experienced professionals in infrastructure, cryptography, computer science, and AI research.

UbiOps

UbiOps is an AI infrastructure platform that helps teams quickly run their AI & ML workloads as reliable and secure microservices. It offers powerful AI model serving and orchestration with unmatched simplicity, speed, and scale. UbiOps allows users to deploy models and functions in minutes, manage AI workloads from a single control plane, integrate easily with tools like PyTorch and TensorFlow, and ensure security and compliance by design. The platform supports hybrid and multi-cloud workload orchestration, rapid adaptive scaling, and modular applications with a unique workflow management system.

NVIDIA Run:ai

NVIDIA Run:ai is an enterprise platform for AI workloads and GPU orchestration. It accelerates AI and machine learning operations by addressing key infrastructure challenges through dynamic resource allocation, comprehensive AI life-cycle support, and strategic resource management. The platform significantly enhances GPU efficiency and workload capacity by pooling resources across environments and utilizing advanced orchestration. NVIDIA Run:ai provides unparalleled flexibility and adaptability, supporting public clouds, private clouds, hybrid environments, or on-premises data centers.

Mystic.ai

Mystic.ai is an AI tool designed to deploy and scale Machine Learning models with ease. It offers a fully managed Kubernetes platform that runs in your own cloud, allowing users to deploy ML models in their own Azure/AWS/GCP account or in a shared GPU cluster. Mystic.ai provides cost optimizations, fast inference, simpler developer experience, and performance optimizations to ensure high-performance AI model serving. With features like pay-as-you-go API, cloud integration with AWS/Azure/GCP, and a beautiful dashboard, Mystic.ai simplifies the deployment and management of ML models for data scientists and AI engineers.

Rafay

Rafay is an AI-powered platform that accelerates cloud-native and AI/ML initiatives for enterprises. It provides automation for Kubernetes clusters, cloud cost optimization, and AI workbenches as a service. Rafay enables platform teams to focus on innovation by automating self-service cloud infrastructure workflows.

Moreh

Moreh is an AI platform that aims to make hyperscale AI infrastructure more accessible for scaling any AI model and application. It provides full-stack infrastructure software, from PyTorch to GPUs, for the LLM era, enabling users to train large language models efficiently and effectively.

Eventual

Eventual is an AI tool that revolutionizes data processing by building a generational technology for multimodal data handling. Their query engine, Daft, simplifies processing of images, video, audio, and text, liberating engineers from complex distributed systems. Eventual enables the development of AI systems previously deemed impossible, by embracing real-world data messiness. The tool is used by companies like Amazon, MobilEye, and CloudKitchens to process petabytes of data daily, marking a shift towards a more efficient and innovative AI infrastructure.

Cisco AI Solutions

Cisco offers a range of Artificial Intelligence (AI) solutions to help organizations leverage the power of AI in various aspects of their operations. From infrastructure scaling to data insights and AI-powered software, Cisco provides a comprehensive suite of services to accelerate the adoption and implementation of AI technologies. The company also invests in AI innovation and collaborates with industry leaders like NVIDIA to shape the future of AI infrastructure. With a focus on responsible AI, Cisco aims to deliver cutting-edge solutions that drive productivity and security while ensuring inclusivity and transparency in the AI ecosystem.

Seedbox

Seedbox is an AI-based solution provider that crafts custom AI solutions to address specific challenges and boost businesses. They offer tailored AI solutions, state-of-the-art corporate innovation methods, high-performance computing infrastructure, secure and cost-efficient AI services, and maintain the highest security standards. Seedbox's expertise covers in-depth AI development, UX/UI design, and full-stack development, aiming to increase efficiency and create sustainable competitive advantages for their clients.

HIVE Digital Technologies

HIVE Digital Technologies is a company specializing in building and operating cutting-edge data centers, with a focus on Bitcoin mining and advancing Web3, AI, and HPC technologies. They offer cloud services, operate data centers in Canada, Iceland, and Sweden, and have a fleet of industrial GPUs for AI applications. The company is known for its expertise in digital infrastructure and commitment to using renewable energy sources.

FriendliAI

FriendliAI is a generative AI infrastructure company that offers efficient, fast, and reliable generative AI inference solutions for production. Their cutting-edge technologies enable groundbreaking performance improvements, cost savings, and lower latency. FriendliAI provides a platform for building and serving compound AI systems, deploying custom models effortlessly, and monitoring and debugging model performance. The application guarantees consistent results regardless of the model used and offers seamless data integration for real-time knowledge enhancement. With a focus on security, scalability, and performance optimization, FriendliAI empowers businesses to scale with ease.

Trieve

Trieve is an AI-first infrastructure API that offers search, recommendations, and RAG capabilities by combining language models with tools for fine-tuning ranking and relevance. It helps companies build unfair competitive advantages through their discovery experiences, powering over 30,000 discovery experiences across various categories. Trieve supports semantic vector search, BM25 & SPLADE full-text search, hybrid search, merchandising & relevance tuning, and sub-sentence highlighting. The platform is built on open-source models, ensuring data privacy, and offers self-hostable options for sensitive data and maximum performance.

CodeGPT

CodeGPT is a comprehensive AI-powered platform that provides a suite of tools and services designed to enhance business operations and streamline coding processes. It offers a range of AI assistants, known as Copilots, Agents, or GPTs, that can be customized and integrated into various applications. These AI assistants can automate tasks, generate content, provide insights, and assist with coding, among other functions. CodeGPT also features a marketplace where users can explore and discover a wide selection of pre-built AI assistants tailored to specific tasks and industries. Additionally, the platform offers an API for advanced users to integrate AI capabilities into their own custom projects. With its focus on customization, flexibility, and ease of use, CodeGPT empowers businesses and individuals to leverage AI technology to improve efficiency, productivity, and innovation.

Lambda Docs

Lambda Docs is an AI tool that provides cloud and hardware solutions for individuals, teams, and organizations. It offers services such as Managed Kubernetes, Preinstalled Kubernetes, Slurm, and access to GPU clusters. The platform also provides educational resources and tutorials for machine learning engineers and researchers to fine-tune models and deploy AI solutions.

0 - Open Source Tools

20 - OpenAI GPTs

Ryan Pollock GPT

🤖 AMAIA: ask Ryan's AI anything you'd ask the real Ryan 🧠 Deep Tech VP Marketing & Growth 🌥 Cloud Infrastructure, Databases, Machine Learning, APIs 🤖 Google Cloud, DigitalOcean, Oracle, Vultr, Android 🌁 More at linkedin.com/in/ryanpollock

Architext

Architext is a sophisticated chatbot designed to guide users through the complexities of AWS architecture, leveraging the AWS Well-Architected Framework. It offers real-time, tailored advice, interactive learning, and up-to-date resources for both novices and experts in AWS cloud infrastructure.

ML Engineer GPT

I'm a Python and PyTorch expert with knowledge of ML infrastructure requirements ready to help you build and scale your ML projects.

Securia

AI-powered audit ally. Enhance cybersecurity effortlessly with intelligent, automated security analysis. Safe, swift, and smart.

Global Construction Oracle

Futuristic construction AI with interplanetary and nano-robotics integration

ethicallyHackingspace (eHs)® (IoN-A-SCP)™

Interactive on Network (IoN) Automation SCP (IoN-A-SCP)™ AI-copilot (BETA)

RailwayGPT

Technical expert on locomotives, trains, signalling, and railway technology. Can answer questions and draw designs specific to the transportation domain.

AI Assistant for Writers and Creatives

Organize and develop ideas, respecting privacy and copyright laws.

AI Mentor

An AI advisor guiding your business in getting started with AI, using some hand-picked resources.